Discord Drops Persona Age Verification: Privacy Crisis Explained

Introduction: The Backlash That Changed Everything

Let's be real about what happened with Discord and Persona. Back in early 2025, Discord announced it was rolling out mandatory age verification across the platform. Sounds reasonable on paper—platforms need to comply with regulations, right? But then users started digging into the details, and that's when things got messy.

The company had partnered with Persona, an age verification provider already used by Reddit and Roblox. The name may sound familiar because those platforms face similar age assurance requirements. But what users discovered was troubling: Persona's process involved facial scans and uploading identity documents to verify age. The internet wasn't happy about it.

Within days, the criticism exploded across Twitter, Reddit, and Discord servers themselves. People weren't just concerned about age verification—they were worried about facial recognition data being stored, potential government access, and whether their biometric information would actually be deleted. Some security researchers even found exposed code at what appeared to be a government endpoint associated with Persona, which only amplified the panic.

Fast forward a few weeks, and Discord changed its tune. The company's head of product policy, Savannah Badalich, quietly confirmed that the "limited test of Persona in the UK has since concluded." Translation: They're backing away from this partnership, at least publicly. But here's the thing—age verification isn't going away. Discord still needs to comply with regulations in various countries. They're just exploring different vendor partners now.

This whole situation is actually a fascinating case study in how tech companies respond to user pressure, the murky world of age verification technology, and the ongoing tension between regulatory compliance and user privacy. Let's dig into what actually happened, why people freaked out, and what comes next.

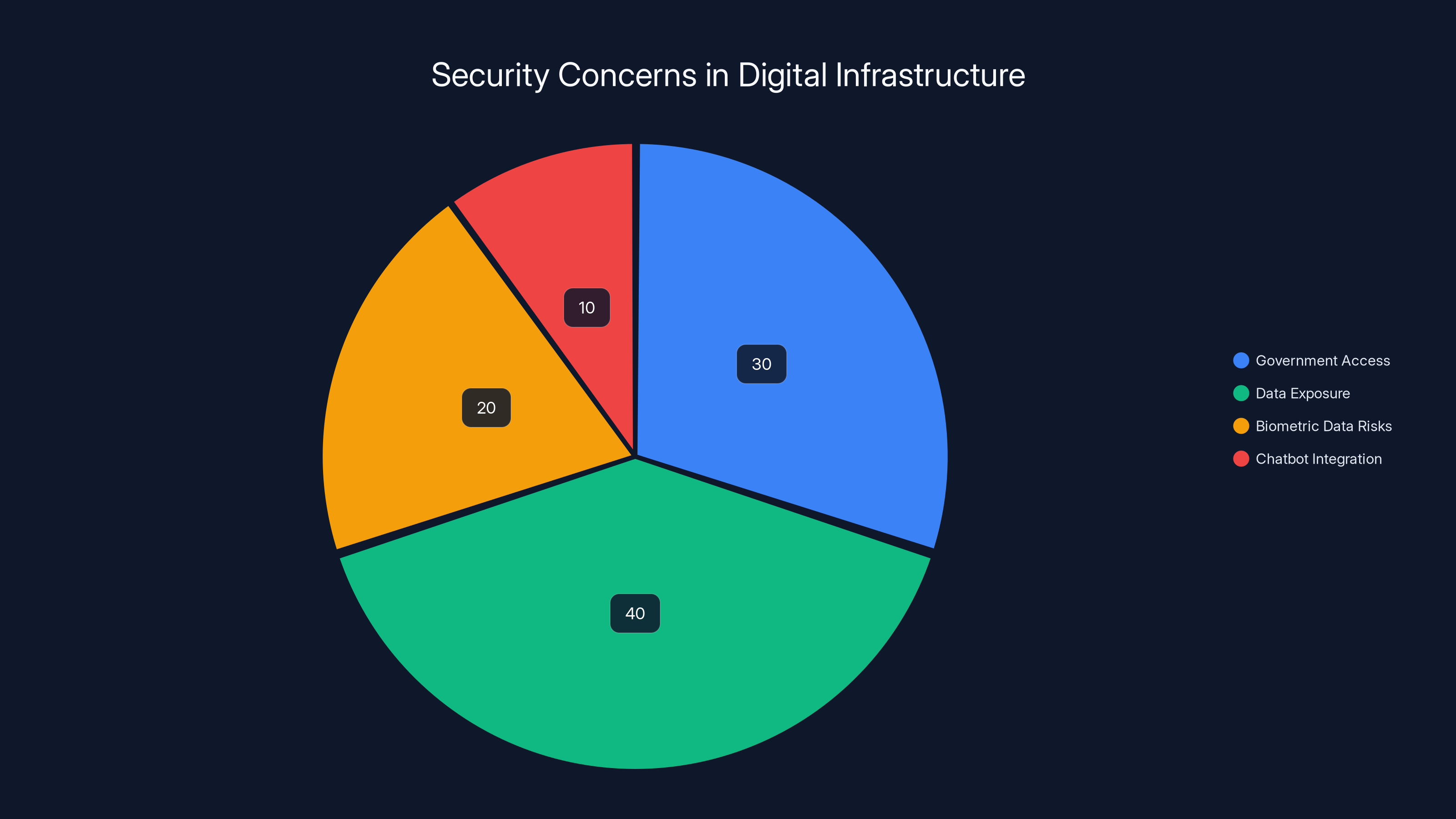

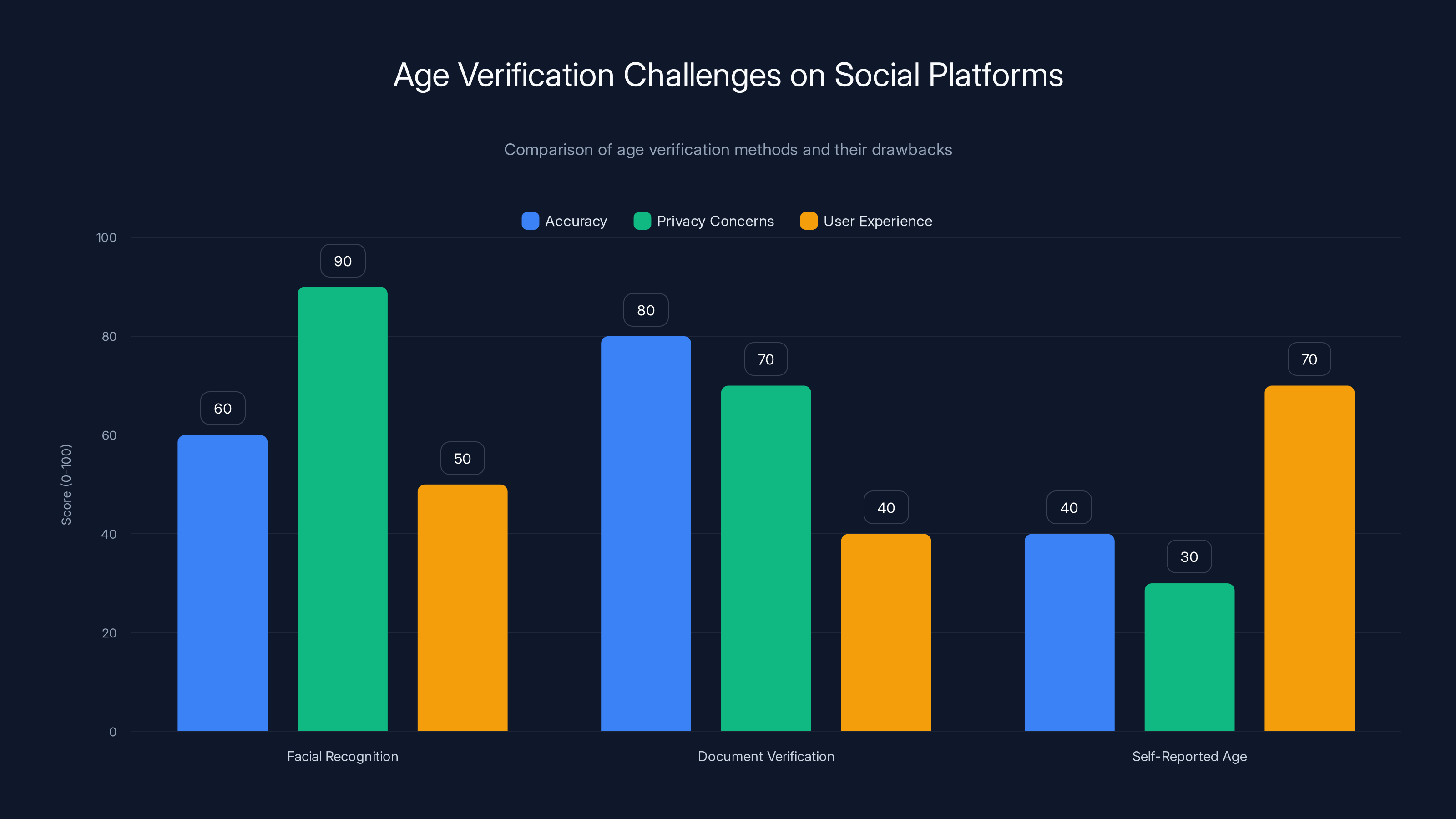

Users express significant concerns about facial recognition, particularly regarding data permanence and company security risks. Estimated data.

TL;DR

- Discord quietly ended its limited Persona age verification test in the UK following intense user backlash over facial recognition and ID uploads

- Privacy concerns were legitimate: Users discovered potential issues with how Persona handled biometric data, including exposed code and government endpoint references

- Persona isn't just Discord's problem: The age verification provider is also used by Reddit and Roblox, affecting millions of users across multiple platforms

- Age verification isn't dead: Discord still plans to implement age assurance globally, just with different vendor partners like k-ID and Veratad

- The real issue: A fundamental clash between regulatory requirements and user expectations around facial recognition data, with no clear industry standard yet

What Is Persona and Why Did Discord Choose It?

Persona isn't some shady startup—it's actually a legitimate age verification provider that's been around for years. The company positions itself as a "fraud and age verification platform" that uses a combination of technology to confirm users' ages. On the surface, this makes sense for Discord. The platform has teenagers using it alongside adults, and various regulations (especially in the EU) require age gating for certain content and services.

Why did Discord pick Persona specifically? Partly because Persona had already proven itself at scale with Reddit and Roblox. If two major platforms trusted them, Discord figured they could too. Persona's marketing emphasizes speed and accuracy—they claim to verify age quickly using facial recognition and ID matching. This appeals to platforms trying to balance compliance with user experience. Nobody wants to wait ten minutes to verify their age.

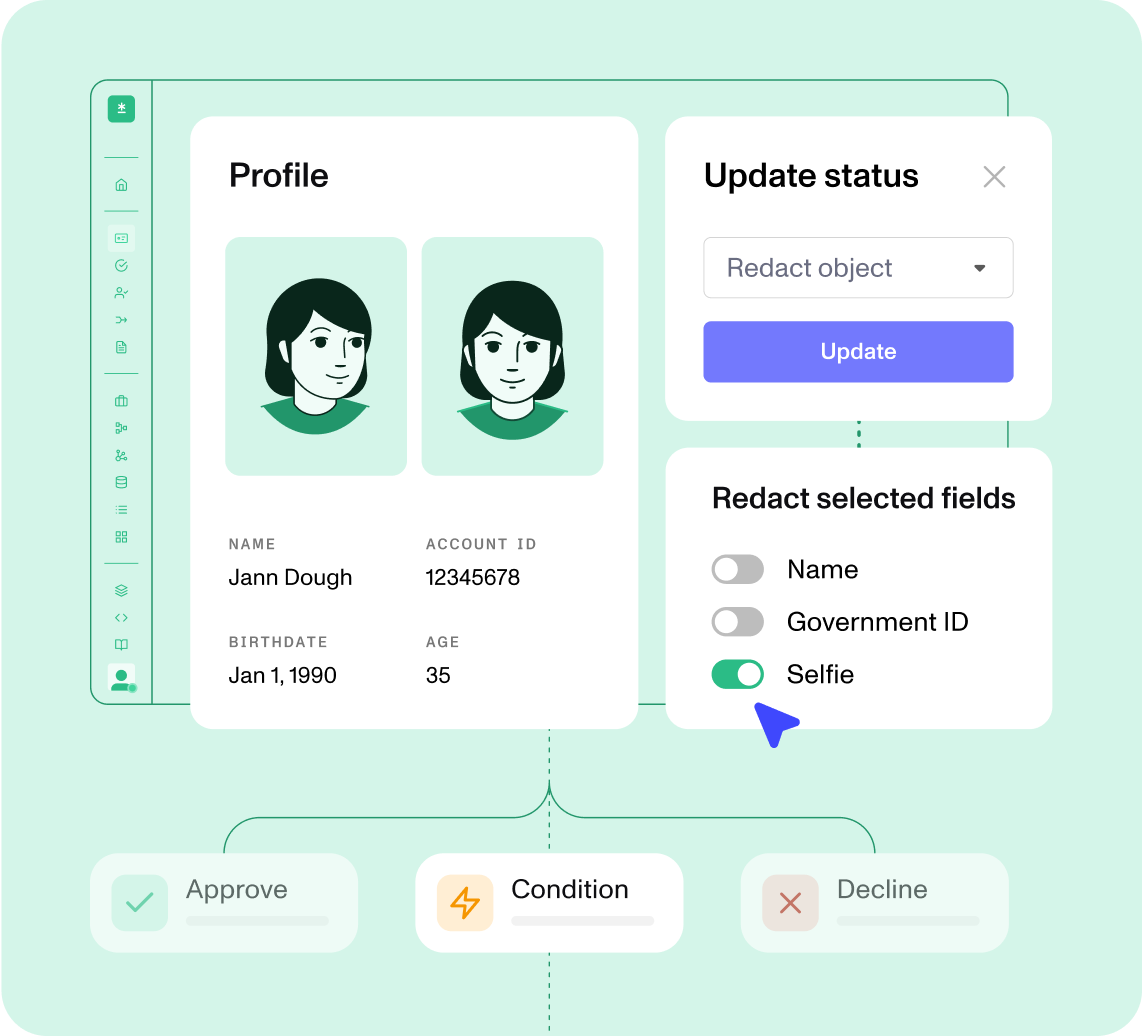

Persona's technology works like this: you take a selfie (facial age estimation), sometimes you upload an ID, and their system compares the data points to determine your age group. They claim the facial age estimation scan stays local on your device and isn't uploaded, but the ID documents and "match selfies" are sent to Persona's servers, then supposedly deleted after age confirmation.
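To make that data flow concrete, here's a minimal Python sketch of which artifacts stay on your device and which get uploaded, based only on the claims described above. Every name in it (`Artifact`, `plan_verification`, `uploaded`) is hypothetical; this is not Persona's actual API, just a model of the stated behavior.

```python
from dataclasses import dataclass
from enum import Enum


class Location(Enum):
    DEVICE = "device"          # processed locally, never uploaded (as claimed)
    VENDOR_SERVER = "vendor"   # sent to the verification vendor's servers


@dataclass(frozen=True)
class Artifact:
    name: str
    location: Location


def plan_verification(needs_id: bool) -> list[Artifact]:
    """Return the artifacts one verification attempt would create (hypothetical model)."""
    artifacts = [Artifact("age_estimation_selfie", Location.DEVICE)]
    if needs_id:
        # Per the vendor's claim, these are uploaded and then deleted after the check.
        artifacts.append(Artifact("id_document_image", Location.VENDOR_SERVER))
        artifacts.append(Artifact("id_match_selfie", Location.VENDOR_SERVER))
    return artifacts


def uploaded(artifacts: list[Artifact]) -> list[str]:
    """Names of the artifacts that leave the device."""
    return [a.name for a in artifacts if a.location is Location.VENDOR_SERVER]
```

The point of the model is simple: a selfie-only check uploads nothing, while the ID path uploads two images, and those two are the ones the deletion promise has to cover.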

The problem? Users didn't believe the "deleted" part. And honestly, after decades of tech company privacy promises, skepticism is justified. Once biometric data gets uploaded anywhere, even temporarily, there's risk it could be breached, leaked, or used for purposes beyond age verification.

Discord should have anticipated this. The company has always positioned itself as pro-privacy and pro-user. Making a deal with an age verification provider that uses facial recognition felt like a complete 180 to the user base. That disconnect is what really triggered the backlash.

Estimated data shows that data exposure (40%) and government access (30%) are the primary concerns raised by the security findings, followed by biometric data risks (20%) and chatbot integration (10%).

The Security Researchers' Findings: What Actually Got Discovered

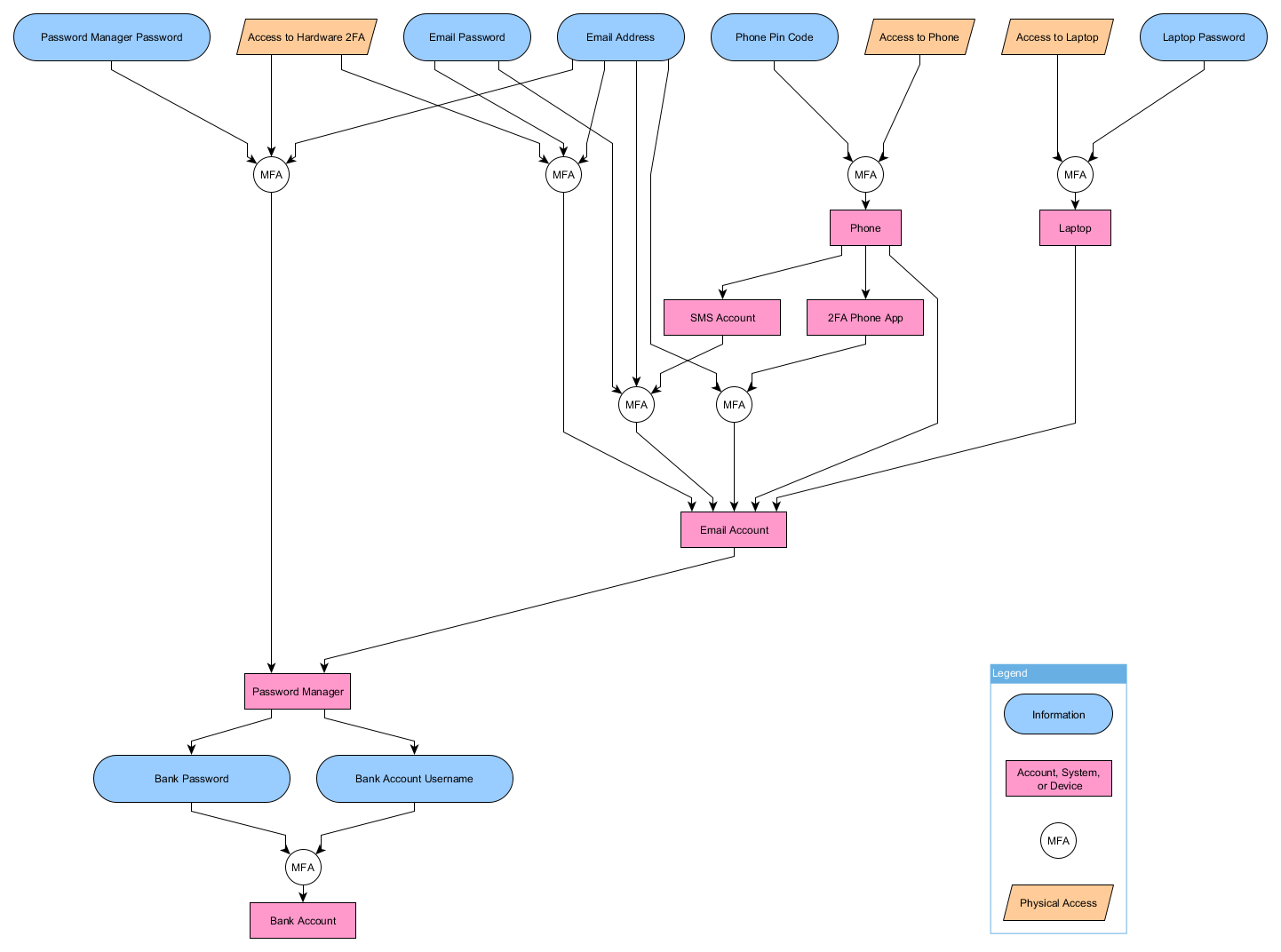

This is where things get genuinely weird. An independent publication called The Rage commissioned security researchers to investigate Persona's infrastructure. What they found was concerning: exposed code at "a US government authorized endpoint" along with over 2,400 files showing an interface that paired facial recognition with financial reporting.

Let's break down why this matters. If Persona's infrastructure was exposed and contained government-related endpoints, it raised immediate questions about whether the company was working with government agencies, whether law enforcement could access age verification data, and whether biometric databases could be subpoenaed or shared.

Persona CEO Rick Song responded to Ars Technica saying the company doesn't have any government contracts. But here's the thing—that doesn't necessarily resolve the concern. Even without direct contracts, government agencies could theoretically access data through legal requests, subpoenas, or data-sharing agreements that aren't publicly disclosed.

The Rage's report also noted that the exposed code "appears to be powered by an OpenAI chatbot," which is its own weird detail. Why would an age verification system need a chatbot? Was it for customer support, or something else? The researchers didn't fully explain.

Song confirmed he was in communication with one of the researchers and the exposed code was removed. That's good—active remediation is the right response. But the fact that such exposed infrastructure existed in the first place raised legitimate questions about Persona's security practices and architecture.

Discord users saw this news and basically said, "Absolutely not." And they were right to. If a company can't even keep their code repositories secure, why would you trust them with your facial scans and ID documents?

Discord's PR Pivot: How They Tried to Manage the Crisis

Discord's initial response was pretty standard corporate damage control. When the backlash hit, the company doubled down on messaging about privacy and local processing. Their official statement emphasized that the facial age estimation scan "never leaves your device," trying to reassure users that Discord and their vendors weren't actually collecting facial video.

But here's where Discord's messaging fell apart: they were inconsistent about ID documents. The statement said "Images of your identity documents and ID match selfies are deleted directly after your age group is confirmed," but this language only applied to identity documents, not the facial scans themselves. The distinction confused users and felt deliberately obscured.

When you're in a crisis, clarity matters more than ever. Discord's statement was technically accurate but strategically ambiguous. It let them claim they care about privacy while still collecting and storing ID data. From a communication standpoint, that's a failed approach.

The company also tried the "we're evaluating vendor partners" move. Savannah Badalich's statement that Discord is "regularly evaluating vendor partners to improve our age assurance experience and expand user options while prioritizing privacy" is corporate speak for "we're ditching Persona and hoping nobody notices."

That announcement essentially confirmed Discord heard the complaints and decided Persona was a liability. But instead of a public apology or transparency about what went wrong, they issued a vague statement about "vendor evaluation." It's the kind of half-measure that keeps the story alive longer than a direct acknowledgment would.

The Regulatory Backdrop: Why Discord Even Needs Age Verification

Here's the thing people often miss in these discussions: Discord isn't implementing age verification because they want to. They're doing it because regulations are forcing them to.

The UK's Online Safety Act and various EU rules (including GDPR's protections for children's data and child safety mandates) require platforms to verify age for users accessing age-restricted content. This isn't unique to Discord: TikTok, YouTube, and virtually every major social platform face similar requirements.

Britain's Online Safety Act, in particular, puts responsibility on platforms to ensure child safety. The UK regulator (Ofcom) expects platforms to implement age assurance measures. Failing to comply means fines, blocked access, or other penalties. So Discord didn't choose this because it was fun. They were legally obligated.

The EU takes an even stricter stance. Various member states have implemented or are implementing similar regulations. Combined with GDPR's strict rules on processing children's data and the Digital Services Act's requirements for risk assessment, platforms operating in Europe face significant compliance pressure.

The problem is that age verification technology is still immature. There's no perfect solution. Facial age estimation feels creepy and can misjudge ages near the cutoff. Document verification requires ID uploads. Self-reported age is easily falsified. Every option has trade-offs between accuracy, privacy, and user experience.

Discord found itself in an impossible position: implement something that works for compliance but feels invasive to users, or risk regulatory penalties. They chose the invasive route with Persona, then immediately walked it back when users revolted.

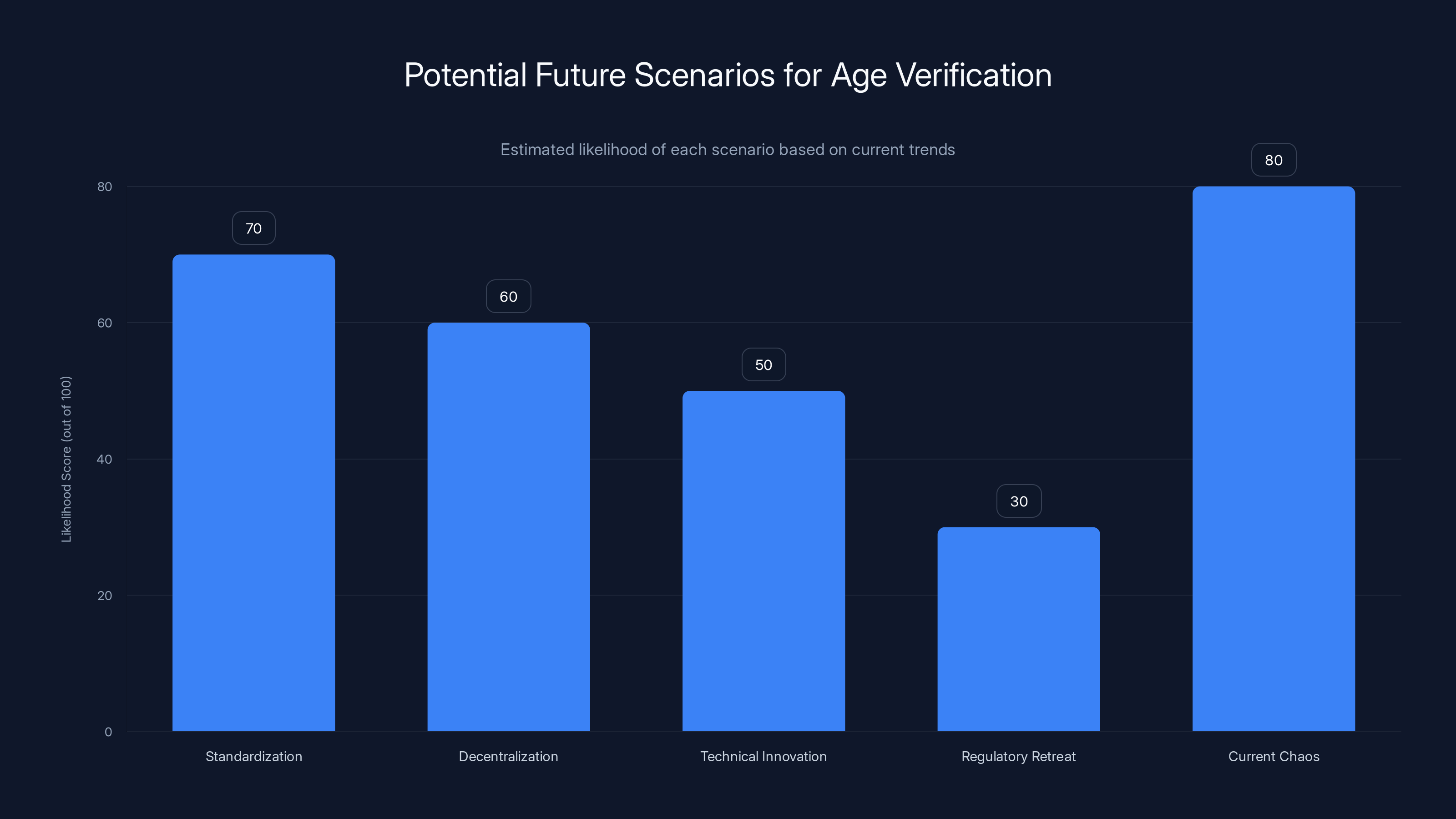

Estimated data suggests 'Current Chaos' and 'Standardization' are the most likely scenarios for the future of age verification, with scores of 80 and 70 respectively.

Persona's Reputation: Why Other Platforms Are Vulnerable

Persona isn't just Discord's problem. The company also provides age verification for Reddit and Roblox, affecting millions of users across three of the internet's most popular platforms. If one platform's users organize against Persona, it creates pressure across all three.

This actually happened. When Reddit users heard about Persona being used for age verification, they complained too. The criticism spread across communities and platforms. Roblox faced similar backlash from the gaming community, particularly parents concerned about biometric data collection from minors.

For Persona, this is a reputation problem at scale. If they become toxic in users' minds, platforms will have to switch vendors. That's expensive, disruptive, and embarrassing. Persona's entire business model depends on being trusted by major platforms. When that trust erodes, their revenue erodes with it.

The company is now associated with government endpoint exposures, biometric data concerns, and the general discomfort around facial recognition. Even if none of the worst-case scenarios actually happened, perception is reality in tech. Persona's brand is now synonymous with surveillance concerns.

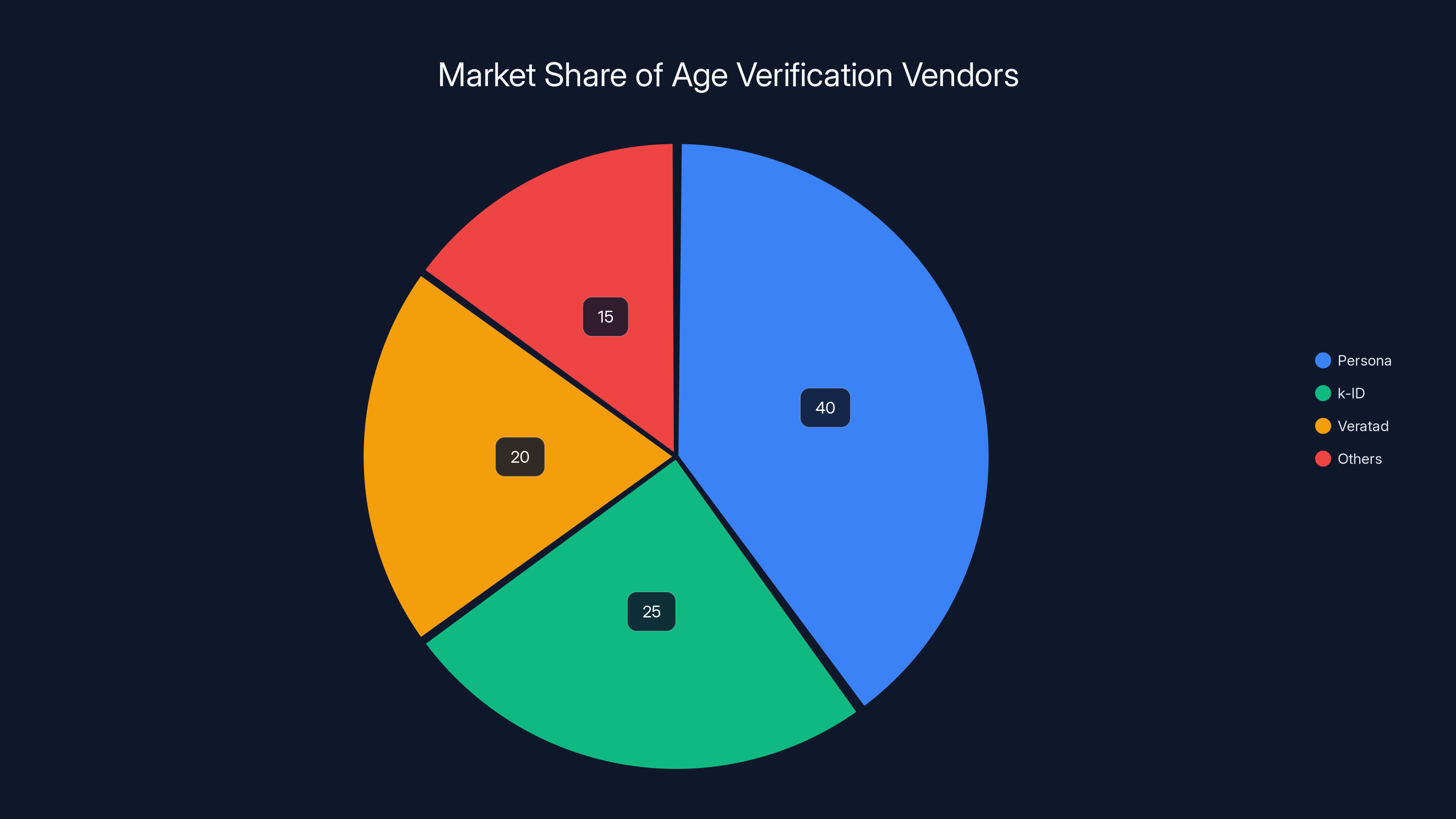

Other age verification vendors are watching this closely. Companies like k-ID (which Discord is now using) and Veratad are seeing an opportunity. If they can position themselves as more privacy-conscious alternatives, they can capture market share. That's actually good for users—it creates competitive pressure to improve privacy practices.

But it's also a reminder that startup-scale vendors in the identity/verification space operate in a murky regulatory and technical landscape. There's no established industry standard for age verification that balances privacy, security, and accuracy. We're essentially watching the market figure this out in real time, with users' biometric data at stake.

k-ID and Veratad: Discord's New Approach

Discord pivoted to k-ID for its facial age estimation and Veratad for ID verification. This is a more distributed approach—two vendors instead of one. Whether that's actually better for privacy is debatable.

k-ID claims its facial age estimation technology runs locally on your device. The video selfie never leaves your phone. That's technically stronger privacy-wise than uploading facial video to a server. But the local processing has its own concerns: how's the technology validated? Are there backdoors? Can the model be reverse-engineered from the device?

Veratad handles ID scanning and verification. The company specializes in identity verification and has been in the space longer than Persona. They're essentially a document verification service. Their business model is more traditional than Persona's—they focus on ID accuracy rather than facial recognition.

Discord's statement says images of identity documents are deleted after verification. But again, "deleted" is doing a lot of work in that sentence. Do they mean immediately deleted? After what timeframe? Are they deleted from all servers, or just the primary database? These details matter enormously for privacy.

The split vendor approach has one advantage: it reduces the concentration of biometric data. If k-ID's servers were breached, they wouldn't have ID documents. If Veratad's servers were breached, they wouldn't have facial data. That's data compartmentalization: a single breach exposes less.

But it also means your age verification data is spread across two companies instead of one. More vendors means more potential attack surface, more companies to audit, more potential data sharing agreements. There's a trade-off between fragmentation and concentration that doesn't have an obvious "right" answer.
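Here's a rough way to see that trade-off in code. This is a toy Python sketch with made-up vendor names and data categories, not a description of what k-ID, Veratad, or Persona actually store. It compares the worst-case exposure from one breach against the number of companies you have to trust.

```python
# Hypothetical holdings: which data types each vendor would have server-side.
SINGLE_VENDOR = {
    "one_vendor": {"facial_data", "id_document"},
}
SPLIT_VENDORS = {
    "face_vendor": {"facial_data"},
    "id_vendor": {"id_document"},
}


def worst_single_breach(holdings: dict[str, set[str]]) -> set[str]:
    """Largest set of data types exposed by breaching any one vendor."""
    return max(holdings.values(), key=len, default=set())


def vendors_to_trust(holdings: dict[str, set[str]]) -> int:
    """Number of separate companies that hold some of your data."""
    return len(holdings)
```

With these assumed holdings, splitting halves what one breach can expose but doubles the number of vendors to audit, which is exactly the tension described above.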

Neither k-ID nor Veratad has had the kind of security incidents that Persona experienced (or at least, not publicized ones). But that's partly because they're less high-profile. As Discord implements their systems at scale, the security and privacy scrutiny will increase.

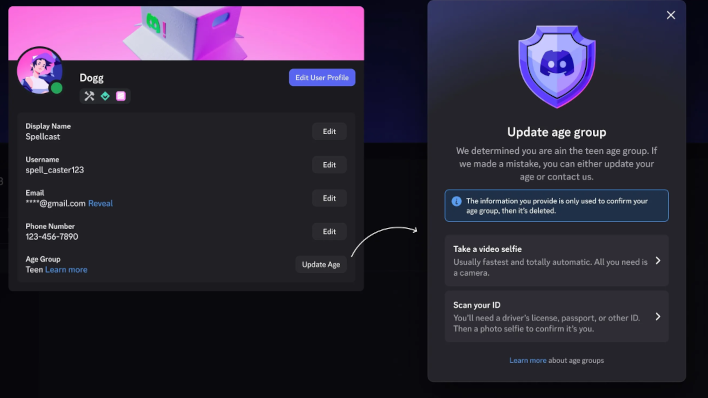

The "Teen Experience" Default: What Happens If You Don't Verify

Here's the creative part of Discord's approach: they're not making verification mandatory for everyone. Instead, they're using a machine learning model to estimate whether someone is likely an adult based on account activity, device fingerprints, and other signals. If the model has "high confidence" you're an adult, you're not bothered with verification.

If the model is unsure or leans toward "teenager," you get placed into a "teen experience." This restricts access to age-restricted channels and servers, applies filters to sensitive content, and generally puts you in a walled garden. You can still use Discord, but with limitations.

This is actually clever design. It reduces friction for people who obviously aren't verification targets. A 35-year-old using Discord for work communities obviously isn't a child. Why make them prove it? But teenagers, or accounts that look suspicious, face friction and get restricted.

The downside is that this approach relies on a machine learning model's judgment. These models have biases. They could discriminate against users from certain regions, devices, or demographics. They could incorrectly classify people. And once you're in "teen mode," you need to verify your age with facial recognition or ID to get out.

This creates an interesting dynamic: verification becomes a requirement if you want full access, but it's framed as optional for most users. In practice, power users and teenagers will be disproportionately affected. If Discord's model incorrectly classifies you as a teen, you're forced into verification or accept limited access.

From a privacy perspective, this is better than universal facial scanning. But it's still introducing behavioral surveillance (tracking device, location, activity patterns) to determine who "looks like" an adult. That's its own privacy trade-off.
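The routing described in this section can be sketched in a few lines of Python. This is an illustration under assumptions: Discord hasn't published what "high confidence" means, so the `0.9` threshold, the `adult_probability` score, and the function name are all hypothetical.

```python
from enum import Enum


class Experience(Enum):
    FULL = "full"
    TEEN = "teen"


# Hypothetical cutoff; the real definition of "high confidence" is not public.
ADULT_CONFIDENCE_THRESHOLD = 0.9


def assign_experience(adult_probability: float, verified_adult: bool = False) -> Experience:
    """Route an account using a model score and any completed age verification."""
    if not 0.0 <= adult_probability <= 1.0:
        raise ValueError("adult_probability must be between 0 and 1")
    if verified_adult:
        # A completed face or ID check overrides the model's guess.
        return Experience.FULL
    if adult_probability >= ADULT_CONFIDENCE_THRESHOLD:
        # High-confidence adults are never asked to verify.
        return Experience.FULL
    # Unsure or likely-teen accounts get the restricted default.
    return Experience.TEEN
```

Notice what the sketch makes obvious: an adult the model scores at 0.6 lands in the teen experience, and the only way out is `verified_adult=True`. That's the misclassification cost in one line.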

Persona holds a significant share in the age verification market, but competitors like k-ID and Veratad are gaining ground as privacy concerns rise. (Estimated data)

The Bigger Picture: Age Verification's Fundamental Problem

All of this—Persona, k-ID, Veratad, facial recognition, ID uploads—is basically a proxy for a deeper problem: there's no good way to verify age online without some privacy trade-off.

Facial recognition is invasive but quick. ID verification is slower but more accurate. Credit card verification (used by some platforms) requires financial information. Phone verification through telecom companies can work but has its own privacy implications. Self-reported age is easy and privacy-friendly but completely ineffective.

Regulators are demanding age verification without specifying how. Companies have to choose between accuracy, privacy, speed, and cost. Those variables don't align. You can't optimize for all of them simultaneously.

The EU and UK regulators seem to want companies to implement age verification that's effective without being invasive. That's hard to achieve in practice. You either accept some privacy cost or accept lower accuracy.

Some policy experts argue regulators should mandate less invasive methods even if they're less accurate. Others say effective age verification requires using the best available technology, privacy concerns be damned. The tension between those positions is unresolved.

Meanwhile, platforms like Discord are caught in the middle. They implement what they think will work, users revolt, regulators might not accept it anyway, and they're forced to pivot. It's a lose-lose scenario that'll keep happening until there's regulatory clarity about what age verification should actually look like.

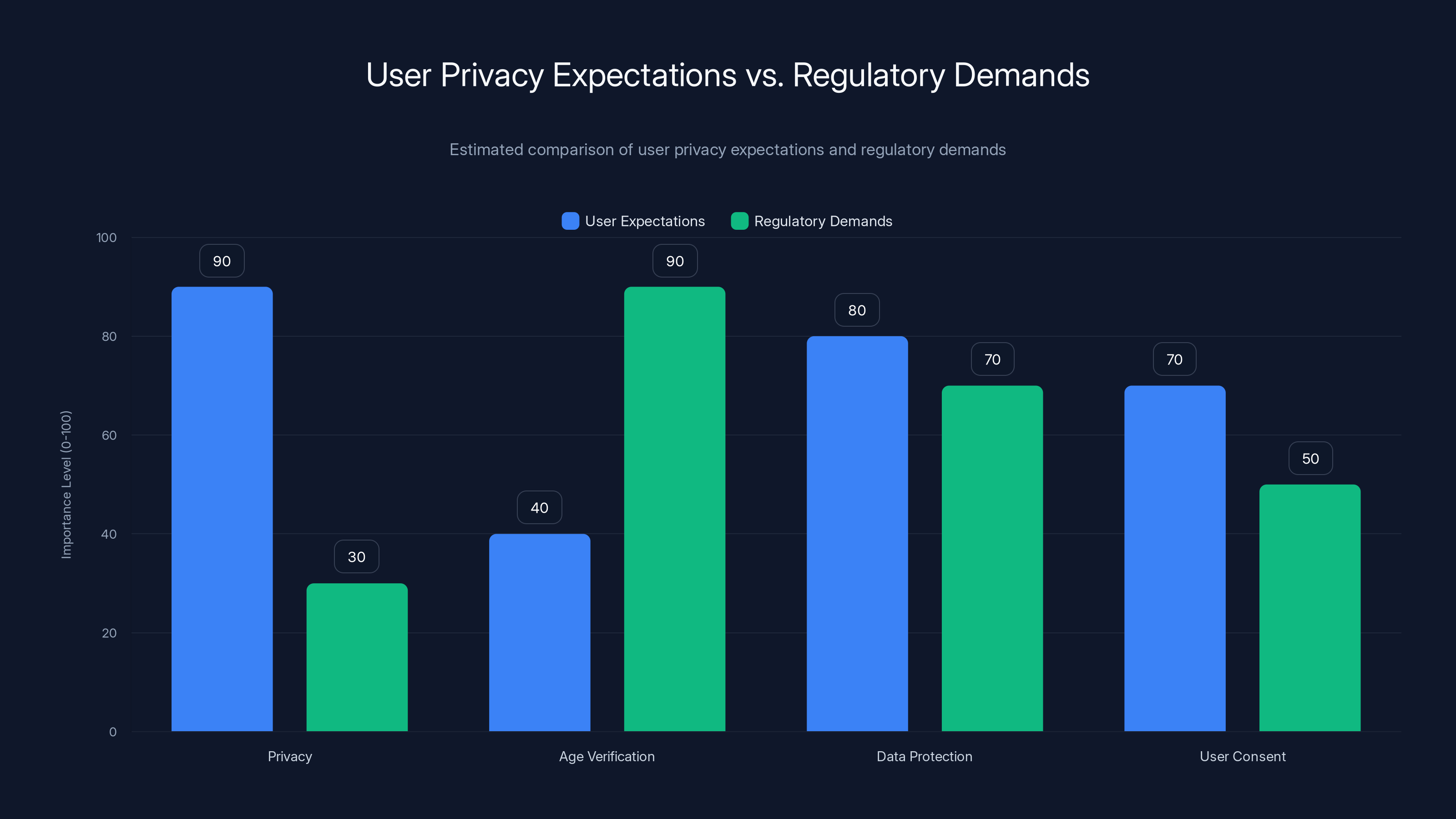

User Privacy Expectations vs. Regulatory Demands

Here's where Discord's crisis really gets interesting: the disconnect between what users expect and what regulators demand.

Users expect zero surveillance. They want to use Discord without cameras, without uploading IDs, without government agencies getting their data. Fair expectation. They also want age-restricted content to actually be age-restricted, which requires... some way to verify age. Contradiction.

Regulators expect platforms to take age verification seriously. Implement something meaningful, not a checkbox. Actually verify that teenagers can't access adult content. But also protect privacy and children's data. More contradictions.

Discord tried to split the difference by using facial recognition that supposedly doesn't upload video, plus ID documents that supposedly get deleted. That's a technical compromise between user expectations and regulatory demands.

But the fundamental problem remains: there's no compromise that satisfies everyone. Users want privacy. Regulators want enforcement. Those aren't compatible at the margins.

What would actually resolve this? Probably some combination of:

- Clearer regulatory standards about what age verification must accomplish and what privacy thresholds it must meet

- Standardized technology so multiple platforms use the same verification method, reducing per-platform privacy costs

- Better oversight of age verification vendors to ensure security and prevent government access

- User consent frameworks that let people choose verification methods and opt into more invasive approaches if they prefer faster verification

None of that exists yet. We're in a Wild West period where platforms experiment, users complain, regulators make vague demands, and nothing settles.

How Other Platforms Are Approaching Age Verification

Discord isn't alone in struggling with this. Every major platform faces similar pressure.

TikTok has implemented age verification in the UK using biometric data collection, similar to Discord's approach. The company claims the video selfie doesn't leave your device and data gets deleted. Sound familiar? Users have the same concerns.

YouTube uses machine learning models to estimate age based on account activity, requiring verification for borderline cases. They also rely on credit card verification for some regions. It's a more distributed approach than TikTok or Discord.

Reddit is using Persona for age verification in the UK, facing the same backlash Discord experienced. The platform announced age verification requirements for NSFW content, which created massive user friction. Community complaints forced them to delay full implementation.

Roblox uses Persona and has faced particular scrutiny because the platform has a high percentage of child users. Parents are understandably concerned about biometric data collection from minors. The company has been more cautious than Discord, rolling out verification more slowly and with more transparency.

Instagram and other Meta platforms use device-based age estimation for some regions, relying on machine learning rather than facial recognition. They're also exploring digital ID verification through partnerships with government or government-adjacent services.

The pattern is clear: every platform is experimenting, and none has found a solution that works without controversy. Facial age estimation is fast but feels the most invasive. Machine learning models are less invasive but less accurate. Document verification is accurate but slow. There's no winner.

What's interesting is that platforms are starting to learn from each other's mistakes. When Reddit and Discord faced Persona backlash, YouTube and TikTok noticed. When Roblox faced parent complaints, other platforms with younger user bases took it seriously.

There's probably some gravitational pull toward a dominant approach that's "good enough" on privacy and effective on verification. But we haven't reached that point yet.

Age verification methods face trade-offs: facial recognition has high privacy concerns, document verification is more accurate but intrusive, and self-reported age is user-friendly but unreliable. Estimated data.

The Government Connection Controversy

One element of the Persona situation that deserves deeper examination is the "government endpoint" discovered by researchers.

When security researchers found exposed code at a "US government authorized endpoint," it created immediate suspicion about government surveillance or data sharing. People worried about law enforcement accessing age verification data, immigration enforcement getting biometric data, or intelligence agencies exploiting the infrastructure.

Persona's CEO denied any government contracts. But that doesn't necessarily answer the question. Government agencies can access data through legal processes without contracts. A warrant, subpoena, or National Security Letter could compel Persona to hand over data. That's legal, not a conspiracy.

The broader question is whether age verification infrastructure could become part of broader government surveillance systems. If a single company aggregates biometric data from millions of users for age verification, that's an attractive target for government access. Even if Persona isn't directly working with government today, the data they hold could be highly valuable to various agencies.

This isn't paranoia. It's how tech infrastructure actually works. Facebook's data has been accessed by law enforcement. Apple's iCloud has been accessed through legal requests. Telecom companies routinely hand over location data to government agencies. It's built into how these systems function.

For users, the question becomes: is age verification worth creating a new data source that government agencies could potentially access? That's not a technical question, it's a values question. Different users will answer differently.

Discord didn't address this directly. Badalich's statement focused on privacy and vendor evaluation, not government access. That absence is notable. If Discord could credibly promise that age verification data would never be shared with government, they probably would have said so.

What Discord Users Actually Want (And Can't Have)

If you read Discord server complaints about age verification, you see a pattern:

- "I don't want to upload my ID or take a selfie"

- "I don't trust any company with my facial data"

- "Why isn't self-reported age enough?"

- "This is creepy surveillance"

- "I'll just leave Discord if this gets implemented"

Understandable frustration. But here's the problem: users are asking for something impossible.

They want age verification that's accurate enough to satisfy regulators, but requires zero surveillance, zero data collection, and zero invasiveness. You can't have all of that at once. You have to choose.

Self-reported age is completely non-invasive. But it's also completely ineffective. Regulators won't accept it. So that's off the table.

Facial recognition and ID verification are invasive but effective. That's what regulators are pushing for. So that's where we end up.

Users don't like this trade-off. But they also generally support age restrictions on adult content. Those two positions are contradictory. You can't enforce age restrictions without some way to verify age. And verifying age online without biometric data or financial data is essentially impossible.

What users actually want is: "Please verify age, but in a way that magically doesn't require any data collection or privacy cost." That's not a request to Discord. That's a request to violate the laws of physics.

The more realistic thing users should want is better oversight of age verification vendors. Audited security practices. Clear data retention policies. Explicit government access restrictions. Government should mandate these standards, not just demand age verification without specifying how.

That's a policy problem, not a technical problem. And Discord can't solve it alone.

Future of Age Verification: Where This Might Actually Go

Looking ahead, a few scenarios seem likely:

Scenario 1: Standardization

Regulators eventually mandate a specific approach. Maybe it's biometric-based, maybe it's document-based, maybe it's something new. Once there's a standard, multiple platforms use it, and users make a one-time choice about whether to comply. This reduces privacy costs (your biometric data goes to one certified vendor, not every platform) and gives users clarity.

Europe might push this first since GDPR already creates regulatory frameworks. A standard age verification approach certified by data protection authorities could become EU-wide.

Scenario 2: Decentralization

Different countries adopt different standards. The US might prefer document-based verification. The EU might prefer federated models. India might prefer something different. Platforms operating globally have to support multiple approaches. This is messy but respects regional differences.

Scenario 3: Technical Innovation

Somebody invents age verification that's both private and effective. Zero-knowledge proofs could theoretically let you prove you're over 18 without revealing your actual age or biometric data. Homomorphic encryption could let platforms check ID documents without ever reading them in the clear. The underlying cryptography exists, but it hasn't been deployed for age verification at anything like Discord's scale.

If any of these innovations mature, they could change the whole game. But that's probably years away.
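A real zero-knowledge proof needs specialized cryptography that won't fit in a blog snippet. But the core idea of disclosing only "over 18: yes" can be illustrated with something simpler: a signed minimal-disclosure attestation. In this Python sketch, a trusted issuer looks at your birthdate and signs nothing but a boolean, and the platform checks the signature. To be clear about the assumptions: this is not a zero-knowledge proof, the issuer still sees your birthdate, the HMAC shared key stands in for a proper public-key signature, and all names are hypothetical.

```python
import hashlib
import hmac
import json
from datetime import date

# Hypothetical shared key. A real system would use a public-key signature so the
# platform can verify attestations without being able to forge them.
ISSUER_KEY = b"demo-key-not-for-production"


def years_between(born: date, today: date) -> int:
    """Whole years elapsed between two dates."""
    before_birthday = (today.month, today.day) < (born.month, born.day)
    return today.year - born.year - int(before_birthday)


def issue_attestation(birthdate: date, today: date) -> dict:
    """Issuer sees the birthdate but signs only the boolean claim."""
    claim = {"over_18": years_between(birthdate, today) >= 18}
    payload = json.dumps(claim, sort_keys=True).encode()
    tag = hmac.new(ISSUER_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "tag": tag}


def platform_accepts(attestation: dict) -> bool:
    """Platform checks the tag and the claim; it never receives a birthdate."""
    payload = json.dumps(attestation["claim"], sort_keys=True).encode()
    expected = hmac.new(ISSUER_KEY, payload, hashlib.sha256).hexdigest()
    tag_ok = hmac.compare_digest(expected, attestation["tag"])
    return tag_ok and attestation["claim"]["over_18"] is True
```

Even this toy version leaves gaps a production design would have to close, such as stopping one person from handing their attestation to someone else. It does show the shape of the goal, though: the platform learns a yes or no and nothing more.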

Scenario 4: Regulatory Retreat

Regulators realize age verification at scale is impossible without unacceptable privacy costs. They back off requirements or shift responsibility to other actors (parents, educators, ISPs). This seems less likely given regulatory momentum, but it's possible.

Scenario 5: The Current Chaos Persists

Different platforms use different vendors, users complain, companies pivot, nothing settles. We keep seeing cycles of implementation and backlash. Agencies make different demands in different regions. Nobody's happy, but it technically works.

This is where we're heading unless something changes. The current situation actually serves platforms okay—they can blame regulation, blame user pressure, blame vendors. "We're trying!" buys them time.

For users, that's frustrating. For regulators, it's insufficient. For age verification vendors, it's business as usual.

Estimated data shows a significant gap between user privacy expectations and regulatory demands, particularly in privacy and age verification aspects.

The Lesson Discord Learned (And Didn't Learn)

Here's what Discord actually learned from the Persona backlash: users care deeply about facial recognition and biometric data, and negative press forces action faster than gradual implementation.

What Discord didn't learn: actually addressing privacy concerns requires transparency, user input, and technical rigor. Pivoting to different vendors doesn't solve the underlying problem if the new vendors use the same invasive methods.

If Discord had engaged users before implementing age verification, explained the regulatory pressure, discussed options, and asked what approach felt most acceptable, they might have avoided the crisis. Instead, they announced it as a done deal, users revolted, and Discord scrambled.

That pattern repeats because most tech companies treat user input as a PR problem rather than a product problem. Users aren't interested in compliance. They're interested in privacy. If you frame age verification as compliance, users see it as something forced on them. If you frame it as user safety that happens to require verification, the conversation changes.

Discord could have said: "We need to verify age because we care about safety. Here are the options. Which privacy/accuracy trade-off do you prefer?" Instead, they said: "We're implementing Persona." Predictable backlash followed.

Look, I get it. Tech companies operate under tight timelines and compliance pressure. Taking time to genuinely engage users costs time and resources. It's faster to just implement something and handle complaints later. But that approach doesn't work at Discord's scale with its privacy-conscious user base.

What This Means for Other Platforms

Every other platform watching the Discord situation learned something important: users will mobilize against age verification implementations they perceive as invasive.

That's not necessarily a bad thing. User pushback creates accountability. It forces platforms to actually think about privacy rather than just checking a compliance box.

But it also means future age verification implementations will be slower, more contentious, and harder to execute. Platforms will spend more time justifying approaches, addressing concerns, and potentially redesigning when backlash hits.

Some platforms might decide the PR cost isn't worth it and delay implementation. That buys them time in some regions but creates compliance risk in others. UK and EU regulators are increasingly serious about age verification. Delaying won't avoid the problem.

Other platforms might try to get ahead of backlash by being transparent about age verification plans, explaining regulatory context, and giving users choices. That's the approach Reddit has been moving toward (somewhat), and it seems to reduce friction.

The meta-lesson: in 2025, user privacy concerns are real and consequential. Companies that ignore them do so at their peril. Companies that take them seriously—genuinely, not just in marketing—build more trust and face less backlash.

Discord's situation is a case study in what happens when you don't.

The Broader Context: A Generational Shift in Privacy Expectations

All of this plays into a larger generational shift in how people think about privacy and biometric data.

Millennials and Gen Z grew up with Facebook harvesting their data. They're simultaneously the most surveilled generations and the most skeptical of surveillance. That's created a weird dynamic where younger users are more aware of privacy issues but also more resigned to them.

But facial recognition and biometric data are different. They feel more invasive than behavioral data collection. Your face is unique. It can't be changed. Once someone has facial recognition data, they have a permanent identifier for you.

Users intuitively understand this even if they can't articulate it precisely. Behavioral data feels like "they know what I do." Biometric data feels like "they know who I am." The latter feels more invasive.

Discord's users were already primed to resist this. The platform has always been positioned as pro-privacy and pro-user. Introducing facial recognition data collection contradicted that brand promise. The backlash wasn't just about age verification—it was about perceived betrayal.

Other platforms with strong privacy narratives should take note. If your brand promise is privacy-first, you can't suddenly implement surveillance tech without massive friction. Your user base will hold you accountable.

Regulatory Trends: What's Coming Next

Regulatory pressure around online safety and age verification is increasing globally. Here's the landscape:

UK: The Online Safety Act (the former Online Safety Bill, which became law in 2023) creates mandatory age assurance requirements. Regulators are now auditing platforms' compliance. The momentum is toward stricter enforcement, not looser.

EU: Digital Services Act requirements for risk assessment and age-appropriate design are pushing platforms toward age verification. GDPR limitations on children's data are simultaneously pushing back against invasive verification. The tension between those requirements is creating space for vendors to position themselves as GDPR-compliant alternatives.

US: Federal regulations are still developing. Some states are passing age verification requirements (Texas, for example). But there's no federal standard yet. Platforms might be able to avoid US-wide verification for a bit longer, though that won't last.

Australia, Japan, South Korea: Each has its own age assurance requirements and child protection standards. There's no unified approach yet.

What's clear is that age verification is becoming table stakes for operating at scale. Platforms can't ignore it. Regulators are serious about enforcement. The question isn't whether to implement age verification, but how to do it in ways that survive user scrutiny and regulatory review.

That requires actually good solutions, not checkbox compliance. Discord's pivot away from Persona suggests the company is learning that lesson.

Final Thoughts: The Unsolved Problem

Let's be honest about where we stand: the age verification problem isn't actually solved. Discord's move away from Persona doesn't fix anything. It just swaps one vendor for others.

The underlying tension between regulatory demands and user privacy expectations remains unresolved. The fundamental challenge of verifying age online without invasive methods is still there. The question of how to balance child safety and user privacy is still open.

What Discord's situation did provide is clarity about what users won't accept. They won't accept facial recognition without explicit understanding of how data gets handled. They won't trust vague promises about data deletion. They won't silently accept biometric collection.

That's actually useful information for platforms and regulators. It defines a boundary. You can implement age verification, but not in ways that feel like blanket surveillance. That's a constraint worth knowing.

The real question going forward: can the industry develop age verification approaches that satisfy both regulatory requirements and user expectations? Or will we keep cycling through implementations, backlash, and pivots?

My guess is we'll keep cycling for another couple of years until either regulators impose a standard or technology improves enough to make this less of a privacy trade-off. Until then, expect more Discord-style situations where platforms push forward, users object, and companies adjust course.

The users mobilizing against invasive age verification aren't wrong. They're protecting legitimate privacy interests. Companies responding to that pressure aren't wrong either. They're navigating impossible constraints. Regulators demanding age verification without specifying how also aren't wrong, though they should be specifying better.

Everybody's reasonable, and everybody's stuck. That's the actual situation.

FAQ

What is Persona and why did Discord use it?

Persona is an age verification provider that uses facial recognition and ID verification to confirm users' ages. Discord chose Persona because the company had proven success with other major platforms like Reddit and Roblox, and its technology promised fast, accurate age verification. The partnership seemed logical at the time, but user concerns about facial recognition and biometric data storage led to significant backlash.

How does age verification on Discord actually work?

Discord uses a two-part system. First, a machine learning model estimates whether you're likely an adult based on account activity, device information, and other signals. If it's confident you're an adult, no verification is needed. If it's unsure or you appear to be a teenager, you're placed in "teen mode" with restricted access to age-gated content. To get full access, you'd verify through either facial age estimation, which runs locally on your device, or ID verification, which does involve uploading documents to third-party providers like k-ID and Veratad.
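To make that flow concrete, here's a minimal sketch of the decision logic in Python. It's an illustration under stated assumptions, not Discord's actual implementation: the `Account` fields, the `resolve_access` function, and the 0.9 threshold are all hypothetical names and values invented for this example.

```python
from dataclasses import dataclass
from enum import Enum


class AccessLevel(Enum):
    FULL = "full"
    TEEN_MODE = "teen_mode"


@dataclass
class Account:
    # Hypothetical score from the age-inference model (0.0 to 1.0).
    adult_probability: float
    # Hypothetical flag set once facial age estimation or an ID check succeeds.
    verified_adult: bool = False


# Assumed cutoff for "confident you're an adult"; the real value is not public.
ADULT_CONFIDENCE_THRESHOLD = 0.9


def resolve_access(account: Account) -> AccessLevel:
    """Return the access level implied by the two-part flow described above."""
    # Explicit verification always unlocks full access.
    if account.verified_adult:
        return AccessLevel.FULL
    # A confident model estimate means no verification is requested.
    if account.adult_probability >= ADULT_CONFIDENCE_THRESHOLD:
        return AccessLevel.FULL
    # Unsure or likely a teenager: restricted until verified or reclassified.
    return AccessLevel.TEEN_MODE
```

In this sketch, an account the model is unsure about stays in teen mode until either `verified_adult` flips to true or the model's score rises past the threshold.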

Why are users concerned about facial recognition age verification?

Users have legitimate concerns about several aspects: (1) permanence—facial data can't be changed if compromised, (2) function creep—facial data collected for age verification could be used for tracking or surveillance, (3) government access—biometric databases could be accessed through legal requests or subpoenas, and (4) company security—if Persona's infrastructure got exposed (which it did), facial data could be breached. These aren't hypothetical risks; they reflect real patterns of how tech infrastructure is accessed and used.

What regulatory pressure is driving age verification requirements?

Multiple regulations mandate age verification. The UK's Online Safety Act (the former Online Safety Bill) requires platforms to implement age assurance for age-restricted content. The EU's Digital Services Act requires platforms to assess and mitigate risks to minors. Various other countries have similar requirements. Regulators see age verification as necessary for child protection online. However, they haven't specified how age verification should be implemented, leaving companies to choose between accurate-but-invasive and private-but-ineffective methods.

What's the difference between the vendors Discord is now using?

Discord's new approach splits the work: k-ID handles facial age estimation (which runs locally on your device without uploading facial video) and Veratad handles ID document verification. This distributed approach means your facial data and ID documents go to different vendors, reducing the concentration of sensitive information in one place. However, both approaches still involve some data transmission and processing by third parties, just in a more fragmented way than Persona's centralized system.

Could age verification on Discord actually be effective with zero invasiveness?

Unfortunately, no. Any form of age verification that's actually reliable requires some privacy trade-off. Self-reported age is completely non-invasive but completely ineffective—anyone can claim to be any age. Credit card verification requires financial data. Facial recognition is invasive but accurate. Document verification is accurate but slow. Regulators want effective age verification, which inherently costs privacy. The real question isn't how to get zero invasiveness; it's how to get the minimum invasiveness while maintaining effectiveness.
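One way to picture "minimum invasiveness while maintaining effectiveness" is as a simple selection problem. The sketch below is purely illustrative: the invasiveness ranks and the `reliable` flags are placeholder assumptions made up for this example, not measurements, and a real assessment would have to supply its own.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Method:
    name: str
    invasiveness: int  # placeholder rank, 0 = least invasive (an assumption)
    reliable: bool     # placeholder: does it resist a false age claim? (an assumption)


# Illustrative values only; the ordering here is an assumption, not data.
METHODS = [
    Method("self_reported_age", 0, False),
    Method("credit_card_check", 1, True),
    Method("facial_age_estimation", 2, True),
    Method("id_document_check", 3, True),
]


def least_invasive_reliable(methods: Iterable[Method]) -> Optional[Method]:
    """Pick the lowest-invasiveness method among those marked reliable."""
    candidates = [m for m in methods if m.reliable]
    # default=None covers the case where nothing reliable is available.
    return min(candidates, key=lambda m: m.invasiveness, default=None)
```

The answer depends entirely on the ranks you plug in, which is the point: the argument between platforms, users, and regulators is really an argument over those numbers.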

How did the Persona security researchers discover the government endpoint issue?

Security researchers commissioned by the publication The Rage found exposed code in publicly accessible repositories and infrastructure. They discovered what appeared to be government-related endpoints and over 2,400 files suggesting connections between Persona's age verification system and financial reporting infrastructure. Persona's CEO acknowledged the exposure and confirmed the code was removed, but the discovery raised questions about whether Persona had undisclosed government relationships or whether its infrastructure was vulnerable to government access through subpoenas or other legal processes.

What other platforms use Persona or similar age verification?

Persona is also used by Reddit and Roblox for age verification. Both platforms faced similar user backlash to what Discord experienced. TikTok uses biometric age verification in some regions. YouTube uses machine learning models and credit card verification. The pattern is consistent: every major platform is struggling with age verification implementation and facing user resistance to invasive methods. No platform has found an approach that works without controversy.

What happens if you refuse age verification on Discord?

If you don't verify your age and Discord's machine learning model determines you might be underage, you're placed in "teen mode." This restricts access to age-gated channels and servers, applies content filters, and limits certain features. You can still use Discord with restrictions, but many communities and features become inaccessible. To regain full access, you'd need to complete age verification or wait for Discord's model to reclassify you as an adult based on account activity.

Why don't regulators just specify how age verification should be implemented?

Regulators have conflicting goals: they want effective age verification (which requires accurate data collection) and strong privacy protection (which limits data collection). These aren't compatible at the margins. Additionally, technology and best practices evolve faster than regulation. Regulators are reluctant to mandate specific technology that might become obsolete or might inadvertently entrench particular vendors. The result is vague requirements that create space for different approaches but also create confusion about compliance.

Conclusion: The Crisis That Hasn't Really Ended

Discord's retreat from Persona isn't a victory for privacy or a solution to the age verification problem. It's a tactical withdrawal that might actually make the situation more complicated.

By splitting vendors, Discord made age verification less concentrated but also less transparent. Users don't know exactly which company handles what data. By maintaining age verification requirements while switching providers, Discord acknowledged that the regulatory pressure is real and not going away.

What Discord should have learned but might not have fully internalized: users need transparency and choice before invasive tech gets implemented, not after backlash forces retreats.

The broader problem persists. Platforms globally need age verification to comply with regulations. Users don't want invasive data collection. Regulators aren't providing clear standards. Vendors are experimenting with different approaches. Nobody's happy, but everyone has legitimate reasons for their position.

This situation will repeat with other platforms, other vendors, and other regions until something breaks the cycle. That could be regulatory standards that define acceptable age verification. It could be technical innovations that make privacy-preserving verification possible. It could be courts deciding that current approaches violate privacy laws.

For now, expect more cycles of announcement, backlash, and adjustment. Discord's situation established precedent: users will fight invasive age verification, and companies will listen if the pressure is public and organized enough.

The real work—figuring out age verification that's both effective and respects user privacy—hasn't even started yet.

Related Topics Worth Exploring

If you're interested in digital privacy, platform regulation, and identity verification, these topics connect to the Discord situation:

- Facial Recognition Technology and Privacy: How biometric data collection works, risks, and regulatory limitations across different countries

- GDPR Compliance for Age Verification: Specific regulations on children's data and how they conflict with age assurance requirements

- Platform Governance and User Power: How organized user pressure actually influences tech company decisions

- Alternative Age Verification Methods: Zero-knowledge proofs, homomorphic encryption, and other emerging technologies that might solve this problem

- Regulatory Trends in Digital Child Protection: How different countries are approaching child safety online without creating surveillance infrastructure

Key Takeaways

- Discord quietly ended its limited Persona age verification test in the UK after intense user backlash over facial recognition and biometric data collection concerns

- Security researchers discovered exposed Persona code at what appeared to be government-related endpoints, raising legitimate questions about government data access

- The core problem remains unsolved: regulators demand effective age verification while users demand zero surveillance—these aren't compatible

- Discord pivoted to the vendors k-ID and Veratad, but this solves nothing; it just splits the problem across multiple companies instead of addressing underlying privacy tensions

- Every major platform (Reddit, TikTok, Roblox, YouTube) faces identical age verification challenges with no industry standard yet established, suggesting years of continued friction ahead
