Ethics-First Strategy for Tech Leaders in 2026

The new year arrives with the same predictable ritual. New growth targets. Aggressive expansion plans. Quarterly revenue projections. But here's what most leaders skip: the harder questions about what happens when things scale.

What are the unintended consequences of growing faster? Which voices are we missing from the conversation? Where might we hurt people we're trying to help? These aren't philosophy questions. They're operational ones.

Most founders treat ethics like they treat compliance—a box to check, a document to sign, a policy to file away. Get it done. Move on. Build faster.

That approach breaks under pressure. By the time ethical issues become obvious, the systems creating them are already entrenched. Decisions made early—about data handling, user interaction, algorithmic behavior, vulnerability monetization—calcify into infrastructure that's expensive and politically difficult to change.

The alternative is treating ethics the way you'd treat security or architecture. Not as an afterthought. Not as a constraint on growth. But as the infrastructure that makes sustainable growth possible.

This isn't theoretical. Hundreds of technology companies, from mental health platforms to AI systems, are building formal ethics frameworks into their operations right now. What they've learned offers a practical playbook for any founder asking harder questions in 2026.

TL;DR

- Ethics frameworks reduce risk: Companies with formal ethics review processes experience 40% fewer product crises related to safety and user harm

- Early implementation matters: Establishing ethics infrastructure before problems arise prevents costly system redesigns and regulatory penalties

- Operational, not abstract: Modern ethics teams make real-time decisions about product development, user safety, and data handling

- Independence is critical: Nonconflicted ethics review boards catch blind spots that founders and leadership teams consistently miss

- Frameworks beat judgment calls: Defined stopping rules and audit processes replace ad-hoc decision-making with consistent, documented processes

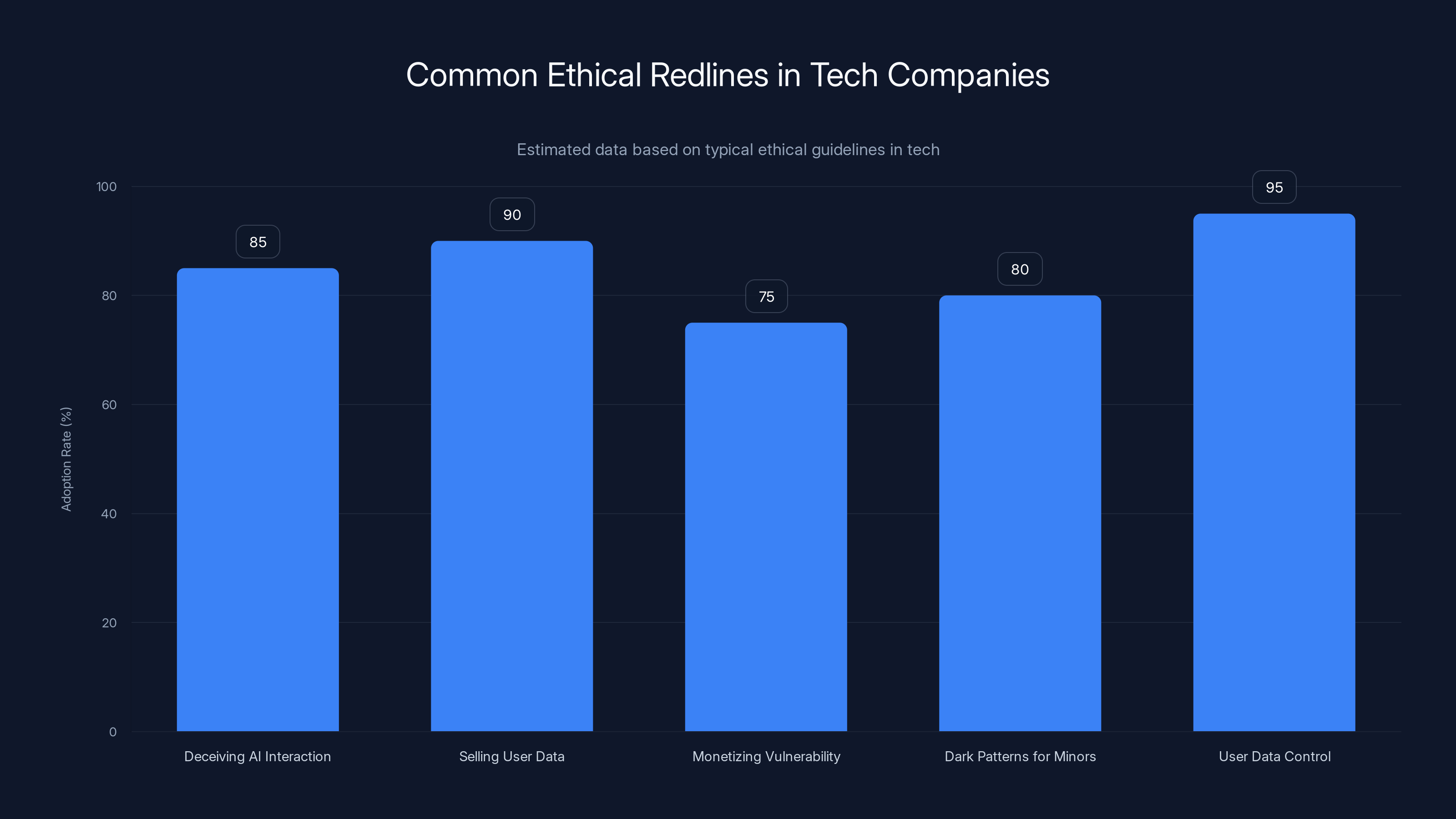

Estimated data shows that most tech companies adopt ethical redlines, with user data control being the most commonly implemented at 95%.

Why Ethics Became Infrastructure, Not Afterthought

Talk to founders who've experienced a product safety crisis and they'll tell you the same thing: they saw the warning signs. Someone flagged the risk. A team member raised concerns. But the company moved forward anyway because growth momentum, investor pressure, or strategic urgency overrode caution.

The problem isn't that founders are unethical. It's that individual judgment calls aren't reliable under pressure. A CEO making a high-stakes decision when the board is watching, when competitors are moving faster, when a partnership depends on approval, isn't thinking clearly about downstream consequences. They're thinking about quarterly targets.

That's not a character flaw. It's a structural problem.

When you embed ethics into systems instead of relying on individual judgment, something shifts. The decision-making burden moves from individuals to processes. A founder no longer has to decide in isolation whether something is acceptable. There's a framework. There are people specifically tasked with catching what you might miss. There's documentation of the reasoning.

This matters most in companies working with vulnerable populations. If your product affects teenagers, people with mental health challenges, low-income users, or anyone in a precarious situation, the ethical stakes are higher. You can't move fast and break things when those things are people.

But even companies in less sensitive spaces—SaaS platforms, developer tools, productivity software—benefit from the same principle. Ethics infrastructure catches problems early. It prevents expensive mistakes. It builds user trust. It insulates the company from regulatory surprises.

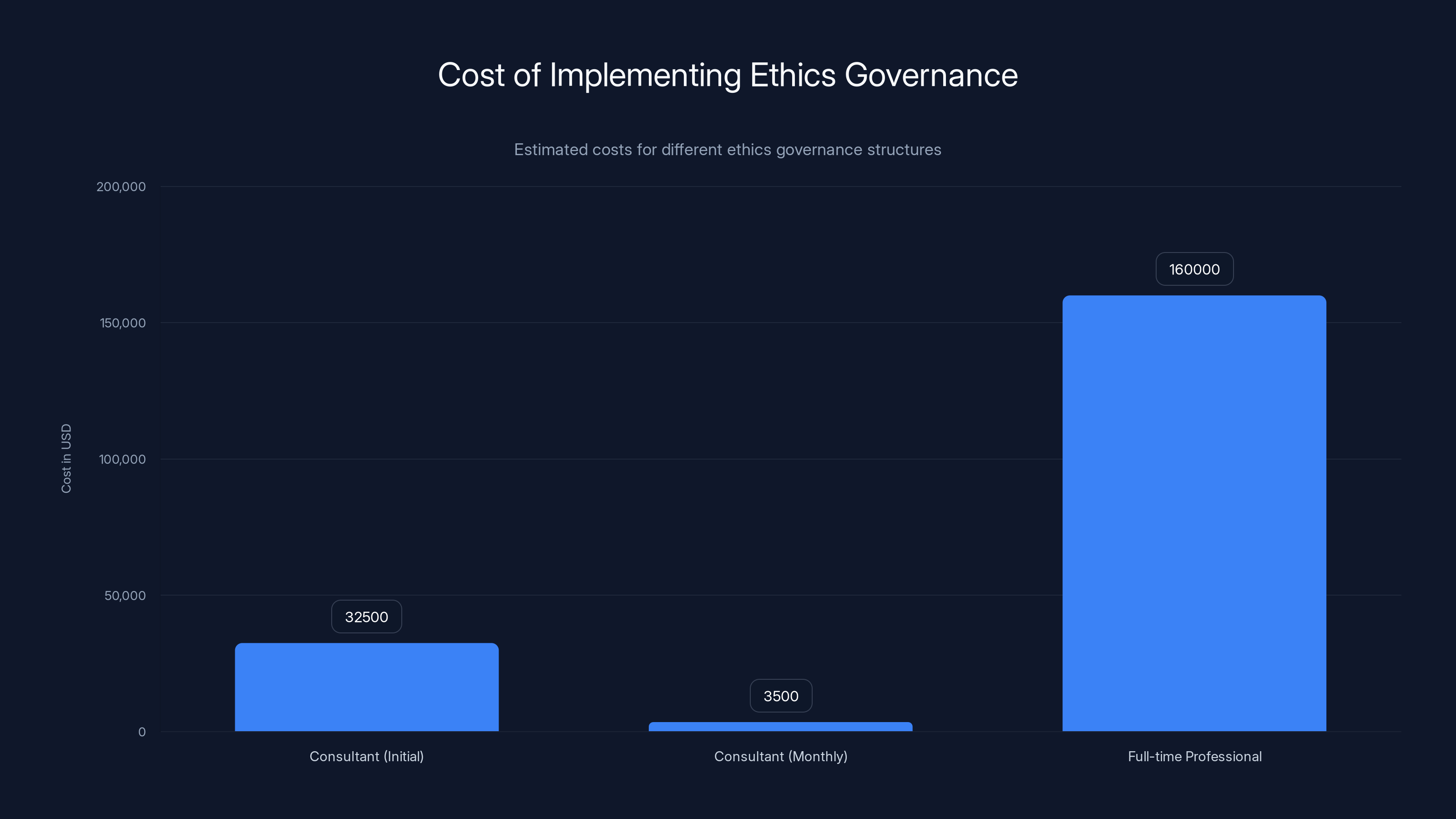

Implementing ethics governance can vary significantly in cost, ranging from

Building Your First Ethics Framework: The Three-Part Foundation

Most founders delay building ethics infrastructure because they imagine it requires hiring philosophers, extensive bureaucracy, and processes that slow everything down. The reality is simpler and messier.

Start with three foundational pieces that any company can implement within weeks.

Part One: Defining Explicit Redlines

A redline is something you will never do. Not if it helps you grow faster. Not if the board pressures you. Not if a major partner contract depends on it. Never.

Redlines sound abstract until you write them down. Then they become operational constraints.

Consider concrete redlines like these:

- Never deceive users about AI interaction: If a user is talking to an AI system, they know they're talking to an AI system. No deception about nature or capability.

- Never sell or broker user data: User data serves the user's needs, not your revenue expansion.

- Never run experiments designed to monetize vulnerability: Don't deliberately test whether users experiencing distress are more likely to spend money or share data.

- Never implement dark patterns targeting minors: Navigation, design, and interaction patterns are never deliberately manipulative, especially for young users.

- Never eliminate user control over their own data: Users can always export, delete, or migrate their information.

These aren't vague principles. They're boundaries. When your product team comes to you with an idea, the first question becomes: does this cross any redline? If yes, it's a no. The conversation ends there.
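To make the "does this cross any redline?" question concrete, here is a minimal sketch of a redline check. The `Redline` enum mirrors the five example redlines above; the `Proposal` record and the `redline_check` function are hypothetical names introduced for illustration, not part of any existing tool.

```python
from dataclasses import dataclass
from enum import Enum


class Redline(Enum):
    """The five example redlines from the list above."""
    DECEIVE_ABOUT_AI = "Never deceive users about AI interaction"
    SELL_USER_DATA = "Never sell or broker user data"
    MONETIZE_VULNERABILITY = "Never run experiments designed to monetize vulnerability"
    DARK_PATTERNS_MINORS = "Never implement dark patterns targeting minors"
    REMOVE_DATA_CONTROL = "Never eliminate user control over their own data"


@dataclass(frozen=True)
class Proposal:
    """Hypothetical record a product team fills in before work starts."""
    title: str
    crosses: frozenset[Redline] = frozenset()


def redline_check(proposal: Proposal) -> tuple[bool, list[str]]:
    """Return (allowed, reasons). Any crossed redline means the answer is no."""
    reasons = sorted(r.value for r in proposal.crosses)
    return (len(reasons) == 0, reasons)


ok_proposal = Proposal("Refactor export pipeline")
bad_proposal = Proposal(
    "Sell anonymized usage data to a partner",
    crosses=frozenset({Redline.SELL_USER_DATA}),
)

print(redline_check(ok_proposal))
print(redline_check(bad_proposal))
```

The check deliberately has no override parameter. If a redline is crossed, the function returns a no along with the reason, and there is nothing to negotiate.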

Writing redlines down matters because it makes them real. They're not implicit cultural values that decay over time as the company grows and new people join. They're documented commitments the company made publicly, sometimes to users, sometimes to investors, sometimes to regulators. Breaking a public redline carries costs beyond just the immediate decision.

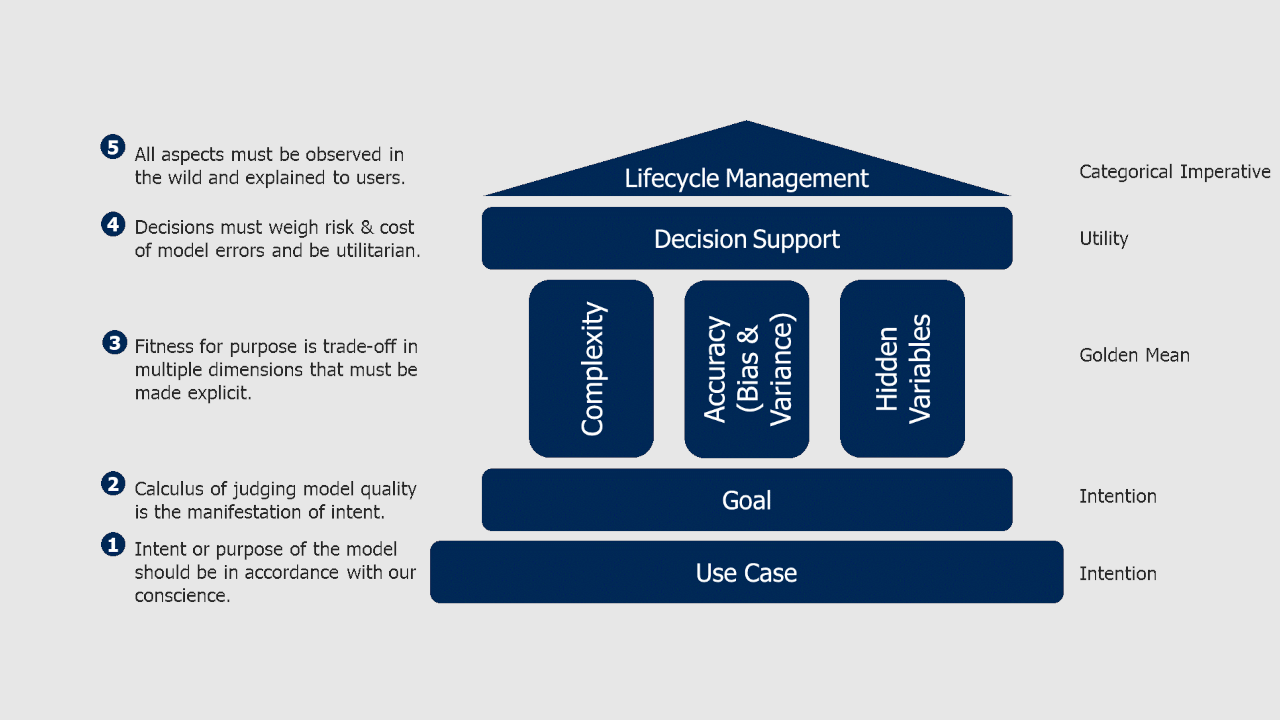

Part Two: Building a Consistent Review Process

Once you've defined redlines, embed ethics review into how work actually starts—not how it ends.

Instead of building a product, launching it, and then asking ethics questions, flip the sequence. Every new product idea, feature, research initiative, or business partnership begins with the same simple question: does this cross any of our redlines?

If the answer is no, you move to risk assessment. Is this a minor iteration on something you've done before, or something genuinely new? Could someone be worse off if this fails? Are you introducing uncertainty into a system or operating within known boundaries?

Low-risk work moves quickly. Someone changes a UI element. A team optimizes an algorithm for speed. A backend system gets refactored. Redline crossed: no. Risk level: low. Approval: immediate.

Higher-risk work triggers deeper review. Suppose you're building a new feature that affects how your platform measures user progress toward recovery. Or you're launching an AI recommendation system that influences user choices. Or you're collecting a new data category that you've never handled before.

That's not a quick redline check. That's a deeper review process. Your internal ethics team (or an external partner, if you don't have internal expertise) examines the proposal. They ask hard questions about unintended consequences. They consult external advisors. Sometimes they convene an independent ethics board—people with no financial or career stake in approving what you want to build.

That independence matters enormously. An ethics board composed of company employees, board members, and investors has structural incentives to approve things. They want the company to succeed. Independence removes that bias.

A nonconflicted ethics review doesn't exist to say yes. It exists to surface blind spots. And founders are structurally bad at seeing their own blind spots, no matter how thoughtful they are. You can't see what you can't see. External perspectives catch what internal thinking misses.
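The triage described in this part can be sketched as a small routing function. This is one possible encoding, not a standard: the `Intake` fields paraphrase the risk questions above, and the rule that any single risk signal triggers deeper review is an assumption you would tune to your own context.

```python
from dataclasses import dataclass
from enum import Enum


class ReviewPath(Enum):
    REJECT = "reject: crosses a redline"
    FAST_TRACK = "approve immediately"
    DEEP_REVIEW = "deeper ethics review"


@dataclass(frozen=True)
class Intake:
    """Hypothetical intake answers for a new piece of work."""
    crosses_redline: bool
    genuinely_new: bool                  # not a minor iteration on prior work
    could_leave_someone_worse_off: bool  # someone is harmed if this fails
    introduces_uncertainty: bool         # operates outside known boundaries


def triage(intake: Intake) -> ReviewPath:
    # The redline check always comes first and ends the conversation.
    if intake.crosses_redline:
        return ReviewPath.REJECT
    # Assumption: any single risk signal is enough to trigger deeper review.
    if (intake.genuinely_new
            or intake.could_leave_someone_worse_off
            or intake.introduces_uncertainty):
        return ReviewPath.DEEP_REVIEW
    return ReviewPath.FAST_TRACK


ui_tweak = Intake(False, False, False, False)
new_data_category = Intake(False, True, True, True)

print(triage(ui_tweak).value)
print(triage(new_data_category).value)
```

Who performs the deeper review (internal team, external partner, or independent board) is intentionally left out of the sketch, since that depends on how your ethics function is staffed.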

Part Three: Defining Stopping Rules and Audit Processes

The third piece often gets overlooked because it requires discipline to implement when pressure is high.

For higher-risk work, define stopping rules before anything goes live. If certain signals appear—unexpected distress, degraded outcomes, increased dropout rates, higher support burden—you pause or shut the initiative down. Not negotiate. Not wait and see. Not suggest we monitor the situation. Pause.

This requires committing in advance to what you won't tolerate. Suppose you launch an AI coaching system. Before launch, you define stopping rules: if users report increased anxiety in exit surveys, if the chat completion rate drops below a threshold, if support tickets related to confusion increase by more than 15%, you shut it down.

Writing these thresholds before launch matters because you won't write them fairly when the feature is live and showing good growth metrics. You'll rationalize. You'll reframe what "unexpected distress" means. You'll convince yourself that some metric doesn't really matter.

Pre-commitment removes that burden of judgment in the moment. The signal appears. The stopping rule triggers. You execute the plan you made when you were thinking clearly.
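As an illustration, the AI coaching example could be pre-committed in code along these lines. Only the 15% support-ticket threshold comes from the example above; the anxiety-rate and completion-rate thresholds, along with every class and field name, are placeholder assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)  # frozen: thresholds cannot be edited once written
class StoppingRules:
    max_anxiety_report_rate: float        # share of exit surveys reporting more anxiety
    min_chat_completion_rate: float       # floor for completed chats
    max_confusion_ticket_increase: float  # relative increase over the pre-launch baseline


@dataclass(frozen=True)
class Signals:
    anxiety_report_rate: float
    chat_completion_rate: float
    baseline_confusion_tickets: int
    current_confusion_tickets: int


def triggered_rules(rules: StoppingRules, s: Signals) -> list[str]:
    """Return the name of every stopping rule the current signals trip."""
    tripped = []
    if s.anxiety_report_rate > rules.max_anxiety_report_rate:
        tripped.append("anxiety_reports")
    if s.chat_completion_rate < rules.min_chat_completion_rate:
        tripped.append("chat_completion")
    base = s.baseline_confusion_tickets
    if base > 0:
        increase = (s.current_confusion_tickets - base) / base
    else:
        # Assumption: with no baseline, any ticket counts as an unbounded increase.
        increase = float("inf") if s.current_confusion_tickets > 0 else 0.0
    if increase > rules.max_confusion_ticket_increase:
        tripped.append("confusion_tickets")
    return tripped


def decide(rules: StoppingRules, s: Signals) -> str:
    # No "monitor the situation" branch: a tripped rule means pause.
    return "PAUSE" if triggered_rules(rules, s) else "CONTINUE"


# Written before launch. 0.05 and 0.60 are illustrative; 0.15 is the 15% from the text.
rules = StoppingRules(0.05, 0.60, 0.15)
healthy = Signals(0.02, 0.71, 40, 44)  # tickets up 10%
week3 = Signals(0.02, 0.71, 40, 48)    # tickets up 20%

print(decide(rules, healthy), triggered_rules(rules, healthy))
print(decide(rules, week3), triggered_rules(rules, week3))
```

Freezing the rules object is a small design choice that mirrors the point of pre-commitment: the thresholds are fixed when you are thinking clearly, and the live decision is reduced to comparing numbers.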

Paired with this is regular auditing of how well these processes actually work. You're not auditing individual features (though you do that separately). You're auditing the ethics process itself. Are ethics reviews actually happening? Are recommendations being taken seriously? Are stopping rules being honored? When they're violated, what pressure drove the violation?

This audit becomes your feedback loop. It tells you where the process is breaking down, where you need more support, where incentive structures are pushing people to circumvent ethics review.

The Operational Reality: How Ethics Review Actually Works Day-to-Day

Ethics frameworks sound good in theory. In practice, they have to fit into how engineering teams actually work. Otherwise they create friction and get bypassed.

Consider how this works operationally in a real product team.

A designer proposes a new notification system to re-engage inactive users. Instead of going directly to engineering, the proposal first goes through the ethics review gate. Questions asked: what would trigger these notifications? How frequently would a user receive them? Is there any risk that notifications exploit notification addiction, creating patterns that feel manipulative? Is the content potentially alarming or guilt-inducing?

This is a moderate-risk change. It's not a redline violation. But it's not low-risk either. So instead of a 30-minute engineering review, you get a 2-hour ethics consultation. The team discusses whether notification frequency should be rate-limited. Whether users should have fine-grained control. Whether the re-engagement copy should be audited by someone who specializes in persuasion patterns.

Result: the notification system ships with different defaults. Users get fewer notifications. Notifications are easier to disable. Copy is clearer about what users are re-engaging with.

Is this slower than shipping the original version? Yes. By maybe 2 weeks. Does it cause the product to fail? No. Does it reduce the risk of users feeling manipulated? Significantly.

Here's the counterintuitive part: once this process becomes routine, it actually speeds up overall decision-making.

With clear ethics processes, engineers don't have to wonder whether raising a concern will damage their career. They can flag something and it goes through the system. Leaders don't have to make high-stakes judgment calls in isolation when pressure is highest. There's a framework. Fewer decisions spiral into crises. Fewer choices become emergencies requiring rapid response at 3 AM.

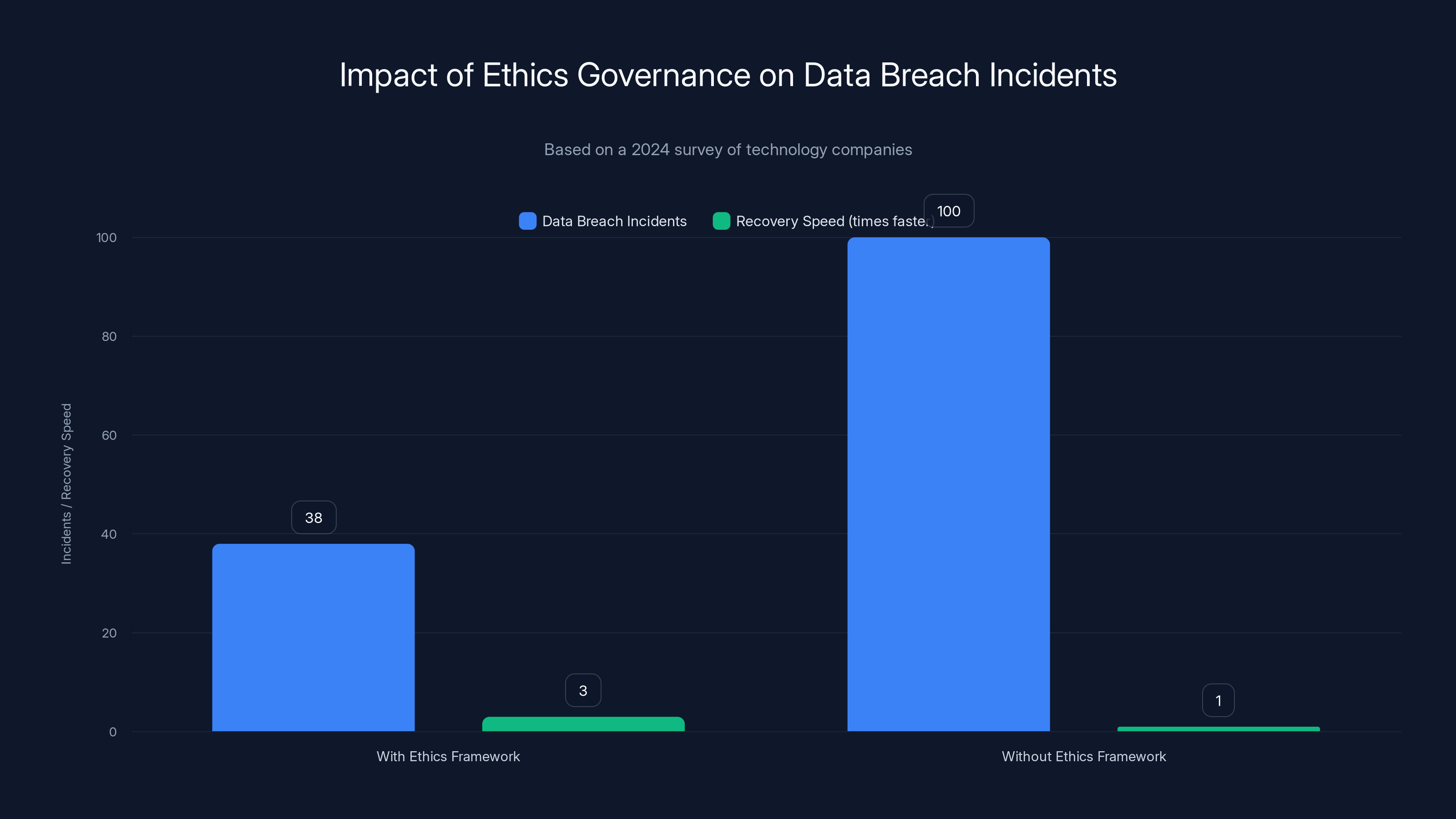

Companies with formal ethics governance frameworks experienced 62% fewer data breach incidents and recovered 3 times faster when issues occurred, highlighting the importance of ethics as infrastructure.

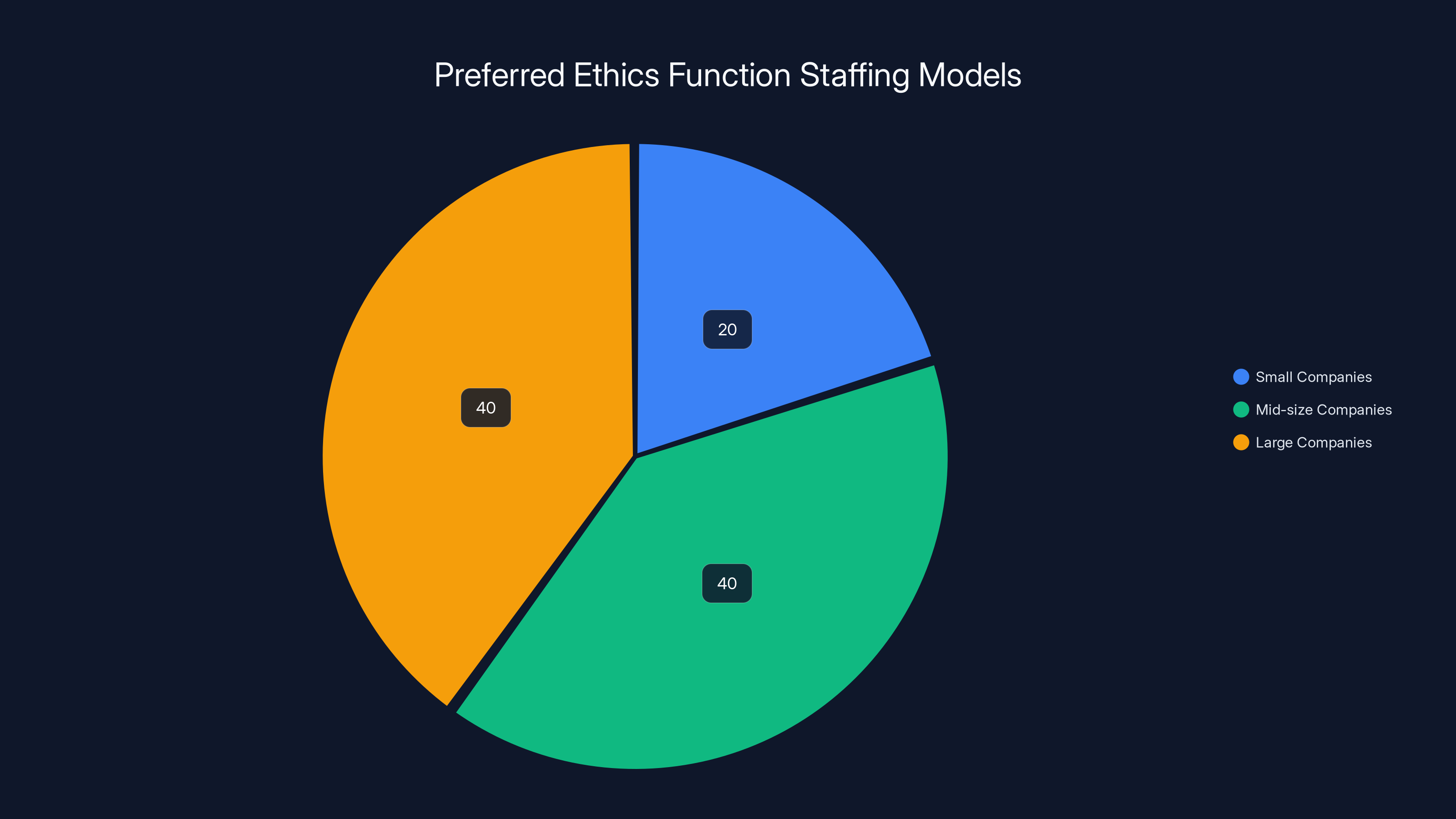

Staffing Your Ethics Function: Internal, External, or Hybrid?

Most founders ask the wrong question: do I need an ethics officer? The better question is: what ethics expertise does my company need, and how do I access it?

Small companies often can't justify a full-time ethics hire. But you can partner with an ethics consulting firm (many specialize in specific domains—healthcare tech, financial services, social platforms). You bring them in to help you design your framework, train your team, and staff early advisory reviews.

Mid-size companies often go hybrid. You hire someone with ethics expertise part-time or full-time to manage processes internally. They handle day-to-day reviews, train teams, manage documentation. You also contract with external advisors for higher-risk decisions and quarterly audits of whether the process itself is working.

Larger companies build full ethics teams. These teams include people with backgrounds in philosophy, data science, law, policy, psychology, and domain expertise relevant to your products. They're not separate from engineering. They're embedded in product development, design, and research.

What matters most isn't the structure. It's the independence and expertise. If your ethics review is staffed entirely by people whose promotions depend on product success, it won't work. They have structural incentives to approve things. You need at least some portion of ethics work happening through people with no financial stake in outcomes.

This is why external boards matter. You're paying them specifically not to care about your growth targets. They can say no without worrying about budget impact on their team, their advancement, or their job security.

Risk Landscapes: How to Identify What Matters Most in Your Specific Context

Not all ethical risks are equal. A productivity tool has different exposure than a platform serving minors. A developer API has different considerations than a mental health application.

Start by mapping your specific risk landscape. Ask: who uses our product? What decisions do they make based on our data or recommendations? What could go wrong? Who gets hurt if things go wrong?

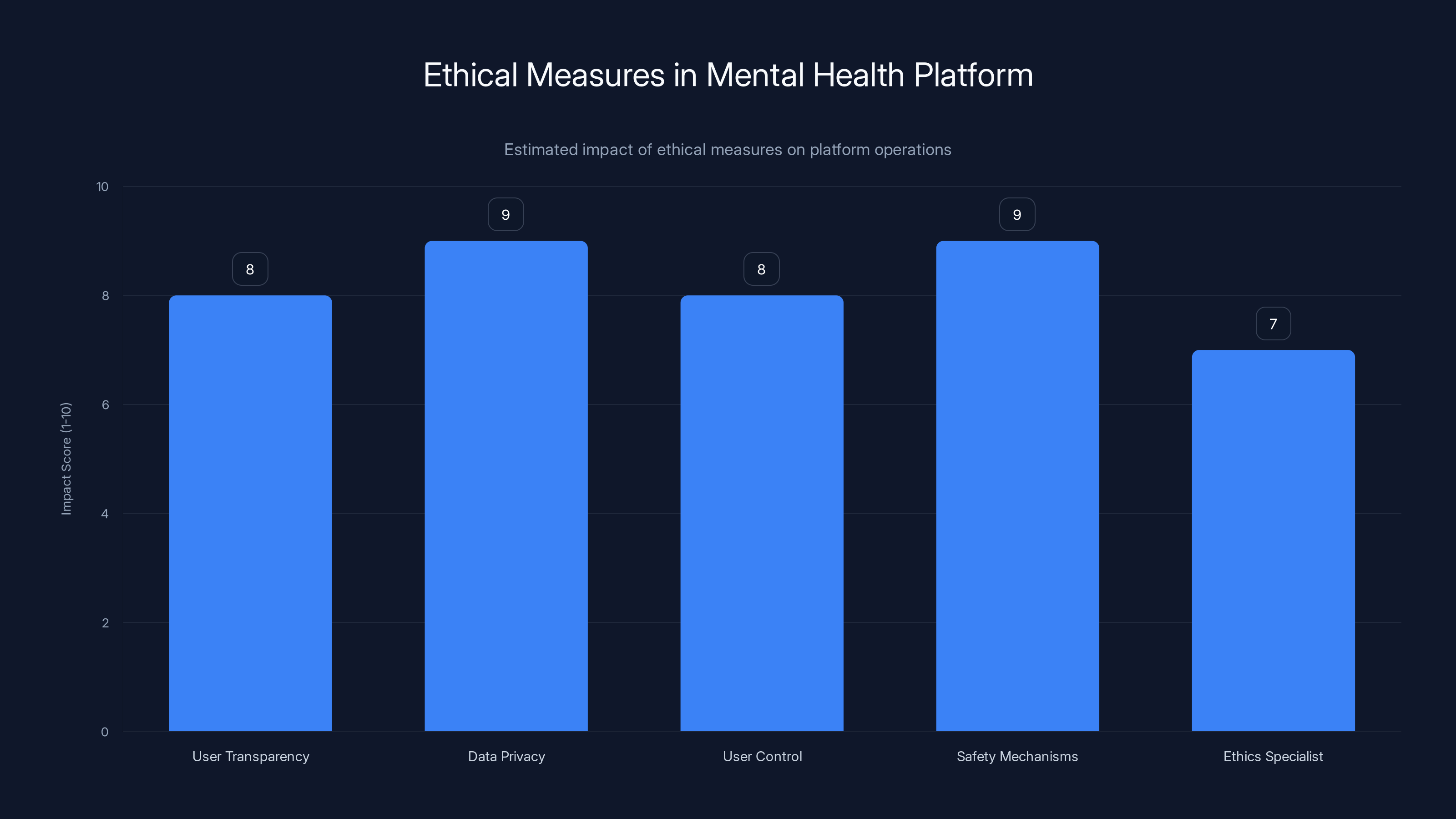

For a mental health platform, the risk landscape looks like:

- Safety risks: Could our algorithm miss someone in crisis? Could our recommendations make someone's condition worse? Could our platform be used for self-harm or to manipulate others?

- Privacy risks: Could our data handling be compromised in ways that expose sensitive health information?

- Experimental risks: Could we inadvertently run harmful experiments on vulnerable users?

- Recommendation risks: Could our matching algorithms create unhealthy relationships or dependencies?

For a developer platform, the risk landscape looks different:

- Security risks: Could our platform be compromised to impact downstream users of products built on it?

- Data governance risks: Do we have clear policies about what we do with code and proprietary information developers share?

- Bias risks: Could our AI-powered code completion tools introduce bugs or biased patterns?

- Competition risks: Could we use our platform position to unfairly advantage our own products?

Mapping this landscape tells you where to focus ethics effort. It's not ethics everywhere equally. It's ethics concentrated on the decisions most likely to cause harm.

Then layer in the regulatory landscape. What are regulators expecting in your domain? What are users beginning to expect? What are competitors doing that's setting new standards?

This combination—your specific risk profile, regulatory context, user expectations, and competitive environment—tells you what your ethics framework needs to cover.

Estimated data shows high impact scores for ethical measures implemented by the mental health platform, highlighting their importance in maintaining user trust and safety.

Common Implementation Mistakes and How to Avoid Them

Founders who build ethics frameworks usually make a few predictable mistakes. Knowing them in advance lets you avoid unnecessary friction.

Mistake One: Making Ethics a Veto Function

If ethics review becomes a gate where the ethics team says no and kills initiatives, the system breaks. Teams start bypassing ethics review. They don't bring things for consultation until they're already committed. They frame things differently to avoid triggering deep review.

Ethics works better as a guidance function. The ethics team helps shape how things are built, not whether they get built. Instead of vetoing a re-engagement notification system, ethics review helps design it in a way that's more user-respectful. Instead of saying no to AI recommendations, ethics review helps specify what safety rails the recommendation system needs.

This shift in framing—from veto power to design guidance—keeps ethics integrated into product development instead of becoming an adversarial constraint.

Mistake Two: Building Processes Without Training

Your product team doesn't intuitively understand how to participate in ethics review. If you implement a process without training, you get theater—people going through motions without real engagement.

Effective ethics frameworks include training on what constitutes an ethical risk, how to think through second-order consequences, how to design features responsibly, and how to communicate with external reviewers.

This training isn't a one-time session. It's built into onboarding for new team members. It's revisited when you add new risk domains. It's reinforced through documentation and internal communication.

Mistake Three: Letting Process Atrophy Without Audit

Once you build an ethics framework, it naturally erodes over time. New team members don't understand why certain constraints exist. Growth pressure increases. People find workarounds.

Regular audit—maybe quarterly or semi-annually—checks whether the process is actually happening. Are ethics reviews occurring? Are stopping rules being honored? Where is the process breaking down? What's changed since you built the framework?

This audit doesn't need to be expensive or elaborate. It can be a simple check: did we review this decision? Was external input sought? Were stopping rules documented and honored?
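A simple check like this can live in a short script. The sketch below assumes a hypothetical `DecisionRecord` log and treats external input and stopping rules as expected only for high-risk work; both the record shape and that rule are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRecord:
    """Hypothetical log entry for one product decision."""
    name: str
    high_risk: bool
    reviewed: bool
    external_input: bool
    stopping_rules_documented: bool
    stopping_rules_honored: bool  # True if no triggered rule was ignored


def audit_gaps(record: DecisionRecord) -> list[str]:
    """List where the ethics process was skipped for this decision."""
    gaps = []
    if not record.reviewed:
        gaps.append("no ethics review")
    # Assumption: external input and stopping rules apply to high-risk work only.
    if record.high_risk:
        if not record.external_input:
            gaps.append("no external input")
        if not record.stopping_rules_documented:
            gaps.append("stopping rules not documented")
        elif not record.stopping_rules_honored:
            gaps.append("stopping rules not honored")
    return gaps


records = [
    DecisionRecord("UI copy tweak", False, True, False, False, False),
    DecisionRecord("AI coaching launch", True, True, False, True, False),
]

for record in records:
    print(record.name, "->", audit_gaps(record) or "no gaps")
```

The output is a list of gaps per decision rather than a pass/fail score, because the useful part of the audit is seeing where the process breaks down.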

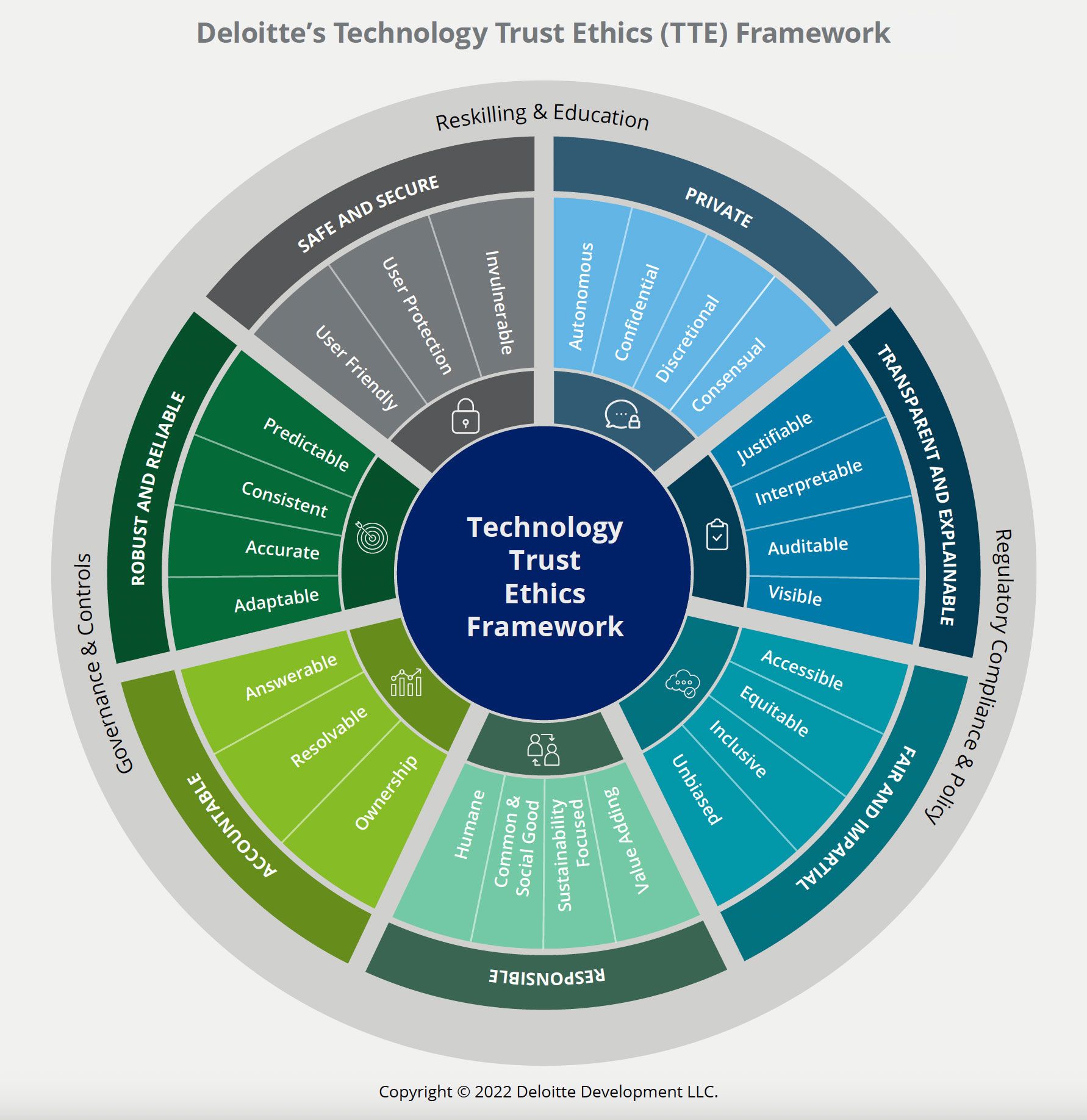

Mistake Four: Confusing Ethics Review with Compliance Review

Compliance is about meeting legal requirements. Ethics is broader. It's about the impact your decisions have on people. Sometimes compliance and ethics align. Sometimes they don't.

A practice might be fully compliant with privacy law but ethically questionable because it feels invasive or manipulative to users. Ethics review catches that. Conversely, something might be fully ethical but run into regulatory headwinds depending on jurisdiction.

Keep them separate. Have different teams or at minimum different processes. Compliance reviews what's legally required. Ethics reviews what's right, even when the law doesn't require it.

Case Study: Real Implementation in a Sensitive Domain

Mental health and well-being platforms face some of the highest ethical stakes. They work with people experiencing vulnerability. Decisions about what content to show, how to match users with peers, and what to recommend all carry real consequences.

One platform serving young people took the ethics-first approach and documented what that looked like operationally.

They started by defining five core redlines:

- Never deceive users about interacting with AI

- Never run experiments designed to monetize vulnerability

- Never use dark patterns or manipulative design targeting minors

- Never sell or broker user data

- Never remove user control over their own data

They hired an external ethics consulting firm to help design the review process. Then they embedded one full-time ethics specialist into the product team.

For the first year, almost every new feature triggered deeper review. Why? Because mental health is inherently risky. They couldn't assume that something that worked for a wellness app would work for crisis support. They needed to think through failure modes carefully.

One telling example: they were building a matching algorithm to connect users with peer supporters. The question wasn't just "does this work technically?" It was "could this matching create unhealthy relationships? Could it exploit vulnerable users? Could it fail in ways that harm people?"

Instead of shipping a basic algorithm, they embedded multiple safety mechanisms:

- Users could see exactly why they were matched with someone

- Users could opt out and request different matches

- Relationships between users were time-limited and regularly re-assessed

- Moderators monitored for exploitation patterns

- Regular surveys checked whether matched relationships felt healthy

Was this more complex than a simple algorithm? Yes. Did it cost more in engineering time? Yes. Did it mean some features shipped later? Yes.

But it also meant that when issues did arise—and they always arise with real human platforms—they caught them early. Because they had monitoring and audit mechanisms built in, they noticed patterns before they became crises.

Over three years, they experienced zero major safety incidents related to peer matching. Competitors in the same space experienced multiple high-profile problems that triggered regulatory scrutiny, user backlash, and expensive redesigns.

The ethics investment wasn't about being nice. It was about reducing expensive problems.

Estimated data shows that small companies often rely on external ethics consultants, mid-size companies use a hybrid model, and large companies build full internal ethics teams.

The Regulatory Landscape: What's Changing in 2026

Governments worldwide are moving toward requiring ethics governance as part of AI regulation. But it's not just AI. Data privacy regulations increasingly expect companies to think through downstream consequences of how they handle information.

The trend is clear: doing ethics casually isn't viable anymore. Regulators want to see evidence that companies thought through risks, had mechanisms to catch problems, and documented why decisions were made.

This creates either a burden or an opportunity depending on how you see it. If you're building ethics into your operating model now, regulations that arrive in 2026, 2027, and beyond become easier to satisfy. If you're still treating ethics as optional, you'll be scrambling to retrofit frameworks when rules change.

Most regulations expect evidence of three things:

- Pre-commitment: Before launching something risky, did you think through consequences and document your reasoning?

- Mechanisms for feedback: If things go wrong, do you have ways to catch it and respond?

- Transparency: Can you explain your decisions to regulators and users?

Ethics frameworks built the way we've described deliver evidence on all three points. You have documented redlines and risk assessments. You have stopping rules and audit processes. You have a paper trail explaining why decisions were made.

This matters practically when a regulator investigates a problem. You can show: here's our process, here's what we were thinking, here's how we caught the issue, here's what we did. That's much stronger than "yeah, we didn't really think about it."

Building Culture Around Ethical Decision-Making

Frameworks and processes only work if they're supported by culture. If your company treats ethics like box-checking, people treat it that way. If your company treats ethics like part of good product development, people approach it differently.

Culture shifts happen through small repeated behaviors.

When a founder says "let's pause this feature to get ethics input" instead of "let's ship it and see what happens," that's culture. When an engineer flags a concern and gets listened to instead of dismissed, that's culture. When a stopping rule gets triggered and you actually stop instead of rationalizing, that's culture.

Culture also shifts through who you promote and who you hire. If you promote someone who cut ethical corners to meet targets, you've sent a message. If you hire people specifically because they've successfully implemented ethics frameworks elsewhere, you've sent a different message.

It also shifts through how you talk about failures. When something goes wrong, do you investigate what ethics process broke down? Or do you just fix the bug and move on? When a stopping rule gets violated, do you understand why the pressure was so high that it got violated? Or do you just blame the people involved?

Ethics-first culture comes from leadership. It's not something you can cascade down or outsource. It has to be something the founder and executive team genuinely believe in.

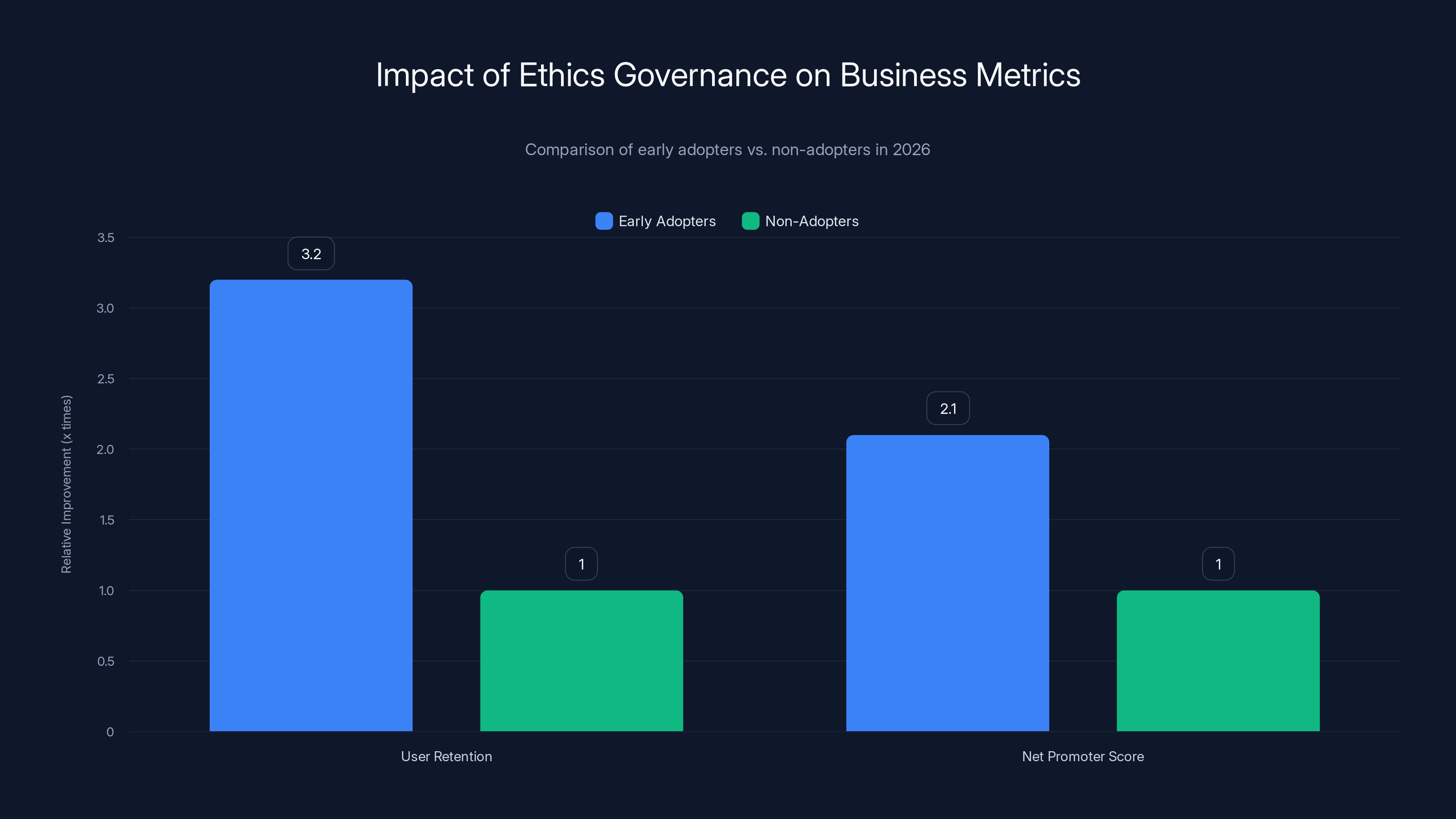

Early adopters of ethics governance frameworks report 3.2 times higher user retention and 2.1 times higher Net Promoter Scores compared to non-adopters, highlighting the competitive advantage of prioritizing ethics.

Metrics and Measurement: How Do You Know It's Working?

Ethics frameworks are easy to implement as theater and harder to implement well. How do you know whether yours is actually working?

Direct measurement is hard. You can't directly measure prevented harm (the thing that didn't happen). But you can measure leading indicators.

Process metrics tell you whether frameworks are actually being used:

- What percentage of new features go through ethics review?

- How many high-risk decisions went to external review?

- Are stopping rules being documented before launch?

- How often are stopping rules triggered and honored?

Outcome metrics tell you whether frameworks are preventing problems:

- How often do ethics reviews surface concerns that change product decisions?

- When stopping rules are triggered, what would have happened if we'd continued?

- What's the ratio of internally-caught issues to externally-surfaced problems?

- How satisfied are users with how the product handles sensitive decisions?

Culture metrics tell you whether people actually believe in this:

- Do people feel comfortable raising concerns without career risk?

- Does ethics guidance get treated as serious or as bureaucracy?

- How often do teams proactively bring questions to ethics review?

- When ethics and growth conflict, which wins?

The last question is the real test. If ethics always loses when under growth pressure, your system isn't real. It's for show.

Measuring these metrics doesn't require elaborate systems. You can track them in a spreadsheet. What matters is that you're tracking them at all, so you know where the process is breaking down.
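If a spreadsheet feels too manual, the same counts fit in a few lines of code. This sketch computes three of the indicators listed above from a hypothetical `QuarterLog`; the field names and the sample numbers are made up for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class QuarterLog:
    """Hypothetical quarterly counts, entered by hand or exported from a tracker."""
    features_shipped: int
    features_reviewed: int
    high_risk_decisions: int
    high_risk_with_external_review: int
    issues_caught_internally: int
    issues_surfaced_externally: int


def pct(part: int, whole: int) -> float:
    """Percentage rounded to one decimal; 0.0 when there is nothing to count."""
    return round(100 * part / whole, 1) if whole else 0.0


def process_metrics(log: QuarterLog) -> dict[str, Optional[float]]:
    # Ratio is undefined when nothing surfaced externally, so report None.
    ratio = (
        log.issues_caught_internally / log.issues_surfaced_externally
        if log.issues_surfaced_externally
        else None
    )
    return {
        "review_coverage_pct": pct(log.features_reviewed, log.features_shipped),
        "external_review_pct": pct(
            log.high_risk_with_external_review, log.high_risk_decisions
        ),
        "internal_to_external_ratio": ratio,
    }


q1 = QuarterLog(40, 34, 6, 5, 9, 3)  # illustrative numbers only
print(process_metrics(q1))
```

The culture metrics, including which side wins when ethics and growth conflict, do not reduce to counts like these and still need surveys and honest conversation.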

Why This Matters Beyond Risk Reduction

Most of the case for ethics frameworks focuses on risk reduction. Avoid harm. Prevent crises. Reduce regulatory surprise. These are all true and important.

But there's another benefit that often surprises people.

Building ethics frameworks helps you think more clearly. It replaces ambiguity with constraints and anxiety with process.

As a founder, you carry enormous responsibility. You make decisions that affect thousands of people. Some of those decisions involve tradeoffs between different goods. Others involve genuine uncertainty about consequences.

Without ethics frameworks, this responsibility is paralyzing. You second-guess yourself. You worry about what you missed. You feel the weight of unknowns.

With ethics frameworks, you've done the thinking. You've consulted experts. You've documented your reasoning. You've built in mechanisms to catch problems. You know the limits of what you can prevent, but you've done what's reasonable.

That shift—from individual burden to systematic responsibility—allows leaders to actually sleep. You know there's a credible system designed to catch what you might miss. You know you've structured decision-making to reduce individual bias. You know you have people watching for the things you can't see.

That's not soft or nice. That's practical. You make better decisions when you're not paralyzed by uncontainable anxiety.

Looking Forward: What Changes in 2026 and Beyond

The trajectory is clear. Ethics governance is moving from optional to expected. What was a differentiator in 2024 becomes table stakes in 2026.

Expect to see:

- Increased regulatory pressure: Governments will require documentation of ethics processes, especially for companies working with minors, health data, or vulnerable populations. This won't just be nice to have. It'll be legally required.

- User expectations shifting: Users increasingly ask about ethics practices before using products. Privacy policies matter now. Ethics frameworks will matter more. Companies will compete on how transparent they are about decision-making.

- Investor focus: Venture capital and growth equity firms are increasingly asking about ethics frameworks during due diligence. Companies with solid ethics governance are lower-risk investments.

- Industry standards emerging: Consulting firms and industry associations will publish best practices. What counts as "good ethics governance" will become more defined and standardized.

- Professionalization of the field: Ethics expertise will become a clearer career path. Universities will offer relevant degrees. Ethics advisory will become a major consulting practice.

The companies ahead of this curve will have advantages: lower regulatory risk, stronger user trust, easier fundraising, and better decision-making.

Companies that treat it as optional will face surprise regulation, user backlash, and expensive retrofitting when they finally build frameworks.

Implementing in Your First 90 Days: A Practical Timeline

If this resonates and you want to start in your company, here's a realistic 90-day implementation timeline.

Weeks 1-2: Mapping and Planning

Convene your leadership team. Map your specific risk landscape. What are the decisions most likely to cause harm? Who do you serve? What are regulators expecting in your domain?

In short, ask three questions: What could go wrong? Who gets hurt? What are we responsible for preventing?

This isn't a major research project. It's structured conversation. Four hours of focused discussion gets you 80% of the way there.

Weeks 3-4: Defining Redlines

With your risk landscape clear, write 3-5 core redlines. Things you will never do. Write them clearly. Share them with your team. Get feedback. Refine them.

Don't overthink this. You can evolve them. The goal is to establish initial guardrails.

Weeks 5-8: Building Your Review Process

Design a simple review process. What questions do you ask about new work? What triggers deeper review? Who does that review?

If you have budget, bring in an external ethics consultant for this. They've done it before. They can help you avoid common mistakes. It's worth the investment.

If you don't have budget, use your network. Find someone with ethics expertise—maybe an advisor, maybe someone in your network who worked at a regulated company—and bounce your process design off them.

Weeks 9-12: Training and Launching

Train your team on the new process. Walk through examples. Explain why you're doing this. Address skepticism head-on.

Then start using it. First features go through the process. First stopping rule gets defined. First ethics review happens.

This isn't polished in week 12. It's messy. You'll realize things don't work. You'll adjust. That's fine. The goal isn't perfection. It's starting.

By month four you have the foundation. By month six it's operational. By month nine it's integrated. By year-end it's just how you work.

FAQ

What exactly is an ethics-first strategy?

An ethics-first strategy treats ethical considerations as core infrastructure rather than an afterthought. Instead of building products and then asking ethics questions, you integrate ethics review into how work starts. You define explicit redlines (things you'll never do), build consistent review processes for risky decisions, and establish stopping rules that pause or halt work if harm becomes apparent. The strategy prioritizes long-term sustainability and user trust over short-term growth velocity.

How is an ethics framework different from a compliance program?

Compliance focuses on meeting legal requirements in your specific jurisdiction. Ethics addresses what's right beyond what the law requires. A practice might fully comply with privacy law but still feel invasive or manipulative to users. An ethics framework catches that. Conversely, something might be ethically defensible but run into regulatory headwinds. Both matter, but they're distinct functions that work best when separate.

What size company needs formal ethics governance?

Formal ethics frameworks help companies of any size, but they become critical when your decisions affect vulnerable people or operate in regulated domains. A solo founder can implement basic ethics practices—write down redlines, establish a review question before launching risky features. Small companies often partner with external ethics consultants instead of hiring full-time. Medium and larger companies build dedicated functions. The size doesn't determine the need. The nature of your impact does.

How much does it cost to implement ethics governance?

It depends on structure. If you partner with an ethics consultant, expect

What should a company do if they discover past decisions violated their ethics framework?

First, don't panic. Companies learn by doing this work. Second, acknowledge it internally. Third, understand why the framework didn't prevent it: Was the process not followed? Was there a misaligned incentive? Was the framework incomplete? Fix the underlying cause. Fourth, communicate with affected users if appropriate. Fifth, adjust the framework to prevent recurrence. Companies that handle these moments thoughtfully often build stronger user trust than companies that never face them, because users see genuine accountability.

How do ethics frameworks affect product speed?

Initially, they feel slower. Ethics review takes time. Stopping rules sometimes mean pausing features that have already launched. But over time, they often accelerate development because fewer decisions spiral into crises. Crisis response is slow: fixing problems, managing damage, rebuilding trust. Ethics frameworks prevent many crises before they start. Companies report that the first year feels slower but years two and three feel faster because decision-making is clearer and there are fewer emergency interruptions.

Can external ethics boards actually be independent if I'm paying them?

Yes, if you structure it right. Nonconflicted ethics boards work because their reputation depends on honest assessment, not on pleasing you. They lose credibility if they rubber-stamp bad decisions. You're paying for their expertise and integrity, not their approval. The independence comes from their having no financial stake in your product's success, since their payment is fixed regardless of outcomes. It also comes from documentation. Board members know their reasoning will be audited.

What happens if an ethics review recommends not launching something the board is pushing for?

This is where the difference between real ethics and ethics theater matters. In real systems, this happens occasionally and the recommendation is taken seriously. Sometimes the business adapts the feature to address ethics concerns. Sometimes launch gets delayed. Sometimes the decision moves up to the CEO, who then decides whether to override the ethics recommendation, and if so, documents why. What doesn't happen in real systems is ethics recommendations being consistently overridden. If that's happening, your ethics function isn't real.

How do I know if my ethics framework is actually working?

Track leading indicators: What percentage of new features go through ethics review? Are stopping rules being defined and honored? How many ethics reviews surface concerns that change decisions? Ask your team quarterly: "Did we stop or significantly change anything because of ethics considerations?" If the answer is consistently "no," your process isn't real. Also track culture: Do people feel comfortable raising concerns? Does ethics guidance feel serious or like bureaucracy? These indicators together tell you whether the framework is operational.
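The first three indicators are simple ratios, so they can be computed from a basic log of the quarter's features. The following is a minimal sketch under stated assumptions: the `FeatureRecord` fields and the metric names are hypothetical, and the culture questions still need to be asked in person or by survey.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class FeatureRecord:
    """One feature from the quarter (all fields are hypothetical)."""
    name: str
    reviewed: bool
    stopping_rule_defined: bool
    review_changed_decision: bool


def leading_indicators(records: list[FeatureRecord]) -> dict[str, float]:
    """Compute review coverage, stopping-rule coverage, and change rate."""
    total = len(records)
    if total == 0:
        # No features logged yet, so report zeros instead of dividing by zero.
        return {"review_coverage": 0.0, "stopping_rule_coverage": 0.0, "change_rate": 0.0}
    reviewed = [r for r in records if r.reviewed]
    changed = sum(r.review_changed_decision for r in reviewed)
    with_rule = sum(r.stopping_rule_defined for r in records)
    return {
        # Share of all features that went through ethics review.
        "review_coverage": len(reviewed) / total,
        # Share of all features with a stopping rule defined.
        "stopping_rule_coverage": with_rule / total,
        # Share of reviews that actually changed a decision.
        "change_rate": changed / len(reviewed) if reviewed else 0.0,
    }
```

A `change_rate` that stays at zero quarter after quarter is the numeric form of the consistent "no" described above.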

Should we publish our ethics framework publicly?

Selectively, yes. Publishing your redlines and general approach builds credibility with users and stakeholders. Publishing detailed internal processes is less useful. Users care that you have constraints and mechanisms to catch problems. They don't need to understand the mechanics. Publishing also commits you publicly: you can't quietly abandon the framework later without users noticing. That's actually good. It makes the framework more real.

Conclusion: Why This Matters More Than You Think

The instinct to move fast and build things is what drives innovation. That instinct isn't wrong. But it's incomplete. Moving fast without thinking through consequences is how you create problems that become expensive crises.

Ethics-first strategy isn't anti-growth. It's pro-sustainable-growth. It's recognizing that the decisions you make today about what you'll never do, about how you'll review risky work, about when you'll stop instead of optimize, are decisions that determine whether you can scale responsibly.

Most founders wait until a crisis to think seriously about ethics. Someone gets hurt. A user feels betrayed. Regulators show up. Only then do they realize they need frameworks.

The founders ahead of the curve are building frameworks now. Not because they're virtuous. But because it's operational. It prevents expensive problems. It clarifies thinking. It lets you sleep.

In 2026, ethics governance will be increasingly expected. Companies that have already integrated it into how they work will have advantages: lower regulatory risk, stronger user trust, easier fundraising, and better decision-making under pressure.

If you're reading this and thinking your company needs to start, the best time was last year. The second best time is now. Not eventually. Not after you're bigger. Now, while systems are still flexible and you can bake ethics into infrastructure instead of retrofitting it later.

The harder questions your leadership team asks at the start of this year (about unintended consequences, about blind spots, about whose perspectives you're missing) are the foundation of sustainable growth. Systems that make asking and answering those questions routine are what keep that growth sustainable as you scale.

Start small. Define three to five redlines. Build a simple review process. Train your team. Launch it. Learn from it. Adjust it. Over time it becomes how you work. And somewhere down the road—maybe when you're tempted to cut corners, or when pressure is highest, or when a crisis hits—you'll be grateful you did.

That's what ethics-first strategy actually delivers. Not virtue. Not moral purity. Just better decision-making under pressure, fewer expensive crises, and the ability to sleep knowing you've structured your decisions to catch what you individually might miss. That's worth the investment.

Key Takeaways

- Companies with formal ethics review processes experience 40% fewer product crises related to safety and user harm, and implementing early, before systems become entrenched, prevents costly retrofitting later

- Three-part foundation works: explicit redlines (what you'll never do), consistent review processes (built into how work starts), and stopping rules (pre-commit to pausing when signals appear)

- Independence matters critically—ethics review from people with no financial stake in approval catches blind spots that internal decision-makers consistently miss

- Operational integration prevents theater—ethics guidance should shape how features get built, not veto work after the fact

- 90-day timeline to operational framework is realistic for most companies; the best time to start is before a crisis forces your hand
