How Hackers Are Using AI: The Threats Reshaping Cybersecurity

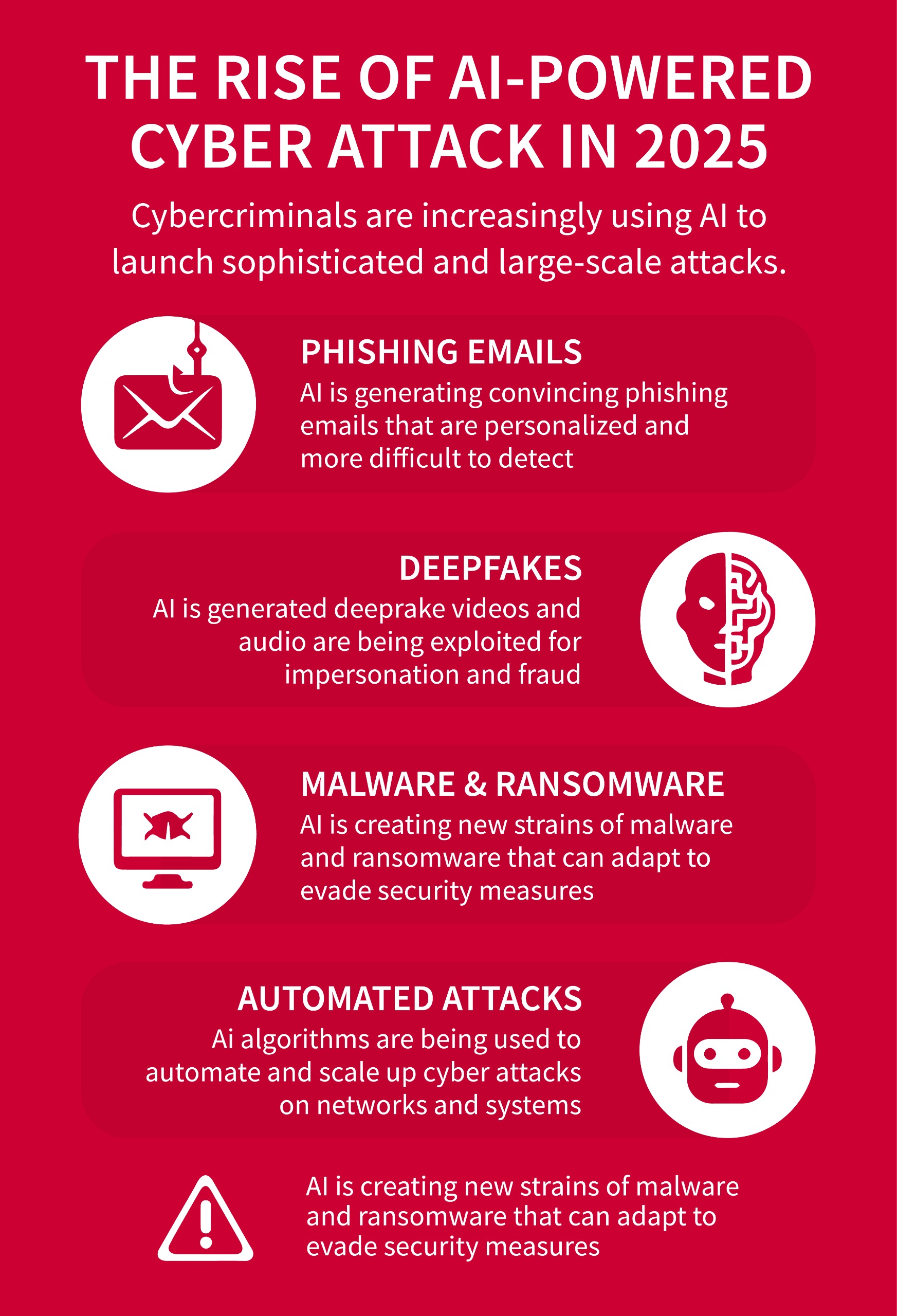

AI isn't just changing how we work. It's changing how criminals attack us.

We're past the point of wondering if threat actors would weaponize artificial intelligence. They already have. And what's happening right now goes way beyond slick phishing emails. We're talking about malware that rewrites itself in real time, attackers cloning your company's AI tools, and state-sponsored groups using AI to orchestrate massive social engineering campaigns with surgical precision.

The gap between corporate AI deployment and actual security preparedness? It's getting wider every month.

Last year, security researchers watched attackers experiment with AI mostly in isolation. Distillation attacks here, a proof-of-concept there. But the latest threat intelligence shows something darker: threat actors have moved past experimentation. They're integrating AI into operational attack chains. They're building custom tools specifically designed for malicious purposes. And they're doing it faster than most organizations can patch their existing vulnerabilities.

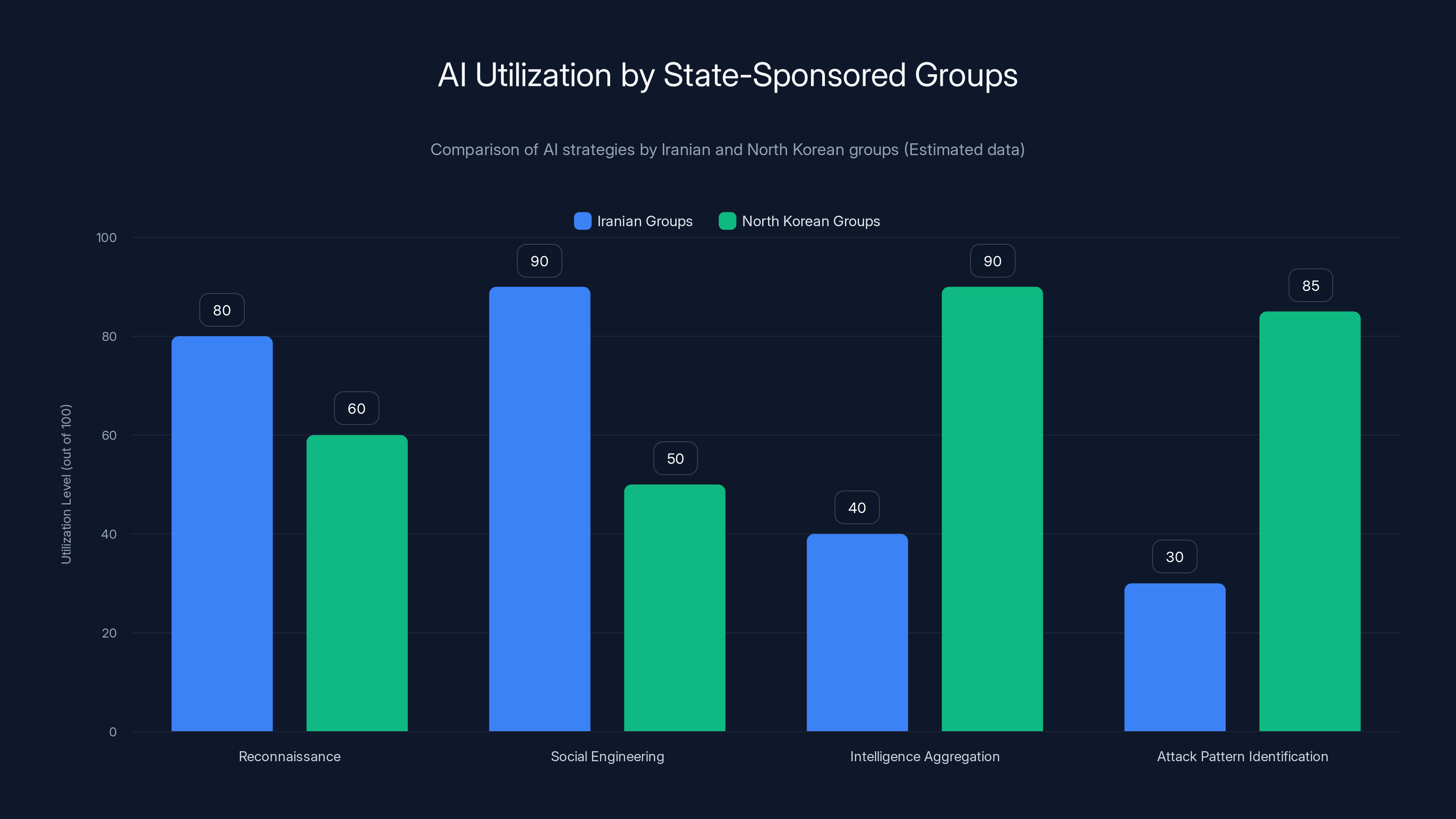

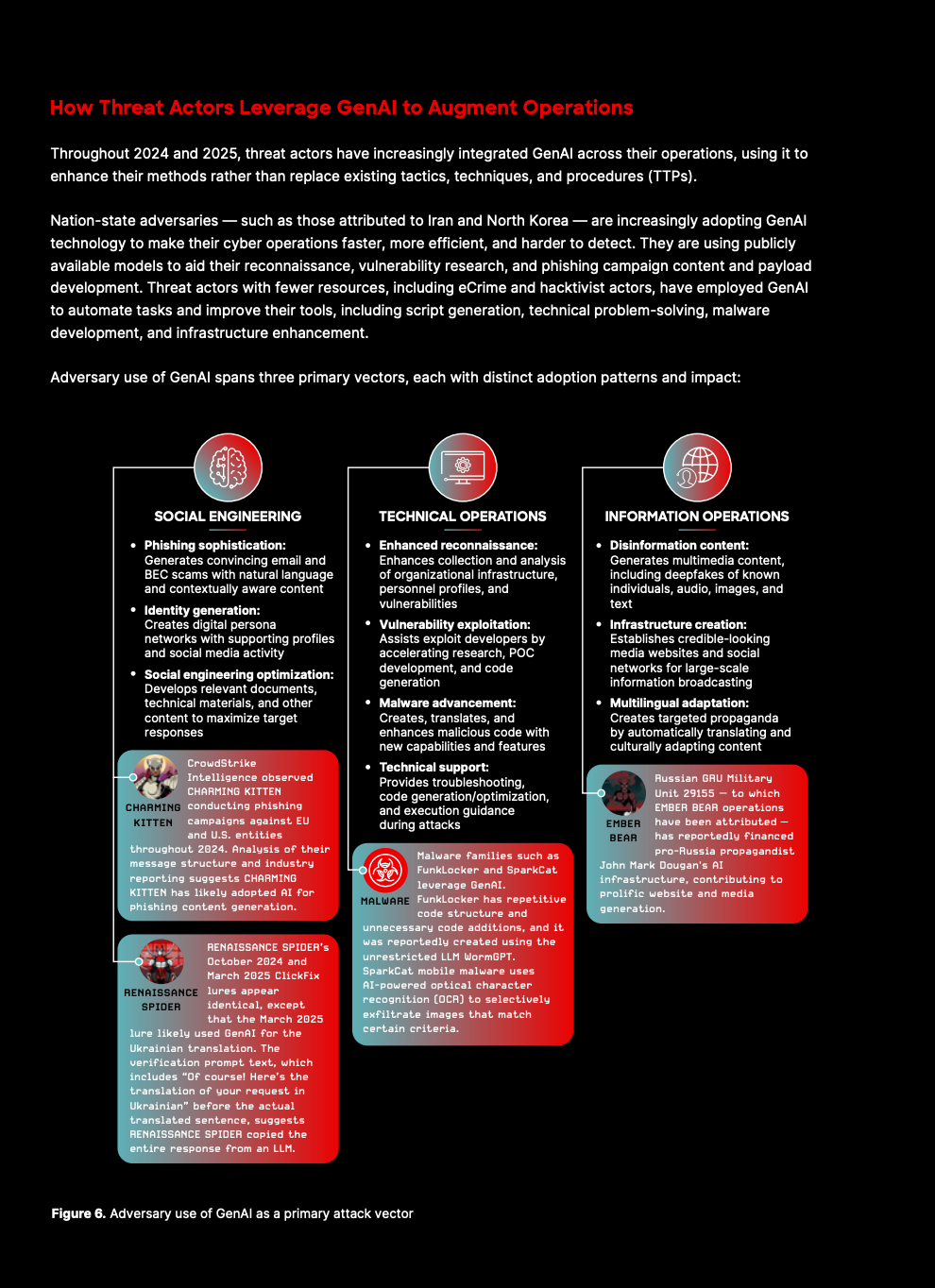

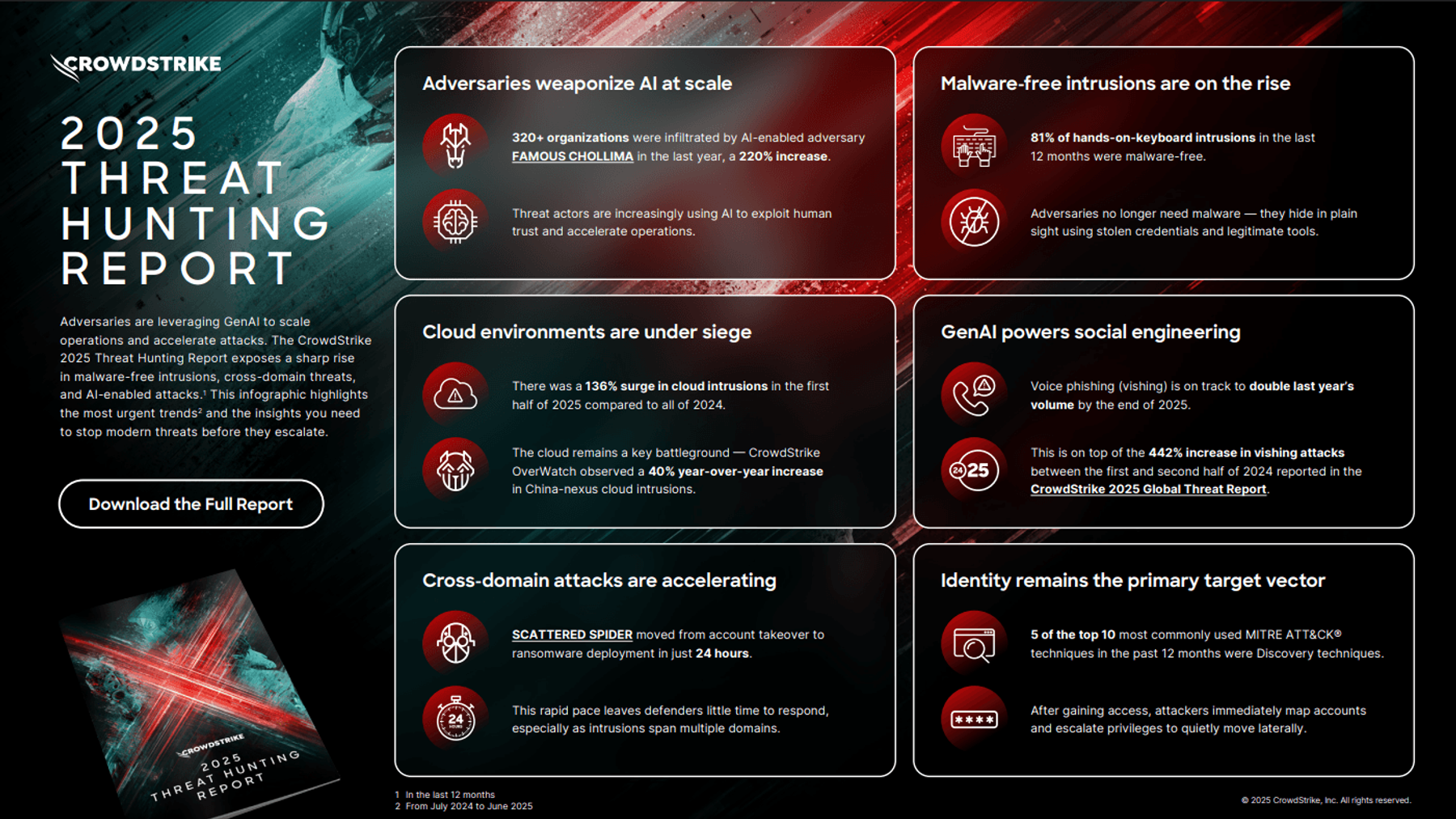

What makes this moment different is scale and sophistication. We're not talking about script kiddies using ChatGPT to write better social engineering messages. We're seeing state-sponsored groups from Iran and North Korea coordinate AI-powered intelligence gathering. We're seeing sophisticated malware that uses AI to analyze network traffic, predict detection patterns, and adapt in real time. We're seeing attackers demand custom AI tools built specifically for offensive operations.

Here's what you need to understand: your security posture from six months ago is already outdated. The adversary toolkit has fundamentally changed. And if you're still relying on static analysis, signature-based detection, and human review to catch threats, you're playing a game where the rules shifted without your permission.

This article breaks down exactly what threat actors are doing with AI right now, why it works, and what actually stops it. Because understanding the threat is the only way to stay ahead of it.

TL;DR

- Distillation attacks are now standard: Threat actors send hundreds or thousands of prompts to map how an AI model responds, then train cheap imitations of it

- Malware is becoming adaptive: Tools like HONESTCUE use AI (specifically Gemini) to rewrite malicious code in real time, evading static detection entirely

- Phishing has evolved: AI creates hyper-convincing mass-distribution phishing kits that harvest credentials at scale with minimal manual work

- State-sponsored groups lead the way: Iranian and North Korean operatives use AI for intelligence gathering, social engineering, and attack coordination

- Detection must be AI-powered too: Traditional security tools can't keep up, which is why major vendors are deploying their own AI to detect AI-augmented threats

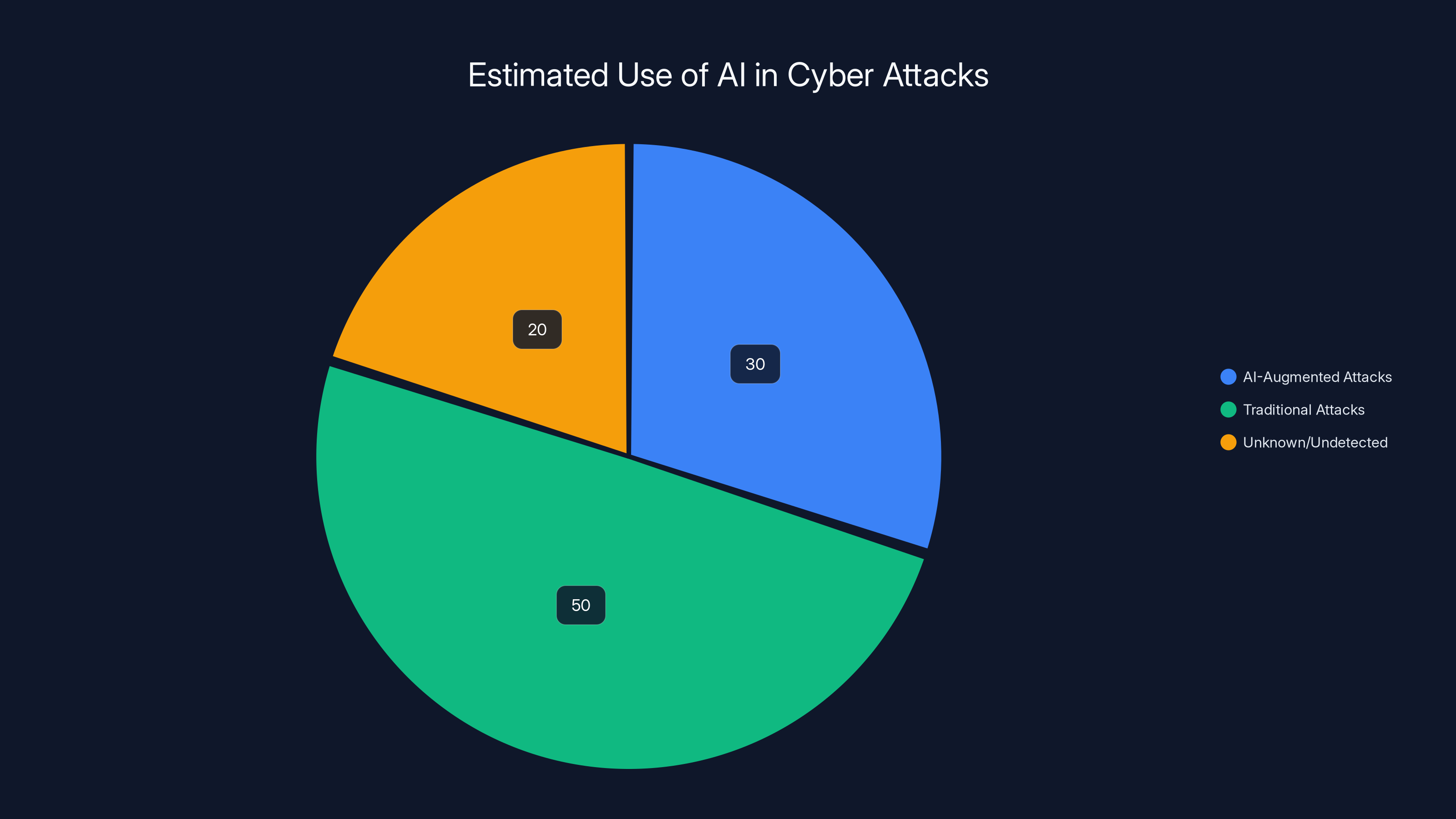

An estimated 30% of cyber attacks now involve AI, highlighting the growing sophistication of threat actors. Estimated data.

The AI Threat Landscape in 2025

If you're not tracking how threat actors are actually using AI right now, you're operating blind.

The security industry spent 2023 and early 2024 debating whether AI would become a real threat. That debate is over. Threat actors have already crossed the threshold from experimentation to operational deployment. We're seeing AI integrated into attack chains across multiple threat actor groups, from nation-state actors to financially motivated cybercriminals.

What's particularly unsettling is the speed. AI development moves fast. Threat actor adoption moves faster. By the time a major AI platform patches a vulnerability or implements better safeguards, attackers have already mapped the platform's weaknesses, created workarounds, and integrated the techniques into live attack infrastructure.

The threat intelligence community now tracks specific campaigns that rely on AI-augmented techniques. These aren't hypothetical attacks written up in academic papers. They're real incidents affecting real organizations. The shift happened quietly, without a watershed moment, but the data is clear: AI is now a standard tool in the threat actor toolkit.

What makes this different from previous security innovations is the learning curve. Previous attack vectors required significant technical skill. Custom malware required reverse engineering knowledge. Phishing kits required social engineering expertise. But AI democratizes this. A threat actor with minimal technical background can now use AI to automate tasks that previously required specialized knowledge.

The result? Threat actors are operating at a scale they couldn't before. What previously took a team of specialists can now be automated, scaled, and distributed to thousands of compromised accounts simultaneously.

Iranian groups focus heavily on reconnaissance and social engineering, while North Korean groups excel in intelligence aggregation and attack pattern identification. Estimated data based on documented strategies.

How Threat Actors Clone AI Models with Distillation Attacks

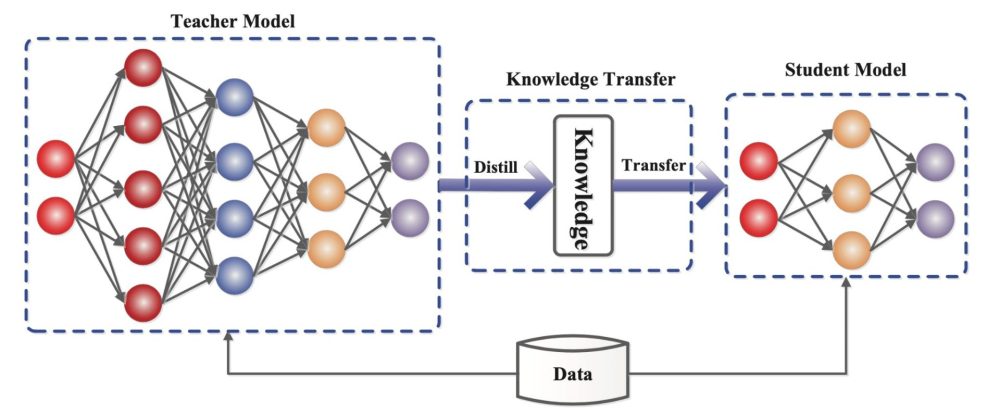

Let's start with the foundational technique that makes everything else possible: distillation attacks.

If you pay for an AI service like Claude or Gemini, you're paying for computational resources and for capabilities the provider spent heavily to develop. Threat actors don't want to pay. They want the same capabilities at a fraction of the cost. Distillation attacks make that possible.

Here's how it works in practice. An attacker crafts hundreds or thousands of prompts designed to probe how the AI model responds. They're not asking it to write phishing emails or malware. They're asking it seemingly innocent questions: "What's the difference between these two approaches?" "How would you structure this problem?" "What are the edge cases here?"

Each response is a data point. Thousands of data points, aggregated together, create a map of how the original model thinks. What kinds of answers does it give? How does it handle ambiguous queries? What's its underlying reasoning process?

Once threat actors have gathered enough responses, they train their own model on this data. The distilled model doesn't need to be perfect. It just needs to capture the essential reasoning patterns. And here's the dangerous part: a distilled model is often faster and cheaper to run than the original, which makes it more practical for operational use.

Why does this matter? Because threat actors now have a working approximation of the model that they control completely. They can test attack techniques against it without worry. They can modify it. They can integrate it directly into malware or attack infrastructure. And critically, the original model creator has no visibility into what they're doing with it.

The attack creates three distinct advantages for the attacker:

First, cost elimination. If you're launching a phishing campaign using AI to personalize messages for 100,000 targets, the API costs would be substantial. With a distilled model running locally, the cost approaches zero.

Second, model analysis. Once attackers have their own approximation of the model, they can probe it offline as much as they like, mapping its decision boundaries without triggering the provider's monitoring. This reveals weaknesses. It reveals what kinds of prompts cause unexpected behavior. It reveals how to manipulate the model's outputs.

Third, exploit identification. Understanding how the original model works creates a roadmap for finding exploits in the legitimate service. If the distilled model has a vulnerability, the original often does too. This creates a feedback loop where stealing the model actually helps attackers find ways to compromise the genuine service.

Google's threat intelligence team has documented multiple distillation attacks in the wild. Attackers have successfully cloned OpenAI models, Anthropic models, and Google's own Gemini. The attacks are happening right now, at scale, and there's no simple defense beyond rate limiting and anomaly detection.
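Rate limiting is the simplest of those defenses to picture. The sketch below is a minimal, hypothetical per-client monitor, not any provider's actual mechanism: it keeps a sliding window of query timestamps per API key and flags keys that exceed a threshold. The class name, the one-hour window, and the 1,000-query limit are illustrative assumptions; real anomaly detection would also weigh other signals, such as how systematically the prompts cover a topic.

```python
from collections import defaultdict, deque


class QueryMonitor:
    """Hypothetical sliding-window query counter, one window per API key."""

    def __init__(self, window_seconds: float = 3600.0, max_queries: int = 1000):
        self.window = window_seconds        # illustrative default: one hour
        self.max_queries = max_queries      # illustrative threshold, not a real quota
        self.history = defaultdict(deque)   # api key -> timestamps inside the window

    def record(self, client_id: str, timestamp: float) -> bool:
        """Record one query and return True if this key should be throttled or reviewed."""
        q = self.history[client_id]
        q.append(timestamp)
        # Evict timestamps that have slid out of the window.
        while q and q[0] <= timestamp - self.window:
            q.popleft()
        return len(q) > self.max_queries


# Tiny demo: a 60-second window that tolerates at most 3 queries per key.
monitor = QueryMonitor(window_seconds=60, max_queries=3)
flags = [monitor.record("key-A", t) for t in (0, 10, 20, 30)]
print(flags)  # [False, False, False, True]
```

Because the window slides, a key stops being flagged once its old queries age out. A campaign that spreads its queries across many accounts defeats a per-key counter, which is why this is only one layer.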

The concerning part? Distillation attacks are just the foundation. Everything that follows builds on top of this capability.

AI-Augmented Malware: The HONESTCUE Case Study

Malware has always adapted. But it used to adapt slowly.

A security team would detect a variant, analyze it, extract the signature, and deploy detection rules. Malware would go dormant for weeks while the authors updated the code to bypass the signatures. Then the cycle would repeat.

Now? The adaptation happens in real time.

HONESTCUE is a malware family that security researchers began tracking recently. The remarkable thing about HONESTCUE isn't its payload. It's its delivery mechanism. The malware uses Gemini to rewrite its own code during execution.

Here's the attack flow. First, the initial compromise happens through conventional means: phishing, credential theft, supply chain compromise. Once the attacker has a foothold, they deploy HONESTCUE onto the target system.

When HONESTCUE executes, it doesn't just run a static payload. Instead, it queries Gemini with prompts like: "Rewrite this code to perform the same function but use different variable names, function calls, and control flow." The AI generates new code that's functionally identical but structurally different.

The system then executes this AI-generated code. The result is malware that looks completely different every time it runs. Static analysis tools, which work by analyzing malware samples and extracting signatures, become useless. Signature-based detection fails because there's no consistent signature to detect.
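To see why, consider the crudest possible signature: a hash of the exact bytes. Real antivirus signatures are richer (byte patterns, YARA rules, heuristics), so treat this as a simplified illustration that uses two harmless functions doing the same thing. The snippet and function names are made up for the example.

```python
import hashlib

# Two harmless snippets with identical behavior but different names and structure.
variant_a = (
    "def total(xs):\n"
    "    s = 0\n"
    "    for x in xs:\n"
    "        s += x\n"
    "    return s\n"
)
variant_b = (
    "def add_all(values):\n"
    "    acc = 0\n"
    "    for v in values:\n"
    "        acc = acc + v\n"
    "    return acc\n"
)


def signature(code: str) -> str:
    """Naive signature: the SHA-256 digest of the exact source text."""
    return hashlib.sha256(code.encode("utf-8")).hexdigest()


known_bad = {signature(variant_a)}  # the "database" has only ever seen variant A

# Execute both snippets in isolated namespaces to confirm they behave the same.
ns_a, ns_b = {}, {}
exec(variant_a, ns_a)
exec(variant_b, ns_b)
same_behavior = ns_a["total"]([1, 2, 3]) == ns_b["add_all"]([1, 2, 3])

print(same_behavior)                      # True
print(signature(variant_a) in known_bad)  # True
print(signature(variant_b) in known_bad)  # False: the rewrite slips past the signature
```

A rewrite that only renames variables defeats this check completely, and that is the gap AI-driven rewriting exploits at scale.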

The implications are severe. Network-based detection, which looks for known malware communication patterns, gets confused because the malware is actively modifying itself. Behavioral analysis, which looks for suspicious patterns, becomes harder because the AI rewrite might avoid known suspicious behaviors while maintaining the same malicious function.

What makes HONESTCUE particularly dangerous is that it's not a one-off experiment. It's a proof of concept that works. And threat actors are watching. If one group figured out how to weaponize AI for malware adaptation, others will too. The technique will be copied, improved, and deployed across multiple attack campaigns.

The technical sophistication required is surprisingly low. You don't need to understand deep learning or model fine-tuning. You just need API access to a capable AI model and a willingness to break terms of service. Most threat actors meet both criteria.

What really concerns security researchers is the acceleration curve. The first AI-augmented malware took months to develop. The second might take weeks. By the time we're a year into widespread adoption, every major malware family might be AI-augmented as a matter of standard practice.

Defense against this is fundamentally different from defense against conventional malware. You can't rely on signatures. You can't rely on static analysis. You need behavioral detection that works in real time, using machine learning to identify malicious patterns even when the malware code itself is constantly changing.

This is why security vendors are now deploying their own AI tools specifically designed to detect AI-augmented malware. It's not a luxury anymore. It's a necessity.

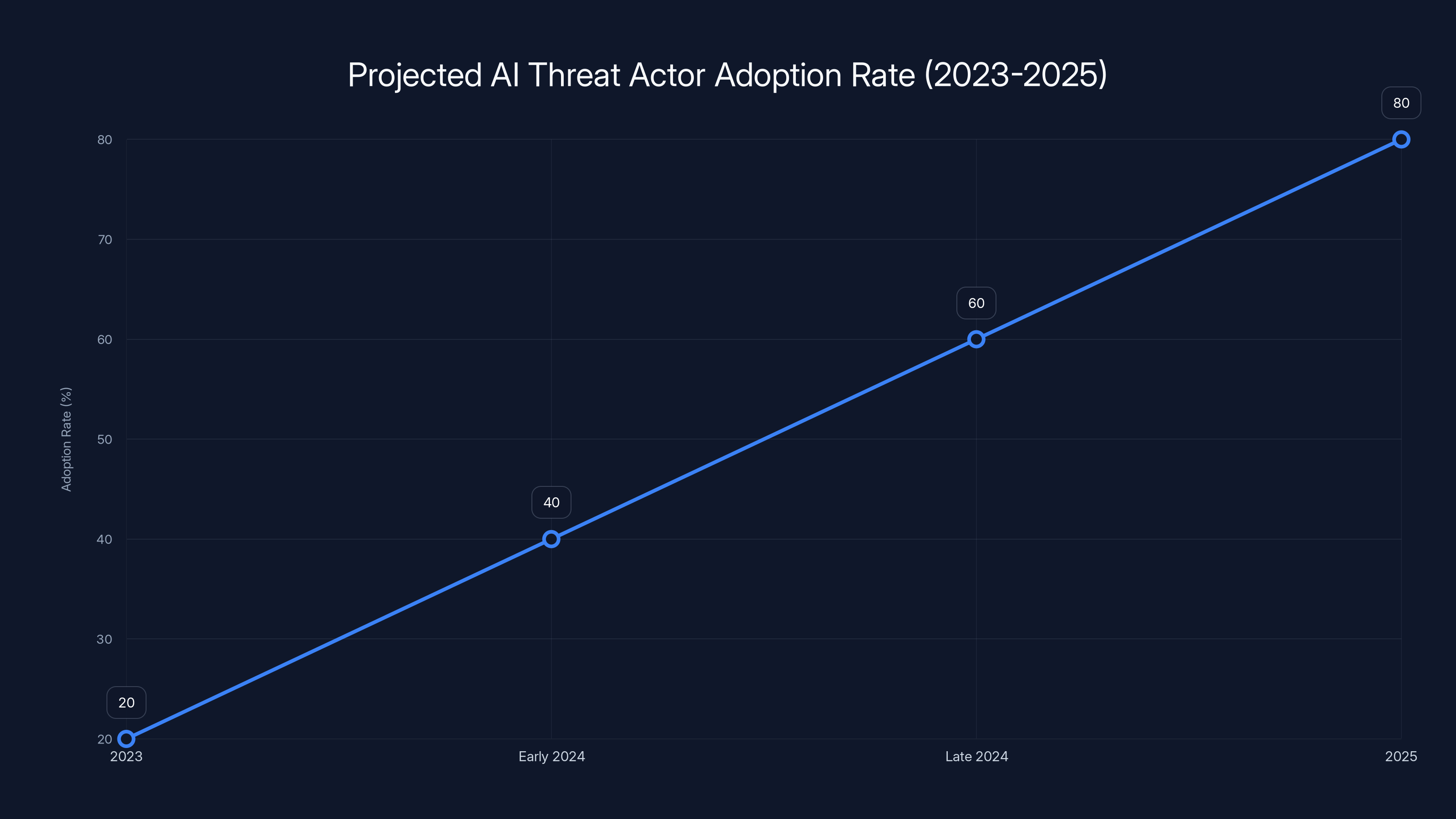

The adoption of AI by threat actors is projected to increase rapidly, reaching 80% by 2025. Estimated data based on current trends.

State-Sponsored Groups and AI-Powered Intelligence Gathering

State-sponsored threat actors have always been the most sophisticated adversaries. They have funding, patience, and zero concern for legal consequences. So it makes sense that they'd be among the first to operationalize AI.

What's been documented so far shows two distinct approaches from different regions.

Iranian state-sponsored groups are using AI for reconnaissance and social engineering preparation. The technique is straightforward but effective. They use AI to analyze publicly available information about a target organization: LinkedIn profiles, published papers, org charts, news articles, social media posts. The AI aggregates this data and maps the relationships: who talks to whom, who has decision-making authority, and how key employees are connected professionally.

This creates a detailed social graph of the organization. The attackers then use this graph to identify optimal targets for social engineering. Instead of sending generic phishing messages to random employees, they can send highly personalized messages to specific people, referencing legitimate business relationships and using actual names and contexts.

The effectiveness is striking. Traditional phishing relies on volume: send 10,000 messages, hope some people fall for it. AI-augmented social engineering relies on precision: send one message to one person, making it so personalized that it's nearly indistinguishable from legitimate communication.

North Korean state-sponsored groups are using AI more aggressively. They're using it to aggregate intelligence from multiple sources and identify attack patterns. If a North Korean group wants to target a specific industry sector, they use AI to rapidly process thousands of news articles, technical papers, conference presentations, and job postings to understand current technology adoption, likely vulnerabilities, and organizational structures.

This intelligence aggregation creates a knowledge base that would take a human analyst weeks or months to assemble. The AI does it in hours.

Both approaches share a common goal: reducing human labor while increasing precision. State-sponsored actors have time and resources, but they don't have unlimited personnel. Using AI to automate intelligence gathering lets them scale their operations without proportionally scaling their headcount.

The concerning part is that these techniques are working. We're seeing successful compromises of organizations that thought they were security-conscious. The common thread? They got compromised through highly personalized social engineering that was clearly augmented with AI.

What's particularly insidious is that the attacks are getting harder to detect from a defensive perspective. If you're monitoring for phishing, you're looking for common tell-tale signs: generic greetings, impersonal language, suspicious links. AI-augmented social engineering doesn't have those signatures. The greeting is personalized. The language matches the target's communication style. The links route through legitimate infrastructure that's been previously compromised.

Defense requires a shift in thinking. Instead of trying to detect phishing emails by their surface features, you need to detect social engineering behaviors: unexpected requests for sensitive information, unusual urgency, requests that deviate from normal procedures. You need to train people to be suspicious of unexpectedly precise, well-informed requests, not just of generic phishing.
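A crude way to operationalize this is to score messages on what they ask the reader to do rather than on typos or generic greetings. The sketch below is a minimal keyword heuristic under obvious assumptions: the cue lists and the `score_request` function are hypothetical, and a fluent AI-written message can avoid any fixed keyword list, so in practice cues like these would feed a broader model rather than stand alone.

```python
import re
from dataclasses import dataclass

# Hypothetical cue lists; a real deployment would tune these against its own mail.
URGENCY = re.compile(r"\b(urgent|immediately|within the hour|asap|today only)\b", re.I)
SENSITIVE_ASK = re.compile(
    r"\b(password|credentials|verify your access|mfa code|wire transfer|gift cards?)\b", re.I
)
PROCESS_BYPASS = re.compile(
    r"\b(skip the usual|don't loop in|keep this between us|bypass)\b", re.I
)


@dataclass
class Verdict:
    score: int      # number of distinct behavioral cues present
    reasons: list   # human-readable explanations for the analyst


def score_request(body: str) -> Verdict:
    """Score a message on what it asks the reader to do, not on typos or greetings."""
    reasons = []
    if URGENCY.search(body):
        reasons.append("unusual urgency")
    if SENSITIVE_ASK.search(body):
        reasons.append("request for credentials or funds")
    if PROCESS_BYPASS.search(body):
        reasons.append("asks to deviate from normal procedure")
    return Verdict(score=len(reasons), reasons=reasons)


msg = ("Hi Dana, the CFO needs the vendor wire transfer sent immediately. "
       "Keep this between us until the audit closes.")
print(score_request(msg))
```

Note that the message is polite and grammatically perfect; it is flagged purely for the combination of urgency, a funds request, and a request for secrecy.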

You also need threat intelligence sharing. If a state-sponsored group is running AI-assisted reconnaissance against companies in your industry, you need to know about it. This requires active participation in intelligence sharing communities and close attention to vendor threat intelligence reports.

Phishing Kits and Mass-Scale Credential Harvesting

Phishing used to be a game of volume and luck.

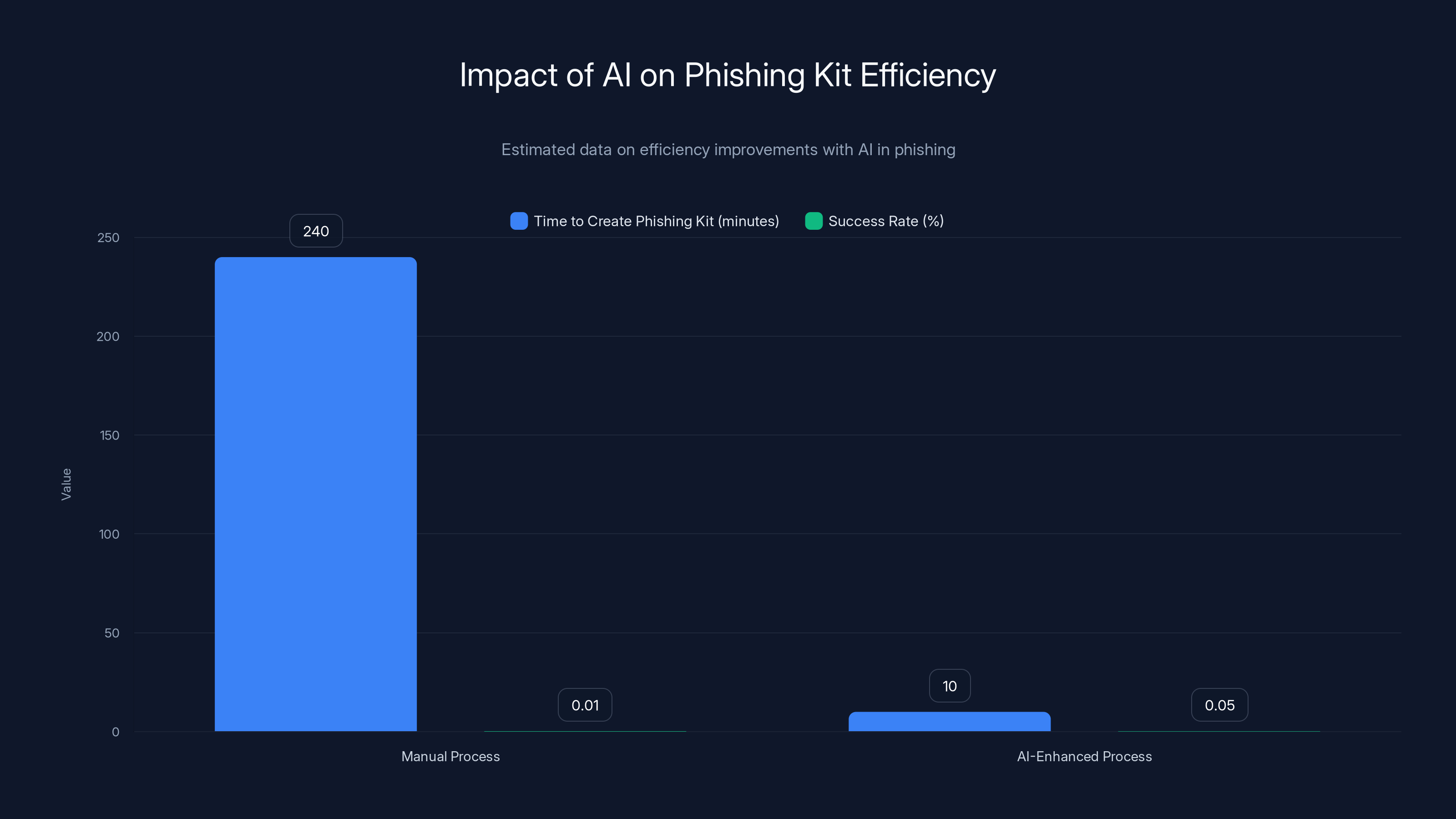

You'd send millions of messages, hoping some users would click the link or open the attachment. The conversion rate was terrible, but volume made up for it: even a 0.01% success rate yields 100 compromised accounts per million messages, so a ten-million-message campaign nets a thousand.

AI is changing this by automating the entire phishing kit creation and deployment process.

Here's what threat actors are doing now. They use AI to generate convincing replicas of legitimate login pages, payment forms, or credential entry screens. The replicas aren't just superficially similar. They're pixel-perfect copies served with valid TLS certificates, on convincing lookalike domain names (hosted on bulletproof infrastructure or registered through compromised registrars), and dressed in the real brand's logos and styling.

The key innovation is speed. Creating a phishing kit used to require manual design work. Someone had to screen-capture the legitimate site, manually recreate the HTML, test the functionality. This took hours of work per kit. Now, AI tools can generate a complete, functional phishing kit from a single screenshot in minutes.

Once the kit exists, distribution is automated. Threat actors use AI to personalize phishing messages for mass distribution. Instead of generic emails, each message is tailored to its recipient. The AI pulls information from social media profiles, email signatures, and professional networks, then crafts messages that reference legitimate business contexts.

"Sarah, I noticed you're connected to the migration project at Acme Corp. We've updated our contractor portal credentials. Please click below to verify your access." The message is specific, professional, and completely fake.

When users click the link and enter their credentials, the system captures them automatically. The infrastructure is completely automated. No human review needed. The captured credentials are then sold, used for further attacks, or exploited for lateral movement within compromised organizations.

What's particularly effective about this approach is that it scales. A single attack campaign using AI-generated phishing kits and personalized messages can target hundreds of thousands of people simultaneously, and each person gets a slightly different message customized to their perceived role and context.

The result is that traditional anti-phishing training becomes less effective. People are trained to look for common red flags: generic greetings, poor grammar, suspicious URLs. But AI-generated phishing doesn't have those red flags. The greeting is personalized. The grammar is perfect. The URL looks legitimate.
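One technical control that still works when the page and the prose are flawless is checking how close a domain is to one you actually own. The sketch below assumes a hypothetical list of protected domains and flags any domain within a small Levenshtein edit distance of one of them. It misses Unicode homoglyphs and unrelated-looking domains, so it is one signal among many.

```python
from typing import Optional

# Hypothetical domains this organization actually uses.
PROTECTED = ["acmecorp.com", "login.acmecorp.com"]


def levenshtein(a: str, b: str) -> int:
    """Edit distance counting single-character insertions, deletions, and substitutions."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(
                prev[j] + 1,               # delete ca
                curr[j - 1] + 1,           # insert cb
                prev[j - 1] + (ca != cb),  # substitute (free if the characters match)
            ))
        prev = curr
    return prev[-1]


def lookalike_of(domain: str, max_distance: int = 2) -> Optional[str]:
    """Return the protected domain this one imitates, or None if exact or unrelated."""
    domain = domain.lower().rstrip(".")
    if domain in PROTECTED:
        return None  # an exact match is the real domain, not a lookalike
    for real in PROTECTED:
        if levenshtein(domain, real) <= max_distance:
            return real
    return None


for candidate in ["acmec0rp.com", "login-acmecorp.com", "acmecorp.com", "example.org"]:
    print(candidate, "->", lookalike_of(candidate))
```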

Defense requires multiple layers. You need technical controls: email filtering that analyzes sender reputation, message patterns, and domain reputation. You need behavioral controls: multi-factor authentication that works even if credentials are compromised. You need detection: monitoring for unusual login patterns or credential usage from unexpected locations.
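The last of those layers, spotting credential use from unexpected locations, is often implemented as an "impossible travel" check. This is a minimal sketch under stated assumptions: login locations come from IP geolocation (which VPNs and mobile carriers make noisy), logins are compared in chronological order, and the 900 km/h ceiling, roughly airliner cruising speed, is an illustrative threshold rather than a standard.

```python
import math
from dataclasses import dataclass


@dataclass
class Login:
    user: str
    timestamp: float  # seconds since the epoch
    lat: float        # geolocated latitude (approximate)
    lon: float        # geolocated longitude (approximate)


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points, in kilometres."""
    r = 6371.0  # mean Earth radius in km
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


def impossible_travel(prev: Login, curr: Login, max_kmh: float = 900.0) -> bool:
    """Flag two consecutive logins whose implied travel speed exceeds max_kmh."""
    hours = max((curr.timestamp - prev.timestamp) / 3600.0, 1e-6)  # avoid dividing by zero
    km = haversine_km(prev.lat, prev.lon, curr.lat, curr.lon)
    return km / hours > max_kmh


london = Login("sarah", 0.0, 51.5074, -0.1278)
sydney = Login("sarah", 3600.0, -33.8688, 151.2093)  # one hour later
paris = Login("sarah", 7200.0, 48.8566, 2.3522)      # two hours after London
print(impossible_travel(london, sydney))  # True
print(impossible_travel(london, paris))   # False
```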

But critically, you need to understand that phishing prevention isn't 100% possible anymore. Some emails will get through. Some users will fall for it. The goal has to shift from "prevent all phishing" to "detect and respond quickly when phishing succeeds."

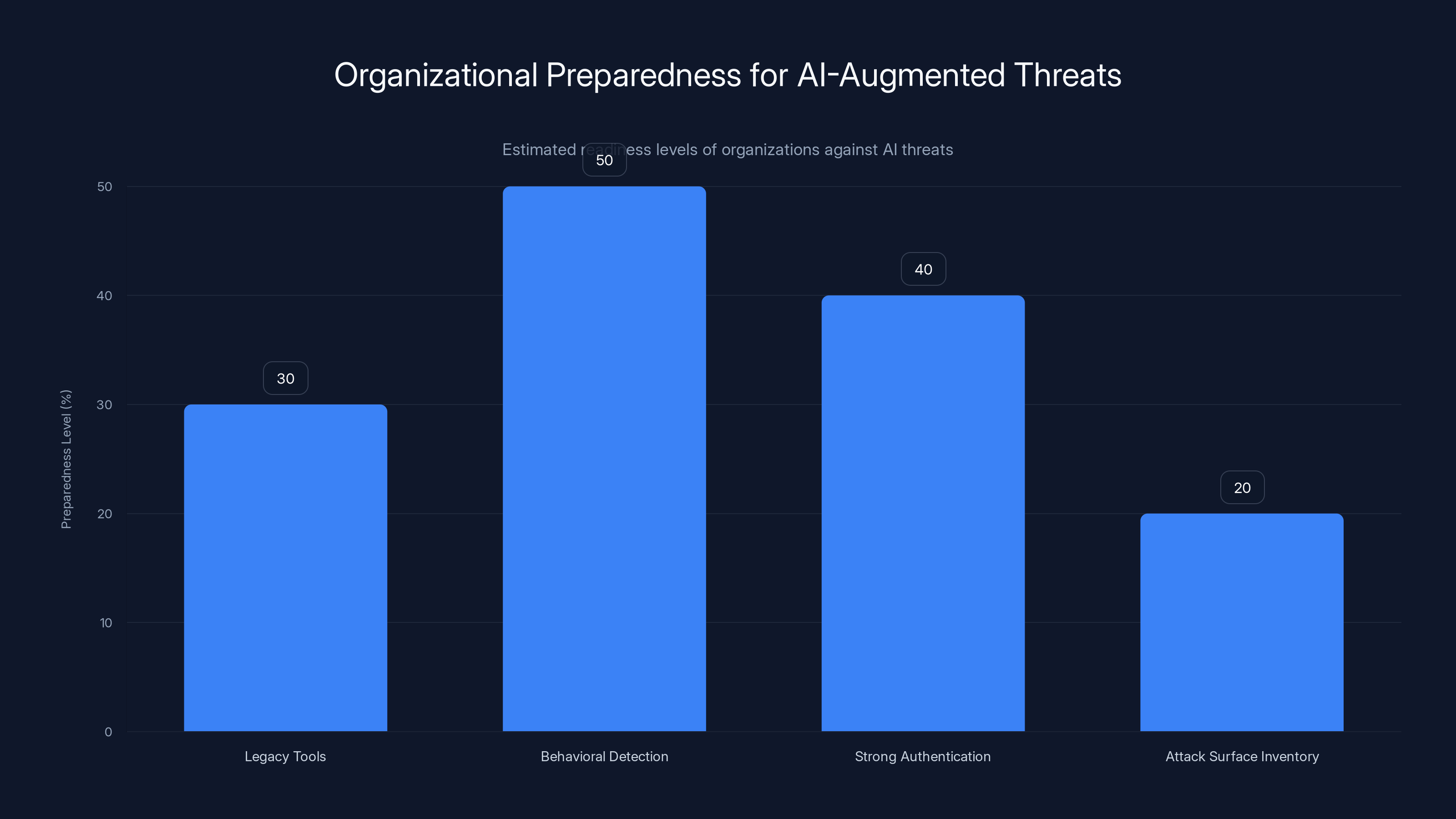

Estimated data shows that most organizations have low preparedness levels for AI-augmented threats, with only 20% having a comprehensive attack surface inventory.

The Custom AI Tool Demand and Dark Web Markets

Threat actors want purpose-built tools. And they're starting to organize markets around them.

In dark web forums and underground communities, there's increasing demand for AI tools specifically designed for offensive operations. We're talking about tools that:

- Write malware code that's optimized for evasion

- Generate social engineering content that maximizes user engagement

- Analyze code to identify vulnerabilities

- Create convincing deepfakes for social engineering

- Automate the reconnaissance process against target organizations

Right now, most threat actors are using distillation attacks to create these tools from legitimate AI services. They're effectively stealing the capability and repurposing it.

But there's emerging demand for custom-built tools that don't rely on APIs or stolen models. If a threat actor group could develop their own AI system designed specifically for malware generation, they'd have a significant advantage. The tool would be optimized for their specific use cases. It would be tailored to evade specific detection technologies. It would be independent of any commercial service.

This is concerning because it suggests the next phase of this threat. Right now, AI is being integrated into attacks opportunistically: threat actors use whatever AI tools they can access. Soon, threat actor groups might commission or develop their own AI systems specifically for offensive operations.

The barriers to this are lower than you might think. Building a capable, specialized AI model, for example by fine-tuning an openly available one, no longer requires a major lab's budget. Inference (actually using a trained model) requires computational power, but that's obtainable through cloud resources. The main barriers are expertise and data.

Threat actor groups have access to both. Many nation-state groups employ researchers with AI expertise. All of them have access to large datasets of code (through malware repositories and open-source code) and phishing examples (from their own operations and shared within underground communities).

So the timeline looks roughly like this:

Present (2025): Threat actors use distillation attacks and stolen APIs to access AI capabilities. This is cheap but requires ongoing access to external services.

Near-term (2025-2026): Some sophisticated threat actor groups commission custom AI tools from capable researchers or hire AI talent. These custom tools are proprietary and not shared broadly.

Medium-term (2026-2027): Custom AI tools become more common. Some are leaked or sold to other groups. Open-source AI projects emerge that are specifically designed for offensive purposes, making the tools widely available.

Long-term (2027+): AI augmentation becomes standard across all threat actors. Just like malware and phishing kits are now commodity attacks, AI-augmented versions become the default.

This timeline is speculative, but it's based on how other attack techniques have evolved historically. Once a new capability emerges, it follows a predictable diffusion curve. The more successful it is, the faster it diffuses.

Real-Time Code Rewriting and Behavioral Evasion

One of the most sophisticated capabilities we're seeing is real-time code adaptation.

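Previously, evasive malware relied on polymorphism (encrypting or packing its body behind a decryption stub that changes on each infection, so copies look different while the underlying payload stays the same) or metamorphism (rewriting its own code from one generation to the next, leaving no constant body to match). Both techniques were effective, but they were complex to build and required significant coding expertise to implement correctly.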

AI changes this by providing a more flexible approach. Instead of pre-programming all possible code variations, malware can now generate new variations on demand using an AI model.

The advantage is flexibility. The code doesn't just change randomly. It changes strategically, informed by what the AI understands about detection mechanisms. The malware can analyze a detection environment and generate code that specifically avoids the techniques being used to detect it.

Here's a concrete example. Let's say a piece of malware uses a specific Windows API call to inject code into another process. This API call is monitored by endpoint detection and response (EDR) tools, so the malware gets caught.

With AI-augmented code generation, the malware can query: "What are alternative approaches to achieve code injection without using the standard API?" The AI generates several approaches. The malware selects the approach that, based on its analysis of the system, is least likely to be detected.

This creates a feedback loop where the malware gets smarter over time as it encounters different security environments. Malware in organizations with strong EDR tools develops different techniques than malware in organizations with basic firewalls.

The detection problem becomes genuinely difficult. Traditional behavioral analysis looks for known-bad behaviors. But if the behavior is constantly changing, and the changes are informed by AI analysis of the detection environment, then behavioral analysis becomes unreliable.

The answer is that detection also needs to become AI-powered. Instead of looking for specific behaviors, AI-based detection systems look for suspicious patterns in behavior that persist despite the code changes. Did the process access sensitive files? Did it attempt to spread to other systems? Did it create new user accounts? These behaviors might be achieved through different code, but the underlying intent and impact are consistent.
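Those questions translate naturally into rules over endpoint telemetry that ignore how the code looks. The sketch below is hypothetical throughout: the event schema, the sensitive paths, the SMB/RDP ports used as a lateral-movement hint, and the two-intent alert threshold are assumptions, and it uses fixed rules where a production system would use learned models. It does show the key property: two differently written programs with the same intent produce the same findings.

```python
from dataclasses import dataclass

# Hypothetical detection inputs for this sketch.
SENSITIVE_PREFIXES = ("/etc/shadow", "/srv/finance/")
LATERAL_PORTS = {"445", "3389"}  # SMB and RDP, common lateral-movement channels
INTERNAL_PREFIX = "10."


@dataclass(frozen=True)
class Event:
    process: str
    action: str   # assumed event types: "file_read", "net_connect", "user_create"
    target: str   # a path, "host:port", or an account name


def classify(e: Event):
    """Map a low-level event to an intent label, or None if it is unremarkable."""
    if e.action == "file_read" and e.target.startswith(SENSITIVE_PREFIXES):
        return "accessed sensitive files"
    if e.action == "net_connect":
        host, _, port = e.target.rpartition(":")
        if host.startswith(INTERNAL_PREFIX) and port in LATERAL_PORTS:
            return "attempted to spread"
    if e.action == "user_create":
        return "created a user account"
    return None


def alerts(events, min_intents: int = 2):
    """Return processes that showed at least `min_intents` distinct suspicious intents."""
    intents = {}
    for e in events:
        label = classify(e)
        if label:
            intents.setdefault(e.process, set()).add(label)
    return sorted(p for p, labels in intents.items() if len(labels) >= min_intents)


events = [
    Event("variant_a.exe", "file_read", "/etc/shadow"),
    Event("variant_a.exe", "user_create", "svc_backup2"),
    Event("variant_b.exe", "file_read", "/srv/finance/q3.xlsx"),
    Event("variant_b.exe", "net_connect", "10.0.0.7:445"),
    Event("backup.exe", "file_read", "/srv/finance/q3.xlsx"),
]
print(alerts(events))  # ['variant_a.exe', 'variant_b.exe']
```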

This is why companies like Google are actively developing tools to detect AI-augmented threats. The traditional security stack can't keep up with AI-driven attack innovation. The only viable defense is AI-driven detection.

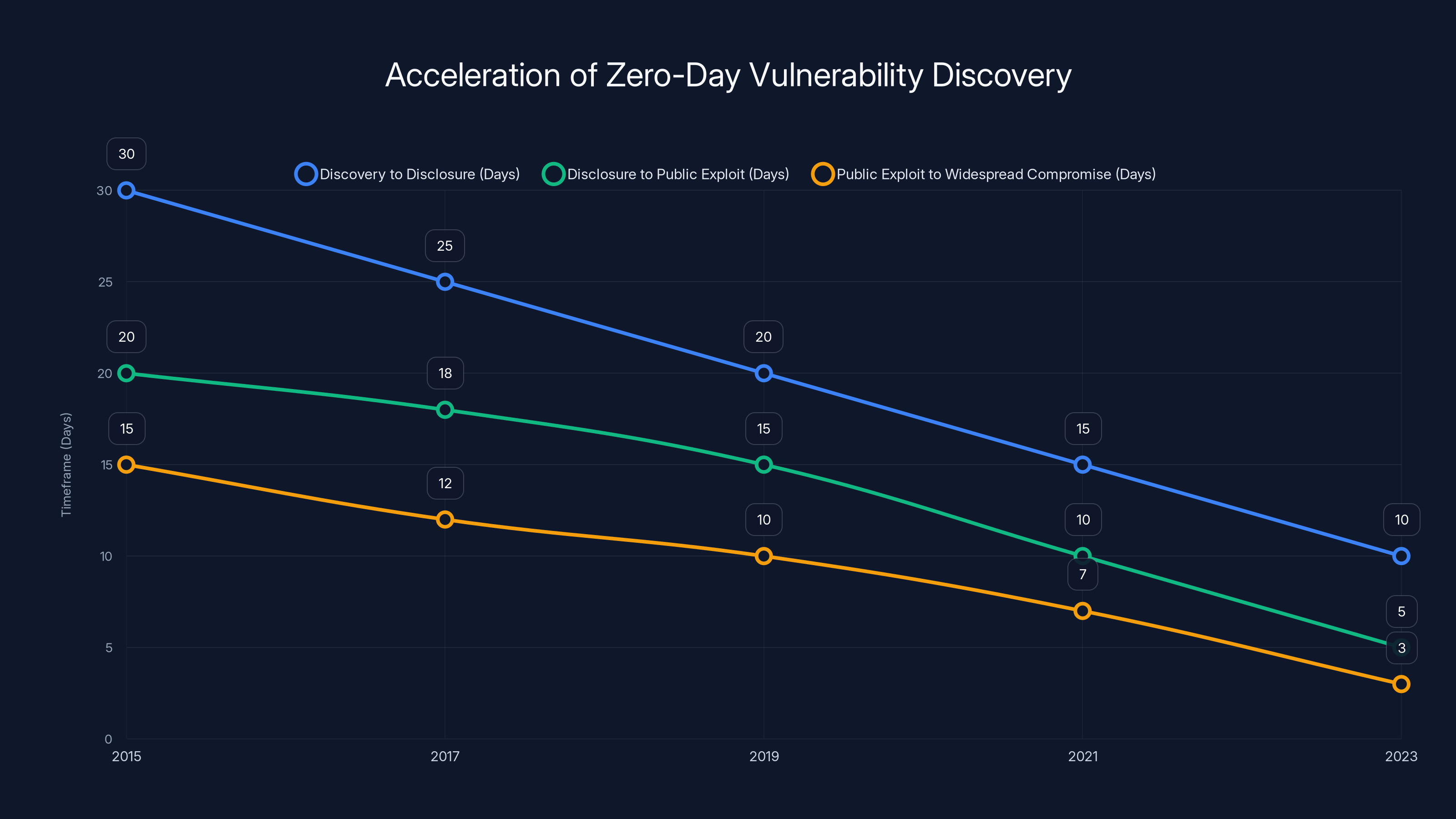

The timeframes for zero-day vulnerability discovery, disclosure, and exploitation have significantly decreased from 2015 to 2023, highlighting the need for faster defensive measures. Estimated data.

Vulnerability Discovery and Automated Exploitation

Finding vulnerabilities used to require specialized security research skills. You needed to understand systems deeply, have the ability to think creatively about edge cases, and be comfortable with debugging complex systems.

AI is changing this by automating the vulnerability discovery process.

Threat actors are using AI to analyze code and identify weaknesses. The process works like this: feed large codebases into an AI system trained on known vulnerabilities and their patterns. The AI learns the signatures of vulnerable code: unsafe memory operations, improper input validation, race conditions, buffer overflows.

Once trained, the AI can scan new code and identify likely vulnerabilities. Not all identified vulnerabilities are real, but the AI significantly narrows the search space. Instead of a researcher manually analyzing millions of lines of code, they can focus on the specific functions and code paths that the AI flagged as potentially vulnerable.
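The triage idea itself does not need AI to illustrate. The sketch below is a deliberately crude, rule-based stand-in for the learned models described above, not one of them: it flags a handful of C library calls that are classic buffer-overflow sources. The rule list and the `triage` function are hypothetical; it will produce false positives and can only find what its pattern list names, which is exactly the limitation trained models aim to reduce.

```python
import re

# Hypothetical, deliberately tiny rule set: C library calls that are classic
# buffer-overflow sources when used without explicit bounds.
RISKY_CALLS = {
    "gets": "no bounds check at all",
    "strcpy": "unbounded copy",
    "strcat": "unbounded append",
    "sprintf": "unbounded formatted write",
}
# \b keeps fgets/snprintf from matching gets/sprintf; \s*\( requires a call site.
CALL_RE = re.compile(r"\b(" + "|".join(RISKY_CALLS) + r")\s*\(")


def triage(source: str) -> list:
    """Return (line number, function, reason) tuples for lines a reviewer should read first."""
    hits = []
    for lineno, line in enumerate(source.splitlines(), start=1):
        for m in CALL_RE.finditer(line):
            name = m.group(1)
            hits.append((lineno, name, RISKY_CALLS[name]))
    return hits


sample = "\n".join([
    "void greet(const char *name) {",
    "    char buf[16];",
    "    strcpy(buf, name);                      /* flagged */",
    '    snprintf(buf, sizeof buf, "%s", name);  /* bounded: not flagged */',
    "}",
])
print(triage(sample))  # [(3, 'strcpy', 'unbounded copy')]
```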

The result is faster vulnerability discovery and faster exploit development. Code that might have taken weeks to analyze for vulnerabilities can now be analyzed in days or hours.

What's particularly concerning is that this capability is being used not just against open-source software but against closed-source systems. Threat actors are analyzing firmware, proprietary applications, and internal code they've obtained through compromise. The AI vulnerability scanner helps them move from "we have code, but it's too complex to analyze" to "we have code and we found the vulnerabilities."

For software vendors and organizations, this is accelerating the timeline to exploit. If a vendor releases a patch for a vulnerability, threat actors can now analyze the patch, understand what it fixed, reverse that logic, and create an exploit for unpatched systems much faster than they could previously.

The volume of vulnerabilities being discovered is also increasing. Researchers assumed most of the low-hanging fruit in major software projects had already been picked. But AI-assisted analysis is finding vulnerabilities in thoroughly studied code, suggesting there are more vulnerabilities than previously believed.

This creates a patch management nightmare. More vulnerabilities are being discovered faster than organizations can patch them. The window between vulnerability disclosure and exploit availability is shrinking, and so is the time defenders have to deploy detections once an exploit exists.

Defense requires accepting that you can't patch everything immediately. The strategy has to be layered: patch the most critical systems first, implement compensating controls for unpatched systems (network segmentation, access restrictions, monitoring), and maintain detection for exploitation of known vulnerabilities.
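Deciding what to patch first can be sketched as a risk score. Everything in this sketch is an assumption for illustration: the `Finding` fields, the placeholder vulnerability IDs, and the 1.5x and 0.5x multipliers. Real programs typically draw on signals such as CVSS severity, FIRST's EPSS exploit-prediction scores, and CISA's Known Exploited Vulnerabilities catalog, weighted for their own environment.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    host: str
    vuln_id: str          # placeholder IDs, not real advisories
    cvss: float           # base severity, 0.0 to 10.0
    exploit_public: bool  # is a working exploit known to be available?
    internet_facing: bool
    segmented: bool       # compensating control: isolated from sensitive systems


def priority(f: Finding) -> float:
    """Hypothetical risk score: severity scaled up by exposure, down by compensating controls."""
    score = f.cvss
    if f.exploit_public:
        score *= 1.5
    if f.internet_facing:
        score *= 1.5
    if f.segmented:
        score *= 0.5
    return score


findings = [
    Finding("db01", "VULN-2", 9.8, exploit_public=False, internet_facing=False, segmented=True),
    Finding("web01", "VULN-1", 7.5, exploit_public=True, internet_facing=True, segmented=False),
    Finding("hr-app", "VULN-3", 6.0, exploit_public=True, internet_facing=False, segmented=False),
]
for f in sorted(findings, key=priority, reverse=True):
    print(f"{priority(f):6.2f}  {f.host:7} {f.vuln_id}")
```

Under these made-up weights, the 9.8-severity database finding drops to the bottom of the queue because segmentation already limits its exposure: compensating controls change priority, not just severity.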

Detection and Response: How Security Tools Are Fighting Back

The threat landscape is changing. Security tools have to change with it.

Traditional security was built around detection signatures. You identify malware, extract its signature, and scan for that signature. The approach works great when malware is relatively static. But when malware is constantly changing through AI-generated code variations, signature-based detection fails.

Security vendors are responding by deploying AI-based detection tools that don't rely on signatures. Instead of looking for specific malware, these tools look for malicious behavior patterns.

Here's how it works in practice. The detection system builds a baseline of normal behavior for each user, process, and system. It learns what normal looks like for your organization. Sarah's role involves accessing customer databases but not production systems. The server in your data center normally has high network traffic but not unusual file system changes.

Once the baseline is established, the detection system looks for deviations that suggest an attack. Sarah's account suddenly accessing production systems is abnormal. The server suddenly creating thousands of files is abnormal. The detection system flags these behaviors for investigation.
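A minimal sketch of the two checks in that example follows. It assumes hypothetical names (`Baseline`, `customer-db`, `dc-server-01`) and a z-score threshold of 4 chosen purely for illustration: a set of systems each user normally touches, and a mean-and-standard-deviation profile of a server's daily file creation. Commercial tools model far more dimensions, but the principle of learning normal and flagging deviation is the same.

```python
import statistics
from collections import defaultdict


class Baseline:
    """Hypothetical per-entity baseline built from historical activity."""

    def __init__(self):
        self.systems_seen = defaultdict(set)   # user -> systems accessed while baselining
        self.daily_counts = defaultdict(list)  # host -> files created per day

    def learn_access(self, user: str, system: str) -> None:
        self.systems_seen[user].add(system)

    def learn_count(self, host: str, count: int) -> None:
        self.daily_counts[host].append(count)

    def unusual_access(self, user: str, system: str) -> bool:
        """True if the user never touched this system during the baseline period."""
        return system not in self.systems_seen[user]

    def unusual_count(self, host: str, count: int, z_threshold: float = 4.0) -> bool:
        """True if today's count sits more than z_threshold standard deviations above normal."""
        history = self.daily_counts[host]
        if len(history) < 2:
            return False  # too little history to judge
        mean = statistics.fmean(history)
        spread = statistics.stdev(history) or 1.0  # guard against a perfectly flat history
        return (count - mean) / spread > z_threshold


baseline = Baseline()
baseline.learn_access("sarah", "customer-db")
for n in [120, 95, 110, 130, 105, 100, 115]:
    baseline.learn_count("dc-server-01", n)

print(baseline.unusual_access("sarah", "customer-db"))   # False
print(baseline.unusual_access("sarah", "prod-cluster"))  # True
print(baseline.unusual_count("dc-server-01", 125))       # False
print(baseline.unusual_count("dc-server-01", 5000))      # True
```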

The advantage is that this approach works regardless of whether malware uses AI-generated code or traditional code. The behavior being analyzed is the same. What changes is the code that achieves that behavior.

Google is particularly aggressive in deploying AI for defense. They've created tools like Big Sleep, designed to find vulnerabilities in code before attackers do, and CodeMender, which automates patching to shrink the window in which vulnerabilities exist.

More importantly, Google actively scans for malicious AI usage within Gemini. If someone tries to use Gemini to generate malware code or create phishing kits, the system detects it and blocks it. The detection is imperfect, but it raises the cost for threat actors trying to use Gemini for attacks.

Email security is also being transformed by AI. Traditional email filtering looks for known phishing signatures: suspicious links, known malicious attachments, sender reputation. AI-based email security analyzes the full message context: sender behavior patterns, recipient relationship to sender, message content in relation to typical business communication.

When someone sends a highly personalized message asking for access to sensitive systems, traditional email security might miss it because nothing in the message looks inherently malicious. AI-based security flags it because the message pattern is unusual relative to historical communication.
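The "unusual relative to historical communication" signal can be sketched as a relationship check: has this sender actually corresponded with this recipient before? Everything here is an assumption for illustration: the `CommsHistory` class, the three-message threshold, the reserved `.example` addresses, and the idea that an upstream classifier has already tagged the message as asking for access. A compromised colleague's account would pass this check, so it complements content and authentication signals rather than replacing them.

```python
from collections import Counter


class CommsHistory:
    """Counts past messages per (sender, recipient) pair from hypothetical mail logs."""

    def __init__(self, past_messages):
        self.pairs = Counter(past_messages)

    def is_unusual(self, sender: str, recipient: str, asks_for_access: bool,
                   min_prior: int = 3) -> bool:
        """Flag access requests from senders with little or no history with this recipient."""
        prior = self.pairs[(sender.lower(), recipient.lower())]  # missing pairs count as 0
        return asks_for_access and prior < min_prior


history = CommsHistory(
    [("it-helpdesk@acmecorp.com", "sarah@acmecorp.com")] * 12
    + [("vendor@partner.example", "sarah@acmecorp.com")] * 2
)
print(history.is_unusual("it-helpdesk@acmecorp.com", "sarah@acmecorp.com", True))            # False
print(history.is_unusual("portal-team@contractor-hub.example", "sarah@acmecorp.com", True))  # True
print(history.is_unusual("vendor@partner.example", "sarah@acmecorp.com", False))             # False
```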

The scale advantage matters too. AI-based detection can analyze billions of emails, looking for phishing campaigns that operate at massive scale. Human review of even a tiny fraction of email traffic is infeasible. AI makes it possible to detect patterns in email traffic that humans would never spot.

What's emerging is an adversarial dynamic. Threat actors use AI to attack. Defenders use AI to detect. Threat actors improve their AI techniques. Defenders improve their detection. The pace of innovation on both sides is accelerating.

The good news is that defenders have some inherent advantages. Defenders have visibility into systems being attacked. Threat actors operate in the dark, inferring what defense systems are deployed through trial and error. Defenders can instrument their systems to collect telemetry. Threat actors can only observe the effects of their attacks.

But the arms race is real. It's not a temporary phase. It's the future of security.

AI significantly reduces the time to create phishing kits from hours to minutes and increases the success rate fivefold. Estimated data.

Organizational Preparedness and the Detection Gap

Most organizations are unprepared for AI-augmented threats.

This isn't a cynical assessment. It reflects what we're seeing in the market. Most organizations are still using security tools from 5-10 years ago. They're still relying on antivirus software that uses signature-based detection. They're still relying on perimeter firewalls that filter traffic by port and address. They're still doing incident response the old way: detect incident, investigate, contain, eradicate.

That playbook doesn't work against threats where the attack code is constantly changing and the attack is informed by real-time intelligence about the defense environment.

The detection gap is widening. Threat actors are moving faster than defenders. They're adopting AI-augmented techniques faster than defenders are deploying detection. They're finding vulnerabilities faster than defenders can patch them.

The organizations that will survive this transition are those that treat it as a fundamental shift in the threat landscape: not "we need to update our tools" but "we need to completely rethink how we approach security."

What does preparedness look like?

First, inventory your attack surface honestly. How many systems are you running? How many are patched? How many are monitored? Most organizations dramatically underestimate their attack surface. You can't defend what you don't know you have.
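The three inventory questions above (how many systems, how many patched, how many monitored) reduce to simple set comparisons once you have the data. The sketch below assumes you already have an asset inventory and a list of hostnames discovered by a network scan. `Asset` and `coverage_report` are hypothetical names, and the hostnames are placeholders.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Asset:
    hostname: str
    patched: bool
    monitored: bool


def coverage_report(inventory: list[Asset], discovered: set[str]) -> dict[str, set[str]]:
    """Compare a (hypothetical) asset inventory with hosts actually seen on the network."""
    known = {a.hostname for a in inventory}
    return {
        # Hosts a scan found that nobody recorded: the attack surface you didn't know you had.
        "unknown": discovered - known,
        "unpatched": {a.hostname for a in inventory if not a.patched},
        "unmonitored": {a.hostname for a in inventory if not a.monitored},
    }
```

With an inventory of `web01`, `db01` (unpatched), and `legacy-ftp` (unpatched and unmonitored), and a scan that also turns up `test-vm-7`, the report surfaces `test-vm-7` as unknown. That forgotten machine is exactly the kind of system attackers find first.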

Second, deploy behavioral detection. Invest in EDR (endpoint detection and response) tools. Invest in email security that analyzes behavior, not just signatures. Invest in network monitoring that looks for unusual patterns, not just known-bad traffic.

Third, implement strong authentication. If phishing steals a password but multi-factor authentication prevents account compromise, you've blunted the most common attack vector: stolen credentials. This should be table stakes by now, but many organizations still haven't implemented it universally.

Fourth, segment your network. Don't assume you can prevent all breaches. Assume you will get breached. The goal is to contain the damage. Network segmentation means that compromising one system doesn't immediately give access to everything.
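Segmentation is usually expressed as a default-deny policy with an explicit allowlist of flows between segments. The sketch below models that policy in a few lines. The segment names, hosts, and ports are hypothetical, and real enforcement lives in firewalls or software-defined networking policy, not application code.

```python
# Hypothetical host-to-segment map; real deployments pull this from a CMDB or network controller.
SEGMENT_OF = {
    "laptop-17": "user",
    "web01": "dmz",
    "app01": "app",
    "db01": "data",
}

# Allowed (source segment, destination segment, port) flows. Everything else is denied.
ALLOWED_FLOWS = {
    ("user", "dmz", 443),
    ("dmz", "app", 8443),
    ("app", "data", 5432),
}


def is_allowed(src_host: str, dst_host: str, port: int) -> bool:
    """Default-deny check for a flow between two hosts."""
    src = SEGMENT_OF.get(src_host)
    dst = SEGMENT_OF.get(dst_host)
    if src is None or dst is None:
        return False  # unknown hosts are denied by default
    if src == dst:
        return True  # simplification: traffic inside one segment is permitted here
    return (src, dst, port) in ALLOWED_FLOWS
```

Under this policy, a phished laptop can reach the web tier on port 443 but cannot open a database connection to `db01` directly. That is the containment the paragraph describes.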

Fifth, actively hunt for threats. Don't wait for automated detection to find them. Regularly search your logs and systems for indicators of compromise. Many intrusions surface only through proactive hunting, because capable attackers work to avoid tripping automated alerts.
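The simplest form of hunting is sweeping logs for known indicators of compromise. The sketch below assumes plain-text log lines and hypothetical indicator sets. The addresses come from reserved documentation ranges, and `login-verify.example` is a placeholder domain. In practice, indicators come from threat intelligence feeds, and hunting goes well beyond string matching.

```python
import re

# Hypothetical indicators; in practice these come from threat-intelligence feeds.
BAD_IPS = {"203.0.113.45", "198.51.100.7"}
BAD_DOMAINS = {"login-verify.example"}

IPV4 = re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b")


def hunt(log_lines):
    """Yield (line_number, indicator) for every known-bad IP or domain found."""
    for number, line in enumerate(log_lines, start=1):
        for ip in IPV4.findall(line):
            if ip in BAD_IPS:
                yield number, ip
        lowered = line.lower()
        for domain in BAD_DOMAINS:
            # Substring match also catches subdomains (and may over-match; review hits).
            if domain in lowered:
                yield number, domain
```

Run against a firewall log, this returns each matching line number and the indicator that matched, giving an analyst a starting point for investigation.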

Sixth, engage in intelligence sharing. If a threat actor is running a campaign against your industry, you need to know about it. This requires participating in industry threat intelligence communities and sharing indicators of compromise with peers.

The organizations that do these things are still vulnerable, but they're significantly better positioned than those that don't.

Specific Attack Vectors: Social Engineering on Steroids

Social engineering has always been effective. AI is making it devastatingly effective.

The traditional social engineering attack requires effort. An attacker researches a target, identifies a weakness, and crafts a personalized pretext. The process is manual, time-intensive, and doesn't scale well.

AI-augmented social engineering automates that work. Instead of researching and crafting five personalized attacks, an attacker can now research and craft five hundred.

Here's how it works in practice. An attacker wants to compromise an organization. They use AI to scrape all publicly available information: LinkedIn profiles, GitHub commits, conference presentations, news articles, Twitter posts, job postings. The AI aggregates this information into a knowledge graph showing relationships, roles, and decision-making authority.

The AI then identifies the most valuable targets. Instead of attacking random employees, it identifies people in procurement, finance, IT administration, or security roles. These are people who have access to valuable systems or can be tricked into granting access.

Next, the AI analyzes the target's communication patterns. What terminology do they use? What are their interests? What are their typical communication styles? This information is used to craft messages that feel natural coming from people they know or trust.

Finally, the AI generates a customized social engineering attack. It might be a message referencing a legitimate project the target is working on, requesting urgent access to a system. It might be a message from a trusted vendor mentioning a critical security patch that needs immediate installation. It might be a message from a senior executive requesting an urgent wire transfer for acquisition preparation.

Each message is personalized, references legitimate business contexts, and uses communication patterns that feel natural. These messages bypass human detection because they're not trying to trick you with generic phishing. They're trying to trick you with legitimate-sounding requests that reference real information about you and your organization.

The result is devastatingly effective. Users who can spot generic phishing emails get caught because this doesn't feel like phishing. It feels like normal business communication.

Defense requires organizational culture changes. You need employees to understand that even personalized requests should be verified independently. If a senior executive asks for a wire transfer, don't respond to the email. Call them directly on a known number. If someone requests urgent system access, don't grant it immediately. Verify the request through normal channels. If a vendor mentions a critical patch, don't install it immediately. Verify the vulnerability through independent sources.

You also need compensating controls. If a user can't grant critical access themselves, then social engineering targeting them has limited impact. If transfers above a certain amount require additional approval, then an attacker has to deceive several people rather than one. If security policies prevent the installation of unapproved patches, then social engineering can't directly compromise systems.
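The approval-threshold control can be sketched as a small rule. Requesters can never approve their own transfer, and large transfers need two independent approvers. `TransferRequest` and the 10,000 threshold are hypothetical values for illustration, not a recommendation for any specific organization.

```python
from __future__ import annotations

from dataclasses import dataclass, field

APPROVAL_THRESHOLD = 10_000  # hypothetical limit, in the account's currency


@dataclass
class TransferRequest:
    requester: str
    amount: float
    approvers: set[str] = field(default_factory=set)

    def approve(self, user: str) -> None:
        # Separation of duties: the person asking cannot also approve.
        if user == self.requester:
            raise PermissionError("requesters cannot approve their own transfers")
        self.approvers.add(user)

    def can_execute(self) -> bool:
        # Small transfers need one independent approver; large ones need two.
        required = 2 if self.amount >= APPROVAL_THRESHOLD else 1
        return len(self.approvers) >= required
```

A convincing fake executive email might persuade one finance employee, but a 250,000 transfer stays blocked until a second, independent person signs off.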

But ultimately, the best defense against sophisticated social engineering is accepting that some percentage of users will fall for it. Instead of trying to prevent all social engineering, implement detection. Monitor for unusual account behavior. Monitor for unusual system access requests. Monitor for unusual data access patterns. When social engineering succeeds, detect it quickly and respond.

The Vulnerability Research Race and Zero-Day Acceleration

Zero-day vulnerabilities are becoming less rare.

Historically, zero-day vulnerabilities (vulnerabilities unknown to vendors) were valuable and rare. Security researchers might find one per project per year. Nation-states and advanced threat actors would stockpile zero-days, using them sparingly because they knew they'd eventually be discovered and patched.

AI-assisted vulnerability research is changing this. The rate of zero-day discovery is accelerating. Tools like Big Sleep can analyze millions of lines of code and identify potentially vulnerable patterns in hours.

What's particularly significant is that this capability is available to threat actors, not just defenders. If Google's Big Sleep finds vulnerabilities that researchers missed, then similar techniques can be used by attackers who have access to code.

The dynamics are shifting. Zero-days are becoming less valuable because they're being discovered faster. The time between discovery and disclosure is shrinking. The time between disclosure and public exploit availability is shrinking. The time between public exploit and widespread compromise is shrinking.

This compresses the window available for defenders to patch systems before they're exploited. In the old model, you might have weeks between zero-day discovery and exploit availability. In the new model, you might have days. In the worst case, you might have hours.

The defense has to shift from "patch everything immediately" (impossible for many organizations) to "identify critical systems, patch those first, and implement compensating controls for systems you can't patch immediately."
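"Identify critical systems, patch those first" amounts to a ranking function over scanner findings. The sketch below uses a hypothetical weighting: severity score, doubled for business-critical hosts and boosted for internet exposure and public exploit availability. The weights and field names are assumptions, and the CVE fields hold placeholders rather than real identifiers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    host: str
    cve: str  # identifier from your scanner; values in the example are placeholders
    cvss: float  # base severity score, 0.0-10.0
    critical_host: bool
    internet_facing: bool
    exploit_public: bool


def priority(f: Finding) -> float:
    """Hypothetical weighting: severity, boosted by business and exposure context."""
    score = f.cvss
    if f.critical_host:
        score *= 2
    if f.internet_facing:
        score += 3
    if f.exploit_public:
        score += 5
    return score


def patch_queue(findings):
    """Return findings ordered from most to least urgent."""
    return sorted(findings, key=priority, reverse=True)
```

With this weighting, a 7.5-severity bug on an internet-facing payments API with a public exploit (priority 23) outranks a 9.8 on an isolated laptop (priority 9.8). Whatever falls below your patching capacity is where compensating controls go.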

This also changes the economics of vulnerability research. Security researchers used to hoard zero-days, knowing their value. If they reported a zero-day to the vendor through a bug bounty, they'd get paid once. If they sold it to a threat actor, they'd also get paid once, usually for more money, but at serious legal and ethical risk.

Now that zero-days are easier to find, the incentive structure is changing. Some researchers are transitioning from zero-day hunting to vulnerability assessment and penetration testing, where they're paid to find vulnerabilities in systems and help organizations fix them. Others are staying in zero-day hunting but facing increased competition and lower prices as the supply increases.

For organizations, this means more vulnerabilities are being discovered in your software. More zero-days exist. More threat actors have exploit code. The vulnerability management problem is getting harder, not easier.

The only viable approach is recognizing that you can't patch everything immediately and building your security architecture around that constraint. Use compensating controls. Use network segmentation. Use detection. Plan for inevitable compromise and focus on containing it.

Building Resilient Defenses Against AI-Powered Attacks

You can't prevent all attacks. You can build systems that detect and contain them.

Resilient defense has several layers. None are perfect individually. Together, they create enough friction that simple attacks become expensive and complex attacks become detectable.

Layer 1: Prevention. Use controls to prevent attacks when possible. Patch systems. Implement access controls. Use network segmentation. Deploy web application firewalls. These aren't foolproof, but they stop the easiest attacks.

Layer 2: Detection. Use behavioral analysis to detect when prevention fails. Deploy EDR tools on endpoints. Deploy network detection tools on network segments. Deploy email security tools on email systems. These tools look for suspicious behavior that suggests an attack is underway.

Layer 3: Containment. When attacks are detected, respond quickly to limit damage. Have incident response playbooks. Have communication channels for rapid response. Have backup systems so you can restore to a known-good state.

Layer 4: Recovery. Plan to restore systems to a known-good state. Maintain backups. Document recovery procedures. Test them regularly. Don't assume you can prevent all compromises. Plan to recover from them.

Layer 5: Learning. After incidents, analyze what happened. What failed? What detection worked? What could have been better? Use this information to improve the security architecture.

The organizations that survive attacks are the ones with good incident response and recovery capabilities, not necessarily the ones that prevent all attacks. The ones that don't survive are the ones that get attacked and have no way to recover.

This requires investment in people, not just tools. Tools don't detect threats. People analyzing tool outputs detect threats. Tools don't respond to threats. People execute response playbooks. Tools don't recover systems. People execute recovery procedures.

The security industry has sold organizations on the idea that good tools will protect them. The reality is that good people with adequate tools will protect them. The people problem is harder to solve and costs more than the tool problem, so organizations underinvest in people.

If you're building a security team, prioritize hiring strong incident responders and threat hunters. These roles are harder to fill and more valuable than additional tool administrators.

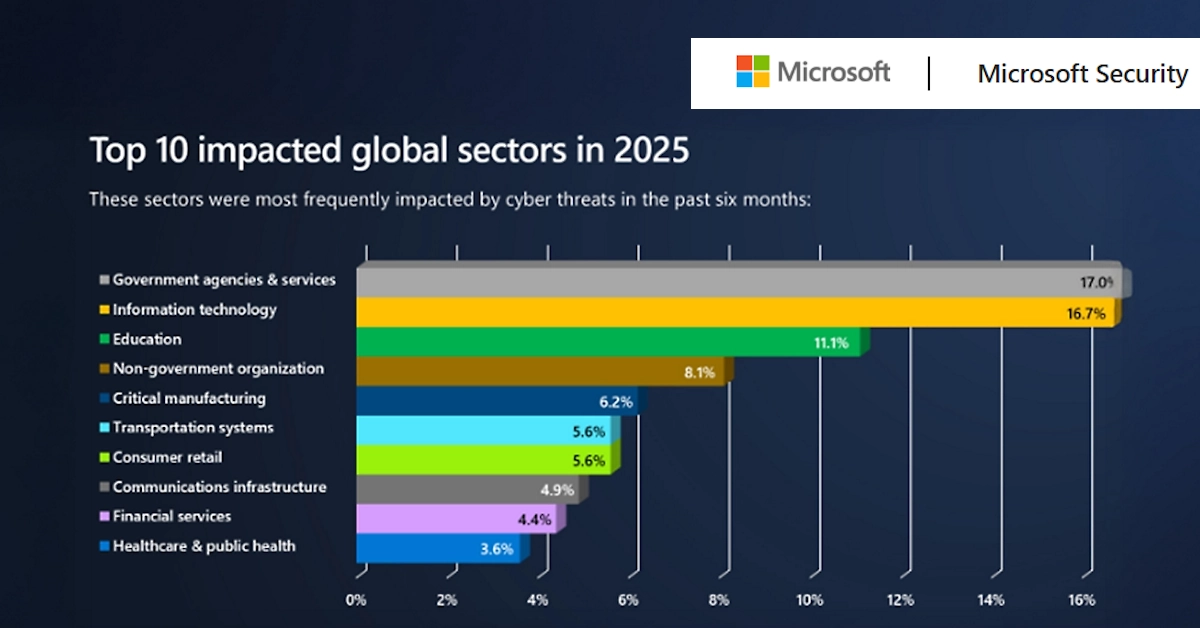

Industry-Specific Threats and Sector Analysis

Different industries are being targeted differently.

Financial services are experiencing the most advanced social engineering attacks. Threat actors are using AI to analyze financial systems, identify decision-making processes, and target people with authority to move money. A growing share of wire fraud losses now appears to involve AI-assisted social engineering.

Healthcare is being targeted for both ransomware and data theft. AI is being used to identify valuable data (patient records, research data) and craft phishing messages targeting healthcare workers. The urgency inherent in healthcare environments makes workers more likely to make mistakes under pressure.

Manufacturing is being targeted for IP theft and supply chain compromise. AI is being used to identify valuable IP, understand supply chains, and identify personnel who have access to critical systems or information.

Government and defense are being targeted by state-sponsored actors using sophisticated AI-assisted reconnaissance and social engineering. The stakes are high enough that nation-states are willing to invest in custom tools.

Retail and e-commerce are being targeted for payment card data and customer information. Attackers are applying AI-assisted vulnerability research to card processing systems, looking for weaknesses they can exploit at scale.

The common thread across all sectors is that AI is being used to improve targeting, persistence, and evasion. Attackers are smarter about who they attack, better at staying undetected, and faster at adapting when defenses evolve.

Industry-specific defenses look different. Financial services might focus on anomaly detection in transaction processing. Healthcare might focus on data loss prevention and medical device security. Manufacturing might focus on OT (operational technology) security and supply chain verification.
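As one illustration of anomaly detection in transaction processing, the sketch below flags an amount that sits more than three standard deviations from an account's history. The threshold and the "too little history means manual review" rule are assumptions. Real fraud systems combine many features (payee, timing, device), not just amount.

```python
from __future__ import annotations

import statistics


def is_anomalous(history: list[float], amount: float, threshold: float = 3.0) -> bool:
    """Flag an amount more than `threshold` standard deviations from the account's history."""
    if len(history) < 2:
        return True  # not enough history to judge: route to manual review
    mean = statistics.mean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        return amount != mean  # every past amount was identical
    return abs(amount - mean) / stdev > threshold
```

For an account that usually moves between 950 and 1,300, a payment of 1,250 passes, while a sudden 48,000 transfer is held for review. That check does not depend on how convincing the request that triggered it was.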

But the underlying principle is the same: assume you will be breached, and focus on detection and containment.

Future Threat Trajectory: What's Coming

The threat landscape will continue evolving. Here's what security researchers are watching for.

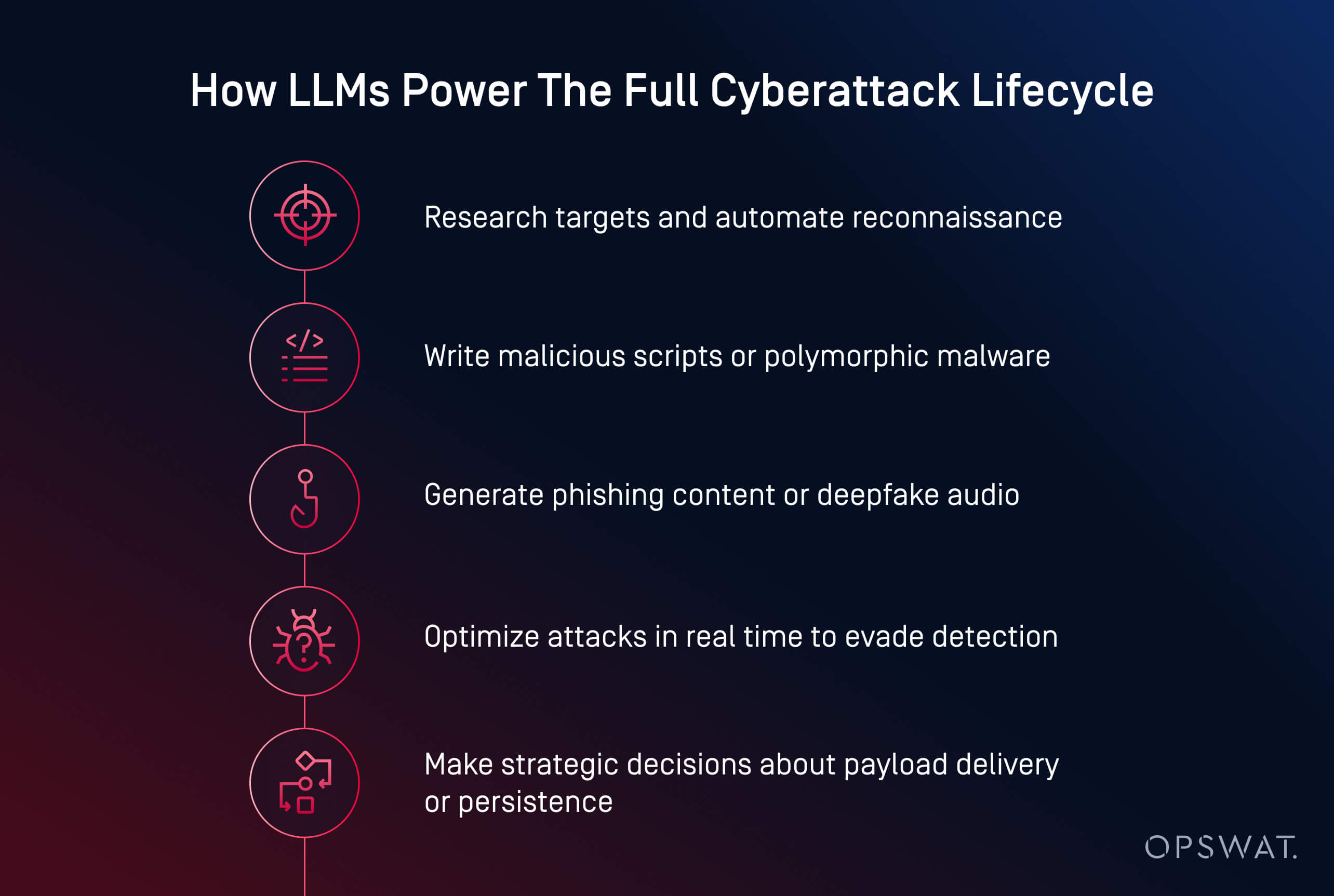

Phase 1 (Present): AI is being used opportunistically for specific attack stages. Distillation to steal models. Malware that uses AI to rewrite code. Phishing that uses AI to personalize messages. Social engineering that uses AI for reconnaissance.

Phase 2 (2025-2026): AI will become more integrated into attack workflows. Instead of using AI for specific tasks, threat actors will use AI throughout the entire attack chain. Initial reconnaissance uses AI. Social engineering uses AI. Exploitation uses AI. Post-exploitation uses AI. Evasion uses AI.

Phase 3 (2026-2027): Custom AI tools built specifically for offensive operations will become available. Threat actors will commission tools optimized for their specific targets. These tools will be more effective than general-purpose AI because they're designed for attack.

Phase 4 (2027+): AI-augmented attacks will become the default. Non-AI attacks will become the exception. Defending against attacks will require AI-augmented detection as a baseline expectation. Organizations without AI-based security will be considered negligent.

The acceleration curve is steep. What takes three years to mature in other technology domains seems to be happening in one year in the threat space. The pressure on defenders is intense.

What will separate success from failure? Adapting quickly. Investing in behavioral detection. Building strong incident response capabilities. Treating this not as a problem to be solved once, but as an ongoing adversarial dynamic.

The good news is that the defense has fundamental advantages. Defenders have visibility. Defenders can learn from attacks. Defenders can share intelligence. Organizations that act on these advantages now will be better positioned as the threat landscape continues to evolve.

Immediate Actions: What You Should Do Today

You don't need to solve this problem completely. You need to make measurable progress.

Start with these specific actions:

This week:

- Inventory your critical systems. Which systems would cripple your business if compromised? Those are your priority targets for security improvement.

- Check which of your systems have multi-factor authentication enabled. If critical systems don't have MFA, enable it.

- Review your incident response plan. Is it current? Do people know their roles? Is it actually practiced?

This month:

- Deploy behavioral endpoint detection on critical systems. Start with servers, not necessarily all endpoints.

- Evaluate your email security. Does it go beyond signature-based detection? Can it analyze sender behavior?

- Conduct a vulnerability scan of critical systems. What vulnerabilities exist? What's patched, what's not?

This quarter:

- Implement network segmentation. Critical systems should be in their own network segments with restricted access.

- Conduct threat hunting. Search your logs for indicators of compromise. Have you been breached and not realized it?

- Develop your incident response team. Hire or train people who can respond to incidents quickly.

This year:

- Implement a security awareness program focused on social engineering. Make it specific to your organization, not generic training.

- Develop a patch management process that prioritizes critical systems.

- Build relationships with threat intelligence providers. You need to know about threats targeting your industry.

These aren't complete solutions. But they move you significantly closer to being prepared for AI-augmented threats. And they're achievable without requiring a complete security overhaul.

Conclusion: This Is Not a Hypothetical Threat

Threat actors are using AI in production attacks right now. This is not a future threat. This is not a theoretical risk. This is happening.

Organizations that act now, implementing detection and resilience capabilities, will survive this transition. Organizations that wait for their security tools to magically solve the problem will get caught off guard.

The threat landscape is fundamentally different from what it was two years ago. The gap between threat actor sophistication and defender capability is widening. The only way to close that gap is to treat this as a priority and invest accordingly.

What's particularly important to understand is that you don't need perfect security. You need better security than attackers expect. You need detection capabilities that work even when attacks are AI-augmented. You need response capabilities that work even when attacks are sophisticated.

You need to accept that breaches will happen. That breach sophistication will increase. That adaptation will be necessary. And you need to build your security architecture around those realities.

The organizations that will thrive in this environment are the ones that understand that security is not a state of being secured, but a process of continuous adaptation to evolving threats. It's uncomfortable. It's expensive. It's necessary.

Start with the basics. Implement multi-factor authentication. Deploy behavioral detection. Build incident response capabilities. Then layer additional controls on top. Every step improves your position.

The threat actors are not going to slow down. The capabilities they're using will only improve. But defenders have advantages too: visibility, intelligence sharing, ability to learn from attacks. Organizations that leverage those advantages will be far better positioned than those that don't.

The transition is happening now. The time to prepare is now. Not next year. Not next quarter. Now.

FAQ

What exactly is a distillation attack?

A distillation attack is a technique where threat actors send hundreds or thousands of carefully crafted prompts to a large language model and record how it responds. They then use those responses to train their own AI model to imitate the original's reasoning patterns and outputs. The result is a free, offline approximation of the model that they fully control: no API costs, no provider safeguards, and a copy they can study at leisure for weaknesses.

How does AI-augmented malware evade detection?

AI-augmented malware uses artificial intelligence to dynamically rewrite its own code in real time, ensuring that no two versions are identical. Traditional signature-based detection tools fail because they rely on identifying consistent malware signatures, but when the malware changes every time it runs, there's no consistent signature to detect. The malware might rewrite itself hundreds of times as it moves through a system, each time adapting to avoid known detection patterns and security technologies present in the environment.

Can state-sponsored groups really use AI for social engineering?

Yes, and they're already doing it. State-sponsored actors use AI to analyze massive amounts of public data about target organizations, build relationship maps, identify decision-makers, and understand organizational structures. They then use this intelligence to craft highly personalized social engineering messages that reference legitimate business contexts and use communication patterns that match the target's normal style. This makes the attacks far more effective because they don't look like generic phishing.

What percentage of attacks now involve AI?

Accurate statistics are difficult because many organizations don't have the visibility to detect AI-augmented attacks, and threat actors don't announce when they're using AI. However, threat intelligence reports from Google and other major security vendors indicate that AI is now integrated into attacks across multiple threat actor groups, from financially motivated cybercriminals to nation-state actors. Some estimates suggest that 15-25% of sophisticated attacks now involve some AI component, and that this percentage is increasing rapidly.

Should I be worried about custom AI tools built specifically for attacks?

Yes, but not immediately. Right now, most threat actors are using distillation attacks to steal existing AI models or using commercial AI services for attacks. Building custom AI tools from scratch requires expertise and resources. However, some sophisticated threat actor groups are actively developing custom tools. If this becomes widespread, it will make attacks more effective because the tools can be optimized specifically for offensive purposes. This is likely to become a major concern within 2-3 years.

How can I detect AI-augmented phishing if it looks legitimate?

AI-augmented phishing is designed to look legitimate, so detection becomes more difficult. The key is implementing multiple layers of defense: multi-factor authentication (so stolen credentials don't grant access), behavioral analysis (looking for unusual account access patterns), and process controls (requiring verification of sensitive requests through independent channels). No single detection method is reliable against sophisticated social engineering, so layered defenses and organizational procedures are essential.

What's the fastest way to improve my security posture against these threats?

Implement multi-factor authentication on all critical accounts and deploy endpoint detection and response (EDR) tools on critical systems. These two changes alone will neutralize a significant portion of AI-augmented attacks. They're relatively fast to implement, don't require major architecture changes, and provide substantial security improvement. After these are in place, focus on incident response capabilities and threat hunting.

Are traditional antivirus solutions adequate for protecting against AI-augmented malware?

No. Traditional antivirus relies on signature-based detection, which fails when malware constantly changes through AI-generated code variations. You need behavioral analysis tools that look for suspicious activity patterns rather than specific malware signatures. Endpoint Detection and Response (EDR) tools are far more effective, though more complex and expensive than traditional antivirus. The security industry is rapidly moving away from signature-based detection for this exact reason.

How quickly are threat actors adopting AI-augmented attack techniques?

Very quickly. The historical adoption curve for new attack techniques is accelerating dramatically. What previously took 3-5 years to go from research to widespread adoption is now happening in 1-2 years. AI-augmented attacks are ahead of schedule compared to historical patterns, which means defenders are under significant time pressure to update their tools and processes. Organizations that don't prioritize this now will find themselves unprepared within the next 6-12 months.

What role does network segmentation play in defending against AI-augmented attacks?

Network segmentation is critical because it limits the damage from successful breaches. If an attacker compromises one system through phishing or vulnerability exploitation, network segmentation prevents them from immediately moving laterally across your entire network. This gives you time to detect the compromise and respond before critical systems are accessed. Even the best phishing prevention and malware detection will fail sometimes, so network segmentation is essential for containing inevitable breaches.

Is there any evidence that Google, Microsoft, or other major AI vendors are intentionally helping threat actors?

No. Major AI vendors have consistently tried to prevent their tools from being used for attacks, implementing usage policies, deploying detection tools, and responding to abuse reports. However, determined threat actors will always find ways around these controls, whether through distillation attacks, payment fraud using stolen credentials, or other techniques. The cat-and-mouse game is ongoing, with vendors deploying new controls and threat actors developing new workarounds.

Key Takeaways

- Threat actors are actively using distillation attacks to clone AI models, creating free offline copies they control completely for malicious purposes

- HONESTCUE malware demonstrates AI-augmented code adaptation in real time, making traditional signature-based detection largely ineffective

- State-sponsored groups from Iran and North Korea are automating intelligence gathering and social engineering at scale using AI analysis of public data

- AI-generated phishing kits create personalized, highly convincing messages at massive scale, defeating traditional anti-phishing controls

- Detection and response capabilities must shift to behavioral analysis and AI-based tools because signature-based security cannot detect constantly-evolving AI-augmented threats

- Organizations unprepared for AI-augmented threats face compressed attack timelines: vulnerabilities discovered faster, exploits developed faster, and detection windows shrinking from weeks to days
