The Unseen Threat: AI Agent Backdoors in Open-Source Repositories [2025]

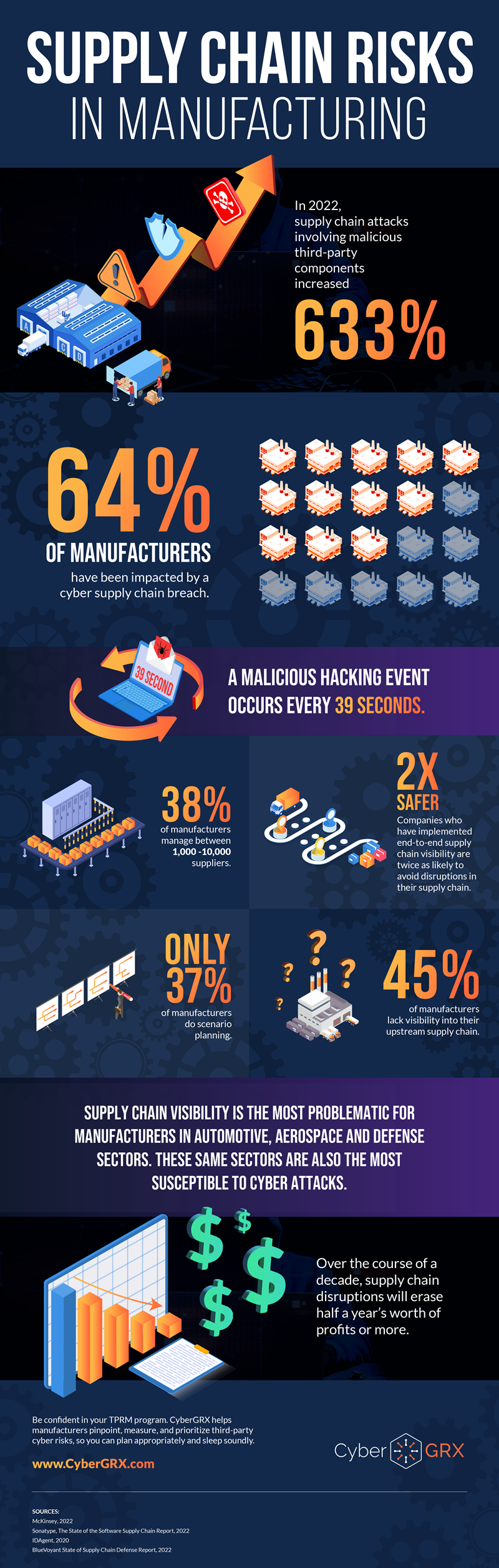

In the realm of open-source software, transparency and collaboration are celebrated as its core strengths. However, recent developments have highlighted a lurking vulnerability that could turn these strengths into weaknesses. This article delves into the world of AI agent backdoors, particularly focusing on the implications of tools like Open Claw, and the glaring gaps in current supply-chain security measures.

TL; DR

- AI Agent Backdoors: Tools like Open Claw can transform any open-source repo into a network of espionage with minimal detection.

- Supply-Chain Scanner Limitations: Current scanners lack the categories needed to identify these AI-driven threats.

- Vulnerability of CLI Tools: The widespread use of CLI-Anything exposes repos to potential exploitation.

- Future Security Measures: Enhanced detection capabilities and AI-driven security solutions are crucial.

- Call to Action: Developers must prioritize security audits and adopt new best practices.

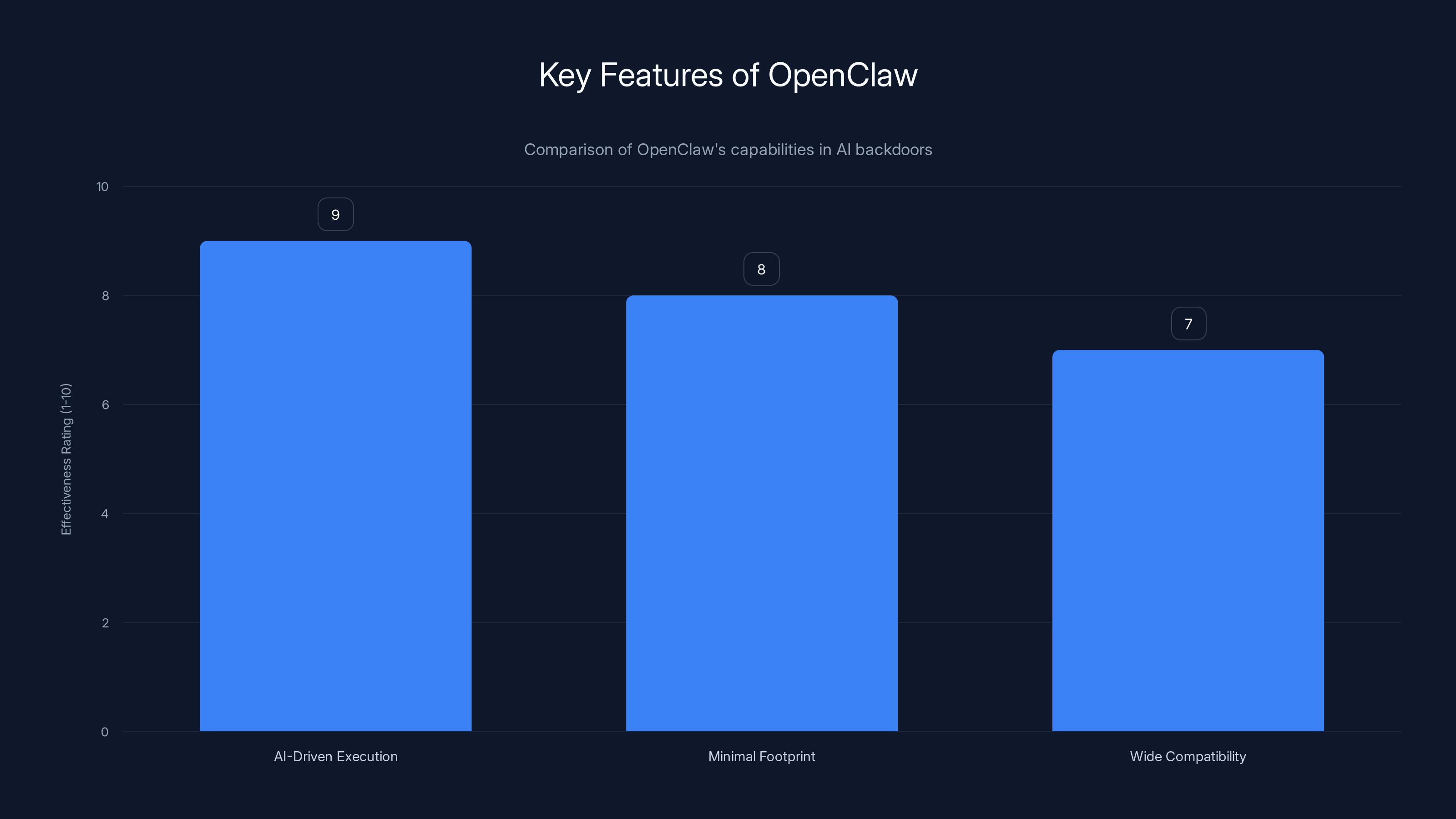

OpenClaw excels in AI-driven execution with a high effectiveness rating, making it a potent tool for stealthy operations.

Understanding AI Agent Backdoors

AI agent backdoors are essentially malicious scripts or commands embedded within software that allow unauthorized access or control. These are not new, but their integration with AI tools like Open Claw introduces a novel threat model. Such backdoors can be activated with a simple command, making them particularly insidious.

What Makes AI Backdoors Unique?

Traditional backdoors required complex setups and often left traces. AI backdoors, however, leverage machine learning algorithms to disguise their presence and operations. This makes them harder to detect using conventional security tools.

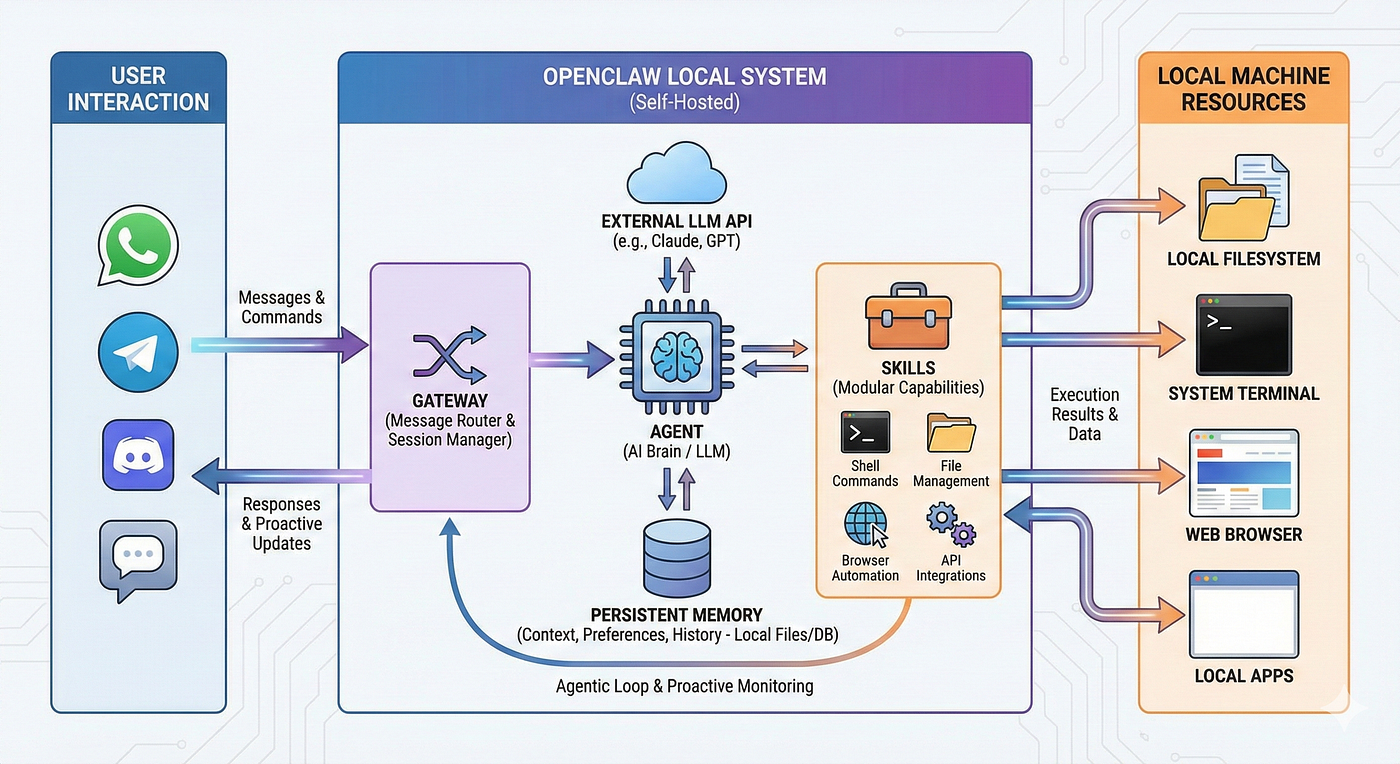

Open Claw: A Case Study

Open Claw illustrates how AI can be both a boon and a bane in cybersecurity. Designed originally as a tool to enhance software efficiency, it can also be repurposed to infiltrate systems. According to a guide on agentic development security, such tools can be manipulated to bypass traditional security measures.

Key Features of Open Claw:

- AI-Driven Execution: Uses AI algorithms to execute commands stealthily.

- Minimal Footprint: Operates with a low profile, leaving minimal traces.

- Wide Compatibility: Supports multiple platforms and programming languages.

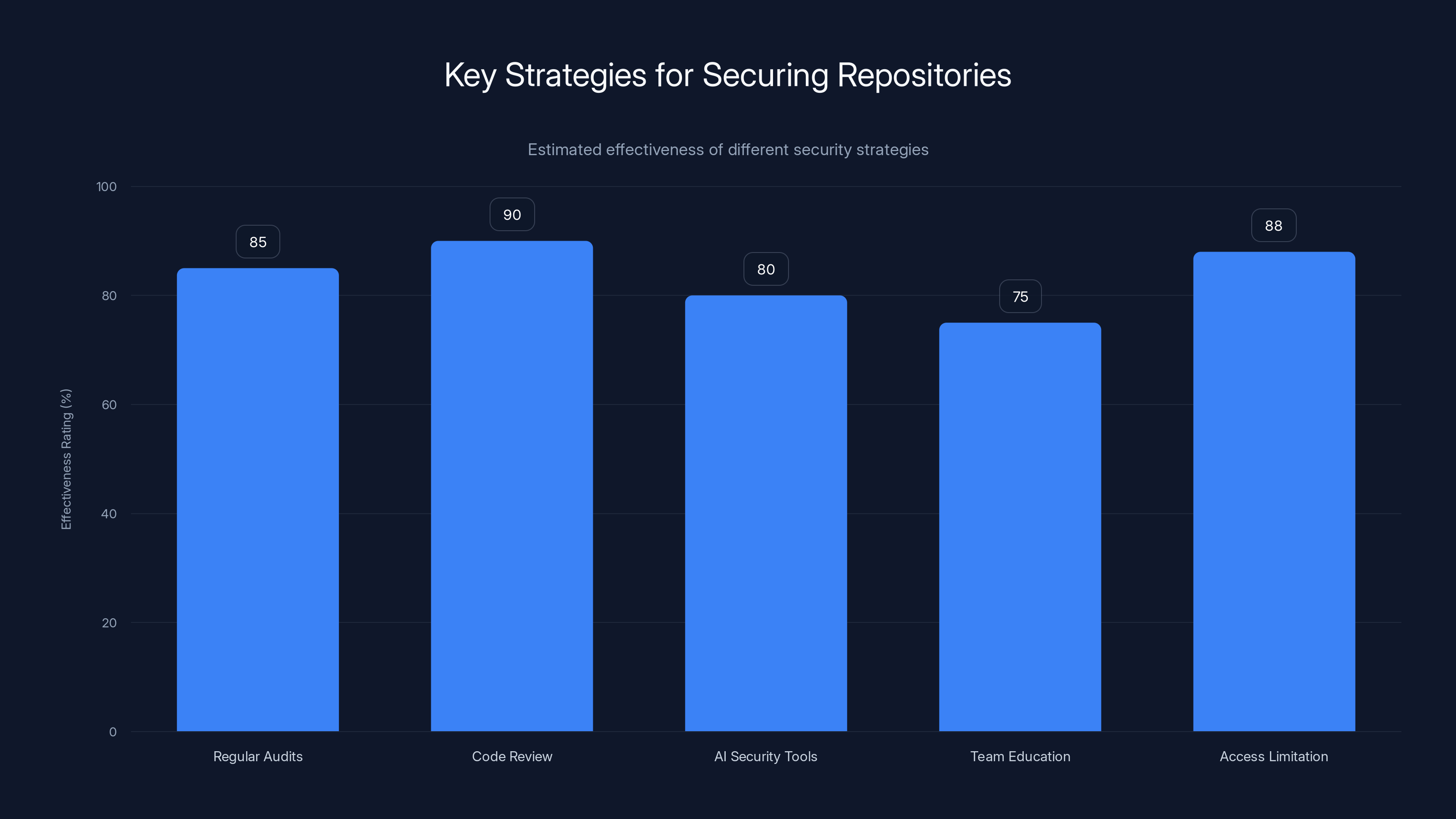

Code review processes and access limitation are highly effective strategies for securing repositories, with ratings of 90% and 88% respectively. (Estimated data)

The Role of CLI Tools in Security Breaches

Command Line Interfaces (CLI) are integral to software development, offering powerful capabilities for code manipulation and execution. However, their power also makes them vulnerable to exploitation.

CLI-Anything: A Double-Edged Sword

CLI-Anything, a tool that creates structured command line interfaces from any repo, exemplifies this duality. While it enhances usability, it also opens avenues for backdoor entry. The BitwardenCLI supply chain attack highlights how such tools can be exploited for unauthorized access.

Advantages and Risks of CLI-Anything:

- Streamlined Operations: Simplifies complex operations into manageable commands.

- Exposed Vulnerabilities: Converts repos into potential targets for malicious actors.

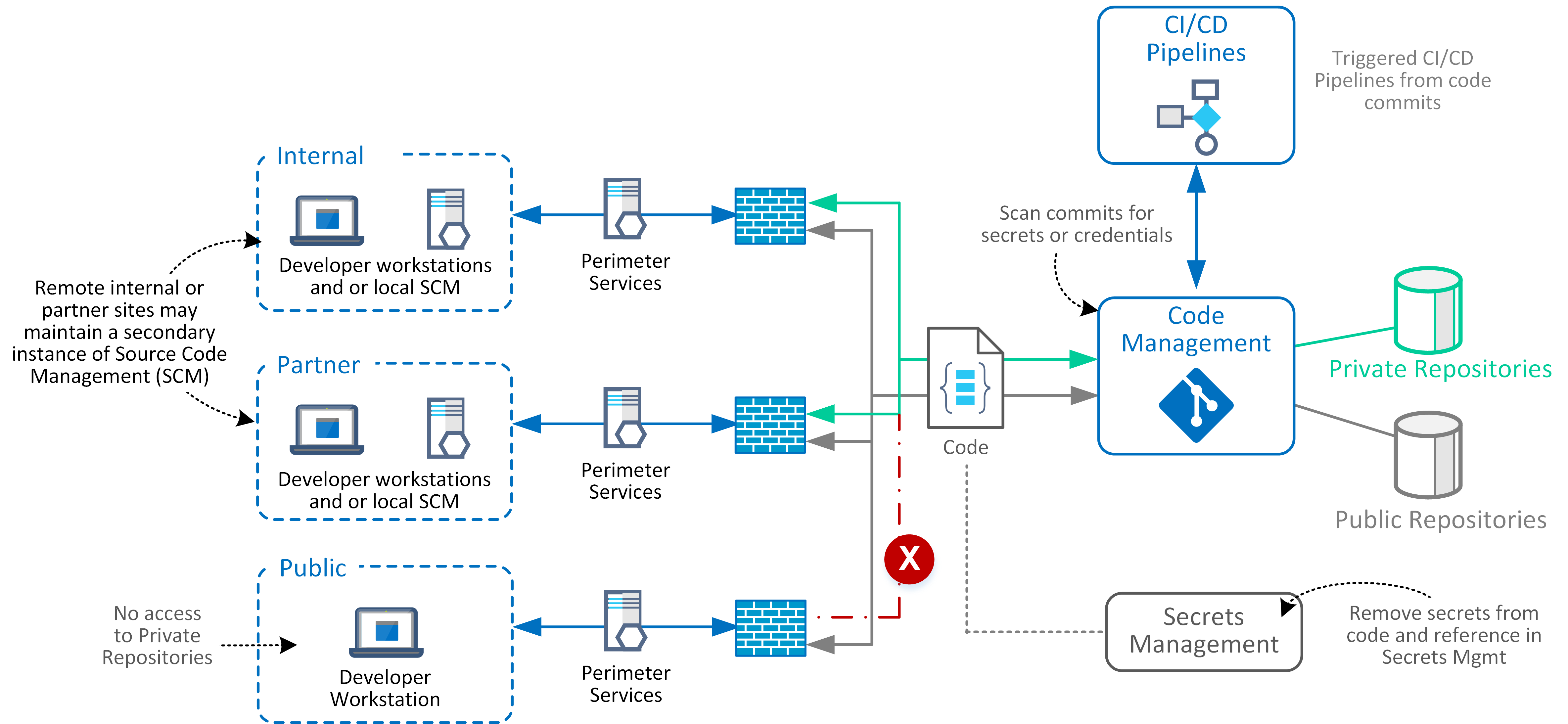

Why Supply-Chain Scanners Fail

Supply-chain scanners are designed to detect known vulnerabilities and suspicious activities. However, AI backdoors present a new challenge that existing scanners are not equipped to handle.

Limitations of Current Scanners

- Lack of Detection Categories: Most scanners do not have specific categories for AI-driven threats.

- Static Analysis Limitations: They rely heavily on static analysis, which AI backdoors can evade.

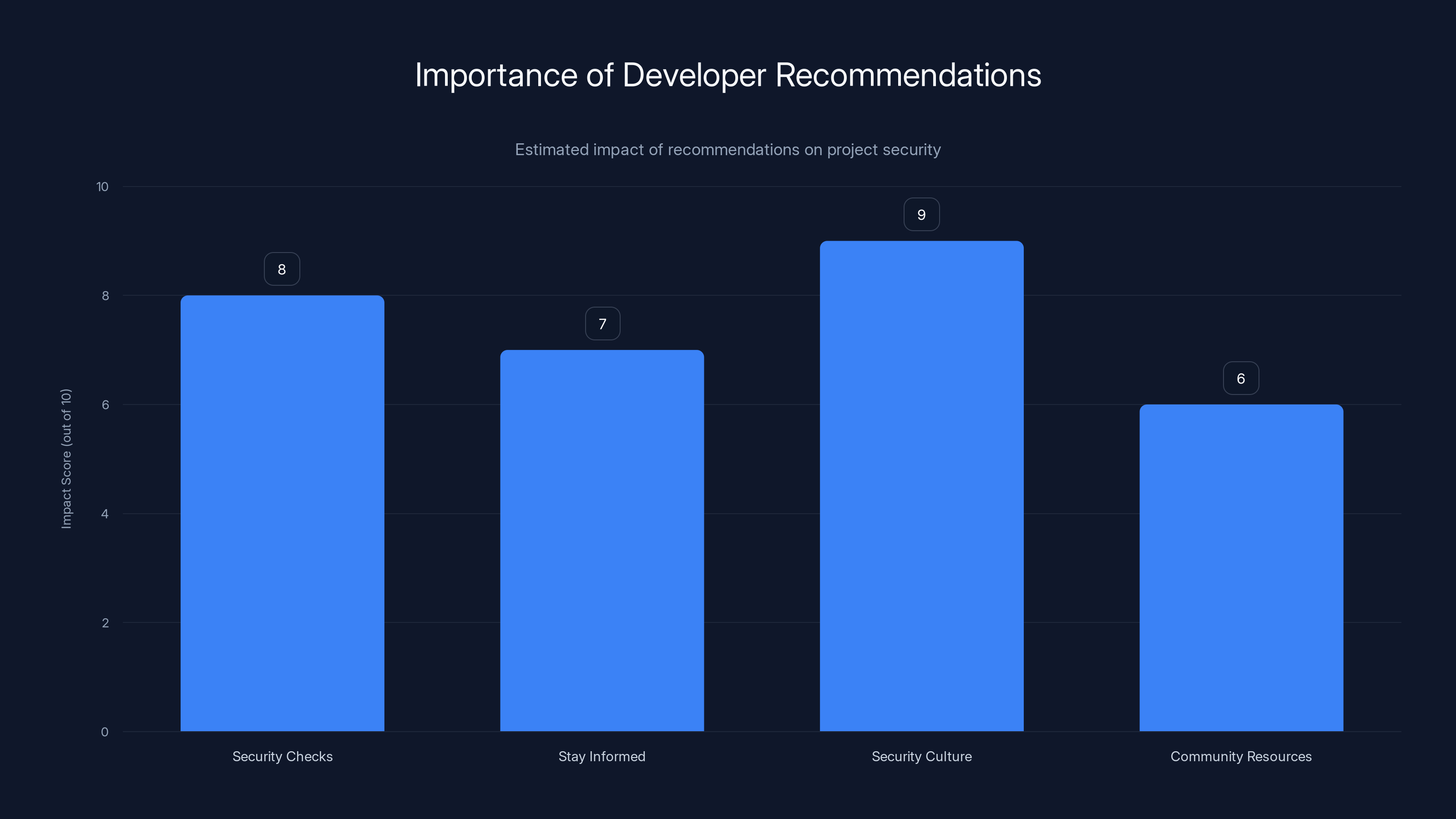

Fostering a security-first culture and integrating security checks early are estimated to have the highest impact on project security. Estimated data.

Practical Implementation Guide: Securing Your Repositories

Securing open-source repositories against AI backdoors requires a multi-faceted approach. Here’s a practical guide to bolster your defenses:

- Conduct Regular Audits: Implement a routine audit schedule to detect unusual patterns.

- Enhance Code Review Processes: Utilize peer reviews and automated tools to scrutinize code changes.

- Incorporate AI Security Tools: Deploy AI-driven security solutions that can adapt to new threats.

- Educate Your Team: Raise awareness about the latest security threats and best practices.

- Limit Access: Restrict access to critical parts of your repositories based on roles.

Common Pitfalls and Solutions

Even with the best intentions, security efforts can fall short due to common pitfalls. Here’s how to avoid them:

Over-reliance on Automated Tools

Pitfall: Believing that automation alone can address all security needs.

Solution: Complement automation with human oversight to catch nuanced threats.

Ignoring Minor Anomalies

Pitfall: Dismissing minor irregularities as false positives.

Solution: Investigate anomalies thoroughly, as they may indicate a backdoor attempt.

Future Trends in AI Security

As technology evolves, so too must our security measures. Here are some trends to watch in the AI security landscape:

- AI-Enhanced Detection: Future security tools will leverage AI to predict and identify threats proactively.

- Behavioral Analysis: Focusing on behavioral patterns rather than signatures to detect anomalies.

- Collaborative Defense Networks: Sharing threat intelligence across organizations to build a unified defense.

Recommendations for Developers

Developers play a crucial role in safeguarding open-source projects. Here are some actionable recommendations:

- Prioritize Security in Development: Integrate security checks early in the development cycle.

- Stay Informed: Keep abreast of the latest security trends and tools.

- Foster a Security-First Culture: Encourage team-wide responsibility for security.

- Leverage Community Resources: Participate in open-source security forums and initiatives.

Conclusion

The integration of AI in open-source software development is a double-edged sword, offering both remarkable potential and significant risks. By understanding the nature of AI agent backdoors and proactively adapting our security measures, we can harness the benefits of AI while mitigating its threats.

FAQ

What is an AI agent backdoor?

An AI agent backdoor is a hidden script or command in software that allows unauthorized access or control, often leveraging AI to conceal its presence.

How do CLI tools contribute to security vulnerabilities?

CLI tools, when misused, can simplify the process for attackers to execute malicious commands within a repository, potentially leading to unauthorized access.

Why are current supply-chain scanners ineffective against AI backdoors?

Most scanners lack the detection categories necessary to identify AI-driven threats, which often evade traditional static analysis methods.

What are some best practices for securing open-source repositories?

Conduct regular audits, enhance code review processes, utilize AI security tools, educate teams, and limit access based on roles.

How can developers stay informed about security trends?

Participate in security forums, subscribe to cybersecurity newsletters, and attend relevant industry conferences.

What future trends can we expect in AI security?

Expect advancements in AI-enhanced detection, behavioral analysis, and collaborative defense networks to improve threat identification and response.

How can AI be used to enhance security?

AI can analyze large datasets for patterns, predict potential threats, and automate responses to detected anomalies, thereby enhancing overall security.

What role do developers play in security?

Developers are crucial in integrating security into the development process, staying informed about new threats, and fostering a culture of security awareness within their teams.

Key Takeaways

- AI backdoors pose significant risks to open-source repositories.

- Current supply-chain scanners are inadequate for detecting AI-driven threats.

- CLI tools can be exploited for unauthorized access.

- Future security measures must include AI-driven detection capabilities.

- Developers need to prioritize security protocols and education.

Related Articles

- The Ethical Implications of AI Manipulation: A Deep Dive [2025]

- AI-Generated Phishing Attacks: Beyond the Inbox and How to Protect Yourself [2025]

- AI Agents: New Risks Requiring Continuous Monitoring and Oversight [2025]

- GPT-5.5 vs Mythos: Unveiling the Real Cybersecurity Contenders [2025]

- The Complex Dynamics of AI Responsibility: A Case Study on Tumbler Ridge and OpenAI [2025]

- Keeping AI Agents in Check: Preventing Unauthorized Use of Your Credit Cards [2025]

![The Unseen Threat: AI Agent Backdoors in Open-Source Repositories [2025]](https://tryrunable.com/blog/the-unseen-threat-ai-agent-backdoors-in-open-source-reposito/image-1-1778020559848.png)