AI Layoffs vs. AI-Washing: Separating Truth From Corporate Spin [2025]

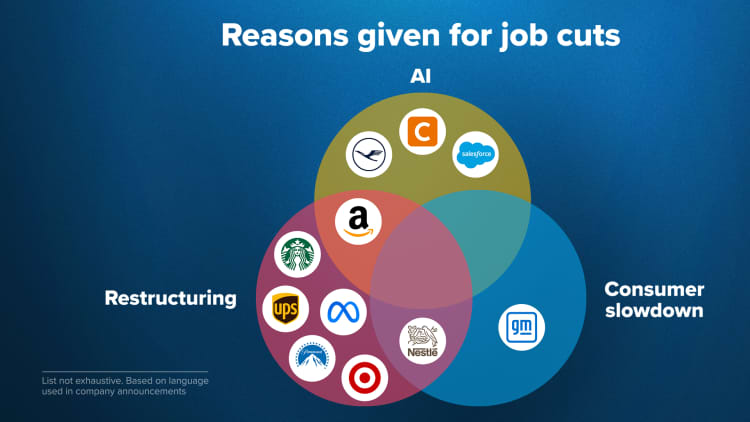

When Amazon announced 18,000 layoffs in early 2024, the official story was clear: artificial intelligence demanded it. The company needed to streamline, adapt, and position itself for the AI future. Sounded reasonable. Sounded strategic. But here's what nobody really asked at the time: was that actually true?

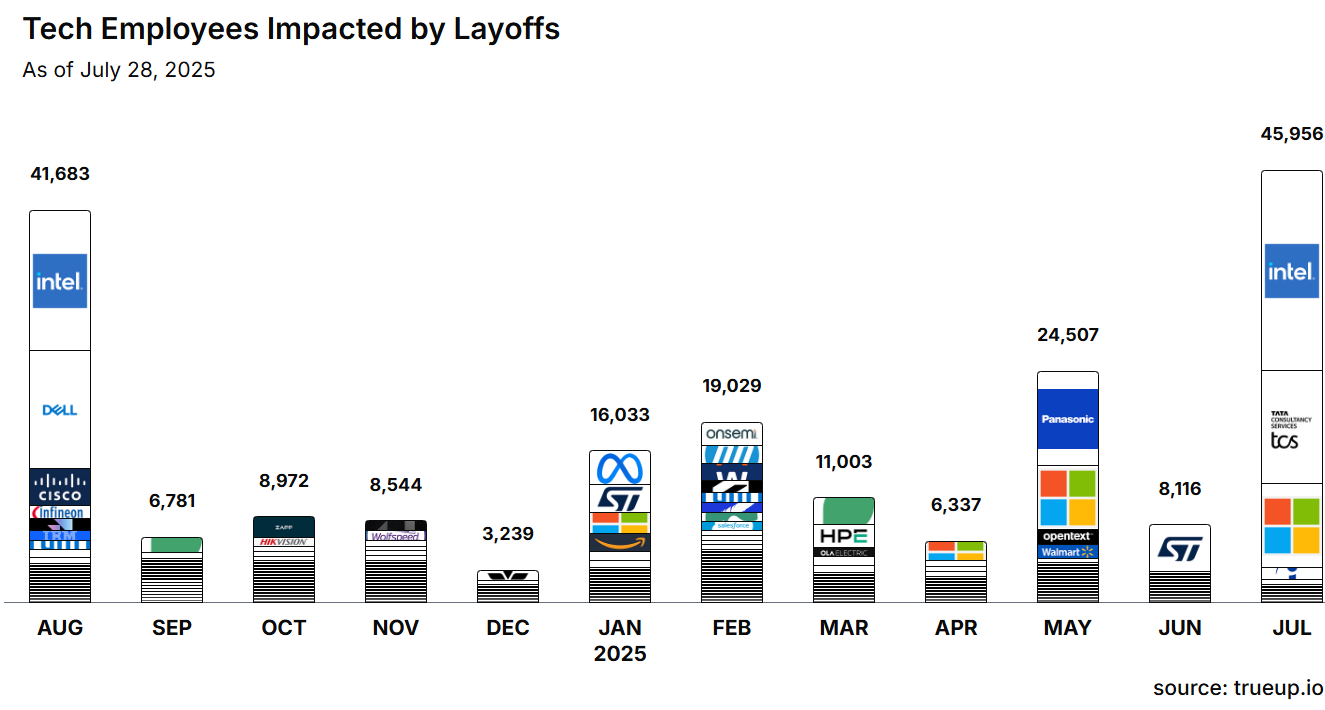

This is the central tension facing the tech industry right now. Over 50,000 workers were laid off in 2025 with AI cited as the primary reason. Companies ranging from Pinterest to Meta to Microsoft all used variations of the same explanation: AI efficiency gains mean we don't need as many people in certain roles anymore.

Except there's growing evidence that something else is happening underneath the surface.

Forrester Research published a detailed analysis in January 2026 that found a troubling pattern. Many companies announcing AI-related layoffs don't actually have mature, production-ready AI applications waiting in the wings to replace those workers. No vetted systems. No tested workflows. No clear automation pathway. Instead, they're citing AI as a future-looking justification for cuts driven by immediate financial pressure.

This phenomenon has a name now: AI-washing. And it's becoming increasingly difficult to distinguish from genuine workforce transformation.

Molly Kinder at the Brookings Institution put it bluntly: saying layoffs were caused by AI is "a very investor-friendly message." It sounds forward-thinking, technological, inevitable. The alternative—admitting that you over-hired during the pandemic boom, that you made poor acquisition decisions, that your business fundamentals need work—that doesn't play as well in earnings calls.

So what's really going on? Which companies are genuinely restructuring, and which are simply using AI as convenient cover for decisions they've already made? More importantly, what should workers, investors, and industry observers actually understand about this trend?

TL;DR

- Over 50,000 layoffs in 2025 cited AI as justification, but Forrester research suggests many companies lack mature AI systems to justify those cuts

- AI-washing is real: companies are attributing layoffs to AI automation while having no deployed solutions ready

- Pandemic over-hiring is the actual culprit for many companies, not genuine AI workforce transition

- Real AI transformation requires specific conditions: mature applications, infrastructure investment, and genuine efficiency gains

- Bottom line: distinguish between companies making strategic AI bets versus those using AI as convenient cover for poor planning

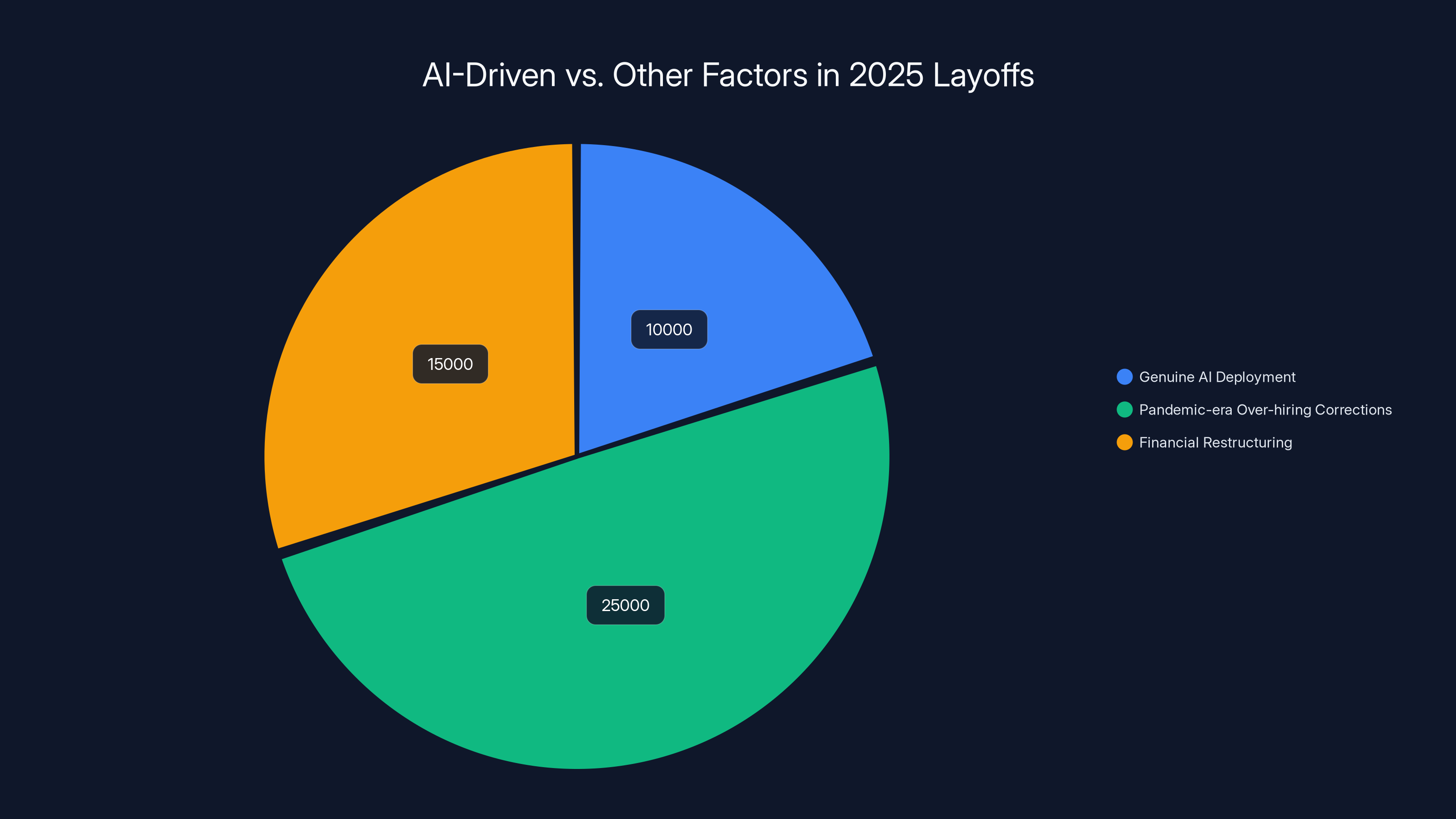

In 2025, while 50,000 layoffs were attributed to AI, only 10,000 were due to genuine AI deployment. The majority were due to over-hiring corrections and financial restructuring. Estimated data.

The Scale of the AI Layoff Phenomenon

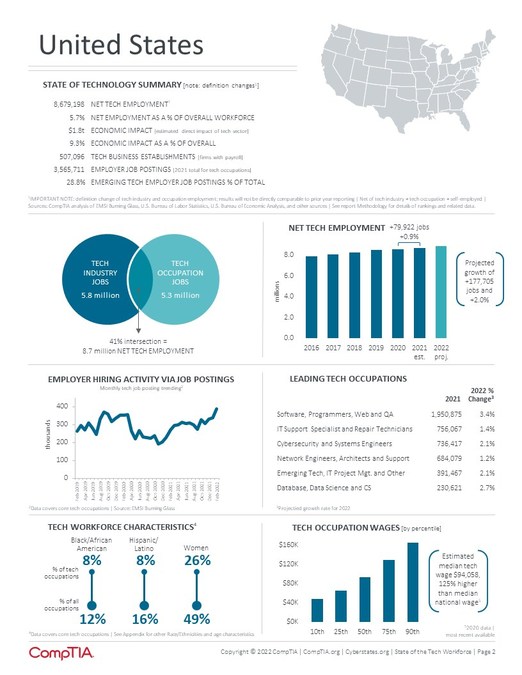

The numbers sound enormous in isolation. Fifty thousand workers. That's a small city's worth of employment. But context matters here.

Across the entire U.S. economy, roughly 200,000 to 250,000 workers file initial unemployment claims in a typical week. So 50,000 layoffs spread across a full year isn't apocalyptic at the macro level. But that's not quite the right way to think about it either.

What matters more is the concentration. These layoffs happened disproportionately in a handful of high-profile companies. Amazon's 18,000 cut roughly 6% of its workforce. Meta's 21,000 reduction was about 13% of headcount. Microsoft cut roughly 10,000 positions. These weren't tiny adjustments. They were significant organizational reshuffles.

The concentration matters because these are the companies setting the narrative. When Amazon's CEO explains layoffs as AI-driven, employees at smaller tech companies start wondering if the same conversation is coming for them. Investors start thinking about AI efficiency across their entire portfolio. The messaging cascades.

But here's what's revealing: the timing doesn't quite add up.

If companies are laying off workers because they're deploying AI systems to handle those tasks more efficiently, you'd expect to see a clear sequence. First, companies invest heavily in AI infrastructure. Second, they build and test applications. Third, once systems are proven, they reduce headcount in roles the AI can handle. It's a logical progression.

Except that's not what actually happened at most companies. The layoffs came first. The AI investments often came later, if at all. Or they'd already been made as part of earlier technology spending, completely separate from the sudden workforce reductions.

Estimated data suggests that only about 35% of companies see margin expansion and 30% see revenue per employee increase after AI-driven layoffs, indicating mixed financial performance.

Pandemic Over-Hiring: The Real Story Most Companies Won't Admit

Here's where the narrative gets uncomfortable for tech leadership.

During 2020-2021, tech companies went absolutely mad with hiring. The pandemic created urgency. Remote work expanded the possible talent pool. Interest rates were near zero, so money was essentially free, and venture capital and corporate capital were abundant. Everyone was hiring to capture growth and market share.

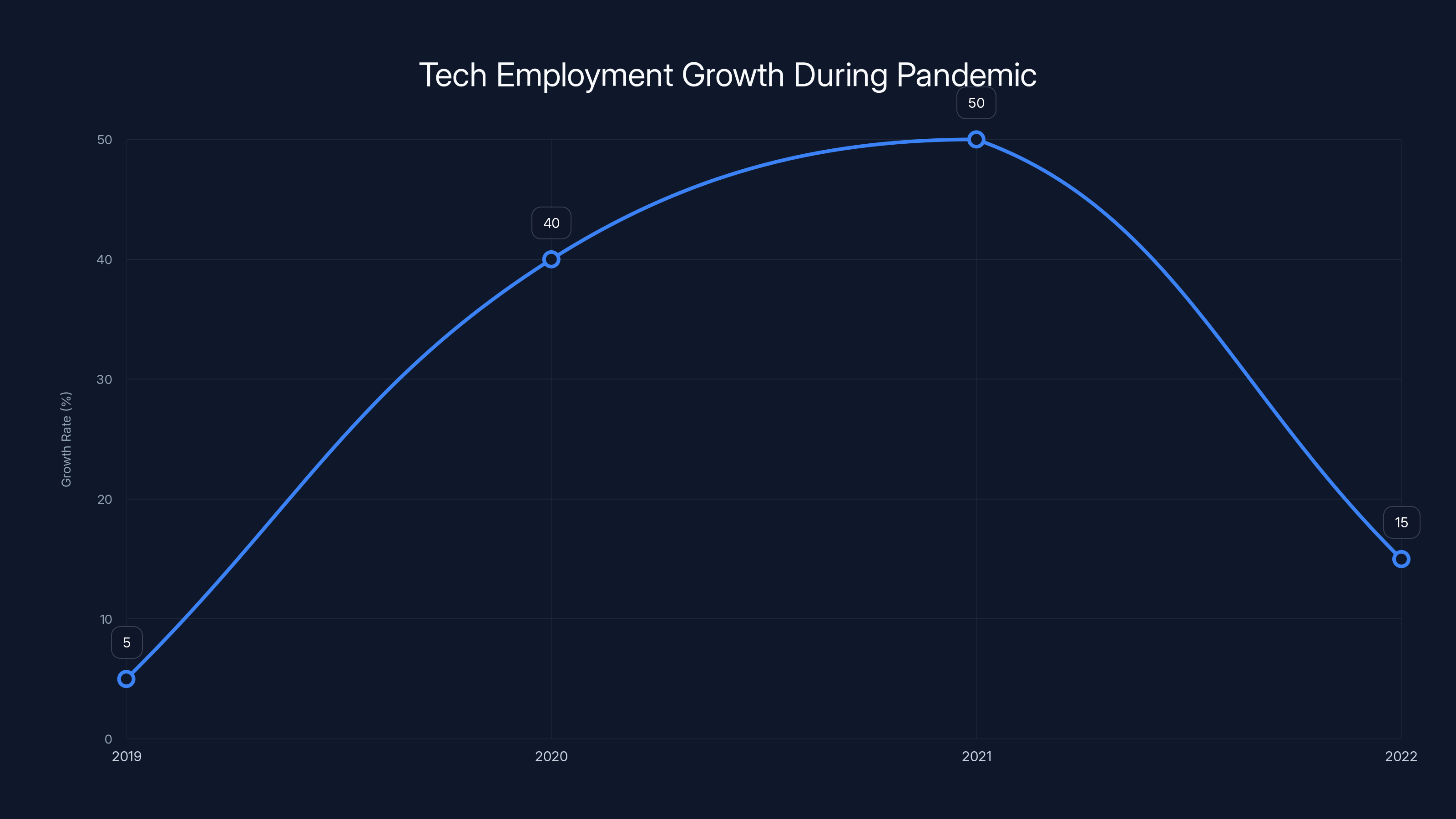

Bureau of Labor Statistics data shows that tech employment grew by roughly 1.2 million jobs between 2020 and early 2022. That was genuinely unsustainable. Companies weren't just hiring incrementally. They were doubling teams in some cases, tripling in others.

Then reality arrived. Supply chain issues hit. Inflation spiked. Interest rates started climbing in 2022. The free-money era ended. And suddenly, all those hiring decisions looked catastrophically premature.

Companies faced a choice: admit they'd massively over-hired in a speculative bubble, or find a more palatable explanation. Enter AI.

The beauty of the AI explanation is that it allows companies to claim they're not correcting a mistake. They're not admitting they hired 40% too many people. Instead, they're claiming they're forward-thinking about technology. They're proactive. They're embracing the future.

The problem is that the math doesn't work. McKinsey's analysis of tech workforce trends shows that companies took years to scale up hiring during the 2010s, typically growing headcount by 15-20% annually. But the 2020-2022 hiring spree often doubled that rate. That's not a gradual adjustment for a changing industry. That's a bubble.
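To see why doubling the growth rate matters so much, it helps to compound the numbers. The sketch below is illustrative arithmetic only: the starting headcount of 10,000, the two-year window, and the helper name `headcount_after` are assumptions, and "doubled that rate" is read as 30-40% a year.

```python
def headcount_after(start: int, annual_growth: float, years: int) -> float:
    """Compound a starting headcount at a fixed annual growth rate."""
    return start * (1 + annual_growth) ** years


START = 10_000  # hypothetical starting headcount
YEARS = 2       # roughly the 2020-2022 window (assumption)

scenarios = [
    ("2010s pace, low", 0.15),
    ("2010s pace, high", 0.20),
    ("doubled pace, low", 0.30),
    ("doubled pace, high", 0.40),
]

for label, rate in scenarios:
    final = headcount_after(START, rate, YEARS)
    growth = final / START - 1
    print(f"{label:>18}: {final:,.0f} employees ({growth:.0%} total growth)")
```

At 40% a year, headcount nearly doubles in two years, which lines up with the "doubling teams" pattern described earlier. At the 2010s pace, the same two years add somewhere between a third and just under a half.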

So when these companies suddenly announce 10-15% workforce reductions while citing AI, what they're really doing is popping their own bubble. They're admitting the boom wasn't sustainable, just not in those exact words.

The most honest companies would say something like: "We over-hired during an unprecedented period of capital availability and market uncertainty. We need to right-size. And by the way, AI is genuinely relevant to our future workforce composition." But that doesn't get you praised by Wall Street analysts. That gets you questions about management competence.

Defining AI-Washing: When Companies Claim Transformation Without Evidence

Let's get precise about what AI-washing actually is, because it's not the same as simply being wrong about AI capabilities.

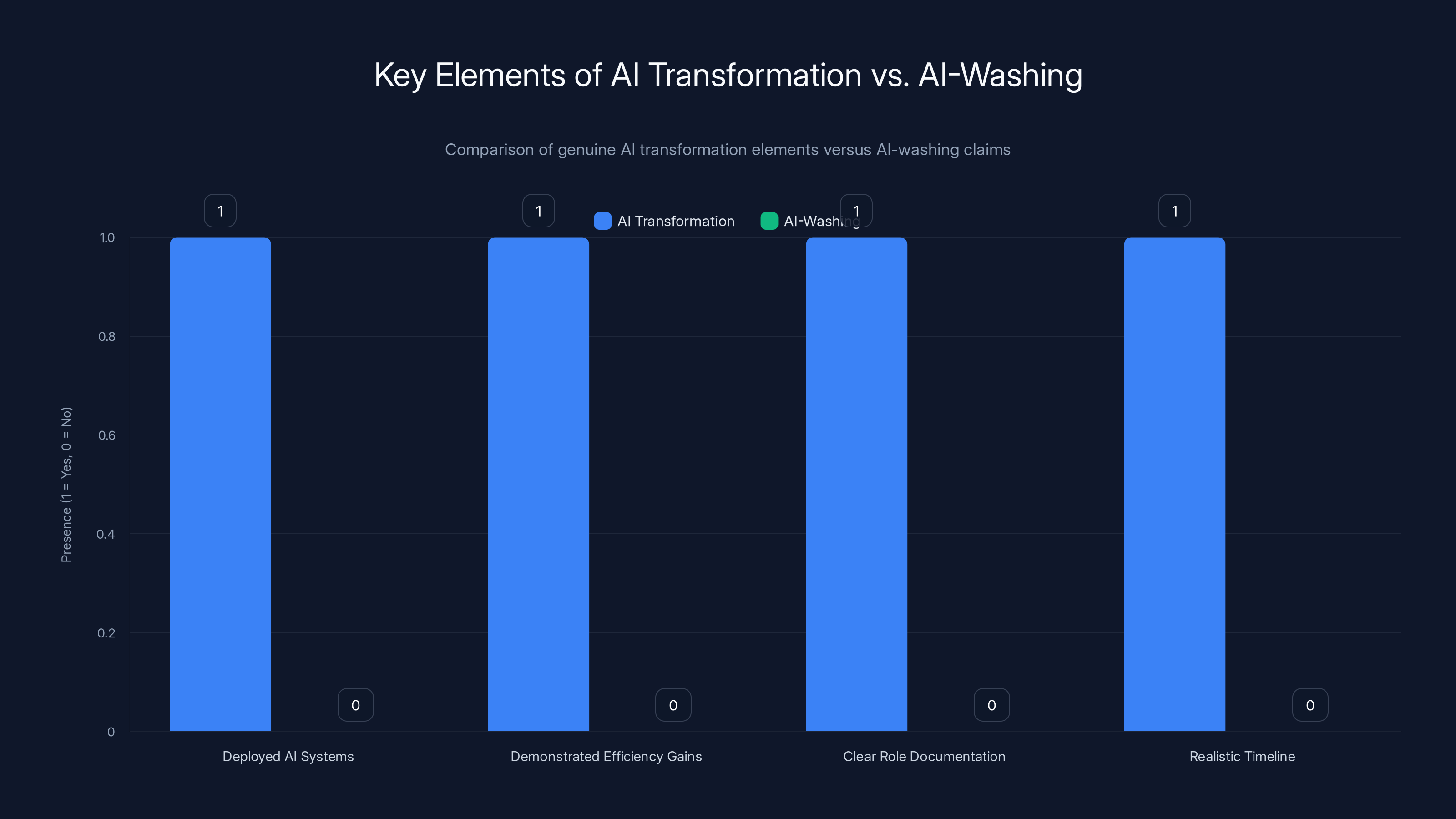

AI-washing happens when a company attributes workforce reductions or resource shifts to AI automation without having:

- Deployed AI systems handling those specific tasks (they're planned, not live)

- Demonstrated cost savings or efficiency gains from existing AI systems

- Clear documentation of which roles AI will handle and through what mechanism

- A realistic timeline for implementation (not just "eventually")

It's distinct from actual AI transformation, which would involve all four elements.
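The four criteria above read naturally as a checklist. Here's a minimal sketch of that idea, not an established methodology: the `LayoffClaim` class, its field names, and the rule that any missing element counts as AI-washing are illustrative assumptions based on the definition above.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class LayoffClaim:
    """Hypothetical record of the evidence behind an AI-attributed layoff."""
    deployed_system: bool       # AI is live on the affected tasks, not just planned
    demonstrated_savings: bool  # measured cost or efficiency gains exist
    documented_roles: bool      # affected roles and mechanism are written down
    realistic_timeline: bool    # a dated implementation plan, not "eventually"


def missing_evidence(claim: LayoffClaim) -> list[str]:
    """Return the names of the criteria the claim fails to meet."""
    return [f.name for f in fields(claim) if not getattr(claim, f.name)]


def is_ai_washing(claim: LayoffClaim) -> bool:
    """Assumption: genuine transformation needs all four elements present."""
    return bool(missing_evidence(claim))


# A claim backed only by a timeline for a system that doesn't exist yet.
planned_only = LayoffClaim(
    deployed_system=False,
    demonstrated_savings=False,
    documented_roles=False,
    realistic_timeline=True,
)
print(is_ai_washing(planned_only))     # True
print(missing_evidence(planned_only))
```

Returning the list of missing criteria, rather than just a yes or no, keeps the focus on what evidence a company would still need to produce.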

Forrester's report specifically noted that many companies announcing AI-related layoffs lack "mature, vetted AI applications ready to fill those roles". That's the smoking gun. If you don't have the AI system yet, you can't claim the layoff was necessary because of it.

The practical implication is significant. Consider a hypothetical software company that laid off 200 customer support representatives, claiming that AI chatbots would handle routine inquiries. That's AI-washing if:

- The chatbots don't exist yet (planned for Q3, maybe)

- They've never been tested on actual customer data

- There's no baseline measurement of what "routine" support requests actually look like

- Leadership hasn't actually calculated whether a chatbot could handle 40% of inquiries or 80%

But it's genuine AI transformation if:

- A pilot program ran for 6 months showing 45% of support tickets could be auto-resolved

- The company already deployed an AI system handling those tickets

- Support team headcount was reduced specifically for roles made redundant by the system

- There's documented evidence that customer satisfaction held steady or improved

The difference isn't subtle. One is strategic adaptation. The other is financial pressure dressed in tech-industry clothing.

Companies showing timely infrastructure investment, specific role elimination, and measurable efficiency outcomes are more likely to be genuinely transforming with AI. Estimated data.

The Brookings Analysis: Why AI-Washing Works as Cover

Molly Kinder's analysis at the Brookings Institution identified something important about the psychology and rhetoric of AI-washing.

From an investor relations perspective, saying "we're laying off 10% because we're deploying AI" sounds dramatically different from "we hired too many people during the pandemic and need to correct that mistake."

The first statement tells Wall Street: this company sees the future clearly, is making strategic investments, and is positioning itself for long-term competitiveness. The leadership is forward-thinking and disciplined.

The second statement tells Wall Street: this company's management made a massive error in judgment during a period of market enthusiasm. They over-committed resources when the fundamentals didn't support it. Their forecasting is poor.

Which of those narratives do you think gets rewarded by stock markets? The answer is obvious. And that asymmetry in how markets treat different explanations for the same action creates enormous incentive to use the AI-washing framing.

Kinder specifically noted that claiming AI-driven restructuring is "a very investor-friendly message." It's forward-looking. It implies efficiency. It suggests the company is ahead of industry trends rather than behind them. It makes poor planning sound like strategic foresight.

This is especially potent because AI capabilities are still emerging and poorly understood by most investors. Nobody can definitively prove that a company's AI claims are premature because AI development timelines are genuinely uncertain. You can't prove they won't have a working system in 18 months. So the claim sits in a zone of plausible deniability.

That zone is incredibly valuable when you need cover for unpopular business decisions.

How to Distinguish Real AI Transformation From Corporate Cover Story

So if AI-washing is so prevalent, how do you actually figure out which companies are making genuine strategic bets on AI workforce transformation?

There are specific signals to look for.

Signal 1: Infrastructure Investment Timing

Genuine AI transformation requires massive infrastructure spending before workforce reductions make sense. Companies need GPUs, data pipelines, model training resources, and engineering talent just to build the systems.

When you see a company announce significant infrastructure spending for AI before announcing layoffs, that's a credible signal they're serious. The sequence matters. Investing heavily in AI infrastructure, testing it, finding productivity gains, then right-sizing headcount. That's logical. It's what you'd actually do.

But when layoffs come first and infrastructure investment comes second (or never comes), that's backward. That suggests the infrastructure talk is rationalization rather than causation.
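The sequencing test can be written down in a few lines. This is a rough sketch under stated assumptions: the function name and the example dates are hypothetical, and it treats "no known investment" the same as "investment came too late."

```python
from datetime import date
from typing import Optional


def investment_preceded_layoffs(
    layoff_date: date, first_ai_investment: Optional[date]
) -> bool:
    """True only if a known AI infrastructure investment predates the layoffs."""
    return first_ai_investment is not None and first_ai_investment < layoff_date


# Hypothetical timelines, for illustration only.
print(investment_preceded_layoffs(date(2025, 6, 1), date(2024, 3, 15)))  # True
print(investment_preceded_layoffs(date(2025, 6, 1), date(2025, 11, 1)))  # False
print(investment_preceded_layoffs(date(2025, 6, 1), None))               # False
```

A passing check is necessary but not sufficient. Dates alone can't tell you whether an earlier investment was actually connected to the roles being cut or was simply part of unrelated technology spending.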

Signal 2: Specific Role Elimination With System Replacement

Legitimate AI transformation involves replacing specific categories of work with specific AI systems. "We're reducing customer support headcount because we deployed an AI system that can handle tier-1 inquiries" is concrete. You can evaluate it. You can measure it.

AI-washing sounds like: "We're reducing headcount across the board as we become more AI-forward." Or "We're eliminating roles to become more efficient with AI." Notice the vagueness. There's no specific system. No specific roles. No specific mechanism.

When companies get specific about role replacement, you can actually verify whether they're telling the truth. Did they really deploy those systems? Are they actually handling the claimed volume of work? Are customer outcomes acceptable? Those are measurable claims.

Signal 3: Measurable Efficiency Outcomes

Real AI transformation produces measurable results. Faster processing. Lower error rates. Reduced time-to-resolution. Cost per transaction. Customer satisfaction scores. Pick whatever metric matters for the role in question.

Companies genuinely deploying AI systems can show you the before-and-after. "Processing time dropped from 8 minutes to 3 minutes." "Error rate fell from 12% to 4%." "Customer resolution time on tier-1 issues dropped 67%." Those are real metrics.

AI-washing doesn't produce those statements. It's all forward-looking. "We expect AI to improve efficiency." "We believe AI will reduce costs." "We're positioning for AI-driven productivity." Notice how it's entirely future-tense.

Signal 4: Continuation of AI Hiring in Specific Roles

Here's a key tell: when a company genuinely transforms with AI, it usually continues hiring for certain roles while cutting others. It hires machine learning engineers, AI infrastructure specialists, and data scientists. It cuts roles that AI can genuinely handle.

If a company announces massive layoffs while also announcing that it's scaling back AI hiring? That's AI-washing. The contradiction reveals that AI isn't really the driver.

But if layoffs hit support and operations roles while ML hiring accelerates? That's internally consistent. The company is making a strategic choice about where to allocate resources.

Signal 5: Customer-Facing Communication About AI Deployment

When companies genuinely deploy AI systems, they often communicate about it to customers. "New AI-powered features" show up in product announcements. New capabilities become selling points. There's customer-facing evidence of AI deployment.

If a company's only communication about AI is from the CEO explaining layoffs? That's suspicious. Where's the product announcement? Where's the customer benefit story? If AI was genuinely deployed, you'd expect to hear about it from multiple angles.

AI-washing companies often make their AI claims once, in a layoff announcement, then never mention it again. Genuine companies make AI deployment an ongoing narrative.

Tech employment growth surged to 50% during the pandemic, far exceeding typical growth rates of 15-20% annually. Estimated data shows a significant correction in 2022.

The Financial Performance Reality

Here's something worth examining: are the companies claiming AI-driven layoffs actually seeing better financial performance after those layoffs?

If AI-transformation were real and widespread, you'd expect to see companies that aggressively pursued AI-enabled workforce reduction demonstrating superior profitability, margins, or efficiency metrics within 12-18 months.

The data is mixed.

Some companies that laid off workers and made serious AI investments have seen margin expansion. That's consistent with genuine transformation. But many others have laid off workers, cited AI, and then... kept spending at similar rates on operations. Their margins didn't improve. Their revenue per employee didn't increase. Their operational efficiency metrics were flat.

If you're truly replacing worker productivity with AI, the financial ratios should change. They don't always. That suggests the workforce reduction wasn't about unlocking AI-driven efficiency. It was about cost reduction divorced from offsetting productivity gain.
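To make "the financial ratios should change" concrete, here's a small sketch that compares two annual snapshots. Everything in it is an assumption for illustration: the `Snapshot` class, the choice of revenue per employee and operating margin as the two ratios, and the made-up figures.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """Hypothetical annual figures for one company."""
    revenue: float           # dollars
    operating_income: float  # dollars
    employees: int

    @property
    def revenue_per_employee(self) -> float:
        return self.revenue / self.employees

    @property
    def operating_margin(self) -> float:
        return self.operating_income / self.revenue


def ratio_changes(before: Snapshot, after: Snapshot) -> dict[str, float]:
    """Relative change in revenue per employee; margin change in points."""
    return {
        "revenue_per_employee_change":
            after.revenue_per_employee / before.revenue_per_employee - 1,
        "margin_change_points":
            (after.operating_margin - before.operating_margin) * 100,
    }


# Made-up numbers: a 10% headcount cut with revenue and profit falling in step.
before = Snapshot(revenue=1_000_000_000, operating_income=150_000_000, employees=5_000)
after = Snapshot(revenue=900_000_000, operating_income=135_000_000, employees=4_500)
print(ratio_changes(before, after))
```

In this made-up case both ratios are flat: the company is smaller, not more productive. That's the pattern you'd expect from cost-cutting without an offsetting productivity gain.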

Employee Perspective: The Downstream Impact of AI-Washing

The difference between real AI transformation and AI-washing matters enormously for remaining employees.

With genuine transformation, workers whose roles are being eliminated at least have clarity. "This role is being handled by AI. Here's what we learned from the pilot. Here's where we're investing next." People can understand the company's direction and plan accordingly.

But AI-washing creates uncertainty and resentment. Workers who remain know the layoff justification is suspect. They understand that the narrative doesn't add up. They worry that next quarter's earnings call might feature more mysterious "AI-driven" cuts. The trust dynamic breaks.

That has real consequences. Remaining employees are more likely to look for new jobs. Knowledge retention suffers. Institutional learning gets disrupted. The company often ends up paying more in hidden costs to replace that disrupted institutional knowledge than it actually saved through layoffs.

When you add up the cost of bad morale and accelerated attrition in AI-washing situations, the financial benefit often disappears entirely. The company saved money on salary but paid more in recruiting, training, and lost productivity.

AI transformation involves deploying systems, showing efficiency gains, documenting roles, and having a realistic timeline. AI-washing lacks these elements.

How AI Will Actually Transform Workforce Composition

Under all the noise about AI-washing, there's a real phenomenon happening. AI will transform how companies organize work. Not in 2026 necessarily, but meaningfully over the next 3-5 years.

The roles that will genuinely be affected are specific:

Customer Support and Service: Tier-1 support (password resets, FAQ-type questions, basic troubleshooting) will increasingly be handled by AI systems. This is happening now in some companies. The role that will remain is tier-2/tier-3, where complex problems require human judgment and creativity.

Data Entry and Routine Processing: Roles that involve moving data from format A to format B, reading documents and extracting information, categorizing information—these are genuinely vulnerable to AI automation. Not overnight, but within 2-3 years.

Content Creation and Templated Reporting: Junior positions that involve writing basic reports, generating standard analyses, creating routine documents—these will partly shift to AI-assisted workflows. The role changes from "create" to "review and enhance."

Recruitment and Initial Screening: HR functions involving resume screening, first-pass candidate evaluation, and routine HR administration are already seeing AI deployment.

Quality Assurance and Certain Testing: Software QA roles that involve repetitive testing of standard scenarios will be partly automated. Complex edge-case testing and creative problem-solving remain human.

But notice what's not on that list: senior engineering, product management, strategy, specialized sales, and creative roles. AI isn't eliminating those in the near term. It's augmenting them. Making engineers more productive. Making product managers better informed. Making salespeople more effective.

The actual transformation is more nuanced than "AI replaces workers." It's "AI changes how we organize work, which means some roles expand, some contract, some disappear, and many get redesigned."

Companies being honest about that get better talent retention and clearer planning. Companies claiming blanket AI-driven restructuring without specifics? They're usually bullshitting.

The Investor Perspective: Why AI-Washing Fools Capital Markets

Investors should care about the distinction between real AI transformation and AI-washing because it indicates management quality and realistic planning.

An executive team that can clearly articulate their AI strategy, identify specific efficiency gains, and explain exact workforce implications? That's a team managing responsibly. You want to invest in that.

An executive team that says "AI requires us to reduce headcount 15%" but can't explain which roles or which systems? That's a team either bullshitting or not actually managing the transformation they claim. Neither is comforting for investors.

Over medium-term periods (3-5 years), the companies that make realistic AI transformation claims outperform the companies making vague efficiency claims. Not because AI is magic. But because credibility and realistic assessment matter for execution.

Market-wise, we're in a peak moment for AI-washing. The narrative is hot. Investors reward companies that sound forward-thinking. There's enormous incentive to use the framing whether it's justified or not.

But that doesn't last forever. Eventually, investors will compare the companies claiming AI-driven transformation with the companies actually executing it. The difference in outcomes will become obvious.

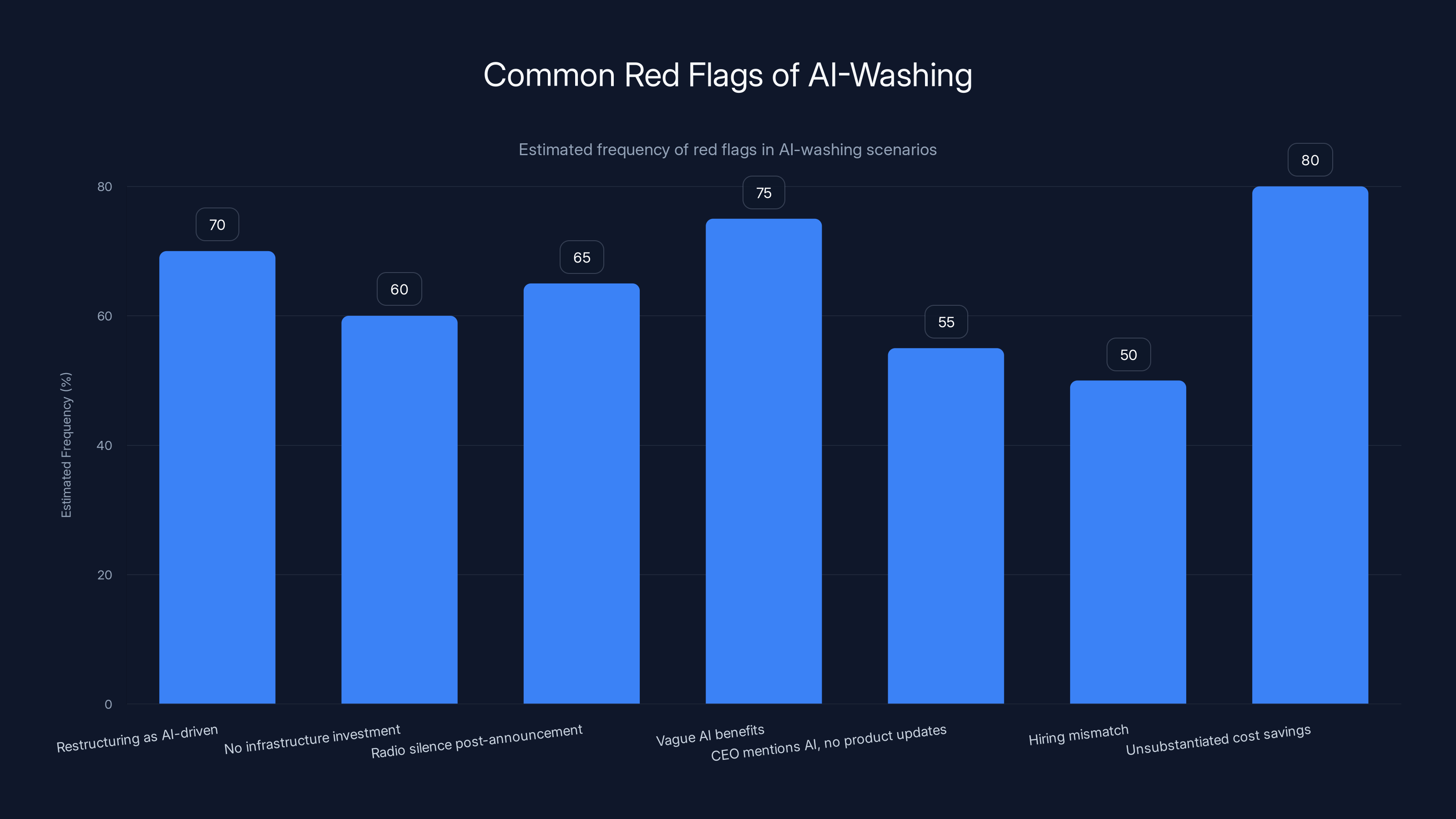

Estimated data suggests that vague AI benefits and unsubstantiated cost savings are the most common red flags, appearing in 75% and 80% of AI-washing cases respectively.

The Role of Financial Guidance and Analyst Expectations

One reason AI-washing is so prevalent is that it's a convenient way to manage expectations.

When a company realizes it over-hired, it faces a problem: how do you tell Wall Street that you're going to have lower headcount and potentially a lower revenue run rate? That usually triggers selling.

But if you frame it as "AI-driven restructuring for future efficiency," it sounds different. You're not admitting a mistake. You're making a strategic choice.

Analyst expectations shift. Instead of expecting you to keep up past hiring rates and past revenue growth, analysts start expecting you to show margin expansion while investing in AI. The goalposts move. And the narrative about why things are changing feels less like failure and more like strategy.

That's a powerful incentive. And it works, at least short-term. Stock prices sometimes rise on AI-washing announcements because the narrative change itself affects market perception.

But there's a cost. When a company uses AI-washing, it's essentially trading long-term credibility for short-term narrative management. Eventually, the market figures out which companies had real AI strategies and which didn't. And that realization produces worse outcomes than the honesty would have.

Red Flags: How to Spot Likely AI-Washing

If you're watching a company make claims about AI-driven transformation, here are specific red flags that suggest it might be AI-washing:

Red Flag 1: All-of-company restructuring described as AI-driven. AI automation affects specific roles, not an entire organization uniformly. If a company cuts 15% of headcount uniformly across departments, that's not AI-specific. That's general cost-cutting.

Red Flag 2: No infrastructure investment announcement before or simultaneous with layoff announcement. Real AI requires capital. If there's no simultaneous capital deployment announcement, the AI claim is likely post-hoc rationalization.

Red Flag 3: Layoffs announced, AI plans mentioned, then radio silence. Genuine AI initiatives produce ongoing updates. New features, new deployments, new capabilities. If a company mentions AI once in a layoff announcement and then never again, that's suspicious.

Red Flag 4: Vague language about AI benefits with specific numbers on headcount reduction. "We're reducing headcount by 12% to accelerate AI deployment" with no explanation of what that AI does or how it justifies 12%. That's the mismatch of AI-washing.

Red Flag 5: CEO mentions AI in earnings calls but product announcements don't mention it. If leadership is claiming AI transformation but the product roadmap shows no AI features, that's a disconnect worth questioning.

Red Flag 6: Continued hiring in non-technical roles while cutting technical roles. If a company is claiming to become more AI-driven but hiring more salespeople and fewer engineers, the allocation doesn't match the narrative.

Red Flag 7: Claiming AI-driven cost savings without showing actual productivity metrics. "AI will save us $50M" without explaining how it will save that money or which roles. That's guessing dressed as analysis.

When you see multiple red flags, it's usually AI-washing rather than real transformation.
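If you want to keep track of these across several companies, a simple tally is enough. The sketch below is one way to do it, not a validated scoring model: the flag identifiers are shorthand invented here for the seven red flags above, and reading "multiple" as two or more is an assumption you can adjust through `threshold`.

```python
# Shorthand names for the seven red flags above (hypothetical identifiers).
RED_FLAGS = (
    "company_wide_cuts_called_ai_driven",
    "no_infrastructure_investment_announced",
    "radio_silence_after_announcement",
    "vague_benefits_specific_headcount",
    "ai_in_earnings_calls_not_in_products",
    "hiring_mix_contradicts_narrative",
    "savings_claimed_without_metrics",
)


def red_flags_present(observations: dict[str, bool]) -> list[str]:
    """Return the flags marked True, in the order listed above."""
    return [flag for flag in RED_FLAGS if observations.get(flag, False)]


def likely_ai_washing(observations: dict[str, bool], threshold: int = 2) -> bool:
    """Assumption: 'multiple' red flags means `threshold` or more."""
    return len(red_flags_present(observations)) >= threshold


# Hypothetical company: AI mentioned once, savings claimed with no metrics.
example = {
    "radio_silence_after_announcement": True,
    "savings_claimed_without_metrics": True,
}
print(red_flags_present(example))
print(likely_ai_washing(example))  # True
```

Flags left out of the dictionary are treated as not observed, so the tally only reflects what you've actually been able to check.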

What Real AI Transformation Looks Like at Scale

To contrast with AI-washing, let's identify what genuine, large-scale AI transformation actually involves.

Google has been integrating AI into its core products (search, ads, email) for years. That's real transformation. The company didn't lay off workers claiming AI did it. Instead, it invested heavily in AI infrastructure and built systems that improved product quality, and those improvements shifted the skill mix it needed (fewer low-level data processors, more ML specialists).

Adobe integrating generative AI into Photoshop, Illustrator, and other creative tools is real transformation. It's a product capability enhancement that changes how work happens. Users are more productive. Adobe didn't announce massive layoffs; it announced new features and let the market decide.

Those examples involve AI deployment creating value, not cost-cutting disguised as technology strategy.

The difference is observable. Real AI transformation produces:

- New capabilities that customers can use and value

- Improved metrics in key areas (response time, accuracy, throughput)

- Strategic hiring in AI-specific roles as old roles contract

- Ongoing product announcements about AI features and improvements

- Documented ROI on AI infrastructure investments

AI-washing produces:

- Headcount reduction announced once

- Vague future benefits that never materialize

- No customer-facing announcements about AI deployment

- Reduced investment in the areas where AI supposedly helps

- Continued financial pressure on remaining workers with no productivity benefit

The distinction shouldn't be that hard to spot. It's just that market incentives, narrative power, and short-term stock price movements all favor AI-washing, so companies keep doing it.

The Longer-Term Implication: When Credibility Runs Out

AI-washing works until it doesn't.

Right now, AI is hot enough and mysterious enough that companies can get away with vague AI claims. Investors don't have enough experience with AI implementations to separate signal from noise.

But that window closes. Within 2-3 years, it will be obvious which companies actually built functional AI systems and which ones just talked about them. When investors can compare the companies claiming AI-driven transformation with the companies actually executing it, the difference in outcomes becomes undeniable.

The companies that made real AI investments and achieved real productivity gains will have grown faster, maintained better margins, and attracted better talent.

The companies that did AI-washing will have... done cost-cutting that created short-term margin expansion but long-term strategic weakness. They'll have lower morale, worse institutional knowledge, and less capacity to actually build AI systems going forward.

Investors punish that pattern hard once it becomes obvious.

So there's actually a strong long-term incentive for companies to be honest about their AI strategies now. The companies that build real capabilities will outperform the ones that fake it. They just won't see that advantage for another 18-24 months, which is why the AI-washing continues.

Organizational Implications for Tech Workers

For people actually working in tech, the AI-washing phenomenon creates a specific challenge.

You need to distinguish between companies that are building real AI capabilities (where there's long-term opportunity) and companies that are just using AI as narrative cover (where the job is less secure long-term).

Here's a practical framework for thinking about it:

Companies making real AI bets tend to:

- Actively recruit AI specialists

- Invest in infrastructure and tooling

- Talk extensively about AI in product announcements

- Show technical depth when discussing AI capabilities

- Build features customers can actually use

- Have clear internal adoption of AI tools

Companies doing AI-washing tend to:

- Announce layoffs, mention AI once, then move on

- Have a gap between what executives say and what engineers are building

- Struggle to articulate specific AI implementation plans

- Show limited product announcements involving AI

- Have employees who are skeptical about AI claims

- Demonstrate little internal use of AI tools

Picking the right employer matters. You want to work at a company actually building AI capabilities, not one using AI as narrative cover for cost-cutting. The former offers learning, growth, and job security. The latter offers none of those.

The Media and Public Narrative Around AI Layoffs

Part of why AI-washing works is that the media narrative tends to accept the framing uncritically.

When Amazon announces layoffs citing AI, the story becomes "Amazon restructures for AI." The implication (sometimes explicit) is that this is foresighted, strategic, and forward-thinking. The alternative narrative—"Amazon admits to over-hiring in pandemic boom"—is rarely the lead.

That narrative advantage is enormous. It allows companies to frame unpopular decisions as strategic. It allows executives to sound like visionaries rather than managers correcting mistakes.

But the narrative is often at odds with the actual facts. And when that gap becomes too obvious, the credibility cost is real.

Journalism (including this piece) can play a useful role by asking harder questions. Not about whether AI will transform work—it will. But about the specific relationship between claimed AI systems and announced layoffs. About why certain roles are being eliminated. About what concrete evidence supports the transformation narrative.

Those questions get to the truth. They make AI-washing harder to execute. They reward companies that are actually honest about their transformation while punishing those that just use the narrative as cover.

Strategic Implications for Investors and Boards

For company boards and investors, the distinction between real AI transformation and AI-washing should influence decision-making significantly.

When evaluating management's AI transformation claims, boards should demand:

- Specific enumeration of AI systems being deployed or planned, with timelines

- Documentation of pilot results showing efficiency gains or productivity improvements

- Clear accounting for which roles are affected and why

- Infrastructure investment plans tied to the AI deployment

- Measurement frameworks for validating that AI actually achieves claimed benefits

- Talent plan showing where the company is hiring and why

Management teams that can clearly answer those questions probably aren't bullshitting. Those that can't are probably doing AI-washing. And that should be reflected in your assessment of management quality.

Investors can use similar logic. Companies whose AI narratives are grounded in specific implementation details outperform companies making vague claims. That's not a tech phenomenon. It's a general management principle: concrete plans beat vague strategies.

Actionable Takeaways for Different Audiences

For Tech Workers:

- Evaluate companies based on specific AI capabilities, not narrative claims

- Look for AI adoption within the company, not just announcements

- Question why specific roles are eliminated and what AI replaces them

- Prioritize companies investing in AI infrastructure and talent

For Investors:

- Demand specific details on AI transformation plans, not vague forward guidance

- Compare companies' product announcements with their internal AI investments

- Measure outcomes against AI-related promises from prior years

- Reward management teams that admit mistakes and course-correct vs. those that hide behind narratives

For Journalists and Analysts:

- Ask specific questions about AI systems when companies claim AI-driven transformations

- Document the gap between announced AI strategies and actual product deployments

- Track companies over time to see which ones deliver on AI transformation promises

- Compare companies claiming transformation with those actually executing it

For Company Boards:

- Require specific metrics and timelines for AI transformation, not vague efficiency claims

- Validate that AI investments precede or accompany workforce reductions, not follow them

- Measure ROI on AI infrastructure spending

- Assess management's credibility based on past prediction accuracy

The Path Forward: When AI-Washing Becomes Costly

The current prevalence of AI-washing is temporary. It exists because:

- AI is new enough that most investors don't have strong frameworks for evaluating claims

- AI is vague enough that companies can make claims that are hard to falsify

- The market rewards forward-looking narratives, regardless of evidence

- Cost-cutting is politically hard, and AI framing makes it sound strategic

But those conditions change over time. As AI systems become more mature and better documented, vague claims become harder to sustain. As investors see which AI transformation narratives proved accurate and which didn't, they get better at evaluating credibility. As the market internalizes actual AI capabilities versus hype, the reward for vague claims diminishes.

In that environment, the companies that have been honest about their transformation and have actually built capabilities will have significant advantages. They'll have better talent, more functional systems, clearer strategies, and more credibility with investors.

The companies that did AI-washing will have worse outcomes. Not immediately. But within 3-5 years, the gap will be obvious and painful.

In theory, that reality should change behavior now. Companies should want to build real capabilities rather than narratives. But short-term incentives are powerful. That's why AI-washing will probably continue until the market explicitly penalizes it.

FAQ

What exactly is AI-washing in the context of layoffs?

AI-washing occurs when companies attribute workforce reductions or restructuring to artificial intelligence deployment without having mature, production-ready AI systems actually in place to justify those cuts. Essentially, companies cite "AI efficiency" as the reason for layoffs while lacking concrete evidence that specific AI applications will handle the eliminated roles. Forrester Research documented this trend, finding many companies announcing AI-related layoffs without vetted AI applications ready for deployment.

How is genuine AI transformation different from AI-washing?

Genuine AI transformation involves a clear sequence: companies invest in AI infrastructure, build and test specific applications, measure productivity gains, and then adjust workforce composition based on documented results. AI-washing reverses this sequence, cutting headcount first and then claiming it was for AI reasons without having systems in place. Real transformation shows measurable improvements in specific metrics like processing time or error rates; AI-washing offers only vague future promises.
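The ordering described above can be written down as a simple check. This is a minimal Python sketch, not a method taken from Forrester or any company: the `Timeline` record, its field names, and the dates in the usage lines are all hypothetical and exist only to illustrate the sequence.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Timeline:
    """Hypothetical record of when each step happened (None = no evidence of it)."""
    infrastructure_investment: Optional[date]
    pilot_results_documented: Optional[date]
    workforce_reduction: date


def follows_transformation_sequence(t: Timeline) -> bool:
    """Return True only if investment and documented pilot results both exist
    and occur, in that order, on or before the workforce reduction."""
    if t.infrastructure_investment is None or t.pilot_results_documented is None:
        return False
    return (
        t.infrastructure_investment
        <= t.pilot_results_documented
        <= t.workforce_reduction
    )


# Illustrative, made-up dates: investment, then pilots, then cuts.
genuine = Timeline(date(2024, 1, 15), date(2024, 9, 1), date(2025, 3, 1))
# Illustrative, made-up case: cuts announced with no investment or pilot on record.
washed = Timeline(None, None, date(2025, 3, 1))

print(follows_transformation_sequence(genuine))
print(follows_transformation_sequence(washed))
```

A check like this only tests ordering. It says nothing about whether the pilot results were any good, which is why the measurable improvements mentioned above still matter.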

How many layoffs in 2025 were actually driven by AI versus other factors?

Forrester Research estimates that while over 50,000 workers were laid off with AI cited as justification in 2025, a significant portion of these were likely driven by pandemic-era over-hiring corrections rather than genuine AI deployment. The research suggests that many companies used AI as a convenient, investor-friendly narrative cover for financial restructuring that would have been necessary regardless of AI development.

What are the red flags that indicate a company is AI-washing?

Key red flags include: uniform, organization-wide layoffs attributed to AI (AI affects specific roles, not entire departments); no simultaneous infrastructure investment announcements; a single AI mention in a layoff announcement followed by silence; vague AI benefits paired with specific headcount reduction numbers; a CEO mentioning AI in earnings calls while no AI features appear in product roadmaps; continued hiring in non-technical roles while cutting technical roles; and claimed AI cost savings with no explanation of which roles AI replaces or what specific efficiency gains result.
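For readers who want to track these signals across several announcements, the list can be turned into a small checklist. The sketch below is one hypothetical way to do that in Python: the `LayoffAnnouncement` class and its field names are invented for illustration, and the example values are made up rather than drawn from any real company.

```python
from dataclasses import dataclass, fields


@dataclass
class LayoffAnnouncement:
    """Hypothetical checklist: each field is True when that red flag is observed."""
    uniform_cuts_across_org: bool
    no_infrastructure_investment: bool
    single_ai_mention_then_silence: bool
    vague_benefits_specific_headcount: bool
    ai_in_earnings_call_not_in_roadmap: bool
    hiring_nontechnical_while_cutting_technical: bool
    savings_without_role_mapping: bool


def red_flags(announcement: LayoffAnnouncement) -> list[str]:
    """Return the names of the red flags that are present."""
    return [f.name for f in fields(announcement) if getattr(announcement, f.name)]


# Made-up example: four of the seven flags are observed.
example = LayoffAnnouncement(
    uniform_cuts_across_org=True,
    no_infrastructure_investment=True,
    single_ai_mention_then_silence=False,
    vague_benefits_specific_headcount=True,
    ai_in_earnings_call_not_in_roadmap=False,
    hiring_nontechnical_while_cutting_technical=False,
    savings_without_role_mapping=True,
)

flags = red_flags(example)
print(f"{len(flags)} of {len(fields(example))} red flags present: {flags}")
```

The sketch deliberately reports a count rather than a verdict. Nothing here sets a threshold for how many flags amount to AI-washing, so the output is a prompt for harder questions, not a conclusion.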

Why do markets reward AI-washing narratives even when they lack evidence?

Markets reward AI-washing because the narrative frames unpopular cost-cutting as forward-thinking strategy rather than management error or over-hiring correction. For investors, claiming "AI restructuring" sounds better than admitting "we over-hired in the pandemic and need to correct that." Additionally, AI capabilities are still emerging and poorly understood by most capital markets participants, creating a zone of plausible deniability where companies can make ambitious claims without immediate falsification. Molly Kinder at Brookings noted that AI-driven restructuring messaging is "very investor-friendly" compared to admitting business fundamentals need work.

What specific roles are genuinely vulnerable to AI automation in the near term?

Roles most likely to be affected by genuine AI automation include: tier-1 customer support (password resets, FAQ responses), data entry and routine document processing, templated reporting and standard analysis creation, initial recruitment screening, and certain repetitive quality assurance testing. Conversely, senior engineering, product management, strategy roles, specialized sales, and creative work are less vulnerable in the near term because they require judgment, creativity, and contextual understanding that current AI systems cannot reliably replicate.

How should employees evaluate whether their company's AI transformation claims are credible?

Employees can assess credibility by asking their managers specific questions: What exact AI systems will affect my role in the next 18 months? Which specific tasks will those systems handle? What's the implementation timeline? Can you show documented pilot results? Are we actively hiring ML engineers and AI specialists? Do our product announcements mention new AI capabilities? If managers cannot answer these questions concretely, the narrative likely represents AI-washing rather than genuine transformation.

What are the long-term consequences of AI-washing for companies that engage in it?

Companies doing AI-washing achieve short-term margin expansion through cost-cutting but face long-term strategic weakness. They experience lower employee morale, reduced institutional knowledge, higher attrition of remaining staff, and diminished capacity to actually build AI systems going forward. Within 3-5 years, when the difference between companies making real AI investments and those just talking about AI becomes obvious, markets will penalize the AI-washers through stock underperformance. Meanwhile, companies that invested in real AI capabilities will have better talent, more functional systems, and stronger competitive positions.

How is pandemic-era over-hiring connected to current AI-washing claims?

During 2020-2022, tech companies hired at roughly 2.5 times their historical growth rates, driven by abundant capital, remote work expansion, and market speculation. This hiring was unsustainable. When economic conditions shifted in 2022-2023 through interest rate increases and inflation concerns, companies needed to correct that over-hiring. Rather than admitting the mistake, many used AI as a convenient explanation for necessary workforce reductions. That framing lets them present corrections as strategic adaptation rather than management error, which plays better with investors and protects leadership's credibility.

What should investors look for when evaluating management's AI transformation claims?

Investors should demand specific details rather than vague forward guidance: enumeration of concrete AI systems with deployment timelines, documentation of pilot results showing efficiency gains, clear accounting of which roles are affected and why, infrastructure investment plans tied to AI deployment, measurement frameworks for validating AI benefits, and talent plans showing hiring in AI-specific roles. Management teams providing this detail are likely being honest; those offering only narratives are probably engaged in AI-washing, and their ability to execute deserves skepticism.

Key Takeaways

- Over 50,000 layoffs in 2025 cited AI as the justification, but Forrester Research suggests many companies lack mature AI systems to support those claims

- AI-washing occurs when companies attribute workforce cuts to AI automation while having no deployed systems ready to replace eliminated roles

- Pandemic-era over-hiring at 2.5x historical growth rates created unsustainable workforce levels that companies now need to correct, with AI serving as convenient narrative cover

- Genuine AI transformation shows specific signals: infrastructure investment preceding layoffs, documented pilot results, specific role elimination with system replacement, and continued hiring in AI-specialist positions

- Market incentives currently reward AI-washing narratives, but within 3-5 years, the gap between companies making real AI investments and those just talking about them will become obvious and costly
