Google's Gemini Can Now Generate Music: What Lyria 3 Means for Creators

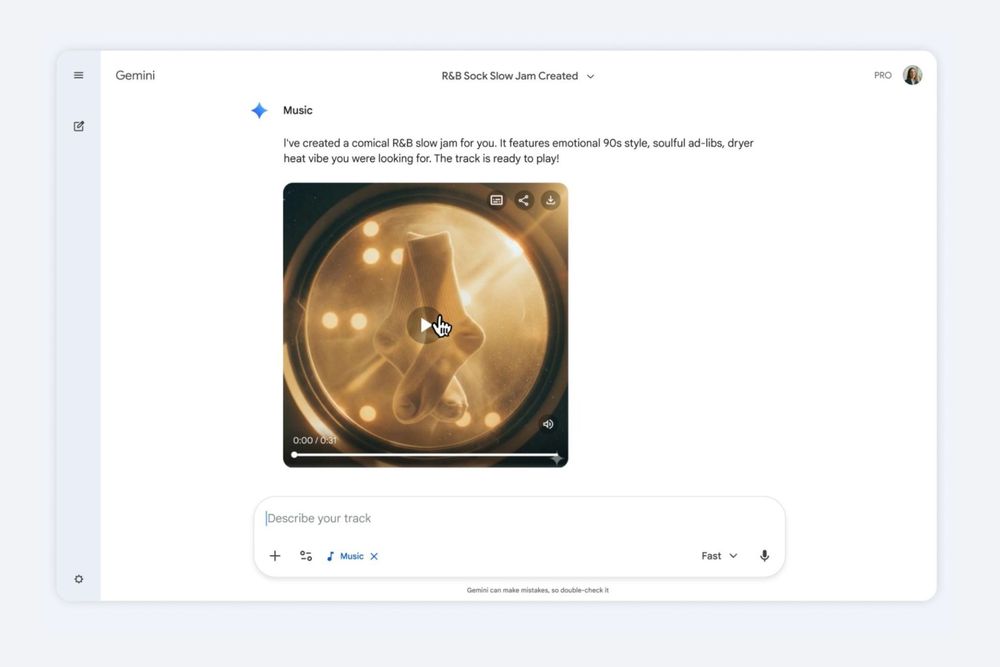

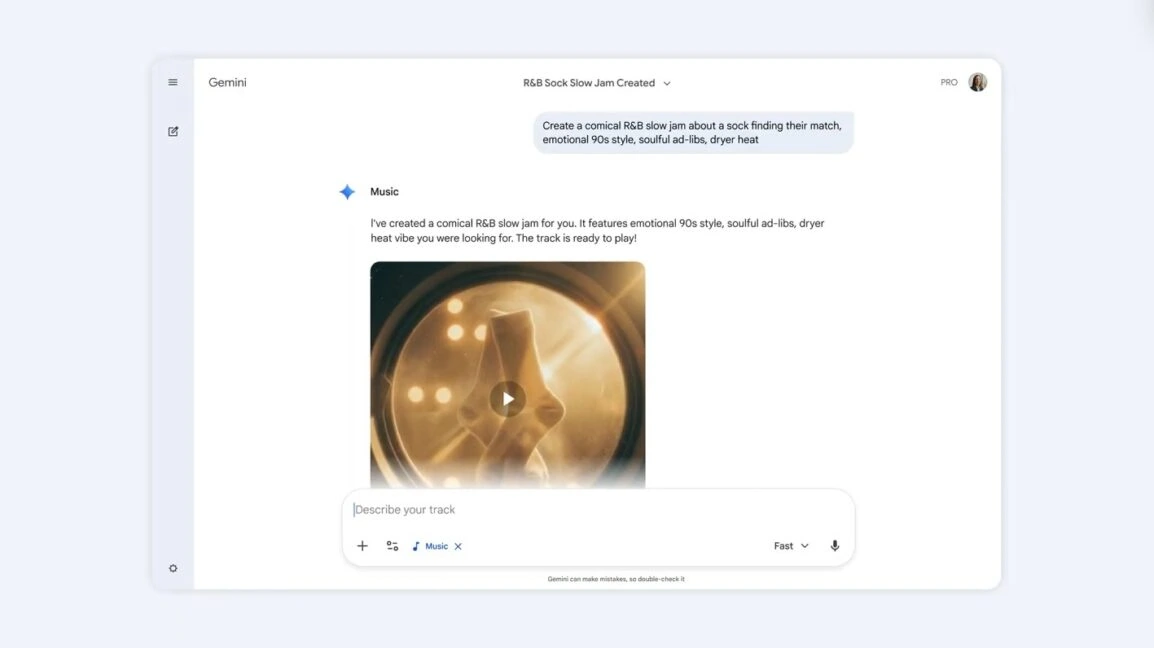

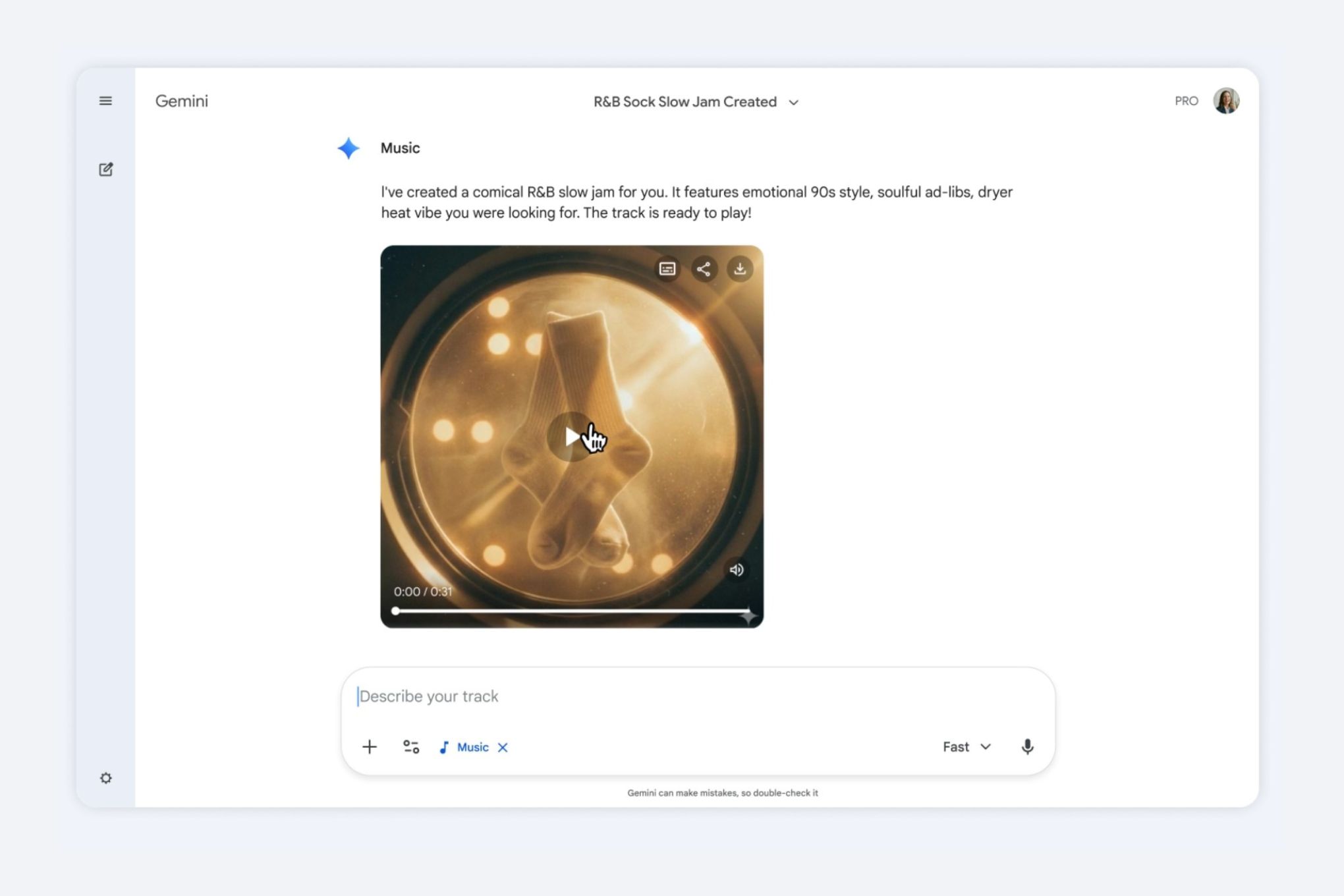

There's this moment when you first realize an AI tool has crossed into something genuinely useful. For me, it happened when I watched Google's demo of Lyria 3 generating a music track from the prompt "a comical R&B slow jam about a sock finding their match." The result wasn't perfect, but it was undeniably listenable. Corny? Sure. Strange in places? Absolutely. But functional in a way that most AI-generated music wasn't a year ago.

Google just announced that Gemini users can now generate 30-second music clips using its newly integrated Lyria 3 model. This isn't Google's first rodeo with audio generation, but it's a significant step forward. The company has been building toward this for years, and Lyria 3 represents a tangible leap in what's possible when you combine advanced language models with audio synthesis.

What makes this announcement particularly interesting isn't just the technology itself, but where it sits in the broader landscape of AI music generation. We're at this weird inflection point where the tools are good enough to be useful but not so good that they feel indistinguishable from human creation. That's actually perfect for a lot of use cases—background music for short videos, experimental remixes, quick backing tracks for content creators.

Let me walk you through what Gemini's music generation actually does, how it works, where it's limited, and why it matters more than you might initially think.

Understanding Lyria 3: Google's Music Generation Breakthrough

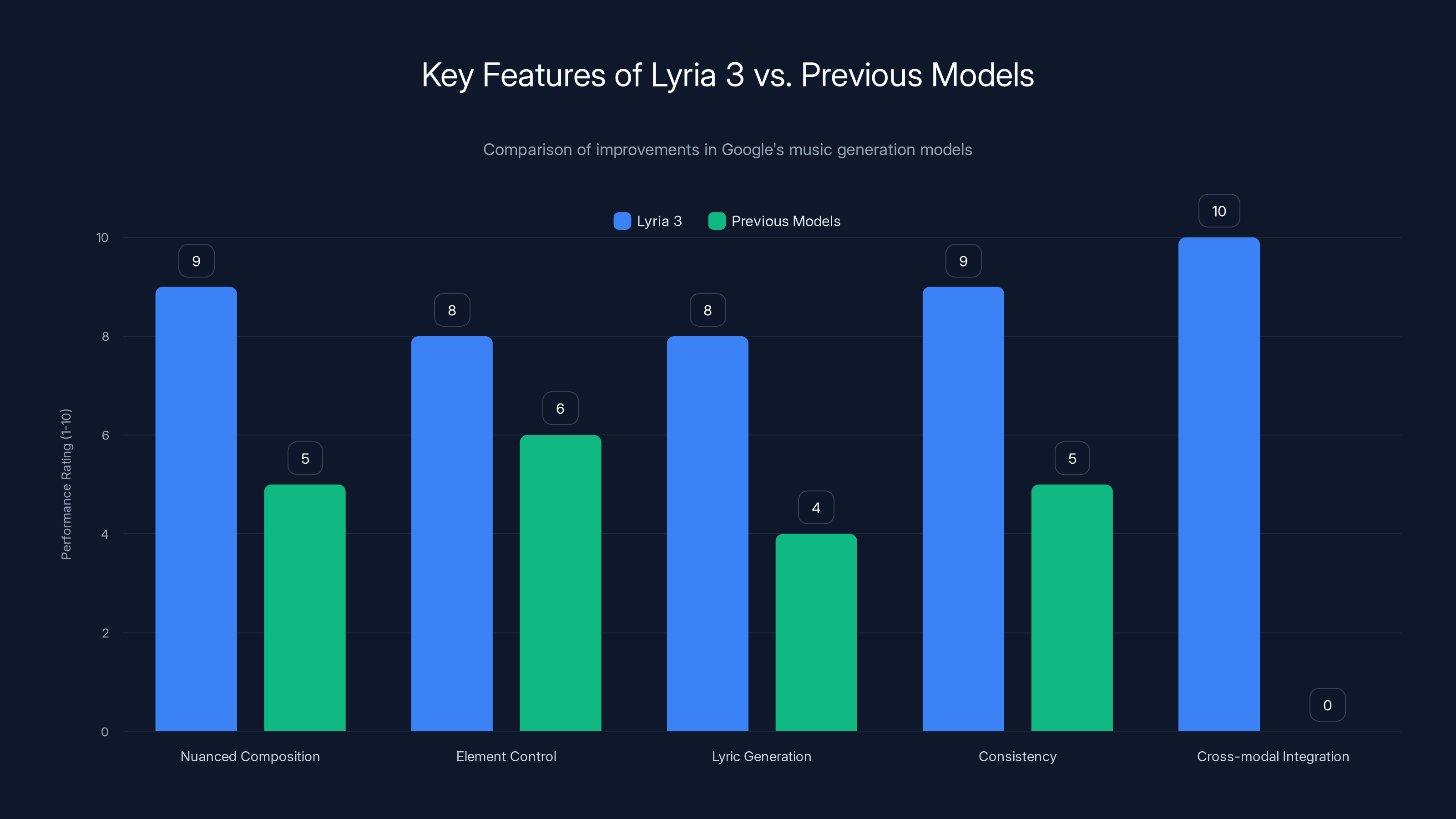

Lyria 3 is the third iteration of Google's music generation model, and the improvements over its predecessors are substantial. The company built this model specifically to handle more nuanced musical composition, better control over individual elements, and automatic lyric generation.

Here's what's important to understand: this isn't a tool that listens to existing music and remixes it intelligently. It's a generative model that understands musical structure at a fundamental level and can create novel compositions from scratch. The model was trained on a diverse dataset of music, which means it understands patterns in how songs are built, how instruments interact, how lyrics fit into musical narratives.

The architecture of Lyria 3 builds on years of research into audio generation. Previous models struggled with maintaining consistency throughout a track, keeping time accurately, and generating lyrics that didn't sound completely artificial. Lyria 3 tackles these problems by thinking about music generation at multiple levels simultaneously. The model generates overall structure first, then fills in details at the melodic, harmonic, and lyrical levels.

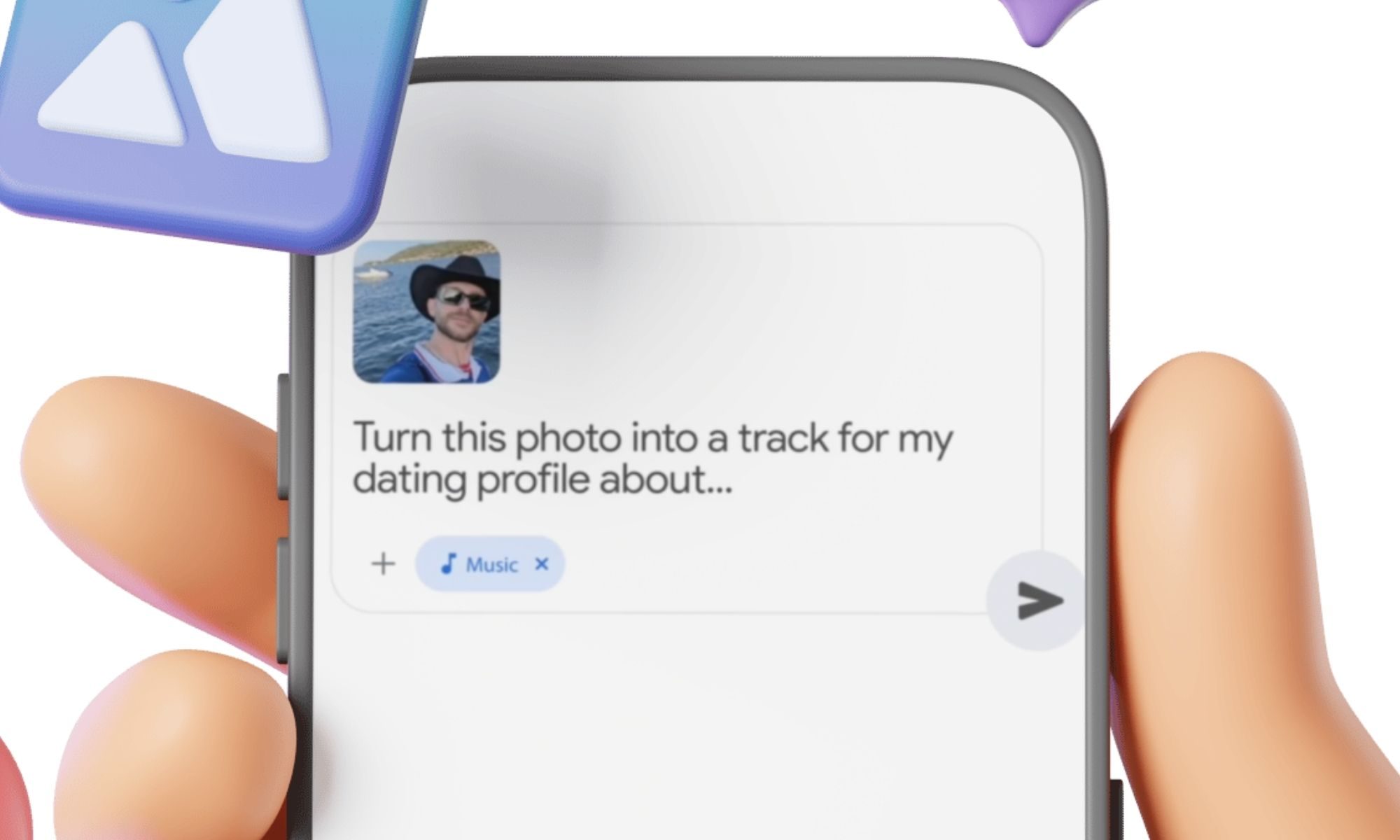

One of the underrated aspects of this release is that Google chose to integrate it into Gemini rather than releasing it as a standalone tool. That decision matters because Gemini already has multimodal capabilities. You're not just limited to text prompts. You can feed the model an image and ask it to generate music inspired by that visual aesthetic. You can show it a video and ask for accompaniment. This cross-modal approach opens up creative possibilities that wouldn't exist in a text-only interface.

The 30-second limitation exists for practical reasons. Longer generations would require more computation, produce larger files, and create more potential for quality degradation. Thirty seconds is long enough to establish a clear musical identity and deliver a complete idea. It's the length of a decent intro, a full verse-and-chorus combination, or a solid instrumental phrase. Think of it as a musical paragraph rather than a full essay.
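To make the "musical paragraph" comparison concrete, here is a small arithmetic sketch of how many bars fit in a 30-second clip. The function name `bars_in_clip` and the 4/4 time signature are illustrative assumptions, not anything Google has published about Lyria 3.

```python
def bars_in_clip(seconds: float, bpm: float, beats_per_bar: int = 4) -> float:
    """Return how many bars of music fit in a clip of the given length."""
    beats = seconds * bpm / 60.0  # BPM means beats per minute
    return beats / beats_per_bar


for bpm in (80, 120, 140):
    print(f"{bpm} BPM -> {bars_in_clip(30, bpm):.1f} bars in 30 seconds")
```

At 120 BPM in 4/4 this works out to 15 bars, just short of an eight-bar verse plus an eight-bar chorus, which is why a clip of this length can carry one complete idea but not much more.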

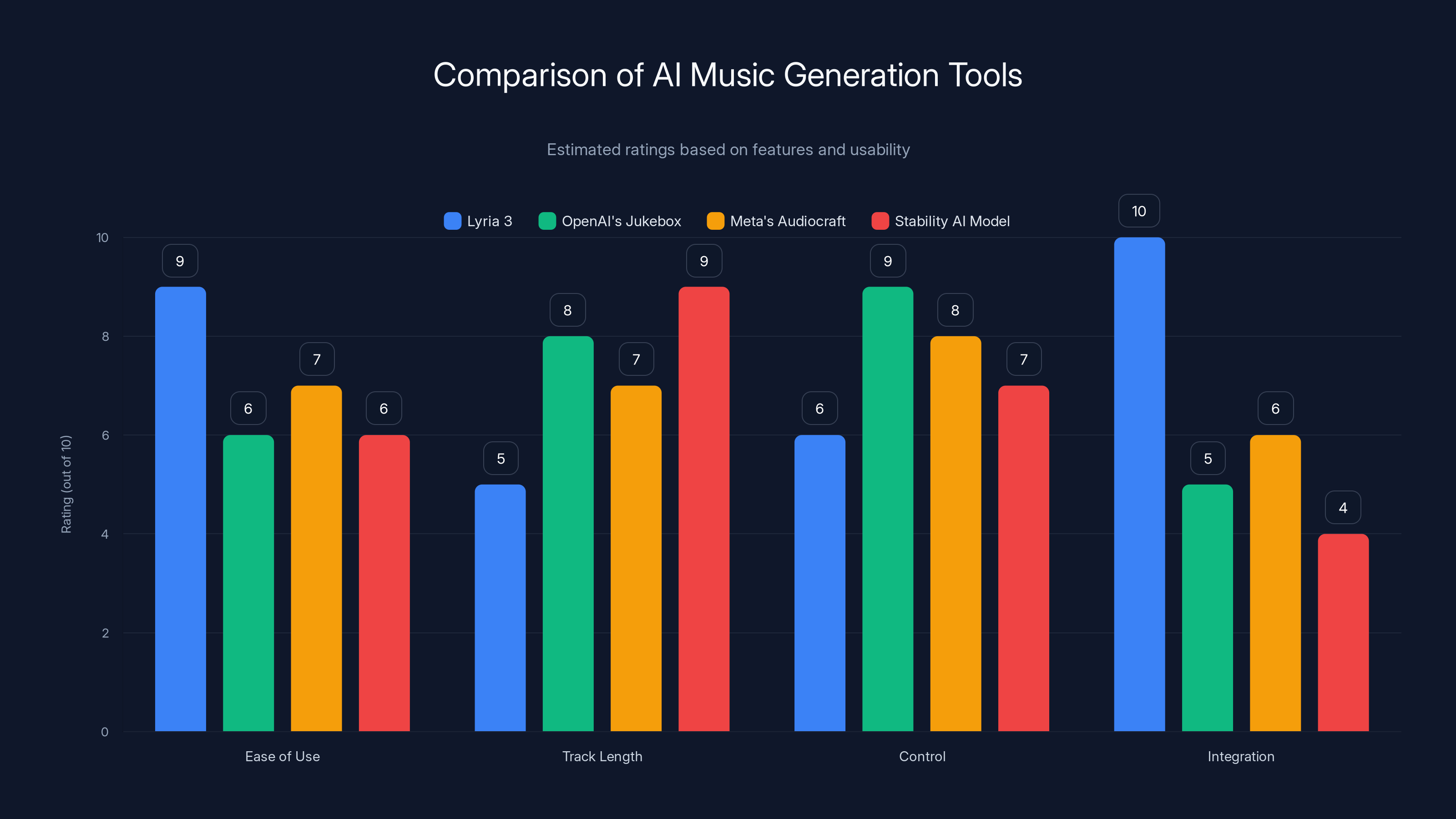

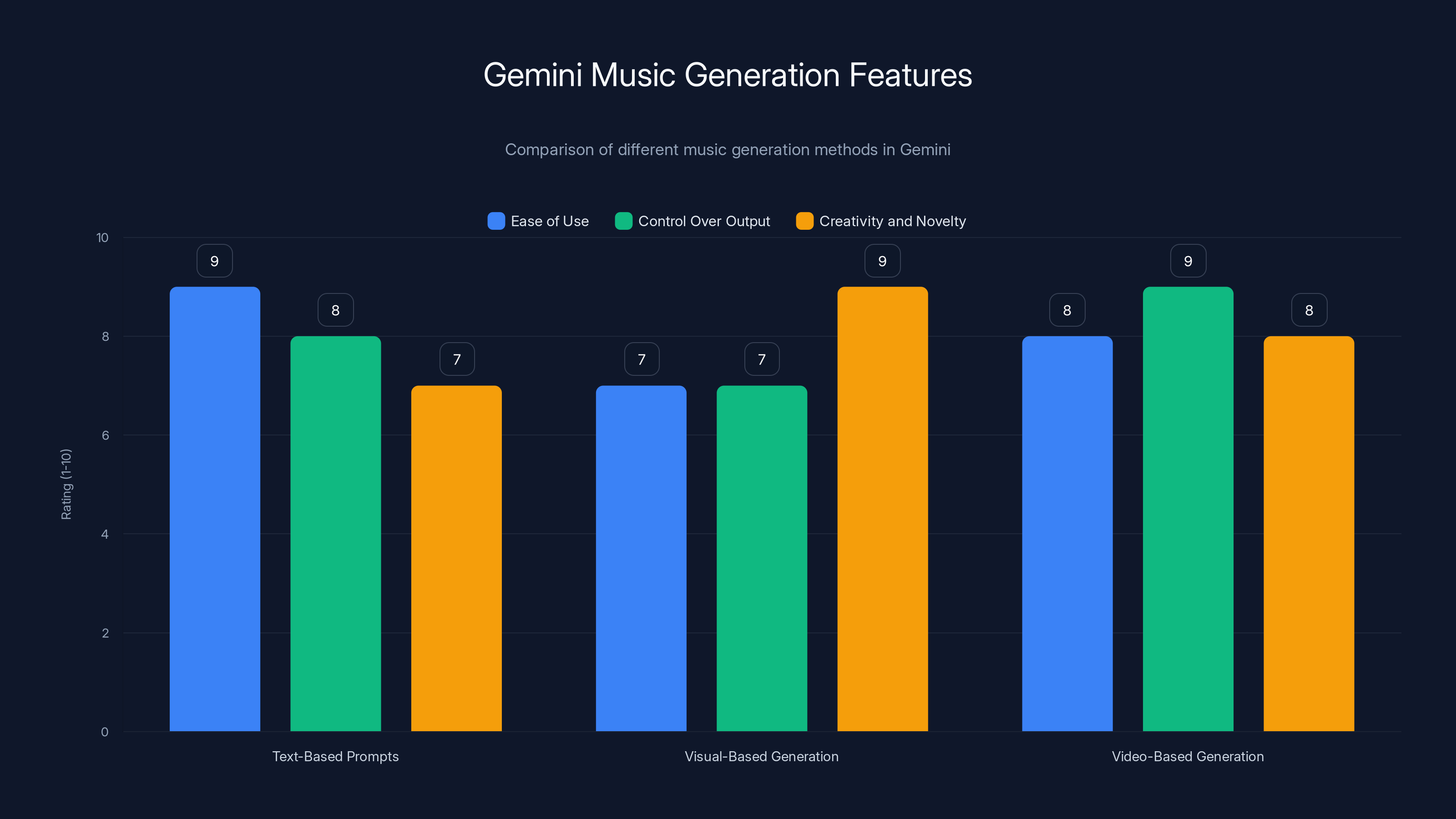

Lyria 3 excels in ease of use and integration, but is limited by shorter track lengths. Estimated data based on tool features.

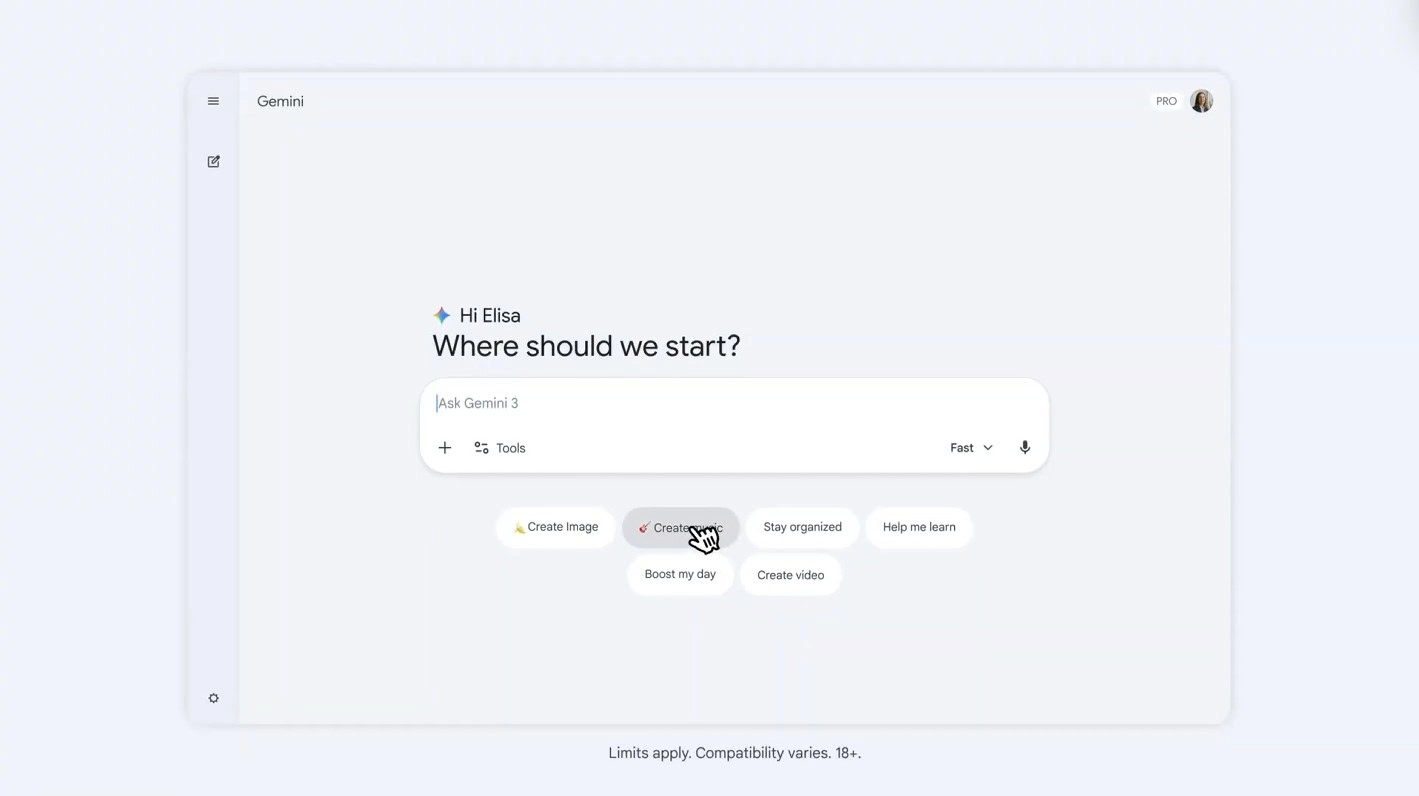

How to Use Gemini for Music Generation

Generating music in Gemini is straightforward, though there is more depth available than the simple interface suggests. Let me break down the different approaches and what works best.

Text-Based Prompts: Starting Simple

The easiest entry point is typing a text description of the music you want. You don't need to be a music theorist or producer. Prompts like "upbeat dance track," "melancholic acoustic guitar," or "funky bass line" will produce serviceable results. The beauty of Lyria 3 is that it extracts the essence of what you're asking for even when your description is loose.

But here's where it gets interesting. If you want more control, you can get granular about specific elements. You can specify tempo (fast, moderate, slow, or actual BPM). You can request particular instruments or instrument combinations. You can ask for specific drum patterns. You can even guide the emotional tone or intended use case.

A prompt like "120 BPM house track with four-on-the-floor kick drum, prominent synth bassline, and energetic snare pattern" will give you much tighter control over the output than "make a dance song." The model responds to specificity with better results.
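If you write many prompts, it can help to keep the elements (tempo, mood, genre, instruments) separate and assemble the text at the end. The sketch below is one way to do that; `MusicPrompt` and its fields are hypothetical names invented for illustration, and the output is just a string to paste into the Gemini chat box, not a call to any Google API.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class MusicPrompt:
    """Hypothetical container for the elements a music prompt can specify."""
    genre: str
    bpm: Optional[int] = None
    mood: Optional[str] = None
    instruments: List[str] = field(default_factory=list)

    def to_text(self) -> str:
        """Render the fields as one plain-text prompt."""
        parts = []
        if self.bpm is not None:
            parts.append(f"{self.bpm} BPM")
        if self.mood:
            parts.append(self.mood)
        parts.append(self.genre)
        text = " ".join(parts)
        if self.instruments:
            text += " with " + ", ".join(self.instruments)
        return text


prompt = MusicPrompt(
    genre="house track",
    bpm=120,
    instruments=[
        "four-on-the-floor kick drum",
        "prominent synth bassline",
        "energetic snare pattern",
    ],
)
print(prompt.to_text())
```

Keeping the fields separate makes it easy to change one element, such as the tempo, while leaving the rest of the prompt untouched.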

Visual and Video-Based Generation

This is where things get creative. You can upload an image to Gemini and ask it to generate music that matches that visual aesthetic. Want music inspired by a sunset photograph? A minimalist architectural image? A chaotic street scene? You can feed any image and the model will synthesize what it perceives about that image into musical composition.

Video-based generation works similarly but with more information. You can upload a video clip and ask for music that matches its mood, pacing, or subject matter. This is particularly useful for content creators who have footage and need quick backing tracks.

The real power here is that these aren't just novelty features. They're genuinely useful for creative workflows. A designer working on a visual project can generate reference music while iterating on aesthetics. A content creator can generate multiple variations of background music without licensing fees.

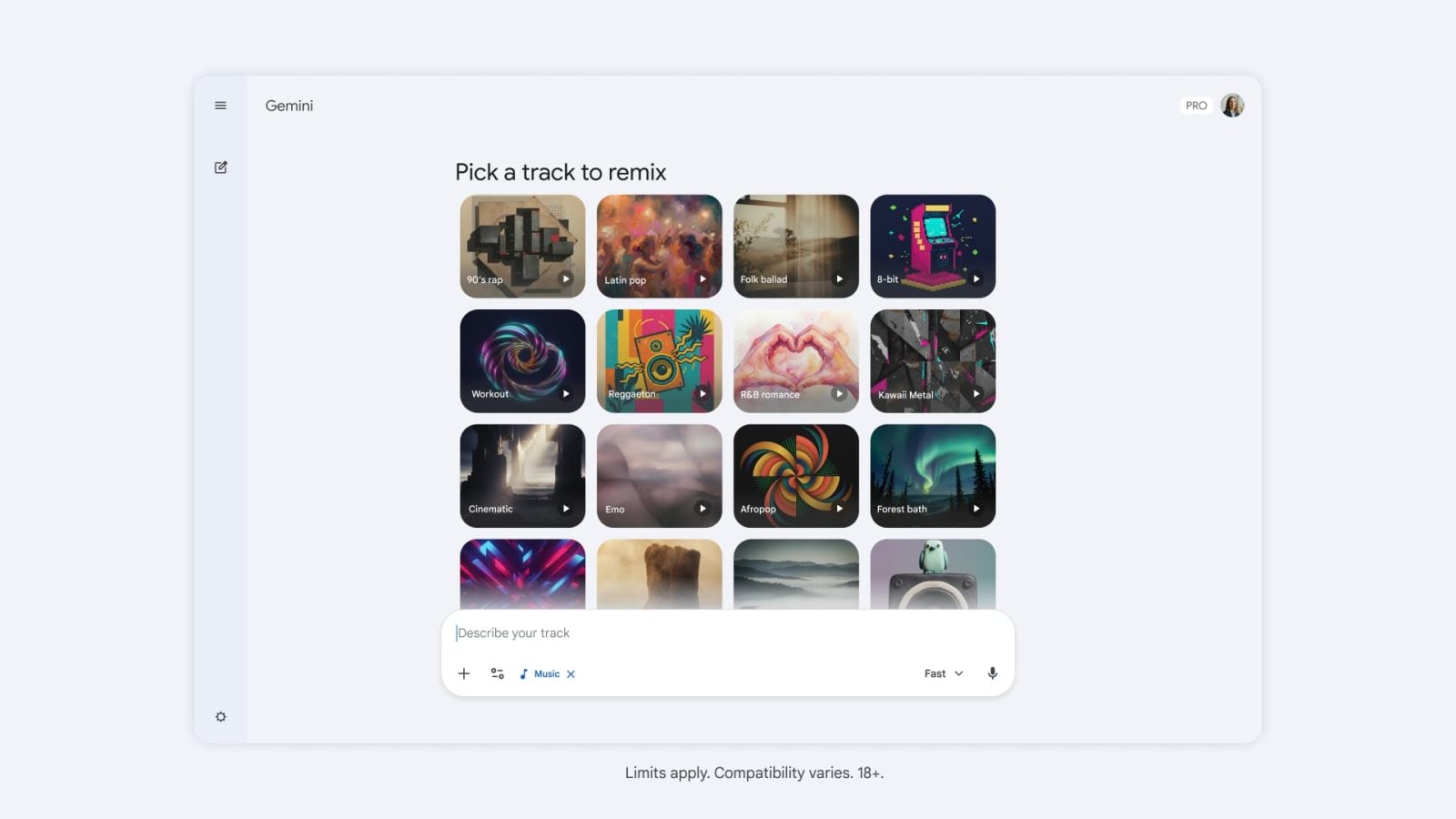

Remixing and Variation Generation

Lyria 3 can also rework a track it has already generated. If you generated a track you mostly like but want it slightly different, you don't need to start over. You can prompt for variations with specific modifications. Want the same track but with different instrumentation? More or less reverb? Sped up or slowed down? The model can handle these requests.

This feature matters because it reduces the trial-and-error cycle. Instead of generating five completely different tracks hoping one works, you can generate one you like and then iterate on it efficiently.

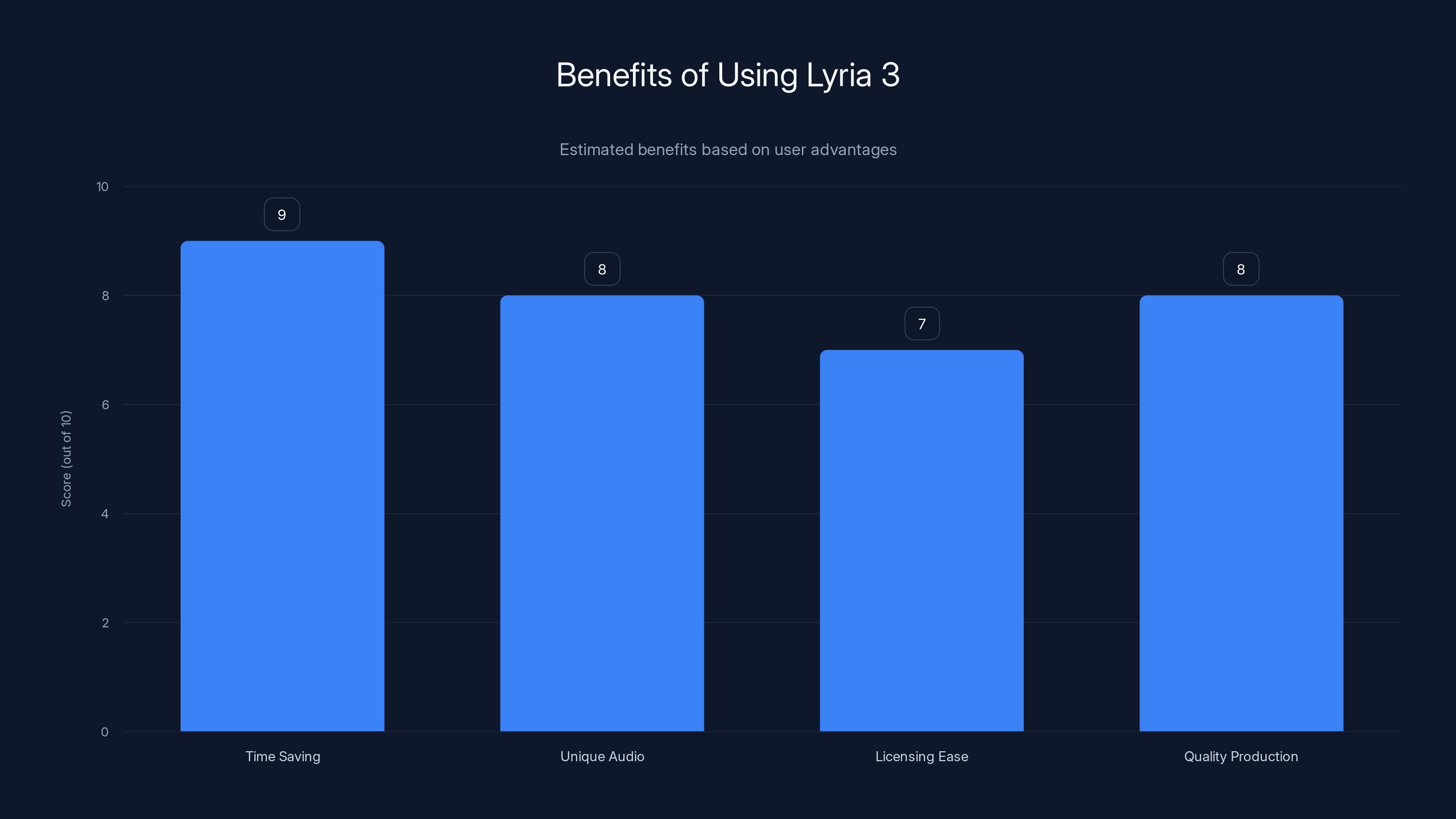

Lyria 3 offers significant benefits, notably in time-saving and producing unique audio, making it a valuable tool for content creators. Estimated data.

Quality Assessment: Where Lyria 3 Excels and Where It Falls Short

Let's be honest about what Lyria 3 can and can't do. The samples Google released with this announcement are genuinely impressive for AI-generated music, but they also reveal some limitations that are important to understand.

The Strengths: Instrumentation and Rhythm

The instrumental components of Lyria 3's output are where the model shines brightest. The rhythmic accuracy is solid. The instrument sounds are convincing. Synthesized bass, drums, and melodic instruments all feel present and mix appropriately. When you listen to a Lyria 3 track and focus just on the instrumental layers, you'd be hard-pressed to identify it as AI-generated without comparing it side-by-side with professional production.

The harmonic progressions are generally solid. The model understands music theory at an operational level, creating chord progressions that don't clash, melodies that complement existing elements, and arrangements that develop over the track's duration. For an AI model, understanding that a song needs structure, variation, and cohesion is genuinely impressive.

Tempo consistency is another strength. The model doesn't drift or lose time the way some earlier generation tools did. If you ask for a 120 BPM track, you get 120 BPM maintained throughout.

The Weaknesses: Lyrics and Composition

This is where things get weird. The lyrics that Lyria 3 generates are simultaneously impressive and completely awkward. The model understands syllable counting, rhyme schemes, and how to fit words into melodic patterns. But the actual linguistic content is often clunky or tonally off.

You'll get rhymes that work technically but feel forced. Word choices that make sense individually but create strange lines when combined. The emotional arc of the lyrics often doesn't match the music's emotional arc. Sometimes the model generates multiple interpretations of lines simultaneously, creating a strange layering effect.

Google's example of "a sock finding their match" shows this clearly. The concept is funny, and the lyrics capture that concept, but they do it in a way that feels uninspired. The jokes don't quite land. The phrasing is stilted. It's like listening to a talented musician who's never studied comedy writing trying to make a funny song.

There's also the broader composition question. Thirty seconds isn't much time, which limits what you can do narratively. And while Lyria 3 can create coherent musical ideas, it doesn't always create compelling ideas. Some generated tracks feel generic. They hit expected beats without surprising or delighting.

Integration Points: Where You'll Actually Use This

Google isn't just dropping Lyria 3 into Gemini and calling it a day. The company is integrating this across multiple products, which is important because different use cases benefit from music generation in different contexts.

Gemini Web Interface

The most direct access point is through Gemini itself. Any user 18 or older who speaks English, Spanish, German, French, Hindi, Japanese, Korean, or Portuguese can generate music directly in the Gemini chat interface. This is available starting immediately, though rollout timing varies by region.

The web interface is where experimentation happens. Users can iterate quickly, try different prompts, explore variations. It's the sandbox where people learn what works and what doesn't.

YouTube Shorts and Dream Track

YouTube's "Dream Track" feature is where Lyria 3 becomes genuinely useful for creators. Shorts creators can use Lyria 3 to generate detailed backing tracks for their videos. Instead of licensing music or using generic stock tracks, creators can generate unique, custom music that matches their content.

This is particularly valuable because YouTube's music licensing system is notoriously restrictive. Many creators avoid using music because of copyright complications. Lyria 3-generated music sidesteps most of that friction: generate a track, use it in your Short, and skip the usual hunt for licensed music.

Dream Track has been available for a while, but integrating Lyria 3 makes it significantly more powerful. The quality of generated tracks is high enough that creators don't feel like they're settling.

Potential Future Integrations

Google mentioned that Lyria 3 could potentially be incorporated into Google Messages. Imagine sending a custom-generated soundtrack along with a text message. Or embedding AI-generated audio in collaborative documents through Google Workspace. Or using it in Google Meet to generate intro music for presentations.

These aren't officially announced, but they're logical extensions of the technology. Google has a history of spreading AI capabilities across its product suite, and music generation fits that pattern.

Lyria 3 significantly improves on previous models with better nuanced composition, control over elements, and cross-modal integration. Estimated data based on feature descriptions.

The Watermarking and Copyright Question

One of the most important aspects of Lyria 3's release is the inclusion of SynthID watermarking. SynthID embeds an imperceptible watermark directly in the generated audio, identifying it as machine-created content. Google started rolling out its SynthID Detector, which can identify content carrying the watermark, at Google I/O 2025.

Why does this matter? Because it addresses one of the biggest concerns around generative AI: the ability to pass off machine-created content as human-created work. If your generated music is watermarked and detectable, it's much harder to claim you created it yourself. This has implications for copyright, plagiarism, and authorship.

The watermarking isn't visible to listeners. You can't hear it. But detection tools can identify it, which means generated music has a digital fingerprint proving its origin. This is actually a thoughtful approach to a real problem. Instead of trying to make AI music indistinguishable from human music, Google is being transparent about its origins.

However, watermarking isn't foolproof. Determined people can try to remove watermarks or work around them. But it raises the barrier significantly compared to unmarked AI-generated content.
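How SynthID marks audio is not described here, so the sketch below swaps in a much simpler, classic idea to show the general principle: a keyed spread-spectrum watermark. A low-amplitude pseudo-random sequence is added to the audio, and a detector that knows the key finds it by correlation. Every name (`keyed_sequence`, `embed`, `detect_score`) and every number is a hypothetical choice for this toy, not Google's method.

```python
import math
import random
from typing import List


def keyed_sequence(key: int, n: int) -> List[float]:
    """Pseudo-random +1/-1 sequence that only the key holder can regenerate."""
    rng = random.Random(key)
    return [rng.choice((-1.0, 1.0)) for _ in range(n)]


def embed(samples: List[float], key: int, strength: float = 0.05) -> List[float]:
    """Add a low-amplitude keyed sequence on top of the audio samples."""
    mark = keyed_sequence(key, len(samples))
    return [s + strength * m for s, m in zip(samples, mark)]


def detect_score(samples: List[float], key: int) -> float:
    """Correlate audio with the keyed sequence: near `strength` if marked, near 0 if not."""
    mark = keyed_sequence(key, len(samples))
    return sum(s * m for s, m in zip(samples, mark)) / len(samples)


# One second of a 440 Hz tone at 48 kHz stands in for generated audio.
rate = 48_000
tone = [math.sin(2 * math.pi * 440 * t / rate) for t in range(rate)]

marked = embed(tone, key=1234)
print(f"marked score:   {detect_score(marked, key=1234):.3f}")
print(f"unmarked score: {detect_score(tone, key=1234):.3f}")
```

The toy also shows why removal is possible but not trivial: without the key, the added sequence looks like faint noise. A production watermark additionally has to survive compression, resampling, and editing, which this sketch does not attempt.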

Lyria 3 in the Broader AI Music Generation Landscape

Google isn't operating in a vacuum. There are other players in the AI music generation space, and understanding where Lyria 3 sits relative to these alternatives matters for users trying to decide what tools to use.

Comparison with Other Generative Music Tools

Other projects, including OpenAI's Jukebox, Meta's AudioCraft, and Stability AI's Stable Audio, have tackled music generation for years. Some of these tools offer longer generation lengths. Some offer more control over musical elements. Some are focused on remixing rather than pure generation.

Lyria 3's advantages are its integration with Gemini's multimodal capabilities and its focus on usability. You don't need to be technically sophisticated to generate music. The interface is simple. The results are consistent. And it's built into a tool millions of people already use.

Lyria 3's disadvantages are the 30-second limit and sometimes awkward lyric generation. If you need longer tracks or production-quality vocals, other tools might be better suited.

The Competitive Advantage of Integration

What really matters is that Google has woven music generation into an existing, widely-used product. Gemini has tens of millions of users. A non-trivial percentage will experiment with music generation. They'll discover what's possible, what's useful, what's just for fun. This broad exposure matters more for the technology's adoption than any technical specification.

Integration also means improvement velocity. Google can iterate quickly based on user feedback. Changes to Lyria 3 can be deployed across all Gemini instances without separate app updates or installation requirements.

Text-based prompts offer the easiest entry point, while video-based generation provides the most control over output. Visual-based generation excels in creativity and novelty. Estimated data.

Use Cases Where Lyria 3 Actually Works

Let me be specific about where this technology creates genuine value, because that's different from just being technically interesting.

Content Creation and YouTube Shorts

If you're a Shorts creator who needs background music but doesn't want to deal with licensing or generic stock music, Lyria 3 is a game-changer. You can generate unique, custom music that perfectly matches your content's tone and pacing. The quality is high enough that viewers won't notice they're listening to AI-generated music.

A creator might spend 15 minutes generating music for a 30-second video with Lyria 3. Previously, they'd spend 30-45 minutes scrolling through stock music libraries trying to find something that fit.

Podcast and Audio Content Production

Podcasters can use Lyria 3 to generate intro and outro music, transition music, or background music for specific segments. Having custom-generated audio that matches a show's aesthetic is more professional than using generic stock music, and it's significantly cheaper than licensing actual songs.

Indie Game Development

Small game development teams or solo developers can generate background music for their games using Lyria 3. Instead of commissioning a composer or finding royalty-free music, they can generate custom tracks that match specific game moments or atmospheres. The quality is improving rapidly.

Musical Experimentation

Musicians can use Lyria 3 as a compositional tool. Generate a bass line, harmonize it, add drums, iterate until something interesting emerges. It's not replacing human creativity, but it's augmenting the creative process. A musician who knows how to edit and iterate can take Lyria 3's output and refine it into something genuinely artistic.

Presentation and Pitch Materials

Business professionals can generate background music for presentations, pitch decks, or internal training materials. Instead of hunting for copyright-free music, they can generate custom audio that matches the presentation's mood and tone.

Technical Limitations and What They Mean

Understanding Lyria 3's technical limitations helps explain why it's not perfect and what improvements will probably come next.

The 30-Second Barrier

This limitation exists because the cost of generating audio grows faster than linearly with its length. If compute scales quadratically with duration, as attention does in transformer-style models, extending to 60 seconds would roughly quadruple the processing power needed. That's expensive and slow. Google chose 30 seconds as the sweet spot between capability and practicality.
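The "quadruple" figure corresponds to quadratic scaling, which is how self-attention cost grows with sequence length in a standard transformer. Whether Lyria 3 actually scales this way is an assumption; its architecture is not documented here. A quick sketch, with the hypothetical helper `relative_cost`, shows how the two scaling assumptions diverge as clips get longer.

```python
def relative_cost(new_seconds: float, base_seconds: float = 30.0,
                  exponent: float = 2.0) -> float:
    """Cost of a longer clip relative to the base, assuming cost ~ length ** exponent."""
    return (new_seconds / base_seconds) ** exponent


for seconds in (30, 60, 120, 300):
    linear = relative_cost(seconds, exponent=1.0)
    quadratic = relative_cost(seconds, exponent=2.0)
    print(f"{seconds:>3}s: {linear:4.0f}x if linear, {quadratic:4.0f}x if quadratic")
```

Under the quadratic assumption a 5-minute track costs 100 times as much as a 30-second clip, compared with 10 times under linear scaling, which is why longer generations depend on efficiency gains rather than simply running the model for longer.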

But 30 seconds is ultimately arbitrary. As computational efficiency improves, this limit will probably increase. We'll see 60-second, then 2-minute, then 5-minute generations become practical. The barrier isn't fundamental to the technology, just to current hardware constraints.

Lyric Quality Ceiling

Generating lyrics that work within musical constraints is genuinely hard. You need the right number of syllables, the right emphasis pattern, intelligible pronunciation, and lines that actually make sense semantically. Getting all of those right simultaneously is the challenge.
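The syllable-count constraint is the easiest of these to make concrete. The sketch below uses a rough vowel-group heuristic to estimate syllables and check whether a lyric line fits a melody with a fixed number of note slots. The function names and the sample line are invented for illustration, and the heuristic is deliberately naive: it will miscount many English words and says nothing about stress or meaning.

```python
import re


def count_syllables(word: str) -> int:
    """Rough English estimate: count vowel groups, drop a silent trailing 'e'."""
    word = re.sub(r"[^a-z]", "", word.lower())
    if not word:
        return 0
    groups = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith("le") and groups > 1:
        groups -= 1
    return max(groups, 1)


def line_syllables(line: str) -> int:
    """Sum the per-word estimates for one lyric line."""
    return sum(count_syllables(w) for w in line.split())


def fits_melody(line: str, target: int, tolerance: int = 1) -> bool:
    """True if the estimated syllable count is within tolerance of the note slots."""
    return abs(line_syllables(line) - target) <= tolerance


line = "a sock has found its match"  # made-up example line
print(line_syllables(line), fits_melody(line, target=6), fits_melody(line, target=9))
```

Passing a check like this is the "technical constraint" part of the problem. Whether the line is funny, moving, or even sensible is a separate question that no syllable counter can answer.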

Lyria 3 handles the technical constraints well. It's the creative aspect that struggles. The model understands music theory but doesn't understand storytelling the way a lyricist does. This will probably improve as language models improve, but it's a harder problem than purely instrumental generation.

Musical Creativity Ceiling

What's the ceiling on how creative AI music can get? That's an open question. Current models are very good at following patterns and generating variations on familiar musical structures. They're less good at surprising listeners or creating genuinely novel musical ideas.

This isn't necessarily a problem for the intended use cases. Most background music, intro music, and functional audio doesn't need to be innovative. It needs to be appropriate and professional. Lyria 3 handles that.

As computational efficiency improves, Lyria 3's audio generation limit is expected to increase from 30 seconds to 5 minutes. Estimated data.

Privacy, Data, and Ethical Considerations

When you generate music in Gemini, Google collects data about your prompts and the outputs you select. This is standard practice for machine learning services. Your prompts help train future versions of the model. Your selections show which outputs were useful, which helps with ranking and recommendation.

If you're generating music for sensitive or confidential projects, that's worth understanding. Your prompts will be associated with your Google account. If privacy is a concern, you'll want to consider whether generating music through Gemini is appropriate for that use case.

There's also the question of training data. What music was used to train Lyria 3? How were artists compensated or credited? Google hasn't fully disclosed this, which is a common issue in AI development. Models trained on copyrighted music raise questions about artist compensation and consent.

The Future of AI-Generated Music

Lyria 3 is a significant step, but it's not the endpoint. Where is this technology headed in the next few years?

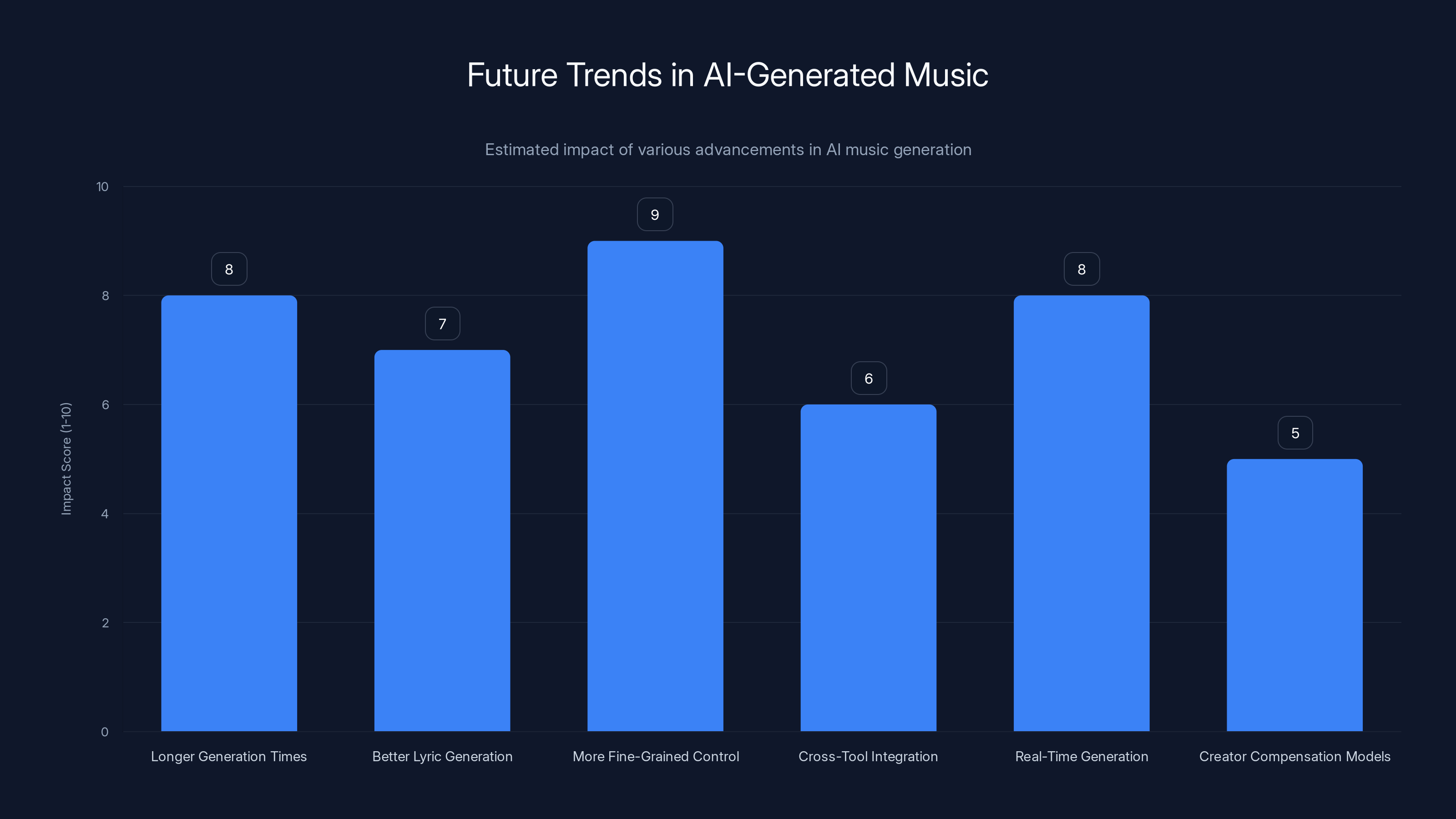

Longer Generation Times

As computation becomes cheaper and more efficient, longer music generation becomes practical. We'll see tools capable of generating 5-minute, 10-minute, maybe even full-album-length compositions. This opens up use cases that aren't viable with 30-second clips.

Better Lyric Generation

As language models improve and music-specific models become more sophisticated, lyric quality will improve. We'll see fewer awkward phrasings and weird word choices. The emotional arc of lyrics will better match the music.

More Fine-Grained Control

Users will get more control over specific musical elements. Instead of just requesting instruments and tempo, they might be able to specify exact harmonic progressions, specific melody contours, or detailed arrangement instructions.

Cross-Tool Integration

We'll see music generation integrated into more creative tools. Your DAW might have built-in AI music generation. Your video editor might generate music automatically based on footage. Your presentation software might suggest accompanying audio.

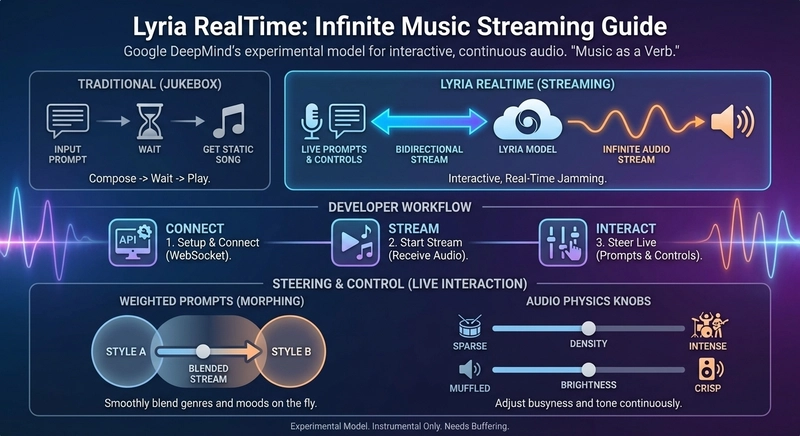

Real-Time Generation

Instead of generating complete tracks upfront, systems might generate music in real-time based on dynamic inputs. Imagine a game that generates music responding to player actions, or a DJ tool that generates accompaniment responding to live input.

Creator Compensation Models

The music industry will develop new compensation and licensing models for AI-generated music. Instead of licensing specific songs, creators might license the right to use AI models or pay based on usage metrics.

Estimated impact scores suggest that more fine-grained control and longer generation times will have the highest impact on the future of AI-generated music.

Why This Matters More Than You Might Think

Lyria 3 might seem like a cool feature for Gemini users, but it has broader implications worth considering.

Democratization of Music Production

Professional music production used to require expensive equipment, significant technical knowledge, and often collaboration with trained musicians. Lyria 3 lowers those barriers. Anyone with internet access and a Gemini account can generate professional-quality backing music. This democratization is real and meaningful.

Shift in Music Industry Economics

As AI-generated music becomes more accessible and more usable, the economics of music production shift. License costs might decrease. The demand for certain types of music work might decrease. The industry will adapt, but disruption is coming.

New Creative Possibilities

Tools enable new forms of creativity. Photography wasn't just a cheaper form of painting; it enabled visual storytelling that painting couldn't achieve. Likewise, AI music generation isn't just cheaper composing; it enables creative workflows that weren't possible before.

Questions About Authenticity and Value

As AI-generated music becomes more prevalent, we'll have cultural conversations about authenticity and value. What do we value in music? Is it the final product or the human creativity involved in creating it? As these questions get answered, they'll shape how AI music tools are used and regulated.

Getting Started With Lyria 3

If you want to experiment with Lyria 3, here's what you need to know.

Access Requirements

You need to be 18 or older and have a Google account. You need to speak one of the supported languages: English, Spanish, German, French, Hindi, Japanese, Korean, or Portuguese. You need access to Gemini, which is available through the web interface (gemini.google.com) or through mobile apps.

Getting the Best Results

Start with simple prompts and iterate. "Upbeat pop" works. "Funky bass with minimal drums and vocal harmony" works better. Be specific about what you want but don't over-explain. The model responds better to clarity than to verbosity.

Experiment with images and videos if you have them. Visual prompts sometimes generate more interesting results than text descriptions alone.

Generate multiple variations of the same prompt. Sometimes the first result isn't the best. Asking for variations often produces a better output.

What to Do With Your Generated Music

You can download generated tracks directly from Gemini. You can use them in videos, presentations, podcasts, or other projects. The watermarking identifies them as AI-generated, but that's transparent and can actually be a positive signal.

If you're creating content and using Lyria 3-generated music, it's good practice to disclose that the audio was AI-generated. Most audiences are understanding about this, and transparency builds trust.

Common Mistakes to Avoid

If you're new to Lyria 3, here are errors that will waste your time.

Expecting Studio-Quality Vocals

Lyria 3 generates music, not perfect vocals. If you need professional-quality singing, you might want to use generated music as backing tracks and layer human vocals on top.

Asking for Overly Specific Music Theory Details

While you can request specific chord progressions or harmonic structures, the model sometimes misinterprets overly technical requests. Simpler descriptions often work better.

Not Using Images or Videos When You Have Them

Text-only prompts are fine, but adding visual input often improves results. If you're generating music for a specific visual project, show the model that visual.

Assuming One Generation Is Your Only Option

Generate variations. Generate with slightly different prompts. The second or third version is often better than the first.

Neglecting the Remix Feature

If you like something about a generated track but want to change something, remix it instead of starting over. This saves time and often produces better results than generating from scratch.

Comparing Lyria 3 to Traditional Music Production

Let me put this in perspective by comparing Lyria 3 to traditional approaches for different use cases.

Time Investment

Traditional: Finding music might take 30-60 minutes. Commissioning original music might take weeks. AI: Generating music takes 5-10 minutes. This difference matters most for content creators on deadlines.

Cost

Traditional: Licensing music costs money. Commissioning original music costs significantly more. AI: Free or part of Gemini subscription. The economics strongly favor AI for certain use cases.

Uniqueness

Traditional: Licensed music is often used by many creators, so your video might use the same backing track as someone else's. AI: Generated music is unique to your specifications. Your video sounds different from others.

Quality

Traditional: Professional music production sounds professional. AI: Generated music sounds professional in many cases but not always. The quality gap is closing.

Creative Flexibility

Traditional: Once you choose music, you're locked in. AI: Easily generate variations or new music if creative direction changes. The flexibility matters for iterative creative work.

The Broader Context: AI Tools and Creative Work

Lyria 3 is part of a larger trend of AI tools augmenting creative work. Image generation, video creation, writing assistance, code generation—all of these are following similar patterns: tools that handle routine work, augment human creativity, and lower barriers to creative expression.

Understanding where Lyria 3 sits in this landscape helps explain why it matters and what's probably coming next. The future isn't about AI replacing human creators. It's about tools that let humans be more creative, more productive, and more ambitious in their work.

This shift has benefits and challenges. Benefits: more creative people can make more things. Challenges: jobs that were formerly specialized become more commodified. The net effect is probably positive, but the transition is disruptive.

Practical Integration Into Your Workflow

If you're considering using Lyria 3, here's how to integrate it practically into your creative process.

For Content Creators

Add music generation to your pre-production process. Before you film or edit, generate a few music options. This gives you options and creative direction. You can edit video to match the music or choose music that matches your cut.

For Podcasters

Generate intro, outro, and transition music for your show. Keep these consistent across episodes but regenerate periodically for variety. This creates professional audio production without hiring a composer.

For Marketers

Generate background music for promotional videos, webinars, and training content. Custom-generated music that matches your brand aesthetic is more professional than generic stock music and usually cheaper than licensing.

For Developers

If you're building a game or interactive experience that needs music, experiment with Lyria 3. Generate variations based on game state or user actions. This creates dynamic audio that's responsive to context.
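One low-risk way to try this is to generate a handful of clips ahead of time and let the game pick among them by state, rather than calling a model at runtime. The sketch below assumes exactly that: `GameState`, `MusicDirector`, and the file names are hypothetical, and nothing here talks to Gemini or any Google API.

```python
from enum import Enum
from typing import Dict, List


class GameState(Enum):
    EXPLORING = "exploring"
    COMBAT = "combat"
    VICTORY = "victory"


# Hypothetical file names for clips generated in advance and saved locally.
CLIPS: Dict[GameState, List[str]] = {
    GameState.EXPLORING: ["explore_a.mp3", "explore_b.mp3"],
    GameState.COMBAT: ["combat_a.mp3"],
    GameState.VICTORY: ["victory_a.mp3"],
}


class MusicDirector:
    """Cycles through the pre-generated clips that belong to each game state."""

    def __init__(self, clips: Dict[GameState, List[str]]):
        self._clips = clips
        self._position = {state: 0 for state in clips}

    def next_clip(self, state: GameState) -> str:
        """Return the next clip for this state, wrapping around at the end."""
        options = self._clips[state]
        index = self._position[state] % len(options)
        self._position[state] += 1
        return options[index]


director = MusicDirector(CLIPS)
for state in (GameState.EXPLORING, GameState.EXPLORING,
              GameState.COMBAT, GameState.EXPLORING):
    print(state.value, "->", director.next_clip(state))
```

Rotating through several variations per state keeps 30-second clips from sounding repetitive, and the audio stays fixed and reviewable before it ships.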

Limitations Worth Acknowledging

For transparency, let me be direct about where Lyria 3 doesn't work well.

It's not good for generating music that requires exact technical specifications or production-specific details. If you need a track that fits exactly into a specific frequency range or has particular acoustic properties, traditional production is better.

It's not good for generating lyrics that need to tell a specific story or convey nuanced emotions. The lyrics are technically serviceable, but the creative storytelling often falls flat.

It's not good for generating music that needs to match existing audio or have very specific tonal qualities. The model is good but not precise enough for this level of technical matching.

It's not appropriate for generating music that closely imitates specific artists' styles without modification, which raises copyright questions.

These aren't dealbreakers for the intended use cases, but they're important to understand if you're considering Lyria 3 for specific projects.

FAQ

What is Lyria 3?

Lyria 3 is Google's third-generation music generation model integrated into Gemini that can create 30-second original music tracks based on text prompts, images, or videos. The model can generate entirely new compositions or rework tracks it has already produced, with control over elements like tempo, instrumentation, and emotional tone.

How does Lyria 3 work?

Lyria 3 uses deep learning to understand musical structure and composition patterns. When you provide a prompt, the model analyzes the request, generates an overall musical structure, and then fills in details at melodic, harmonic, rhythmic, and lyrical levels simultaneously. The process runs entirely on Google's servers, and results are returned within seconds.

What are the benefits of using Lyria 3?

Lyria 3 saves content creators significant time by generating music in minutes instead of hours, eliminates licensing concerns for many use cases, provides unique audio that won't be used by other creators, and lowers barriers to professional-quality music production. It's particularly valuable for YouTube Shorts creators, podcasters, small game developers, and anyone needing functional, non-generic background music.

Who can access Lyria 3?

Lyria 3 is available to Gemini users who are 18 years or older and speak one of the supported languages: English, Spanish, German, French, Hindi, Japanese, Korean, or Portuguese. Access is through Gemini's web interface or mobile apps, and availability may vary by region during rollout.

Is AI-generated music marked as such?

Yes, all Lyria 3-generated music is watermarked with Google's SynthID technology, which embeds an imperceptible signal in the audio identifying the track as machine-created. Google's SynthID Detector can identify these watermarks, making it difficult to pass off AI-generated music as human-created compositions.

Can I use Lyria 3-generated music commercially?

Lyria 3-generated music can be used in many commercial contexts like YouTube videos, podcasts, and presentations. However, licensing terms for commercial use are still evolving across the industry. Check Google's terms of service and content policies for your specific use case, and consider disclosing that music was AI-generated, especially for professional productions.

What are the main limitations of Lyria 3?

Lyria 3 currently generates only 30-second clips, struggles with lyric quality and emotional storytelling in vocals, works best with relatively simple prompts rather than overly technical specifications, and may not be suitable for applications requiring precise technical audio matching or exact style replication.

How does Lyria 3 compare to hiring a composer or licensing music?

Lyria 3 is significantly faster and cheaper than commissioning original music from composers, and it's more flexible than licensed music since you get unique, custom-generated tracks. However, professional human composers produce more emotionally nuanced and creative work. Lyria 3 is best for functional background music, quick iterations, and situations where speed and cost matter more than artistic complexity.

Can I edit or modify Lyria 3-generated music?

You can request variations and remixes through Gemini's interface by asking for modifications to specific elements. Some users also download tracks and edit them in digital audio workstations for additional customization, though this requires technical audio editing knowledge.

What's the technical quality of Lyria 3's output?

The instrumental components are generally professional-quality with accurate rhythm, good sound design, and convincing instrument simulation. Lyric generation is technically competent but often feels stilted or awkwardly phrased. Overall quality is comparable to mid-level professional production for background music and significantly above stock music quality, though it doesn't match top-tier human composition.

The landscape of creative tools is changing rapidly. Lyria 3 represents a meaningful step in making professional-quality music accessible to creators who previously lacked the skills, time, or budget. Whether you're a content creator looking for quick backing tracks, a developer building a game, or someone experimenting with music production, Lyria 3 offers genuine utility. The technology isn't perfect, but it's good enough for the jobs it's meant to handle. And that's something worth paying attention to.

Key Takeaways

- Lyria 3 generates 30-second AI music tracks through Gemini with text, image, or video prompts

- Instrumental quality is professional-grade while lyric generation remains the weakest component

- Integration with YouTube Shorts (Dream Track) and Gemini makes music generation accessible to millions

- All generated audio is watermarked with SynthID for content authenticity identification

- Best use cases are background music for content, podcasts, games, and presentations—not professional compositions
