How to Verify What's Real: Media Authenticity Methods in 2025

You've seen it happen. Someone shares a video of a politician saying something outrageous, and your first thought isn't "Did they really say that?" anymore. It's "Is this real or AI-generated?" That shift—from automatic trust to automatic skepticism—defines our current moment.

The problem isn't that synthetic media exists. It's that it works too well. A high school student with a laptop can now create photorealistic images, convincing video deepfakes, and digitally altered audio that would've required Hollywood budgets five years ago. Meanwhile, genuine footage gets questioned simply because perfect forgeries are now possible.

This is where media authenticity comes in. Not as a silver bullet, but as a practical toolkit for answering a simple question: Where did this content come from, and has it been modified?

This article breaks down how these authentication methods actually work in practice, what their real limitations are (spoiler: they're significant), and where the industry is heading. I'm not going to sell you on a perfect solution because one doesn't exist. Instead, I'll give you the honest picture of what's possible today and what we're still figuring out.

TL;DR

- Media integrity methods use standards like C2PA to track content origin and modification history through cryptographic signatures and metadata.

- Watermarking and fingerprinting add invisible or perceptual marks to content, but can be removed or fooled by sophisticated attacks.

- The authenticity gap persists: proving something is real is much harder than proving it's fake or modified.

- 2026 legislation will require "verifiable" provenance signals, putting pressure on implementers to clarify what that actually means.

- No single solution works across all content types—images, video, and audio each face unique technical and adoption challenges.

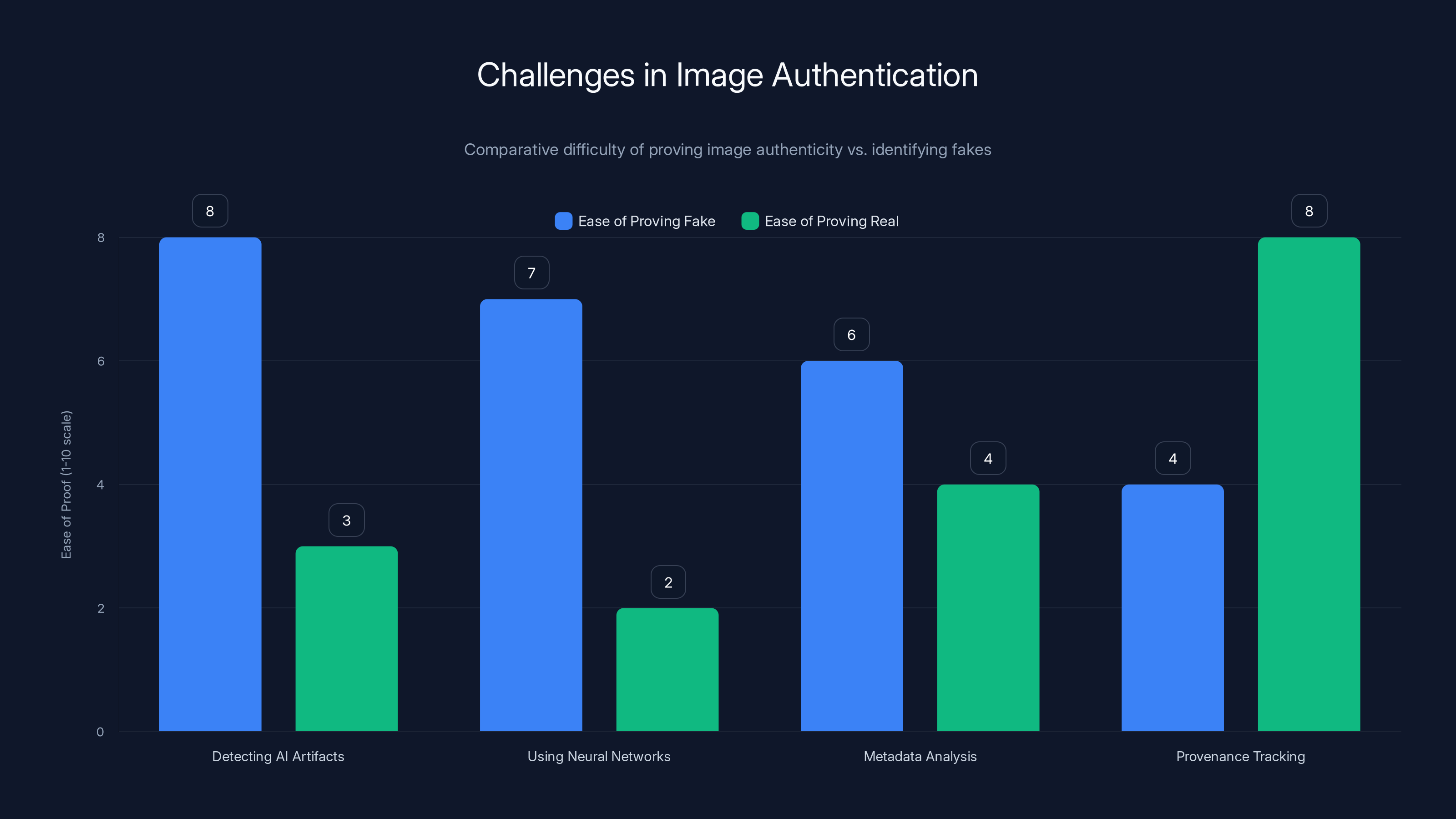

Proving an image is fake is generally easier than proving it's real, with provenance tracking being the most reliable method for authenticity. Estimated data based on typical challenges in media authentication.

The Problem We're Trying to Solve: Why Media Provenance Matters Now

Five years ago, deepfakes felt like a novelty. Someone would create a celebrity video, it would circulate for a week, get debunked, and disappear. Today, the stakes are completely different.

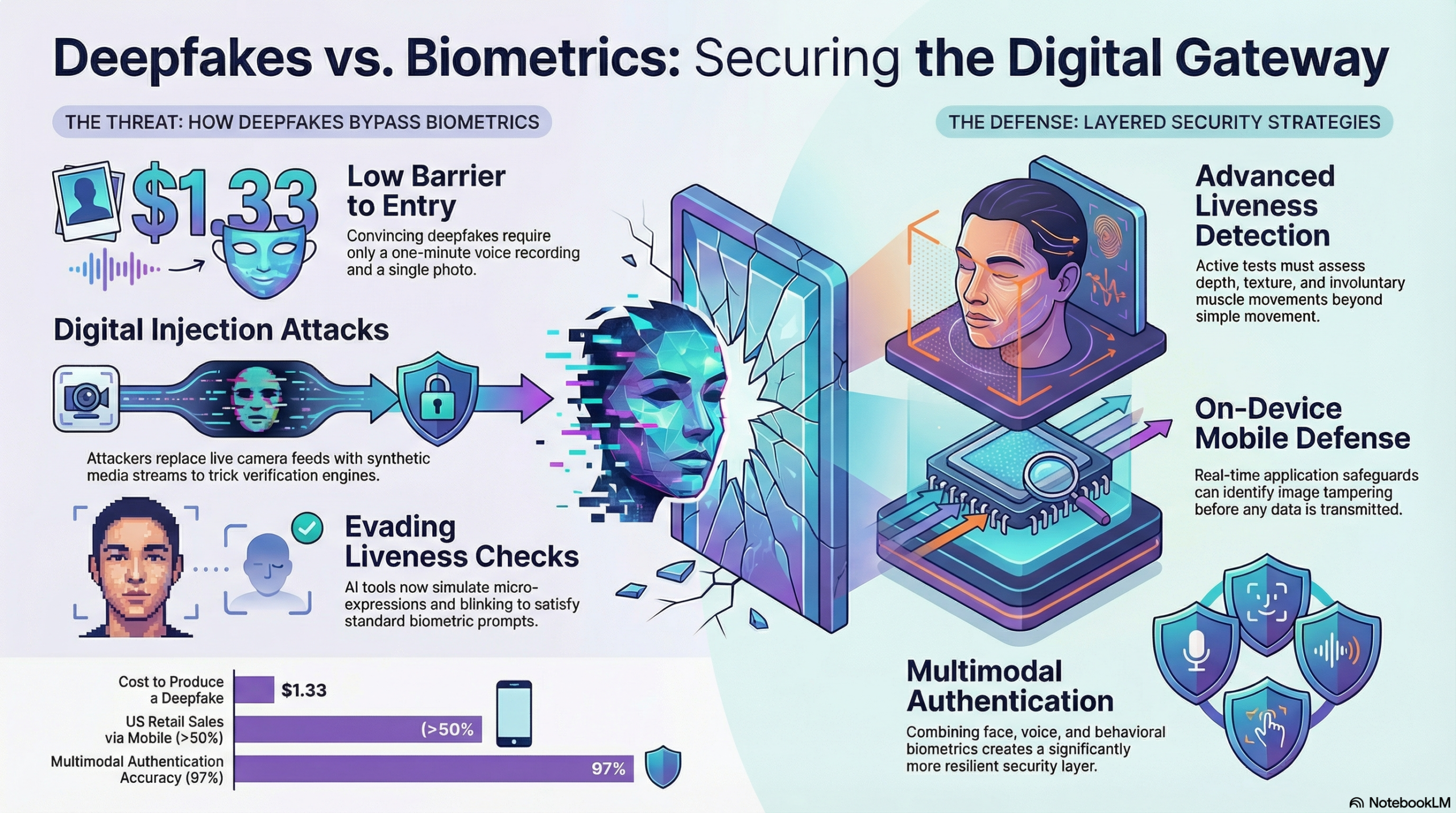

We're not just talking about viral entertainment anymore. We're talking about election interference, financial fraud, harassment campaigns, and disinformation at scale. When a fake video of a corporate CEO can tank stock prices, or when synthetic audio can impersonate someone's voice in a phone call to steal money, provenance becomes critical infrastructure.

The challenge is scale. Humans can't manually verify every image and video we encounter. Roughly 720,000 hours of video are uploaded to YouTube every day. Facebook processes roughly 350 million photos daily. TikTok moves even faster. No number of human fact-checkers can keep pace with synthetic media generation that's becoming increasingly automated.

This is why the industry started building media integrity and authentication (MIA) methods. The core idea is simple: if we can cryptographically prove where content came from and track every modification made to it, we can help people make informed decisions about what they're viewing.

But the implementation? That's where things get complicated. Real fast.

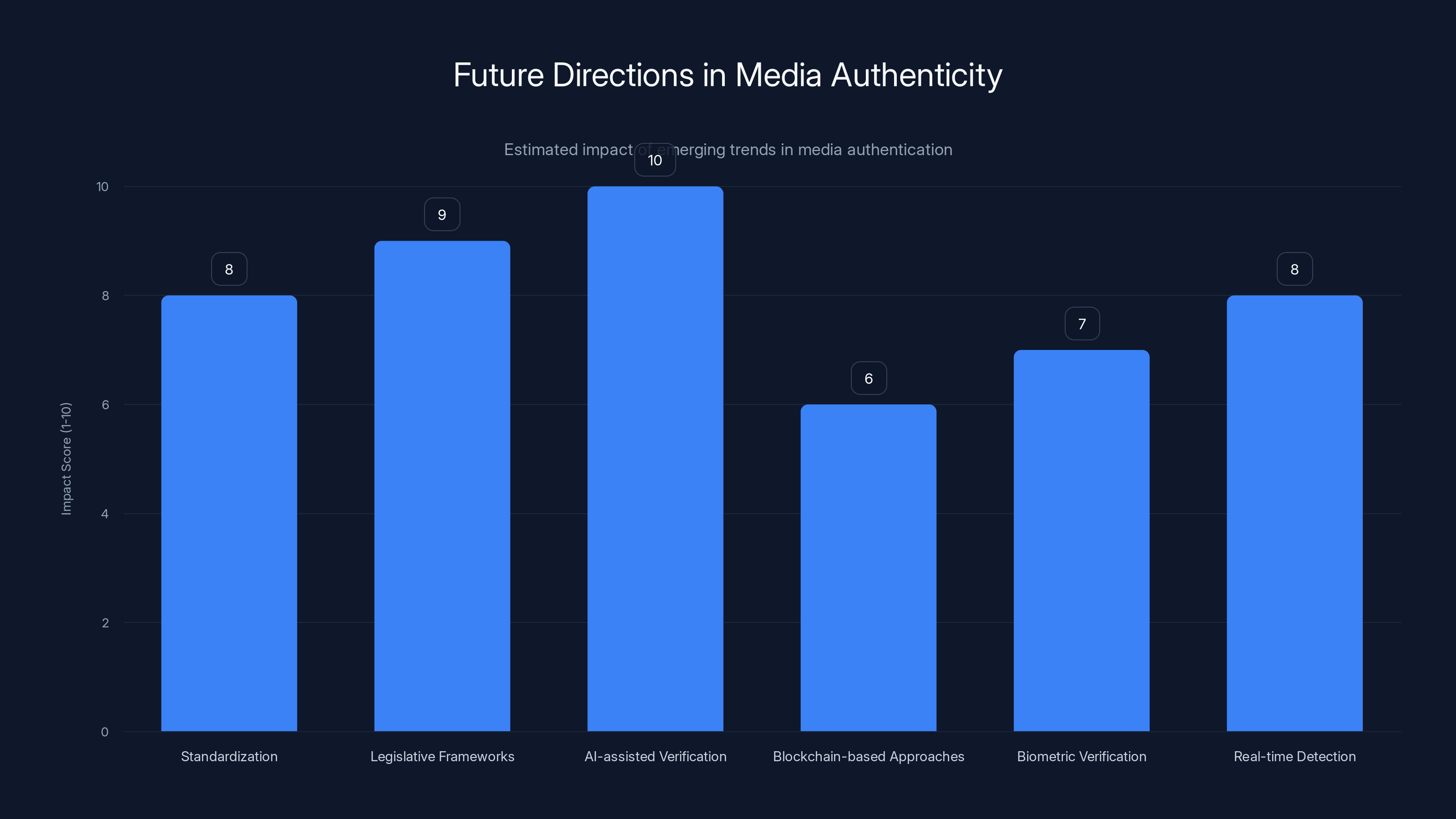

AI-assisted verification is expected to have the highest impact on media authenticity, with a score of 10, due to its intuitive presentation of authentication data. Legislative frameworks also score high at 9, as they will drive compliance and infrastructure development. (Estimated data)

What Is C2PA and Why Does It Matter?

The Coalition for Content Provenance and Authenticity (C2PA) isn't a company. It's a standards body backed by major technology firms, media organizations, and publishing platforms. Their mission is straightforward: create open technical standards that let anyone verify content authenticity and track its origin.

At its core, C2PA is trying to solve a fundamental problem with digital media: metadata is invisible and easy to fake. You can take a photo, strip out all its information, edit it heavily, and nobody would know without technical analysis. C2PA wanted to build something more resilient.

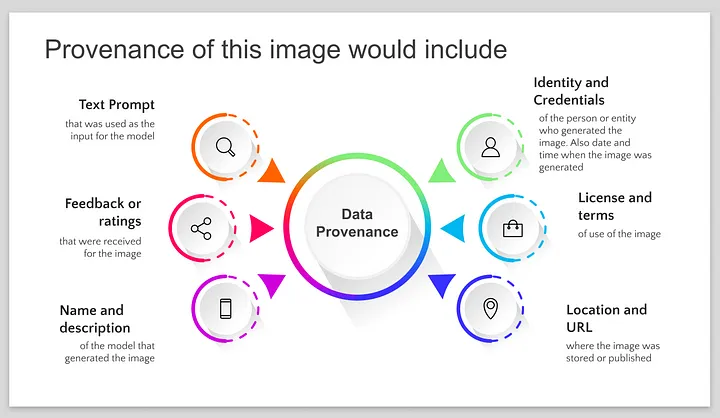

The C2PA standard works by embedding cryptographic signatures and a "claim history" directly into media files. Think of it like a certificate of authenticity for digital content. When someone creates or modifies a piece of media, the C2PA-compliant tool adds a claim that says: "I created this on February 15, 2025, using Adobe Photoshop version 25.2, and I claim it's original." If someone else edits that file later, a new claim gets added: "I modified this on February 16, 2025, cropping out the left side and adjusting brightness."

The beauty of this approach is transparency. Unlike metadata that lives separately from the file, C2PA claims travel with the content. You can inspect the full history as it moves across platforms. This creates an auditable trail.

But here's the critical caveat: C2PA doesn't guarantee truthfulness. It guarantees that you can verify who made a claim and when. Someone could still upload an entirely synthetic image and claim it's authentic—the cryptographic signature would be valid, but the underlying claim would be false. C2PA provides accountability, not infallibility.

Adoption is still in early stages. Major companies such as Microsoft, Adobe, Nikon, and Canon have committed to supporting C2PA. But you can't just flip a switch and make it universal. Every content creation tool, every social platform, every news organization has to integrate C2PA into their workflows. That's happening gradually, but we're nowhere near ubiquitous adoption yet.

Watermarking: Adding Invisible Signatures to Content

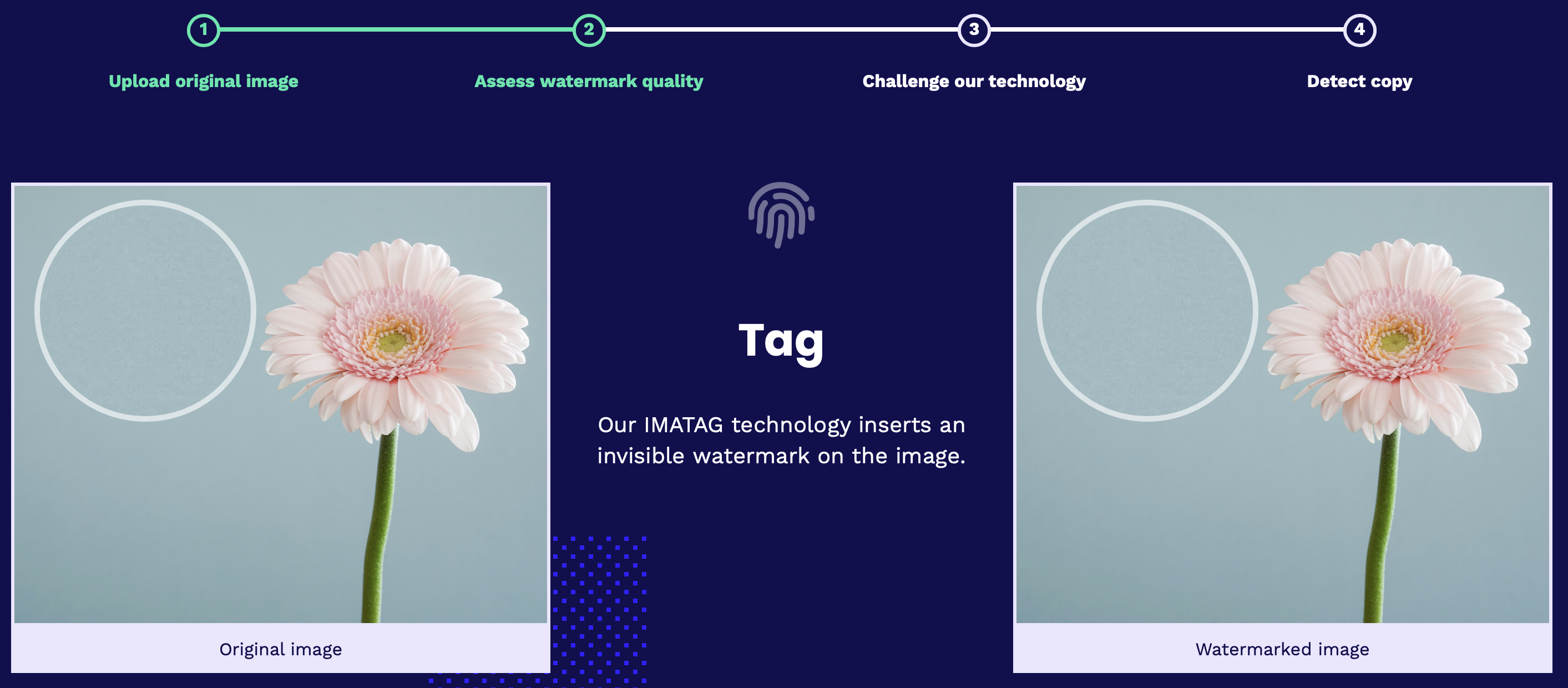

Watermarking is an older authentication approach, but it's becoming more relevant in the AI era. The idea is to embed a hidden signal into media that identifies it as synthetic or authentic, and ideally, who created it.

There are two main types of watermarks: visible and invisible. Visible watermarks are obvious—like a TV station logo in the corner of a broadcast. These are easy to detect but also easy to remove or cover up. Invisible watermarks are embedded in the data itself, typically in ways that survive light editing like compression or resizing.

For synthetic media detection, invisible watermarking is more interesting. When an AI system generates an image, it can embed a watermark that identifies it as AI-generated. This watermark would ideally persist even if someone edits the image, crops it, or compresses it. The theory is that if you download an image and can extract a watermark saying "Generated by OpenAI DALL-E on February 15, 2025," you've immediately got critical information about its origin.

The problem? Watermarks can be removed. If you know roughly where and how the watermark is embedded, you can attack it. Researchers have demonstrated that adversarial attacks—basically using AI to find and neutralize the watermark—work against several watermarking schemes. Crop the image differently, apply certain filters, add noise, and the watermark becomes undetectable.

There's also a fundamental tension in watermark design: the stronger the watermark (the more robust it is to editing and attacks), the more likely it is to degrade image quality. A watermark that survives heavy JPEG compression might also introduce visible artifacts that make the image look wrong. Content creators don't want their images corrupted by authentication signals. This forces watermark designers to make trade-offs that leave vulnerabilities.
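To make that trade-off concrete, here's a toy sketch. It is not any production watermarking scheme: it hides one bit per pixel in a chosen bit plane of 8-bit grayscale values and uses coarse requantization as a crude stand-in for lossy compression. The function names, pixel values, and `step` parameter are all illustrative assumptions.

```python
def embed(pixels, bits, plane):
    """Write one watermark bit into the given bit plane of each pixel."""
    mask = 1 << plane
    return [(p & ~mask) | (b << plane) for p, b in zip(pixels, bits)]


def extract(pixels, plane):
    """Read the watermark bits back from the same bit plane."""
    return [(p >> plane) & 1 for p in pixels]


def requantize(pixels, step):
    """Crude stand-in for lossy compression: snap values down to a multiple of step."""
    return [(p // step) * step for p in pixels]


def max_distortion(original, marked):
    """Largest per-pixel change the watermark introduced."""
    return max(abs(a - b) for a, b in zip(original, marked))


pixels = [52, 55, 61, 66, 70, 61, 64, 73]  # hypothetical grayscale values
bits = [1, 0, 1, 1, 0, 0, 1, 0]            # hypothetical watermark payload

weak = embed(pixels, bits, plane=0)    # lowest bit: tiny changes, fragile
strong = embed(pixels, bits, plane=3)  # higher bit: larger changes, sturdier

weak_survives = extract(requantize(weak, step=4), plane=0) == bits
strong_survives = extract(requantize(strong, step=4), plane=3) == bits

print("weak:  ", max_distortion(pixels, weak), "max change, survives:", weak_survives)
print("strong:", max_distortion(pixels, strong), "max change, survives:", strong_survives)
```

In this toy setup the low-bit watermark changes each pixel by at most one level but is wiped out by requantization, while the higher-bit watermark survives at the cost of much larger pixel changes. Real schemes are far more sophisticated, but they face the same tension.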

Another challenge is adoption coordination. If different AI image generators use different watermarking standards, or if watermarks aren't standardized, detection becomes fragmented. You'd need a different watermark detector for every system. This is beginning to improve with efforts like the C2PA approach to standardization, but we're still in the messy transition period.

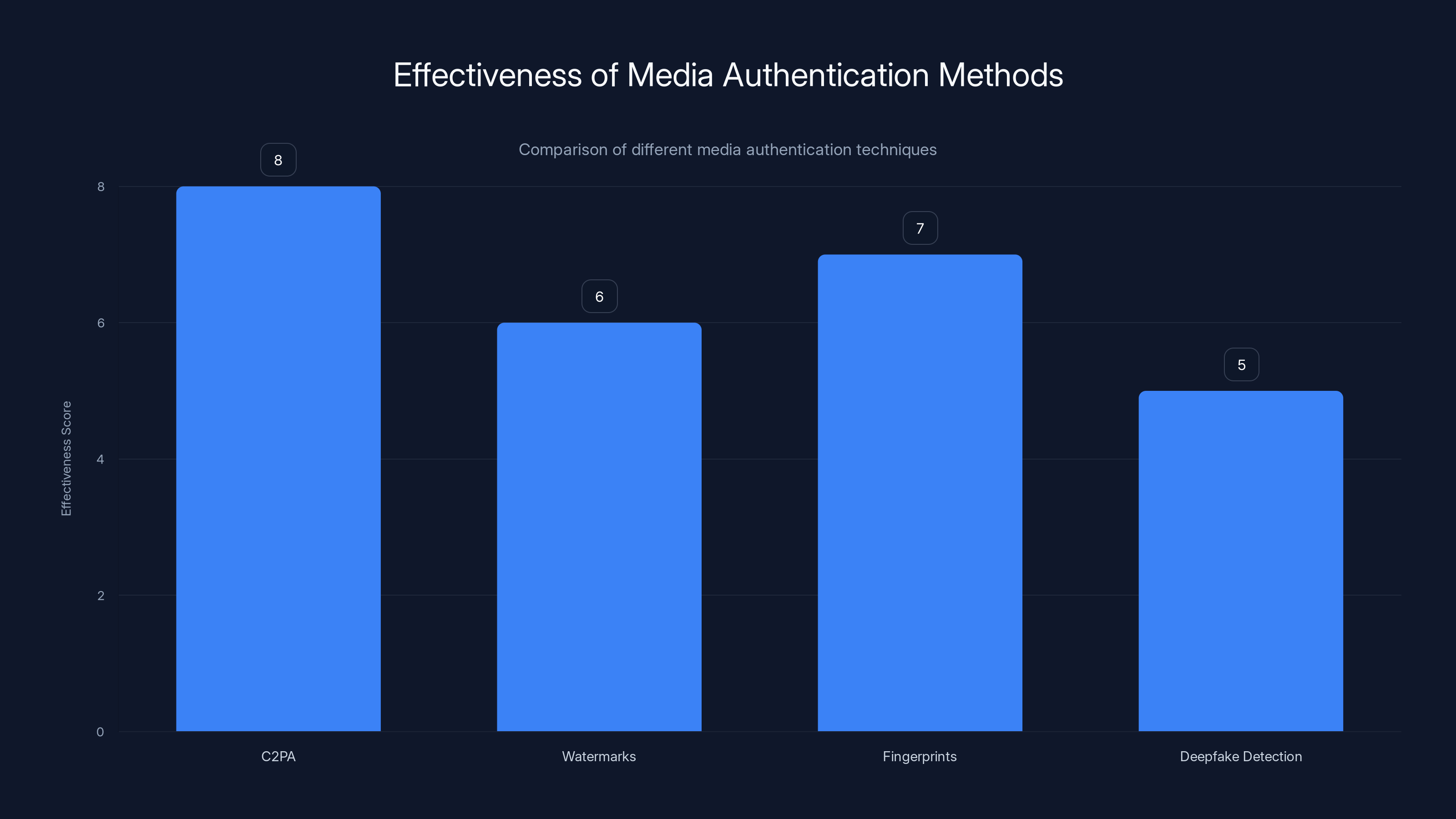

C2PA is currently the most effective method for media authentication, scoring 8 out of 10, while deepfake detection lags behind at 5. Estimated data.

Fingerprinting and Perceptual Hashing: Content Matching at Scale

Fingerprinting takes a different approach than watermarking. Instead of embedding a hidden signal into media, fingerprinting creates a compact digital summary of the content's perceptual characteristics. This summary is called a hash.

Here's how it works: You take an image and run it through an algorithm that extracts key features—edge patterns, color distribution, texture characteristics. These features get compressed into a short string of bits, maybe 64 or 128 bits. This fingerprint is much smaller than the original image but captures enough information to identify it.

The power of fingerprinting is deduplication and matching. If you've seen an image before, even in a slightly different form (recompressed, resized, lightly cropped, or with colors adjusted), the fingerprint should match or be very similar. How well a fingerprint tolerates rotation depends on the algorithm. This lets platforms detect when content is being reused, which images are copies of each other, and which are original.
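Here's a minimal sketch of one simple perceptual hash, the "average hash," to show the idea. It assumes the image has already been downscaled to an 8x8 grayscale grid, which yields a 64-bit fingerprint. The test images are synthetic and the function names are my own; production systems use more robust algorithms.

```python
def average_hash(gray):
    """64-bit average hash of an 8x8 grayscale grid (8 rows of 8 ints)."""
    flat = [v for row in gray for v in row]
    mean = sum(flat) / len(flat)
    bits = 0
    for v in flat:
        # Each pixel contributes one bit: brighter than average or not.
        bits = (bits << 1) | (1 if v > mean else 0)
    return bits


def hamming(a, b):
    """Number of differing bits between two fingerprints."""
    return bin(a ^ b).count("1")


# Hypothetical 8x8 images standing in for downscaled photos.
original = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]   # gradient
brighter = [[v + 3 for v in row] for row in original]                # mild global edit
unrelated = [[255 if (r + c) % 2 else 0 for c in range(8)] for r in range(8)]

near = hamming(average_hash(original), average_hash(brighter))
far = hamming(average_hash(original), average_hash(unrelated))
print("original vs brighter: ", near, "bits differ")
print("original vs unrelated:", far, "bits differ")
```

Matching then comes down to a threshold on the Hamming distance: small distances suggest the same underlying image, large ones suggest different content. Where to set that threshold is a tuning decision, not something this sketch settles.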

For authenticity purposes, fingerprinting doesn't directly prove origin, but it can work alongside other signals. For example, if a news organization publishes an image with a C2PA claim saying it was captured at 2:15 PM in downtown Seattle, and that image's fingerprint matches thousands of copies circulating online, you can verify distribution history. If the fingerprint doesn't match anything older than the claimed capture time, that's consistent with the image being original.

But fingerprinting has blind spots. Perceptual hashing can handle minor edits, but major modifications—significant cropping, heavy color correction, changing objects within the scene—can result in completely different fingerprints. An image that's been significantly AI-edited might have a fingerprint so different from the original that matching fails.

There's also the database problem. Fingerprinting only works if you're comparing against a known database of previous content. If an image is novel or hasn't been seen before, fingerprinting can't tell you if it's real or fake. It can tell you if it's a copy, but not if it's authentic.
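The database dependency is easy to see in code. In this sketch, fingerprints are 64-bit integers and the lookup returns the closest known entry within a distance threshold. The labels, fingerprint values, and `max_distance` default are made up for illustration.

```python
def hamming(a, b):
    """Number of differing bits between two fingerprints."""
    return bin(a ^ b).count("1")


def find_match(query, known, max_distance=5):
    """Return the label of the closest known fingerprint, or None if none is close."""
    best_label, best_dist = None, max_distance + 1
    for label, fingerprint in known.items():
        dist = hamming(query, fingerprint)
        if dist < best_dist:
            best_label, best_dist = label, dist
    return best_label


# Hypothetical database of previously seen content.
known = {
    "wire-photo-001": 0xFFFF0000FFFF0000,
    "wire-photo-002": 0x0F0F0F0F0F0F0F0F,
}

near_copy = 0xFFFF0000FFFF0001  # one bit away from wire-photo-001
novel = 0x123456789ABCDEF0      # nothing similar in the database

print(find_match(near_copy, known))  # a known label
print(find_match(novel, known))      # None: unseen, which is not the same as fake
```

A `None` result only means "not in this database." It says nothing about whether the image is authentic, which is exactly the blind spot described above.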

Scale creates additional complexity. Maintaining fingerprint databases for every image and video ever created is technically and logistically challenging. Different platforms use different fingerprinting algorithms, which means they don't always match across services.

The Authenticity Problem: Why Proving Something Is Real Is Harder Than Proving It's Fake

Here's the asymmetry that makes media authentication so tricky: proving something is fake is easier than proving it's real.

If I want to prove an image is AI-generated, I have several approaches. I can look for telltale artifacts—weird hand anatomy, impossible reflections, glitches that commonly appear in generative AI output. I can run neural networks trained on thousands of fake images and get a probability score. I can extract metadata and check for watermarks. If any of these approaches yields positive evidence of synthesis, I can say with some confidence the image is fake.

But proving something is real? That's fundamentally harder. An authentic image is one that was actually captured by a camera. To prove that, I need to show that the image exhibits properties consistent with camera capture. But here's the problem: as AI systems get better at generating realistic imagery, they get better at replicating those properties.

One way to describe this is the "authenticity gap." It's the difference between detectability and verifiability. Deepfakes from 2018 were detectably wrong in obvious ways. Modern deepfakes are much closer to undetectable. But being undetectable isn't the same as being authentic. You can't distinguish a perfect forgery from the truth with technical analysis alone.

This is why the field is shifting toward provenance-based authentication. Instead of trying to analyze an image and determine if it's real, the approach is: track where it came from and what happened to it. If I can verify that an image was created by a camera I trust, at a time and location where I expect it to exist, that's much more reliable than analyzing the image itself.

This is where C2PA becomes critical. Rather than asking "Is this image fake?" you ask "Can I verify the creation history and claims?" The latter question is actually answerable. The former increasingly isn't.

But this approach has a prerequisite: trust in the signing authority. If an image has a C2PA claim signed by someone I trust—a major news organization, a government agency, a specific photographer—then the claim is cryptographically valid. The signature proves the claim was made by that entity at that time. What I can't know from the signature alone is whether the claim is true. A trusted organization could still lie and claim an edited image is original.

This is why technical authentication works best alongside social and institutional checks. A credible news organization with a reputation to protect is less likely to falsify C2PA claims than an anonymous account. Media literacy—understanding source credibility—remains essential even with perfect authentication technology.

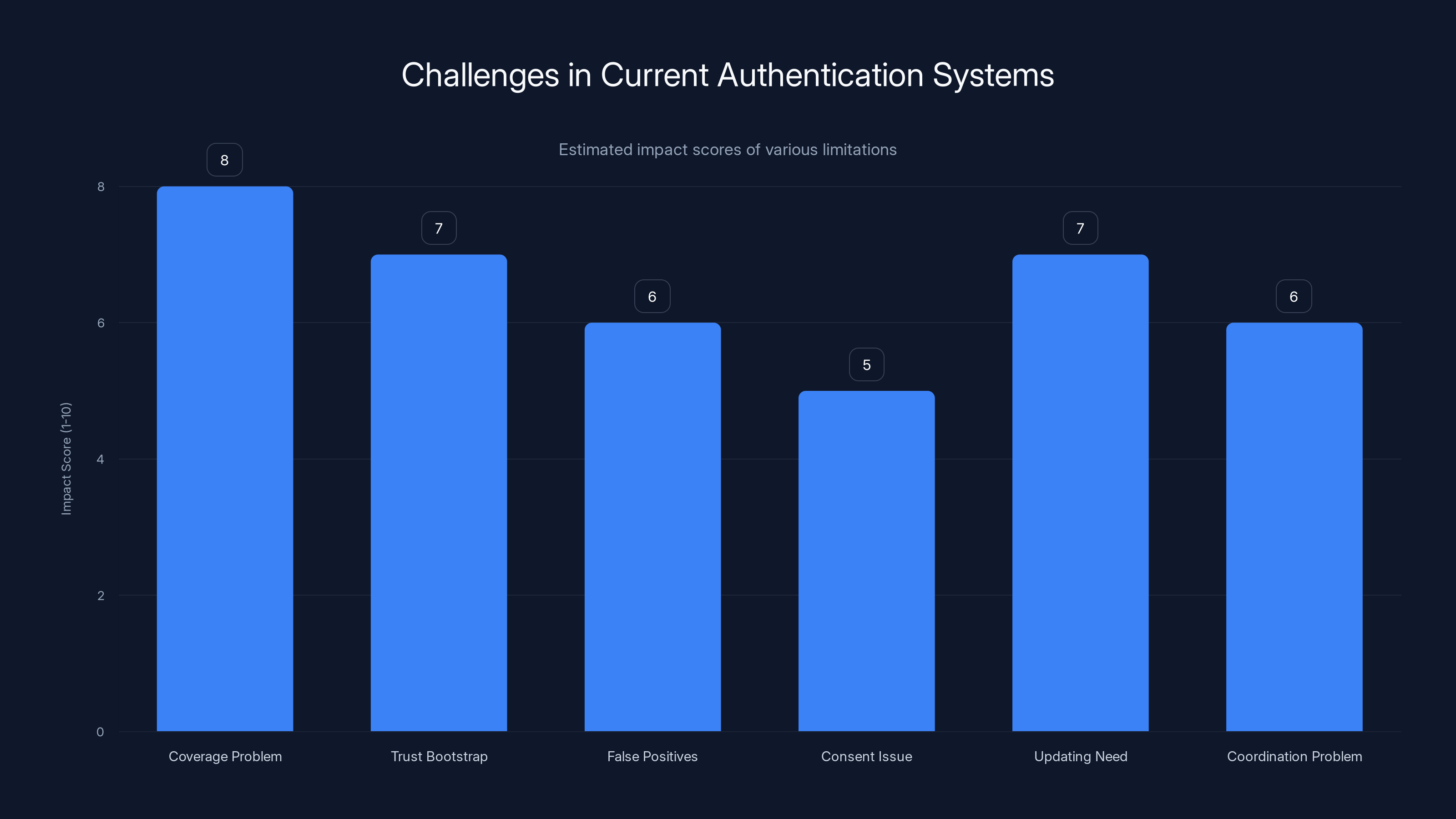

This chart estimates the impact of various limitations in current authentication systems. The coverage problem is perceived as the most significant, with an impact score of 8. (Estimated data)

Adversarial Attacks: How Authentication Systems Can Be Exploited

As soon as authentication systems exist, adversaries start probing for weaknesses. This is the fundamental cat-and-mouse game of security.

Watermark removal is the most obvious attack. Researchers have demonstrated that adversarial perturbations—carefully crafted edits that humans can barely perceive—can completely destroy a watermark. In one research example, adding imperceptible noise to an image removed its watermark while preserving visual quality. This technique could theoretically let someone strip the authentication signal from synthetic media and redistribute it as if it were original.

Metadata stripping is trivial. Most image files carry metadata such as camera model, capture date, and GPS location, and you can remove all of it with basic image editing software. If an authentication system relies entirely on plain embedded metadata, anyone determined can delete or rewrite it. C2PA's cryptographic signatures are more robust than simple metadata because altering a claim, or the content it covers, invalidates the signature and makes the tampering detectable. Stripping the claims entirely is still possible, though. The result is a file with no provenance at all rather than a file with forged provenance.

But even cryptographic approaches have attack surfaces. If you can compromise the private key used to sign content, you can forge authentic-looking claims. This creates dependency on key management. Systems need to securely store and protect private keys, rotate them appropriately, and handle key compromise scenarios. That's non-trivial infrastructure.

There's also the problem of signed lies. A creator could claim they made an image using a certain tool at a certain time and attach a valid cryptographic signature. That signature would be technically correct even if the underlying claim is false. "I generated this image with Photoshop on Feb 15" is verifiable only in the sense that the signature checks out against the signer's certificate. What isn't verifiable is whether the image is actually synthetic or actually original. The signature proves the claim was made by someone with the signing authority, not that the claim is truthful.

Differential attacks exploit the detection gap I mentioned earlier. If detection systems are trained to identify known deepfake patterns, but new generative models produce patterns the detectors haven't seen, the detection fails. This is happening constantly as AI models improve. Detectors trained on DALL-E 2 outputs might not work well on DALL-E 3 outputs, and both might fail against models released next year.

There's also the question of coordinated attacks. If an organization wants to spread disinformation at scale, they don't need to crack every authentication system. They just need to control enough distribution channels to spread false claims faster than fact-checks can debunk them. This is a social problem more than a technical one, but it's the reality of media integrity.

The Adoption Challenge: Why Standards Haven't Gone Universal Yet

Let's be honest: the biggest barrier to media authenticity isn't the technology. It's adoption.

C2PA standards are solid. Watermarking and fingerprinting are well-understood. The problem is coordination. Every creator, platform, and tool needs to adopt these standards simultaneously for them to be useful. If only 10% of images have C2PA claims, the standard's value drops dramatically because you still can't verify 90% of what you see.

Adoption requires solving several problems at once. First, technical integration: every image editor, video creation tool, and camera firmware needs to support generating and validating signatures. Adobe has committed to this. Microsoft has committed to this. But what about smaller tools? What about older cameras? What about phone apps that thousands of people use daily?

Second, there's the infrastructure problem. Someone needs to maintain the certificate authorities that validate signatures. Someone needs to host the public keys that prove claims are authentic. If that infrastructure goes down, the entire system becomes unreliable. This creates dependency on organizations to maintain critical infrastructure indefinitely.

Third is the user experience problem. Most people don't think in terms of signatures and claims. They think "Is this real or fake?" A typical user won't understand what a C2PA claim means or how to verify it. Platforms need to present these signals in intuitive ways, which is harder than it sounds. You can't just show a raw signature—you need clear UI that explains what the claim means and whether to trust it.

Fourth is the regulatory uncertainty. In 2026, various jurisdictions are implementing legislation that requires "verifiable provenance." But there's genuine disagreement about what that means. Does it mean C2PA signatures specifically? Does it mean any authentication method? Can fingerprinting alone satisfy the requirement? Until regulations clarify, organizations are uncertain about how much to invest.

Finally, there's economic incentive misalignment. For platforms that profit from engagement, more misinformation might actually be better for engagement metrics. Verifying content takes resources. Building authentication systems costs money. There's no immediate business case driving adoption in all cases. This is why legislation is necessary—to force adoption by making it a requirement rather than an optional competitive advantage.

Smaller platforms and organizations face disproportionate burdens. A major news organization can afford to build C2PA integration into their entire workflow. A freelance photographer or small publisher might not have the resources. This creates a two-tier system where only well-resourced actors can participate in authenticated media ecosystems.

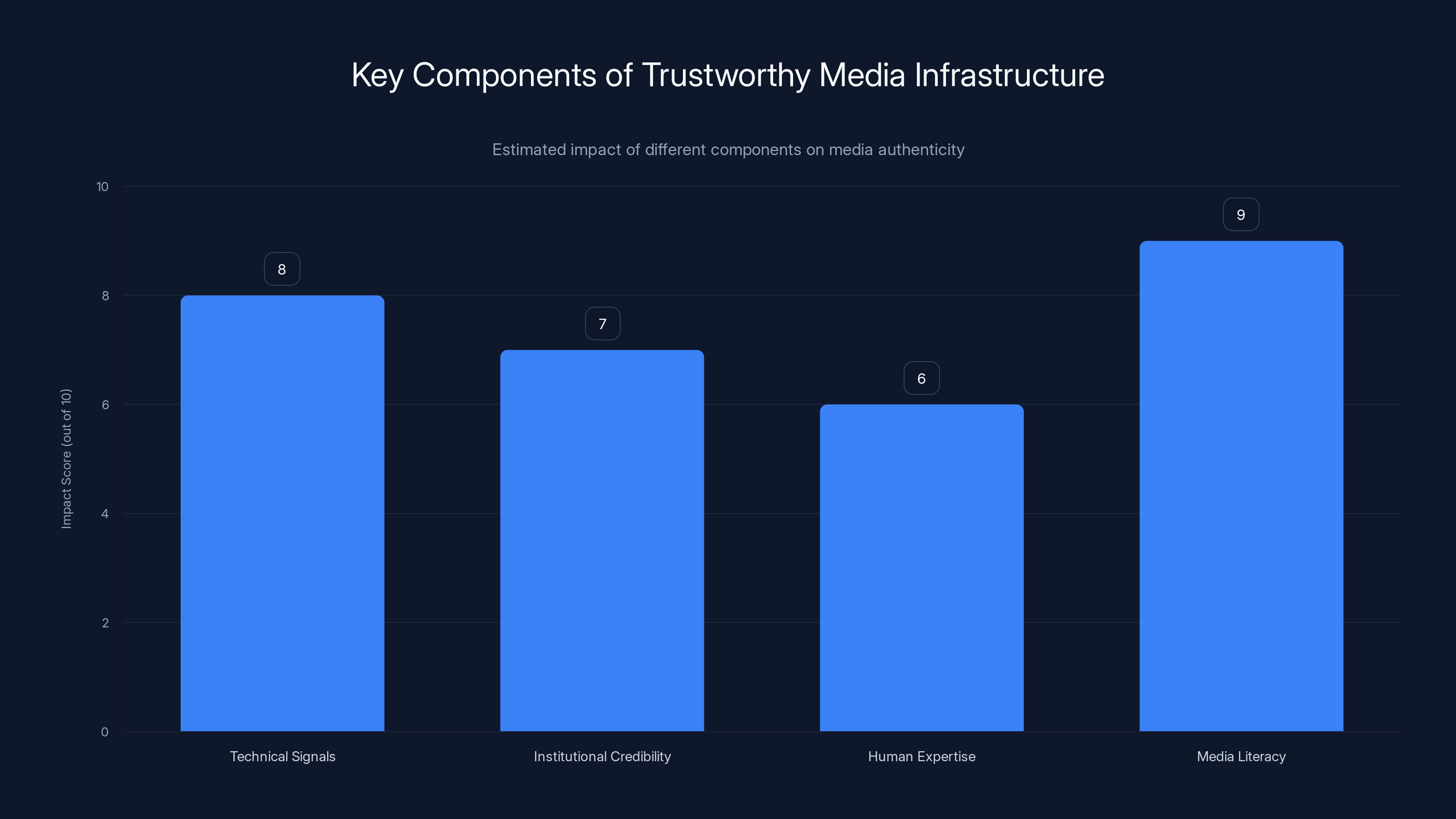

Estimated data shows media literacy and technical signals as having the highest impact on building trustworthy media infrastructure. These components help in distinguishing credible sources and detecting misinformation.

Technical Implementation: How Media Authenticity Works in Practice

Let's get concrete. Here's how media authentication actually works when you create, publish, and view content.

At Creation Time:

When you take a photo with a camera that supports C2PA, or use software like Adobe Photoshop that integrates C2PA, the application captures metadata about the capture or creation event. This includes:

- Timestamp (when the content was created)

- Device information (camera model, software used)

- Location data (if available)

- Creator identity (verified through digital certificates)

- Content hash (a cryptographic digest of the image data)

The software cryptographically signs this metadata using a private key associated with the creator. The signature mathematically proves that someone holding that private key made the claim. The claim and signature are then embedded into the image file, where C2PA-aware tools are designed to preserve them through editing.

If you edit the image in Photoshop, the software adds a new claim: "Modified on Feb 16, 2025, 3:14 PM, cropping and brightness adjustment." This new claim also gets signed and added to the image's history. Now the image has a chain of custody—original creation plus every verified edit.
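The chain-of-custody idea can be sketched in a few lines. To be clear, this is not the C2PA format or API. It's a simplified model in which each claim records a hash of the content and a link to the previous claim, and in which HMAC tags from the Python standard library stand in for the certificate-based public-key signatures a real system would use. The signer names, keys, and field names are hypothetical, and timestamps are left out to keep it short.

```python
import hashlib
import hmac
import json


def sign(key, payload):
    """HMAC-SHA256 tag over canonical JSON (a stand-in for a real signature)."""
    data = json.dumps(payload, sort_keys=True).encode()
    return hmac.new(key, data, hashlib.sha256).hexdigest()


def add_claim(history, key, signer, action, content):
    """Append a claim binding signer, action, content hash, and the previous claim."""
    payload = {
        "signer": signer,
        "action": action,
        "content_sha256": hashlib.sha256(content).hexdigest(),
        "previous": history[-1]["tag"] if history else None,
    }
    return history + [{"payload": payload, "tag": sign(key, payload)}]


def verify(history, keys, content):
    """Check every tag, every link in the chain, and the final content hash."""
    previous = None
    for claim in history:
        payload = claim["payload"]
        key = keys.get(payload["signer"])
        if key is None or payload["previous"] != previous:
            return False
        if not hmac.compare_digest(claim["tag"], sign(key, payload)):
            return False
        previous = claim["tag"]
    latest = hashlib.sha256(content).hexdigest()
    return bool(history) and history[-1]["payload"]["content_sha256"] == latest


# Hypothetical signers and demo keys.
keys = {"camera": b"camera-demo-key", "editor": b"editor-demo-key"}
raw = b"raw image bytes"
cropped = b"cropped image bytes"

history = add_claim([], keys["camera"], "camera", "captured", raw)
history = add_claim(history, keys["editor"], "editor", "cropped, brightness adjusted", cropped)

print(verify(history, keys, cropped))            # history matches the file
print(verify(history, keys, b"tampered bytes"))  # content changed after signing
```

Note what `verify` does and doesn't establish: it confirms that the recorded claims are intact and match the file, not that the claims themselves are true.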

At Distribution:

When you share the image on social media or via email, that claim history travels with the file. Ideally, the platform you're sharing to extracts and validates the C2PA claims. They check the signature against the creator's public certificate to verify the claim was actually signed by that creator. They display information to viewers: "Original image captured by @photographer on Feb 15. Cropped on Feb 16."

At Viewing:

When you download and view the image, your viewing software can show you the authentication information. If you're using a C2PA-aware application, you might see a panel showing:

- Original creator and verified identity

- Creation timestamp and device

- Modification history and who made each change

- Any warnings if signatures don't validate

If you download the image on a platform that doesn't support C2PA yet, the claims may still be embedded in the file but invisible to you. That's the intent: C2PA claims are meant to travel with media across any platform. In practice, platforms that strip metadata on upload will remove them.

For Video and Audio:

Video and audio authentication work similarly but face additional complexity. Video files are much larger, which makes watermarking more challenging. Audio deepfakes can be created from just a few seconds of someone's voice, making detection harder. The C2PA standard extends to video and audio, but the technical challenges are greater.

For audio specifically, biometric approaches are emerging—analyzing speech patterns, voice characteristics, and acoustic properties to verify someone actually said something. But these approaches are also improving alongside forgery technology, creating an ongoing cat-and-mouse dynamic.

Limitations of Current Systems: What We Still Can't Do

Making all this work sounds good in theory. In practice, every authentication approach has serious limitations.

The coverage problem: Most content online doesn't have authentication signals yet. Billions of images exist without watermarks, fingerprints, or C2PA claims. Even as adoption improves, we'll have a massive legacy of unverified content. You can't retroactively prove a 2020 photo is authentic just because better systems exist in 2025.

The trust bootstrap problem: C2PA depends on trusted signing authorities. But how do you know you're verifying against the right public key? If a website claims to be the official Adobe C2PA root certificate but it's actually a spoofed domain, you'd verify against the wrong key. This creates dependency on secure certificate distribution. Most users will never understand this layer of trust.

The false positive problem: Detection systems for synthetic media (looking for artifacts, anomalies, etc.) are improving, but they still produce false positives. An authentic image might trigger a "synthetic" flag due to unusual compression or photography techniques. Conversely, perfect forgeries might not trigger anything. The systems create confidence scores, not certainties.
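A quick worked example shows why those confidence scores need careful reading. The numbers below are hypothetical, chosen only to illustrate the base-rate effect: a detector that looks accurate on paper can still be wrong about most of what it flags when synthetic content is rare in the stream being scanned.

```python
def flag_precision(prevalence, true_positive_rate, false_positive_rate):
    """Probability that a flagged item is really synthetic (Bayes' rule)."""
    flagged_synthetic = prevalence * true_positive_rate
    flagged_authentic = (1 - prevalence) * false_positive_rate
    return flagged_synthetic / (flagged_synthetic + flagged_authentic)


# Hypothetical detector: catches 95% of synthetic images, wrongly flags 5% of
# authentic ones, in a stream where 1% of images are synthetic.
precision = flag_precision(
    prevalence=0.01,
    true_positive_rate=0.95,
    false_positive_rate=0.05,
)
print(f"{precision:.1%} of flagged images are actually synthetic")
```

Under these assumed rates, only about one flagged image in six is actually synthetic. The rest are authentic images caught by the 5% false positive rate.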

The consent problem: Facial recognition and speaker identification approaches require comparing media against database references. Doing this at scale raises privacy concerns. Biometric analysis of untrustworthy content could create surveillance infrastructure under the guise of authenticity verification.

The updating problem: Detection systems need to be continuously retrained as new generative models emerge. Old detectors become obsolete. This creates maintenance burden and leaves windows of vulnerability when new models are released before detectors are updated.

The coordination problem: Different platforms might validate signatures differently. Different organizations might accept different levels of trust. Without coordination, you get fragmented ecosystems where content is verified in some contexts but not others.

The economic problem: Running authentication infrastructure costs money. Computing fingerprints for billions of videos annually, storing claim histories, operating certificate services—these have real costs. Smaller platforms might cut corners or skip implementation entirely, creating security vulnerabilities.

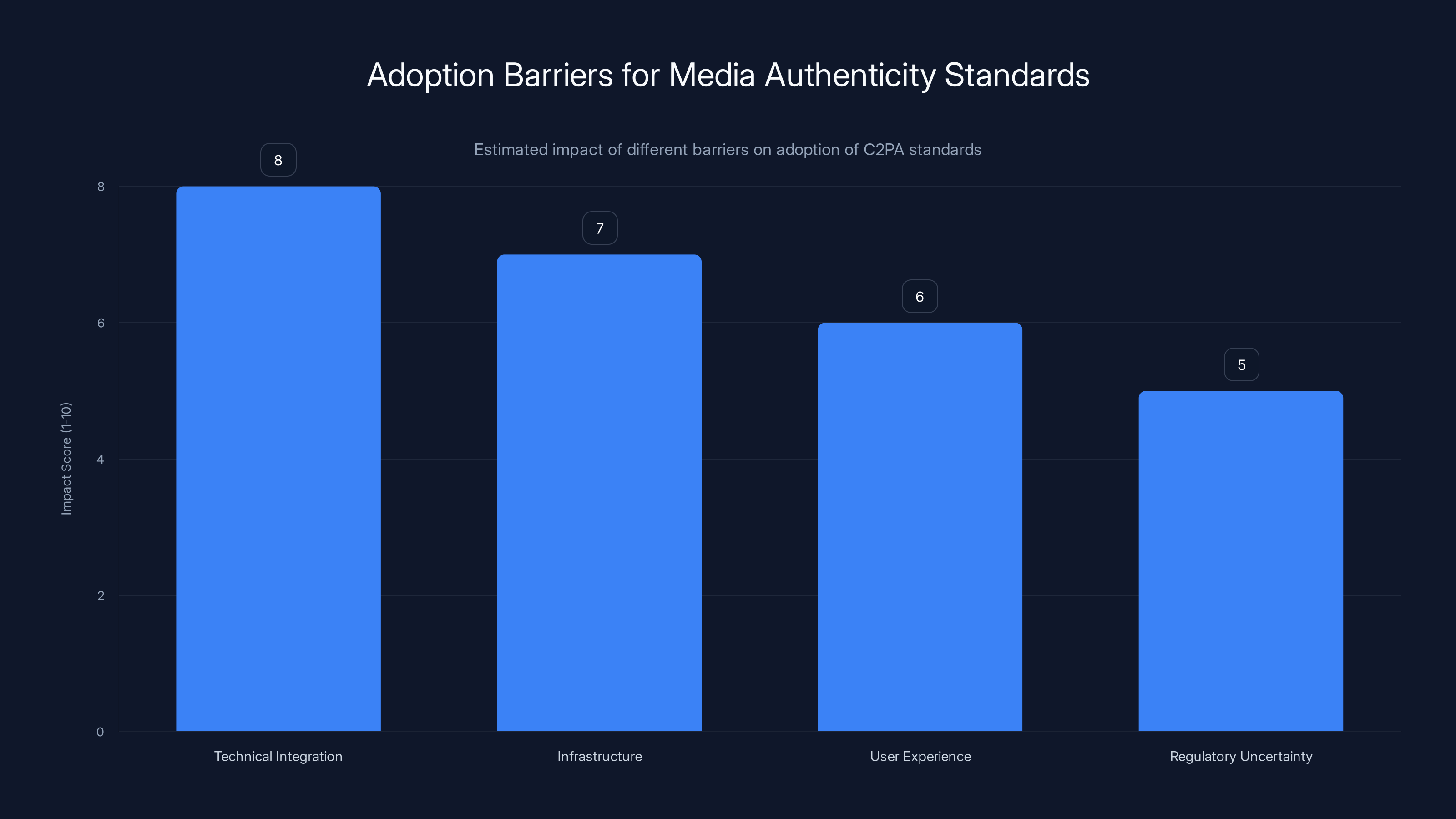

Technical integration poses the highest barrier to adoption of media authenticity standards, followed by infrastructure and user experience challenges. Estimated data.

Future Directions: Where Media Authenticity Is Heading

The industry is moving in several promising directions, though each faces its own challenges.

Standardization across industries: Currently, different sectors—news, social media, photography, video production—are developing separate authentication approaches. The future involves true interoperability where a photo verified by one ecosystem is recognizable to all others. This is technically possible but requires industry coordination.

Legislative frameworks: 2026 legislation in multiple countries will force adoption. The European Union, US states, and other jurisdictions are implementing requirements for provenance verification. These laws will accelerate infrastructure development, though they'll also create compliance complexity for small creators.

AI-assisted verification: Rather than asking humans to understand signatures and claims, AI systems will soon present authentication information intuitively. Instead of showing raw metadata, an interface might show: "High confidence this is real. Created by journalist Sarah Chen. No modifications detected." These summary judgments will be derived from technical authentication but presented in human terms.

Blockchain-based approaches: Some projects are exploring blockchain for authentication, treating the immutable ledger as a trust layer. This creates interesting possibilities for decentralized verification, but blockchain approaches also have scaling challenges and high energy costs. Most serious discussions are moving away from blockchain toward more efficient cryptographic approaches.

Biometric verification: For video and audio, biometric approaches—speaker verification, facial recognition tied to official records, behavior analysis—will complement traditional authentication. This raises privacy concerns but also enables verification of content without relying solely on creator claims.

Real-time detection: Rather than signing content at creation time, some researchers are exploring systems that detect and respond to synthetic content in real-time as it's distributed. Machine learning models will be deployed at platforms to automatically flag suspicious content, warning users before they share it.

Hybrid trust models: Future systems won't rely on any single authentication method. Instead, they'll combine C2PA claims, fingerprint databases, biometric verification, and behavioral signals to create confidence scores. You might see something like: "Verified by creator (C2PA) + Fingerprint matches news archives + Biometric confidence 94% = High confidence authentic."
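As a rough illustration of how such a combined score might be computed, here's a sketch that takes a weighted average of whatever signals are available. The signal names, weights, and thresholds are invented for this example. A real system would need to calibrate them, and might combine signals in a very different way.

```python
def combined_confidence(signals, weights):
    """Weighted average of per-signal scores in [0, 1]; missing signals are skipped."""
    used = {name: w for name, w in weights.items() if signals.get(name) is not None}
    if not used:
        return None
    total_weight = sum(used.values())
    return sum(signals[name] * w for name, w in used.items()) / total_weight


def label(score):
    """Turn a numeric score into the kind of summary a viewer might see."""
    if score is None:
        return "No signals available"
    if score >= 0.8:
        return "High confidence authentic"
    if score >= 0.5:
        return "Mixed signals"
    return "Low confidence"


# Hypothetical weights for three kinds of evidence.
weights = {"c2pa_valid": 0.5, "fingerprint_match": 0.3, "biometric": 0.2}

verified = {"c2pa_valid": 1.0, "fingerprint_match": 1.0, "biometric": 0.94}
unsigned = {"c2pa_valid": None, "fingerprint_match": 0.0, "biometric": 0.94}

print(label(combined_confidence(verified, weights)))
print(label(combined_confidence(unsigned, weights)))
```

One design question this surfaces: a missing signal is skipped here rather than counted against the content, so an unsigned file is judged only on what remains. Whether absence of provenance should lower the score is a policy choice, not a technical one.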

Creator reputation systems: Authentication will be tied to creator identity and history. An image with a C2PA claim from a verified journalist with a strong track record would show higher confidence than the same image with a claim from an unknown account. This integrates social trust with technical verification.

The Role of Media Literacy in Authentication

Here's something that doesn't get enough attention: technology alone can't solve misinformation. Authentication systems are powerful, but they only work if people know how to interpret the signals.

Let's say we achieve perfect C2PA adoption tomorrow. Every image has verified claims about its origin. Someone still needs to understand what those claims mean, evaluate source credibility, and recognize when they're being manipulated.

A bad actor could legitimately claim to be a journalist, sign their fabricated story with valid C2PA signatures, and technically comply with all authentication standards. The technology proves the claim came from that person. It doesn't prove the claim is truthful.

This is why media literacy is inseparable from authentication technology. The most important questions remain human ones: Who created this? What's their credibility? Are they incentivized to lie? What corroborating evidence exists? Can I verify the story through independent sources?

Authentication technology provides infrastructure for verifying answers to those questions. It doesn't replace the need to ask the questions themselves.

Education efforts are starting to emphasize this integrated approach. Rather than teaching "How to spot deepfakes," the focus is shifting to "How to verify sources and understand authentication signals." This is slower and less satisfying than a technical silver bullet, but it's the realistic approach.

Force multiplier effect: When people understand both the power and limitations of authentication, they become harder targets for misinformation. Someone armed with technical literacy and skepticism catches deepfakes that fool the general public. Platforms that prioritize verifiable sources see less viral misinformation. Communities that emphasize source credibility self-correct faster.

Industry Response: How Organizations Are Implementing Authenticity Solutions

Large organizations are moving on authenticity faster than small ones.

News organizations: Major news outlets are integrating C2PA into photography workflows. The New York Times, Reuters, and others are adding C2PA claims to published images. This creates a verifiable record: "This image was published by Reuters on this date with these modifications." They're also participating in industry coalitions to promote standards adoption.

Camera manufacturers: Canon, Nikon, and Sony are building C2PA support into professional cameras. When a photographer shoots an image, the camera automatically embeds verified metadata. This provides immediate authenticity signals for professional photojournalism.

Social platforms: Major platforms like Microsoft are experimenting with content labels and verification mechanisms. You might see a label on content indicating its source, whether it's been modified, and what claims have been made about it. But full implementation remains limited due to scale challenges.

AI image generators: Stable Diffusion, DALL-E, Midjourney, and others are adding watermarks or metadata to generated images. This is partially about branding (identifying their output) and partially about authenticity. They want people to know when they're viewing AI output.

Fact-checking organizations: Organizations like Snopes, FactCheck.org, and regional fact-checkers are integrating authentication signals into their verification workflows. When they verify a claim about an image, they can reference C2PA claims and fingerprint data as part of their analysis.

Research institutions: Universities and research labs are continuing to develop better detection methods, watermarking techniques, and verification systems. This includes both defensive research (how to make authentication more robust) and understanding adversarial approaches (how systems can be attacked).

The 2026 Legislation Timeline: What's Coming and When

Regulation is accelerating adoption timelines significantly.

Multiple jurisdictions are implementing requirements for content provenance verification in 2026 and beyond. The European Union's AI Act includes transparency obligations for AI-generated and manipulated content that are scheduled to apply from August 2026, and the Digital Services Act, which is already in force, requires very large platforms to assess and mitigate systemic risks such as manipulated media. US states are considering specific legislation around deepfakes and authentication. Various countries are implementing requirements for political content verification during elections.

These regulations create hard deadlines. Organizations that haven't started implementation will face compliance pressure very soon. This will likely trigger a wave of hastily implemented solutions that prioritize speed over security or user understanding.

The legislation also creates standardization pressure. Organizations need clear guidance on what "verifiable provenance" actually means. Early legislation might be vague, leaving implementers uncertain about compliance. As standards clarify, we'll see convergence, but the transition period will be messy.

One significant wildcard: international fragmentation. Different countries might implement different requirements. What's compliant in the EU might not satisfy Chinese regulations. This creates pressure for adoption of universal standards but also opportunities for regulatory arbitrage where organizations comply with the weakest applicable standard.

Real-World Scenarios: How Authenticity Works When It Matters

Let's think through concrete situations.

Scenario 1: Verifying a news photo during a crisis

A major news event occurs. Photos start circulating immediately. Traditional approaches: News organizations publish images with captions explaining source and context. Viewers have to trust the organization's reputation.

With authentication: The original photographer's camera embeds a C2PA claim with precise timestamp and location. News organizations add their own claims confirming publication and any edits they made. When the image circulates, viewers can verify the chain: original photographer > news organization > distribution. If someone crops the image or edits it heavily, the modified version has different claims that viewers can check.

This makes false photos easier to catch: a doctored version either carries no claims at all or carries a claim history that doesn't trace back to the original photographer.
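The chain in Scenario 1 can be sketched in a few lines. This is a toy model, not the C2PA format: real C2PA manifests use certificate-based signatures, whereas this sketch substitutes HMAC with shared demo keys so it runs on the standard library alone. The names `SIGNER_KEYS`, `make_claim`, and `verify_chain` are hypothetical and exist only for illustration.

```python
import hashlib
import hmac
import json

# Hypothetical demo secrets standing in for each signer's private key.
# Real provenance systems use certificates, not shared secrets.
SIGNER_KEYS = {
    "photographer": b"photographer-demo-key",
    "news_org": b"news-org-demo-key",
}


def _digest(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def make_claim(signer, action, content, parent=None):
    """Create a signed claim bound to the content and to the previous claim."""
    body = {
        "signer": signer,
        "action": action,
        "content_hash": _digest(content),
        "parent_sig": parent["signature"] if parent else None,
    }
    payload = json.dumps(body, sort_keys=True).encode()
    signature = hmac.new(SIGNER_KEYS[signer], payload, hashlib.sha256).hexdigest()
    return {**body, "signature": signature}


def verify_chain(claims, content):
    """Return True only if every signature, every link, and the final hash check out."""
    previous_sig = None
    for claim in claims:
        key = SIGNER_KEYS.get(claim["signer"])
        if key is None:
            return False  # unknown signer
        body = {k: v for k, v in claim.items() if k != "signature"}
        payload = json.dumps(body, sort_keys=True).encode()
        expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, claim["signature"]):
            return False  # claim was altered after signing
        if claim["parent_sig"] != previous_sig:
            return False  # chain link is broken or reordered
        previous_sig = claim["signature"]
    # The last claim must describe the file the viewer actually has.
    return bool(claims) and claims[-1]["content_hash"] == _digest(content)


original = b"raw sensor bytes"
published = b"raw sensor bytes, cropped"

capture = make_claim("photographer", "captured", original)
publish = make_claim("news_org", "cropped and published", published, parent=capture)
chain = [capture, publish]

print(verify_chain(chain, published))          # True
print(verify_chain(chain, b"doctored bytes"))  # False
```

Note what the check does and doesn't establish: it confirms that the listed signers made these claims about this exact file, in this order. It says nothing about whether the photographer's description of the scene is honest.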

Scenario 2: Detecting a deepfake political ad

In 2025, someone creates a synthetic video of a political candidate saying something controversial. Old approach: fact-checkers manually analyze the video, identify artifacts, publish a debunking article. By that time, the video has already circulated widely.

With authentication: The synthetic video carries no C2PA claim indicating authentic capture. Viewers looking at the video see a warning: "No verified authenticity claim." Additionally, the platform runs detection systems that flag the video as likely synthetic, comparing it against databases of known synthetic patterns. The combined signals quickly identify the content as suspicious.

This isn't foolproof. Someone could sign the synthetic video with their own credentials or with a stolen signing key, but doing so creates friction and increases the likelihood of detection.
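One way a platform might combine the signals from Scenario 2 is a small triage function. The sketch below is an assumption-heavy illustration: the `Signals` fields, the labels, and the 0.8 threshold are all hypothetical, and a real platform would tune these against measured false-positive rates.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Signals:
    has_valid_claim: bool             # a provenance claim is present and its signature verifies
    signer_trusted: bool              # the signer is on a list the platform trusts
    synthetic_score: Optional[float]  # hypothetical detector output in [0, 1]; None if not run


def triage(signals: Signals, flag_threshold: float = 0.8) -> str:
    """Map combined signals to a label. Threshold and labels are illustrative only."""
    score = signals.synthetic_score
    if score is not None and score >= flag_threshold:
        # A strong detector hit overrides a valid claim, because a claim
        # proves who signed, not that the content is genuine.
        return "likely synthetic: send to human review"
    if signals.has_valid_claim and signals.signer_trusted:
        return "verified provenance"
    if signals.has_valid_claim:
        return "signed by unrecognized source"
    return "no verified authenticity claim"


# The deepfake ad from the scenario: unsigned, and the detector scores it high.
print(triage(Signals(has_valid_claim=False, signer_trusted=False, synthetic_score=0.93)))
# An unsigned clip the detector has not flagged still gets a warning label.
print(triage(Signals(has_valid_claim=False, signer_trusted=False, synthetic_score=0.2)))
```

The ordering of the checks is the design choice worth arguing about. Here the detector can overrule a signature, which guards against stolen keys but also means detector false positives will occasionally flag properly signed footage.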

Scenario 3: Verifying historical footage in legal proceedings

A court case involves surveillance footage as evidence. Traditional approach: Forensic experts analyze the video to confirm it hasn't been tampered with, verify the camera metadata, testify about their methodology. This takes time and expensive expertise.

With authentication: The camera system has built-in C2PA support. The footage has cryptographic claims about camera ID, capture time, and any processing applied. Legal teams can verify the chain automatically, reducing the need for manual forensic analysis. This doesn't eliminate the need for expert testimony, but it provides a stronger technical foundation.

These scenarios show both the promise and the limits. Authentication helps, but only in combination with other verification methods and human judgment. No scenario involves technology alone solving the problem.

Open Questions and Unsolved Problems

Several fundamental problems remain open.

The liveness problem: How do you verify that something is real-time capture rather than pre-recorded? Video calls from someone claiming to be present in a location could actually be pre-recorded footage or deepfake video. A signature can show which device produced a file and when it was signed, but not that the scene in front of the lens was actually happening live. A camera pointed at a screen still produces a validly signed capture.

The ambiguity problem: Some modifications are innocent (cropping, color correction, compression) while others are deceptive (object removal, content insertion). How should authentication systems distinguish? Should all modifications be flagged equally or weighted differently?

The provenance gap: Older content predates authentication systems. Billions of images and videos exist without any way to verify their origin. How do you integrate legacy content into an authenticated media ecosystem?

The scale problem: Implementing authentication systems at global scale involves enormous infrastructure costs and complexity. Who pays for this? Who operates the certificate authorities and verification systems? How do you ensure they remain available and trustworthy indefinitely?

The privacy problem: Biometric authentication (facial recognition, speaker verification) enables powerful verification but also creates surveillance capabilities. How do you implement authentication without creating problematic privacy infrastructure?

The future models problem: As generative models improve rapidly, detection systems struggle to keep pace. How can you design authentication systems that remain relevant as AI capabilities change?

Best Practices for Creators and Organizations

If you're responsible for creating or publishing content, here's what you should be doing now.

For photographers and visual creators:

- Start using C2PA-enabled cameras or tools if your platform supports it

- When publishing images, include context about source and any modifications

- Maintain metadata and version control for your original files

- Consider adding watermarks to sensitive content to prevent unauthorized use

- Build reputation through consistent, verified output

For news organizations and publishers:

- Integrate C2PA into your publishing workflow

- Develop clear policies about what modifications (cropping, color correction, etc.) require explanation to readers

- Train staff on authentication signals and how to explain them to audiences

- Implement fingerprinting systems to track image reuse and detect plagiarism

- Develop reader education materials explaining how to interpret authenticity information

For platform companies:

- Implement content verification infrastructure even before legislation requires it

- Develop clear UI for presenting authentication information to users

- Build partnerships with creators and publishers to enable widespread C2PA adoption

- Deploy detection systems for synthetic content as supplementary to creator claims

- Invest in media literacy programs helping users understand authenticity signals

For content consumers:

- Check for C2PA claims and authentication signals on important content

- Verify source credibility independent of technical signals

- Be skeptical of content without clear attribution or authenticity claims

- Use reverse image search to find original sources

- Support news organizations and creators who implement proper authentication

Key Takeaways and What's Next

Media authenticity has shifted from a research curiosity to an urgent practical problem. Synthetic media is improving faster than detection systems. Legislation is mandating solutions. The industry is moving toward C2PA standards and related approaches.

But let's be clear about what's possible: There's no magic wand that will solve misinformation. Authentication technology is necessary infrastructure, but it's not sufficient. You still need media literacy, source evaluation, institutional credibility, and human judgment.

The next few years will be critical. As 2026 legislation takes effect and platforms implement authentication systems, we'll see whether these approaches actually work in practice or whether they become security theater—the appearance of verification without meaningful protection.

The most likely outcome is hybrid systems combining technical authentication, AI-assisted detection, biometric verification, and human expertise. No single approach dominates because each has irreducible limitations. The practical path forward involves making authentication useful enough and accessible enough that most people can verify important content.

For those building and implementing these systems, the challenge is immense. Technical challenges are solvable. Adoption challenges are harder. But the stakes justify the effort. In a world where perfect forgeries are technically possible, provenance becomes critical infrastructure for distinguishing fact from fiction.

FAQ

What exactly is media authenticity and why does it matter?

Media authenticity refers to the ability to verify whether digital content (images, video, audio) was genuinely captured or created versus being synthesized or heavily modified. It matters because AI-generated and deepfake content is becoming indistinguishable from authentic media, and this creates risks for elections, public health, financial markets, and personal safety. When you can't reliably distinguish real from fake, trust in all media erodes.

How does the C2PA standard actually work and what does a C2PA claim prove?

C2PA embeds cryptographic signatures and metadata directly into media files, creating a verifiable chain of custody. When you add a C2PA claim to content, you're saying: "I created/modified this at this time using this tool." The cryptographic signature proves the claim came from someone with your digital credentials. However, the signature doesn't prove the claim is true. It only proves who made the claim. Someone could falsely claim authentic content is synthetic or vice versa, and the signature would still be valid.

What are watermarks and fingerprints, and how do they help with authentication?

Watermarks are invisible or visible signals embedded into content that identify it as synthetic or track its origin. Fingerprints are compact digital summaries of content that allow matching of the same image or video across different versions. Watermarks help identify synthetic media and track usage, while fingerprints help detect copies and verify distribution history. Both can be removed or spoofed by sophisticated attacks, making them useful but not bulletproof.
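To make the fingerprinting idea concrete, here is a minimal sketch of an average hash, one of the simplest perceptual fingerprints. It operates on a tiny grayscale grid written out by hand; production systems typically downscale a real image first and use more robust algorithms, so treat this as an illustration of the principle rather than a deployable matcher.

```python
def average_hash(pixels):
    """Fingerprint a grayscale image given as a list of rows of 0-255 ints."""
    flat = [value for row in pixels for value in row]
    mean = sum(flat) / len(flat)
    # One bit per pixel: 1 if brighter than the image's mean, else 0.
    return [1 if value > mean else 0 for value in flat]


def hamming_distance(hash_a, hash_b):
    """Count the bit positions where two equal-length fingerprints differ."""
    return sum(a != b for a, b in zip(hash_a, hash_b))


original = [
    [10, 20, 200, 210],
    [15, 25, 205, 215],
    [220, 230, 30, 40],
    [225, 235, 35, 45],
]
# Simulate a mild re-encode: every pixel shifts slightly brighter.
recompressed = [[min(255, v + 6) for v in row] for row in original]
# A different image: bright and dark regions are swapped.
different = [[255 - v for v in row] for row in original]

print(hamming_distance(average_hash(original), average_hash(recompressed)))  # 0
print(hamming_distance(average_hash(original), average_hash(different)))     # 16
```

A small distance suggests two files are versions of the same image, and a large one suggests they are unrelated. The fingerprint survives the brightness shift because it encodes relative structure, not exact pixel values. That same property is what lets an attacker craft a visually different image with a matching hash.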

Can current authentication systems be hacked or defeated?

Yes. Adversaries can remove watermarks with edits too small for viewers to notice, strip metadata, forge C2PA claims if they compromise signing keys, and create deepfakes that detection systems miss. It's an ongoing arms race in which attacks often improve faster than defenses. This is why experts recommend using multiple signals together (C2PA claims, fingerprint matching, detection systems, and source verification) rather than trusting any single approach.
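Watermark removal is easiest to see with the most fragile scheme there is: hiding bits in the least significant bit (LSB) of each pixel. The sketch below is a deliberately naive example chosen for clarity. Production watermarks are designed to be far more robust than this, though the general pattern of small edits degrading an embedded signal still applies to them.

```python
def embed_lsb(pixels, bits):
    """Write one watermark bit into the least significant bit of each pixel."""
    return [(p & ~1) | b for p, b in zip(pixels, bits)]


def extract_lsb(pixels, length):
    """Read the watermark back from the least significant bits."""
    return [p & 1 for p in pixels[:length]]


def strip_lsb(pixels):
    """Attack: clear every least significant bit, changing each pixel by at most 1."""
    return [p & ~1 for p in pixels]


image = [52, 119, 200, 33, 78, 145, 250, 9]  # a row of grayscale pixel values
mark = [1, 0, 1, 1, 0, 0, 1, 0]              # the watermark bits

marked = embed_lsb(image, mark)
attacked = strip_lsb(marked)

print(extract_lsb(marked, len(mark)) == mark)    # True: watermark reads back intact
print(extract_lsb(attacked, len(mark)) == mark)  # False: watermark is gone
print(max(abs(a - b) for a, b in zip(marked, attacked)))  # 1: largest per-pixel change
```

A change of one brightness level out of 256 is invisible to a viewer, yet it erases the mark completely. Ordinary lossy recompression has a similar effect on LSB schemes without any attacker involved.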

When will all images and videos have authentication signals?

Not soon, and maybe never universally. Legacy content predates authentication systems. Adoption requires tool developers, platforms, creators, and consumers to all implement support simultaneously. We'll likely see adoption concentrated among professional and institutional creators first (news organizations, camera manufacturers, government) then gradually spreading. Consumer devices will lag. Legislation in 2026 will accelerate adoption in regulated sectors but not eliminate gaps.

How do I know if I can trust a C2PA claim I see on content?

Check three things: (1) Is the claim's cryptographic signature valid? This proves it came from someone with the claimed credentials. (2) Who made the claim? A verified journalist or news organization is more trustworthy than an anonymous account. (3) Does the content's provenance make sense? If the claim says it was captured in New York at 2 AM but it's clearly a professional studio shot, something is wrong. C2PA proves who made a claim; it doesn't prove the claim is true.

What happens to content that predates authentication systems?

Legacy content without authentication signals will look increasingly suspicious simply because it lacks verification. This creates perverse incentives to question authentic older content. Some approaches involve retroactive authentication, where news organizations go back and add C2PA claims to archived content they published, but this creates trust issues (how do we know the retroactive claim is accurate?). The transition period will involve ambiguity about older content.

Are there privacy concerns with media authentication systems?

Yes, significant ones. Biometric approaches (facial recognition, speaker identification) enable powerful verification but also create surveillance infrastructure. Fingerprinting at scale requires massive databases of content. Location metadata reveals where content was captured. The challenge is building authentication systems that enable verification without creating privacy nightmares. Current proposals involve transparency—letting people know what signals are being used—but this is an unsolved tension.

What's the difference between detection systems and authentication systems?

Detection systems analyze content to determine if it's likely synthetic by looking for artifacts, anomalies, and inconsistencies. They work without any cooperation from the content's creator, but they're prone to false positives and to obsolescence as AI improves. Authentication systems track origin and history, recording who created content and what happened to it. Detection says "This looks fake." Authentication says "I claim this was created this way." They're complementary; neither alone is sufficient.

What should I do if I encounter suspicious content online?

First, check for C2PA claims or watermarks if available. Second, reverse image search to find earlier versions and track distribution history. Third, verify the source's credibility and reputation independently. Fourth, look for corroborating evidence from trusted organizations. Fifth, be skeptical if the content matches your existing beliefs too perfectly—confirmation bias makes misinformation more convincing. Finally, if it matters, wait for verification from multiple independent sources before sharing.

How will authentication change the news industry?

News organizations that implement C2PA and verification systems will have a competitive advantage in credibility. Readers will learn to trust verified sources more. This will pressure organizations that don't implement authentication to do so, creating a baseline of verification across quality journalism. However, it will also make misinformation more obvious through its absence of claims, potentially making people more skeptical of all content. Some news organizations will need to explain legitimate edits more transparently, potentially requiring new media literacy from audiences.

Conclusion: Building Trustworthy Media Infrastructure

We're at an inflection point. Synthetic media technology is advancing faster than detection and authentication capabilities. Legislation is forcing implementation timelines. Public trust in media is eroding. These converging pressures create both urgency and opportunity.

Media authenticity isn't a solved problem. The approaches discussed here—C2PA standards, watermarking, fingerprinting, detection systems—each have real limitations. But they also represent genuine progress toward building infrastructure for verifiable content.

The realistic path forward isn't perfect authentication. It's layered verification combining technical signals, institutional credibility, human expertise, and media literacy. It's legislative requirements forcing adoption. It's creator incentives aligning toward providing trustworthy provenance. It's consumer awareness of how to interpret authenticity information.

This won't eliminate misinformation. But it can make sophisticated forgeries harder to spread undetected. It can create friction that gives fact-checkers time to respond. It can help people distinguish between sources they should trust and those requiring skepticism. That's not perfect, but it's meaningful.

The organizations implementing authentication now (news outlets, camera manufacturers, platforms) are building the infrastructure we'll all depend on. The creators adding C2PA claims are establishing a credibility advantage. The researchers improving detection and watermarking are pushing the boundaries of what's possible. The policymakers requiring verification are creating accountability.

For the rest of us, the challenge is learning to use these signals effectively. Understanding what C2PA claims mean. Recognizing the difference between technical verification and truthfulness. Combining multiple signals when evaluating important content. Maintaining skepticism even toward verified sources. Building media literacy that keeps pace with technology.

The stakes are genuinely high. Content controls narratives. Narratives shape reality. If we lose the ability to distinguish authentic from synthetic media, we lose shared reality itself. That sounds hyperbolic, but consider: elections depend on voters accessing reliable information. Public health depends on accurate medical information. Markets depend on truthful financial reporting. Justice systems depend on reliable evidence.

Authenticity infrastructure isn't perfect, but it's necessary. The work being done now—building standards, implementing systems, training people to use them—is foundational for the next decade of digital communication.

The future isn't guaranteed to work. Legislation could be misguided. Implementation could fail. Adversaries could outpace defenses. But the alternative—giving up and accepting that we can't verify anything—isn't acceptable. So the practical approach is building the best possible systems, being honest about their limitations, and creating resilience through redundancy and human judgment.

That's the realistic, imperfect, necessary path forward.
