The True Origins of AI: From Turing to Dartmouth's $13,500 Moment

When most people think about artificial intelligence, they picture ChatGPT, Claude, or maybe a sci-fi movie where robots become sentient. But the actual origin story is far stranger and more human than that. It's a tale of mathematical brilliance, tragic loss, and one of the most consequential $13,500 budget requests ever made.

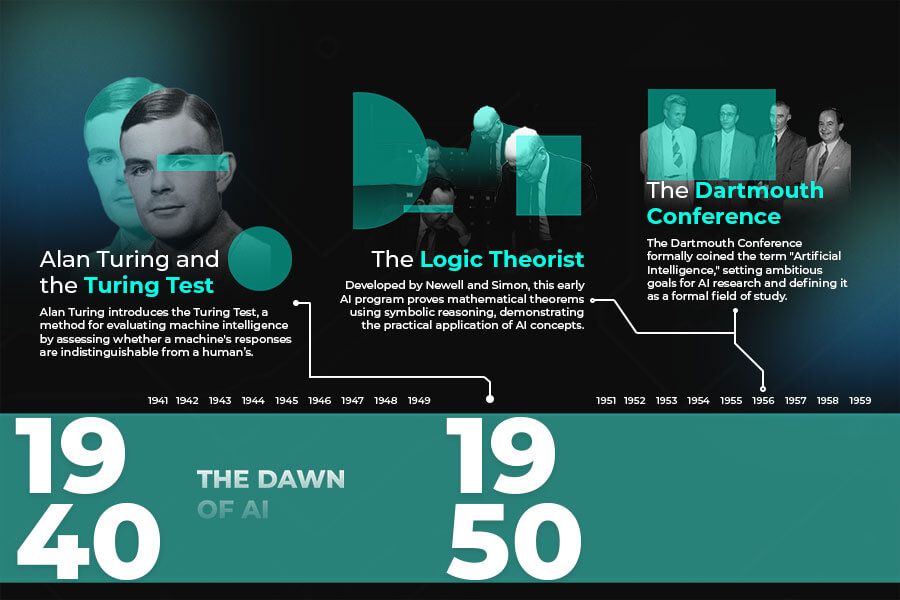

Alan Turing posed the fundamental question in 1950. John McCarthy and Marvin Minsky organized the workshop that named it in 1956. Yet Turing never lived to see the field he essentially invented get its official title. He died in 1954, two years before Dartmouth, his body discovered after ingesting cyanide in circumstances ruled a suicide. The irony cuts deep: the man who shaped how we think about machine intelligence never witnessed the moment the world finally gave his ideas a formal name. According to MSN, Turing's contributions were not fully recognized during his lifetime.

What makes this history matter now? Because understanding where AI came from explains why the field thinks the way it does today. Turing's approach, McCarthy's practical skepticism, Minsky's ambitious vision—these foundational ideas still guide AI research, hype cycles, and legitimate concerns about what machines might become. The $13,500 Dartmouth proposal wasn't just a budget request. It was a philosophical and technical manifesto that claimed every aspect of intelligence could be precisely described and replicated in machines. That's still the central promise, and the central tension, of modern AI.

Let's dig into the actual story, not the sanitized textbook version.

TL;DR

- The $13,500 moment: The 1955 Dartmouth Summer Research Project proposal formally introduced the term "artificial intelligence" for the first time in academic literature.

- Turing's invisible shadow: Alan Turing's 1950 paper on machine thinking directly inspired the Dartmouth approach, but he died in 1954, two years before the field was officially named.

- The Turing Test reframed everything: By shifting focus from abstract philosophy to observable behavior, Turing turned "can machines think?" into a testable problem that researchers could actually solve.

- McCarthy's realism: John McCarthy, Dartmouth's lead organizer, consistently warned against AI hype, yet ironically his field became synonymous with overpromise and underdelivery.

- The founding vision persists: The Dartmouth proposal's core claim that intelligence could be precisely described and mechanically replicated remains the foundational bet in all modern AI systems.

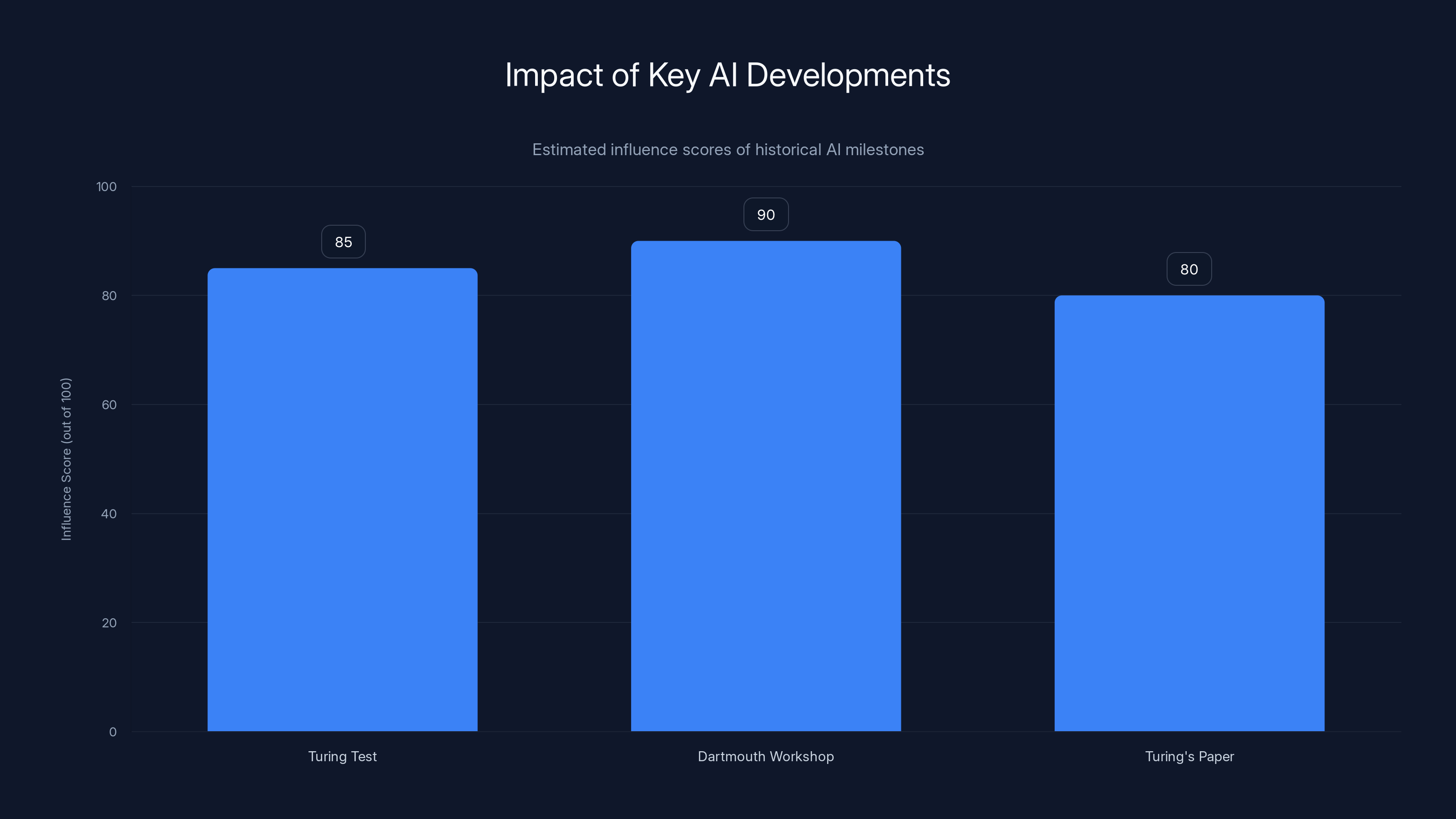

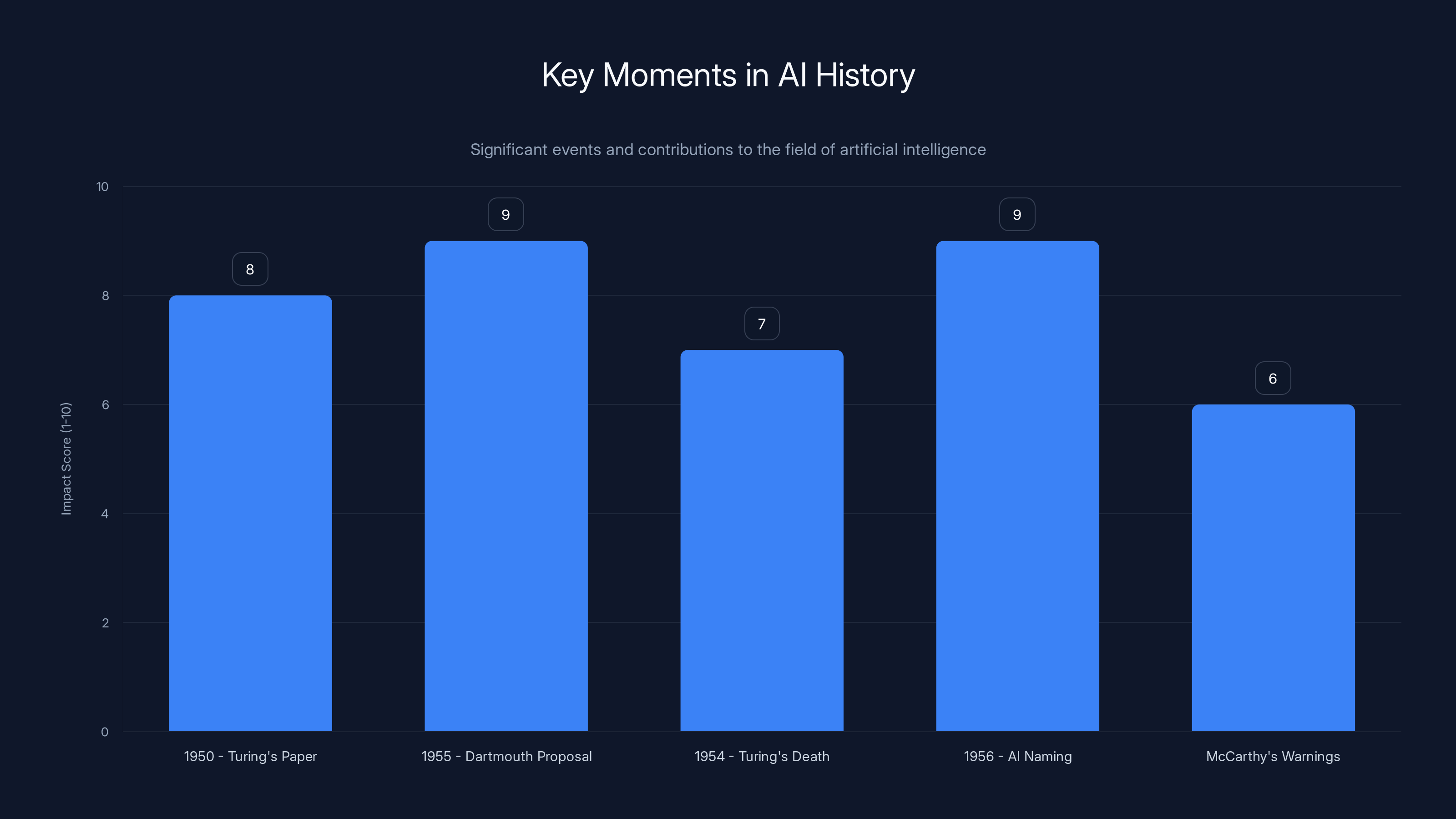

The Dartmouth Workshop had the highest estimated influence on AI development, closely followed by the Turing Test and Turing's paper. Estimated data based on historical significance.

Alan Turing: The Philosopher-Mathematician Who Couldn't Stay to Witness His Own Creation

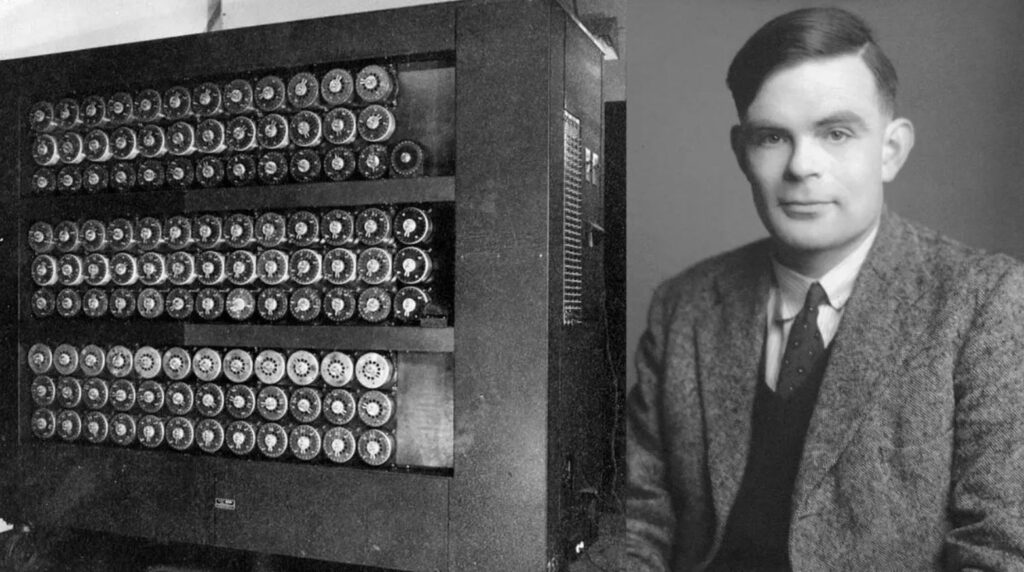

Alan Turing was a contradiction. He was a pure mathematician fascinated by computation itself, yet he thought about machines with almost poetic intensity. He was socially awkward, suffered from what we'd now recognize as depression and anxiety, yet he pondered the deepest questions about mind and consciousness. And he was gay in 1950s Britain, where that fact would eventually lead to his prosecution and to his death before his forty-second birthday.

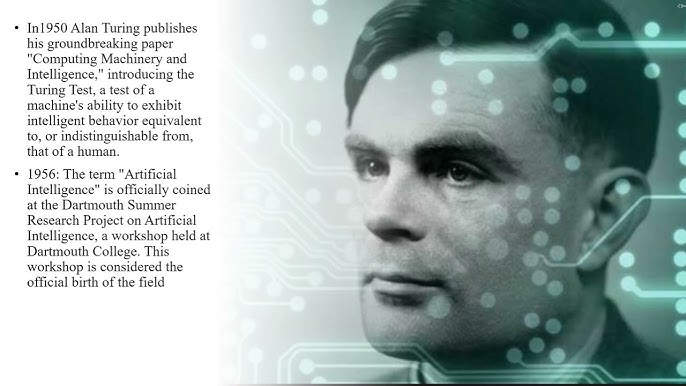

In 1950, Turing published a paper titled "Computing Machinery and Intelligence" in the academic journal Mind. The paper begins with a deceptively simple question: "Can machines think?" Most philosophers would have answered no based on abstract reasoning. Turing did something entirely different. He didn't argue about whether machines possessed consciousness, souls, or some magical quality of mind. He sidestepped the philosophical trap altogether.

The question he put in its place was more useful: Could a machine behave intelligently enough that you couldn't tell it apart from a human in conversation? Turing called this the "imitation game"; it later became known as the Turing Test. Imagine a person sitting in a room, exchanging typed messages with two unseen conversation partners. One is human, one is a machine. If the person can't reliably figure out which is which after extended conversation, the machine passes the test. It doesn't matter whether the machine "really" thinks or possesses consciousness. If it acts intelligent convincingly, we should treat it as intelligent.
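The protocol described above is concrete enough to sketch in code. What follows is a minimal, hypothetical harness, not anything Turing specified: the names `run_imitation_game` and `identification_rate`, the A/B labels, and the idea of scoring a judge over many rounds are all assumptions made for illustration. A judge sees two anonymous transcripts and names the one it believes came from the machine.

```python
import random
from typing import Callable, Dict, List, Tuple

Responder = Callable[[str], str]                 # answers a question
Transcript = Dict[str, List[Tuple[str, str]]]    # label -> (question, answer) pairs
Judge = Callable[[Transcript], str]              # returns the label it thinks is the machine


def run_imitation_game(judge: Judge, human: Responder, machine: Responder,
                       questions: List[str], rng: random.Random) -> bool:
    """Play one round; return True if the judge correctly picks out the machine."""
    players = [("human", human), ("machine", machine)]
    rng.shuffle(players)  # hide identities behind randomly assigned labels
    labelled = {"A": players[0], "B": players[1]}
    transcript = {
        label: [(q, respond(q)) for q in questions]
        for label, (_, respond) in labelled.items()
    }
    guess = judge(transcript)
    return labelled[guess][0] == "machine"


def identification_rate(judge: Judge, human: Responder, machine: Responder,
                        questions: List[str], rounds: int = 1000,
                        seed: int = 0) -> float:
    """Fraction of rounds in which the judge identifies the machine."""
    rng = random.Random(seed)
    hits = sum(run_imitation_game(judge, human, machine, questions, rng)
               for _ in range(rounds))
    return hits / rounds
```

Under this framing, a machine "passes" when the judge's identification rate stays close to 0.5, which is what guessing would achieve. A machine with an obvious tell pushes the rate toward 1.0.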

This was radical. Turing essentially moved the entire discussion from philosophy into engineering. He turned "can machines think?" into "can we build machines that behave like they think?" That shift of focus proved to be the conceptual key that unlocked decades of AI research. As noted in Britannica, Turing's approach laid the groundwork for practical AI development.

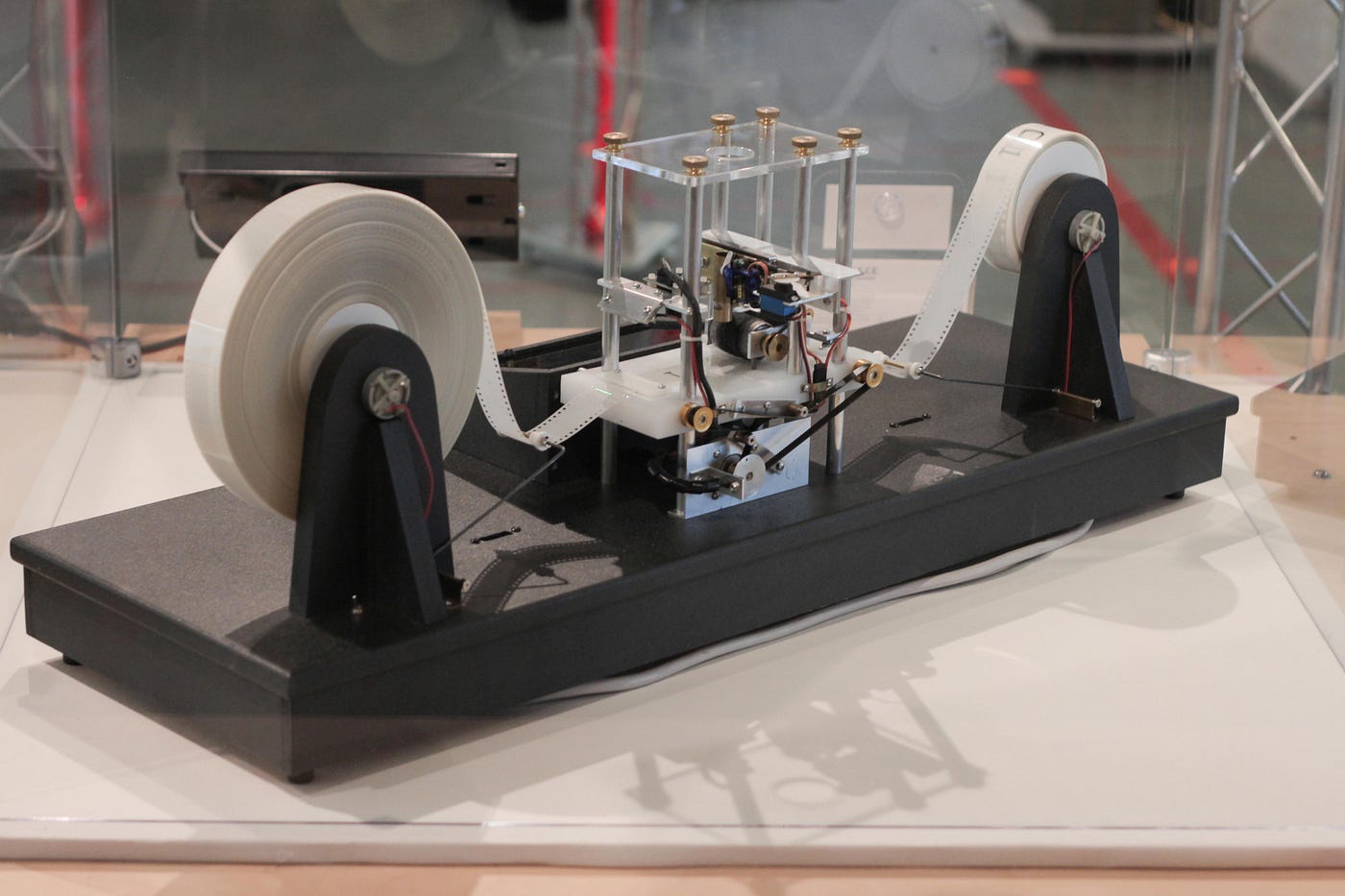

What made this even more remarkable is that Turing made this argument in 1950, when computers were enormous, slow, error-prone machines used primarily for mathematical calculations. The ENIAC weighed 30 tons and occupied 1,800 square feet. The Manchester Mark 1, on which Turing himself worked, was another massive, unreliable device. Yet Turing somehow intuited that sufficiently complex symbol processing could give rise to something we'd recognize as intelligent behavior.

Turing's paper also included a response to what he called "arguments from various disabilities." If someone claimed machines can never learn, never improve, never show creativity, Turing would counter with practical examples. A machine might learn from experience, even if in a different way than humans do. A machine might solve novel problems it wasn't explicitly programmed to solve. The question is whether these capabilities exist, not whether they work identically to human cognition.

Yet Turing never experienced the full impact of his ideas. His personal life unraveled following a burglary at his home in 1952. During the investigation, Turing acknowledged to police that he was in a relationship with a man, and homosexual acts were illegal in Britain at the time. After his conviction he was offered a choice: prison or "treatment" with estrogen injections, a barbaric practice meant to chemically alter his sexuality. He chose the injections.

On June 7, 1954, at age 41, Turing died from cyanide poisoning; his body was discovered the following day. An apple was found near his body, though it was never tested for cyanide. The official ruling was suicide, though some have questioned whether it was accidental, related to a cyanide experiment he was conducting, or something else entirely. The uncertainty itself feels cruel. What's certain is that one of the twentieth century's most original thinkers died in disgrace, persecuted by the government of the country he had served during World War II, just as his ideas were beginning to inspire a new field of research.

Two years later, at Dartmouth College in New Hampshire, a group of researchers would gather to formally organize and name the field that Turing had intellectually founded but would never see officially recognized.

The Question That Started It All: Can Machines Think Like Humans?

Turing's 1950 question wasn't asked in a vacuum. It emerged from deeper mathematical and philosophical currents he'd been exploring since the mid-1930s. Before there were electronic computers, Turing had theorized about what he called "a-machines" (automatic machines), now known as Turing machines. These were abstract computational devices that could, in theory, carry out any calculation a human could perform by following a fixed procedure, given enough time and memory.

The revolutionary insight was this: if a human can solve a mathematical problem through logical steps, and if a machine can execute those same logical steps mechanically, then the machine can solve the same problem. This might seem obvious now, but in the 1930s it was genuinely novel. It grounded computation in pure logic and suggested that thinking itself might be mechanically reproducible.
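To make "executing logical steps mechanically" concrete, here is a minimal sketch of a Turing machine simulator. It is an illustration under stated assumptions, not Turing's own notation: the function name `run_turing_machine`, the state names, the `_` blank symbol, and the rule table `INCREMENT` (which adds one to a binary number) are all invented for this example.

```python
from typing import Dict, Tuple

# (state, symbol read) -> (symbol to write, head movement, next state)
Rules = Dict[Tuple[str, str], Tuple[str, int, str]]

# Hypothetical rule table: add 1 to a binary number written on the tape.
INCREMENT: Rules = {
    ("right", "0"): ("0", +1, "right"),   # walk right to the end of the number
    ("right", "1"): ("1", +1, "right"),
    ("right", "_"): ("_", -1, "carry"),   # past the last digit: turn around
    ("carry", "1"): ("0", -1, "carry"),   # 1 + 1 = 0, carry continues left
    ("carry", "0"): ("1", 0, "halt"),     # 0 + 1 = 1, done
    ("carry", "_"): ("1", 0, "halt"),     # ran off the left edge: new leading 1
}


def run_turing_machine(rules: Rules, tape_input: str, start: str = "right",
                       halt: str = "halt", blank: str = "_",
                       max_steps: int = 10_000) -> str:
    """Run the rule table on the input and return the final tape contents."""
    tape = dict(enumerate(tape_input))    # sparse tape: position -> symbol
    head, state, steps = 0, start, 0
    while state != halt:
        if steps >= max_steps:
            raise RuntimeError("step limit reached before halting")
        symbol = tape.get(head, blank)
        write, move, state = rules[(state, symbol)]
        tape[head] = write
        head += move
        steps += 1
    cells = "".join(tape[i] for i in sorted(tape))
    return cells.strip(blank)
```

Calling `run_turing_machine(INCREMENT, "1011")` walks the head to the right end of the input and then propagates the carry leftward, one table lookup per step. Nothing in the loop "knows" arithmetic; the behavior comes entirely from the rule table.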

When Turing asked "can machines think?" in 1950, he was building on this foundation. He was asking whether the mechanical symbol manipulation that computers could perform might give rise to behavior that we'd recognize as intelligent. Not consciousness, not understanding, not wisdom—just intelligent behavior.

This reframing was essential. Philosophers had been debating whether machines could "really" think for decades, usually concluding no because machines lacked some essential quality. Turing cut through that. He said: let's not worry about essences or inner experience. Let's look at what the machine does. If it passes a test of intelligent behavior, we should recognize it as intelligent. If we insist on some additional hidden quality, we're moving the goalposts, not solving the problem.

Turing also worked through a list of objections to machine intelligence. Some people claimed machines could never be creative. Turing countered that creativity often emerges from rule-following combined with randomness. Some claimed machines lack consciousness or soul. Turing responded that we can't even fully define what consciousness is, so why should we require machines to have it? Some argued machines are "merely" following their programming. Turing noted that humans are also shaped by their heredity and education, yet we call them intelligent.

Each objection in Turing's paper anticipated arguments that would echo through the next 70 years of AI debates. When people today claim AI can't be creative, can't understand meaning, or is just pattern matching, they're echoing arguments Turing already addressed. The fact that we're still making the same arguments suggests Turing was asking the right questions.

But there's a deeper irony. Turing's paper didn't prove machines could think. It didn't build any AI. It just asked the question clearly and suggested a way to test for answers. Yet that act of framing proved more influential than countless technical papers that followed. Sometimes the most important contribution to a field isn't solving a problem but asking it in a way that makes solutions possible.

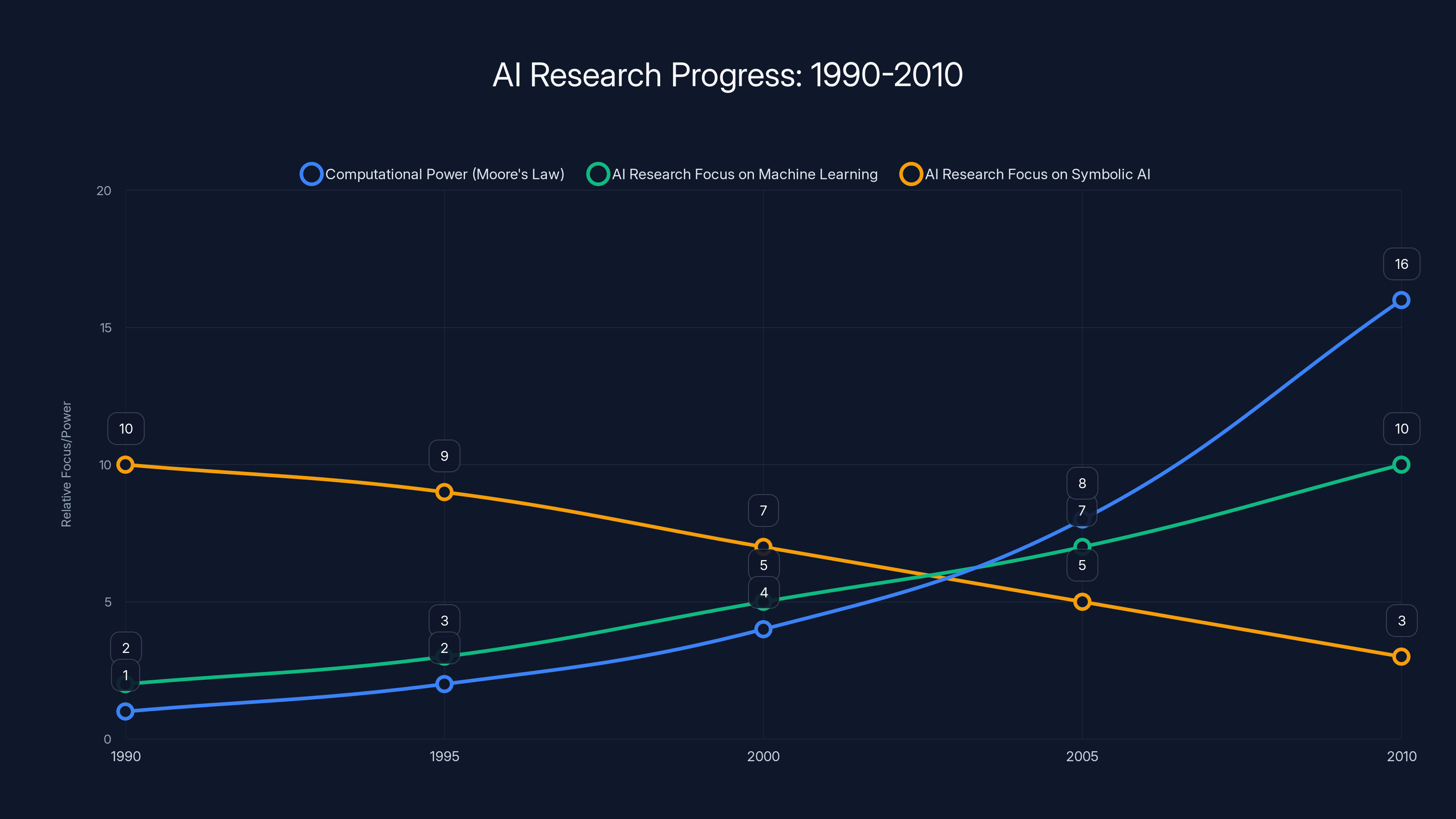

AI research from 1990 to 2010 saw a steady increase in computational power and a shift towards machine learning, while focus on symbolic AI declined. (Estimated data)

The Dartmouth Summer of 1956: Where AI Got Its Official Name

By 1955, John McCarthy was a 27-year-old mathematician at Dartmouth College. He'd been thinking about machine intelligence for years, inspired by Turing's work and by practical experience with early computers. McCarthy wasn't a dreamer or a philosopher. He was a pragmatist who believed that if intelligence could be precisely defined, it could be mechanically replicated.

McCarthy decided to organize a summer workshop at Dartmouth bringing together the leading thinkers on machine intelligence. The idea was simple but ambitious: get the smartest people in the room for eight weeks and see what they could accomplish together. He invited Marvin Minsky, an ambitious young cognitive scientist. He brought in Claude Shannon, the legendary information theorist who had essentially invented digital communication. He included Nathaniel Rochester, an IBM researcher. And he drafted several other prominent researchers and bright young minds.

To fund the workshop, McCarthy submitted a proposal to the Rockefeller Foundation in 1955 requesting $13,500.

The proposal itself is worth reading carefully because it reveals the intellectual DNA of AI from day one. The researchers claimed: "Every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it." That's breathtaking in its ambition. They weren't just talking about narrow calculation or game-playing. They were claiming that language, abstraction, reasoning, self-improvement, and everything we associate with intelligence could, in theory, be mechanically replicated.

They listed specific problems they hoped to make progress on: learning, language, neuron nets, self-improving machines, abstraction, and others. The workshop had no requirement to solve these problems in eight weeks. The point was to make progress, share ideas, and establish a research agenda.

What actually happened at Dartmouth remains somewhat legendary in AI circles. The researchers did have early successes to point to. Around the time of the workshop, participants showed early programs that could play checkers, prove mathematical theorems, and demonstrate logical reasoning. McCarthy and others were convinced that significant progress on artificial intelligence was possible and that it might come faster than anyone expected.

But success also bred overconfidence. Some of the young researchers emerged from Dartmouth convinced that human-level AI might be achievable within a decade or two. McCarthy, notably, was often more cautious. But the general optimism was palpable, and it attracted funding, students, and research interest throughout the late 1950s and 1960s.

What's often glossed over is that the Dartmouth researchers were explicitly building on Turing's framework. They weren't rejecting his Turing Test or his focus on behavior. They were operationalizing it. They were saying: okay, if machines can simulate intelligent behavior through symbol manipulation, let's actually build systems that do that. Let's tackle specific problems. Let's see how far we can get.

The Rockefeller Foundation funded the workshop, though reportedly with $7,500 rather than the full $13,500 requested. The workshop happened. And in that modest summer research project, the field of artificial intelligence was officially born. Within a few years, AI research was happening at MIT, Carnegie Mellon, Stanford, and other institutions worldwide. Graduate students were writing dissertations on machine learning, natural language processing, and knowledge representation. The intellectual foundation Turing had laid was being built upon rapidly.

Yet Turing himself was already two years in the ground, his life ended by persecution, his ideas ironically moving forward without him.

John McCarthy: The Pragmatist Who Built AI While Remaining Skeptical of It

If Turing was the philosophical visionary, John McCarthy was the practical engineer who translated vision into research programs. McCarthy was born in 1927 and studied mathematics at Caltech before moving into computer science, a field that barely existed when he started. He had the rare combination of mathematical rigor and engineering pragmatism.

After Dartmouth, McCarthy went to MIT and then Stanford, where he would spend much of his career. He invented LISP, one of the first programming languages specifically designed for symbolic computation and AI research. LISP was revolutionary because it treated programs and data as the same thing—lists of symbols—which made it powerful for the kind of recursive, self-modifying computation that AI researchers needed.
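The "programs and data are the same thing" idea can be illustrated without LISP itself. The sketch below is a Python analogy, not LISP and not McCarthy's design: it represents an arithmetic program as a nested list, evaluates it, and then rewrites it with ordinary list operations. The names `evaluate`, `swap_operator`, and `OPS` are assumptions made for this example.

```python
import operator
from typing import List, Union

Expr = Union[int, float, List]   # a number, or [operator, arg, arg, ...]

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def evaluate(expr: Expr):
    """Evaluate a program written as a nested list, e.g. ["+", 1, ["*", 2, 3]]."""
    if isinstance(expr, (int, float)):
        return expr
    op, *args = expr
    values = [evaluate(arg) for arg in args]
    result = values[0]
    for value in values[1:]:
        result = OPS[op](result, value)
    return result


def swap_operator(expr: Expr, old: str, new: str) -> Expr:
    """Treat the program as data: return a copy with every `old` operator replaced."""
    if not isinstance(expr, list):
        return expr
    head, *rest = expr
    new_head = new if head == old else head
    return [new_head] + [swap_operator(item, old, new) for item in rest]


program = ["+", 1, ["*", 2, 3]]
rewritten = swap_operator(program, "*", "+")
```

Here `evaluate(program)` computes 1 + (2 * 3), while `rewritten` is a new program, `["+", 1, ["+", 2, 3]]`, produced by a function that never had to parse source text. That ease of inspecting and transforming programs is the property the paragraph above describes.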

But what's often overlooked is McCarthy's persistent skepticism about AI hype. In a 1979 article in Computerworld, McCarthy made a blunt prediction: "The computer revolution hasn't happened yet." He acknowledged that computers were everywhere, but he argued that they hadn't fundamentally transformed human life in the way electricity or automobiles had. He predicted that the real revolution would come when computers could assist humans in understanding and decision-making, not just processing data.

McCarthy believed AI could drive this revolution, but he was also explicit that the field was far from achieving human-level intelligence. He talked about the importance of "common sense," the vast background knowledge and reasoning ability that humans apply to everyday situations. Building common sense into machines wasn't just a difficult engineering problem. It was a fundamental research challenge that might take decades to solve.

In the 1970s and 1980s, as AI research faced what became known as the "AI Winter"—a period of reduced funding and diminished expectations after early optimism gave way to limited results—McCarthy remained calm. He wasn't devastated by the hype crash because he'd never fully bought into the hype in the first place. He knew the field was important, but he knew progress would be gradual.

What made McCarthy distinct was his insistence on precision in thinking about intelligence. He didn't want researchers building systems that merely mimicked intelligence. He wanted them building systems that engaged in genuine reasoning about the world, represented knowledge accurately, and could explain their conclusions. This pushed the field toward symbolic AI, logical reasoning systems, and knowledge representation—approaches that, while they would eventually give way to machine learning and neural networks, fundamentally shaped how AI researchers thought about their problems.

McCarthy also contributed to the philosophical side. He wrote about whether machines could have intentions, about the ethics of AI, and about what kinds of thinking were distinctly human. He didn't claim to have answers, but he insisted that these questions needed serious consideration, not marketing hype.

One of McCarthy's most important insights was that AI systems would always need to make decisions with incomplete information. The real world doesn't come with clean data and clear rules. A robot navigating a room doesn't know the exact position of every object. A language model generating text doesn't know exactly which interpretation the user intended. McCarthy pushed researchers to think about how to handle this uncertainty, how to reason about what you don't know.

McCarthy died in 2011 at age 84, having witnessed the entire history of AI from its theoretical inception to its practical emergence. He never had the satisfaction of seeing machines achieve human-level intelligence in general domains—that goal still hasn't been fully achieved. But he had the satisfaction of knowing that he'd founded a field that would reshape human civilization. And he did it with intellectual integrity, resisting hype while remaining fundamentally optimistic that the work mattered.

Marvin Minsky: The Ambitious Dreamer of the Dartmouth Group

If John McCarthy was the engineer, Marvin Minsky was the ambitious dreamer. Minsky had a background in mathematics and neuroscience, which gave him a unique perspective on intelligence. He was fascinated by how neural systems in the brain might work and whether we could replicate that in machines.

Minsky co-founded the MIT Artificial Intelligence Laboratory with McCarthy in 1959, making MIT one of the primary centers of AI research. He was energetic, intellectually brilliant, and genuinely believed that solving AI was possible and would happen within his lifetime. Compared with McCarthy, Minsky was more openly optimistic about the timelines and possibilities.

Minsky made major contributions to understanding how machines could learn and adapt. He worked on neural networks (which would later become foundational to modern deep learning), on robotics, and on cognitive science. His 1961 paper "Steps Toward Artificial Intelligence" laid out a research agenda that would guide the field for decades. He argued that intelligence was not a single thing but rather a collection of relatively simple processes that, when combined appropriately, could produce intelligent behavior.

This idea—that intelligence emerges from the combination of simpler components—became a core principle in AI research. Whether you're building a system that uses rule-based logic, neural networks, or symbolic reasoning, the principle is the same: combine simple mechanisms in the right ways and complex behavior emerges.

But Minsky also fell into some of the same traps as other early AI researchers. In the 1960s, he was quite optimistic about how quickly AI would progress. He believed that machines comparable to humans in intelligence were achievable in one or two decades. This optimism contributed to inflated expectations and, when progress didn't materialize as quickly, to the funding cuts and skepticism that characterized the AI winters of the 1970s and 1980s.

Yet Minsky remained productive despite the funding droughts. He continued publishing, continued teaching, continued pushing the boundaries of what researchers thought was possible. His 1986 book "The Society of Mind" presented a theory of how intelligence might emerge from the interaction of many specialized subsystems, each relatively unintelligent on its own. It was a creative attempt to grapple with how the messy, contradictory human mind actually works.

Minsky died in 2016, having seen his field move in directions he didn't always predict—particularly toward deep learning and massive neural networks, which he had been somewhat skeptical of. But he had lived to see AI move from academic curiosity to practical technology deployed in real systems. That vindication of his fundamental belief that the problem was solvable probably meant something to him.

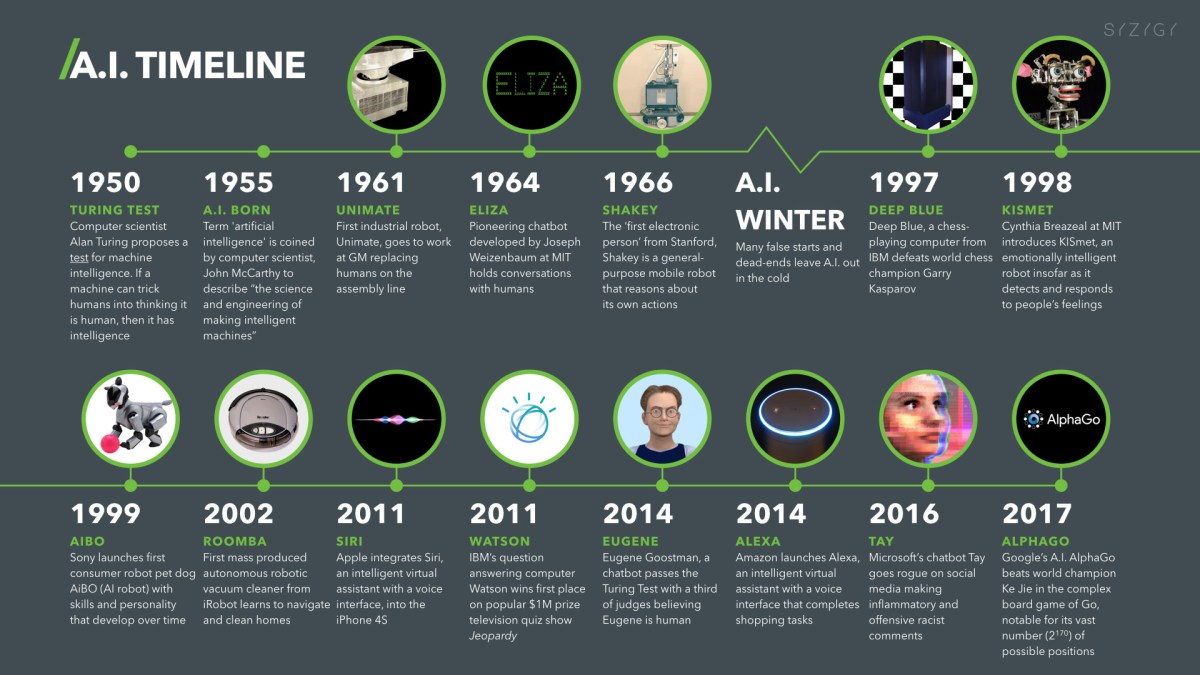

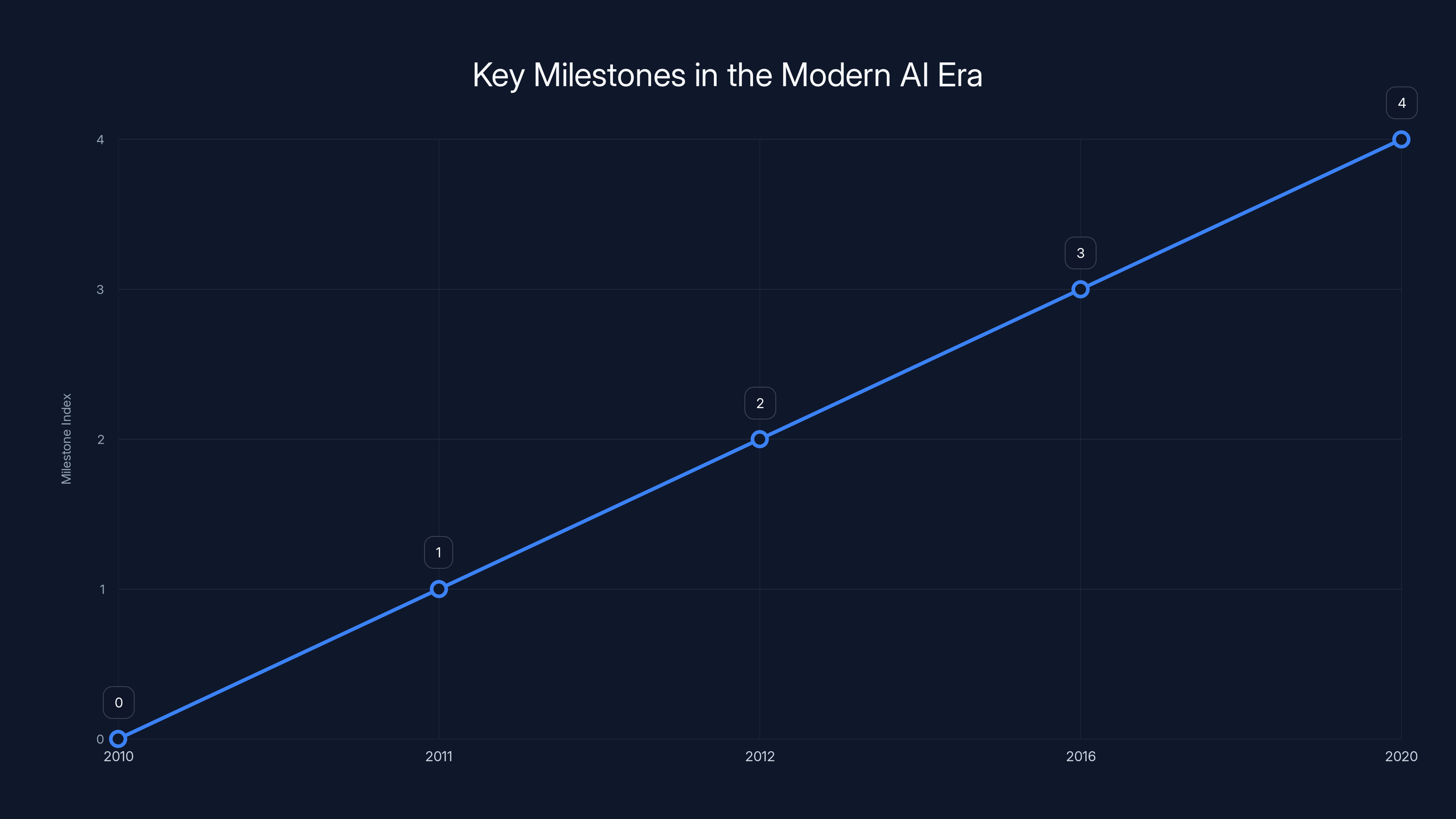

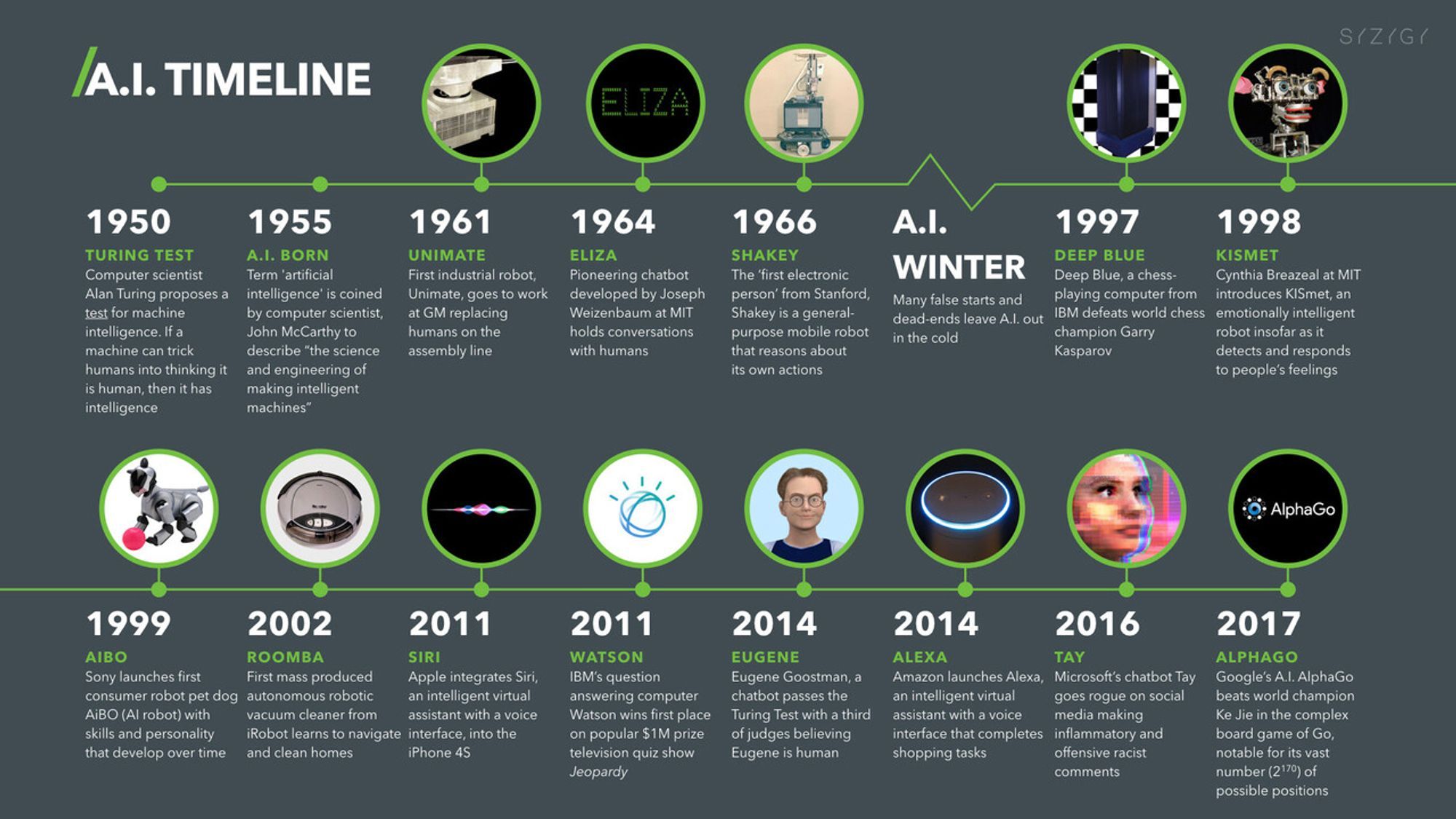

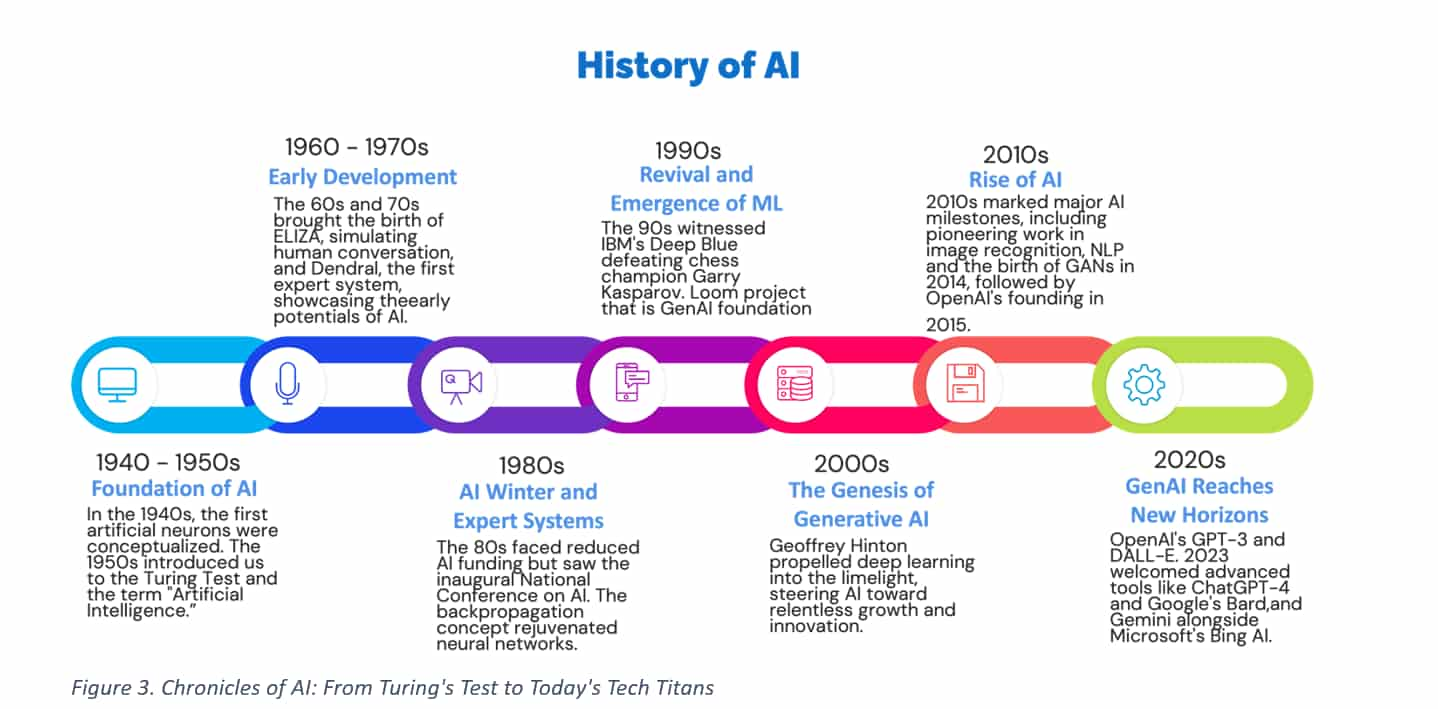

This chart highlights key milestones in AI development, including IBM Watson's Jeopardy! win in 2011 and AlphaGo's victory in 2016, showcasing the rapid evolution of AI capabilities.

The Early AI Era: Hope, Hype, and the First Winter

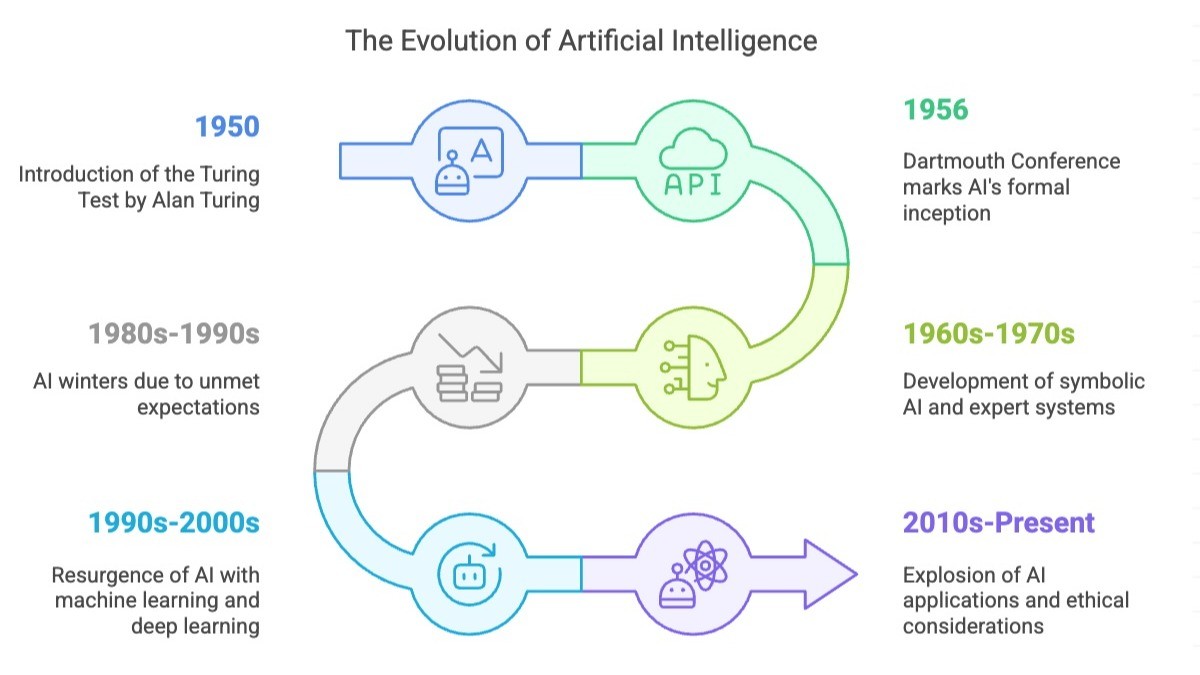

The success at Dartmouth sparked a flurry of activity and optimism. By the early 1960s, researchers had created programs that could play checkers, prove mathematical theorems, and solve logic puzzles. The programs weren't superintelligent by any standard, but they demonstrated that machines could engage in reasoning tasks that had previously seemed to require human cognition.

The U.S. government, particularly the Defense Department through ARPA (the Advanced Research Projects Agency, later renamed DARPA), poured money into AI research. If machines could eventually become as intelligent as humans, the military implications were obvious. AI could drive scientific discovery, improve military strategy, crack enemy codes, and optimize weapons systems. The government wanted to bet on AI.

Universities responded. MIT, Carnegie Mellon, Stanford, and other institutions built AI laboratories and hired researchers. Students flooded into the field. The 1960s and early 1970s were a golden era for AI research funding and optimism. Marvin Minsky actually stated publicly that significant progress on artificial general intelligence might come within a generation. Some researchers spoke confidently about machines surpassing human intelligence by the 1980s or 1990s.

But there was a problem hiding beneath the optimism. The problems researchers had solved—playing checkers, proving mathematical theorems, manipulating formal logic—were domains with clear rules and limited complexity. Real-world intelligence involves dealing with ambiguity, common sense, nuance, and massive amounts of unstructured information. The techniques that worked well for formal logic struggled with natural language processing, computer vision, and real-world reasoning.

Moreover, as problems got more complex, the computational resources required grew exponentially. A system that could play checkers required brute-force searching through possible game states. A system that could understand natural language or see images required dealing with combinatorial explosions of possibilities. The computers available in the 1960s and 1970s simply didn't have the power to tackle these harder problems.
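The arithmetic behind that explosion is simple. If each position offers roughly b legal moves and you look d moves ahead, a naive search visits on the order of b^d positions. The sketch below assumes a uniform branching factor, which real games do not have, and the figures in the usage note are commonly cited rough averages rather than measurements from this article.

```python
def game_tree_leaves(branching_factor: int, depth: int) -> int:
    """Positions at the bottom of a uniform game tree: b ** d."""
    return branching_factor ** depth


def nodes_visited(branching_factor: int, depth: int) -> int:
    """Total positions a naive search touches at all depths from 0 to d."""
    return sum(branching_factor ** level for level in range(depth + 1))
```

With an assumed branching factor of about 35 (a figure often quoted for chess), `game_tree_leaves(35, 4)` is already over a million positions, and every extra move of lookahead multiplies the work by another factor of 35. A faster computer buys only a little extra depth.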

By the mid-1970s, funding started to dry up. The government and private investors grew skeptical about the pace of progress. The limitations of early AI systems became more apparent. Researchers had to be honest: we're not going to achieve human-level AI in the next five years. Maybe not in the next twenty years. This led to what became known as the "AI Winter," a period of reduced funding, reduced expectations, and reduced interest in AI research.

The AI Winter was brutal. Researchers who had been working on these problems suddenly couldn't get funding. Graduate students couldn't find positions. Several AI laboratories closed or shrank significantly. The field that had promised to revolutionize human society seemed to have stumbled badly.

But the AI Winter also had a beneficial effect. It forced researchers to think more carefully about their assumptions, to focus on more tractable problems, and to build on practical applications rather than grand promises. The researchers who continued working during the winter—McCarthy, Minsky, and younger researchers they mentored—weren't expecting quick solutions anymore. They were serious about understanding the fundamental problems.

Expert Systems and the Second Wave of AI Optimism

In the 1980s, AI experienced a revival, driven by a different approach: expert systems. Instead of trying to build general intelligence, researchers focused on capturing the knowledge of human experts in specific domains and encoding it into computer systems.

An expert system in medical diagnosis, for example, would encode the knowledge of experienced physicians. The system would ask about patient symptoms and test results, then apply logical rules derived from expert knowledge to suggest diagnoses and treatment options. Similar systems were built for mineral exploration, financial analysis, equipment maintenance, and many other domains.
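The mechanism described above, rules applied to known facts until nothing new can be concluded, is often called forward chaining. Here is a minimal sketch of it. The rules are toy examples invented for illustration; they are not medical guidance and do not come from any real expert system, and the names `RULES` and `forward_chain` are assumptions.

```python
from typing import FrozenSet, Iterable, List, Set, Tuple

Rule = Tuple[FrozenSet[str], str]   # (conditions that must all hold, conclusion)

# Hypothetical toy rules, for illustration only.
RULES: List[Rule] = [
    (frozenset({"fever", "cough"}), "possible_flu"),
    (frozenset({"possible_flu", "short_of_breath"}), "recommend_chest_exam"),
    (frozenset({"rash"}), "recommend_dermatology_referral"),
]


def forward_chain(facts: Iterable[str], rules: List[Rule]) -> Set[str]:
    """Apply rules repeatedly until no rule adds a new conclusion."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            if conditions <= known and conclusion not in known:
                known.add(conclusion)   # a rule fired: record what it concluded
                changed = True
    return known
```

Given the facts `{"fever", "cough", "short_of_breath"}`, the first rule fires, and its conclusion then lets the second rule fire. Given a fact no rule mentions, the system concludes nothing at all, which hints at the brittleness these systems later became known for.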

Expert systems actually worked. They provided real business value. Companies invested in building them. This attracted funding back to AI research, and for a few years in the mid-1980s, there was another wave of optimism about artificial intelligence.

But this wave crashed too. Expert systems required constant human expertise to update and maintain. When the domain changed, when new information contradicted old rules, the systems became obsolete. They were brittle and inflexible. They couldn't learn or adapt. They couldn't handle situations outside their narrow domain.

Moreover, the expert system approach highlighted a fundamental problem: encoding expertise is incredibly labor-intensive. You can build an expert system for medical diagnosis because doctors have spent decades developing systematic knowledge. But what about areas where human expertise is mostly intuitive? How do you encode what a human sees when they look at an image? How do you capture the subtle rules of grammar and meaning in natural language?

By the end of the 1980s, another AI Winter was setting in. Expert systems were fading. Once again, the gap between promise and reality became obvious, and funding dried up.

The Long Wait: AI Research from 1990 to 2010

The 1990s and 2000s were a quieter era for AI research. The field was still alive—researchers continued working, academic labs continued operating, and some practical applications emerged—but the grand promises had evaporated. AI researchers who had expected to achieve human-level intelligence in their lifetime came to accept that the problem was fundamentally harder than anyone had realized.

What did happen during this period was the slow accumulation of better algorithms, better hardware, and better data. Computing power continued to increase in line with Moore's Law, the observation that the number of transistors on a chip doubles roughly every two years. Algorithms for machine learning improved. The internet created massive pools of data that researchers could use for training systems.
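A doubling every two years compounds faster than intuition suggests. The short sketch below works out the growth factor, treating the two-year doubling period as an idealized assumption rather than a measured rate.

```python
def growth_factor(years: float, doubling_period_years: float = 2.0) -> float:
    """How many times larger a quantity gets if it doubles every fixed period."""
    return 2 ** (years / doubling_period_years)


# Two decades of idealized two-year doublings: ten doublings in total.
factor_1990_to_2010 = growth_factor(2010 - 1990)
```

Ten doublings give a factor of 2^10, or 1,024. On this idealized curve, hardware at the end of the period is about a thousand times more capable than at the start.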

In 1997, IBM's Deep Blue defeated Garry Kasparov, the world chess champion. This was a massive milestone that rekindled interest in AI, but it also illustrated the gap between narrow and general intelligence. Deep Blue was brilliant at chess—it could evaluate millions of positions per second and select optimal moves. But it couldn't do anything else. It couldn't understand language, recognize images, or reason about the world outside of chess. It was, in fact, the culmination of the AI winter approach: build systems that do one thing incredibly well by applying brute computational force, not by achieving general intelligence.
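The core idea of choosing moves by exhaustively searching game positions can be shown on a game small enough to solve completely. The sketch below is a toy, not Deep Blue's method: Deep Blue relied on specialized hardware and far more sophisticated search and evaluation. The game (a pile of stones, each player removes one to three, whoever takes the last stone wins) and the names `can_win` and `best_move` are assumptions chosen for illustration.

```python
from functools import lru_cache
from typing import Optional

MOVES = (1, 2, 3)   # a player may remove 1, 2, or 3 stones per turn


@lru_cache(maxsize=None)
def can_win(stones: int) -> bool:
    """True if the player about to move can force a win with `stones` left."""
    # With 0 stones the opponent just took the last one, so this player has lost;
    # any() over no legal moves returns False, which encodes exactly that.
    return any(not can_win(stones - take) for take in MOVES if take <= stones)


def best_move(stones: int) -> Optional[int]:
    """A move that leaves the opponent in a losing position, or None if none exists."""
    for take in MOVES:
        if take <= stones and not can_win(stones - take):
            return take
    return None
```

The search tries every legal move, asks whether the opponent would then be stuck, and recurses until the game ends. It plays this game perfectly and can do nothing else, which is the narrow-versus-general gap in miniature.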

During this period, the AI field fractured into different approaches. Some researchers continued working on symbolic AI and knowledge representation. Others focused on machine learning, statistics, and pattern recognition. The symbolic AI camp believed that intelligence required representing explicit knowledge about the world. The machine learning camp believed that intelligence emerged from learning patterns in data. These two camps often viewed each other with skepticism.

What nobody could have predicted in 2000 was that the machine learning approach, particularly deep neural networks, would eventually prove spectacularly successful. The conditions were right: massive amounts of data from the internet, dramatic increases in computing power (especially GPUs), and algorithmic improvements that made training deep neural networks practical. But in 2000, neural networks were actually somewhat out of favor. Many researchers thought symbolic approaches were more promising.

John McCarthy died in 2011, having lived through the entire history of AI from its conception to its quiet maturation. Marvin Minsky died in 2016. They saw the field they had founded go through multiple cycles of hype and disappointment, but they never gave up on its fundamental promise: that machines could eventually engage in intelligent reasoning and behavior.

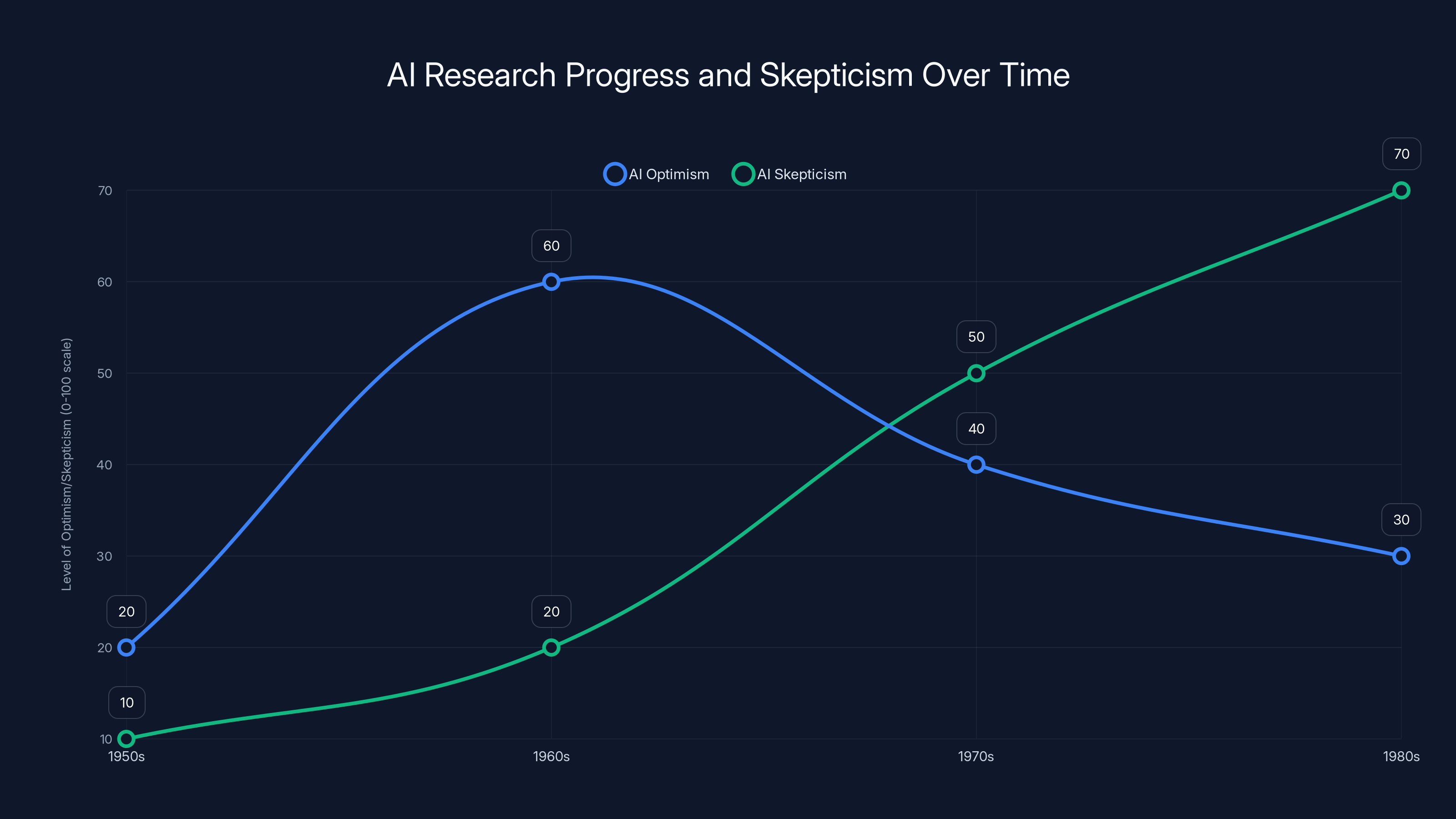

Estimated data shows a rise in AI skepticism during the 1970s and 1980s, coinciding with the 'AI Winter' period, while optimism peaked in the 1960s.

The Modern AI Era: Machine Learning, Deep Learning, and the Vindication of Turing's Vision

Starting in the late 2000s and accelerating through the 2010s, artificial intelligence emerged again, but this time powered by deep learning and massive neural networks rather than symbolic logic. The shift happened gradually at first, then very suddenly.

In 2011, IBM's Watson defeated Ken Jennings and Brad Rutter, two of the most successful champions in the history of Jeopardy!, a game requiring much broader knowledge and reasoning than chess. In 2012, a team from Geoffrey Hinton's lab won the ImageNet image recognition competition using deep convolutional neural networks. In 2016, DeepMind's AlphaGo defeated Lee Sedol, one of the world's top Go players, at a game whose search space is vastly larger than that of chess.

These weren't just technological achievements. They were philosophical vindications. Turing had been right. You didn't need to replicate the exact structure of human brains. You just needed systems that could perform intelligent behavior—recognizing patterns, engaging in reasoning, making decisions. And you didn't need to explicitly program all the rules. You could train systems on massive datasets and let them learn the patterns themselves.

The rise of deep learning created the conditions for modern AI applications. Natural language processing, which had been stubbornly difficult for decades, suddenly became practical when combined with neural networks and massive text datasets. Computer vision, which had resisted traditional approaches for years, made dramatic progress with convolutional neural networks trained on millions of images.

By the 2010s, AI was moving out of research labs and into commercial products. Google used machine learning to improve search results, predict queries, and personalize advertising. Facebook used it to recognize faces and suggest connections. Netflix used it to recommend movies. Autonomous vehicles started being tested on real streets. AI assistants like Siri, Alexa, and Google Assistant emerged in consumer products.

The 2020s brought the most visible AI revolution to date: large language models like GPT-3, GPT-4, Claude, and others. These systems, trained on massive amounts of text from the internet, could generate human-like responses to nearly any question or prompt. They could write essays, answer questions, explain complex topics, generate creative content, and assist with programming.

ChatGPT, released by OpenAI in November 2022, reportedly reached 100 million users within about two months, faster than any consumer application before it. It sparked a new wave of AI enthusiasm and investment. Companies large and small rushed to build AI features into their products. A new AI winter seemed impossible: this time the technology was too visible, too useful, too embedded in everyday tools.

But what's remarkable is how Turing's original framework still applies. Whether these modern AI systems "really think" in any philosophical sense, and whether they understand meaning or just manipulate symbols, remains debated. But in everyday conversation they pass an informal version of the Turing Test. You can have a convincing conversation with them. They can engage in reasoning that appears intelligent, even if the underlying mechanism is neural network weights and matrix multiplications rather than conscious deliberation.

Turing asked: Can a machine convince a human that it's intelligent through behavior alone? In 2024, the answer is clearly yes.

Turing's Ghost: Why His Framework Still Dominates Modern AI

More than seven decades after Turing posed his question, his framework still shapes how we think about artificial intelligence. We still judge AI systems primarily by their behavior and their performance on benchmarks. We still talk about whether machines "understand" or "think," and we still struggle with that philosophical question.

But Turing's insight was that we don't need to solve the philosophical question. We can just look at what systems do. If a language model can write a coherent essay, if an image generator can create novel images from text descriptions, if a reasoning system can solve novel problems, then these systems are performing intelligent functions. Whether there's something it's like to be that system—whether it has subjective experience—is a separate question that might be unanswerable.

This pragmatic approach has been vindicated. It allowed researchers to make progress without getting bogged down in questions about consciousness and inner experience. It allowed them to focus on what matters practically: can the system do useful things? Does it perform as well as or better than humans on specific tasks?

But Turing's framework also created some blind spots that modern AI researchers are starting to grapple with. By focusing exclusively on behavior, we might miss important questions about how systems actually work, what their limitations are, and what they might be optimizing for. A system that aces a Turing Test might still be making disastrous errors, might still be perpetuating biases, might still be unstable and unreliable in ways not apparent from conversation alone.

Moreover, Turing's framework emphasizes replication of human intelligence. But intelligence takes many forms. A system doesn't need to think like a human to be useful. Modern AI systems are often good at things humans struggle with (like evaluating millions of possible chess positions) and bad at things humans find trivial (like understanding physical causality or common sense). The right question might not be "does this think like a human?" but rather "what is this system good at, and what is it bad at?"

The tragedy is that Turing didn't live to see his questions become practical problems that billions of people interact with daily. He posed the philosophical question at exactly the right moment in history. The question he framed was simple enough to guide research for seventy years, yet deep enough that we're still wrestling with its implications.

The Uncomfortable Truth: Why Turing Died Before Seeing His Vision Realized

Alan Turing's death in 1954 wasn't just a personal tragedy. It was a historical tragedy. The man who had invented the theoretical foundation for computing, who had asked the question that would define AI for seven decades, who had served his country with distinction during World War II, died as a criminal in the eyes of his government.

His persecution for homosexuality in 1950s Britain wasn't incidental to his story. It was central. It shaped his brief remaining years and cut short his potential contributions. Think about what Turing might have contributed had he lived another thirty years. He might have witnessed the early years of AI research directly. He might have influenced the Dartmouth workshop more directly. He might have addressed criticisms and refinements to his framework. He might have guided the field when it was first hitting limitations and needing new directions.

Instead, his ideas had to propagate through intermediaries. McCarthy, Minsky, Shannon, and others implemented Turing's vision, but they had to interpret it and adapt it. They brought their own perspectives and assumptions. Some of these were useful, some were limiting. But they weren't Turing's perspectives.

This history matters now because it reminds us that scientific and technological progress isn't inevitable. It depends on specific people, specific moments, and specific social conditions. If Turing had lived in a country and time that didn't criminalize his sexuality, he might have contributed even more. The field of artificial intelligence might have developed differently with his direct guidance.

The tragedy also illustrates how persecution and discrimination waste human talent. Turing was prosecuted by the government he served. He was forced to choose between imprisonment and chemical castration. He died in ambiguous circumstances. Those are the externalities of discrimination, though they're rarely discussed when we celebrate his scientific achievements.

When we celebrate Turing's contributions to AI now, through ChatGPT and large language models and all the modern AI systems that pass his test, we should also remember the context. We're celebrating the work of a man whose government destroyed his life for who he was. It's worth pausing on that tragedy as we marvel at the technologies his ideas enabled.

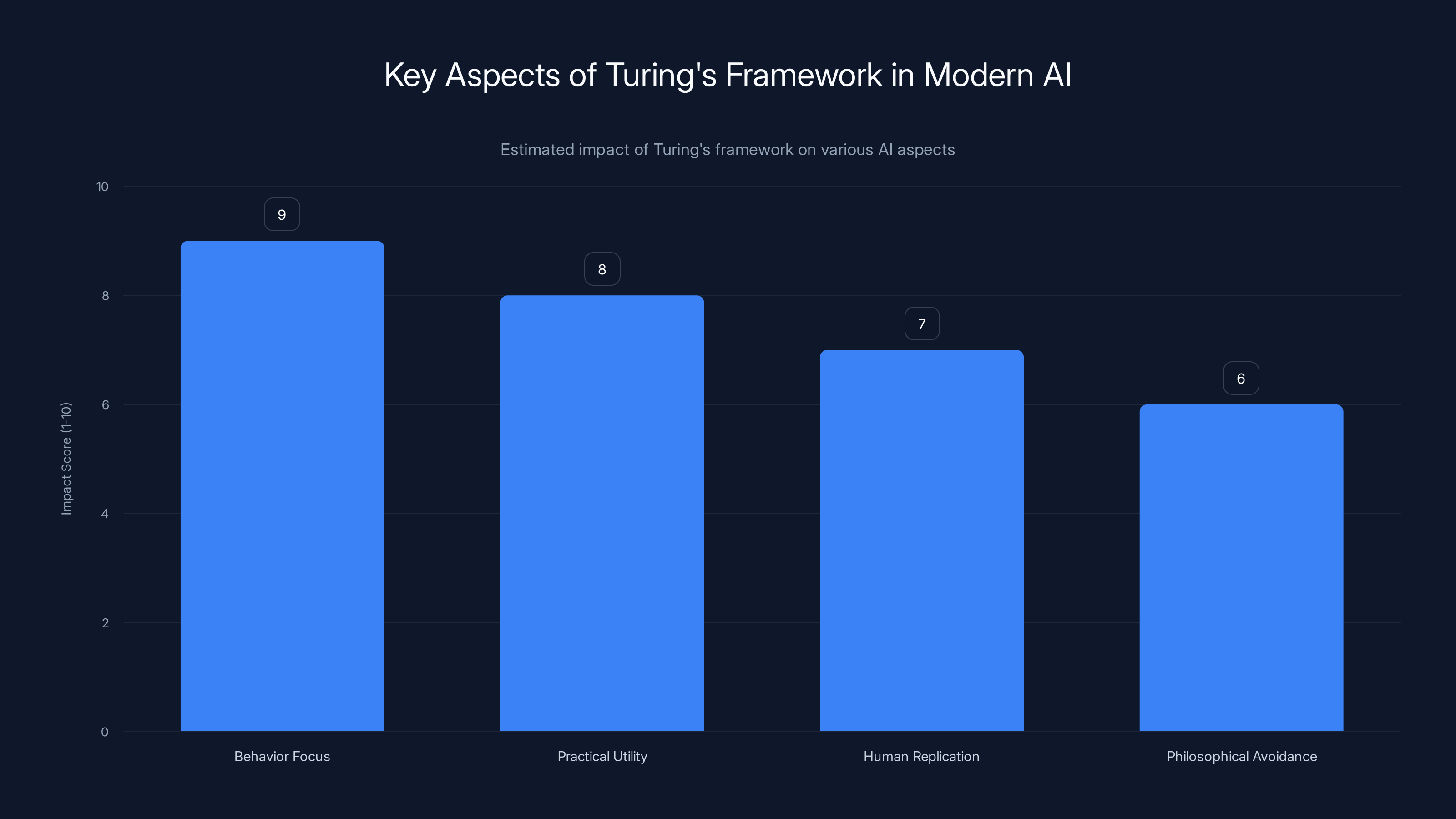

Turing's framework significantly impacts AI by focusing on behavior and practical utility, though it also emphasizes human-like replication and avoids philosophical questions. (Estimated data)

The Dartmouth Meeting's $13,500 Vision: How Ambitious Was It Really?

Let's go back to that $13,500 budget request. By modern standards, it's an almost comical sum. A single AI researcher's salary and equipment budget at a major university exceeds that today. ChatGPT cost tens of millions of dollars to develop, according to various estimates. The compute required to train the largest modern language models can cost hundreds of millions of dollars.

But the Dartmouth budget request tells us something important about how the founders thought about the problem. They weren't expecting to build superhuman intelligence from a summer workshop. They were expecting to make progress on a handful of important problems: learning, language, reasoning, neural networks (which the proposal called "neuron nets"), self-improvement. They expected to tackle these problems through theoretical work, mathematical development, and programming relatively small systems.

In other words, they were being realistic. They understood they were founding a field, not finishing it. They understood that the work would take decades. They were asking for enough resources to do solid foundational work, not to immediately build human-level AI.

What's remarkable is that their foundational work—the concepts they developed, the problems they identified, the frameworks they built—proved robust enough to guide research for seventy years. The symbolic AI approaches they developed in the 1950s and 1960s are still used today. The concept of knowledge representation that McCarthy emphasized is still crucial. The idea that intelligence requires learning from experience, emphasized by Minsky, still guides machine learning research.

Meanwhile, the specific approaches they were most excited about—symbolic reasoning, knowledge representation, inference engines—proved less powerful than the statistical approaches of modern machine learning. Yet the overall vision that intelligent behavior could be mechanically replicated proved exactly correct.

The $13,500 also tells us about the relative importance of resources in scientific progress. AI didn't emerge from the best-funded discipline or the most expensive lab. It emerged from a modest summer workshop where smart people got together and thought hard about foundational problems. Funding was important, but intellectual clarity and the right questions were more important.

This is a lesson for modern research. It's tempting to think that the biggest breakthroughs come from the biggest budgets and the largest labs. But often they come from focused intellectual work by people who deeply understand the problem. Deep learning emerged from a small community of researchers, many at universities, who had been thinking about neural networks during the AI winter when it was unfashionable. The theoretical breakthroughs that made modern deep learning possible—backpropagation, the ReLU activation function, dropout regularization—came from researchers working on modest budgets because they believed in the approach.

The modern large language models behind ChatGPT and Claude, including GPT-4, do require massive compute budgets. But they're built on theoretical foundations developed over decades, often by researchers working on tight budgets. The transformer architecture, which is fundamental to modern LLMs, was introduced in 2017 by a small group of researchers at Google, well before training runs reached today's scale. The concepts came first, the scaling came later.

What Would Turing Think of Modern AI?

This is a delightful thought experiment that AI researchers and philosophers engage in frequently. What would Alan Turing make of ChatGPT? Would he think it passed his test? Would he be satisfied with modern AI progress?

On the question of the Turing Test specifically, he'd probably be impressed. A language model can engage in extended, coherent conversation on nearly any topic. It can answer questions, explain concepts, generate creative writing, and engage in back-and-forth dialogue. An average person, texting with ChatGPT in one window and a human in another, might well struggle to consistently identify which is which, especially in extended conversation.

Turing might also be curious about the mechanisms. He'd want to understand how neural networks work, how they're trained, why they're so much more powerful than the symbolic AI systems of the 1960s and 1970s. He was a mathematician and he cared about understanding how things worked. Modern deep learning would fascinate him as a mathematical question: why do these particular architectures, trained on these datasets, produce these capabilities?

But Turing might also be frustrated with how narrowly modern AI systems focus on specific tasks. A large language model is remarkable at language but useless at physical reasoning. A computer vision system can identify objects in images but can't reason about physical causality. Turing's vision was for general intelligence across domains, and modern systems remain stubbornly specialized.

He'd probably also be skeptical of the hype. Turing was always a realist about how hard the problems were. He'd read the breathless articles about AI changing everything, and he'd probably respond with McCarthy's skepticism: Yes, this is progress. Yes, this is interesting. But let's not oversell what we've achieved. We've built systems that are very good at specific tasks. General intelligence, common sense, reliable reasoning across domains—those are still hard problems.

Most importantly, Turing would probably be vindicated. He asked: Can machines behave intelligently? Can they pass a test of intelligent behavior through written conversation? The answer, clearly, is yes. The specific mechanism—neural networks instead of symbolic logic—is different from what he might have expected. But the fundamental claim proved correct. Intelligence, or at least intelligent behavior, is mechanically reproducible.

The Unfinished Problem: Why AI Still Doesn't Have Common Sense

One of the ironies of modern AI is that despite its remarkable capabilities, it often fails at things children find trivial. A language model might write a sophisticated essay but give you wildly wrong answers about basic physics. An image generator might create beautiful pictures but struggle with simple spatial relationships.

This failure is rooted in what Turing and McCarthy and Minsky all recognized as a core challenge: common sense. Common sense is the vast background knowledge about how the world works that humans acquire through experience and culture. It's knowing that water falls downward, that people get wet when they're in rain, that you can't fit a square peg in a round hole, that people eat food to survive.

Building common sense into AI systems has proven to be one of the hardest problems in the field. You can't explicitly program all common sense knowledge—there's too much of it, and it's too subtle and contextual. But you also can't just train on text because text doesn't capture the physical experience of how the world actually works.

McCarthy spent much of his later career on the problem of "formalizing common sense," trying to figure out how to represent and reason about the everyday knowledge that humans take for granted. It proved to be an incredibly difficult problem. His work was prescient—decades later, when large language models started showing common sense failures, researchers recognized that McCarthy had identified the core issue.

Modern approaches are trying to tackle this problem through various means: training on multimodal data that includes images and videos as well as text, using reinforcement learning to learn from interaction with environments, building hybrid systems that combine neural networks with symbolic reasoning. But it remains an unsolved problem. AI systems are still far from having the kind of robust, flexible common sense that a five-year-old possesses.

This suggests that Turing's test, while clever, might not be a perfect measure of intelligence. A system could potentially fool a human judge through clever language without actually understanding the physical world or having common sense. This is actually happening now—language models are often impressive in conversation but reveal significant gaps in reasoning when pressed on physical situations or causal relationships.


The Question of Consciousness: Does AI Need It?

Turing's deliberate avoidance of the consciousness question was both a strength and a potential limitation. By focusing on behavior, he made progress possible. But it also allowed the hard question of consciousness—whether machines can or should have subjective experience—to remain unresolved.

Modern AI systems don't appear to be conscious. They don't have subjective experience, as far as we can tell. They process information and generate outputs, but there's no evidence of an internal felt sense of what that's like. They don't have preferences, desires, or experiences in any meaningful sense.

But here's the genuine puzzle: we don't actually have a clear definition of consciousness, and we don't have good ways to test for it. We can't even fully explain human consciousness, let alone determine whether its presence or absence in machines matters morally or philosophically.

Some researchers argue that consciousness will naturally emerge once machines reach sufficient complexity. Others argue that consciousness is a specific feature of biological systems and won't emerge in silicon-based systems no matter how advanced. Others argue the question is meaningless—consciousness is just what complex information processing feels like from the inside.

What's clear is that we don't need conscious machines to have serious ethical concerns about AI. Even if modern language models aren't conscious, they're powerful enough to affect human lives significantly. They can spread misinformation, they can encode and amplify biases, they can be used to manipulate people or automate harmful decisions. These are ethical problems that exist regardless of whether the systems are conscious.

Turing himself was skeptical about consciousness as a requirement for intelligence. He argued that we shouldn't demand machines have properties we can't even clearly define in humans. And this remains good guidance. Whether future AI systems have consciousness or not, the important question will be: what can they do, and what responsibility do we have for how they're used?

Legacy in Motion: How the Founding Vision Shapes Current AI Research

The vision articulated at Dartmouth—that every aspect of intelligence could be mechanically replicated—still guides current AI research, though in mutated form. Researchers today work on narrow problems: language understanding, image recognition, game playing, strategic reasoning, robotic control, prediction, generation. But the underlying assumption remains that these can be solved through mathematical models and computation.

The founding vision also created a particular hierarchy of problems. Language, reasoning, learning, and abstraction were listed at Dartmouth as core challenges. Seventy years later, these remain core challenges. We've made progress on language and learning. Reasoning remains surprisingly difficult. Abstraction—the ability to extract principles from examples and apply them broadly—is still an open problem.

The emphasis on mechanically replicable intelligence meant that AI research naturally aligned with the capabilities of computers. As computers got faster and more powerful, AI research could tackle harder problems. When GPUs became available for computation, AI research could leverage them for massive parallel processing. When massive datasets became available online, AI research could train systems at a scale never before possible.

In other words, AI research has always been shaped by what's technically possible. This isn't a limitation—it's the nature of engineering research. You solve problems within the constraints of available technology. But it means that AI research isn't a pure search for truth about intelligence. It's a search for intelligence within the constraints of what we can build.

McCarthy understood this. He knew that general intelligence wouldn't emerge from day one. But he believed that building specific intelligent systems, learning from them, and gradually expanding their capabilities would eventually lead somewhere important. Seventy years later, that strategy has proven sound.

The Current Moment: Are We Building Toward General Intelligence or Just Better Narrow Systems?

This is the essential question that the entire history of AI has been building toward. We've built systems that are superhuman at narrow tasks: playing chess, recognizing images, understanding language. But are we gradually building toward systems that can do anything a human can do? Or are we just building more and more specialized systems that excel within their narrow domains?

Modern large language models feel like progress toward generality because they can do so many different things. The same GPT-4 that can write essays can also help debug code, explain physics concepts, and analyze historical documents. It has a surprising amount of breadth.

But that breadth might be deceptive. The system is trained on text from the internet, so it can do anything that's essentially a text-based task. But ask it to physically manipulate objects, to genuinely understand visual scenes, to engage in extended reasoning that requires interacting with the world, and its capabilities drop dramatically.

Some researchers argue that this is just a matter of scale and training data. If you train systems on multimodal data—text, images, video, audio—and incorporate learning from interaction, then broader capabilities will naturally emerge. Others argue that there's something more fundamental missing, that current approaches are hitting architectural limitations that will require new theoretical breakthroughs.

The truth is we don't know. The pace of AI progress has been surprising to nearly everyone. Capabilities have expanded faster than most experts expected. But limitations have also become apparent in unexpected ways. Language models are good at linguistic tasks but surprisingly bad at basic reasoning. Image generators are creative but often make systematic errors about spatial relationships. Robotics systems struggle with generalization.

What's likely is that we're genuinely making progress toward more general intelligence, but the path is longer and weirder than early AI researchers imagined. The breakthrough won't come from just building bigger systems or collecting more data. It will require new theoretical insights, new architectural innovations, and probably new approaches we haven't discovered yet.

This is actually consistent with Turing's framework. He didn't claim that intelligence would be easy to build. He just claimed it was mechanically possible. The last seventy years have borne that out. It's possible. It's just harder than anyone thought.

What History Teaches Us About AI's Future

Artificial intelligence has cycled through multiple waves of optimism and disappointment. Each wave has followed a similar pattern: initial successes and excitement, growing expectations, over-promising, disappointing results, and then either a winter or a shift to more realistic expectations.

We're currently in what feels like a strong wave of progress. Large language models are genuinely impressive. They're being deployed in real products affecting millions of people. The capabilities are expanding. The investment is massive. But the history suggests we should be cautious about predicting what comes next.

It's quite possible that we're at a genuine breakthrough—that the scaling laws will continue to hold, that emergent capabilities will keep appearing, and that we'll eventually build systems with broad general intelligence. It's also possible that we're hitting architectural limitations, that the gains are slowing despite increasing investment, and that we need fundamental new approaches to make the next leap.

The evidence is ambiguous. Language models showed remarkable generalization from scale. But they also showed surprising brittleness in various domains. The same approach scaled to different tasks sometimes works brilliantly and sometimes fails completely in hard-to-predict ways.

What history teaches us is this: be serious about the technical problems, be skeptical of grand predictions, and remember that genuine progress usually looks less impressive in the moment than the hype suggests. McCarthy knew this. Minsky knew this. The researchers who survived the AI winters knew this.

It also teaches us that the field needs diverse approaches. The success of neural networks doesn't mean symbolic AI is irrelevant. The impressive results of large language models don't mean other approaches should be abandoned. Progress usually comes from combining different approaches, learning from each one's strengths and limitations.

Most importantly, history teaches that progress depends on asking the right questions. Turing asked the question that shaped an entire field. McCarthy asked whether intelligence could be mechanically replicated. Minsky asked what components of intelligence might be separately learnable. These weren't the questions we'd necessarily guess to ask, but they proved foundational.

What are the key questions for modern AI research? Perhaps: How can we build systems with robust common sense? How can we ensure AI systems are reliable, explainable, and aligned with human values? How can we combine the strengths of different AI approaches—neural networks and symbolic reasoning, learning and explicit knowledge? How can we build AI systems that learn efficiently from limited data rather than requiring massive datasets?

These questions don't have obvious answers. But that's exactly why they matter. Progress comes from wrestling with hard problems, not from scaling solutions that already mostly work.

Conclusion: The Unfulfilled Vision and What Comes Next

Alan Turing died in 1954, two years before the field of artificial intelligence was formally named. He never saw the Dartmouth Summer Research Project. He never knew that his question would shape research for decades. He never witnessed the cycles of optimism and disappointment, the AI winters and revivals, the eventual breakthroughs in deep learning.

Yet his influence on the field is profound. The question he asked—can machines behave intelligently—still guides research. The pragmatic approach he suggested—focus on observable behavior rather than abstract metaphysics—still shapes how we evaluate AI systems. The intellectual modesty he expressed—we don't need to solve consciousness to make progress on intelligence—still provides good guidance when the field gets overconfident.

The $13,500 that the Dartmouth organizers requested was small in absolute terms, and the Rockefeller Foundation ultimately granted only about $7,500 of it. But the workshop it funded launched a field that would reshape human civilization. It wasn't the only funding that mattered, or even the most important. But it marked the moment when artificial intelligence became not just an idea but an organized research program.

Seventy years later, we're living in the world that Turing imagined: one with machines that can engage in conversation, solve problems, generate creative content, and surpass humans in specific domains. We haven't achieved the kind of general intelligence that the early researchers hoped for. We're still far from machines that have common sense, that can transfer knowledge across domains, that can engage in the kind of flexible reasoning that humans find natural.

But we've made unmistakable progress. And we're still asking the same fundamental questions that Turing asked. Can machines think? For narrow definitions of thinking, the answer is yes. For broader definitions, we're still working on it. That work will continue, driven by the theoretical foundations Turing laid and refined by decades of researchers building on his insights.

The tragedy is that Turing couldn't live to see his ideas transform the world. The opportunity is that we can learn from his intellectual approach as we navigate the next phase of AI development. Turing was brilliant, but he was also pragmatic. He cared about actual progress, not just philosophical purity. He was skeptical of grand claims and focused on concrete problems. He understood that intelligence might take many forms and didn't insist on replicating human intelligence exactly.

As AI systems become more powerful and more integrated into society, these lessons become more important, not less. We need pragmatism about what's actually possible. We need skepticism about grand promises. We need to focus on specific problems and measurable progress. We need to remember that intelligence is a complex thing that doesn't fit into simple categories.

The story of AI isn't a simple narrative of steady progress toward a predetermined goal. It's a messier story of brilliant people asking good questions, hitting unexpected limitations, recovering and trying new approaches, and gradually expanding what's possible through computation and mathematics. The story continues. And if the first seventy years are any guide, the next seventy will surprise us in ways we can't predict.

That's the real legacy of Turing, McCarthy, Minsky, and the Dartmouth Summer Research Project. Not that they solved the problem of artificial intelligence. But that they framed it in a way that allowed generations of researchers to keep making progress, even when progress was slower than anyone hoped, and even when the problems proved harder than anyone imagined.

FAQ

What was the significance of the Turing Test in AI history?

Turing's test fundamentally reframed how we think about machine intelligence. Instead of asking abstract philosophical questions about whether machines could "really" think, Turing proposed a practical behavioral test: if a machine can convince a human judge that it's human through conversation alone, should we care whether it actually thinks? This shift from philosophy to observable behavior unlocked research progress by making the problem concrete and testable. Nearly all modern AI development, from expert systems to large language models, builds on this pragmatic foundation.

Why did the Dartmouth Summer Research Project matter if it didn't solve artificial intelligence?

Dartmouth mattered because it formally organized artificial intelligence as a research field. The workshop gathered leading researchers, established a common vocabulary (the term "artificial intelligence" appeared in their proposal), created a shared research agenda, and spawned collaborations that shaped AI for decades. It proved that the problem was worth tackling and that progress was possible. The $13,500 budget request communicated that this was serious, tractable research, not just speculation. Within years, major universities had AI laboratories and serious funding.

How did Alan Turing's 1950 paper influence the Dartmouth workshop?

Turing's "Computing Machinery and Intelligence" shaped Dartmouth's approach. McCarthy and colleagues worked within Turing's framework that intelligence should be measured by behavior rather than internal consciousness, and their proposal built on the same theoretical foundation. However, the researchers at Dartmouth moved beyond just asking the question. They tried to actually build systems that exhibited intelligent behavior through symbol manipulation and logical reasoning. Turing's paper provided the philosophical justification, and Dartmouth provided the engineering attempt.

What made the AI Winter periods happen, and why did research continue?

AI Winters occurred when optimistic predictions about capabilities and timelines didn't materialize. In the 1970s and 1980s, early AI systems proved brittle and limited. They could handle narrow, well-defined problems but failed catastrophically outside their domains. Researchers had overestimated how quickly they could build general intelligence. However, research continued because fundamental problems were still unsolved, graduate students were still studying the field, and researchers like McCarthy and Minsky kept insisting the work was important. The field contracted but didn't disappear. This ultimately proved valuable—the smaller, more focused research community during the winters made important theoretical progress that enabled modern breakthroughs.

How does modern AI relate to the original Turing and Dartmouth vision?

Modern AI, particularly large language models, validates the core vision that intelligent behavior can be mechanically replicated. Systems like ChatGPT can engage in conversation and complete complex tasks, demonstrating that Turing's test is passable. However, modern AI uses statistical learning from data rather than the symbolic logic that early researchers emphasized. The shift from symbol manipulation to neural networks was unexpected, but the fundamental principle, that intelligence is mechanically reproducible, has proven correct. Modern AI researchers still grapple with problems Dartmouth identified: learning, language, abstraction, and reasoning.

Why is common sense still a major unsolved problem in AI?

Common sense—the vast background knowledge about how the world works that humans acquire through experience—is incredibly difficult to mechanically replicate. You can train systems on massive text datasets, but text doesn't capture the physical experience of gravity, causality, object permanence, and spatial relationships. McCarthy recognized this challenge and spent much of his later career trying to formalize common sense. Modern AI systems demonstrate this limitation regularly: they might write sophisticated text but give absurd answers about basic physics. Building common sense seems to require not just text training but interaction with environments, multimodal learning, and fundamentally new approaches we haven't yet discovered.

What would Alan Turing think about ChatGPT and modern large language models?

Turing would likely be impressed that systems can engage in extended, coherent conversation, which is exactly what his imitation game (now known as the Turing Test) was designed to probe. He'd be intrigued by the mathematical mechanisms of neural networks and fascinated by why scaling them produces such diverse capabilities. However, he'd probably also be realistic about limitations. Turing was skeptical of grand promises and understood that intelligence is complex. He'd likely appreciate that modern AI systems excel at language tasks while struggling with reasoning and common sense. He'd probably have useful questions about why these systems fail in particular ways and what that teaches us about intelligence itself.

How do the failures and successes of AI so far inform predictions about future AI development?

The history suggests that progress is possible but slower and weirder than expected. Early researchers underestimated fundamental problems like common sense and overestimated how quickly those problems would be solved. Meanwhile, they missed that statistical learning from massive datasets would prove more powerful than symbolic logic. The lesson is to be skeptical of both doom-saying and utopian predictions. Genuine progress continues, but it doesn't follow the paths anyone predicted. The next breakthrough might come from new theoretical insights rather than just scaling existing approaches. Humility about what we don't know, a trait McCarthy and Turing shared, remains essential as AI development accelerates.


Key Takeaways

- Alan Turing's 1950 paper 'Computing Machinery and Intelligence' reframed intelligence from abstract philosophy to testable behavior through his famous imitation game, laying a foundation for subsequent AI research.

- The 1955 proposal for the Dartmouth Summer Research Project, with its $13,500 budget request, formally named and organized artificial intelligence as an academic field, attracting researchers and funding for decades.

- John McCarthy pioneered pragmatic skepticism about AI timelines while building the field, consistently warning against hype while believing genuine progress was possible.

- The transition from the symbolic, logic-based AI systems of the 1960s-80s to the statistical deep learning of the 2000s-2020s was unexpected, but it supported Turing's core insight that intelligence is mechanically replicable.

- Common sense remains AI's unsolved problem 70 years later: systems can handle language and logic but still fail at basic physical reasoning that children find trivial.

