The Day Tech's Most Powerful CEO Faced His Accusers

Mark Zuckerberg walked into a downtown Los Angeles courthouse on an ordinary Tuesday morning, but nothing about the moment felt routine. He wasn't alone. Flanking him was a small entourage of what appeared to be Meta employees wearing the company's Ray-Ban smart glasses, a subtle flex of corporate tech that seemed almost tone-deaf given what was happening inside the courtroom.

On the other side of the courtroom doors sat something far heavier: parents. Real parents. Parents whose children had died. They'd come to watch the man who built the platforms their kids used compulsively defend himself against allegations that his company's design choices had contributed to those deaths. The juxtaposition was stark and uncomfortable, and the parents' presence was no accident.

Zuckerberg was there to testify in what's becoming one of the most consequential tech trials of the decade. Not about antitrust violations, though Meta faces those too. Not about data privacy breaches, though the company has settled those cases before. This trial was about something more primal: whether the design of social media platforms, specifically Instagram and its features, was deliberately engineered to addict young people and harm their mental health.

The case centered on K. G. M., a 20-year-old woman claiming that Meta and Google's product decisions encouraged compulsive app usage that led to severe mental health issues. K. G. M. wasn't alone in these claims. Across the country, similar lawsuits were brewing, each one chipping away at the narrative that tech companies are just neutral platforms where people voluntarily spend their time.

For eight straight hours, Zuckerberg sat in that witness chair, often responding in his characteristic cadence. Matter-of-fact. Sometimes monotone. Always careful. He'd answer questions about AR filters that mimic cosmetic surgery. Questions about contradictions in his public statements about keeping young kids off his platforms. Questions about internal discussions where his own executives debated whether certain features were doing more harm than good.

The trial wasn't just about testimony, though. It was a theater of competing narratives about what social media actually is, what responsibility tech companies bear, and whether a 20-year-old woman's deteriorating mental health could be directly tied to algorithms and design choices made in Menlo Park, California.

The Ray-Ban Gambit: Tech Flexing in the Courtroom

Let's address the elephant in the room first, because it tells you something important about how Meta operates even in crisis moments.

Zuckerberg didn't show up alone. He brought along people wearing Meta's Ray-Ban smart glasses. The judge wasn't happy about it. In fact, the judge explicitly warned everyone in the courtroom that wearing Meta's AI glasses could result in contempt of court charges. The judge was concerned about potential recordings being made inside the courtroom, which would violate trial rules.

This detail seems small, but it's actually revealing. Here's Zuckerberg, walking into what might be the most serious legal challenge to Meta's business model in years, and his instinct is to showcase the company's latest hardware. It's the kind of move that suggests either remarkable confidence or remarkable tone-deafness. Maybe both.

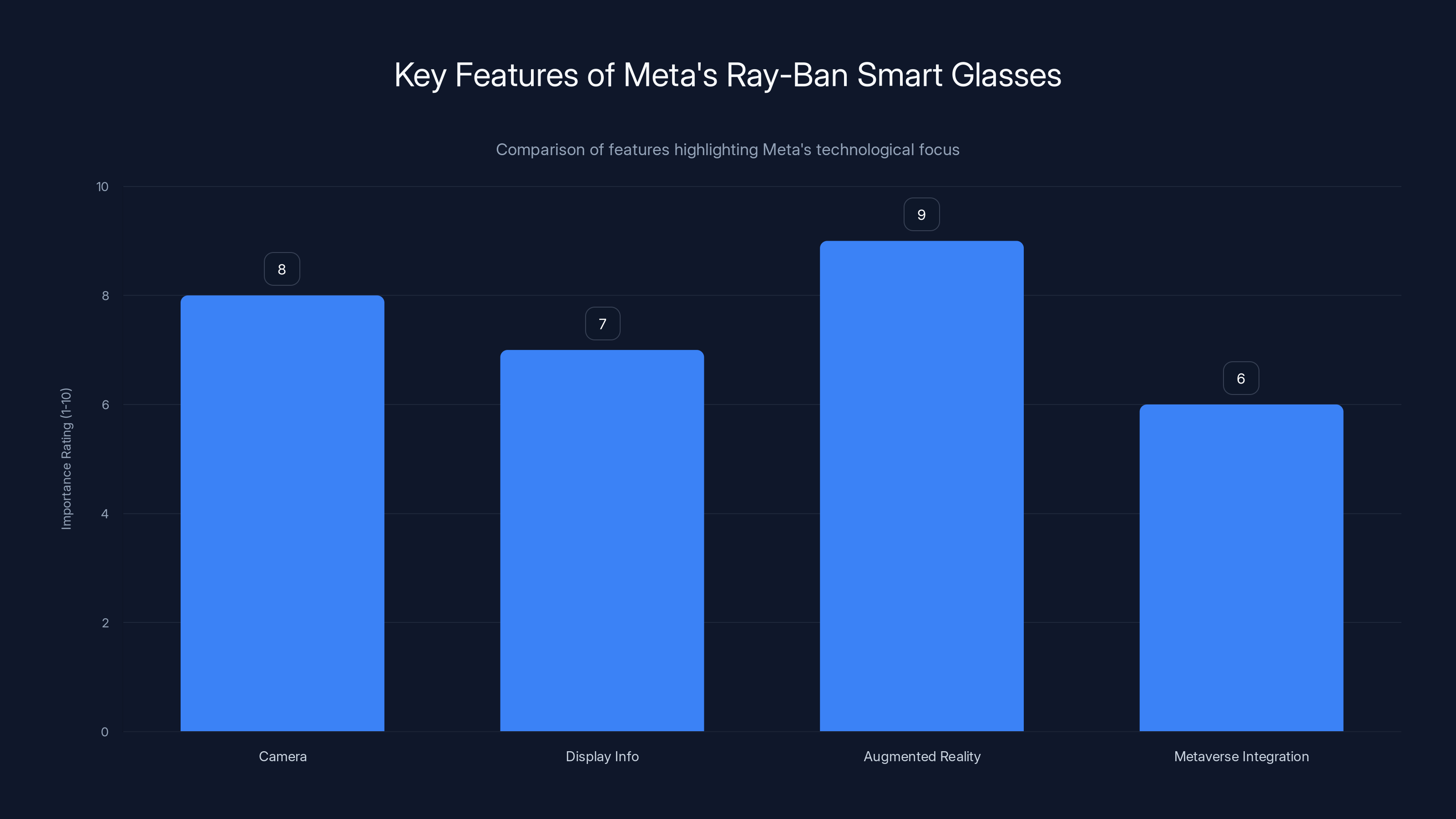

The glasses weren't just props. They represent Meta's pivot toward what the company calls "the next computing platform." Zuckerberg has been betting the company's future on augmented reality and spatial computing. The Ray-Bans are the consumer-facing expression of that bet. They have cameras, they can display information, and they're positioned as the gateway to the metaverse Zuckerberg has been evangelizing for years.

Bringing them to his trial testimony? It sends a message: Meta isn't backing down. The company isn't apologizing for what it built. In fact, Meta is moving forward, building the next thing, confident enough in its legal position that it can afford to show off new hardware.

Whether that confidence is justified is an entirely different question.

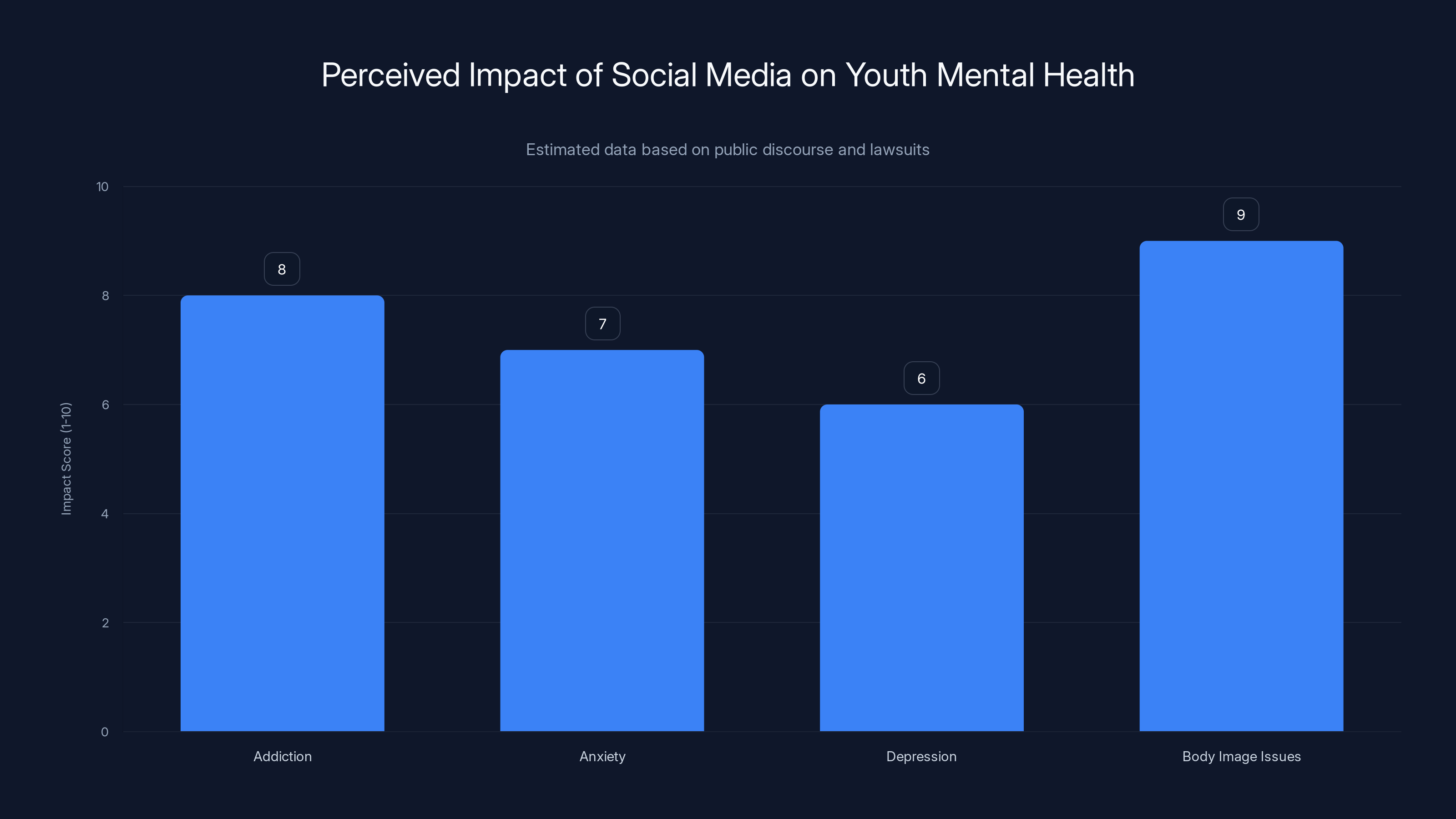

Estimated data suggests that social media platforms are perceived to have a high impact on addiction and body image issues among youth.

What K. G. M. Actually Claimed, and Why It Matters

To understand what Zuckerberg was defending, you need to understand the plaintiff's case.

K. G. M. claimed that she compulsively used Instagram and other Meta platforms because of specific design choices the company made. These weren't accidental features. They were deliberate decisions, her lawyers argued, designed to maximize engagement, increase time spent on the platform, and keep users coming back for more dopamine hits.

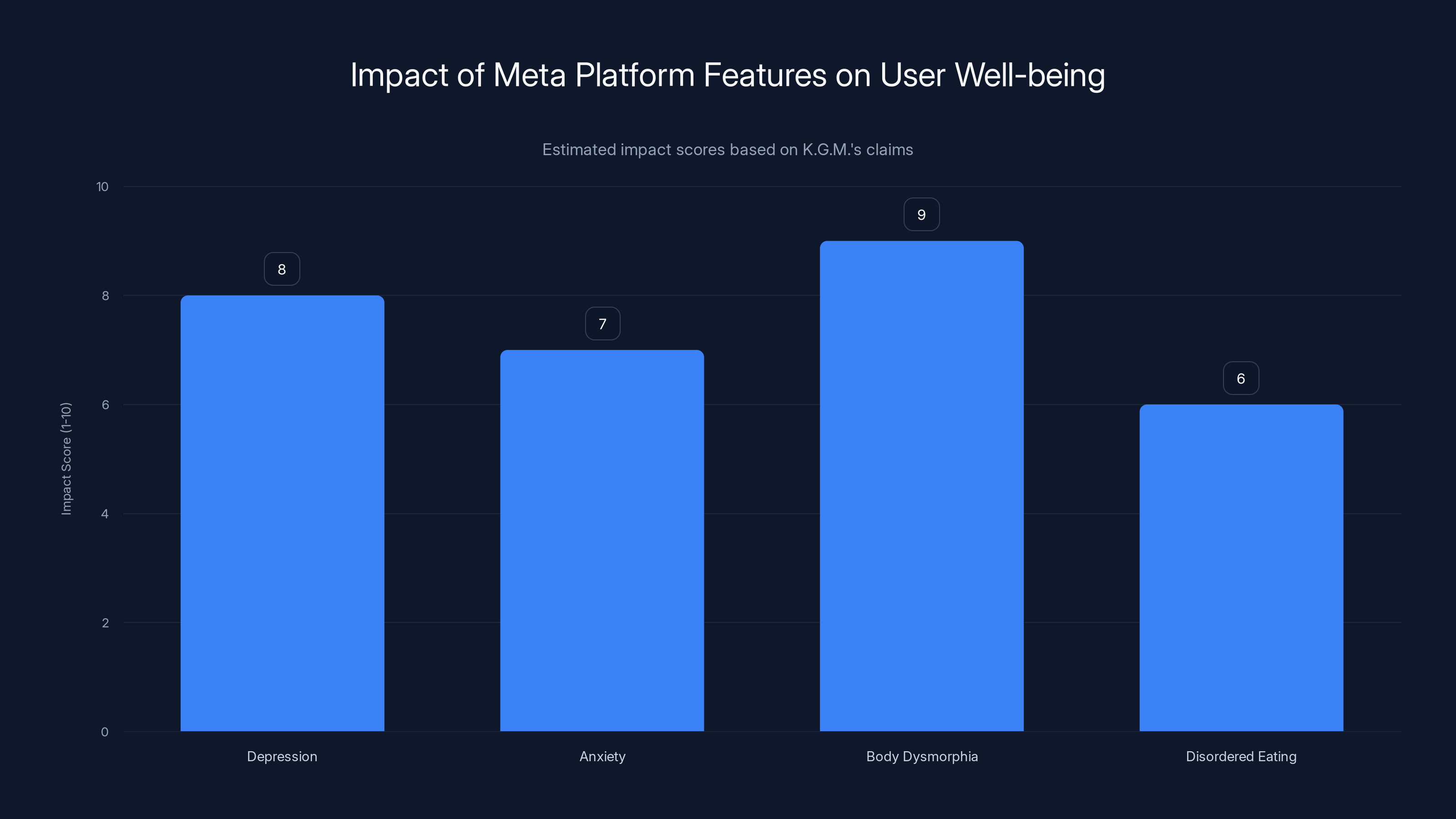

The specific harms she claimed: depression, anxiety, body dysmorphia, and disordered eating. She attributed these directly to features like infinite scroll, algorithmic feeds that amplified content designed to trigger emotional responses, and yes, AR filters that let her alter her face to conform to impossible beauty standards.

Meta's position throughout the trial was consistent: the company doesn't design its platforms to harm people. Rather, Meta makes choices that balance competing values. Free expression matters. User choice matters. The ability for creators to build beauty filters matters. When you build a social network, you have to think about what people actually want to do, not just protect them from themselves.

Zuckerberg's defense of the AR filter decision perfectly encapsulates this tension. When asked why Meta didn't permanently ban filters that simulate cosmetic surgery, Zuckerberg said something that gets to the philosophical core of his approach to product design: "You don't really build social media apps unless you care about people being able to express themselves."

In other words, if you restrict what people can do on your platform too much, you're no longer building a social media app. You're building something paternalistic. Something that assumes people can't be trusted with their own choices.

But here's where it gets legally and ethically murky. K. G. M.'s lawyers weren't arguing that the filters should be banned because they're bad. They were arguing that Meta banned them initially, then unbanned them, and that this decision was driven by internal metrics showing that the filters drove engagement and usage. The company knew they were problematic, the argument went, but chose engagement over user wellbeing.

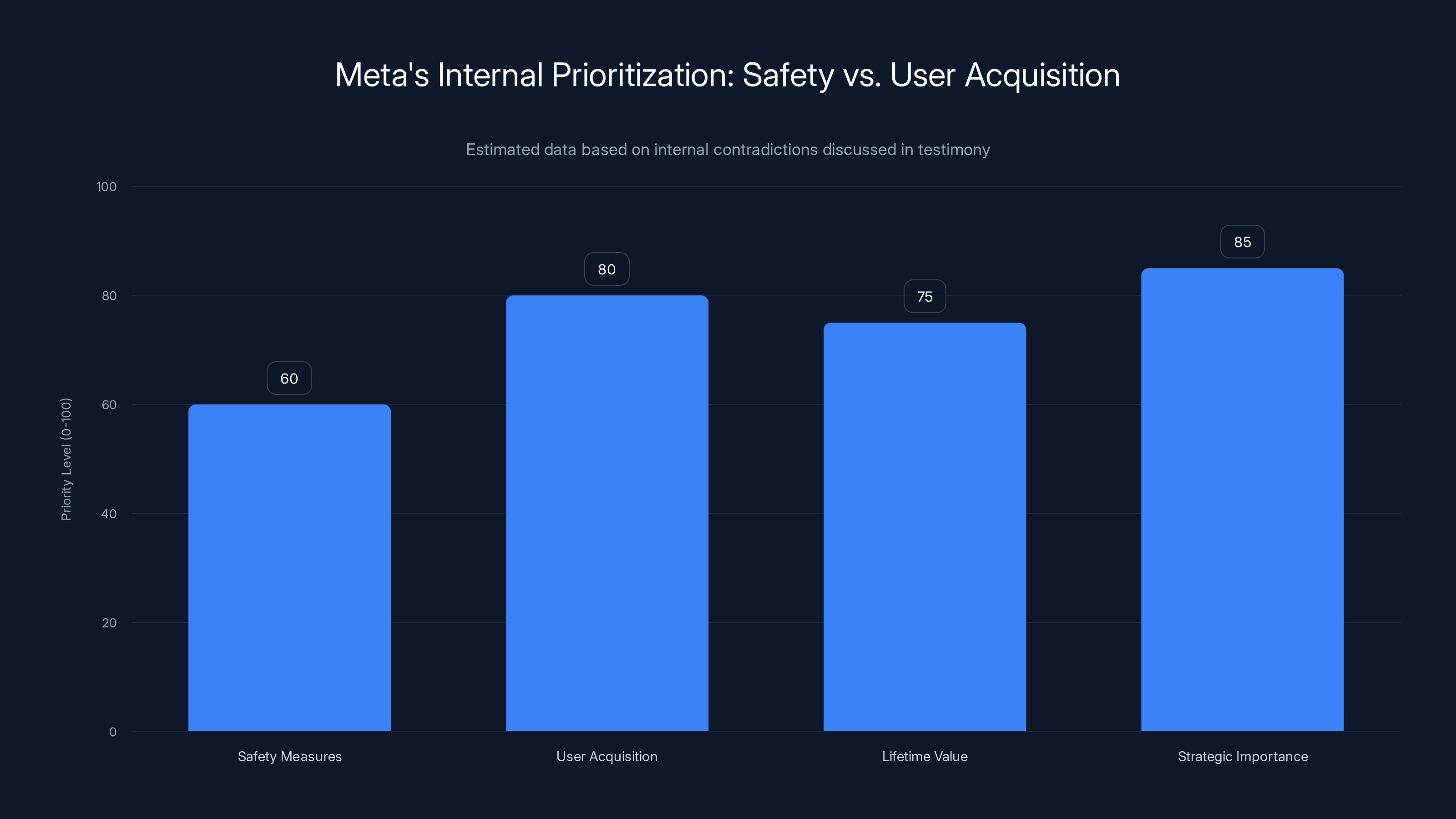

Estimated data suggests that while safety was a priority, internal discussions emphasized user acquisition and strategic importance of young users, potentially outweighing safety concerns.

The Internal Contradictions: What Meta's Own Executives Said

One of the most damaging parts of Zuckerberg's testimony involved emails and internal messages from Meta executives that seemed to contradict the company's public defense.

For years, Zuckerberg had publicly stated that Meta wanted to keep kids under 13 off its platforms. He'd said this in interviews, in congressional testimony, in earnings calls. It was part of Meta's narrative: we care about kids' safety, we're building age-appropriate features, we want to protect young people.

But internal documents told a different story. The documents discussed the value of getting young users on the platform early. They discussed the "lifetime value" of users acquired at young ages. They discussed the strategic importance of Instagram to younger demographics.

Zuckerberg was confronted with these contradictions during his testimony. How do you square your public commitment to keeping young kids off your platforms with internal discussions about the value of young users?

His answer, as documented in testimony, was that the company needed to balance safety with other values. Meta did want to protect kids, but it also wanted to serve users who were using the platform. The company made decisions on a case-by-case basis, weighing the evidence for potential harms against other considerations.

This is the kind of answer that sounds reasonable in isolation. But when you're defending it in court, with parents of deceased children watching from the gallery, it lands differently. It sounds like you're choosing profit over protection. It sounds like you knew there were risks and calculated that those risks were acceptable.

Opposing counsel Mark Lanier presented a deliberate contrast to Zuckerberg. Lanier is a pastor as well as a litigation attorney. His speaking style is charismatic, rhythmic, and emotionally resonant. He draws on rhetorical techniques from the pulpit. When he questioned Zuckerberg, he wasn't just asking questions. He was constructing a narrative for the jury about a company that prioritized engagement metrics over the wellbeing of vulnerable young people.

Zuckerberg, for his part, pushed back. At one point, according to reporting, he said: "That's not what I'm saying at all." He was trying to inject nuance, to explain that the company's thinking was more complex than Lanier was suggesting. But nuance is a tough sell when you're defending decisions that affected millions of kids.

The AR Filter Decision: Where the Case Crystallized

The AR filter question became the central battleground of the testimony, and for good reason.

In 2019, Instagram temporarily banned filters that altered facial features in ways that mimicked cosmetic procedures. The ban was meant to last only while Meta researched the impact of these filters on user wellbeing. Instagram's chief, Adam Mosseri, had been questioned about this decision the week before Zuckerberg testified.

During his testimony, Zuckerberg was asked to explain why the ban was never made permanent. His answer: after reviewing the research, he didn't think there was compelling evidence of harm sufficient to justify restricting this form of expression.

There was research showing potential negative effects, sure. But the evidence wasn't, in his view, unambiguous enough. And when you're making a decision that involves restricting expression, you need clear evidence. You can't just restrict what people can say because you think it might be harmful.

So Meta made a compromise. The filters wouldn't be permanently banned. But the company wouldn't promote them either. Instagram wouldn't create these filters itself. But users and creators could make them. This was, in Zuckerberg's framing, the balanced approach.

The problem, from K. G. M.'s perspective, was that this "balanced approach" still left the filters available. A young person struggling with body image could still use them. They could still alter their face to conform to impossible beauty standards. And Instagram's algorithm, designed to maximize engagement, might even show them these filters more frequently if the algorithm learned that using them kept the user engaged.

Zuckerberg's defense boiled down to epistemic humility: we didn't have clear enough evidence that banning these filters was the right call. So we made a decision that respected user choice while mitigating some of the harms.

But there's a tension here that the trial was designed to expose: when you're Instagram, and your entire business model depends on usage metrics, claiming that you're balancing values becomes suspect. The company profits from engagement. Features that drive engagement are more likely to be retained. Research showing harms is easier to dismiss than research showing benefits.

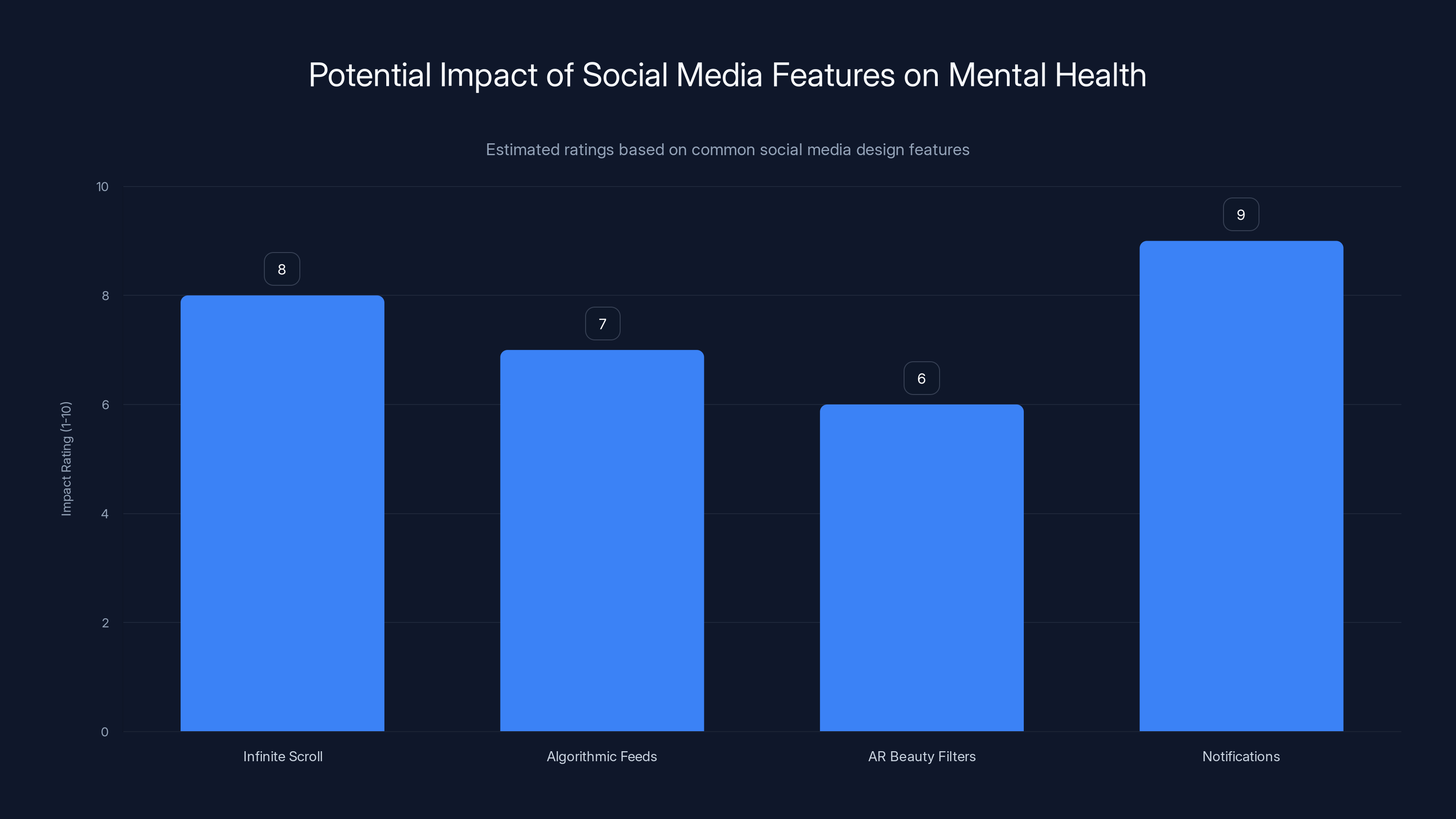

Estimated data suggests that notifications and infinite scroll have the highest potential impact on mental health issues, followed by algorithmic feeds and AR beauty filters.

The Witness Stand Theater: Zuckerberg Under Pressure

Watching video footage of Zuckerberg's testimony, you notice something interesting. He doesn't seem nervous. He doesn't seem intimidated. He seems, if anything, somewhat remote. His responses are technically accurate but emotionally flat.

This is strategic, of course. When you're a billionaire CEO testifying in a case where the opposing side is arguing your company harms vulnerable young people, you don't want to come across as defensive or rattled. You want to seem calm, thoughtful, maybe even sympathetic to the concerns being raised.

But there's a cost to that strategy. When you're answering questions about kids whose lives were harmed by your platform in the same tone you'd use to discuss server architecture, it creates cognitive dissonance. The jury hears the content of what you're saying, but they also absorb something about your emotional relationship to the question. And if that relationship seems distant or calculated, it undermines your credibility.

Lanier understood this. By maintaining his charismatic, emotionally engaging style, he was creating a contrast. Here's a person who cares deeply about justice and the wellbeing of young people. And here's a person who's explaining, in measured tones, why he didn't think the evidence was compelling enough to change his product.

Zuckerberg did try to push back on specific characterizations of his testimony. When Lanier suggested something that Zuckerberg felt misrepresented his position, he'd correct it. "That's not what I'm saying at all." These corrections showed that Zuckerberg was engaged, that he cared about accuracy. But they also made him sound lawyerly, defensive.

The problem with testifying in a case like this is that there's no winning move. If you're too emotional, you seem like you're manipulating the jury. If you're too measured, you seem like you don't care. If you correct mischaracterizations, you seem defensive. If you don't, your position goes unchallenged.

Zuckerberg walked a narrow line, and whether he succeeded depends entirely on what the jury believes about the fundamental question: Is Meta's design philosophy a reasonable approach to building social networks, or is it a system optimized for profit that treats harms to young people as an acceptable cost of business?

The Broader Pattern of Regulation and Legal Challenges

Zuckerberg's testimony wasn't happening in a vacuum. It was part of a larger pattern of legal challenges to Meta and other tech companies.

States across the country have passed laws restricting how social media companies can target minors. Congress has held numerous hearings on social media's impact on youth mental health. There have been multiple antitrust cases against Meta. And across all of this, the fundamental question keeps coming up: What responsibility do tech companies bear for the harms their products cause?

This trial was significant because it was asking juries, not regulators or politicians, to answer that question. And jury decisions shape both future litigation and regulatory policy.

If K. G. M. won her case, it would open the door to thousands of similar lawsuits. It would suggest that tech companies can be held liable for design choices that lead to mental health harms. It would make it much harder for companies like Meta to claim they're just neutral platforms where people voluntarily interact.

If Meta won, it would be a statement that even if there are correlations between social media use and mental health issues, that doesn't mean the company that designed the platform is responsible. It would suggest that regulators and legislators, not juries, are the appropriate venue for deciding what kinds of design choices should be allowed.

Zuckerberg's testimony was crucial to that calculation. The jury needed to assess his credibility, his character, and whether they believed his explanations for the company's product decisions.

Estimated data suggests that features like infinite scroll and AR filters significantly impact mental health, with body dysmorphia being the most affected.

The Mental Health Science Question

Underlying all of this was a scientific question that's still genuinely unsettled: Does social media use actually cause mental health problems, or does it correlate with them in ways that don't imply causation?

There's research suggesting both. Some studies show correlations between heavy social media use and depression, anxiety, and eating disorders. But correlations aren't causation. It's also possible that people who are already struggling with mental health issues use social media more heavily as a form of escape or self-medication.

K. G. M.'s case was arguing for a direct causal link: Instagram's design caused her mental health to decline. Meta's position was that the evidence for that causal link wasn't strong enough to justify the conclusions she was drawing.

Scientifically, Meta has a point. The evidence is genuinely mixed. There are plausible mechanisms by which social media use could harm mental health, but there are also plausible explanations for why the correlations we observe might not be causal.

But there's a legal and ethical issue here: if we wait for absolute certainty in the scientific evidence before restricting potentially harmful products, we'll never restrict them. Tobacco companies used the same argument for decades. There's no absolute proof, they said, that smoking causes cancer. And in a narrow sense, they had a point: the evidence was statistical, and it could never prove that a specific person's cancer was caused by smoking.

Society eventually decided that the preponderance of evidence was enough to justify restrictions, even without absolute proof. The question for Zuckerberg's trial was whether social media design should be treated the same way.

Contradictions Between Public Statements and Private Actions

One of the most effective lines of questioning during Zuckerberg's testimony involved contradictions between what he'd said publicly and what internal documents revealed about the company's thinking.

This is a pattern that's repeated itself in tech litigation: A CEO makes public statements about caring about privacy, safety, or some other value. Then internal emails emerge showing the company prioritizing something else. The company's defenders then argue that the CEO's statements were taken out of context, or that they reflect the company's values even if they don't perfectly describe its practices.

But from a jury's perspective, it's more straightforward: The CEO said one thing publicly and was thinking something different privately. That suggests deception, or at least a gap between rhetoric and reality.

Zuckerberg was confronted with documents showing that Meta's executives discussed the value of acquiring young users early. He was asked how this squared with his public statements about wanting to keep kids under 13 off the platforms.

His explanation was that Meta wanted to serve all users responsibly, including young people, even if the company ideally wanted to delay their involvement. It's a bit like saying you want to discourage people from smoking, but you also sell cigarettes and you're interested in who your customers are.

Technically, both things can be true. You can want fewer young people using your platform while also wanting to understand and serve the young people who do use it. But it's a difficult position to defend when your entire business model depends on growth, and growth means getting users at the youngest ages possible.

Meta's Ray-Ban smart glasses emphasize augmented reality and spatial computing, indicating a strong focus on future tech platforms. Estimated data.

The Content Moderation Philosophy

Understanding Zuckerberg's approach to content moderation is crucial to understanding his testimony.

For years, Zuckerberg has positioned Meta as a company that values free expression. He's been skeptical of taking down content, even content that other platforms have removed. He's argued that it's better to allow speech to compete with other speech than to restrict it.

This philosophy shows up in his answers about the AR filters. He didn't want to ban them because banning them would constitute a restriction on expression. Even if there were concerns about their effects, that concern wasn't sufficient to justify the restriction.

But there's a contradiction here too: Meta does restrict content all the time. The company has policies against hate speech, harassment, misinformation, and many other categories of expression. So it's not that Zuckerberg values absolute free expression. It's that he makes calculations about when restrictions are justified.

The question Lanier was raising was whether those calculations are appropriately weighted. Are they weighted toward protecting vulnerable users, or are they weighted toward maintaining engagement and avoiding restrictions that would reduce usage?

Zuckerberg's position seems to be that they're appropriately balanced, that the company considers user wellbeing alongside other values. But the internal documents and the product decisions suggest that when there's a conflict, engagement often wins.

The Parents in the Courtroom

One element of Zuckerberg's testimony that shouldn't be overlooked is the context in which it was happening.

There were parents in the courtroom. Parents whose children had experienced severe mental health issues or, in some cases, had died. They were there to watch Zuckerberg defend the design choices that they believed had harmed their kids.

That's a powerful dynamic. It transforms the trial from an abstract legal question into something visceral and emotional. It's not just about whether Instagram should have different design rules. It's about whether Zuckerberg cares about the fact that real people were harmed.

From a legal standpoint, the jury was supposed to be swayed by evidence and legal standards, not by emotional appeals. But juries are made up of people, and people are moved by emotional contexts.

Zuckerberg didn't engage with the parents directly during his testimony. He remained focused on the legal questions being asked. This was probably the right move tactically (getting defensive about the parents' claims would have looked bad), but it also meant that he didn't have an opportunity to demonstrate empathy or concern for their situation.

This absence became its own kind of presence. By focusing on the technical questions and the evidence, without acknowledging the human cost of his decisions, Zuckerberg reinforced the impression that he views the question from an intellectual, not emotional, perspective.

What Happens Next: The Broader Implications

Zuckerberg's testimony was one day in what will be a lengthy trial. There will be other witnesses. There will be more evidence presented. And eventually, there will be a verdict.

But regardless of what happens in this specific case, Zuckerberg's testimony reflects a broader shift in how tech companies are being held accountable.

For years, tech companies operated in a regulatory gray zone. They were powerful, but they were also new enough that the rules hadn't fully caught up to them. They could make product decisions that affected millions of people without being required to explain or justify those decisions to anyone.

Now that's changing. Companies are being called to explain themselves. CEOs are sitting in witness stands. Internal documents are being exposed to public scrutiny.

Zuckerberg's strategy in his testimony was to position Meta as a thoughtful company making careful decisions in complex situations. The company cares about free expression. It also cares about user wellbeing. It tries to balance these values. Sometimes the evidence is unclear, so the company makes judgment calls.

It's a reasonable position. It might even be a true position. But it's increasingly difficult to maintain when your company's business model creates strong incentives to weight engagement heavily in every one of those judgment calls.

The Future of Social Media Regulation

Zuckerberg's trial is just the beginning of a broader reckoning with how social media platforms should be regulated.

States have already started passing laws. Some states have restricted algorithmic feeds for minors. Others have limited data collection from young users. Some have attempted to address specific features, like infinite scroll, that researchers believe encourage excessive use.

At the federal level, there have been multiple legislative proposals. Some focus on transparency, requiring companies to disclose how their algorithms work. Others focus on user control, giving people more ability to opt out of algorithmic feeds. Still others focus on liability, making companies responsible for harms caused by their platforms.

Zuckerberg's testimony may influence how regulators and legislators approach these questions. If the jury finds Meta liable for harms to K. G. M., it will embolden regulators to push for stronger restrictions. If Meta prevails, it will be a signal that courts aren't the right venue for these questions, and that regulation needs to come from legislatures instead.

Either way, the old model of tech companies making product decisions with minimal outside oversight is clearly ending.

Zuckerberg's Credibility Under Scrutiny

A major factor in how the jury responds to Zuckerberg's testimony will be their assessment of his credibility.

On one hand, Zuckerberg is one of the most powerful people in the world. He's not intimidated by the process. He's experienced in public speaking and in fielding difficult questions. He came across as calm and thoughtful.

On the other hand, there were moments where his answers seemed evasive or carefully calculated. When asked about contradictions between his public statements and internal documents, he found ways to explain them that technically made sense but didn't seem to fully acknowledge the tension.

The jury will be assessing whether they believe him. Do they believe that Meta genuinely cares about user wellbeing? Do they believe that the company's decisions were driven by a good-faith balance of competing values? Or do they believe that the company prioritized engagement above all else and rationalized its decisions after the fact?

Zuckerberg's testimony was one data point in that assessment. But the jury will also be looking at the internal documents, the expert testimony, and the trajectory of Meta's product decisions over time.

The Symbolic Power of the Ray-Bans

Let's return to where we started: the Ray-Ban smart glasses.

There's something emblematic about Zuckerberg showing up to defend his company against allegations that it harms young people's mental health while flanked by an entourage wearing bleeding-edge smart glasses, hardware meant to cast Meta as forward-thinking and innovative.

The judge didn't like it, explicitly warning people not to wear the glasses out of concern that recordings could be made in the courtroom.

But Zuckerberg's decision to bring them along sends a message: Meta is moving forward. The company has bigger visions than just answering questions about Instagram's design choices from 2019. The company is building the next computing platform. This trial is an inconvenience, not a fundamental challenge to the company's direction.

Whether that's the right message to send to a jury that's deciding whether his company harmed a young woman is another question entirely.

FAQ

What was the main allegation against Meta in this trial?

K. G. M. claimed that Meta's design features on Instagram, particularly algorithmic feeds, infinite scroll, and AR beauty filters that simulate cosmetic surgery, were deliberately engineered to encourage compulsive use and contributed to her mental health issues including depression, anxiety, and body dysmorphia. She argued that Meta prioritized engagement metrics over user wellbeing, especially for young people.

Why was Zuckerberg's testimony about AR filters so important?

The AR filter decision became a centerpiece of the trial because it represented a specific, documented moment where Meta had to decide between restricting a feature due to potential mental health harms or allowing it to promote user expression. Zuckerberg testified that he reviewed research on the filters' impact on wellbeing but found the evidence of harm not compelling enough to justify a permanent ban. This decision crystallized the broader question of how Meta balances free expression against potential harms.

What contradictions were revealed between Meta's public statements and internal documents?

Zuckerberg had publicly stated that Meta wanted to keep children under 13 off its platforms, but internal documents showed executives discussing the strategic value of acquiring young users early and understanding the "lifetime value" of users acquired at young ages. This discrepancy raised questions about whether the company's public commitment to child safety aligned with its internal business thinking.

How does social media design potentially contribute to mental health issues?

Research suggests several mechanisms: infinite scroll encourages extended usage beyond what users intend; algorithmic feeds can amplify emotionally triggering content; social comparison through curated feeds may fuel anxiety and body dysmorphia; and notification systems create intermittent rewards that can reinforce compulsive checking behaviors. However, scientists debate whether these correlations prove direct causation or if people with existing mental health struggles simply use social media more heavily.

What was the significance of the Ray-Ban smart glasses at the trial?

Zuckerberg arrived at the trial flanked by people wearing Meta's Ray-Ban smart glasses, which feature cameras and AI capabilities. The judge explicitly warned against using them in the courtroom, concerned about unauthorized recordings. The gesture was symbolically significant because it suggested Meta was more focused on its future vision than on addressing current harms, which could negatively impact jury perception during testimony about a young woman's suffering.

How did Zuckerberg's testimony approach compare to the opposing counsel's style?

Zuckerberg maintained a calm, measured, matter-of-fact tone throughout his eight hours of testimony, offering technical explanations and nuanced defenses of Meta's decisions. In contrast, opposing counsel Mark Lanier, who is also a pastor, used a more charismatic and emotionally resonant speaking style. This contrast highlighted the tension between Zuckerberg's intellectual framing and the emotional reality of harms experienced by young people and their families.

What effect could this trial have on future social media regulation?

The outcome of this trial could significantly influence how regulators and legislators approach social media oversight. If the jury finds Meta liable, it signals that courts will hold tech companies responsible for design-related harms, potentially opening the door to thousands of similar lawsuits and driving stricter regulation. If Meta prevails, it suggests that legislative action rather than litigation is the appropriate path for regulation, potentially slowing the pace of restrictions on platform design practices.

What is Meta's fundamental defense of its design philosophy?

Meta's position is that social media companies must balance multiple competing values including user expression, user choice, innovation, and user safety. Rather than restricting features based on potential harms, the company argues it needs clear evidence of significant harm before restricting what people can do or express. Meta claims it doesn't prioritize engagement over wellbeing but rather makes good-faith attempts to weigh all factors when making product decisions.

How are states and the federal government responding to concerns about social media's impact on youth?

Multiple states have begun passing legislation restricting how social media companies can target minors, including limitations on algorithmic feeds, data collection, and specific features like infinite scroll. At the federal level, several legislative proposals have emerged focusing on transparency (requiring disclosure of algorithm operations), user control (allowing people to opt out of algorithmic feeds), and liability (making platforms responsible for harms). These regulatory efforts suggest that the era of minimal oversight for tech companies is ending.

Key Takeaways

- Zuckerberg testified for eight hours defending Meta's product decisions, including the choice to allow AR beauty filters despite research on potential mental health harms

- Internal Meta documents revealed contradictions between Zuckerberg's public statements about protecting children under 13 and internal discussions about the strategic value of young users

- The central legal question is whether Meta is liable for harms its design choices caused young people, or whether those choices represent a reasonable balance of competing values including free expression

- Multiple states are already passing legislation restricting social media design features and youth targeting, suggesting regulatory momentum regardless of trial outcome

- The trial represents a broader shift in tech accountability, moving from voluntary self-regulation toward legal liability and legislative oversight of platform design

![Zuckerberg's Day in Court: Meta's AI Glasses and the Social Media Addiction Trial [2025]](https://tryrunable.com/blog/zuckerberg-s-day-in-court-meta-s-ai-glasses-and-the-social-m/image-1-1771475728452.jpg)