AI and the Future of Work: Understanding the Real Job Market Disruption

Introduction: Beyond the Fear of AI Replacement

When we hear about artificial intelligence and employment, the conversation typically gravitates toward one dominant narrative: machines will steal our jobs. This oversimplification has dominated headlines, policy discussions, and water cooler conversations for years. However, a more nuanced and arguably more important warning has emerged from industry leaders who've actually navigated major technological transitions. Cisco CEO Chuck Robbins recently articulated a fundamentally different concern—one that should reshape how we think about AI's impact on the workforce.

The real threat isn't artificial intelligence autonomously displacing workers en masse. Rather, it's the widening competitive gap between professionals who leverage AI effectively and those who don't. This distinction carries profound implications for career development, organizational strategy, and economic inequality. While an AI system performing routine tasks represents one type of disruption, a highly skilled professional equipped with AI tools outcompeting their peers represents a different challenge entirely—one that creates winners and losers within the same job categories.

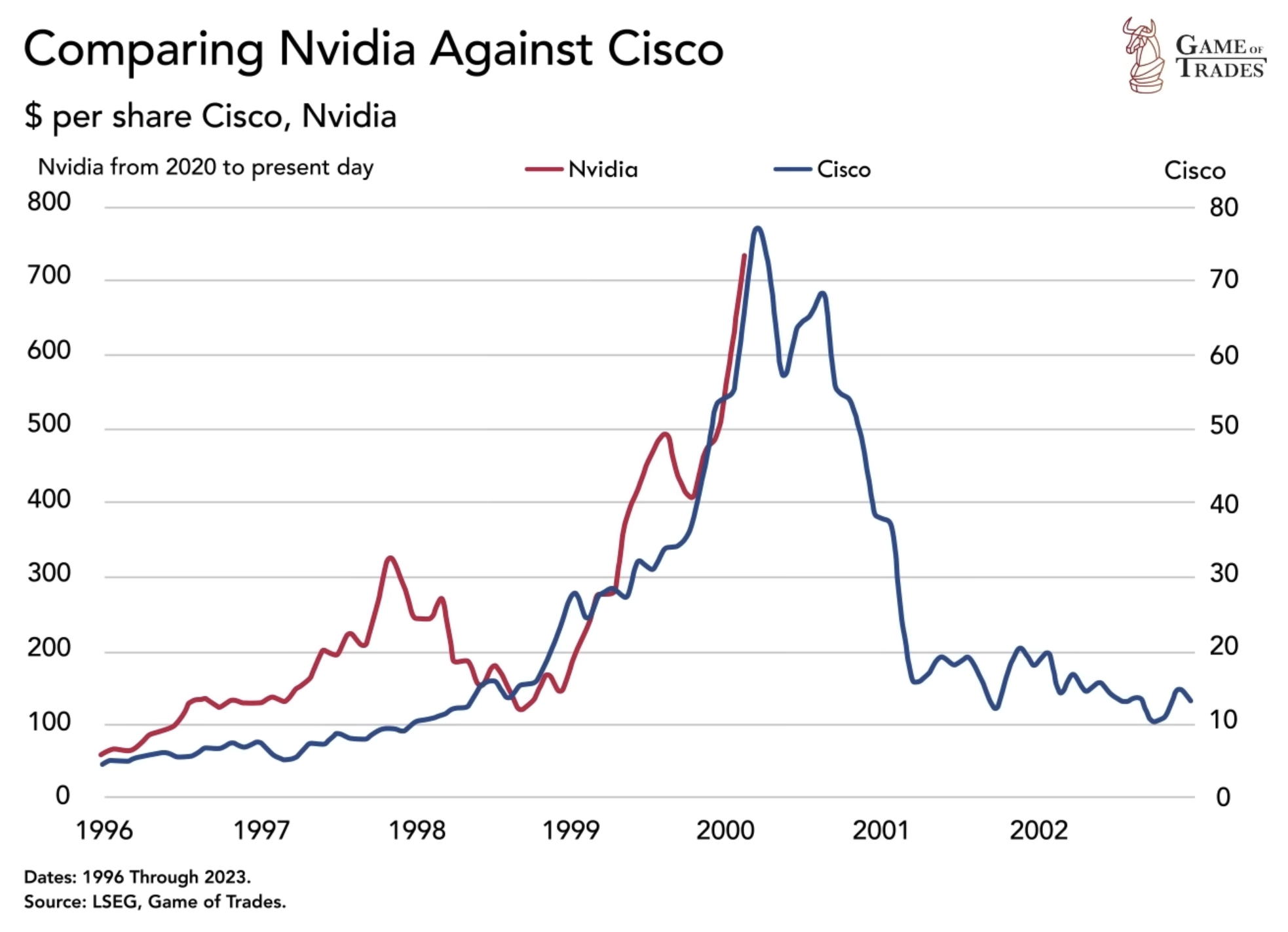

Robbins' warning carries particular weight because Cisco itself survived the dot-com bubble of 2000, when the company's stock plummeted approximately 80% despite its fundamental strength. Having witnessed how technological waves create both unprecedented opportunities and devastating failures, he brings credibility to discussions about AI's trajectory. His perspective isn't that we should panic about the technology itself, but rather that we should prepare for significant market restructuring as companies and workers adjust to AI's presence.

This comprehensive analysis explores the multifaceted implications of AI adoption on labor markets, examining economic data, industry-specific impacts, workforce preparation strategies, and the organizational changes already underway. By understanding the mechanics of how AI creates competitive advantages—and disadvantages—we can better position ourselves, our teams, and our organizations for the transition ahead.

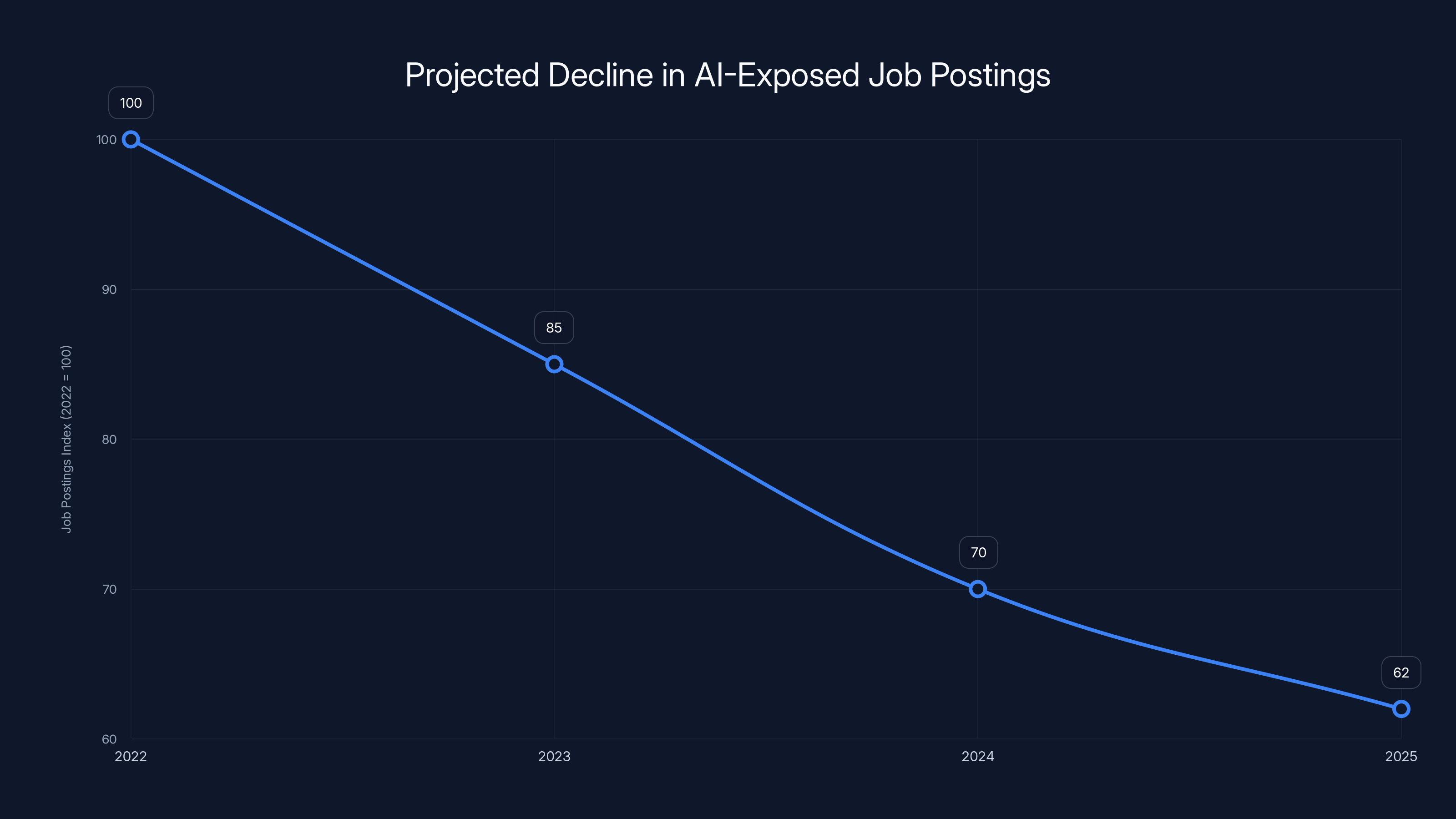

Job postings for roles with high AI exposure are projected to decline by 38% from 2022 to 2025, indicating a shift in labor market demands and skill requirements. Estimated data.

The Cisco CEO's Thesis: Skill Inequality, Not Obsolescence

The Quote That Reframes the Conversation

When Robbins stated, "You shouldn't worry as much about AI taking your job as you should worry about someone who's very good using AI taking your job," he crystallized a crucial insight that distinguishes between two entirely different labor market outcomes. This formulation moves beyond technological determinism—the idea that machines inevitably replace human workers—into a more complex reality about competitive advantage within professions.

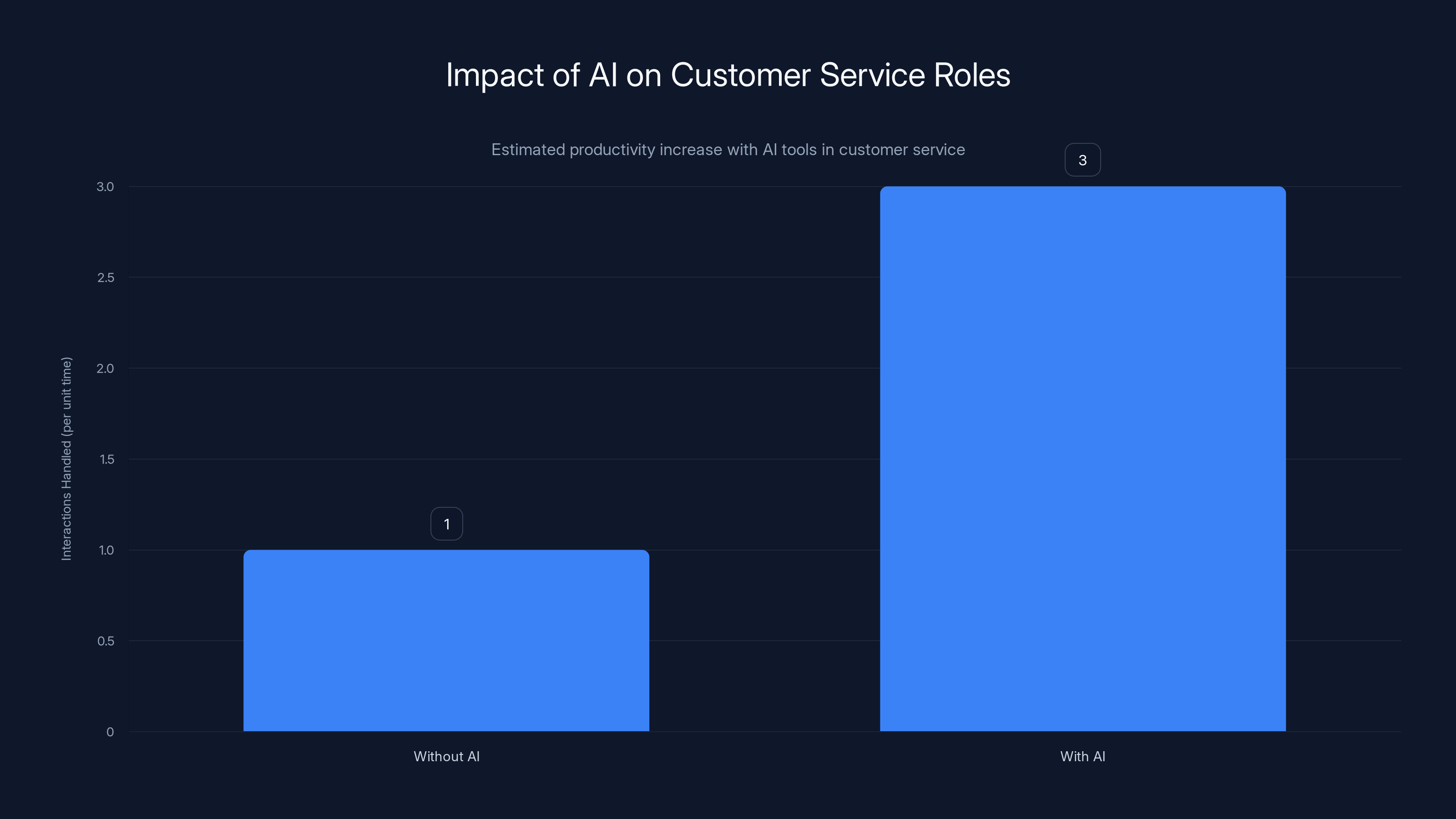

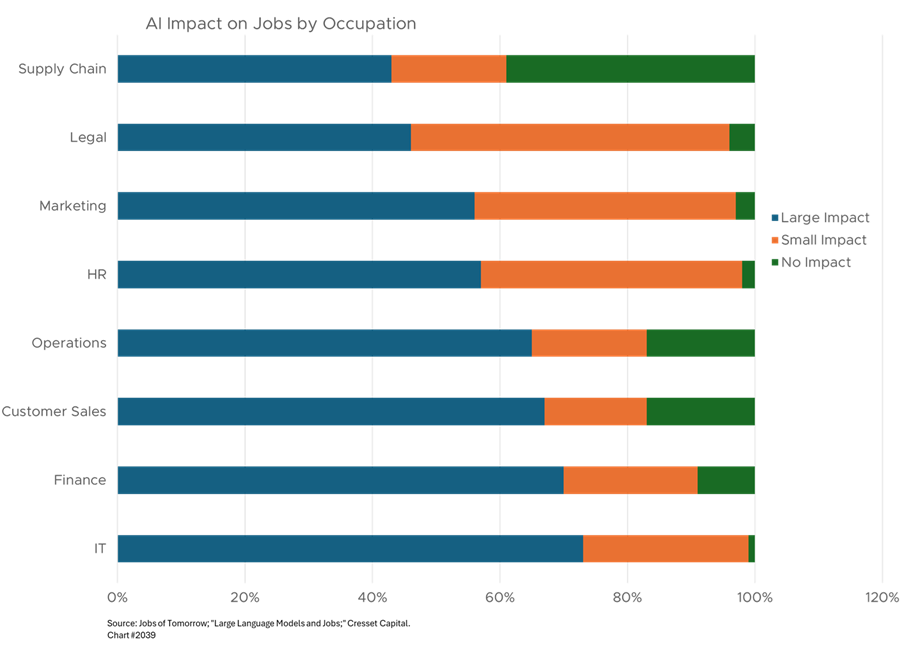

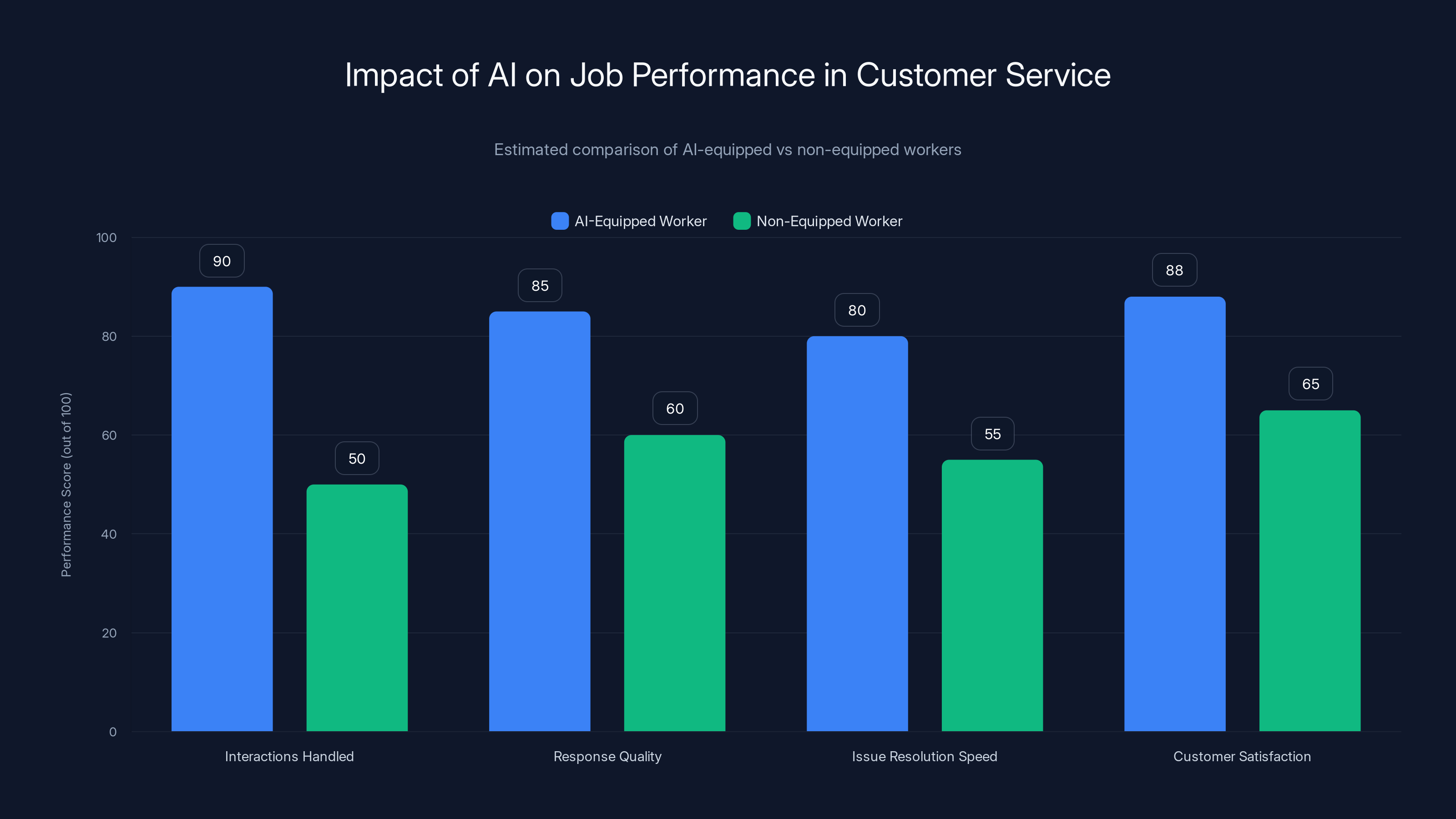

Consider customer service as the example Robbins specifically mentioned. An AI-powered customer service representative doesn't necessarily eliminate the role; rather, a human agent equipped with AI tools can handle dramatically more interactions, provide superior responses, and resolve issues faster than an agent without AI assistance. The job itself persists, but the performance differential between the AI-equipped and non-equipped worker becomes impossible to ignore. From an employer's perspective, hiring or retaining the AI-proficient worker makes logical sense. From an economic standpoint, workers without these skills face obsolescence not through technological replacement, but through competitive displacement.

This distinction carries profound implications for labor economics. Traditional displacement occurs when a technology fundamentally eliminates a function—the way ATMs reduced demand for bank tellers by automating cash distribution. But AI-driven competitive displacement works differently. The function remains, but the value proposition of workers without AI skills declines relative to their AI-equipped counterparts. This creates a more insidious challenge because it's harder to address through simple job retraining programs; it requires continuous skill acquisition and adaptation.

Historical Context: Why Cisco's Perspective Matters

Cisco's experience navigating the dot-com bubble provides important context for understanding how infrastructure-defining technologies disrupt markets. When the internet emerged as a fundamental shift in computing, companies that built networking infrastructure—like Cisco—initially saw explosive growth as businesses rushed to connect to this new paradigm. However, valuations became disconnected from fundamentals as speculative capital flooded the sector. The inevitable correction destroyed approximately 80% of Cisco's stock value, even though the underlying technology remained revolutionary.

The parallel to today's AI boom isn't perfect, but it illuminates an important dynamic. Robbins isn't arguing that AI won't transform the world or that the technology is overblown. Rather, he's suggesting that the current enthusiasm—with companies investing heavily in AI capabilities, building new AI-focused business units, and making strategic shifts based on AI potential—may outpace the actual economic value creation in the near term. This creates what economists call a "boom-bust cycle," where infrastructure overinvestment precedes a correction, which then precedes sustainable long-term growth.

For workers, this cycle creates multiple disruption points. During the exuberant growth phase, companies hire aggressively, skills become premium-priced, and early adopters gain advantages. During the correction, companies that over-invested in AI infrastructure face pressure, potentially leading to layoffs. During the recovery phase, only companies with sustainable AI-driven business models survive, and they employ workers skilled in integrated AI-human workflows. Workers who adapted during the growth phase have positioned themselves for long-term success; those who waited for "things to stabilize" may find themselves significantly disadvantaged.

The "Carnage Along the Way" Framework

What Robbins Actually Means by Disruption

When Robbins references "carnage along the way," he's describing a period of significant organizational and economic turbulence that precedes the establishment of new equilibrium. This isn't hyperbole—it reflects historical patterns in how transformative technologies reshape labor markets. The carnage includes multiple dimensions: companies that fail because they miscalculated AI adoption costs, workers whose skills become suddenly devalued, entire job categories that transform so rapidly that training programs can't keep pace, and regional economic disruptions as high-skill, AI-fluent workers migrate to AI hubs while other regions stagnate.

Consider how e-commerce disrupted retail, a process that's still unfolding decades later. The technology itself created enormous value and new employment categories. Yet the process involved store closures, regional retail ecosystem collapse, extensive workforce displacement, and painful adjustment periods. Workers at closed retail locations couldn't simply transition to e-commerce logistics jobs—the skills diverged, geographic locations often didn't align, and the speed of transition outpaced training capacity. Similar dynamics are likely with AI, but potentially more rapid because the technology advances faster than e-commerce did.

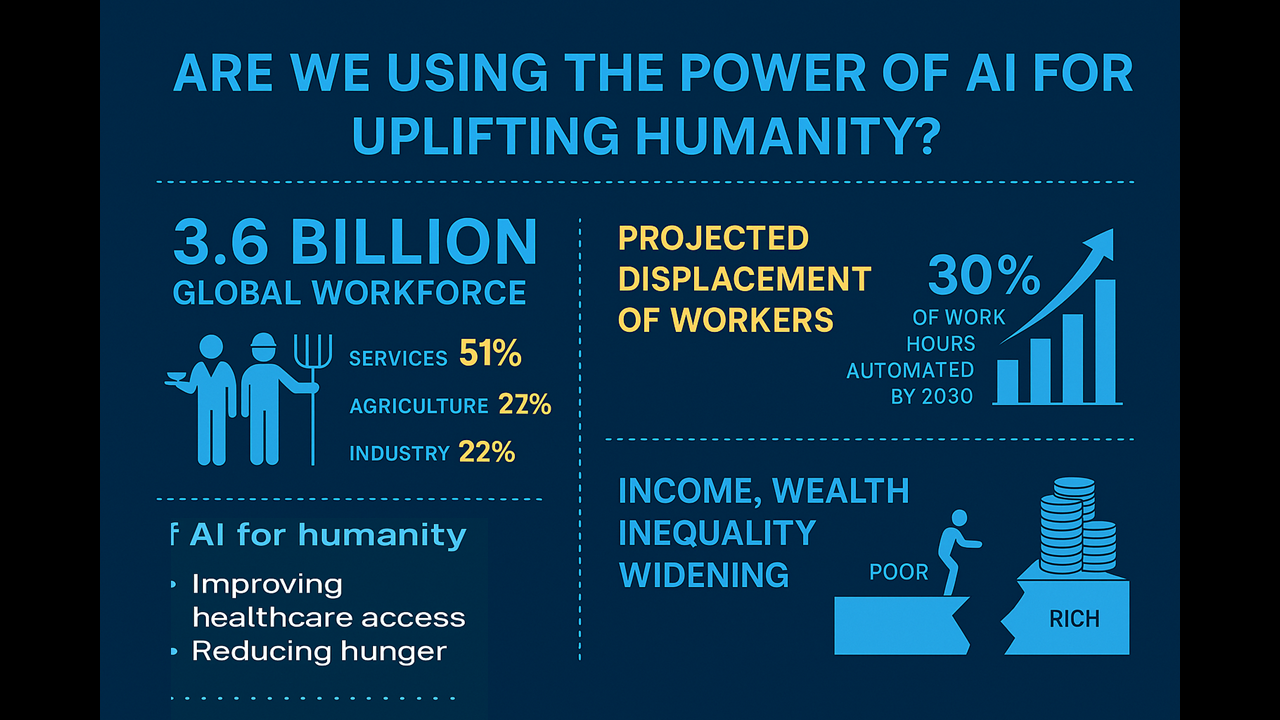

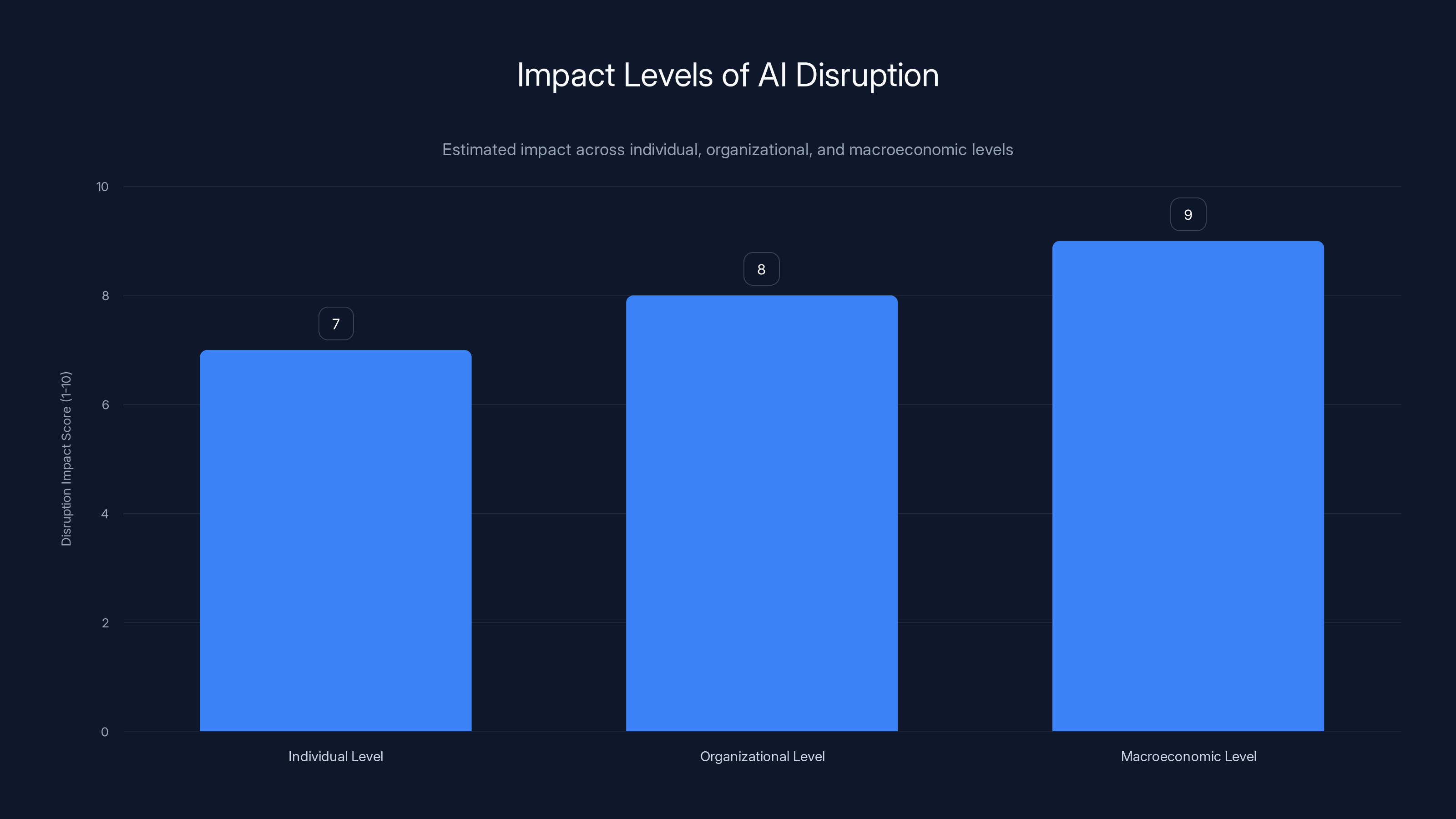

The carnage operates at multiple levels simultaneously. At the individual level, workers in high-AI-exposure fields face uncertainty about whether their skills will remain valuable. At the organizational level, companies must make capital allocation decisions under uncertainty—investments in AI infrastructure might be essential or premature, and it's difficult to know in real-time which outcome will transpire. At the macroeconomic level, if AI productivity gains concentrate in certain sectors while others stagnate, it could exacerbate existing inequality patterns. The cumulative effect is significant disruption even if the underlying technology ultimately benefits society.

Identifying High-Risk Occupations

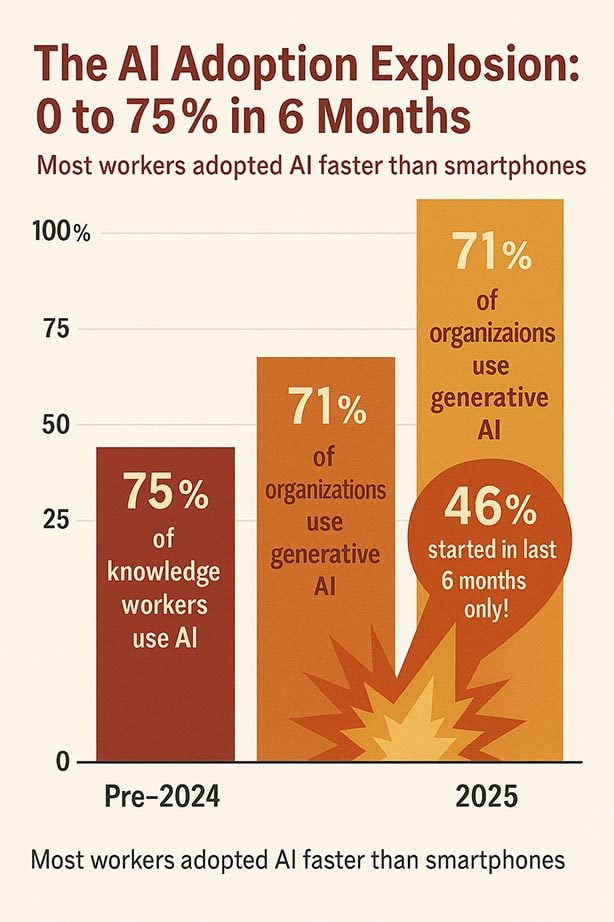

Research from McKinsey, examining job advertisements between 2022 and 2025, reveals that positions with high AI exposure saw a 38% decline in job postings during this period. This metric provides concrete evidence that AI exposure correlates with declining hiring activity, though the causality remains debatable: are employers hiring fewer people because AI can do the work, or are they reshaping the same roles to require AI skills?

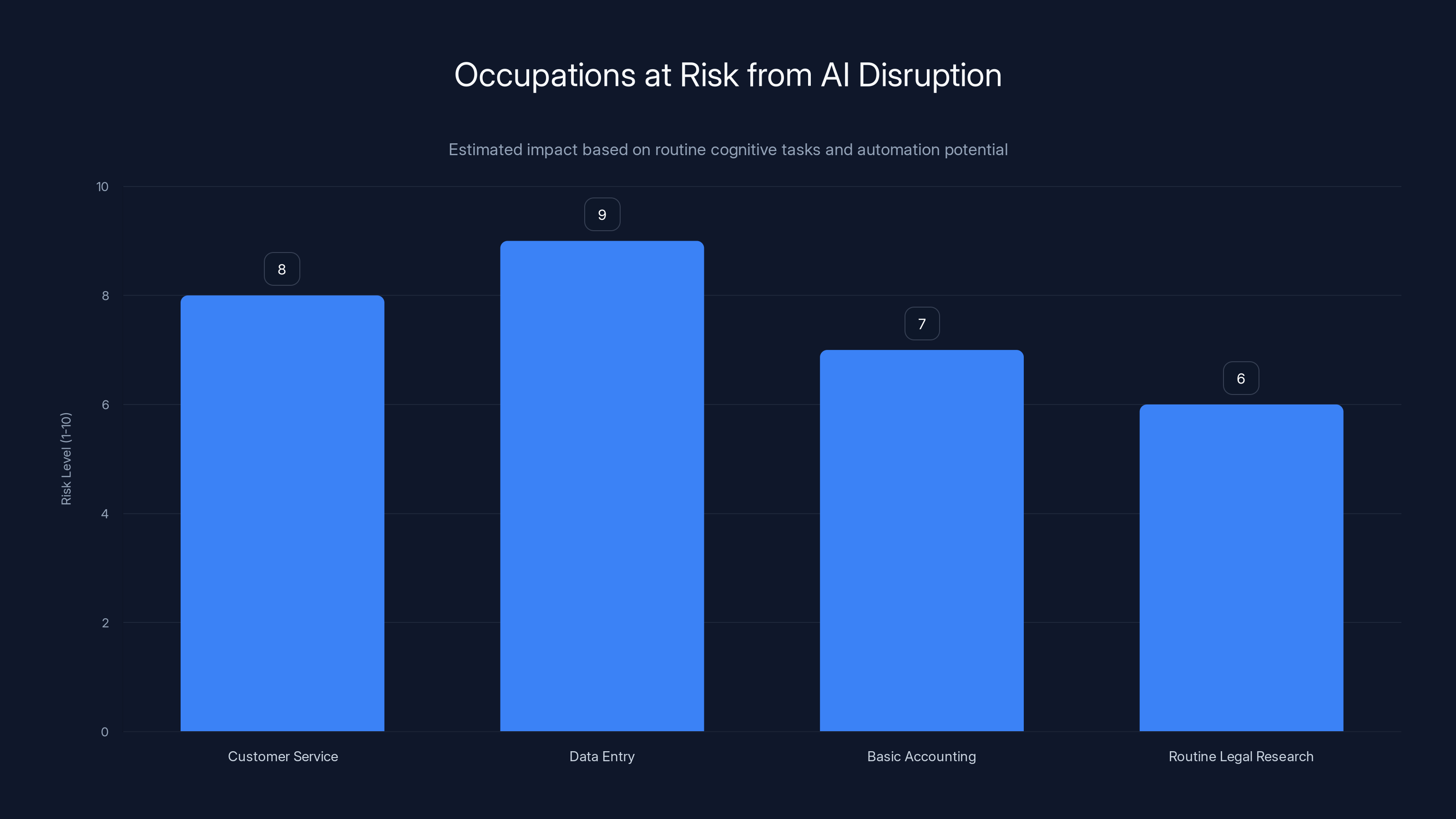

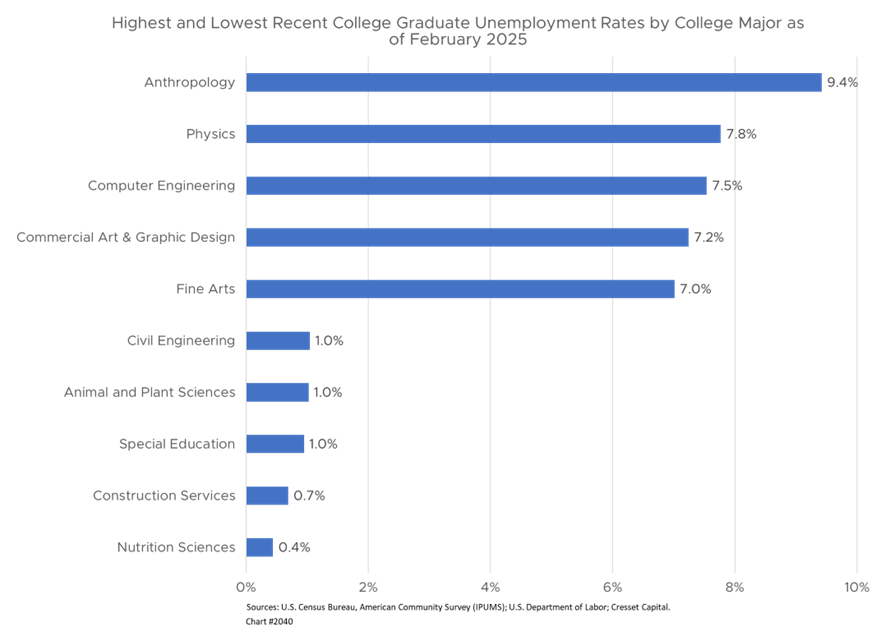

High-risk occupations share common characteristics: they involve routine cognitive tasks, rely on pattern recognition and data analysis, have well-defined inputs and outputs, and can be decomposed into discrete steps. Customer service, data entry, basic accounting functions, routine legal research, technical documentation, and many administrative roles fit these criteria. However, even within these categories, the risk isn't uniform. A customer service representative who learns to use AI tools becomes more productive and valuable; one who ignores the technology becomes less competitive.

Positions with lower AI exposure include those requiring complex human interaction, judgment calls with ethical dimensions, creative problem-solving in novel situations, and physical presence. Skilled trades, management roles requiring emotional intelligence, healthcare provider roles, and creative positions have somewhat more insulation from AI disruption—though this isn't permanent. As AI capabilities advance, the scope of protected occupations narrows.

AI tools can enable customer service representatives to handle up to 3 times more interactions, highlighting the significant productivity boost (Estimated data).

Data on AI's Current Impact on Labor Markets

Job Advertisement Trends as Leading Indicators

The 38% decline in job postings for high-AI-exposure roles between 2022 and 2025 serves as a crucial leading indicator of labor market transformation. This metric matters because job advertisements precede actual hiring decisions and reflect employer confidence. A sustained decline suggests employers are shifting their hiring expectations, not in terms of total volume necessarily, but in terms of skill requirements and role structures.
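To put the headline figure on a yearly scale, the cumulative decline can be converted into an equivalent compound annual rate. This is a rough sketch that assumes the 38% decline was spread evenly over three year-over-year intervals (2022 to 2025), which the underlying data may not support; the function name `annualized_change` is illustrative only.

```python
def annualized_change(cumulative_change: float, years: int) -> float:
    """Constant yearly rate that compounds to `cumulative_change` over `years`.

    `cumulative_change` is a fraction, e.g. -0.38 for a 38% decline.
    """
    if years <= 0:
        raise ValueError("years must be positive")
    if cumulative_change <= -1.0:
        raise ValueError("cumulative_change must be greater than -100%")
    return (1.0 + cumulative_change) ** (1.0 / years) - 1.0


# Assumption: the 38% decline spans three annual intervals (2022 -> 2025)
rate = annualized_change(-0.38, 3)
print(f"Equivalent annual change: {rate:.1%}")
```

Under that even-spread assumption, the decline works out to roughly 15% per year. If most of the drop occurred in a single year, the annual picture would look very different.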

However, this data requires careful interpretation. A decline in job postings could mean several things simultaneously: employers are hiring fewer people because AI automation reduces headcount needs, employers are reshaping existing roles rather than creating new positions, employers are struggling to articulate what skills they actually need from AI-era workers, or employers are having difficulty filling positions because too few candidates possess relevant AI skills. Available data doesn't fully distinguish between these scenarios, but the decline in postings suggests they are not equally weighted: if employers were mainly struggling to find skilled workers, we'd expect job postings to increase, not decrease.

Geographic variation in these trends provides additional insight. Major tech hubs show different patterns than secondary markets, suggesting that AI's impact concentrates initially in regions with higher technology sector concentration and talent density. This creates a secondary concern about regional inequality—if AI adoption accelerates in already-prosperous regions while stagnating in others, it could exacerbate existing geographic divides.

IMF Warnings: "AI Hitting Like a Tsunami"

The International Monetary Fund's characterization of AI's impact as arriving "like a tsunami" reinforces the scale of concern among major economic institutions. When the IMF, which operates within the economic mainstream and tends toward measured language, uses catastrophic metaphors, it signals genuine concern about adjustment speed and capacity. A tsunami doesn't cause damage because water is inherently dangerous; it causes damage because the speed and scale of water movement exceeds existing infrastructure's capacity to accommodate it.

Applied to labor markets, the tsunami metaphor captures something essential: AI capabilities are advancing faster than workers can retrain, faster than educational institutions can update curricula, and faster than policy can respond with appropriate retraining programs and social support. When adjustment speed exceeds institutional capacity, the result is temporary but acute disruption. The IMF's warning specifically notes that "most countries and most businesses are not prepared for it," which suggests that even if long-term outcomes are positive, the transition period will involve significant friction.

This unprepared state manifests in several ways. Educational curricula still emphasize traditional skill development rather than AI-integrated workflows. Government retraining programs, typically developed in response to labor disruption rather than in anticipation, lag behind actual skill needs. Businesses lack frameworks for integrating AI into existing roles while managing the transition for workers. These institutional gaps mean the adjustment period will likely involve more disruption than would occur if preparation were more advanced.

Industry-Specific Impact Analysis

Customer Service and Customer-Facing Roles

Customer service emerges as perhaps the highest-risk category for AI disruption, not because the function will disappear, but because AI fundamentally changes the performance gradient within the role. A well-trained customer service representative using AI tools can handle 2-3 times the volume of interactions, resolve issues more effectively (by drawing on the company's accumulated knowledge base instantly), and provide more personalized service (by reviewing customer history rapidly) than an equivalently trained representative without AI tools.

From an employer's perspective, this creates a straightforward business case for preferring AI-equipped workers. If one employee can service three customers' needs in the same time their counterpart services one customer, the return on training investment in AI tools becomes obvious. This shifts the competitive dynamic entirely. Companies don't need to eliminate customer service roles; they need fewer of them, and those that remain must involve AI proficiency. Workers in these roles face a binary choice: acquire AI skills or accept declining value in the labor market.

The transition creates intermediate challenges. A customer service organization might reduce headcount from 50 to 20 as remaining workers become more productive with AI. The 30 displaced workers can't simply transfer to other organizations, because every organization faces the same productivity calculation. The skills acquired—customer empathy, communication ability, product knowledge—remain valuable, but they're no longer sufficient. Adding AI proficiency to these base skills creates genuinely valuable workers; lacking it creates displacement. This creates urgency around retraining and transition support that many regions lack capacity to provide.
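The headcount arithmetic in this example can be made explicit. The sketch below assumes a fixed interaction volume and a uniform productivity multiplier across the remaining agents. Both are simplifying assumptions, and the function name `required_headcount` is hypothetical rather than taken from any cited source.

```python
import math


def required_headcount(current_headcount: int, productivity_multiplier: float) -> int:
    """Agents needed to serve the same workload once each agent is
    `productivity_multiplier` times as productive.

    Illustrative assumptions: workload stays constant and the productivity
    gain is uniform across agents.
    """
    if current_headcount < 0:
        raise ValueError("current_headcount must be non-negative")
    if productivity_multiplier <= 0:
        raise ValueError("productivity_multiplier must be positive")
    return math.ceil(current_headcount / productivity_multiplier)


# The 50-agent team from the example, across the 2-3x productivity range
for multiplier in (2.0, 2.5, 3.0):
    remaining = required_headcount(50, multiplier)
    print(f"{multiplier}x -> {remaining} agents remain, {50 - remaining} displaced")
```

At a 2.5x multiplier, the midpoint of the 2-3x range, the 50-person team shrinks to 20, which matches the figures above. If demand for support grows alongside productivity, the reduction would be smaller than this fixed-workload estimate.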

Technical and Professional Services

Higher-skilled technical roles face a different dynamic than customer service. Software developers, accountants, lawyers, and engineers have greater insulation from displacement because their work involves judgment, creativity, and novel problem-solving. However, they face significant competitive displacement risk. A developer fluent in AI coding assistants produces substantially more output than a developer writing code manually, often with fewer errors because the AI catches common mistakes. An accountant using AI for data analysis and pattern recognition identifies anomalies and insights faster than manual analysis allows.

These roles will likely bifurcate: AI-integrated versions that become more productive and valuable, and traditional versions that become increasingly marginal. A senior engineer who integrates AI tools into their workflow might become worth

This creates particular challenges for mid-career professionals who built expertise in pre-AI methodologies. A 15-year career successfully developing software using traditional approaches doesn't transfer neatly to AI-integrated development, which requires understanding different tools, working alongside AI systems rather than building everything from scratch, and reconceptualizing the development process. The skills aren't obsolete—they're still relevant—but they're insufficient, and the competitive advantage they once provided diminishes.

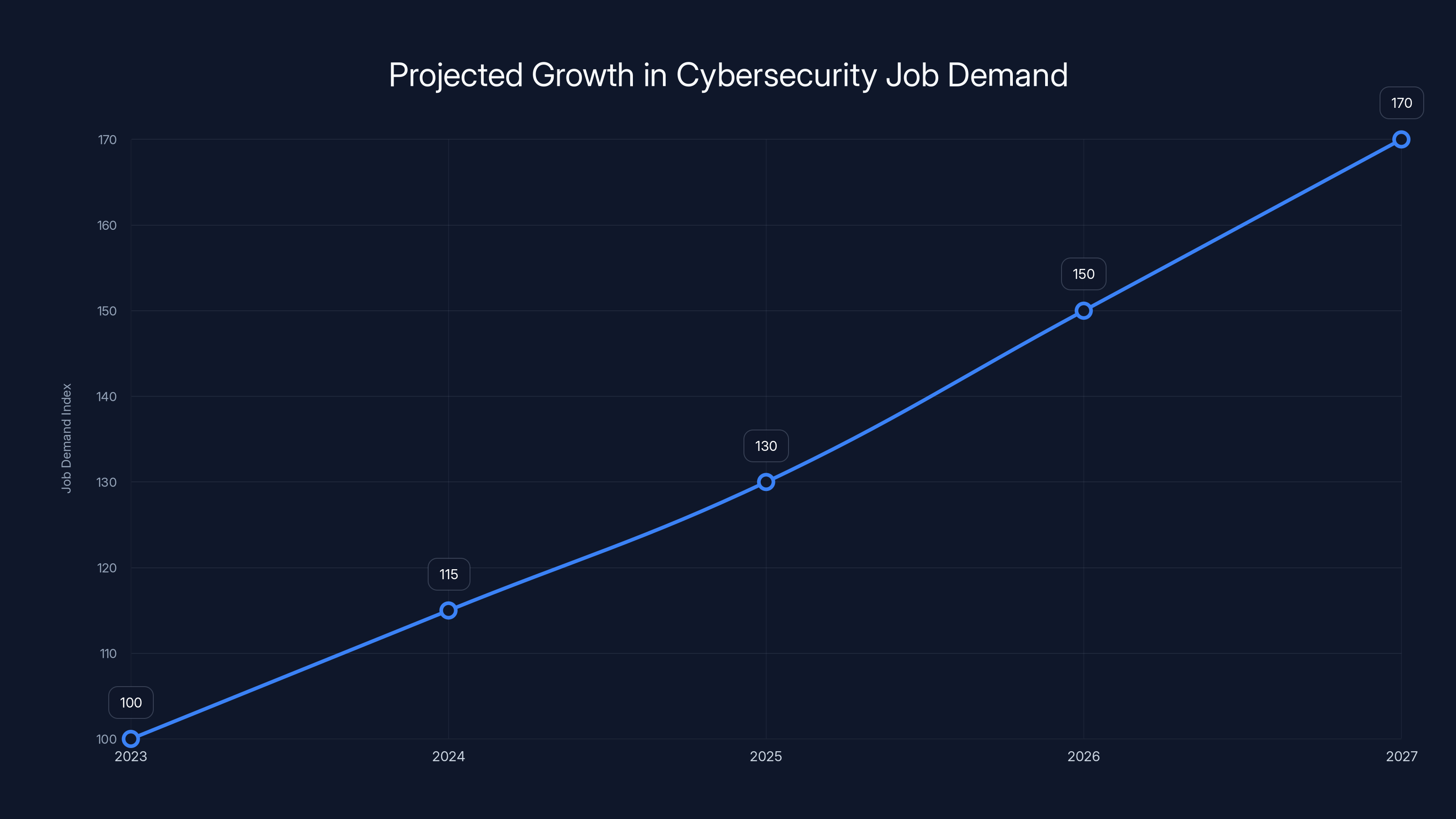

Cybersecurity: An Exception to Job Loss Patterns

Robbins noted that cybersecurity represents a notable exception to AI-driven job loss patterns. As AI becomes more sophisticated, it simultaneously enables more sophisticated attacks. Adversaries equipped with AI tools can orchestrate more complex attack sequences, evade detection systems more effectively, and target vulnerabilities at scale faster than traditional attacks allow. This creates a security arms race where defenders need AI not out of productivity choice, but out of necessity to maintain competitive parity with attackers.

Cybersecurity roles will likely expand rather than contract as organizations struggle to detect and respond to AI-enhanced threats. The complexity of integrating defensive AI systems, the sophistication required to understand attack patterns, and the need for security professionals to think multiple steps ahead of attackers all point toward increased, not decreased, demand for skilled security workers. This creates an interesting labor market divergence where some sectors see AI-driven displacement while others see AI-driven demand increases.

This exception proves important strategically. Workers seeking career security should note that roles directly addressing AI-created problems tend to have stronger demand dynamics than roles where AI increases worker productivity. In other words, positions that serve as AI counterbalances or security measures against AI systems likely weather the disruption better than positions where AI acts as a direct productivity multiplier.

The Mechanism of Skill-Based Displacement

How Competitive Advantage Translates to Displacement

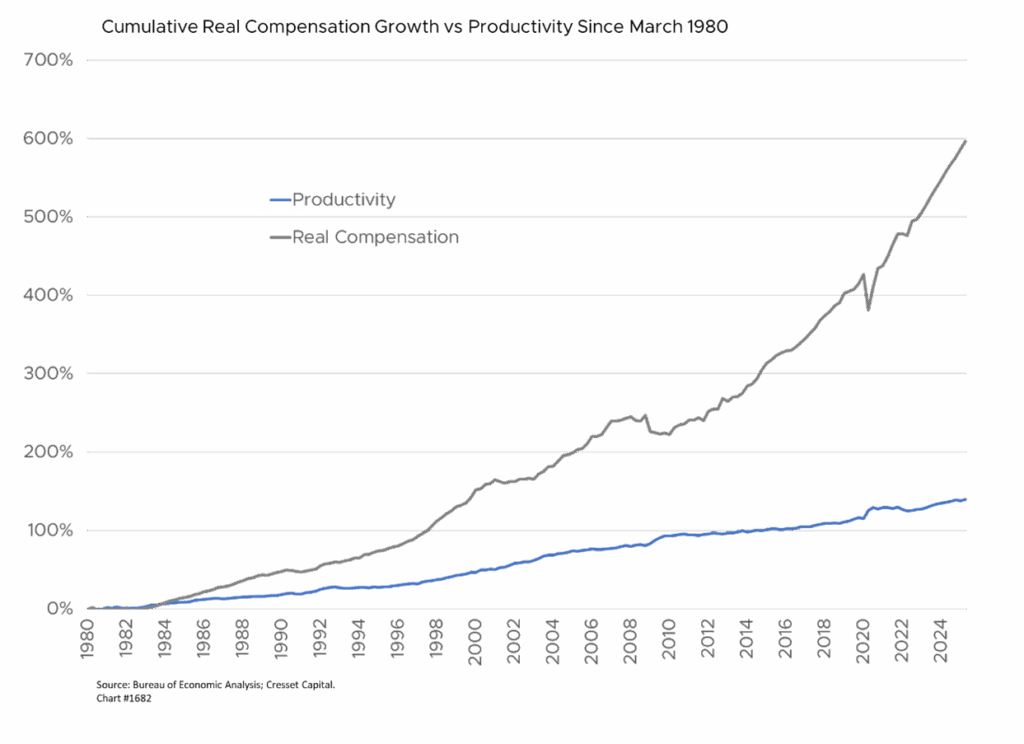

The mechanism through which AI-equipped workers outcompete their non-equipped peers operates through several reinforcing feedback loops. First, productivity differentiation creates obvious employer preference. If two candidates have equivalent traditional skills but one possesses AI proficiency, the employer's calculus strongly favors the AI-equipped candidate. Second, wage divergence follows as the market value of workers without AI skills declines relative to that of their AI-proficient peers. This makes training in AI skills economically rational for currently employed workers: the payoff in preserved or increased wages justifies the learning investment.

Third, rapid skill depreciation sets in for workers who don't upgrade. Skills that were cutting-edge five years ago become baseline today; baseline skills become insufficient tomorrow. A software developer who learned Python deeply 10 years ago has valuable knowledge, but it's increasingly insufficient without understanding how to leverage AI coding assistants, which have changed what effective coding practices look like. The depreciation isn't sudden—it's gradual—but it's relentless, creating pressure for continuous upskilling that wasn't as intense in pre-AI eras.

Fourth, organizational restructuring follows as companies optimize for AI-integrated workflows. Rather than hiring replacements for departing workers, companies restructure the role itself around AI tools. A marketing department might shrink from 12 people to 6 as the remaining 6 use AI for content creation, data analysis, and campaign optimization. The department's output might increase even as headcount declines, because the tool amplifies what remaining workers can accomplish. This makes the displacement particularly difficult to address because it's not a productivity gain used to expand the business—it's used to accomplish more with fewer people.

The Skill Adjacency Problem

A particular challenge emerges in what might be called the "skill adjacency" problem. Learning to use AI tools isn't purely additive to existing skills; it often requires rethinking fundamental approaches to work. A writer learning to use AI writing assistants can't simply write as they always did and then run the output through AI. Effective use requires understanding AI's strengths (generating first drafts, exploring alternative phrasings, researching topics) and weaknesses (lacking deep creativity, sometimes missing nuance, occasionally generating plausible-sounding errors). Using AI effectively requires reconceptualizing the writing process itself.

This creates barriers to adoption for experienced workers. Someone 10 years into a career in writing, accounting, software development, or any knowledge work has developed deeply embedded approaches to their work. Those approaches are often highly effective for traditional methodologies. Adopting AI tools requires, to some degree, abandoning those embedded approaches and rebuilding work processes from scratch. This is cognitively demanding and often feels backward—giving up expertise you've developed to learn new tools. The psychological and practical barriers are significant, which means that adoption rates among experienced workers likely lag significantly behind adoption among early-career workers who haven't yet embedded traditional approaches.

This gap creates a temporal disadvantage for experienced workers. An 18-year-old starting their first job in data analysis will likely treat AI tools as a natural part of their toolkit, developing practices optimized for AI integration from the start. A 45-year-old data analyst with 20 years of experience faces a steeper learning curve, because two decades of embedded habits must be unlearned before new ones can take hold. That gives the younger worker a built-in advantage that can persist for years. By the time the older worker catches up, the younger worker has deepened their AI proficiency into specializations the older worker hasn't considered yet.

Estimated data shows that routine cognitive tasks like data entry and customer service are at higher risk of AI disruption, with risk levels ranging from 6 to 9.

Regional and Organizational Variation in Impact

Geographic Concentration of AI Benefits and Disruption

AI adoption isn't distributed uniformly across geographic regions, creating divergent labor market outcomes in different places. Major tech hubs—Silicon Valley, Seattle, Boston, New York, London, Beijing—have high AI adoption rates, abundant skilled workers, venture capital supporting AI-focused startups, and large technology company presences. In these regions, AI adoption creates new job categories (AI trainers, prompt engineers, AI ethics specialists, AI infrastructure developers) that partially offset displacement in traditional categories. The net effect might be moderate job creation focused on high-skill positions.

Secondary and tertiary markets show different patterns. Regions heavily dependent on routine cognitive work—customer service centers, back-office operations, basic financial services—face significant displacement pressure without corresponding new job creation. A city where the primary employers are customer service outsourcing companies faces different disruption than a city with diverse technology companies. When those outsourcing companies automate with AI and reduce headcount, the region loses not just jobs but also the foundation of its economy. Retraining programs can't create jobs that don't exist locally, and migration to opportunity often isn't feasible for displaced workers with families, homes, and community ties.

This geographic divergence likely exacerbates existing inequality. Already-prosperous regions with technology sector concentration benefit from AI productivity, wage growth in high-skill categories, and spillover effects in supporting services. Regions dependent on routine work face contraction, wage pressure, and reduced tax bases that make supporting displaced workers more difficult. Without intentional policy intervention, AI adoption could accelerate geographic inequality within countries and potentially between countries.

Organizational Readiness and Disruption Timing

Companies vary dramatically in AI adoption readiness, creating different timelines for disruption. Large technology companies and sophisticated enterprises have resources, talent pools, and existing data infrastructure that enable rapid AI integration. These organizations can invest in AI infrastructure, develop AI-integrated workflows, and retrain workers relatively quickly. They face disruption, but they also have resources to manage it. Smaller companies and less technologically mature organizations face different constraints. They lack dedicated AI teams, have less data infrastructure, and struggle to attract AI talent in competitive markets. Their disruption timeline might be longer, but when it comes, they have fewer resources to manage transition.

This creates paradoxical effects. Very small companies might delay AI adoption long enough that the technology becomes more accessible and standardized, allowing them to adopt efficiently without the false starts large companies experience. Very large companies might navigate disruption effectively through resources and scale. Mid-sized companies—large enough to recognize AI's necessity but small enough to lack the resources of giants—might face the most severe disruption. They can't afford to get AI integration wrong, but they lack the resources to afford learning curves.

The Infrastructure Buildout Parallel

Why This Matters: Learning from the Internet Boom

Nvidia CEO Jensen Huang's statement that current AI development represents "the single largest infrastructure buildout in human history" contextualizes the scale of what's underway. This framing provides important perspective because infrastructure buildouts have historical patterns. The original internet buildout in the 1990s created not only new technologies but also tremendous employment in laying fiber, building data centers, developing networking equipment, and supporting the infrastructure installation. This employment was substantial, even before the internet applications that would define the digital era existed.

AI's infrastructure buildout follows a similar dynamic: semiconductor manufacturing for AI chips, data center construction and expansion, cooling systems for high-compute environments, power generation capacity expansion, and the physical infrastructure needed to support AI's computational demands. These elements will employ workers for years, potentially offsetting some displacement in other sectors. A construction worker or electrical engineer installing solar farms to power AI data centers is experiencing employment opportunity created by AI infrastructure, even if they never interact with the actual AI systems.

However, infrastructure employment has temporal limits. Once infrastructure is built, ongoing employment drops significantly. The 1990s internet boom created substantial construction and hardware jobs; the 2000s digital economy created entirely different jobs focused on application development, digital marketing, and e-commerce operations. The transition from infrastructure building to application development likely involved displacement for workers whose skills were specific to the buildout phase. This historical parallel suggests that AI's infrastructure phase will eventually give way to an application phase, and the jobs created in each will differ substantially.

Preparation Strategies: How to Position for the Transition

Individual Career Development in the AI Era

For individual workers, preparation centers on building AI literacy and understanding AI's capabilities and limitations rather than memorizing specific tools. Tools change rapidly—today's cutting-edge AI platform becomes tomorrow's outdated system. But understanding AI's mechanics, knowing how to prompt effectively, understanding where AI excels and where it fails, and thinking about how AI might change your work is more durable. Building this literacy doesn't require becoming an AI researcher or machine learning engineer; it requires experimenting with available tools, reading about how AI works at a conceptual level, and thinking critically about your profession's likely evolution.

Specific preparation depends on your field. Customer service workers should develop skills that AI augments rather than replaces: deep product knowledge, empathy, ability to handle complex emotional situations, and judgment in edge cases. Simultaneously, learning how to work with AI tools in customer interactions—understanding what information AI systems can reliably provide versus where human judgment matters—becomes essential. Software developers should invest time in AI coding assistants, understanding their capabilities and limitations, and thinking about how AI changes development practices. Writers should experiment with AI writing tools, develop skills in directing AI output and refining its results, and focus on the creative and strategic thinking that AI struggles with.

Beyond technical preparation, building what might be called "transition resilience" matters. This includes maintaining emergency financial reserves, staying connected to professional networks, developing skills transferable across domains, and maintaining psychological flexibility about career changes. Workers who assume their current role will exist in current form in 10 years are likely to be disappointed. Those who plan for potential disruption while hoping it doesn't occur are better positioned to adapt if it does.

Organizational Change Management

Companies must navigate the transition thoughtfully to avoid the worst disruption outcomes. This starts with acknowledging the reality of AI-driven change rather than treating it as optional. The ostrich strategy—ignoring AI in hopes that tradition persists—is likely to prove catastrophic. Organizations that begin planning for AI integration now, experimenting with the technology, and identifying roles where AI amplifies human capability versus where it might replace human work, position themselves for the transition better than those waiting for clarity to emerge.

Integrating existing workers into AI-augmented roles requires deliberate strategy. Rather than replacing workers with AI systems, forward-thinking organizations consider how to augment the worker with AI tools. Customer service representatives become AI-assisted service providers. Analysts become AI-accelerated analysts. Developers become AI-augmented developers. This framing positions workers as partners in productivity improvement rather than obstacles to automation. It also requires training investments to help existing workers develop AI proficiency, but the payoff includes lower turnover, better institutional knowledge retention, and smoother transitions.

Organizations should also address the two-sided timing risk: waiting too long creates competitive disadvantage as more advanced competitors pull ahead, while moving too fast risks building infrastructure around immature tools or approaches that prove sub-optimal. Navigating this requires pilot programs, willingness to experiment, and accepting that some investments in AI infrastructure will prove misallocated. This tolerance for experimentation is antithetical to how many traditional organizations operate, but it's essential for successful AI adoption.

Estimated data suggests macroeconomic levels face the highest disruption impact due to AI, followed by organizational and individual levels.

The Cybersecurity Wild Card: Growing Demand in a Threatening Landscape

Why Cybersecurity Diverges from Typical Job Loss Patterns

Cybersecurity professionals face an unusual position in the AI era: rising rather than falling demand, driven by the same technology that's creating displacement pressure elsewhere. This divergence occurs because cybersecurity operates in an adversarial landscape where attackers and defenders constantly escalate capabilities. As attackers gain access to AI tools for orchestrating attacks, defending organizations need AI-enhanced defensive capabilities to maintain parity. This creates demand that doesn't depend on productivity gains—it depends on necessity.

The specific security challenges AI creates include AI-generated phishing attacks, account takeover attempts using AI-generated voice and video deepfakes, discovery of zero-day vulnerabilities at scale, and orchestrated multi-stage attacks that evade traditional detection. Defending against these requires not just faster humans, but humans augmented with AI systems capable of detecting patterns at a scale and speed that traditional monitoring can't achieve. An organization might automate away 20% of customer service jobs through AI, but it can't reduce cybersecurity staff by the same percentage because the threat landscape has fundamentally changed.

This creates interesting career implications. Security professionals, data analysts focused on threat detection, security architects, and incident response specialists are likely to experience more favorable job market conditions than their counterparts in displaced occupations. This hasn't yet translated into significantly higher compensation across the board, but labor economics suggests it eventually will as demand outpaces supply. Early-career professionals considering specializations should note that fields addressing AI-created problems likely offer better career security than fields where AI primarily increases productivity.

The Ongoing Arms Race Dynamic

Cybersecurity's divergence from job loss patterns persists precisely because the arms race dynamic prevents stable equilibrium. Attackers gain new capabilities through AI, defenders must invest in AI-enhanced defenses, which creates new attack surfaces, which generates new threats, and so on. This isn't an efficiency problem that resolves through automation—it's a competitive game that continues escalating. The human element remains central because security decisions involve judgment, creativity, and understanding emerging threats. The AI element becomes essential not because it replaces human judgment but because it extends human capability to scales necessary to defend against AI-augmented attacks.

Addressing Market Inequality and the Skill Access Problem

The Two-Tier Labor Market Risk

Among the more concerning scenarios AI creates is the emergence of a sharply two-tier labor market. One tier consists of workers with AI proficiency, working in organizations that have successfully integrated AI into workflows, earning premium compensation. The other tier consists of workers without AI skills or who work in organizations that haven't adopted AI, earning reduced compensation and facing declining opportunities. This isn't speculative—we're already seeing salary divergence in technical fields between AI-proficient and non-proficient workers, with the premium for AI skills potentially reaching 30-50% in some specializations.

This two-tier dynamic becomes particularly concerning when AI skill access correlates with other inequality factors. If AI training requires expensive courses, time away from work, or access to tools available primarily in wealthy regions, then AI skills become a class marker that reinforces existing inequality. Workers with resources access training, develop skills, and secure premium positions. Workers without resources lack opportunities to develop skills, face declining wages, and experience increasing economic stress. This creates not just temporary disruption but durable inequality that could persist for decades.

Addressing this requires deliberate policy and investment. Some organizations are implementing AI training programs for employees regardless of role, recognizing that building organizational AI literacy benefits everyone. Educational institutions are integrating AI literacy into curricula at all levels. Some governments are investing in free or subsidized AI training programs for displaced workers. These interventions matter because they expand access to the skills that increasingly determine labor market outcomes.

Regional Inequality and Opportunity Concentration

Geographic concentration of AI opportunity creates secondary inequality concerns. AI jobs concentrate in major tech hubs, meaning workers in secondary or rural regions face barriers to opportunity. They could potentially relocate, but that's not feasible for many: caregiving responsibilities for elderly parents, family ties, health conditions, housing affordability challenges in tech hubs, and simple preference for existing communities all create relocation barriers. Regions without strong technology sectors face declining opportunities as routine work gets automated and AI opportunity concentrates elsewhere.

Addressing this requires different approaches. Remote work, enabled by high-speed internet, can partially extend opportunity from concentrated hubs. Some organizations are deliberately distributing roles to secondary markets. Investing in regional education and training infrastructure helps build local capacity. Supporting small technology companies in secondary markets creates local opportunity. These approaches don't solve the problem completely—significant advantages remain to being in tech hubs—but they can reduce the extreme concentration that currently exists.

Historical Lessons: What Previous Technological Disruptions Teach Us

The Pattern of Creative Destruction

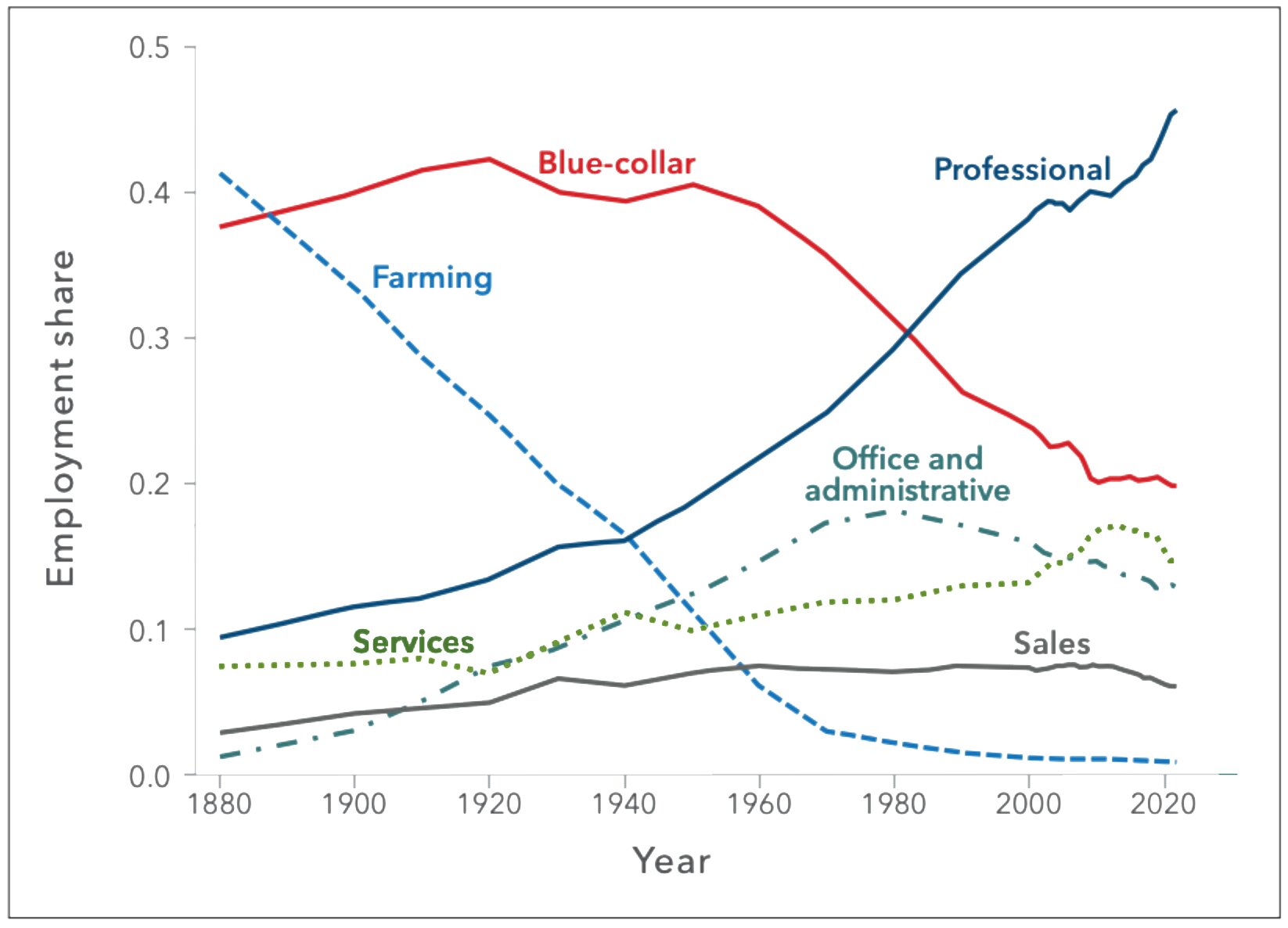

Economist Joseph Schumpeter's concept of "creative destruction" describes how technological progress works: new technologies destroy the value of existing skills, assets, and business models while simultaneously creating new opportunities. Photographs destroyed the portrait painting industry, but photographers became a new occupation. Automobiles destroyed the horse carriage industry but created automobile manufacturing. Manufacturing automation destroyed assembly line jobs but created robot technician and maintenance jobs. In each case, society ultimately benefited from the new technology—we're better off with photographs and automobiles and manufactured goods—but the transition created real disruption for people whose skills became obsolete.

AI is following the same pattern. The ultimate outcomes will likely be positive: AI augmentation of human capability will solve problems, reduce costs, and create opportunities that wouldn't otherwise exist. But the transition will involve creative destruction, meaning disruption for workers whose skills are being destroyed or devalued. The question is whether that disruption will be managed effectively or whether it will create unnecessary suffering while the transition unfolds.

Why Transitions Matter More Than End States

Historical analysis suggests that the end state of major technological transitions matters less than the transition process itself. After the automobile replaced horses, society was clearly better off—transportation was faster, more reliable, and more efficient. But the transition period created significant hardship for stable hands, horse breeders, carriage manufacturers, and related occupations. The long-term benefit doesn't retroactively erase the short-term pain. Workers can't eat long-term societal benefits; they need income during the transition.

This distinction shapes how we should think about AI disruption. The question isn't whether AI will ultimately benefit society—economic analysis suggests it likely will. The question is whether we'll manage the transition effectively, supporting displaced workers, enabling reskilling, and preventing the worst inequality outcomes. A well-managed transition distributes AI's benefits broadly while supporting those negatively affected in the short term. A poorly managed transition concentrates benefits among those who get AI skills first while creating lasting hardship for those who don't.

Historical precedent suggests that management doesn't happen automatically. The transition from agricultural to industrial economies created benefits that historians describe as revolutionary, but the transition period involved urbanization chaos, terrible working conditions, child labor, and social upheaval. We eventually developed education systems, labor protections, and social safety nets that managed the disruption better, but those came after significant suffering. Learning from this history suggests that proactive management is better than reactive crisis response.

Cybersecurity job demand is projected to grow significantly from 2023 to 2027 due to AI-driven threats, diverging from typical job loss patterns. Estimated data.

The Role of Education and Continuous Learning

Fundamental Shift in Educational Demands

Traditional education models emphasize building deep expertise in specific domains. A person became a lawyer, accountant, or engineer and that identity could persist for 40 years with periodic updates as practices evolved. AI disruption undermines this model's viability. The skills that made someone effective 10 years into a 40-year career become partially obsolete 20 years in, requiring fundamental relearning. This creates educational demands that traditional institutions struggle to meet. Universities design 4-year degree programs; AI's evolution operates on 18-month cycles. Professional education assumes knowledge remains current for years; AI requires continuous updating.

This suggests educational transformation is necessary. Rather than treating education as a phase of life (K-12, perhaps college, perhaps some graduate school), the AI era requires education as a continuous process throughout careers. This could involve several mechanisms: employers investing heavily in continuous training, professionals dedicating regular time to skill development, educational institutions offering modular, updateable training rather than fixed programs, and cultural shifts that normalize frequent career transitions and retraining.

Implementing this at scale faces substantial barriers. Current educational systems lack flexibility for continuous updating. Businesses resist spending resources on training that benefits employees' external marketability, not just their current position. Workers lack time and financial resources to constantly engage in new training while working full-time jobs. These barriers are real, but the alternative—allowing large portions of the workforce to become progressively less competitive—seems worse.

The Specialization Versus Generalism Tradeoff

AI's advancement creates interesting dynamics around specialization versus generalism. Deep specialization in specific domains remains valuable if those domains have sustained demand. A surgeon who develops extraordinary expertise in complex surgical techniques remains valuable even as AI assists with diagnosis and surgical planning. However, specialization in routine, well-defined functions becomes risky—those are most vulnerable to AI disruption. Generalism—having broad competence across multiple domains—provides flexibility. A person who understands how to solve problems generally, can learn new domains relatively quickly, and can adapt approaches across different contexts has resilience that pure specialists lack.

For workers, this suggests developing both deep skill in a primary domain and broad competence across adjacent domains. A customer service expert might develop deep specialization in their company's products and customer base, but also build broad competence in communication, problem-solving, and technology that allows transitions if customer service is disrupted. A developer might specialize in a particular technology stack but maintain broad programming competence and understanding of development concepts independent of specific tools.

Corporate Strategy: Building AI-Augmented Organizations

Integration Approaches: Replacement Versus Augmentation

Companies choosing how to integrate AI face a fundamental strategic decision: use AI to replace workers and reduce headcount, or use AI to augment workers and increase productivity. These approaches have different implications for disruption, employee morale, and long-term organizational capability. Replacement-focused approaches create immediate cost savings but also immediate disruption, potential knowledge loss, negative employer brand effects that complicate future hiring, and reduced employee loyalty as remaining workers fear further displacement.

Augmentation-focused approaches require different investment structures. Rather than replacing workers with AI, companies invest in AI tools, training for existing workers, and process redesigns that position AI as workers' tools rather than competitors. This requires higher short-term investment—training costs, time for workers to develop proficiency, temporary productivity reductions during learning phases—but produces long-term benefits through higher retention, better institutional knowledge preservation, and workforce engagement.

Economically, augmentation isn't always optimal in the narrow sense. If replacing a worker with an AI system saves more money than augmentation-focused productivity improvements, the replacement approach generates higher financial returns. However, this calculus doesn't account for organizational factors like the value of institutional knowledge, the cost of hiring and training replacements, the risks of disruption, and the long-term effects on organizational culture and capability. Companies optimizing purely for short-term financial metrics might choose replacement; companies optimizing for long-term organizational strength might choose augmentation.
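To make the narrow-versus-broad calculus concrete, here is a minimal Python sketch. Every number and name in it (`Strategy`, `net_value`, the dollar figures, the three-year horizon) is a hypothetical assumption chosen for illustration, not data from any organization. It shows only how the preferred strategy can flip once hidden organizational costs are counted.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Strategy:
    """Hypothetical per-role cost model; every field is an illustrative assumption."""
    name: str
    upfront_cost: float        # tools, training, severance, and similar one-time costs
    annual_direct_gain: float  # direct labor savings or productivity gains per year
    annual_hidden_cost: float  # knowledge loss, rehiring, morale effects per year


def net_value(strategy: Strategy, years: int, include_hidden: bool) -> float:
    """Undiscounted net value over the horizon, optionally counting hidden costs."""
    yearly = strategy.annual_direct_gain
    if include_hidden:
        yearly -= strategy.annual_hidden_cost
    return yearly * years - strategy.upfront_cost


def preferred(strategies: list[Strategy], years: int, include_hidden: bool) -> str:
    """Name of the strategy with the highest net value under the chosen accounting."""
    return max(strategies, key=lambda s: net_value(s, years, include_hidden)).name


# Illustrative figures only (assumed, not measured).
REPLACEMENT = Strategy("replacement", 20_000, 60_000, 25_000)
AUGMENTATION = Strategy("augmentation", 30_000, 45_000, 2_000)

if __name__ == "__main__":
    for include_hidden in (False, True):
        label = "with hidden costs" if include_hidden else "narrow accounting"
        winner = preferred([REPLACEMENT, AUGMENTATION], 3, include_hidden)
        print(f"{label}: {winner}")
```

Under these assumed figures, narrow accounting favors replacement, while counting hidden costs favors augmentation. With different assumptions the result could go the other way, which is why the estimate of hidden costs tends to drive the decision.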

Managing the Transition: Phasing and Pilot Approaches

Successful integration requires managing the transition carefully. Phasing AI adoption—implementing in some departments first, learning from those experiences, then expanding—allows organizations to develop effective integration approaches before scaling. Pilot projects identify challenges, develop solutions, and build organizational knowledge that makes broader rollout more effective. This takes longer than immediate, company-wide implementation, but produces better outcomes if the organization learns from early phases.

Pilots also provide early warning about integration challenges that company leadership might not anticipate. Maybe AI tools work wonderfully in theory but don't integrate well with existing workflows. Maybe employee resistance is higher than expected, creating change management challenges. Maybe the AI tools don't deliver promised capabilities in your specific context. Early pilots reveal these issues at smaller scale, where they're manageable. Full company rollout without pilots risks discovering these issues too late to easily correct them.

Policy Considerations and Societal Response

Government Role in Managing Disruption

Governments face choices about how actively to manage AI's disruption effects. Laissez-faire approaches trust that markets will adjust and workers will adapt through normal labor market mechanisms. This maximizes growth but allows disruption to concentrate on those least capable of managing it. Interventionist approaches use policy tools—retraining programs, income support, education investment, labor market regulations—to manage disruption effects. This reduces some disruption but potentially slows productive innovation and adaptation.

Most likely, effective government response falls between these extremes. Pure deregulation risks severe disruption that becomes politically unsustainable and economically wasteful. Pure protection that prevents AI adoption becomes economically destructive, allowing competitors to advance while you stagnate. The middle ground involves enabling AI adoption while investing in transition support, education, and infrastructure that allows people to adapt. This requires government resources, but potentially fewer than managing the social disruption from poorly managed transitions.

Potential Policy Tools

Available policy tools include education and training investments, income support during transitions, healthcare accessibility independent of employment, geographic development incentives to prevent extreme concentration, and labor market policies that facilitate transitions while maintaining protections. Education investments in AI literacy, computational thinking, and related skills build population capability. Training programs help displaced workers develop new skills and transition to opportunity. Income support, whether through expanded unemployment insurance, transitional assistance, or stronger social safety nets, provides stability during transitions. Healthcare accessibility independent of employment removes a barrier to leaving disrupted industries or changing careers. Geographic development incentives can help distribute opportunity and reduce extreme concentration in a few hubs.

These tools aren't free—they require government resources and tax revenue. However, they're likely cheaper than managing social chaos from unmanaged disruption, and they distribute AI's benefits more broadly than unmanaged transitions would achieve. The question is whether societies decide disruption management is important enough to fund, or whether they accept that disruption is the price of progress.

AI-equipped workers significantly outperform non-equipped workers across key customer service metrics. Estimated data highlights the competitive edge provided by AI tools.

Competitive Dynamics: Organizations and Countries

Organizational Competitive Advantage

Organizations that successfully integrate AI into augmented human-AI workflows gain competitive advantages that compound over time. Early movers develop expertise, build organizational knowledge about what works and what doesn't, and develop processes optimized for AI-human collaboration. Later movers face more mature tool markets and more developed best practices, reducing some risks, but lose first-mover advantages. This creates pressure for organizations to begin AI integration even amid uncertainty, because waiting creates competitive risk.

This competitive pressure cascades. If your competitors are becoming more productive through AI integration while you're not, you face two choices: integrate AI yourself or accept declining competitiveness. For organizations with significant margins, this choice might be manageable. For organizations already operating on thin margins, it becomes existential. This competitive escalation is likely to drive faster AI adoption than purely economic optimization would suggest, creating faster labor market disruption than would occur if organizations had more time to carefully plan transitions.

National and International Competitive Dynamics

At the national level, countries implementing AI broadly gain economic advantages over countries that don't. Productivity improvements concentrate in AI-adopting nations, wage growth follows, and talented workers migrate toward opportunity. Countries falling behind on AI adoption face declining competitiveness, reduced investment, and talent outflows. This creates international pressure for rapid AI adoption regardless of domestic disruption effects. A nation concerned about managing disruption humanely still faces pressure to adopt AI broadly because not doing so creates international disadvantage that eventually becomes economically devastating.

This dynamic makes international coordination on AI disruption management theoretically valuable but practically difficult. Even if countries agreed that managing disruption is important, individual nations have incentives to advance their own AI capabilities while others are still adjusting. This prisoner's dilemma dynamic might accelerate disruption beyond what's socially optimal. Addressing it would require international agreements about AI development pace or mandatory disruption management, both of which face significant political barriers.
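The prisoner's dilemma structure described above can be sketched with a small payoff table. The payoffs and the action names `"pace"` (adopt AI at a managed pace) and `"race"` (adopt as fast as possible) are hypothetical, chosen only to satisfy the standard prisoner's dilemma ordering. They are not estimates of real national outcomes.

```python
# Hypothetical payoffs as (row country, column country); higher is better.
# The ordering 5 > 3 > 1 > 0 is the textbook prisoner's dilemma pattern.
PAYOFFS = {
    ("pace", "pace"): (3, 3),  # both manage the transition
    ("pace", "race"): (0, 5),  # the paced country falls behind
    ("race", "pace"): (5, 0),  # the racing country pulls ahead
    ("race", "race"): (1, 1),  # both race, both absorb heavy disruption
}
ACTIONS = ("pace", "race")


def best_response(opponent_action: str) -> str:
    """Action that maximizes the row country's payoff against a fixed opponent action."""
    return max(ACTIONS, key=lambda a: PAYOFFS[(a, opponent_action)][0])


def is_dominant(action: str) -> bool:
    """True if the action is the best response to every opponent action."""
    return all(best_response(other) == action for other in ACTIONS)


def joint_payoff(row_action: str, col_action: str) -> int:
    """Combined payoff of both countries for a given pair of actions."""
    return sum(PAYOFFS[(row_action, col_action)])


if __name__ == "__main__":
    print("dominant strategy:", [a for a in ACTIONS if is_dominant(a)])
    print("joint payoff if both race:", joint_payoff("race", "race"))
    print("joint payoff if both pace:", joint_payoff("pace", "pace"))
```

Because the assumed game is symmetric, checking the row country is enough: racing is each country's best response whatever the other does, yet mutual racing leaves both worse off than mutual pacing. That gap is what an international agreement would have to close.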

Building Resilience: Individual and Organizational Approaches

Personal Resilience Strategies

Individual workers can build resilience through several approaches. Financial resilience through emergency savings allows weathering periods of disruption, job changes, or retraining. Professional network maintenance ensures connections to opportunity when current positions become disrupted. Skills diversification—developing competence across multiple related domains—provides flexibility if specialization becomes obsolete. Psychological flexibility—willingness to learn new approaches, adapt methods, and accept that careers might not follow expected trajectories—helps navigate disruption. Continuous learning commitment builds AI literacy gradually rather than trying to catch up suddenly when disruption arrives.

These approaches aren't guarantees against disruption, but they reduce vulnerability. A person with substantial savings, a broad professional network, diverse skills, psychological flexibility, and continuous learning habits can navigate disruption that would devastate someone lacking these qualities. Building these characteristics takes time and effort, but the payoff in resilience is substantial.

Organizational Resilience Through AI Integration

Organizations build resilience through deliberate AI integration approaches. Rather than treating AI as a cost-reduction tool to deploy suddenly, forward-thinking organizations treat AI as a long-term capability to develop gradually. This involves experimenting with AI tools to understand their strengths and limitations, training existing workers rather than replacing them, identifying roles where AI amplifies human capability, and developing processes where humans and AI collaborate effectively.

This gradual integration approach seems slow compared to replacement-focused approaches, but it builds organizational capability that persists as AI tools evolve. The organization develops expertise in AI use, workers develop comfort and proficiency, and processes become optimized for human-AI collaboration. When AI capabilities improve or new tools emerge, the organization can integrate them into existing frameworks rather than starting from scratch. This capability accumulation creates competitive advantage that goes beyond any single AI tool.

Case Studies: How Different Organizations Are Navigating Transition

Tech-Native Companies: Integrating AI as Normal Business Evolution

Tech companies with digital-native operations—companies founded in the post-internet era—navigate AI adoption differently than legacy companies. Their existing processes often involve software and automation, making AI integration feel like evolution rather than revolution. Their workforces expect rapid technological change and career evolution. Their organizational structures often permit experimentation and failure, which reduces barriers to trying new approaches. When these companies adopt AI, they do so in a context of existing technological sophistication and organizational flexibility. While they certainly face disruption, they navigate it within a cultural context that anticipates continuous change.

Legacy Organizations: Adapting Established Processes

Legacy organizations—companies with decades of history, established processes, and workforces that built careers in stable environments—face different challenges integrating AI. Existing processes are often deeply embedded and optimized for human work. Workforces include significant numbers of experienced workers whose entire careers occurred in pre-AI eras. Organizational structures and cultures often resist experimentation and rapid change. When these organizations attempt AI integration, they encounter more resistance, require more change management, and need longer transition periods. However, these organizations also often possess substantial resources to invest in integration and transition management.

Facing these challenges, some legacy organizations are beginning integration earlier than appears necessary, building organizational capability gradually before competitive pressure forces rapid transformation. This reduces disruption but requires executive commitment to long-term transformation rather than purely short-term financial optimization.

Looking Forward: Likely Scenarios for the Next 5-10 Years

Base Case: Gradual Disruption with Increasing Polarization

The most likely scenario over the next 5-10 years involves gradual, not catastrophic, disruption, but with increasing polarization. Some occupations and sectors experience meaningful disruption (customer service, routine cognitive work, back-office operations), with 15-30% employment declines in exposed categories. Other sectors see minimal disruption or even growth (technology, healthcare, security, skilled trades). Overall employment might remain relatively stable, but jobs shift from disrupted sectors to growth sectors, creating winners and losers, with insufficient transition support to prevent hardship.

Workers with AI skills experience increasing wage premiums—potentially 30-50% higher compensation than comparable workers without AI skills. Wage growth in exposed sectors stagnates or declines as labor oversupply develops. Income inequality increases. Geographic inequality increases as AI opportunity concentrates further. Education becomes increasingly important as skill determines labor market outcomes more than ever. Society benefits from AI productivity improvements, but the distribution of benefits becomes increasingly unequal.

Government policy remains reactive rather than proactive, with retraining and transition programs developing after disruption occurs rather than in anticipation. This creates periods of significant unemployment and hardship, eventually followed by adjustment. The adjustment is painful but manageable because most workers do eventually transition to opportunity, albeit often at lower wages than their previous positions offered.

Optimistic Scenario: Managed Transition with Broad Benefit Distribution

A more optimistic but less likely scenario involves deliberate policy and organizational responses that manage transition effectively and distribute AI benefits broadly. Educational institutions update curricula rapidly, ensuring new workers enter the labor market with AI skills. Governments invest significantly in retraining programs that successfully help displaced workers develop new skills and transition to opportunity. Organizations adopt AI in ways that augment workers rather than replacing them, reducing disruption. Strong labor protections and social safety nets prevent the worst hardship. In this scenario, society experiences disruption, but it's managed effectively, inequality increases modestly rather than dramatically, and most workers share in AI's productivity benefits through some combination of higher wages, better working conditions, and reduced work hours.

This scenario requires significant public investment and organizational commitment. It's technically achievable but politically challenging because it requires accepting short-term costs—spending resources on transition support, moving more slowly with AI integration than maximum growth would allow—to distribute long-term benefits more equally. Historical precedent suggests this level of management rarely occurs voluntarily without significant political pressure.

Pessimistic Scenario: Rapid Disruption and Severe Dislocation

A less likely but possible pessimistic scenario involves rapid, disruptive AI adoption that outpaces adjustment capacity. Organizations, facing competitive pressure, adopt AI faster than most workers can adapt. Education and training systems can't keep pace with needed skill changes. Governments provide insufficient transition support. Labor markets experience sharp dislocation, with unemployment in disrupted sectors rising significantly. Income inequality increases dramatically as AI-skill premiums widen. Geographic inequality becomes extreme as opportunity concentrates in tech hubs. Social safety nets, inadequate for the scale of dislocation, become overwhelmed. The long-term outcomes might still be positive as society eventually adjusts, but the adjustment period involves severe hardship, social instability, and political upheaval.

This scenario is less likely because it would eventually trigger political response: voters suffering significant hardship pressure governments to intervene. However, the lag between disruption and response could create extended periods of severe dislocation. History suggests this happens occasionally when technological change outpaces adjustment capacity. Some accounts of the Great Depression attribute part of it to economic dislocation from rapid industrialization and agricultural mechanization that exceeded adjustment capacity. Similar dynamics could apply to AI if adoption becomes rapid enough and society's capacity to adjust proves inadequate.

The Bottom Line: What Cisco's CEO Is Really Saying

When Robbins warns about "carnage along the way," he's articulating something important that often gets lost in debates about whether AI will create or destroy jobs overall. Does AI create jobs? Yes, it likely does, both directly in new AI-related fields and indirectly through productivity improvements that enable business expansion and new market creation. Does AI destroy jobs? Also yes, in disrupted fields where human workers become less necessary or less valuable. Both are simultaneously true. The tension between these realities creates disruption that persists even if the long-term balance is positive.

The real warning, translated from executive language, is roughly this: "The next 5-10 years will involve significant disruption. Some people will be displaced. Some organizations will fail. Some regions will decline. The overall economy will improve and society will ultimately benefit, but the transition will be painful and won't benefit everyone equally. If we manage it poorly, the pain will be severe and unevenly distributed. If we manage it well, we can mitigate the worst effects. The choice is ours, but we need to start managing it now rather than reacting after disruption occurs."

This isn't a prediction that AI itself is bad or that we should reject the technology. It's a call for serious attention to how we implement AI, how we support people adapting to its effects, and how we distribute its benefits. Robbins' experience of living through the dot-com boom and bust likely informs this perspective: he's seen how technological transformation creates both tremendous opportunity and tremendous disruption, and he's suggesting we learn from that experience.

Conclusion: Preparing for Disruption of Uncertain Timing

The challenge of preparing for AI-driven labor market disruption is that the timing remains uncertain. We know significant disruption is coming, but "coming" could mean 2 years or 20 years. It could arrive all at once or gradually. It could be well-managed or chaotic. This uncertainty creates motivation challenges: it's hard to prioritize preparation for disruption that might not arrive in your career lifetime, even if the expected value of preparation is positive.

However, the actions that prepare you for AI disruption are also actions that improve your career resilience generally. Developing AI literacy, maintaining professional networks, building diverse skills, setting aside emergency savings, and remaining psychologically flexible all improve your capacity to navigate any disruption, whether AI-related or otherwise. Preparation pays off even if AI disruption never arrives at the scale some expect. This suggests that starting preparation now is prudent risk management rather than overreaction to speculative concerns.

For organizations, beginning AI integration deliberately and thoughtfully—experimenting with the technology, training workers, developing new workflows—is valuable regardless of how quickly disruption arrives. If AI adoption accelerates, early movers have advantages and experience. If disruption is slower than expected, organizations have still improved their technological sophistication and capabilities. This again suggests that beginning transition work now is prudent regardless of uncertainty about timing.

For policymakers, investing in education, supporting training, maintaining social safety nets, and encouraging geographic development are investments that create value even absent AI disruption. These investments improve society's productive capacity, resilience, and ability to manage various challenges. They're not wasted effort if AI disruption occurs more slowly than expected; they're contributions to social resilience regardless.
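The "prudent regardless of timing" argument in the preceding paragraphs can be read as a simple expected-value calculation. The sketch below is illustrative only: the function name, the payoff units, and every number are hypothetical assumptions, not figures from Robbins or from any study.

```python
def expected_net_value(p_disruption: float, value_if_disruption: float,
                       value_if_none: float, cost: float) -> float:
    """Expected payoff of preparing, net of the cost of preparation.

    p_disruption        -- assumed probability that disruption arrives
    value_if_disruption -- benefit of having prepared if it does arrive
    value_if_none       -- benefit of having prepared if it does not
    cost                -- up-front cost of preparing
    """
    return (p_disruption * value_if_disruption
            + (1 - p_disruption) * value_if_none
            - cost)


# Hypothetical payoffs in arbitrary units; only their ordering matters here.
for p in (0.0, 0.25, 0.5, 1.0):
    net = expected_net_value(p, value_if_disruption=10.0, value_if_none=2.0, cost=1.0)
    print(f"p(disruption)={p:.2f} -> expected net value {net:.2f}")
```

With these made-up payoffs the result is positive at every probability, because the benefit of preparation without disruption already exceeds its cost. That ordering is the assumption the argument rests on; if general-resilience benefits were smaller than the cost, the conclusion would depend on how likely disruption is.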

Ultimately, Robbins' warning asks us to take seriously the disruption that major technological transitions create. History shows these disruptions are real and sometimes severe. It asks us to prepare thoughtfully rather than hoping disruption doesn't arrive. And it asks us to consider how we want to manage disruption—whether we'll allow it to concentrate its effects on those least able to absorb them, or whether we'll invest in managing it in ways that distribute costs and benefits more equitably. The answer to that question will define whether AI's transition improves most people's lives or concentrates its benefits narrowly while creating widespread hardship. That choice isn't determined by technology—it's determined by human decisions about how to manage technology's effects.

For developers specifically, tools like Runable that help teams automate workflows and generate content using AI can accelerate productivity and reduce time spent on routine tasks, allowing focus on higher-value work. Understanding how to integrate such tools effectively into existing processes is part of the skill development that prepares workers for the AI era—knowing how to work alongside AI systems rather than viewing them as threats. Teams that adopt AI-powered productivity tools early develop capability and process understanding that positions them well for whatever disruption the labor market ultimately experiences.

FAQ

What does it mean when Cisco's CEO says AI will cause "carnage" in the job market?

Robbins uses "carnage" to describe significant disruption during the transition period as companies adopt AI and labor markets adjust to new capabilities. It doesn't mean mass unemployment or societal collapse, but rather a period with substantial job losses in some sectors, wage pressure in disrupted categories, organizational failures, and difficult transitions for affected workers. Historical precedent suggests such disruptions are real but typically followed by eventual adjustment and recovery, though the transition period can involve significant hardship. The term emphasizes that despite AI's ultimate positive effects, the path to those effects involves disruption that requires management and support.

Is the real risk AI replacing workers, or skilled workers using AI outcompeting others?

The more nuanced risk is the latter. Rather than AI systems directly replacing entire categories of workers, the real disruption occurs when skilled professionals equipped with AI tools become dramatically more productive than their counterparts without AI skills. This creates competitive pressure where AI-equipped workers become more valuable to employers, pushing non-equipped workers into lower-wage positions or unemployment. A customer service representative with AI assistance might handle three times the workload of a non-assisted representative, making the AI-equipped worker more valuable despite the role itself persisting. This distinction matters because it changes how disruption manifests and what solutions might address it.

What specific occupations are most at risk from AI disruption?

Occupations most at risk share common characteristics: they involve routine cognitive tasks, rely on pattern recognition and data analysis, have well-defined processes, and can be decomposed into discrete steps. Customer service roles, data entry, basic accounting, routine legal research, technical documentation, and administrative positions fall into this category. McKinsey research found a 38% decline in job postings for high-AI-exposure roles between 2022 and 2025. However, even within these categories, workers who develop AI proficiency become more competitive, suggesting that the risk isn't uniform across all positions but concentrates on those who lack AI integration skills. Cybersecurity, paradoxically, shows increasing demand because AI creates both attacks and defense needs simultaneously.

How long will the disruptive period last before labor markets stabilize?

Historical precedent from previous technological disruptions suggests transitions last 5-15 years depending on adoption speed and adjustment capacity. The agricultural-to-industrial transition took decades; the internet revolution's labor market effects played out over 15-20 years. AI adoption appears faster than either of these, potentially compressing timelines. Most economists expect significant disruption concentrated in the next 5-10 years, with gradual adjustment continuing beyond that. However, uncertainty about AI capability advancement rates, adoption speed, and adjustment capacity makes precise forecasting impossible. What's certain is that the transition won't be instantaneous, creating a window for preparation, but also that waiting too long to adapt creates unnecessary vulnerability.

What's the difference between AI replacing workers and AI augmenting workers?

Replacement means AI systems eliminate the need for human workers in specific functions—a robot assembly line replaces assembly workers. Augmentation means AI tools enhance what human workers can accomplish—a developer using AI coding assistants becomes more productive but remains essential. The distinction matters profoundly for employment outcomes. Replacement creates displacement; augmentation creates productivity gains that employers can use to expand business, improve profitability, or reduce costs while maintaining headcount. From a worker's perspective, augmentation is far preferable because it increases your value rather than eliminating it. From an employer's perspective, the choice between replacement and augmentation depends on various factors including cost, worker availability, and organizational capabilities.

Why does cybersecurity face different disruption dynamics than other fields?

Cybersecurity operates in an adversarial landscape where defenders and attackers continuously escalate capabilities. As attackers gain access to AI tools for sophisticated attacks, defenders require AI to detect and respond to threats faster than humans alone can achieve. This creates demand for cybersecurity workers that doesn't depend on productivity gains reducing headcount—it depends on necessity and the arms race dynamic. An organization might automate away customer service jobs but can't meaningfully reduce cybersecurity staff because the threat landscape has fundamentally changed. This makes cybersecurity one of the few fields where AI adoption likely increases rather than decreases job demand.

How should workers prepare for AI disruption if they're unsure when it will arrive?

Effective preparation focuses on building durable capabilities rather than predicting exact timing. Developing AI literacy—understanding how AI works, experimenting with AI tools, and thinking about how AI might transform your field—creates value regardless of disruption timing. Maintaining diverse skills that transfer across domains provides flexibility if your specific specialization becomes disrupted. Building professional networks keeps you connected to opportunity if career transitions become necessary. Accumulating emergency financial reserves provides stability if transitions occur. Maintaining psychological flexibility about potential career changes helps you adapt if disruption arrives suddenly. These preparations improve your career resilience generally, beyond just AI disruption, making them prudent regardless of timing uncertainty.

What role should government play in managing AI disruption?

Governments can influence disruption outcomes through education investment ensuring workers develop AI skills, training programs helping displaced workers transition to new opportunities, social safety nets providing stability during transitions, healthcare accessibility independent of employment removing barriers to job changes, and geographic development incentives reducing extreme opportunity concentration. These interventions require resources but potentially prevent more costly disruption from unmanaged transitions. Historical precedent suggests purely laissez-faire approaches allow disruption to concentrate severely on those least able to absorb it, while purely protective approaches slow beneficial innovation. Most effective outcomes likely involve enabling AI adoption while investing in transition support, though this requires political commitment that's not always forthcoming.

Is Cisco's warning predicting AI will be a failure like the dot-com bubble?

No. Robbins is explicit that AI is "bigger than the internet," suggesting the underlying technology is genuinely transformative and beneficial. His warning isn't that AI will ultimately fail, but rather that the path to AI's long-term benefits will involve a boom-bust cycle similar to the dot-com era. The dot-com bubble burst, but the internet survived and became revolutionary. Similarly, AI might experience a corrective period after current enthusiasm, but the technology will likely prove transformative long-term. The disruption Robbins warns about is the consequence of that boom-bust cycle and the adjustment period that follows it, not the failure of the technology itself.

How does AI adoption speed affect disruption severity?

Faster adoption creates more severe disruption because adjustment capacity—the rate at which workers can retrain, education systems can update, and organizations can restructure—has limits. If AI adoption occurs gradually over 10-15 years, workers have time to develop skills, education systems can update, and organizations can transition deliberately. If adoption occurs rapidly over 2-3 years, adjustment capacity becomes overwhelmed, creating periods of higher unemployment, wage pressure, and social disruption. Organizations facing competitive pressure tend toward rapid adoption, which might accelerate disruption beyond socially optimal levels. This creates tension between pursuing maximum innovation speed and managing disruption humanely, with different societies likely resolving this tension differently based on their political values.
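The relationship between adoption speed and adjustment capacity described above can be sketched with a toy model. Everything here is an assumption made for illustration: the function name, the even spread of displacement across the adoption window, the fixed yearly retraining capacity, and the specific numbers. It is not a forecast.

```python
def peak_backlog(workers_affected: float, adoption_years: int,
                 retrain_capacity_per_year: float) -> float:
    """Return the largest year-end count of displaced workers still awaiting retraining.

    Toy assumptions: displacement is spread evenly over the adoption window,
    and at most `retrain_capacity_per_year` workers can be retrained each year.
    """
    displaced_per_year = workers_affected / adoption_years
    backlog = 0.0
    peak = 0.0
    for _ in range(adoption_years):
        # New displacement arrives, then retraining absorbs what it can.
        backlog = max(0.0, backlog + displaced_per_year - retrain_capacity_per_year)
        peak = max(peak, backlog)
    return peak


# Hypothetical numbers: 1,200 affected workers, capacity to retrain 100 per year.
gradual = peak_backlog(1200, adoption_years=12, retrain_capacity_per_year=100)
rapid = peak_backlog(1200, adoption_years=3, retrain_capacity_per_year=100)
print(f"gradual adoption peak backlog: {gradual:.0f}")
print(f"rapid adoption peak backlog:   {rapid:.0f}")
```

Under these assumptions the same 1,200 workers produce no backlog when adoption takes 12 years, but a peak backlog of 900 when it takes 3 years, because displacement outruns retraining by 300 workers a year. The total number affected is identical in both cases; only the pace differs.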

Key Takeaways

- The Real Risk Is Skill Inequality: The disruption threat comes less from AI replacing workers entirely and more from AI-equipped workers outcompeting non-equipped workers, creating a bifurcated labor market.

- Historical Patterns Show Transitions Are Real But Manageable: Previous technological disruptions (industrial revolution, internet adoption) created significant transition challenges but ultimately improved outcomes; the question is how well we manage the transition period.

- Timing Uncertainty Creates Planning Challenges: While significant disruption is likely, uncertainty about when and how quickly it occurs creates motivation challenges for preparation, though preparation that improves general career resilience is wise regardless.

- Different Occupations Face Different Risks: Routine cognitive work faces higher disruption risk; fields requiring complex judgment, emotional intelligence, or addressing AI-created problems face different, often lower, disruption risk.

- Geographic and Organizational Variation Is Significant: Tech hubs and large organizations navigate disruption differently than secondary markets and small companies, potentially exacerbating existing regional inequality.

- Augmentation Approaches Reduce Disruption Better Than Replacement Approaches: Organizations that use AI to enhance worker capability rather than replace workers manage disruption better and build longer-term organizational capability.

- Cybersecurity Represents an Exception: Unlike most fields, where AI-driven productivity gains can reduce the need for workers, cybersecurity faces increasing demand because AI creates both attacks and defensive requirements simultaneously.

- Policy and Organizational Choices Matter: Disruption severity isn't determined by technology alone but by human decisions about how quickly to adopt, how to integrate AI, and how to support transitions.

- Continuous Learning Becomes Essential: The AI era requires treating education as continuous throughout careers rather than a phase of life, fundamentally shifting how workers should approach skill development.

- Preparation Now Reduces Disruption Later: Workers, organizations, and governments that prepare proactively for disruption navigate it better than those reacting after disruption occurs, suggesting investment in preparation is prudent risk management.
