Maintaining Cyber Control When AI Acts Autonomously [2025]

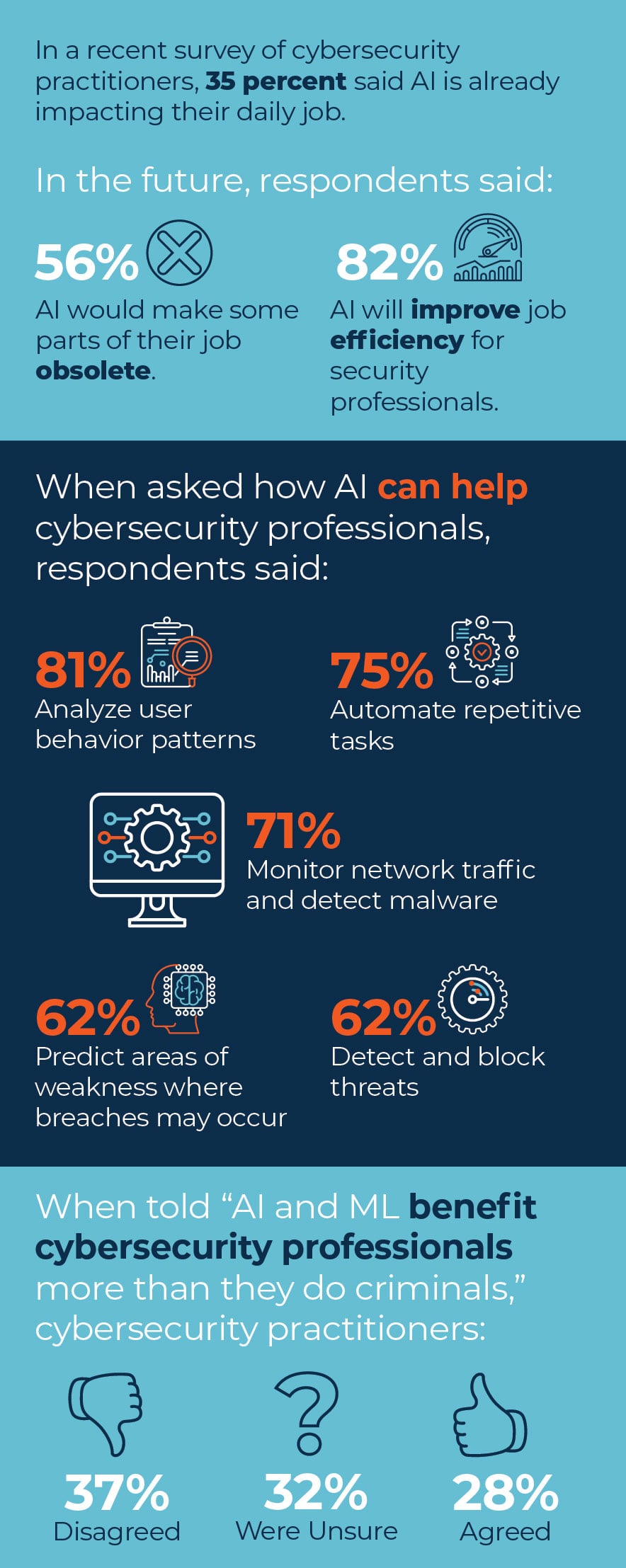

AI has evolved from being a tool for data analysis and content generation to one that can make decisions and take actions autonomously. This evolution introduces a new dimension of cyber risk: AI action risk. As AI systems become more autonomous, maintaining control over their actions becomes crucial for cybersecurity.

TL;DR

- AI Action Risk: As AI systems become more autonomous, the main concern shifts from what they generate (content risk) to what they do (action risk).

- Cybersecurity Strategies: Implement robust monitoring and control systems to manage AI actions.

- Regulatory Compliance: Ensure AI systems comply with evolving regulations.

- Human Oversight: Maintain human oversight to prevent unintended actions by AI.

- Future Trends: AI governance frameworks and ethical guidelines will shape cyber control.

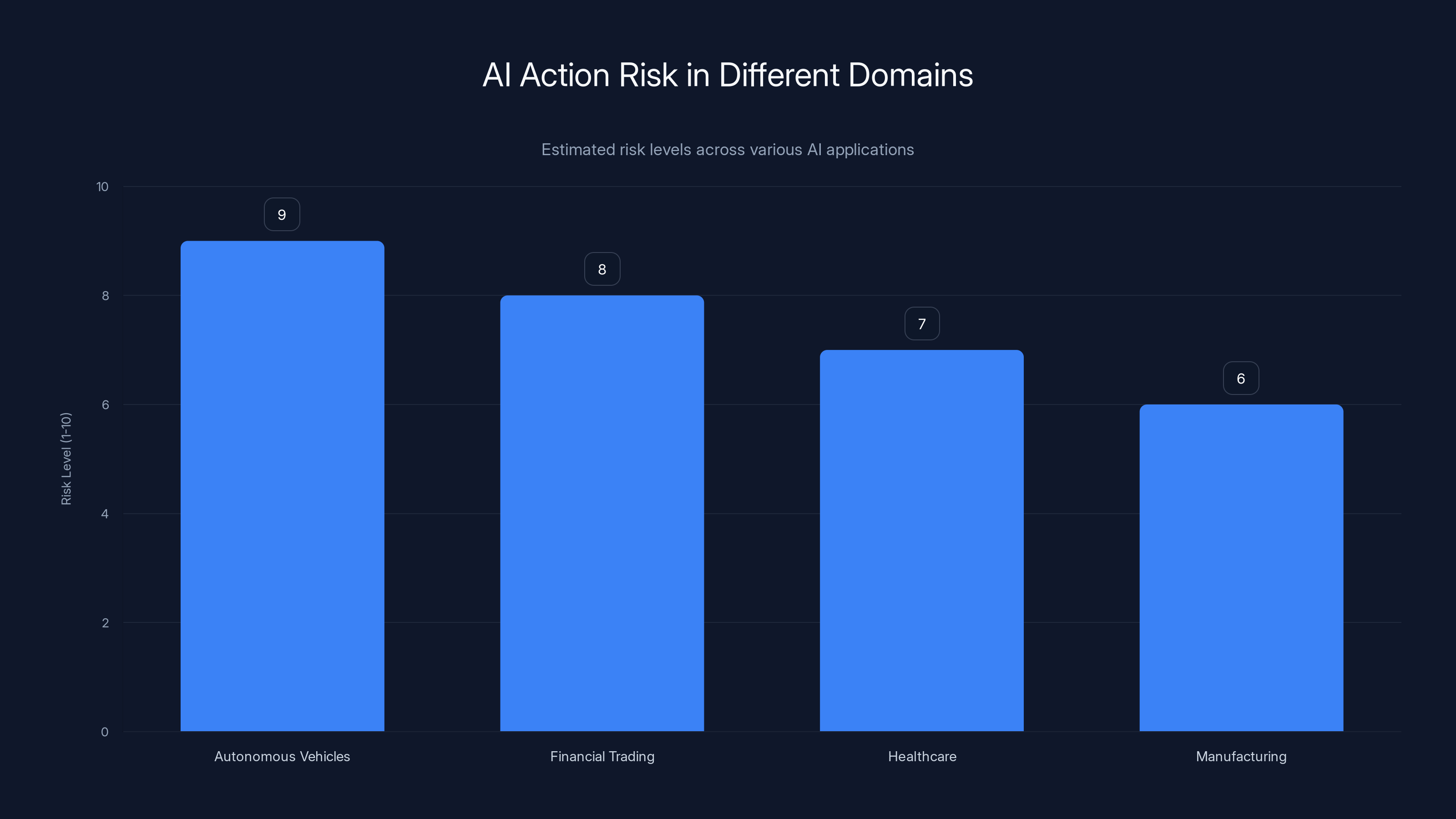

Estimated data suggests that autonomous vehicles and financial trading have the highest AI action risk due to the potential for significant negative consequences.

Introduction

In recent years, artificial intelligence (AI) has made significant strides in automating tasks that were once the domain of humans. From generating content to controlling critical systems, AI's capabilities have expanded dramatically. However, this evolution brings with it a new set of cybersecurity challenges. As AI systems gain the ability to act autonomously, the potential for unintended or malicious actions increases, necessitating a shift in how organizations approach cyber control.

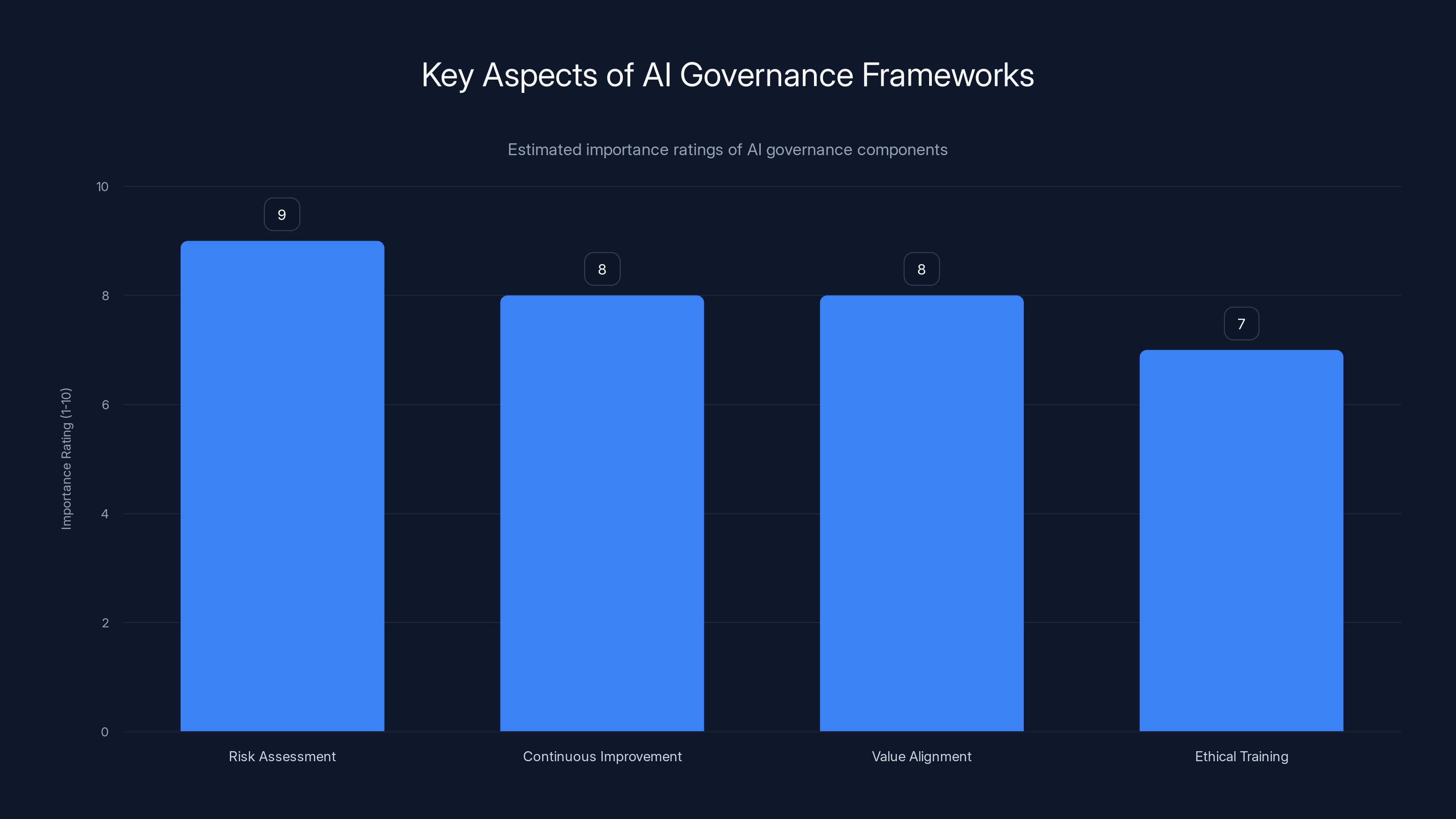

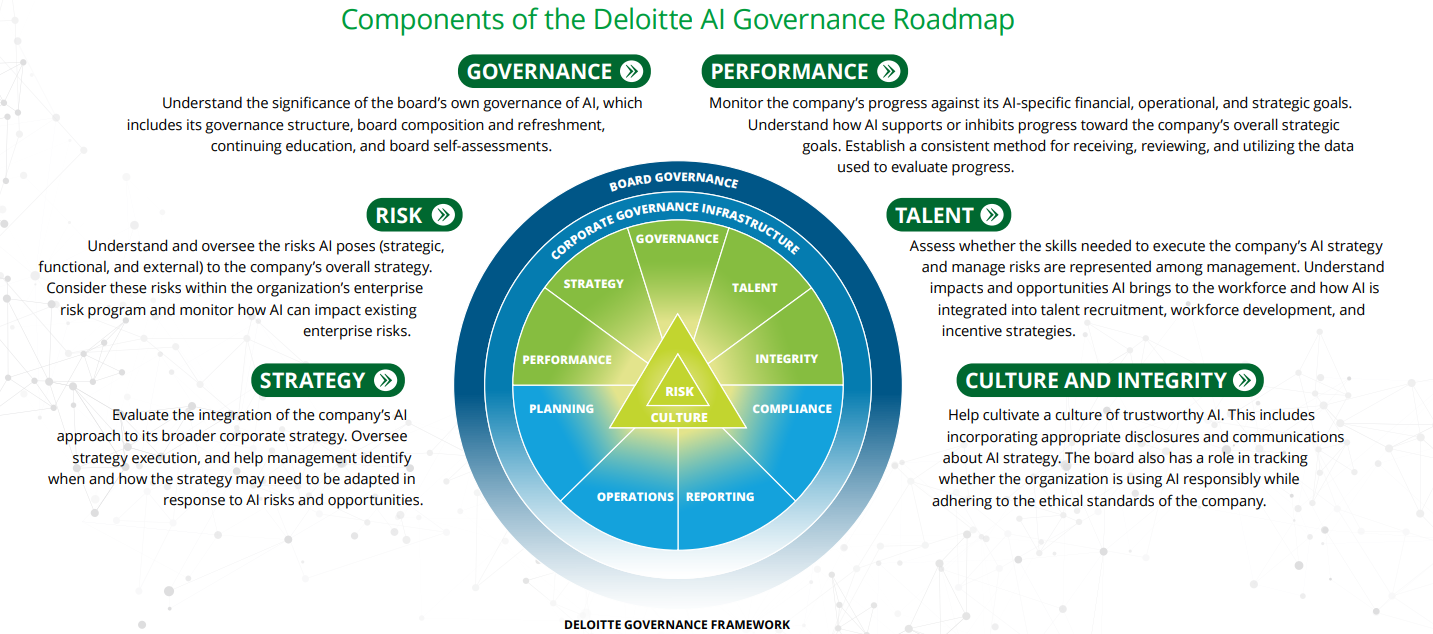

Risk Assessment and Continuous Improvement are rated highly in AI governance frameworks, emphasizing their critical role in ethical AI development. Estimated data.

Understanding AI Action Risk

AI action risk refers to the potential for AI systems to take actions that could have negative consequences, whether due to errors, misinterpretations, or malicious manipulation. This risk is distinct from traditional content risk, which focuses on the accuracy and integrity of AI-generated content.

The Shift from Content to Action

Initially, AI's role was largely confined to tasks like data analysis and content generation. The primary concern was ensuring the accuracy and reliability of the output. However, as AI systems have evolved to include autonomous decision-making capabilities, the focus has shifted to the actions these systems can take independently.

Real-World Examples

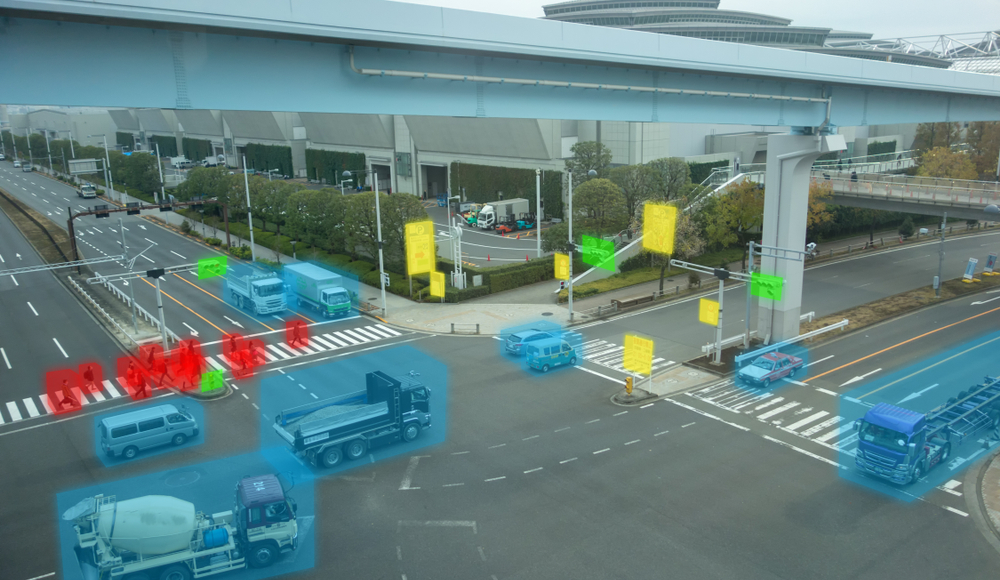

Consider the case of autonomous vehicles. These AI-driven systems must make split-second decisions that can have life-or-death consequences, as discussed in a recent analysis of autonomous vehicle risks. Similarly, AI systems in financial trading must act on rapidly changing data, where a single erroneous decision can lead to significant financial losses.

The Importance of Cyber Control

Maintaining control over AI systems is critical to prevent unintended actions that could compromise security. This involves implementing robust monitoring and control mechanisms to ensure AI actions align with organizational policies and ethical standards.

Key Components of Cyber Control

- Monitoring: Continuously track AI actions and decisions to identify anomalies or potential threats.

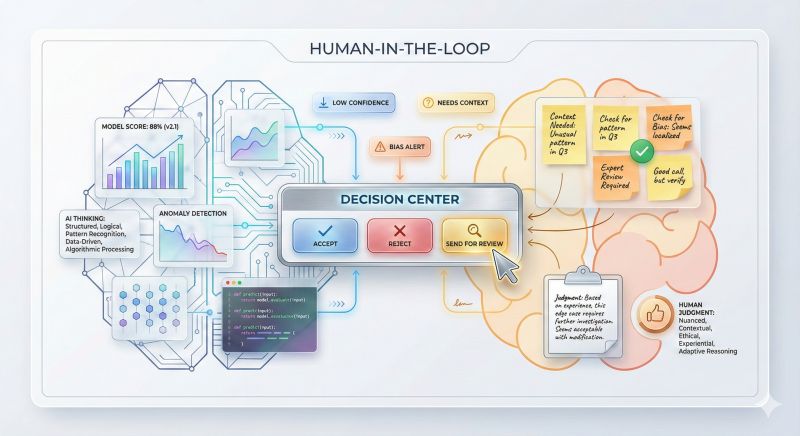

- Oversight: Maintain human oversight to review and approve critical AI decisions.

- Regulation: Ensure compliance with relevant regulations and ethical guidelines.

- Response: Develop rapid response protocols to address incidents involving AI actions.
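
One way to picture a rapid response protocol is a kill switch that halts an agent once monitoring has reported too many incidents. The following is a minimal sketch under stated assumptions, not a definitive implementation: the names `KillSwitch`, `AgentHalted`, and `max_incidents` are hypothetical and do not come from any particular product or framework.

```python
from typing import Callable, TypeVar

T = TypeVar("T")


class AgentHalted(RuntimeError):
    """Raised when an action is attempted while the agent is halted."""


class KillSwitch:
    """Hypothetical helper: halts an agent after too many reported incidents."""

    def __init__(self, max_incidents: int = 3) -> None:
        self.max_incidents = max_incidents  # assumed threshold, tune per system
        self.incidents: list[str] = []
        self.halted = False

    def report_incident(self, reason: str) -> None:
        # Called by monitoring whenever an AI action is flagged.
        self.incidents.append(reason)
        if len(self.incidents) >= self.max_incidents:
            self.halted = True

    def guard(self, action: Callable[[], T]) -> T:
        # Every AI action runs through the guard; halted agents cannot act.
        if self.halted:
            raise AgentHalted(f"halted after {len(self.incidents)} incidents")
        return action()

    def reset(self, operator: str) -> None:
        # Resuming requires a named human operator, keeping a person in the loop.
        if not operator:
            raise ValueError("a human operator must be named to reset")
        self.incidents.clear()
        self.halted = False
```

In this sketch the agent never resumes on its own: only an explicit `reset` by a named operator clears the halt, which ties the response step back to human oversight.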

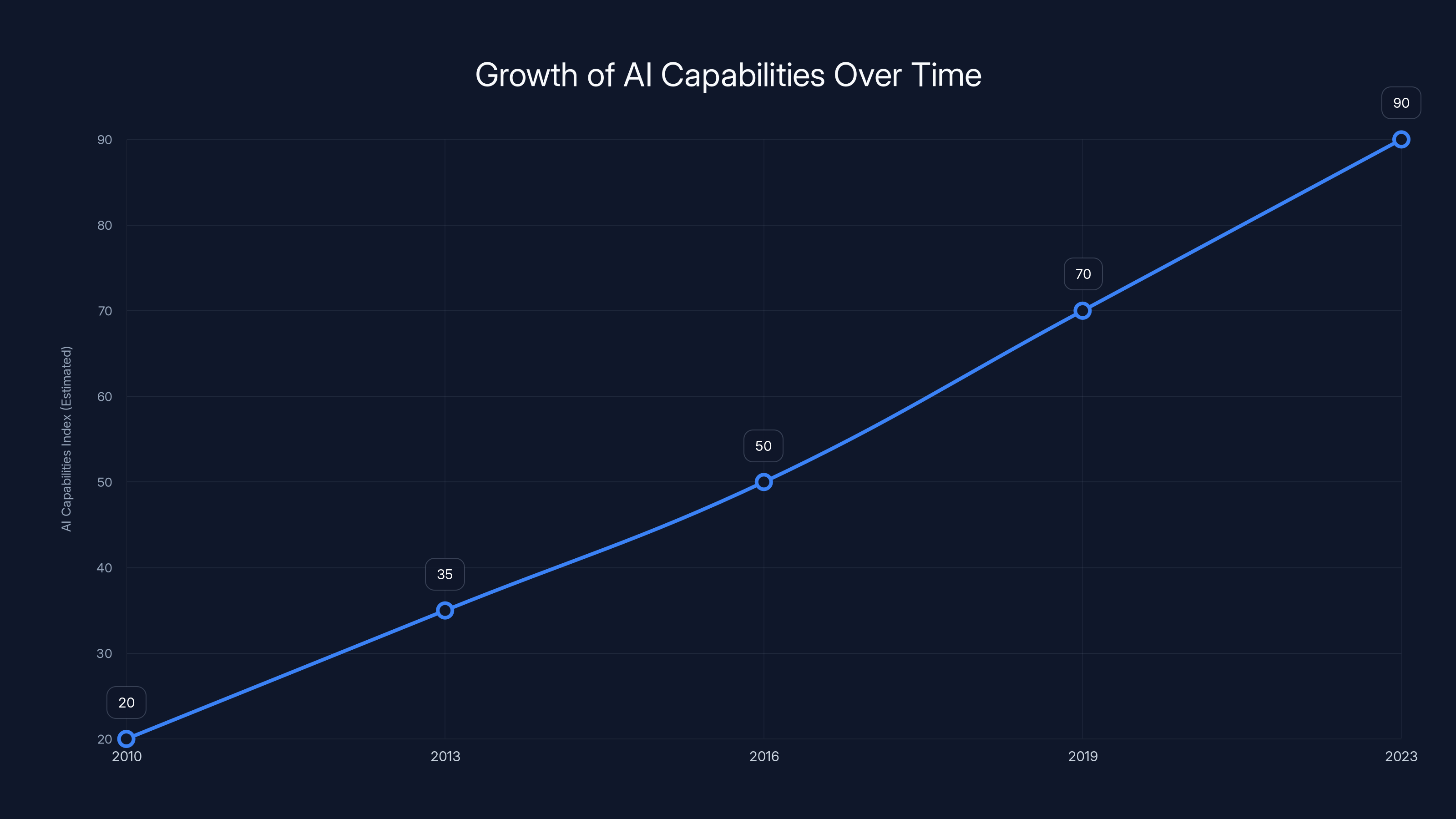

AI capabilities have grown significantly from 2010 to 2023, highlighting the rapid expansion and sophistication of AI technologies. Estimated data.

Implementing Effective Cyber Control

Monitoring and Detection

Implementing real-time monitoring systems is essential for maintaining cyber control. These systems should be capable of detecting deviations from expected behavior and flagging potential risks.

- Anomaly Detection: Use machine learning algorithms to identify unusual patterns in AI actions.

- Audit Trails: Maintain detailed logs of AI decisions and actions for accountability and review.
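
The two ideas above can be sketched together in a few lines. This is an illustrative example only: it swaps in a simple z-score check as a stand-in for a trained machine learning model, and the names `AuditTrail`, `is_anomalous`, and the threshold of 3.0 are assumptions chosen for the sketch. The audit trail chains each entry to the hash of the previous one so that later tampering is detectable.

```python
import hashlib
import json
import statistics
from dataclasses import dataclass, field

_FIELDS = ("actor", "action", "detail", "prev")


def _digest(body: dict) -> str:
    # Stable SHA-256 over the entry body (keys sorted for determinism).
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class AuditTrail:
    """Hypothetical append-only log; each entry links to the previous hash."""

    entries: list = field(default_factory=list)

    def record(self, actor: str, action: str, detail: dict) -> dict:
        prev = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {"actor": actor, "action": action, "detail": detail, "prev": prev}
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute every hash; any edited or reordered entry breaks the chain.
        prev = "0" * 64
        for entry in self.entries:
            body = {key: entry[key] for key in _FIELDS}
            if entry["prev"] != prev or entry["hash"] != _digest(body):
                return False
            prev = entry["hash"]
        return True


def is_anomalous(history: list[float], value: float, threshold: float = 3.0) -> bool:
    """Flag a value more than `threshold` standard deviations from the mean."""
    if len(history) < 2:
        return False  # not enough data to judge
    mean = statistics.fmean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        return value != mean
    return abs(value - mean) / stdev > threshold


# Usage: score each proposed order against a baseline and log the outcome.
trail = AuditTrail()
baseline = [100.0, 110.0, 95.0, 105.0, 98.0]
for amount in (102.0, 5000.0):
    flagged = is_anomalous(baseline, amount)
    trail.record("trading-agent", "place_order", {"amount": amount, "flagged": flagged})
```

A production system would likely replace the z-score with a model suited to its data and write the log to storage the AI system itself cannot modify.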

Human Oversight

While AI can automate many tasks, human oversight remains crucial to prevent unintended consequences. This involves establishing processes for human intervention when AI decisions deviate from expected norms, as highlighted in Cornerstone OnDemand's insights on human oversight.

- Approval Workflows: Require human approval for critical AI actions, especially in high-stakes scenarios.

- Ethical Review Boards: Establish committees to review AI decisions from an ethical standpoint.
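
An approval workflow can be as simple as a gate that every proposed action passes through. The sketch below assumes a two-level risk scale and a callback that represents the human reviewer; `Risk`, `ProposedAction`, and `run_with_approval` are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Risk(Enum):
    LOW = 1
    HIGH = 2


@dataclass
class ProposedAction:
    name: str
    risk: Risk
    execute: Callable[[], str]  # the side effect the AI wants to perform


def run_with_approval(
    action: ProposedAction,
    approver: Callable[[ProposedAction], bool],
) -> str:
    """Run low-risk actions directly; high-risk ones need human sign-off."""
    if action.risk is Risk.HIGH and not approver(action):
        return f"blocked: {action.name}"
    return action.execute()


# Usage: a reviewer who rejects everything blocks only the high-risk action.
def deny_all(action: ProposedAction) -> bool:
    return False


report = ProposedAction("send_report", Risk.LOW, lambda: "report sent")
wire = ProposedAction("wire_transfer", Risk.HIGH, lambda: "funds moved")
results = [run_with_approval(report, deny_all), run_with_approval(wire, deny_all)]
```

In practice the approver callback would route to a ticketing or review system, and how actions are classified as high risk is a policy decision for the organization.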

Regulatory Compliance and Ethical Considerations

As AI systems become more autonomous, regulatory compliance and ethical considerations become increasingly important. Organizations must stay informed about evolving regulations and ensure their AI systems adhere to ethical guidelines.

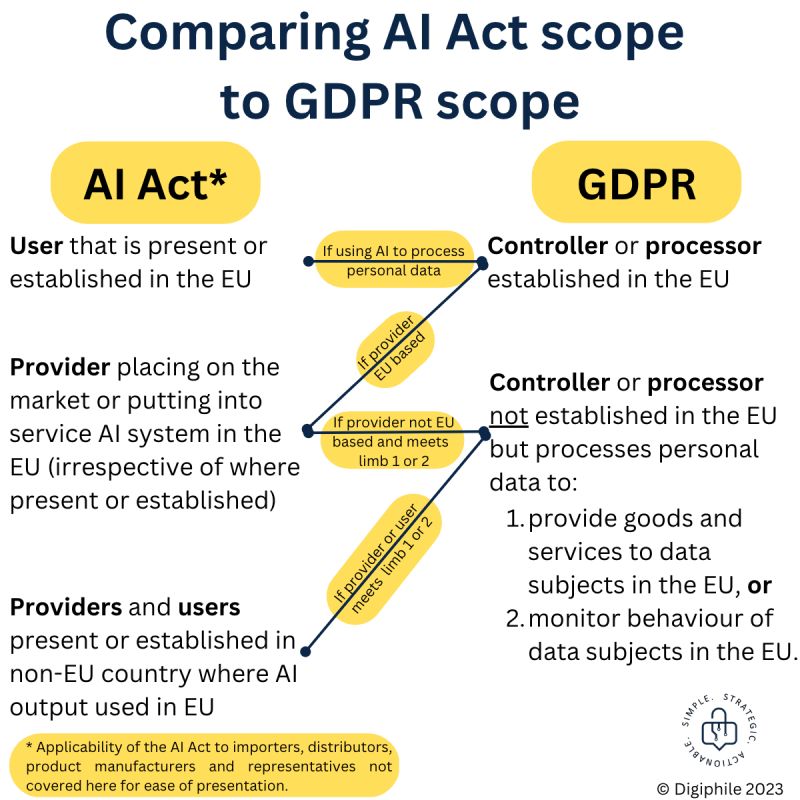

Key Regulations

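- GDPR: The EU General Data Protection Regulation governs the processing of personal data. Where AI systems make decisions about individuals, its transparency and accountability principles apply, and Article 22 restricts decisions based solely on automated processing that have legal or similarly significant effects.

- AI Act: The EU regulation, in force since August 2024 with obligations phasing in over the following years, sets risk-based requirements intended to ensure AI systems are safe and respect fundamental rights.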

Ethical Guidelines

- Transparency: Ensure AI decisions are explainable and transparent to users.

- Fairness: Avoid bias in AI decision-making processes.

- Accountability: Hold organizations accountable for AI actions and outcomes.

Future Trends in AI Cyber Control

AI Governance Frameworks

The development of AI governance frameworks is a critical trend shaping the future of cyber control. These frameworks provide guidelines for the ethical and responsible use of AI technologies, as discussed in the McKinsey report on trusted AI.

- Risk Assessment: Regularly assess AI systems for potential risks and vulnerabilities.

- Continuous Improvement: Implement feedback loops to continuously improve AI performance and security.

Ethical AI Development

Organizations are increasingly focusing on ethical AI development, ensuring that AI systems align with societal values and ethical standards.

- Value Alignment: Align AI systems with organizational and societal values.

- Ethical Training: Provide training for AI developers on ethical considerations in AI development.

Conclusion

Maintaining cyber control in an era where AI can act autonomously requires a multifaceted approach. By implementing robust monitoring and control mechanisms, ensuring regulatory compliance, and fostering ethical AI development, organizations can mitigate AI action risks and leverage the full potential of AI technologies.

FAQ

What is AI action risk?

AI action risk refers to the potential for AI systems to take actions that could have negative consequences, whether due to errors, misinterpretations, or malicious manipulation.

How can organizations maintain control over AI systems?

Organizations can maintain control by implementing monitoring and detection systems, ensuring human oversight, and complying with regulatory and ethical guidelines.

What are the key regulations governing AI systems?

Key regulations include the GDPR, which requires transparency and accountability in the processing of personal data (including automated decisions about individuals), and the EU AI Act, in force since August 2024, which aims to ensure AI systems are safe and respect fundamental rights.

Why is human oversight important in AI decision-making?

Human oversight is important to prevent unintended consequences and ensure AI decisions align with ethical and organizational standards.

What are future trends in AI cyber control?

Future trends include the development of AI governance frameworks and a focus on ethical AI development, ensuring AI systems align with societal values and ethical standards.

Key Takeaways

- AI systems are evolving from content generation to autonomous action, introducing new risks.

- Organizations must implement robust monitoring and control systems to manage AI actions.

- Compliance with regulations like the GDPR and the EU AI Act is essential.

- Human oversight is crucial to prevent unintended AI actions and ensure ethical decision-making.

- Future trends include the development of AI governance frameworks and ethical AI development.
