What Happened When I Watched Hours of Seeance 2.0 Videos

I sat down last Tuesday with a cup of cold coffee and decided to fall down a rabbit hole. Four hours later, I'd watched dozens of Seeance 2.0 videos, and honestly? I felt like I'd been staring into an uncanny valley made of pixels and bad decisions.

Seeance 2.0 isn't your typical AI video generator. It's a specialized tool from the Seeance team designed to create these hyper-realistic, deeply unsettling video sequences from text prompts. The results? They're simultaneously impressive and genuinely disturbing in ways that most AI video tools haven't quite achieved yet.

But here's the thing: that weirdness isn't a bug. It's almost a feature. And understanding why requires digging into what makes these videos so different from everything else you see online.

The core issue is that Seeance 2.0 operates in this strange middle ground. It's trained on real video data, so it understands motion, lighting, and physics in ways that earlier AI video models just didn't. But it's also fundamentally synthetic, which means it sometimes produces stuff that looks almost real but isn't quite there. That fractional miss is what creates the nightmare effect.

I watched videos of people with slightly-off faces. Not wrong enough to be obviously AI, but wrong enough to trigger every "something's not right here" alarm in your brain. I watched water that flowed kind of like water but with this strange, deliberate quality. I watched fabric move with an almost-but-not-quite naturalness that made you feel uncomfortable in a way you couldn't quite articulate.

This is actually a massive leap forward for AI video technology. Previous tools like Runway, Synthesia, and early versions of OpenAI's Sora could generate video, sure. But they often looked obviously fake—almost cartoonish in their artificiality. You'd watch them and think, "Okay, that's AI. No question."

Seeance 2.0 crosses a line. It makes you question what you're looking at. And that's both impressive and deeply unsettling.

The Technical Reality Behind the Nightmare

So what's actually happening under the hood that makes these videos so disturbing? It comes down to how Seeance 2.0 was trained and what it learned to optimize for.

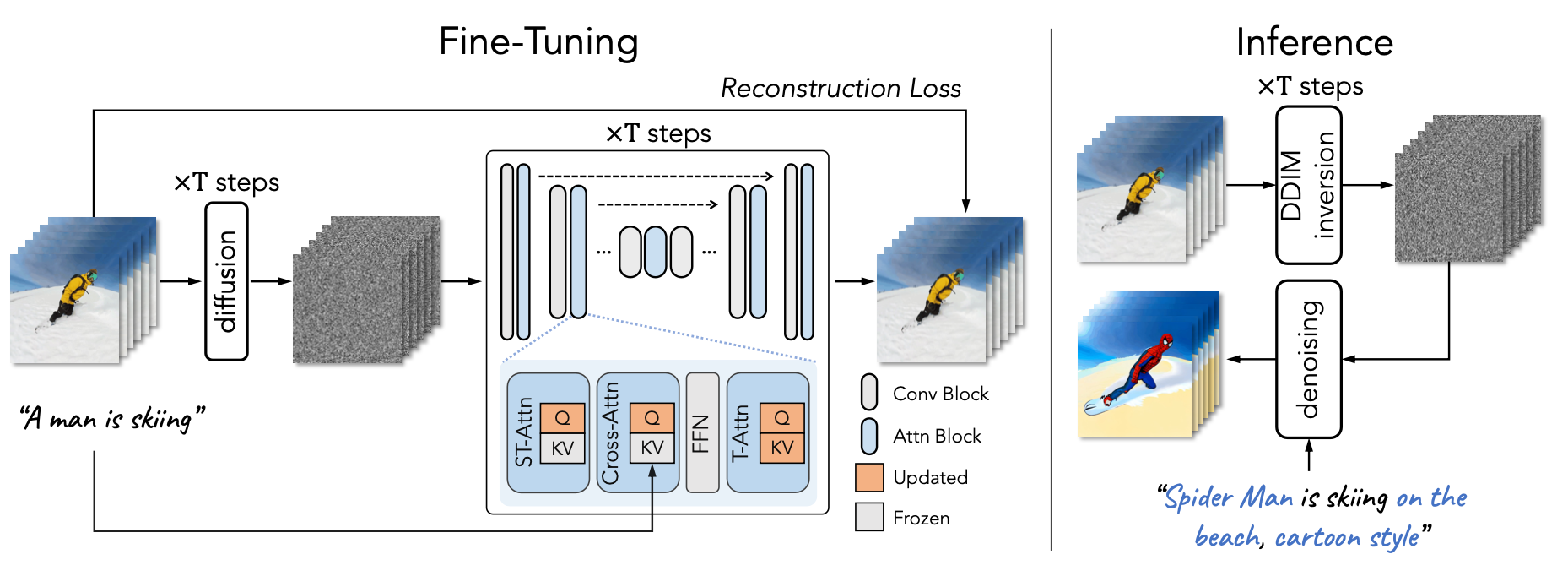

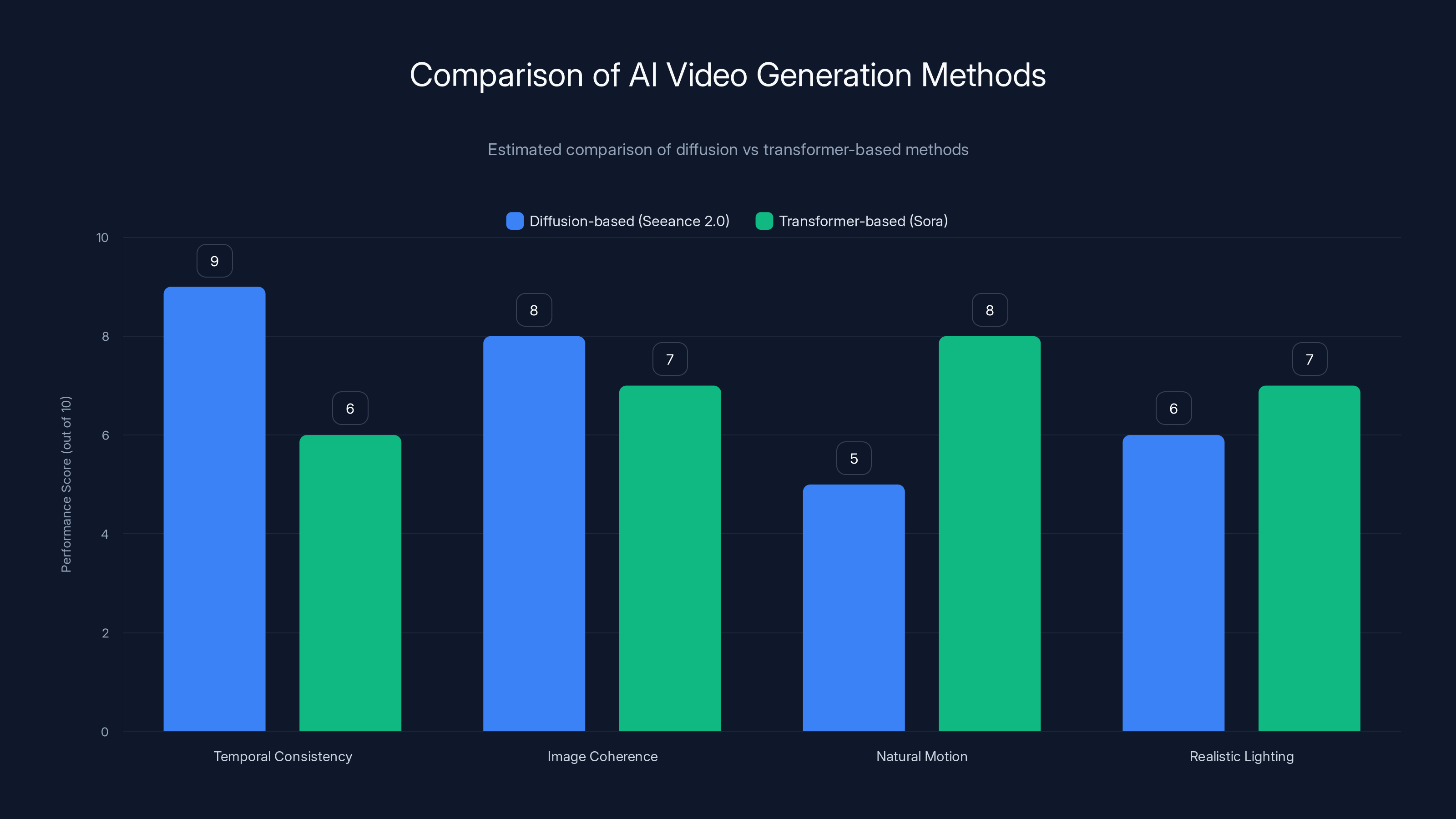

Seeance uses a diffusion-based approach. That differs from autoregressive methods, which predict each frame from the frames before it and can produce jittery, sometimes incoherent video. (Sora isn't a clean counterexample here: OpenAI describes it as a diffusion model built on a transformer architecture.) Diffusion models start with noise and gradually "denoise" it into coherent images. Applied to video, this means every frame is calculated against the prompt, not just against what came before.
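To make the "start with noise, then denoise" idea concrete, here's a toy sketch in Python. It is not Seeance's code, and nothing here comes from a real library: `toy_denoise` and its `target` argument are made up for illustration. The big assumption is that `target` stands in for what a trained network would predict from the prompt. A real model has to learn that prediction; this toy is simply handed it.

```python
import random


def toy_denoise(target, steps=50, seed=0):
    """Start from pure noise and step toward `target`.

    ASSUMPTION: `target` plays the role of the clean signal that a trained
    denoising network would predict from the prompt.
    """
    rng = random.Random(seed)
    # Step 0: pure Gaussian noise, one value per "pixel".
    sample = [rng.gauss(0.0, 1.0) for _ in target]
    for step in range(steps):
        # The noise level shrinks linearly and reaches 0 on the last step.
        noise_level = 1.0 - (step + 1) / steps
        sample = [
            # Move part of the way toward the predicted clean value, then
            # re-inject a little noise scaled by the current noise level.
            s + 0.2 * (t - s) + 0.1 * noise_level * rng.gauss(0.0, 1.0)
            for s, t in zip(sample, target)
        ]
    return sample


def mean_abs_error(a, b):
    """Average per-pixel distance between two equal-length signals."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


target = [0.0, 0.25, 0.5, 0.75, 1.0]
result = toy_denoise(target)
print("error after denoising:", mean_abs_error(result, target))
```

The point is only the shape of the loop: every step refines the whole sample at once, instead of building the output one piece at a time.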

That approach has a major advantage: temporal consistency. The video doesn't drift or lose coherence the way some AI videos do. But it has a cost.

Diffusion models tend to smooth things out. They average across lots of training data to find the "most likely" pixel values. For static images, that works great. For video, it creates this weird plasticity. Motion becomes overly fluid. Faces smooth out in ways that aren't quite right. Lighting becomes almost perfect, which sounds good until you realize that real-world lighting is actually kind of imperfect and that imperfection is what makes things look natural.
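Here's a small numeric sketch of that averaging intuition. It only illustrates the statistics, not how a diffusion model actually computes its output. The "texture" is an invented stand-in: a flat gray surface with random per-pixel variation, like pores or film grain.

```python
import random
import statistics

rng = random.Random(42)


def noisy_texture(width=200):
    """One 'real' sample: mid-gray plus random per-pixel variation."""
    return [0.5 + rng.gauss(0.0, 0.1) for _ in range(width)]


# 500 independent samples of the same kind of surface.
samples = [noisy_texture() for _ in range(500)]

# Pixel-wise mean across all samples.
averaged = [statistics.fmean(column) for column in zip(*samples)]

single_spread = statistics.pstdev(samples[0])
averaged_spread = statistics.pstdev(averaged)

print(f"variation in one sample:      {single_spread:.4f}")
print(f"variation in the averaged one: {averaged_spread:.4f}")
```

The averaged surface keeps the right overall brightness but loses almost all of its grain. That's the plasticky look in miniature: correct on average, with the imperfections averaged away.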

I watched a video of a person walking through a garden. Every single leaf moved with perfect, synchronized motion. The person's skin was flawless but weirdly plasticky. The shadows fell at just the right angles, but with an almost mathematical precision that made them feel artificial. That's not a failure of the technology—that's actually what happens when you get too good at averaging across thousands of training examples.

Another technical factor: temporal coherence means objects have to stay consistent from one frame to the next. Seeance 2.0 has to "remember" what should be where as it generates each successive frame. That's computationally expensive, and sometimes it leads to weird artifacts. I watched a video where a person's hand passed through a coffee cup. Not obviously, just slightly. Enough that it registered as wrong.
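If you want a rough way to think about frame-to-frame consistency, here's a minimal sketch. It assumes each frame is just a short list of grayscale values, and `frame_difference` and `flag_jumps` are names I made up. A check this crude only catches abrupt flicker. It would not catch something subtle like a hand clipping through a cup.

```python
def frame_difference(a, b):
    """Mean absolute per-pixel difference between two equal-size frames."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def flag_jumps(frames, threshold=0.2):
    """Return each index i where frame i differs sharply from frame i - 1."""
    return [
        i
        for i in range(1, len(frames))
        if frame_difference(frames[i - 1], frames[i]) > threshold
    ]


# Five tiny 4-pixel "frames": a slow brightening with one abrupt jump.
frames = [
    [0.10, 0.10, 0.10, 0.10],
    [0.12, 0.12, 0.12, 0.12],
    [0.14, 0.14, 0.14, 0.14],
    [0.90, 0.90, 0.90, 0.90],
    [0.92, 0.92, 0.92, 0.92],
]
jumps = flag_jumps(frames)
print("abrupt changes at frames:", jumps)
```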

The model also struggles with continuity of impossible things. If you ask it to generate a video of something that violates physics, it gets confused. It'll start generating one thing, realize it doesn't make sense, and then kind of... half-correct itself? The result is this disorienting motion that doesn't quite violate physics but doesn't quite follow them either.

There's also an issue loosely related to what researchers call "mode collapse," where a generative model keeps falling back on a narrow set of outputs. When you train an AI model on millions of videos, certain patterns show up far more often than others. Normal human walks, standard lighting conditions, common scenarios. The model learns these patterns deeply. So when you ask it to generate something unusual, it sometimes defaults back to these common patterns even when they don't fit your prompt. You ask for a video of someone on Mars, and you get someone walking in a landscape that looks almost alien but somehow also looks like Earth, just with the saturation cranked up.

Diffusion-based models like Seeance 2.0 excel in temporal consistency and image coherence but may lack natural motion and realistic lighting compared to transformer-based methods. Estimated data.

The Specific Videos That'll Make You Question Everything

Let me walk you through some of the ones that got to me.

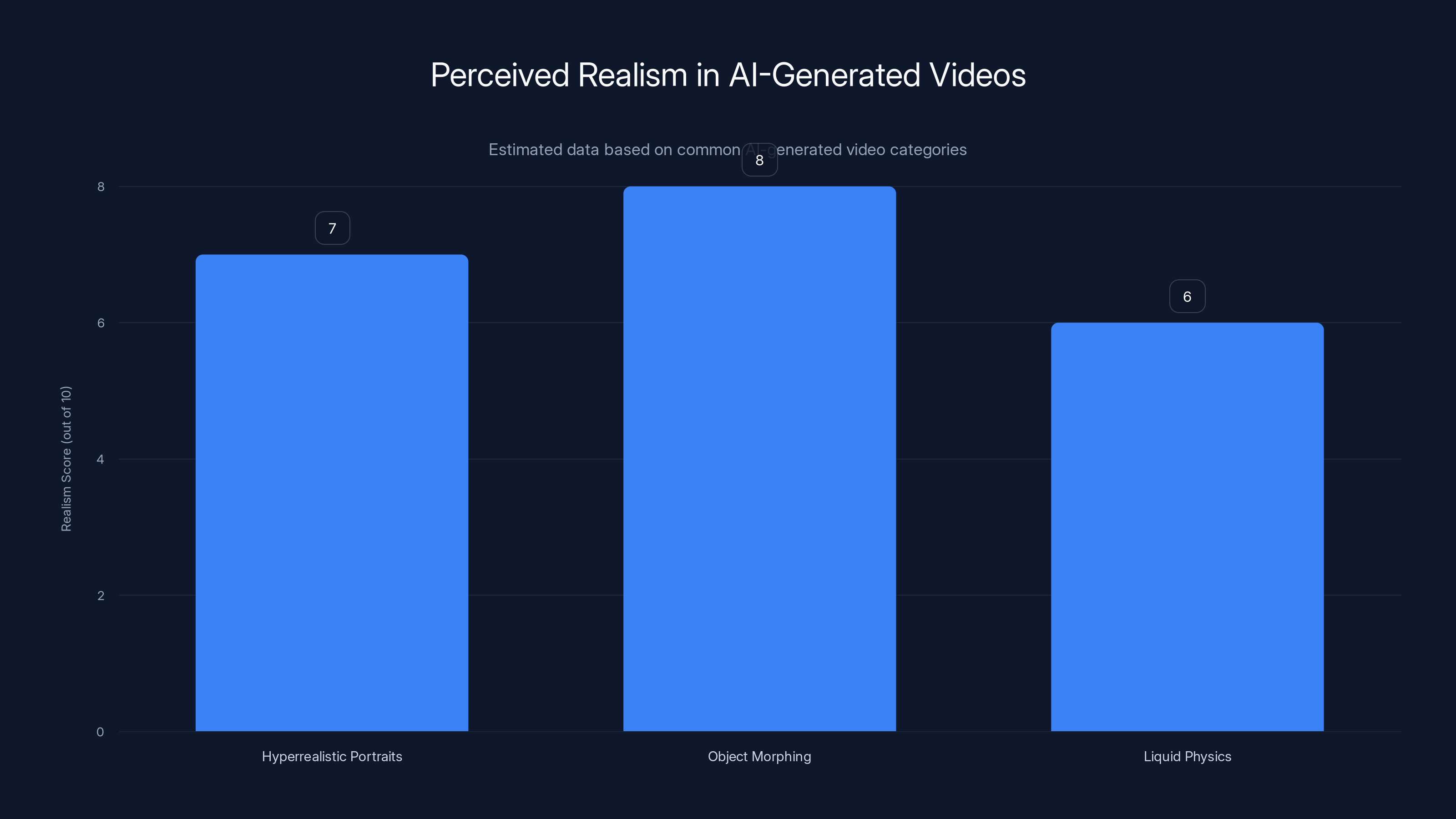

First, there are the "Hyperrealistic Portrait" videos. These are generated videos of people's faces, usually just looking at the camera or moving slightly. The faces are almost perfect. The skin tone is flawless. The hair moves realistically. But if you watch long enough, you notice that the blinking is wrong. Not the frequency, but the actual eyelid movement: it's too fast or too smooth. The micro-expressions are missing, too. Real faces are in constant subtle motion, with tiny adjustments and eyes that shift as attention shifts. These AI faces are too static, like they're concentrating really hard on looking normal.

Second, there are the "Object Morphing" videos, where one object gradually transforms into another. A coffee cup becomes a plant becomes a book. They're technically incredible—the morphing is smooth, the materials look right—but watching it, you realize you're seeing something that's impossible. Your brain knows objects don't just become other objects. But the AI has done such a good job making the transition smooth that it short-circuits the uncanny valley. Instead of looking fake, it looks like you're watching magic. Which is equally disorienting.

Then there's the "Liquid Physics" category. Seeance 2.0 generates videos of water, milk, honey, and other liquids behaving in various ways. Here's where it gets weird: the liquids move correctly according to physics, but they move with this weird determinism. Real liquid is chaotic—splashes have randomness, surface tension creates unpredictable patterns. AI-generated liquid is too perfect. Every drop falls at just the right angle. Every splash happens at just the right moment. It's like watching physics equations come to life, which is beautiful but deeply wrong.

One video that particularly got to me: a person's hand writing with a pen on paper. The hand position was right. The arm moved correctly. But the pen stroke never actually made contact with the paper in the right way. There was this microscopic distance between the pen and the paper, just enough that your brain caught it but not enough to be obvious. That one-pixel gap between intention and reality is what made it nightmare-fuel.

Another series: food being prepared. Seeance 2.0 generated videos of vegetables being cut, ingredients being mixed. The knife cuts were too precise. The mixing was too uniform. Real cooking is messy: things fall at the wrong angle, and mixing is uneven. But AI cooking is perfect, which somehow makes it less appetizing, not more.

Seeance 2.0 excels in photorealism but is more prone to the uncanny valley effect, while Runway ML offers greater creative flexibility and handles longer videos better. Estimated data.

Why This Matters for AI Video Technology

Okay, so Seeance 2.0 makes unsettling videos. But why should you care beyond the weird factor?

Because this is actually the frontier of AI video generation, and understanding what's happening here tells you everything about where the technology is heading.

For years, the knock against AI video was that it looked obviously fake. That was actually a feature, security-wise. Deepfakes were easier to spot because AI video had consistent artifacts. Now that's changing. Seeance 2.0 and similar tools are crossing into the territory where the videos look real but feel wrong. That's a fundamentally different problem.

The uncanny valley effect means we're at an inflection point. We're not at photorealism yet—there are still tells if you know what to look for. But we're close enough that casual viewers might not catch the errors. And that has implications for misinformation, for trust in digital media, for how we verify what we're seeing online.

It also tells us something about the nature of AI learning. Seeance 2.0's struggles aren't failures—they're signatures of how neural networks actually work. They learn statistical patterns. When those patterns produce something that's statistically correct but dynamically wrong, you get the uncanny valley. This isn't something you can engineer away easily. It's baked into how deep learning works.

There's also an interesting question about what constitutes "good" AI video. For commercial purposes, you might want obvious AI-generated content so people know they're looking at AI. For creative purposes, you might want something that looks real. For scientific purposes, you might want something that's accurate without looking real. Seeance 2.0 is excellent at none of these specifically—it's best at creating content that's disturbingly plausible. Which is its own category.

Comparing Seeance 2.0 to Other AI Video Tools

Let's talk about how Seeance 2.0 stacks up against the competition, because context matters here.

Seeance 2.0 vs. Runway ML

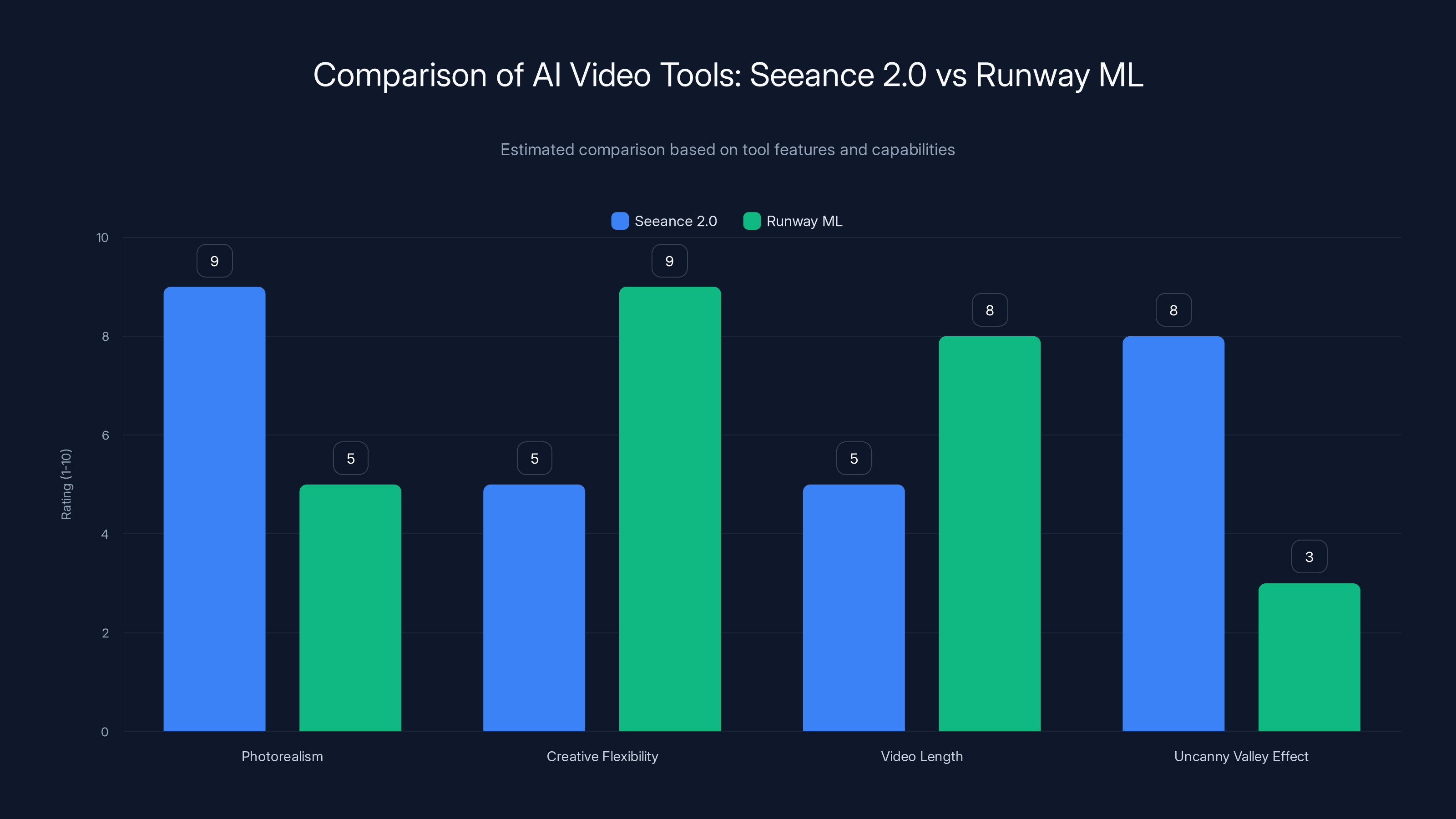

Runway ML has been the go-to tool for creative video generation for a few years now. It's easier to use, it integrates with existing workflows, and it's got a thriving community. But Runway's videos tend to have a slightly softer, more stylized look. They're clearly AI-generated, which isn't necessarily bad—it's actually useful for creative work where you want that aesthetic.

Seeance 2.0 goes harder at photorealism, which makes it better for certain use cases but worse for others. If you want obviously AI-generated content for a music video or artistic project, Runway might be better. If you want something that passes as real footage (for better or worse), Seeance 2.0 is the current leader.

Seeance 2.0 vs. OpenAI Sora

Sora is the elephant in the room. OpenAI's tool generates longer videos (up to one minute vs. Seeance's typical 10-30 seconds), and it has a better understanding of complex prompts. But Sora is also more expensive and has limited availability.

When Sora came out, people immediately compared it to Seeance 2.0. Sora's videos look more real in many cases, with better understanding of camera movement and complex scenes. But Sora also sometimes has the same uncanny valley problems—it's just less consistent about it. You might get a perfect video one time and a weird one the next.

Seeance 2.0 is more consistent in its weirdness, which is actually useful if you understand what you're getting. Sora is more inconsistent, which is useful if you're hoping for the next great shot.

Seeance 2.0 vs. Synthesia

Synthesia focuses specifically on AI avatar videos and talking-head content. It's not really competition in the same way—different tool for different purposes. Synthesia's avatars move stiffly and sound better than they look, which makes them useful for corporate training videos. Seeance 2.0 isn't designed for that use case at all.

| Tool | Best For | Video Length | Realism Level | Cost |
|---|---|---|---|---|
| Seeance 2.0 | Photorealistic short videos | 10-30 seconds | Very high (uncanny valley) | Premium |
| Runway ML | Creative, stylized videos | 4-60 seconds | High but stylized | Moderate |
| OpenAI Sora | Complex scenes, camera movement | Up to 60 seconds | Very high (less consistent) | Premium |
| Synthesia | AI avatars, talking heads | Flexible | Moderate (intentionally stylized) | Moderate |

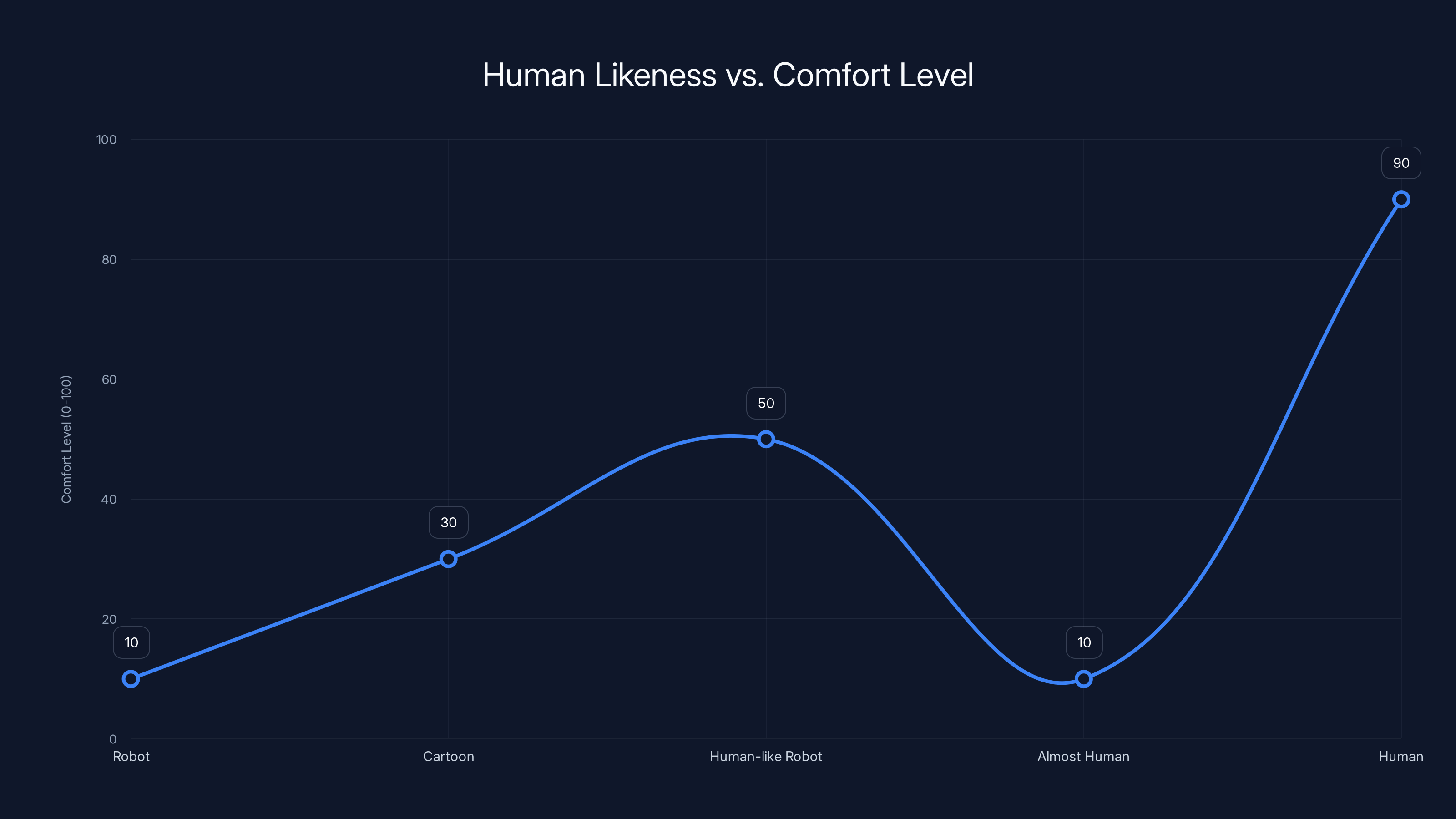

The Uncanny Valley effect shows a dip in comfort level when objects appear almost human but not quite, causing discomfort.

The Uncanny Valley Explained: Why Our Brains Hate This

Let's dig into the actual neuroscience here, because understanding why Seeance 2.0 videos are so disturbing requires understanding how your brain actually processes visual information.

Your brain has multiple systems for evaluating whether something is real or fake. The best-known account of what happens when they disagree is the uncanny valley hypothesis, proposed by roboticist Masahiro Mori in 1970. The idea is simple: as something becomes more human-like, we increasingly accept it, until it gets almost but not quite human, at which point we recoil.

The mechanism isn't fully understood, but it probably involves multiple neural systems working in parallel. One system recognizes objects and features—that's a face, that's a hand. Another system predicts motion and behavior. A third system processes emotions and intentions. When all of these are aligned, your brain accepts what it's seeing as real. When some are aligned and others aren't, your brain gets confused.

Seeance 2.0 videos are specifically good at creating this confusion. The facial recognition system says "that's a human face." The motion prediction system says "that movement is plausible." But the intention-reading system says "something's off about that expression." Your brain can't reconcile these signals, so it defaults to discomfort.

There's also the issue of expectation violations. Your brain is constantly predicting what it expects to see next. When reality matches those predictions, you feel comfortable. When reality violates those predictions, your brain flags it as important. Most AI video violations are so constant that you quickly adapt: you expect weirdness from the specific tool, so weirdness becomes normal. But within a single Seeance 2.0 clip, the weirdness comes and goes. One moment it's perfect. The next it's wrong. That unpredictability is what makes it nightmare-fuel.

There may be a neurological component too. The amygdala, a brain region involved in processing fear, is often suggested as part of the response when something triggers prediction errors, though the evidence is far from settled. Seeance 2.0 videos are prediction error machines. Every slight wrongness triggers a little burst of confusion and discomfort.

Interestingly, this response doesn't seem to be purely cultural or learned. It appears to be at least partly hardwired. Even people who have never seen AI video before report the same discomfort. Your brain seems to be detecting motion anomalies at a pre-conscious level.

Why These Videos Exist and Who's Using Them

So Seeance 2.0 makes creepy, unsettling videos. The obvious question: why would anyone use it?

The answer is more nuanced than you might think.

First, there's the research angle. Computer vision and AI researchers use Seeance 2.0 to understand how well current models can generate realistic video. The uncanny valley effect is actually useful data—it tells researchers where the model is succeeding and where it's failing. Every video is a data point about what aspects of physical reality are easiest and hardest to simulate.

Second, there's the artistic angle. Some creators specifically want that unsettling quality. There's a whole genre of AI art that leans into the weirdness, that uses the uncanny valley as aesthetic. Music videos, experimental films, installations. For these creators, the fact that Seeance 2.0 produces creepy, disorienting content is a feature, not a bug.

Third, there's the commercial angle—though this is more speculative. Companies working on video generation as a service can use Seeance 2.0 to benchmark their own systems. "Our system is better than Seeance 2.0 because it avoids these specific artifacts." It's useful as a reference point.

Fourth, and this is where it gets concerning, there's the misinformation potential. We're not at the point where Seeance 2.0 can reliably fool everyone—not yet. But as the technology improves, the concern about deepfakes becomes more acute. The existence of Seeance 2.0 forces us to reckon with the question of digital trust. If video can be faked this well, how do we know what we're looking at online?

There's also just the "because we can" angle. Researchers build things because they're technically interesting, not because anyone asked for them. Seeance 2.0 exists because the Seeance team figured out how to make it work, and then they released it to see what people would do with it.

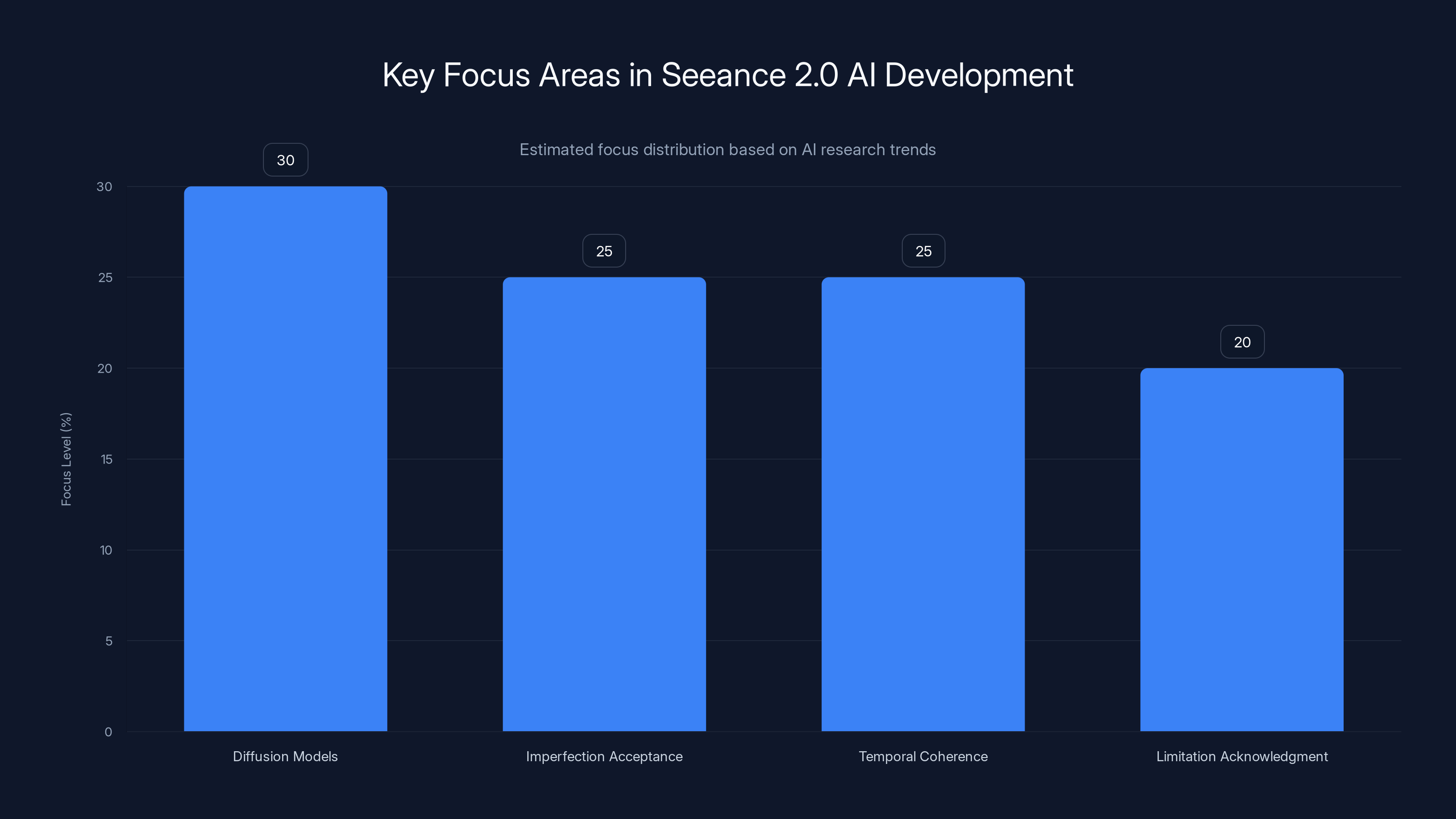

The chart highlights the key focus areas in Seeance 2.0's AI development, with diffusion models receiving the highest attention. Estimated data reflects current AI research trends.

The Implications for Trust and Verification

Here's what worries me about Seeance 2.0, and why I think it matters beyond just being creepy.

We live in a world where video evidence used to be fairly reliable. If you saw a video, you could reasonably assume it actually happened. That assumption is now broken. Not in the sense that we can't ever trust video again—we can use technical methods to verify authenticity—but in the sense that the default assumption of authenticity can no longer hold.

This matters because video is used to settle disputes. In courts, in insurance claims, in social media debates. The moment someone can point to a video and say "but that could be AI-generated," the evidentiary value of video drops dramatically.

We're not at the point where Seeance 2.0 can generate hour-long, complex videos that pass forensic scrutiny. But we're getting there. Other tools are improving. And the gap between what's technically possible and what's widely known to be possible is always a gap where problems flourish.

The solution isn't to ban AI video generation. That's both impossible and undesirable—the technology has legitimate uses. The solution is infrastructure for verification. Digital signatures, metadata that proves provenance, blockchain verification if you're into that. But most importantly, collective skepticism. Assume nothing until verified.
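To show what "verification infrastructure" means at its very simplest, here's a sketch using only Python's standard library. It's a stand-in, and the difference matters: this uses an HMAC with a shared secret key, which is a message authentication code and not a true digital signature. Real provenance schemes rely on public-key signatures so that anyone can verify without holding the secret. The function names, the key, and the fake "video" bytes are all made up for illustration.

```python
import hashlib
import hmac


def sign_video(video_bytes: bytes, secret_key: bytes) -> str:
    """Hash the file, then authenticate the hash with a shared secret."""
    digest = hashlib.sha256(video_bytes).digest()
    return hmac.new(secret_key, digest, hashlib.sha256).hexdigest()


def verify_video(video_bytes: bytes, secret_key: bytes, tag: str) -> bool:
    """Recompute the tag and compare it in constant time."""
    expected = sign_video(video_bytes, secret_key)
    return hmac.compare_digest(expected, tag)


# Hypothetical key and placeholder bytes standing in for a real video file.
key = b"demo-key-not-for-production"
original = b"\x00\x01 placeholder bytes standing in for a video file"
tag = sign_video(original, key)

tampered = original + b"\x00"  # a single extra byte

print("original verifies:", verify_video(original, key, tag))
print("tampered verifies:", verify_video(tampered, key, tag))
```

Changing even one byte of the file breaks verification. That's the property you want from provenance metadata, whatever scheme ends up carrying it.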

Seeance 2.0 is actually useful in forcing this reckoning earlier rather than later. It's weird and unsettling, which makes people pay attention. If the tool were perfectly photorealistic without any uncanny valley effect, people might use it without thinking about the implications. The fact that it's creepy makes people think.

What Seeance 2.0 Reveals About AI Development

Looking at Seeance 2.0, you can learn a lot about where AI research is headed and what the current generation of researchers cares about.

First, there's the focus on diffusion models. Multiple labs are betting that diffusion-based approaches are better than transformer or GAN-based approaches for video. Seeance 2.0's team clearly believes this. And they might be right—diffusion models have been surprisingly effective across image and audio generation. But like all architectural choices, there are trade-offs.

Second, there's the acceptance of imperfection. A few years ago, AI researchers were obsessed with hitting specific metrics—FID score, LPIPS, whatever. Seeance 2.0 and Sora both represent a shift toward "does this look good enough?" rather than "what's the perfect metric?" That's actually more mature thinking about what matters.

Third, there's the focus on temporal coherence. The big breakthrough in Seeance 2.0 was probably solving the problem of maintaining consistency across frames. That's where a lot of the uncanny valley effects come from—inconsistency. The more consistent the tool, the more real it looks (even if that consistency creates its own weirdness).

Fourth, there's the acceptance of limitations. Seeance 2.0 is open about what it can't do. It can't generate long videos. It struggles with complex hands. It has trouble with transparency and reflections. Being honest about limitations is actually rare in AI research, where there's a lot of incentive to oversell capabilities.

Estimated data suggests that 'Object Morphing' videos are perceived as the most realistic, while 'Liquid Physics' videos are seen as slightly less convincing due to their deterministic nature.

Practical Guide: Using Seeance 2.0 Effectively

If you're actually interested in using Seeance 2.0—and despite my warnings, some of you probably are—here's what you need to know.

Start with simple prompts. "A person walking through a park" rather than "a person wearing a complex outfit doing parkour through an urban environment." Simple prompts give the model fewer things to get wrong.

Describe lighting explicitly. One of the model's strengths is understanding lighting conditions. Use that. "Bright daylight with strong shadows" vs. "diffuse indoor lighting." Specific lighting descriptions actually make the output better.

Avoid complex hands. This is where the model most consistently fails. If your video doesn't require hands doing something specific, avoid them. If it does, keep the hand actions simple.

Test the output with the uncanny valley in mind. Watch for those tells I mentioned earlier. Blink patterns. Micro-expressions. The way fabric moves. Once you know what to look for, you can better evaluate whether the output is usable for your purpose.

Consider the purpose. If you're using it for creative work where weirdness is intentional, Seeance 2.0 is great. If you're using it for something that needs to pass as real, be prepared for scrutiny. People are getting better at spotting AI video.

Understand the ethical implications. Even if you're not trying to deceive anyone, think about what your use of this tool communicates about the trustworthiness of video in general.
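The prompting tips above (keep it simple, spell out the lighting, go easy on hands) can be turned into a small pre-flight check. This is a hypothetical helper of my own, not part of any Seeance tool or API. The 25-word limit and the list of hand-related words are arbitrary assumptions, so tune them to taste.

```python
import re

# ASSUMPTION: a rough, hand-picked list of words that imply detailed hand action.
HAND_WORDS = re.compile(
    r"\b(hand|hands|finger|fingers|grip|gripping|writing|typing)\b",
    re.IGNORECASE,
)


def build_prompt(subject: str, lighting: str) -> str:
    """Combine a simple subject with an explicit lighting description."""
    subject = subject.strip().rstrip(".")
    lighting = lighting.strip().rstrip(".")
    return f"{subject}. Lighting: {lighting}."


def prompt_warnings(prompt: str, max_words: int = 25) -> list:
    """Return human-readable warnings for prompts likely to go wrong."""
    warnings = []
    if len(prompt.split()) > max_words:
        warnings.append("Prompt is long; simpler prompts leave less to get wrong.")
    if HAND_WORDS.search(prompt):
        warnings.append("Prompt involves hands; keep hand actions simple.")
    return warnings


simple = build_prompt(
    "A person walking through a park",
    "bright daylight with strong shadows",
)
risky = build_prompt("A person's hands shuffling cards", "diffuse indoor lighting")

print(simple, prompt_warnings(simple))
print(risky, prompt_warnings(risky))
```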

The Future of AI Video Generation

So where does this all go from here?

Short-term prediction (within six months): we'll see Seeance 2.0 or similar tools get better at the things they're currently bad at. Hand interactions, reflections, complex expressions. The gap between "looks real" and "actually is real" will narrow further.

Medium prediction (1-2 years): We'll see tools that can generate longer videos—full minutes, potentially even longer. This changes the calculus significantly. Short videos can be forensically analyzed. Long videos with consistent coherence are much harder to verify or dismiss.

Longer prediction (3-5 years): Video authenticity will become a major concern in litigation, journalism, and politics. We'll probably see regulatory responses. "Deepfake" will become a common term in mainstream discourse, not just tech discourse.

And ultimately, we'll probably see bifurcation. Some tools will lean into photorealism and pursue it relentlessly. Others will lean into obvious AI aesthetics and pursue that. The in-between space where Seeance 2.0 currently lives—realistic enough to be unsettling but not realistic enough to be convincing—might actually be the transient space between eras rather than a stable category.

Why This Matters Even If You Never Use Seeance 2.0

Here's the thing I want to stress if you've made it this far: you don't need to care about Seeance 2.0 specifically to care about what Seeance 2.0 represents.

It represents the current frontier of AI video generation. It shows what's technically possible right now, not in five years or ten years, but right now. And if something is possible now, it will be cheaper, easier, and more accessible in a few months.

The uncanny valley effect of Seeance 2.0 is actually a gift. It makes the artificiality obvious enough that people notice. The real problem will be when the tools get better, when the artificiality becomes invisible. That's when the questions about trust and verification become urgent.

Seeance 2.0 also reveals how much progress has been made in AI research. A year ago, the idea of photorealistic AI video that triggers the uncanny valley would have seemed impossible. Today it's real. That speed of progress should concern us all.

But it's not all negative. AI video generation has legitimate uses. It can help in filmmaking, game development, education, accessibility. The technology itself is neutral. It's the use that matters.

What I came away with after watching hours of Seeance 2.0 videos wasn't fear, exactly. It was something more like clarity. The future is going to be weird. We're going to need better tools for verification. We're going to need clearer standards about what's acceptable in terms of AI-generated media. And most importantly, we're going to need a more general understanding that what we see online might not be what it appears to be.

Seeance 2.0 is just the beginning of that reckoning. And honestly, I'm glad it exists. Better to see the weirdness and think about it now than to be blindsided by something better and more convincing later.

FAQ

What exactly is Seeance 2.0?

Seeance 2.0 is an AI video generation tool that uses diffusion-based models to create photorealistic video from text prompts. It's specifically designed to prioritize visual quality and temporal coherence, which makes its outputs feel realistic but often unsettling due to the uncanny valley effect.

How does Seeance 2.0 differ from other AI video tools like Runway?

Runway ML prioritizes creative flexibility and stylization, while Seeance 2.0 prioritizes photorealism. Runway's videos look clearly AI-generated, which makes them useful for artistic projects. Seeance 2.0's videos aim for real-world authenticity, which makes them more unsettling when they fall slightly short of that goal. Seeance 2.0 generates shorter videos (10-30 seconds) while Runway handles longer formats more effectively.

Why do Seeance 2.0 videos feel so unsettling?

Seeance 2.0 videos trigger the uncanny valley effect—they're realistic enough to seem almost real but contain subtle imperfections that your brain detects and finds disturbing. These imperfections include slightly incorrect blinking patterns, plastic-looking skin, overly smooth motion, and micro-expression issues. The combination of realism with subtle wrongness is more disturbing than obviously fake AI video or genuinely authentic video.

What are the main technical limitations of Seeance 2.0?

Seeance 2.0 struggles most with complex hand interactions, reflections, transparent objects, and maintaining consistency in impossible physics scenarios. It also tends to default to common patterns when asked to generate unusual scenarios, and the maximum video length is typically 10-30 seconds, which limits its applications for longer narrative content.

Can Seeance 2.0 be used for creating deepfakes?

While Seeance 2.0 could theoretically be misused for creating misleading content, current limitations make it difficult to reliably create convincing deepfakes for most purposes. The videos require text prompts (you can't swap faces directly), and the visible artificiality means tech-savvy viewers can usually detect the AI generation. However, as AI video tools improve, this concern will become more serious.

How much does Seeance 2.0 cost?

Seeance 2.0 operates on a premium pricing model with credits-based usage. Exact pricing varies, but it's generally positioned as an expensive tool compared to free or low-cost alternatives like Runway's free tier. You should check the official Seeance website for current pricing, as these tools frequently update their cost structures.

What's the best way to prompt Seeance 2.0 for good results?

Use simple, specific prompts that describe lighting, composition, and motion clearly. Avoid complex hand interactions and transparent objects. Keep videos under 15 seconds. Be explicit about lighting conditions—the model understands lighting instructions well. Test outputs with the uncanny valley in mind, looking for incorrect blink patterns, micro-expression issues, and unnatural material behavior.

How does Seeance 2.0 compare to OpenAI's Sora?

Sora generates longer videos (up to 60 seconds) and has better understanding of complex prompts and camera movements. However, Sora is less consistently photorealistic and is more expensive and less widely available. Seeance 2.0 is more focused on maximizing photorealism within shorter timeframes, making the two tools useful for different purposes. Sora is better for general creative video generation, while Seeance 2.0 is better for shorter, highly realistic shots.

What are the implications of Seeance 2.0 for video authenticity and trust?

Seeance 2.0 demonstrates that AI-generated video can now closely approach photorealism, raising serious questions about digital trust and verification. This doesn't mean video evidence is useless, but it means we can no longer assume that video footage is authentic simply because it looks real. Digital signatures, metadata verification, and forensic analysis will become increasingly important for confirming video authenticity.


Key Takeaways

- Seeance 2.0 represents a frontier in AI video generation, creating photorealistic content that triggers the uncanny valley effect through subtle imperfections

- The tool's primary challenges include complex hand interactions, reflections, transparent objects, and maintaining temporal coherence across frames

- Compared to Runway ML (more stylized), OpenAI Sora (longer, more capable), and Synthesia (avatar-focused), Seeance 2.0 specifically optimizes for unsettling photorealism

- The uncanny valley effect results from multiple neural systems detecting inconsistencies between realistic features and subtle behavioral wrongness

- As AI video generation improves, concerns about deepfakes, digital trust, and video authenticity verification become increasingly urgent and important

