The AI Talent War: Why Money No Longer Matters to Top Researchers

Introduction: The New Gold Rush That Isn't About Gold

Something weird is happening in the AI industry right now, and it has almost nothing to do with paychecks.

Yes, the salaries are astronomical. We're talking about AI researchers pulling in

This is the defining feature of the 2025 AI labor market. The biggest tech talent war since social media went mainstream is being driven almost entirely by ideology, mission, and fear, not compensation.

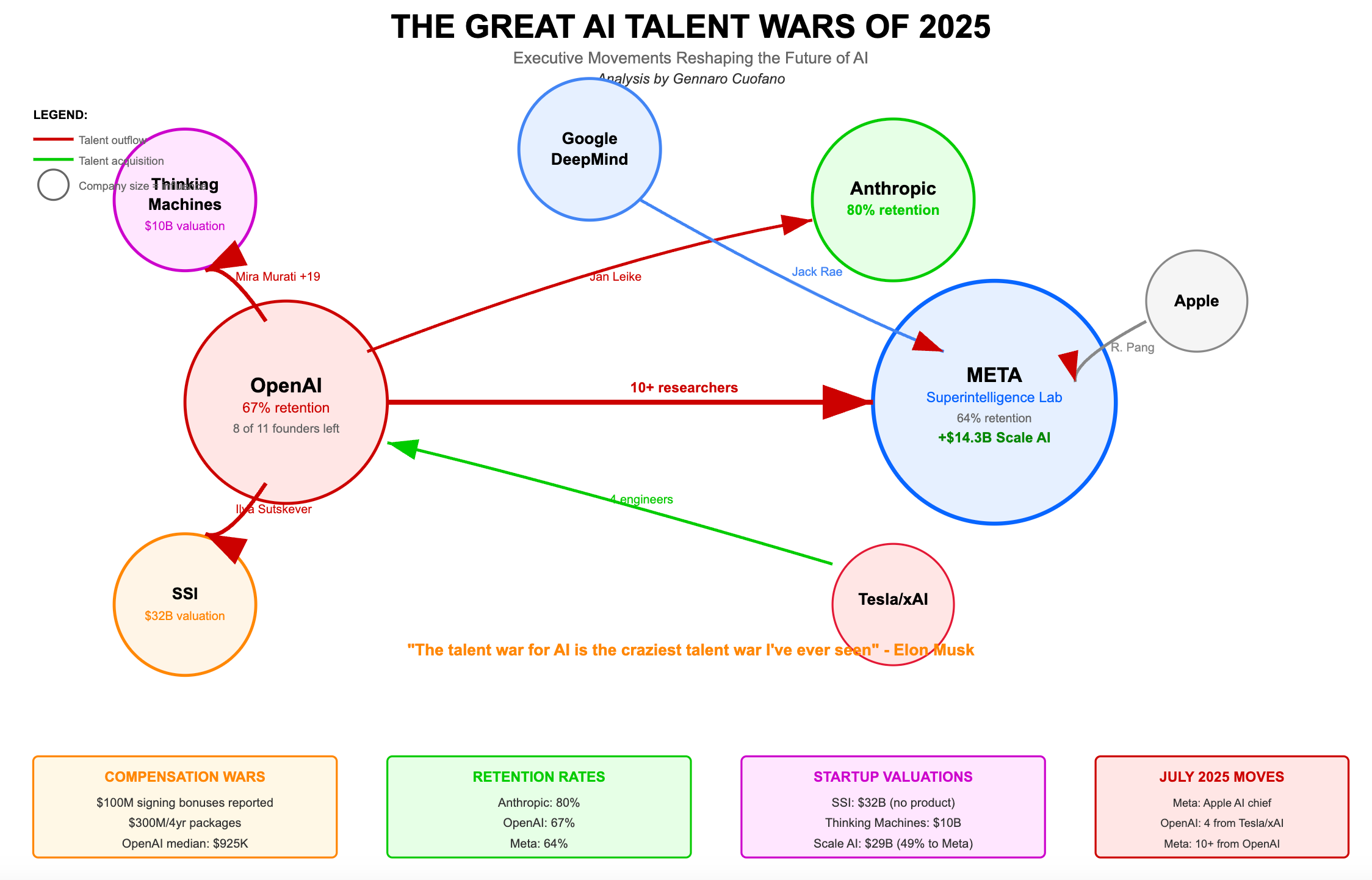

Here's what's actually happening: A handful of companies in the San Francisco Bay Area are engaged in a ruthless, high-stakes competition for a very small pool of exceptional AI researchers. These companies are backed by billions in venture capital and increasingly looking at going public. The stakes have never been higher. The drama has never been messier. And the motivations driving the best engineers to switch jobs have fundamentally shifted.

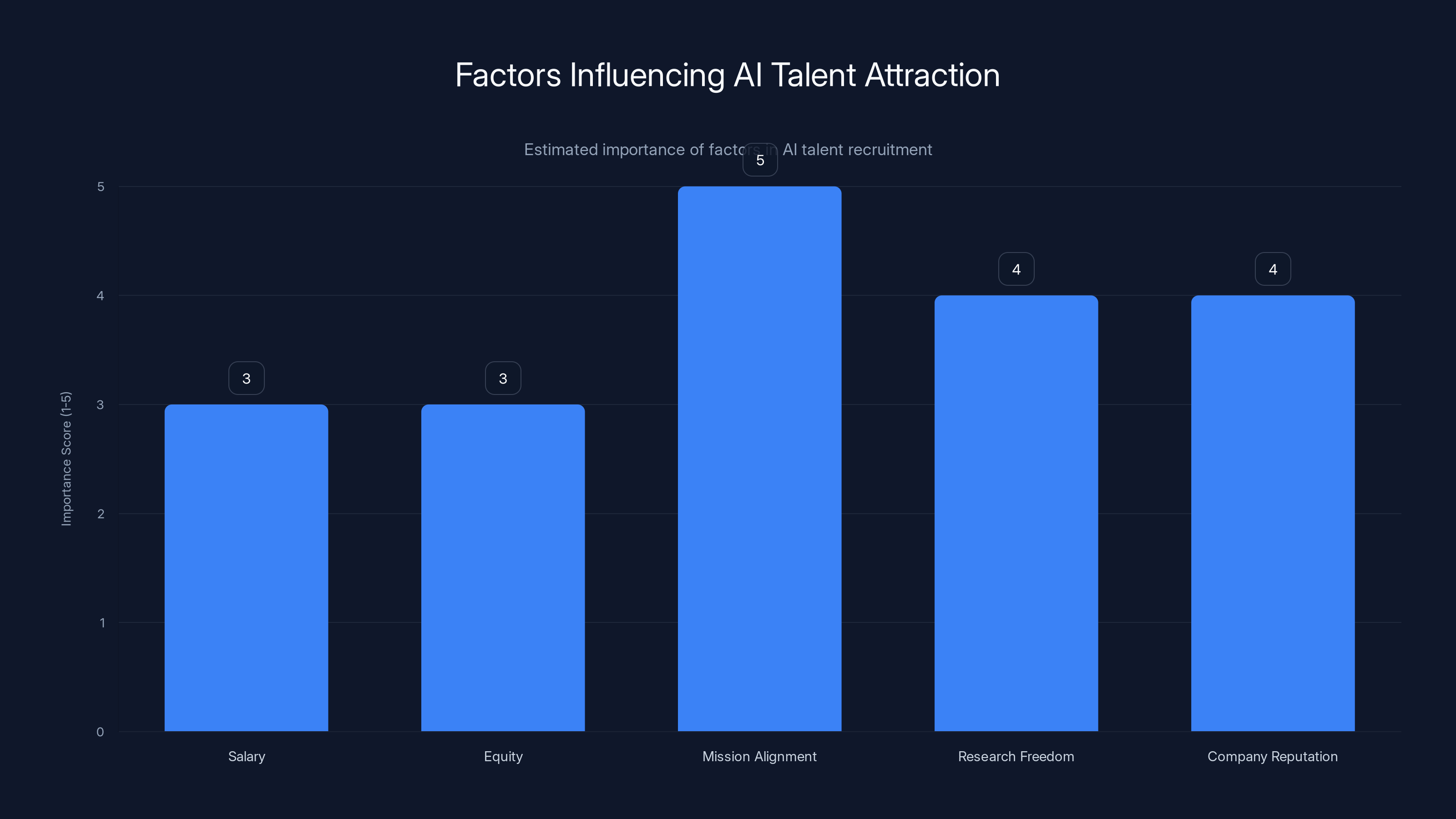

For the first time in tech history, money is no longer the primary lever for recruiting world-class talent at the top end. That doesn't mean engineers are being paid in stock options and good vibes. It means that once you hit a certain salary threshold (and that threshold is already incredibly high), additional money becomes almost irrelevant to decision-making. What actually moves people is the belief that they're working on something that will literally reshape civilization.

This shift has massive implications. It changes how companies compete. It changes what founders and executives need to prioritize if they want to attract the best people. It changes the calculus for startups trying to poach talent from giants. And it's creating one of the most volatile, drama-filled industries imaginable.

We're going to walk through all of this. The talent moves, the missions, the defections, the philosophical arguments, and what it all means for the future of AI development.

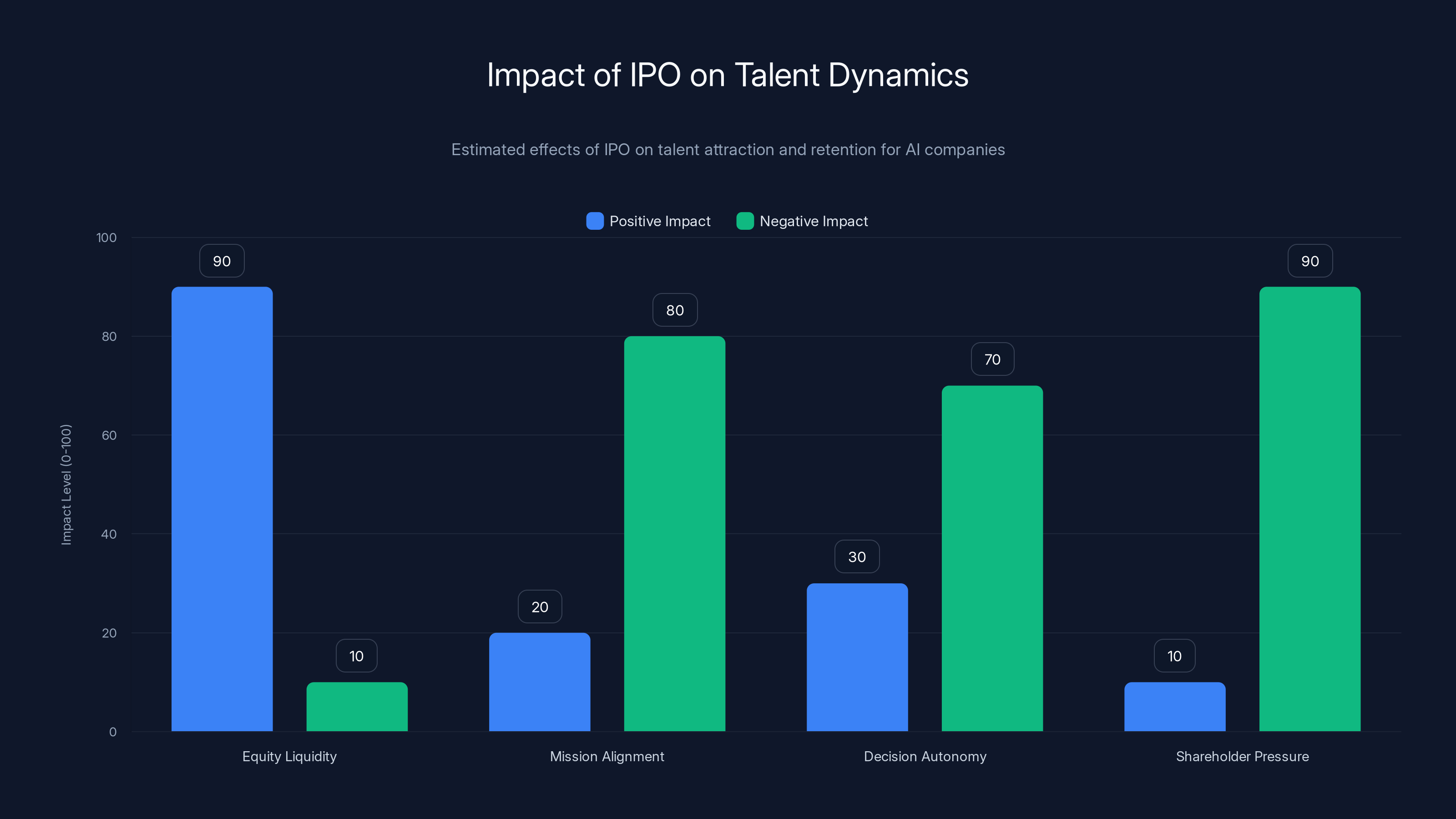

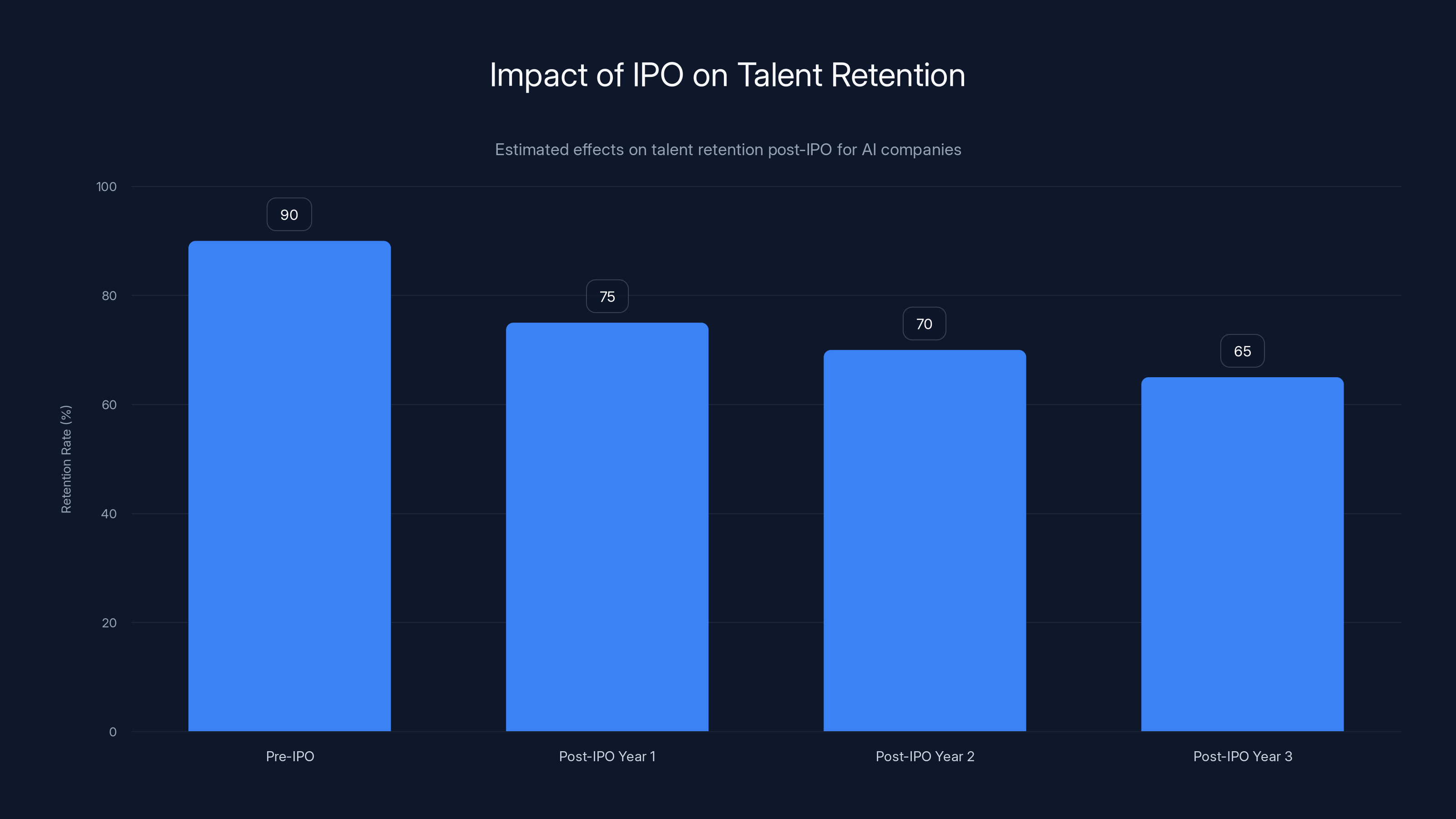

Going public can significantly increase equity liquidity for employees but often reduces mission alignment and decision autonomy due to shareholder pressures. Estimated data.

TL;DR

- Ideology over money: Top AI researchers are choosing jobs based on mission and philosophical beliefs rather than salary alone

- The exodus is real: Researchers are leaving established companies for startups, explicitly citing concerns about safety, ethics, or product direction

- Companies are going public: OpenAI and potentially Anthropic are planning IPOs in 2025, which will create historic wealth and new accountability pressures

- FOMO is weaponized: Fear of missing out on the next big breakthrough is pushing people to constantly chase the perceived hottest project

- The volatility is structural: Without clear mission alignment, companies face constant talent poaching and the defection cycle continues

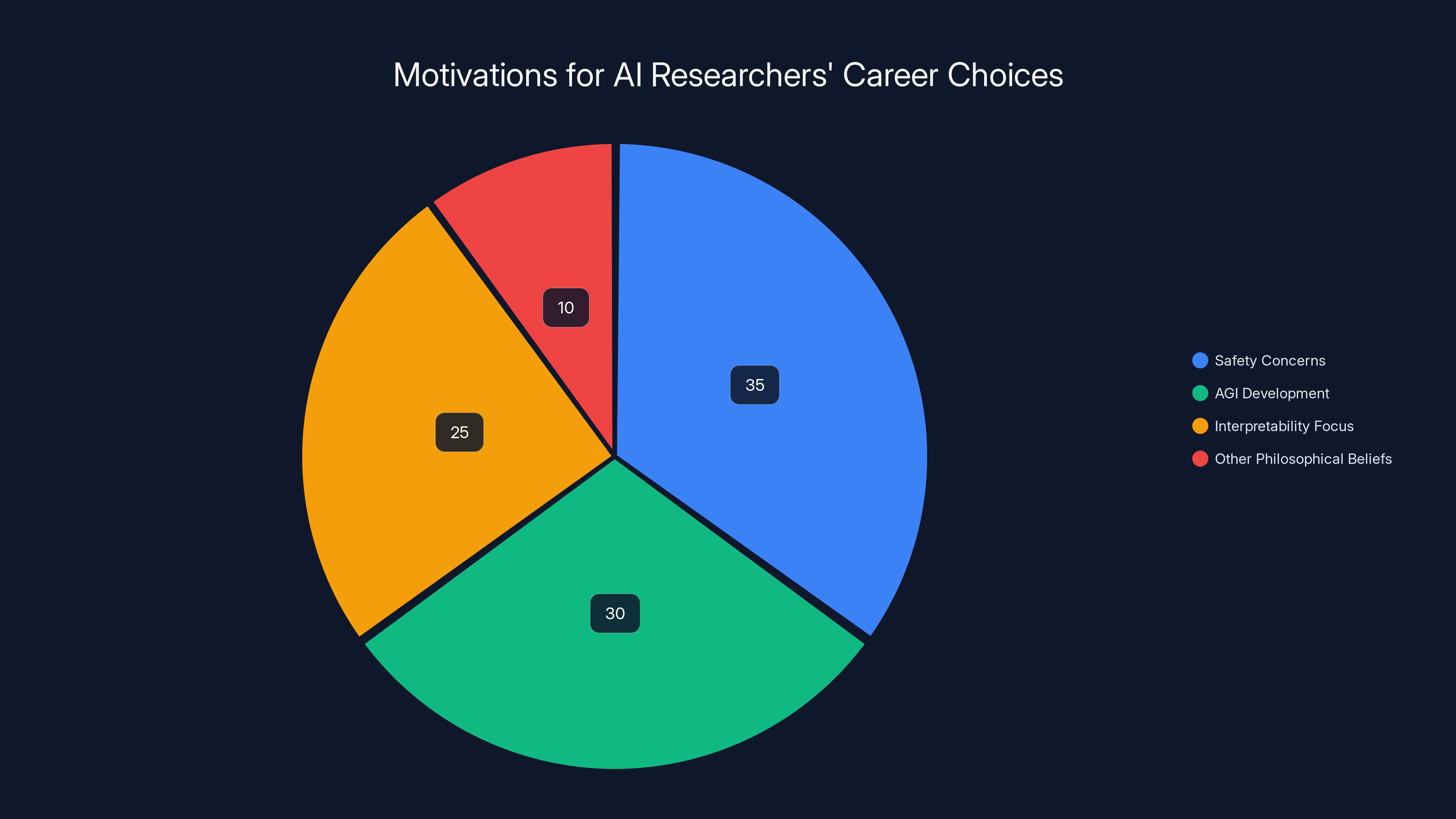

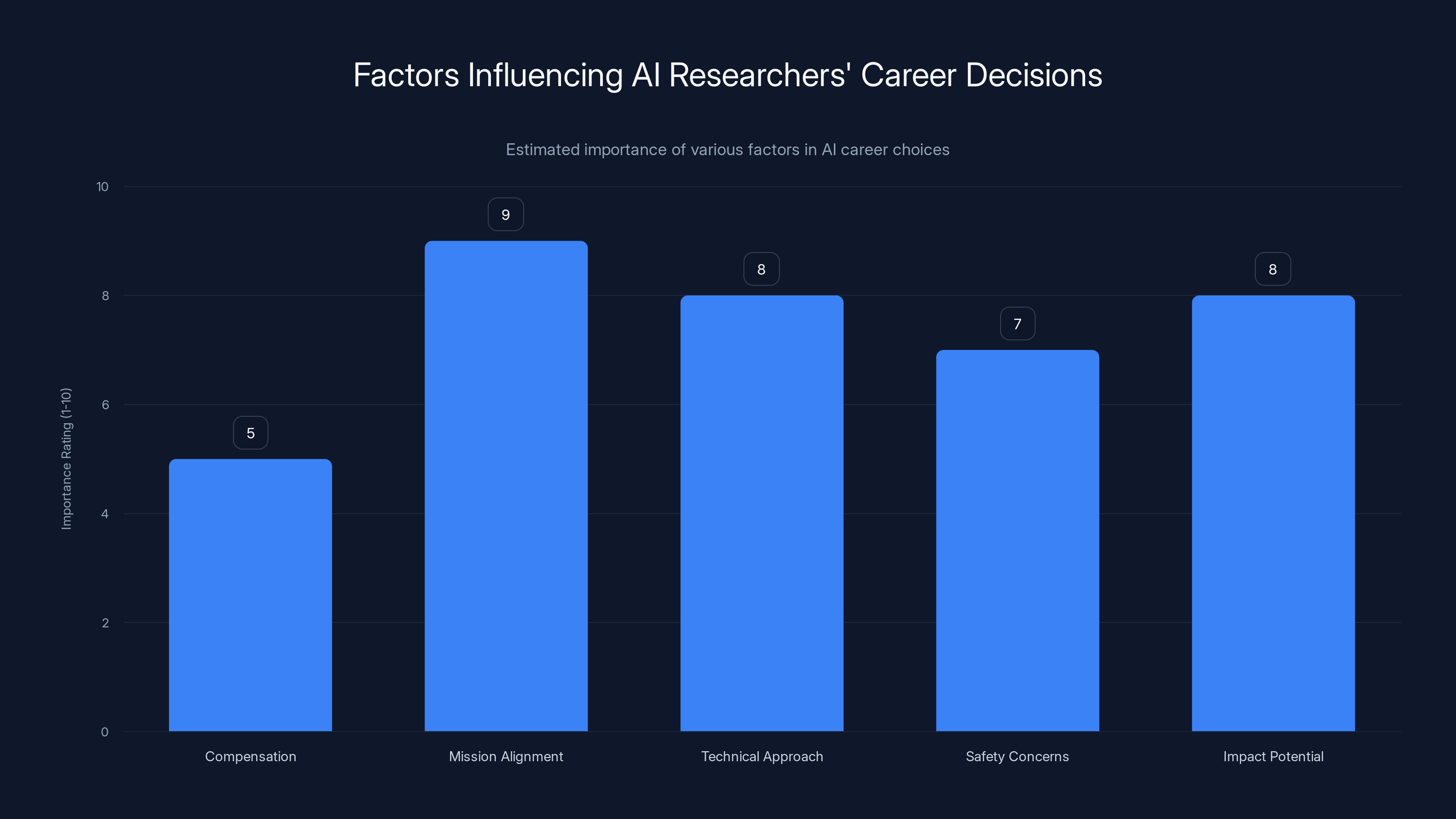

Mission alignment and research freedom are increasingly important in attracting AI talent, surpassing traditional factors like salary and equity. (Estimated data)

The AI Industry's Defection Crisis: Understanding the Exodus

Why the Best Engineers Keep Leaving

Let's start with the most visible symptom: People are leaving. Not gradually. Dramatically.

Researchers at OpenAI, the company that built ChatGPT and essentially created the modern AI boom, have quit to join competitors. Some left because they disagreed with the company's direction on safety. Some left because they thought another company's mission was more aligned with their values. A few left and literally announced they were going to become poets instead, which is one of the most extreme ways to signal that your current job is not fulfilling.

The pattern repeats across the industry. When a researcher quits, they usually explain why publicly. And the explanations are almost never "the compensation package didn't make sense" or "I found a better 401(k)." They're about mission, about impact, about the philosophical direction of AI development.

This is genuinely unprecedented in tech recruiting. During the dot-com boom, people moved for better compensation and equity upside. During the mobile revolution, people moved for better products and clearer growth trajectories. But in AI, people are making lateral or downward moves in pure economic terms because they believe in something specific about how AI should be developed.

The underlying dynamic is brutal for companies trying to retain talent. You can't just pay someone more if their core objection is philosophical. You can't offer a better benefits package if what they actually care about is whether your training data is ethically sourced. You can't compete on career growth if someone genuinely believes your company is building unsafe systems.

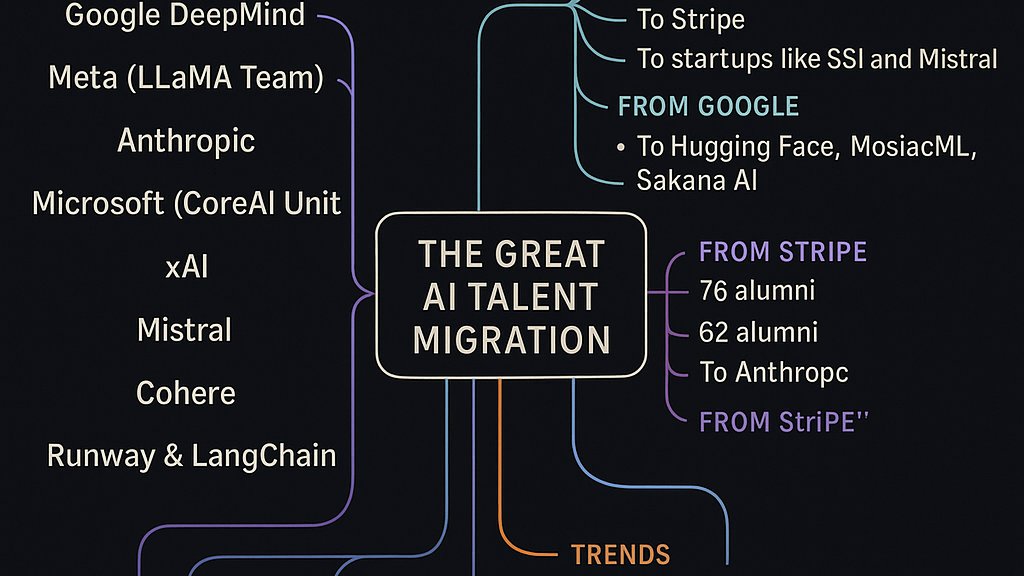

The Specific Moves That Matter

Take xAI, Elon Musk's AI company. When SpaceX announced it was acquiring xAI, multiple co-founders and senior researchers immediately quit. The public letters made clear why: They didn't want to be a SpaceX subsidiary. They had concerns about the direction and independence of the research. Money alone couldn't have prevented those departures because the issue wasn't compensation.

Or look at the steady stream of researchers departing OpenAI for Anthropic. This is fascinating because Anthropic was founded by former OpenAI executives explicitly because they disagreed with OpenAI's approach to safety. Every time an OpenAI researcher joins Anthropic, it signals a fundamental disagreement about how AI should be built. These are people leaving the most valuable AI company in the world to work for a competitor because of philosophy.

The New York Times op-ed from a former OpenAI safety researcher made the same point in devastating detail. This person had access to internal conversations at OpenAI and was essentially saying, "The company I work for is building systems that could harm humanity, and the organization isn't taking that seriously enough." That's not a salary negotiation. That's someone choosing public criticism and career risk because they believe something matters more than their paycheck.

What's remarkable is that these moves happen at a cadence that feels almost normal now. A researcher quits. There's a blog post or tweet explaining why. The industry discusses it for a few days. Then it happens again. The defection cycle has become the actual operating rhythm of the industry.

The Mission-Driven Economy: Why Ideology Beats Paychecks

The Philosophical Divide in AI Development

Here's something that separates AI from every other sector of tech: The people building it are genuinely concerned that they're building something dangerous.

I'm not exaggerating. There's a real, substantive debate happening inside AI labs about whether the systems being built could cause catastrophic harm. Some researchers believe AI development needs extreme caution and massive safety investment. Others think the real risk is moving too slowly and allowing competitors to build unsafe systems. Still others believe the current approach to AI safety is solving the wrong problems entirely.

These aren't abstract debates. They're causing people to leave high-paying jobs and fundamentally reshape their careers.

Anthropic was literally founded because its founders disagreed with how other AI companies were approaching safety research. The company has built its entire recruiting pitch around this: "We're taking safety seriously in a way other companies aren't." That mission-level clarity is why they can hire top researchers away from more established companies. A researcher who cares deeply about safety has a compelling reason to leave OpenAI or Google and work somewhere that makes safety central to its mission.

The same logic applies to different philosophical camps. If you believe AGI (artificial general intelligence) is imminent and the most important work is ensuring it goes well, you're attracted to companies like OpenAI that are explicitly building toward that goal. If you think the current approach to AI development is moving recklessly without adequate safety measures, you're attracted to companies investing heavily in interpretability and alignment research.

This creates a sorting mechanism where people self-select into organizations that match their fundamental beliefs about what should be built and how. And because these beliefs are deeply held and philosophically grounded, they're extremely sticky. You can't change someone's mind by offering slightly more equity.

The Radical Mission Statements That Actually Work

Pay attention to how AI companies are marketing themselves to recruit talent. It's not "join our company and get rich." It's "join our company and help ensure AI benefits humanity" or "join our company and solve the alignment problem" or "join our company and build AGI safely."

These aren't marketing slogans that get tuned by a brand team. These are genuine mission statements that reflect what the founders actually care about and what the daily work actually involves. And they work remarkably well at attracting people who care about those things.

A researcher might see an opportunity to work on AI safety at a startup that's explicitly framing its mission around solving alignment problems. That startup might not be able to match the salary of a FAANG company. But if the researcher cares about alignment more than they care about incremental salary increases, the choice becomes obvious.

What's interesting is that this dynamic used to exist in different forms across tech. Nonprofits have always competed for talent on mission rather than compensation. Environmental organizations attract people who care about climate change even when they pay less than oil companies. But the AI industry is unique in that the highest-stakes technical work is also driven by mission and ideology at a level rarely seen in for-profit companies.

It's also important to note that these missions aren't frivolous. They're grounded in real technical research and legitimate philosophical disagreements about how to build AI safely and beneficially. This isn't someone choosing a job because they like the vibe. It's someone choosing based on their best understanding of what the most important research directions actually are.

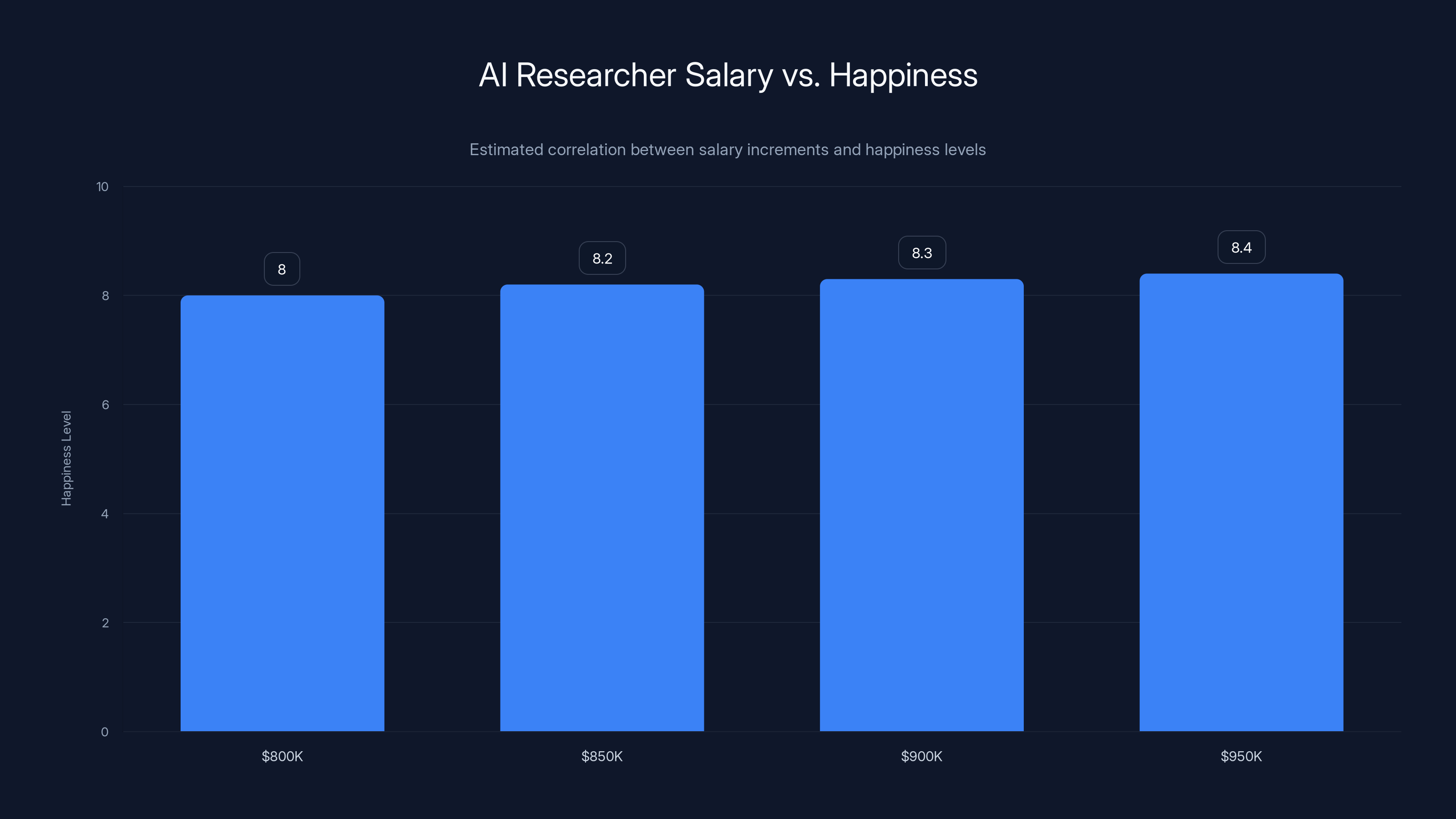

Estimated data shows that while AI researcher salaries increase significantly, the corresponding increase in happiness is minimal, illustrating the 'hedonic treadmill' effect.

The IPO Effect: When Going Public Changes Everything

Why OpenAI and Anthropic Going Public Matters for Talent

Here's a scenario that's about to reshape the entire AI industry: OpenAI and potentially Anthropic will go public in 2025. This is a completely different ballgame for talent dynamics.

Right now, these companies are private. The founders and early employees have massive equity upside. The financial incentives are aligned around building the most capable AI systems possible and raising more venture capital. There's a clarity of mission because the companies are run by their founders and the strategy isn't diluted by public market pressures.

When you go public, everything changes. You need to hit earnings targets. You need to explain your business model to Wall Street. You need to satisfy shareholders who might not care about whether your AI alignment research is cutting-edge. You need to be transparent about how you're spending money. And you're suddenly accountable to a much broader group of people with different priorities.

For recruiting, this has multiple effects. The positive effect is that early employees suddenly have enormous liquidity events. The $500K/year engineer who joined as employee 50 might end up with stock worth hundreds of millions. That's a recruiting story that works really well.

But the negative effects are substantial. Once you're public, you can't make decisions purely on mission grounds anymore. If the board wants you to prioritize revenue over research, you have less ability to say no. If shareholders want you to cut costs, you might need to reduce your AI safety team. If your stock price is under pressure, you might need to ship products faster and worry less about the philosophical implications.

This creates an interesting dynamic where the companies that were most successful at attracting talent through mission-driven positioning might become less mission-driven once they go public. Some researchers might leave specifically because they see the company shifting from mission-first to shareholder-first priorities.

There's already precedent for this. Facebook attracted massive talent in part because of the mission to "connect the world." As the company became more focused on monetization and advertising, and as the implications of that work became clearer, researchers and engineers started leaving. The mission had been reframed, and people who cared about the original mission felt betrayed.

AI companies are aware of this risk. OpenAI has actually been trying to structure itself in ways that preserve mission alignment even after going public. But the fundamental tension remains: You can't be a public company and a mission-driven organization operating at the same level of intensity. You have to make trade-offs.

The Wealth Creation and Its Implications

Let's talk about the actual numbers for a moment, because they're kind of staggering.

If OpenAI goes public at a valuation that's anywhere near what the venture market has been pricing it at, we're talking about a company worth potentially $100B+. The early employees of that company, people who joined when it was just a handful of researchers, stand to make generational wealth.

For recruiting, this is a powerful signal. New talent sees the success stories. They see that getting in early at an AI company that goes public creates massive wealth. That's a strong incentive to join the next hot startup, even if the salary is "only"

But here's the subtle shift: The wealth creation becomes its own mission. People aren't just motivated by believing in the research direction or the philosophical approach. They're also motivated by the possibility of a massive financial outcome. That's not inherently bad, but it changes the character of the talent market.

It also creates pressure for new companies to claim they're going to be the next OpenAI. Every AI startup now pitches itself as potentially the next $100B company. That's appealing to talent because of the upside possibility. But it also creates a lot of noise and makes it harder to distinguish between companies that are genuinely mission-driven and companies that are just trying to capture venture capital and ride the hype cycle.

The FOMO Machine: How Fear of Missing Out Drives Career Decisions

The Perceived Importance of Being at the Right Company

There's a pervasive belief in the AI industry that being at the right company at the right time is everything. Not "right company" in the sense of best salary or best benefits. "Right company" in the sense of "working on the breakthrough that matters most."

The psychology here is intense. AI is moving incredibly fast. New capabilities emerge constantly. The pace of change makes it feel like you could miss something absolutely fundamental if you're at the wrong organization.

So when someone hears that Company A is working on something that sounds more promising than what Company B is doing, there's real pressure to switch. Not because Company B is a bad place to work. But because what if Company A is actually building the technology that changes everything, and you're missing it?

This creates a talent volatility that's almost structural. The best researchers are constantly asking themselves: Am I at the place where the most important research is happening right now? If I'm not sure, shouldn't I take a meeting with someone at Company X who thinks their work is more important?

Companies exploit this dynamic relentlessly. When they're recruiting, they don't just say "come work with us and make a lot of money." They say "come work with us because we're solving the actual hard problem," or "come work with us because everyone else in the field is doing it wrong."

For researchers who care deeply about impact and about working on what they perceive as the most important problems, this is incredibly effective. It's not manipulation exactly. It's just that the claim might actually be true. Company A might actually be working on something more important than Company B. The trouble is that it's impossible to know for certain, so everyone is making decisions under uncertainty.

Add in the fact that the field is moving so fast that what seemed like the right place to be six months ago might feel like the wrong place now, and you get this constant churning of talent.

The Competitive Pressure to Jump Ship

Here's something subtle that doesn't get discussed enough: The more people see others jumping ship, the more pressure they feel to consider it themselves.

Imagine you're a researcher at Company A, generally happy, working on interesting problems. Then you see that three respected colleagues have left for Company B in the past six months. Each of them published a thoughtful explanation about why. Each of them is now talking about how much better Company B's approach is. Now you're second-guessing yourself.

Maybe they're right. Maybe Company B really is doing something better. Maybe you should have left too. Maybe the thing you're missing out on at Company B matters more than the stability and relationships you have at Company A.

This social proof effect is powerful. It creates a self-reinforcing cycle where departures from one company make departures from that company more likely. Everyone sees their friends leaving. Everyone hears about how great it is at the new place. Everyone worries they're missing out.

Companies can fight this, but it's hard. You can offer more money, but as we established, money isn't the primary lever anymore. You can try to convince people they're doing important work, but if three of their colleagues just left to do what they see as more important work, that's a hard argument to win.

What companies actually need to do is maintain genuine mission alignment and ensure that the work is actually as impactful as they claim. Because once you lose credibility on that front, the defections accelerate and they're very hard to stop.

Estimated data suggests a decline in talent retention rates post-IPO, with significant drops in the first three years as companies adjust to public market pressures.

The Safety Debate as a Recruiting Lever

How Disagreements About AI Safety Drive Talent Movement

One of the most interesting aspects of the AI talent war is that it's being actively shaped by disagreements about safety and alignment.

There's a meaningful technical debate in the AI field about how much to prioritize safety research versus capability research. Some researchers believe the ratio of safety work to capability work is far too low, and that we're building increasingly powerful systems without adequate research into how to make them safe. Others believe that the current approach to AI safety is solving the wrong problems or moving too slowly on capabilities, which could be its own kind of risk.

These aren't abstract debates. They're causing people to make major career moves.

If you're a researcher who believes deeply that AI safety is being underprioritized, you're going to be attracted to Anthropic, which has made safety a core part of its mission and research direction. The opportunity to work on what you believe is the most important problem is going to outweigh the opportunity cost of leaving another company.

Conversely, if you believe that capability research is being bottlenecked and that the best way to ensure safe AI is to focus on building powerful AI well, you might be attracted to OpenAI, where the focus is more on building advanced systems.

What's fascinating is that both positions have genuine intellectual merit. There's a real disagreement about the right approach. But that disagreement is playing out partly through the talent market. The companies that can attract the researchers who agree with their approach have an advantage not just in recruitment but in research direction.

Anthropic has explicitly structured its recruiting around this. Its mission statement emphasizes safety and constitutional AI. When it recruits researchers, it's explicitly saying "come work on safety-focused AI research with other people who believe this matters." For researchers in that camp, it's a powerful draw.

Meanwhile, other companies are arguing that the way to ensure safety is to move aggressively on capabilities and work on safety within the context of building real systems. That's attractive to researchers who believe in that approach.

The talent market becomes a kind of referendum on which approach is more compelling. The companies that can consistently attract top researchers are effectively proving their case about what matters most.

The Moral Arguments That Move People

Here's something that would have been unthinkable in most tech industries: Engineers leaving companies specifically because they believe the company is building systems that could cause harm.

The New York Times op-ed from the OpenAI safety researcher was essentially an argument that the company wasn't taking safety seriously enough. The person published this publicly despite the obvious career risk. And then they quit.

That's a moral argument being expressed through a career decision. The person was willing to sacrifice their position at the most valuable AI company in the world because they believed something about right and wrong in how AI should be built.

This kind of reasoning filters through the entire talent market. If you're a researcher who cares about AI safety, you're going to hear about arguments that other companies aren't taking safety seriously. You're going to wonder if you should leave. You're going to feel some responsibility to work somewhere that you believe is doing the right thing.

Companies can't really fight this with money. You can't out-salary someone's moral conviction. You can only counter with a credible argument that your company is actually taking the important things seriously, and then you have to actually back that up with research direction and organizational priorities.

This is genuinely new. Tech has always had people with moral convictions. But the intersection of moral stakes (AI safety and impact) and technical expertise and financial compensation at this level is unprecedented. It creates unusual dynamics where mission and ideology matter in ways they typically don't in industry.

The Startup Opportunity: Poaching from the Giants

How New AI Startups Are Competing for Talent

You'd think that startups would be at a disadvantage in recruiting against OpenAI and other well-established companies. The giants have more money, more resources, more clout. But they're actually losing talent to startups at an increasing rate.

Why? Because startups can offer something the giants can't: Clarity of mission and speed of decision-making.

When you're working at OpenAI, you're part of a 700+ person company. There are committees. There are processes. There are competing priorities between research and product and commercialization. Even if the overall mission is exciting, the day-to-day experience might be more bureaucracy than breakthrough.

When you're working at a 20-person AI startup, the situation is inverted. Everyone is aligned on the mission. Decisions happen fast. Your work directly impacts the direction of the company. The trade-off is obvious: Lower salary and much less stability in exchange for more impact and more agency.

For researchers who value impact and agency more than salary and security, that's actually a compelling trade. Especially if the startup is framing its mission in a way that resonates: "We're building AI safety technology," or "We're solving interpretability," or "We're building AI aligned with human values."

Startups also have an advantage in recruiting based on vision. They can say "in five years, this technology will be everywhere, and we're the company building it." The giants have to be more careful about overpromising because they're already big and already successful.

There's also something about startup equity that remains powerful even in this environment. Yes, most startups fail. But the possibility that you could join a startup when it's 15 people and it grows into the next OpenAI, and your equity becomes worth hundreds of millions of dollars, is still compelling. Especially to people who are already compensated at a high level.

So the dynamic becomes: Giant companies offer security, resources, and high salaries. Startups offer vision, agency, and potential for massive upside. Researchers make trade-offs based on what matters most to them at a given moment in their career.

The Startup Thesis That Works

The most successful AI startups aren't competing on salary. They're competing on a specific thesis about what the most important research problem is and why they're the right team to solve it.

Anthropic went to market with this: "AI safety and alignment are being underprioritized. We're building a company specifically to solve these problems, with a technical approach that we believe is superior to existing approaches."

That thesis attracted researchers who agreed with it. Some from OpenAI, some from other places. People who believed in constitutional AI and safety-first approaches had a compelling reason to join.

Other startups have used different theses: "Interpretability is the key to AI safety," or "We're building AI agents that can reason transparently," or "The commercial opportunity in AI is in specific domains where we have unique advantages."

What matters is that the thesis is specific enough to be credible and compelling enough to attract people who believe it.

There's also a founder factor. If you're a respected researcher and you start a company, you can immediately recruit other respected researchers because they trust your judgment about what's important. This creates a bootstrapping effect where early success in recruiting makes future recruiting easier.

Estimated data shows that safety concerns and AGI development are the primary motivations for AI researchers' career choices, influencing their decisions to join specific organizations.

The Compensation Paradox: Why Six Figures Isn't Enough

Understanding the Salary Ceiling

Let's address the elephant in the room directly: AI researcher salaries are genuinely insane right now.

We're talking about total compensation packages that routinely exceed $1M per year for researchers with a few years of experience. The top researchers can command even more. These are not investment banker salaries. These are founder-level wealth-creation numbers.

The paradox is that at these levels, incremental money stops mattering almost entirely. If you're making

Psychologists call this the "hedonic treadmill." We adapt to new income levels quickly. Once you can afford everything you reasonably want, more money doesn't produce more satisfaction. You start optimizing for other things: meaning, impact, flexibility, alignment with your values.

This is why money stops being the lever for recruiting. You can't unlock additional motivation by offering 10% more. You can only unlock it by offering something different.
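To make that intuition concrete, here's a minimal sketch using logarithmic utility, a common textbook way to model diminishing returns from income. To be clear, the log-utility model and the salary figures below are illustrative assumptions, not data from any company, and diminishing marginal utility is a related idea rather than the hedonic treadmill itself. The sketch compares how much the same dollar raise "feels like" at different base salaries.

```python
import math


def log_utility_gain(base_salary: float, raise_amount: float) -> float:
    """Utility gained from a raise, assuming utility = ln(income).

    This is a modeling assumption for illustration, not a measured result.
    """
    return math.log((base_salary + raise_amount) / base_salary)


# Hypothetical figures: the same $100K raise at three different base salaries.
RAISE = 100_000
for base in (200_000, 1_000_000, 5_000_000):
    gain = log_utility_gain(base, RAISE)
    print(f"base ${base:>9,}: utility gain {gain:.3f}")
```

Under this assumed model, the same raise delivers a much smaller gain at a $1M base than at a $200K base, and a smaller one still at $5M. That's the shape of the argument: past a high enough threshold, a recruiter has to offer something other than more dollars.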

What's interesting is that companies have figured this out. OpenAI and Anthropic aren't competing primarily on salary anymore. They're competing on mission, on research direction, on the probability that the work will be historically important.

Salary has become table stakes. You need to pay enough to be competitive. You need to offer enough to not create resentment about compensation relative to peers. But once you're in the same ballpark as the other top companies, salary becomes almost background noise in the decision-making process.

The Equity Question

Where compensation still matters is in equity. There's a meaningful difference between joining OpenAI when it's private and has already proven its value, versus joining a startup that might be worth $100B in five years or might fail completely.

Early employees at companies that go public make generational wealth from equity. That's still a powerful draw. But it's a different kind of draw than salary. It's about potential upside and the possibility of a massive financial outcome, rather than about compensation in the near term.

Companies structure this carefully. They offer lower base salary in exchange for more equity. Or they offer competitive base salary plus equity that could be worth a lot if things go well.

But the interesting thing is that researchers seem to be less motivated by equity upside than you'd expect. The researcher who leaves OpenAI to join a startup might be giving up significant equity value to pursue a mission they care about more. That's not primarily a financial decision.

So while equity remains part of the conversation, it's not the primary lever for recruiting. It's more of a risk mitigation tool: You're asking people to take on risk (startup might fail, equity might be worthless) in exchange for potential upside. Some people are willing to make that trade. Others aren't.

The Total Comp Conversation

When companies do talk about compensation, they're talking about total compensation: base salary plus equity plus signing bonuses plus stock refreshes plus benefits. The conversation has become incredibly sophisticated and tailored to individual circumstances.

Something that's happening more now is that companies are offering things beyond money: sabbaticals, flexible schedules, the ability to work on whatever research you want for part of your time, access to compute resources, the ability to publish research without company approval.

These things matter because they address the underlying motivation. If you care about research, offering sabbatical time and research freedom is worth more than an extra $100K in salary. If you care about autonomy, offering that flexibility is more valuable than more money.

This is how companies compete when compensation is already absurdly high. They compete on the intangible factors that actually drive fulfillment for people who are already very well compensated.

Industry Consolidation and the Talent Implications

What Happens When Companies Get Acquired

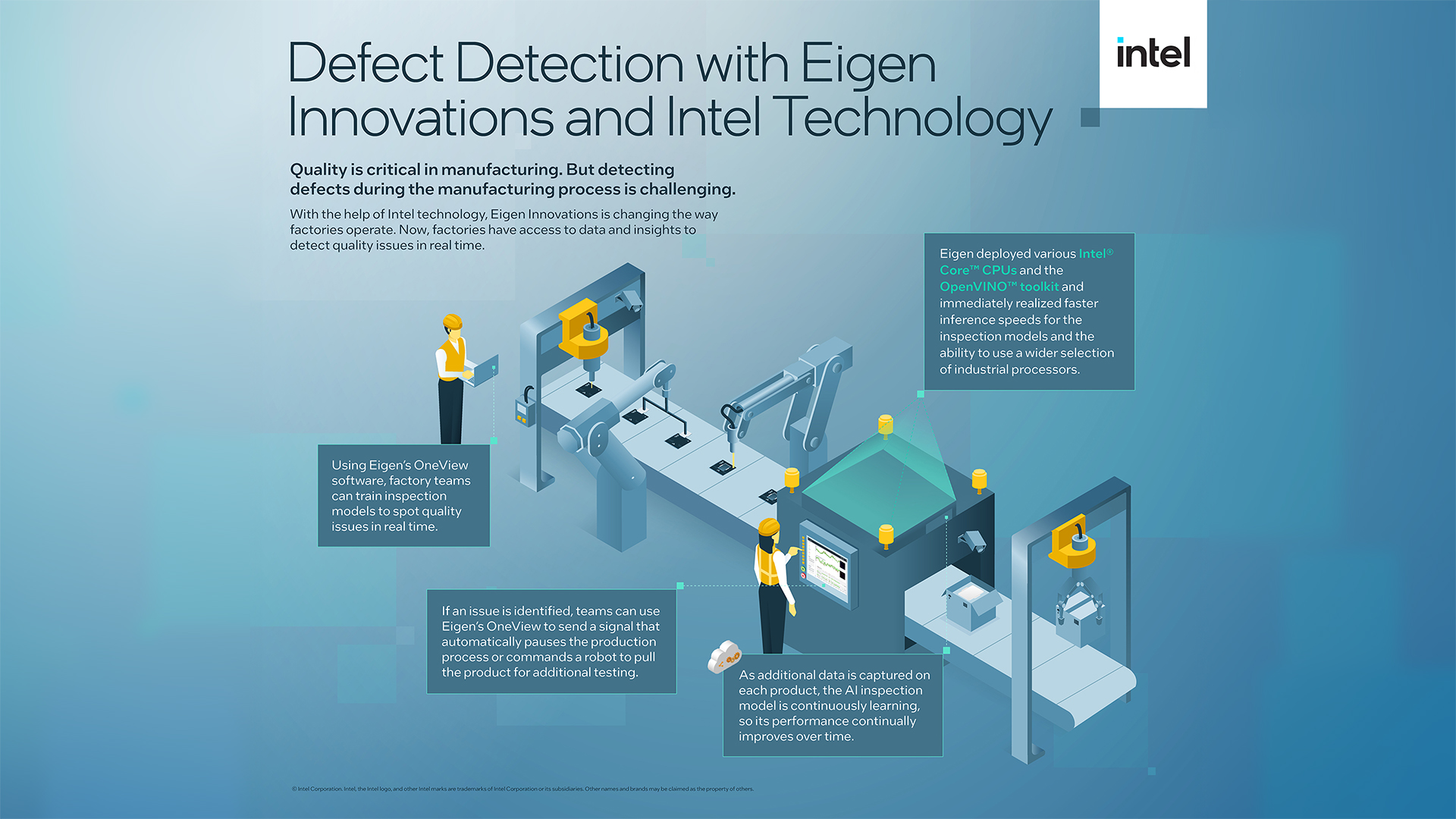

One of the more dramatic recent events was the SpaceX acquisition of xAI. This gives us a clear window into how talent dynamics work when an AI company gets acquired by a larger entity.

The result? Immediate departures from xAI. Multiple co-founders and senior researchers quit almost immediately after the acquisition was announced.

The reason is straightforward: They didn't want to be part of SpaceX. The acquisition changed what they thought the mission was. Even though SpaceX is also doing interesting work, it's a different organization with different priorities. Researchers who joined xAI for a specific vision of autonomous, uncensored AI development weren't interested in having that work become a SpaceX subsidiary.

This is a crucial point about how the talent market works. It's not just about the money or the prestige. It's about believing in the specific direction of the organization. When that changes, people leave.

This also suggests something important about potential future consolidation in AI. If the big tech companies start acquiring AI startups aggressively, they might find that the talent that made those startups valuable walks out the door. You can acquire a company. You might not be able to keep the people who made it what it is.

The Competitive Pressure to Acquire

Because poaching talent from startups is so hard, big companies are increasingly acquiring startups instead. It's a way to bring in the team and the research direction intact.

But this creates its own dynamics. Acquisitions are expensive. They also change the culture of the acquiring company. If a big company is constantly acquiring startups and trying to integrate them, that creates a different kind of organizational culture than the traditional build-everything-yourself approach.

It also potentially concentrates the AI industry. If a handful of large companies are acquiring smaller competitors, that limits the options for researchers who want to work at independent organizations. That, in turn, might increase pressure on the large companies to be good stewards of mission and to justify to their talent why working there is better than joining one of the few remaining independent startups.

AI researchers prioritize mission alignment and technical approach over compensation, with safety and impact also being significant factors. (Estimated data)

The Public Market Transition: IPO Effects on Talent Strategy

Preparing for Life as a Public Company

OpenAI's plan to go public in 2025 is forcing some genuinely difficult questions about how to maintain mission alignment in a public company structure.

The founders are clearly thinking about this. There have been discussions about potentially creating a structure where the company can maintain some independence from public market pressures. This is complicated and probably not fully achievable, but the fact that they're thinking about it shows awareness of the problem.

Once you go public, you have quarterly earnings reports. You have analysts questioning your business model. You have shareholders voting on major decisions. You have far less flexibility in how you spend money.

For a company that built its talent recruiting strategy around "we're pursuing the most important research regardless of commercial viability," that's a significant change. How do you maintain that positioning when you have shareholder pressure to maximize profit?

Anthropic is presumably facing the same questions. The company has raised an enormous amount of money and is expected to go public at some point. How do you maintain the safety-first, mission-driven culture when you're a public company?

My guess is that some researchers will leave as companies transition from private to public. And some of the mission-driven positioning that works in recruiting for private companies will prove harder to maintain in public companies. This could actually be a moment where the talent market consolidates around the remaining private AI companies and startups that aren't planning public offerings.

New Incentive Structures Post-IPO

Once companies go public, the incentive structures for executives and employees change. Stock options vest over time. Executives have lock-up periods. The company needs to show growth and profitability.

This creates subtle pressure to make decisions that are good for the stock price, even if they might not be the best decisions from a pure research or mission perspective. These pressures are often invisible but they're real.

For researchers who care deeply about mission alignment, this is concerning. They might see a company going public as a sign that the mission is going to become secondary to commercial concerns.

Whether that actually happens depends a lot on how the company structures itself and how it talks about its mission. If OpenAI goes public and continues to emphasize its commitment to safe AGI development, and it actually backs that up with research direction and hiring and budget allocation, then the mission can potentially survive the transition. But it requires intentional effort.

The Emerging Threat: Building AI Unsafely

The Safety Competition Dilemma

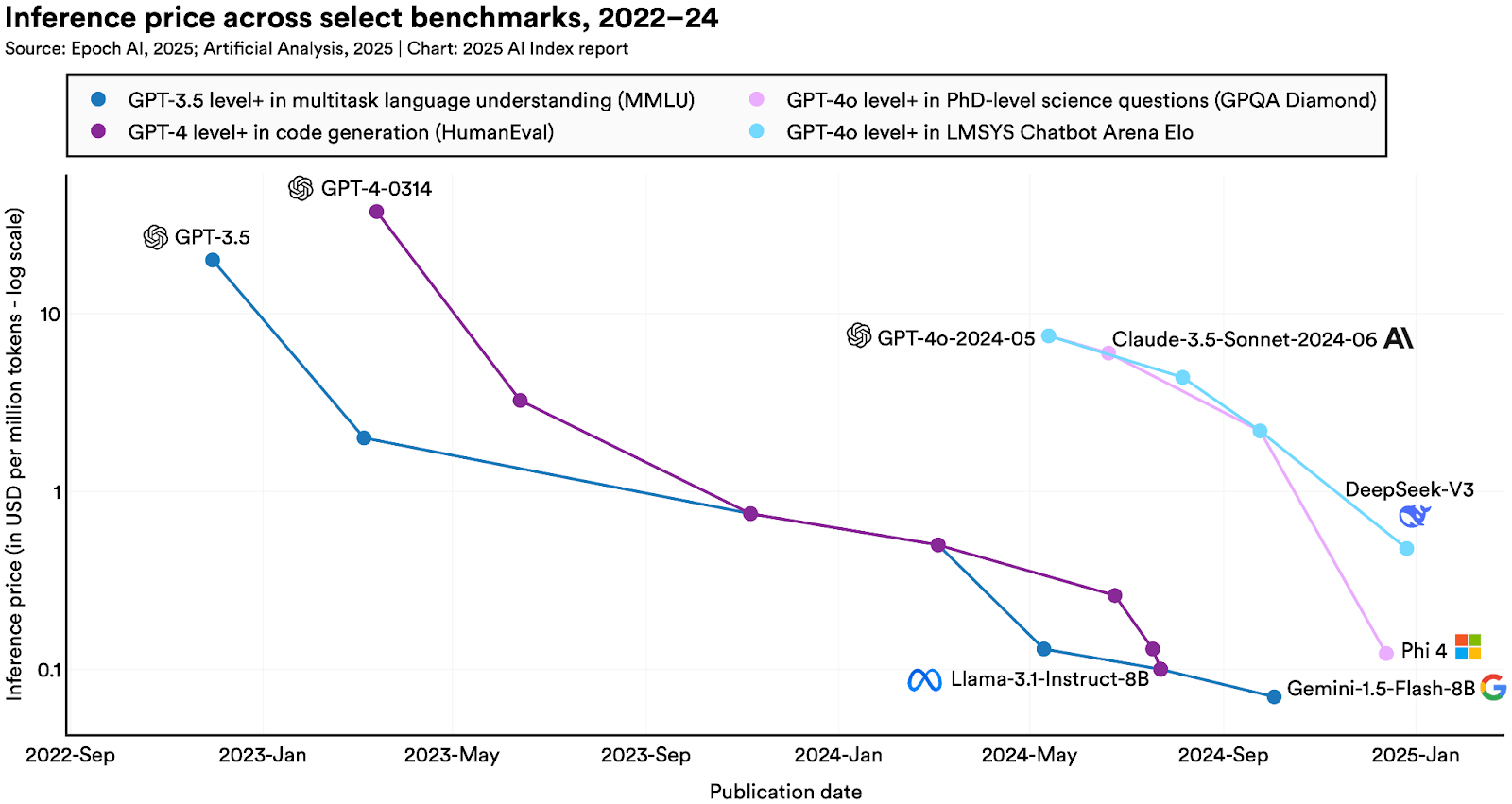

Here's a genuinely concerning dynamic that's emerging: Companies are racing to build powerful AI systems, and there's pressure to deprioritize safety work in order to move faster.

This creates a prisoner's dilemma. If Company A decides to slow down development to invest more in safety research, but Company B decides to move faster and deprioritize safety, then Company B gets there first. From a pure competitive perspective, that's bad for Company A. They're slower, they're second to market, they miss the opportunity.
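To make the structure of that dilemma concrete, here is a minimal sketch in Python. The payoff numbers are purely hypothetical and chosen only to reproduce the shape described above; the names `PAYOFFS`, `best_response_for_a`, and `dominant_strategy_for_a` are illustrative, not taken from any real analysis. Under these assumed numbers, moving fast is Company A's best response no matter what Company B does, even though both companies would be better off if both were careful.

```python
# Hypothetical payoffs (higher is better) for two labs choosing between a
# slower, safety-heavy pace ("careful") and a faster pace ("fast").
# The numbers are illustrative assumptions, not measurements.
PAYOFFS = {
    # (choice_a, choice_b): (payoff_a, payoff_b)
    ("careful", "careful"): (3, 3),  # both invest in safety
    ("careful", "fast"): (0, 5),     # A is slower and second to market
    ("fast", "careful"): (5, 0),     # A gets there first
    ("fast", "fast"): (1, 1),        # both race and both carry more risk
}
CHOICES = ("careful", "fast")


def best_response_for_a(choice_b):
    """Return the choice that maximizes Company A's payoff given B's choice."""
    return max(CHOICES, key=lambda choice_a: PAYOFFS[(choice_a, choice_b)][0])


def dominant_strategy_for_a():
    """Return A's dominant strategy, or None if no choice is always best."""
    responses = {best_response_for_a(choice_b) for choice_b in CHOICES}
    return responses.pop() if len(responses) == 1 else None


if __name__ == "__main__":
    print("Dominant strategy for A:", dominant_strategy_for_a())
    print("Both careful:", PAYOFFS[("careful", "careful")])
    print("Both fast:", PAYOFFS[("fast", "fast")])
```

The point of the sketch is the ordering of the payoffs, not their size: any numbers with the same ordering produce the same trap, where each company's individually rational choice leads to an outcome that is worse for both.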

For researchers who care about safety, this is a genuine moral concern. They worry that the competitive pressure is going to create an incentive structure where companies build unsafe systems because they're in a rush to beat competitors.

This actually intensifies the talent war, because researchers who care about safety are trying to work somewhere that's taking safety seriously despite the competitive pressure. That makes Anthropic and other safety-focused companies more appealing, even if the competitive pressure might mean they lose out to less safety-conscious competitors.

It's a genuinely difficult problem without a clear solution. You can't just unilaterally decide to be slower and more cautious. That puts you at a disadvantage in a competitive market. But moving too fast creates its own risks.

Some researchers are responding by trying to work on this problem across companies. There's a growing movement toward publishing safety research openly so that all companies can learn from it. But that only works if companies are actually willing to read the research and implement the findings, which is not guaranteed.

The International Dimension

This safety competition is becoming international. Chinese AI companies are building systems aggressively. European companies are operating under different regulatory frameworks. US companies are worried about being outpaced.

This creates even more pressure on safety because it becomes a national competitiveness issue. If the US falls behind in AI development, that's bad for national security and economic competitiveness. That pressure might override safety concerns.

For researchers, this means the stakes are even higher. They're not just working on research. They're working on what they believe are civilization-level questions about AI development in a competitive global context. That attracts some people and repels others, but it's definitely driving the intensity of the talent war.

The Future of AI Talent Markets

What Happens When the Hype Normalizes

At some point, the current level of intensity in the AI talent war will normalize. New AI companies will be founded and some will fail. The initial excitement about AGI will be moderated by the reality of current AI capabilities. Salaries might stop growing exponentially.

When that happens, what changes? Do researchers start prioritizing mission and impact less? Do they go back to optimizing for salary?

Probably not. I think what's happened is a fundamental shift in what top technical talent cares about. The experience of working on something that feels historically important, of being part of a movement that matters, of getting to think deeply about moral and philosophical questions as part of your job has become part of the baseline expectation.

Companies that build cultures where that's possible will attract and retain the best talent. Companies that treat research as just another business process will struggle to recruit the best people.

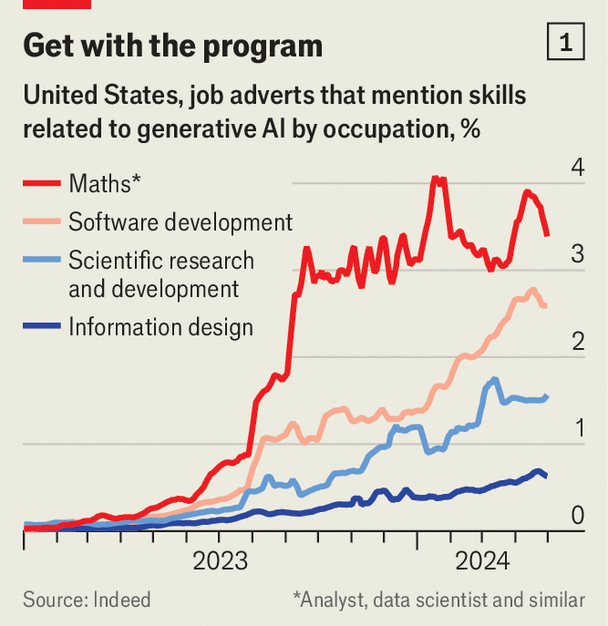

The other thing that's likely is that the talent market becomes more specialized. Right now, everyone wants to hire AI researchers because AI is hot. But over time, you'll see more segmentation. Some researchers will specialize in safety. Some will specialize in capability. Some will focus on specific applications. Talent distribution will start to look more like traditional tech, where different companies attract different kinds of talent.

The Talent Concentration Risk

Right now, most of the best AI talent is concentrated in a handful of companies in the San Francisco Bay Area: OpenAI, Anthropic, Google DeepMind, and a few others. That's not healthy from a research diversity perspective.

If the talent market diversifies, you'll see more innovation in different directions. More variety in research approaches. More competition between different philosophical frameworks for how AI should be built.

But you'll also see the inevitable concentration of resources. The companies that can pay the most and offer the best work environment will continue to attract disproportionate amounts of talent. That's just how markets work.

International Talent Flows

Right now, a lot of the top AI talent is migrating to the US. But that might not last forever. If other countries invest heavily in AI and offer compelling research opportunities, some talent will move elsewhere.

China's investment in AI is creating opportunities for researchers who care about specific AI problems. Europe's regulatory approach is creating opportunities for researchers who care about safe, aligned AI. There will be talent flows based on where people believe the most important work is happening.

This is actually healthy. It creates competitive pressure on the US AI industry to maintain its talent advantage through quality of research and compelling mission. It also means that AI development becomes more globally distributed, which has its own implications for how the technology develops.

The Broader Tech Industry Implications

What This Tells Us About Motivation and Work

The AI talent war is teaching us something important about how top technical talent thinks about work and motivation.

Turns out, once you're compensated at a level where money stops being a constraint, the things that matter are: Do you believe in what you're building? Are you working with people you respect? Does the work matter? Will it be remembered?

This isn't revolutionary. We've known this from psychology and organizational behavior research. But seeing it play out at this scale, with this much money involved, with this much visibility, confirms it.

The implication for other industries is significant. If you want to attract top talent in any field, you need to offer more than just compensation. You need to offer something that feels meaningful. You need to offer a mission. You need to offer the opportunity to work on something that matters.

This is true whether you're building AI or building any other complex technical system. The playbook the AI companies are using, whether intentionally or not, is: Attract people through mission, retain them through belief in the mission, and accept that you'll have churn when people's beliefs shift.

The Stability vs. Impact Tradeoff

One of the interesting tensions in the AI talent market is between stability and impact. Established companies like Google and Microsoft offer more stability. Startups offer more potential impact but more risk.

People are increasingly choosing impact over stability. That's significant. It suggests that for certain kinds of people, at certain career points, impact and meaning matter more than security.

But it also suggests a vulnerability. If economic conditions worsen, if the AI hype cycle cools, people might reassess and start valuing stability more. Companies that are counting on mission as their primary recruiting tool should probably also think about how they maintain talent loyalty if mission alone becomes insufficient.

Lessons for Building and Scaling AI Organizations

How to Recruit in a Competitive Talent Market

If you're trying to recruit top AI talent in this environment, here's what actually works:

First, have a genuine, specific mission that talented people can believe in. "We're building AI" doesn't work. "We're building AI that's safe and aligned with human values" does. "We're building the most capable AI systems possible" does. Be specific about what you're trying to do and why it matters.

Second, build a team of people that other talented people want to work with. Reputation matters. If you hire well initially, that makes it easier to recruit the next people. If you hire badly, it becomes impossible.

Third, respect researchers' autonomy and judgment. Top researchers want to work on what they think is important. If you make them work on something they don't believe in, they'll leave. This is expensive. It's better to give them flexibility to work on problems they care about.

Fourth, be transparent about the state of the research. Researchers can tell if you're bullshitting about the importance of your work. If you're actually pursuing the most important research directions, that becomes obvious through the quality of the publications and the technical discussions. If you're not, people figure it out and leave.

Fifth, pay competitively. This is table stakes. You don't need to pay the most, but you need to be in the ballpark. Once you're there, other factors dominate. But if you're obviously underpaying relative to peers, people will leave and you deserve it.

Sixth, actually care about safety if you're claiming to care about safety. If you say safety is important and then cut the safety team or have researchers who don't believe in safety practices, people will see through it. You need coherence between stated values and actual resource allocation.

How to Retain Talent

Retaining talent is even harder than recruiting it in this market because the churn is structural. People are constantly asking themselves if they're in the right place.

The main thing you can do is keep delivering on your mission promise. Keep pursuing the research you said you were pursuing. Keep the team strong. Keep publishing interesting results. Keep making the place feel like the right place to be.

You're also going to have some churn. Accept it. Good people will leave to pursue different missions or to work on what they think is more important. That's okay. You want people who believe in what you're doing. If they stop believing, it's better they leave.

What you can do to reduce unnecessary churn is to make sure that the decision to leave is about mission and direction, not about ego or interpersonal conflict. Treat people well. Give them credit for their work. Make sure they feel valued.

When to Accept That Someone Should Leave

Here's a slightly different angle: Sometimes the best thing for an organization is to not fight to retain someone. If someone is leaving because they have a better idea about what to do or where to do it, that's information. Maybe they're right. Maybe your approach isn't the right one and they're leaving to do something more important.

Great organizations make space for people to leave and do their thing. They don't fight it with counter-offers or try to convince people to stay if their heart is no longer in it.

This is especially true in AI, where the work is genuinely hard and the questions are genuinely important. If someone's leaving to work on what they think is more important, you should wish them well. That's integrity.

The Dramatic Personal Dimension: Why People Actually Leave

The Poet Case

One of the most striking examples of the current AI talent market is the researcher who left to become a poet.

Reading between the lines of what we know about this: Someone was working at a top AI company, presumably making an enormous amount of money, with prestige and influence and access to resources. And they decided that what they actually wanted to do was write poetry. Not as a side project. As their primary work.

That's a statement. It's saying that being a research engineer at an AI company, even one of the most prestigious ones, even with extraordinary compensation, doesn't feel as fulfilling as something else entirely.

It might be that the person had always wanted to write poetry and finally had the financial security to do it. It might be that they became disillusioned with the work. It might be that they realized they weren't making the impact they wanted to make.

What it definitely signals is that money and status don't actually motivate people once you hit a certain threshold. If someone can walk away from a $1M+ job to become a poet, it's clear that the compensation isn't the binding constraint.

The Safety Researcher's Op-Ed

The New York Times op-ed from the former OpenAI safety researcher was even more striking. This person had access to internal information about what OpenAI was doing. They were in a position of significant influence. And they wrote a public criticism of the company they worked for, arguing that it wasn't taking safety seriously enough.

Then they quit.

The personal risk here is significant. By writing the op-ed, they made themselves persona non grata at the company. They damaged their reputation within the AI community, at least among people who think the company is doing things right. They presumably burned bridges with people they respected.

But they also did something important. They tried to change the organization from the inside. When that didn't work, they went public with their concerns. It's an expression of conviction that the issues were important enough to sacrifice professional relationships.

What's remarkable is that this happened at the most valuable AI company in the world, in the most competitive talent market imaginable. If these kinds of departures were driven by better compensation elsewhere, you'd expect to see different patterns. You'd see less moral and philosophical reasoning in departure announcements and more discussion of compensation and career advancement.

Instead, the opposite is happening. People are writing detailed philosophical arguments about why they're leaving. They're explaining their moral and technical concerns. They're taking actions that risk their reputation and relationships because they believe in something.

Predictions for the AI Talent Market in 2025 and Beyond

IPO Effects Will Be Significant

When OpenAI goes public, it will be a watershed moment for the AI industry. The valuation will be enormous. Early employees will become very wealthy. There will be massive media coverage.

But there will also be changes to how the company operates. More transparency. More accountability. More pressure to show returns on investment. Some of that is healthy. Some of it might result in the company becoming less mission-driven.

I expect some researchers will leave OpenAI around the time of the IPO or shortly after. Not because of money, but because the character of the organization will shift. It will become more corporate. The intense focus on AGI development will potentially shift toward thinking about products and revenue.

Anthropic going public will create similar dynamics, though probably the company will try harder to preserve its mission-first positioning.

The Talent Shuffle Will Continue

We're going to see continued movement of talent between companies. Startups will poach from giants. Giants will acquire startups to bring in talent. Some researchers will quit to start their own companies.

The pace won't necessarily accelerate, but the churn will continue because the underlying drivers haven't gone away. Different people have different beliefs about what's important in AI. Those beliefs will keep motivating movement.

Safety vs. Capability Tension Will Intensify

The fundamental tension between building powerful AI systems quickly and building them safely is only going to get more acute. As systems become more capable, the stakes increase. The pressure to move fast will also increase because of competitive dynamics.

This will drive some of the most talented researchers toward safety-focused organizations, even if those organizations are smaller or less established. The belief that safety matters will be enough to pull them in.

But it will also create vulnerabilities. If a company is significantly outpaced because it's investing in safety, at some point that failure will itself become a safety issue. Being slower but safer only works if you're not so far behind that competitors' systems become the default.

International Competition Will Matter More

As AI becomes more obviously central to economic and military competitiveness, countries will care more about retaining AI talent. The US will probably remain the hub, but that dominance might erode somewhat.

Talent flows will become more complex. Researchers might move between the US and China, the US and Europe, based on where they think the most interesting work is happening.

New Models Will Emerge

We might see new organizational models in AI. Nonprofit research organizations. International consortiums. Models that combine for-profit and nonprofit approaches. These will be ways of trying to pursue research that's important without the pressures of commercial markets or public company structures.

Some of this is already happening. Some of the most interesting AI research is happening at organizations that aren't purely commercial. That trend will probably continue.

Conclusion: A Talent War Unlike Any Other

The AI talent war is fundamentally different from previous tech talent wars because the people being recruited, the companies doing the recruiting, and the work being done all operate on different principles than traditional tech.

Traditional tech talent wars were about money and status. They were zero-sum games where one company's gain was another company's loss. The best engineers went to the best-paying companies, and that was that.

The AI talent war is different. It's driven by ideology and mission. It's shaped by disagreements about what the most important problems are. It's influenced by genuine moral concerns about whether the work is being done responsibly.

This creates an unusual situation where companies can't just out-salary each other to victory. They have to actually deliver on their mission claims. They have to actually be building what they say they're building. They have to actually care about the things they claim to care about.

It also creates opportunities for smaller companies and startups. If you have a compelling mission and you can attract one or two exceptional researchers, you can build momentum. You can become the place where the most important work is happening, at least in your domain.

The flip side is that it creates instability. The companies that win the talent war today might lose it tomorrow if a founder they respect starts a new company with a different vision. The talent that's hard-won is also easy to lose if you lose people's faith in what you're building.

For researchers, this is both an opportunity and a burden. You have genuine choice about where to work and what to prioritize. You can choose based on your values and your beliefs about what matters. But you also have to live with the consequences of those choices, and the weight of working on something that feels genuinely important.

The AI industry is one of the few places where the economic incentives and the intellectual incentives are aligned at this level. Money is plentiful enough that it's not a constraint. Work is interesting enough that people care deeply about doing it well. The stakes feel high enough that people believe their choices matter.

That's a rare combination. And it's creating one of the most intense, dramatic, interesting labor markets in the history of technology. Watch this space. The next few years are going to be remarkable.

FAQ

What is driving the AI talent war?

The primary drivers are ideology and mission rather than compensation. AI researchers are prioritizing the philosophical approach to building AI, safety concerns, and the perceived importance of their work over salary increases. Once researchers reach a compensation threshold of around

Why are AI researchers leaving established companies for startups?

Startups can offer clarity of mission, faster decision-making, greater autonomy, and the potential for more direct impact on research direction. Researchers value working in smaller organizations where they have agency and can see how their work directly influences the company's technical direction. Additionally, startups can make compelling philosophical arguments about solving specific research problems that established companies might not prioritize, attracting researchers who share that vision and are willing to trade salary and stability for mission alignment and intellectual freedom.

How does the upcoming IPO affect the AI talent market?

When OpenAI and potentially Anthropic go public in 2025, they will face new accountability pressures from shareholders and requirements for financial transparency. This shift from private to public company structures typically introduces more corporate decision-making processes and commercial pressures that might dilute the mission-first positioning that attracted researchers. Some researchers may depart as organizational culture shifts toward shareholder value, while early employees gain significant wealth from stock valuations. The IPO creates a wealth creation moment that remains a powerful recruiter for new talent, but changes how existing talent experiences the organization.

What role does AI safety play in talent recruitment?

AI safety has become a recruiting lever and a genuine source of philosophical disagreement driving talent movement. Researchers who believe AI safety is being underprioritized are attracted to companies like Anthropic that emphasize safety-focused research. Conversely, those who believe capability development is the path to safe AI align with companies pursuing more aggressive capability research. This technical disagreement about research direction creates a natural sorting mechanism where researchers gravitate toward companies matching their philosophical beliefs about how AI should be developed responsibly.

Can companies compete on salary alone in the AI talent market?

No. Once compensation reaches the absolute premium level (around

What happens to talent concentration in AI?

Currently, most elite AI talent is concentrated in a few San Francisco Bay Area companies. As the market matures, talent will segment by specialization (safety, capability, alignment, specific applications), geography (US, Europe, China), organization type (startups, corporations, nonprofits), and philosophical approach. This creates opportunities for new companies to attract talent by offering different research directions. However, the companies with the most resources and clearest missions will continue to accumulate disproportionate talent, similar to traditional tech dynamics.

How do startup equity and mission interact as recruiting tools?

Equity upside remains a powerful recruiting tool by offering the possibility of generational wealth, but it's secondary to mission for researchers already well-compensated. Researchers might join a startup with lower salary but compelling mission, accepting equity risk in exchange for impact and agency. Conversely, researchers might choose established companies offering lower equity upside if the mission is more aligned. The combination works best: a startup with a compelling mission and meaningful equity can compete effectively, but mission alone without potential financial upside has limited appeal.

The Strategic Context: Understanding Power and Momentum

What's happening in the AI talent market is essentially a manifestation of power shifting from capital to intellectual capital and mission alignment. For decades, the most compelling recruiting tool in tech was economic: better stock options, higher salary, earlier-stage company with bigger upside potential. The best and brightest naturally followed the money.

But AI has created a situation where the money is already so substantial for top talent that it ceases to be a differentiator. When everyone's being offered

Instead, what becomes scarce and valuable is the ability to work on problems you deeply believe matter, alongside people you respect, in organizations pursuing approaches you find intellectually compelling. These things are harder to manufacture than salary structures. You can't fake a genuine commitment to AI safety if you're not actually investing in it. You can't pretend your research direction aligns with someone's values if it doesn't.

This shifts the balance of power in hiring and retention. Companies that are credible about their mission have leverage. Companies that aren't credible face constant talent poaching. The companies that have truly exceptional people doing work they genuinely care about become attractive simply because they're places where important work is happening.

This also means that the quality of AI research going forward will be determined less by which companies can pay the most and more by which companies can attract researchers who care about the work. That's not necessarily better or worse, but it's different from how tech has worked historically.

The secondary effect is that this creates opportunities for nontraditional players. Academic institutions can hire top talent by offering research freedom and legitimate intellectual contribution. Nonprofit research organizations can compete by offering work that benefits humanity without commercial pressure. International organizations can attract researchers by positioning themselves as pursuing important work regardless of commercial considerations.

What we're seeing is the emergence of a global AI talent market segmented not primarily by compensation but by mission and belief. That's a fundamental shift in how technical talent thinks about work.


Key Takeaways

- AI researcher salaries exceed $1M annually, but money is no longer the primary decision factor for career moves

- Mission alignment and philosophical beliefs about AI safety have become the dominant motivators for top talent movements

- Companies like Anthropic recruit successfully by positioning safety-first approaches, while departures from OpenAI signal disagreement with company direction

- Upcoming IPOs at OpenAI and Anthropic will create wealth but potentially shift companies away from mission-first prioritization

- Startups can compete effectively against giants by offering clear mission, research autonomy, and proximity to important problems

- The talent war is increasingly driven by ideology rather than compensation, marking a fundamental shift in tech recruiting dynamics
